How a simple circuit could offer an alternative to energy-intensive GPUs

On a table in his lab at the University of Pennsylvania, physicist Sam Dillavou has connected an array of breadboards via a web of brightly colored wires. The setup looks like a DIY home electronics project—and not a particularly elegant one. But this unassuming assembly, which contains 32 variable resistors, can learn to sort data like a machine-learning model.

While its current capability is rudimentary, the hope is that the prototype will offer a low-power alternative to the energy-guzzling graphics processing unit (GPU) chips widely used in machine learning.

“Each resistor is simple and kind of meaningless on its own,” says Dillavou. “But when you put them in a network, you can train them to do a variety of things.”

One task the circuit has performed is classifying flowers by properties such as petal length and width. When given these flower measurements, the circuit could sort them into three species of iris. This kind of activity is known as a “linear” classification problem, because when the iris information is plotted on a graph, the data can be cleanly divided into the correct categories using straight lines. In practice, the researchers represented the flower measurements as voltages, which they fed as input into the circuit. The circuit then produced an output voltage, which corresponded to one of the three species.

This is a fundamentally different way of encoding data from the approach used in GPUs, which represent information as binary 1s and 0s. In this circuit, information can take on a maximum or minimum voltage or anything in between. The circuit classified 120 irises with 95% accuracy.

Now the team has managed to make the circuit solve a more complex problem. In a preprint currently under review, the researchers have shown that it can perform a logic operation known as XOR, in which the circuit takes in two binary inputs and determines whether they differ. This is a “nonlinear” classification task, says Dillavou, and “nonlinearities are the secret sauce behind all machine learning.”
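To make the linear versus nonlinear distinction concrete, here is a minimal Python sketch. It is an illustration only, not the researchers' circuit or method: the function names and the weight grid are assumptions chosen for the example. It searches a grid of straight-line decision rules for one that reproduces XOR and finds none, then shows that combining two such rules does the job.

```python
from itertools import product

# Truth table for XOR: the output is 1 only when the two inputs differ.
XOR_TABLE = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}


def linear_unit(w1, w2, b, x1, x2):
    """A single straight-line rule: output 1 on one side of the line, else 0."""
    return 1 if w1 * x1 + w2 * x2 + b > 0 else 0


def matches_xor(classify):
    """Check a two-input classifier against every row of the XOR table."""
    return all(classify(x1, x2) == y for (x1, x2), y in XOR_TABLE.items())


def search_single_line(grid):
    """Try every (w1, w2, b) on the grid; return the first that matches XOR."""
    for w1, w2, b in product(grid, repeat=3):
        if matches_xor(lambda x1, x2: linear_unit(w1, w2, b, x1, x2)):
            return (w1, w2, b)
    return None  # no single line on this grid separates the XOR classes


def two_line_xor(x1, x2):
    """Combine two straight-line rules: 'at least one input on' and not 'both on'."""
    at_least_one = linear_unit(1, 1, -0.5, x1, x2)
    both = linear_unit(1, 1, -1.5, x1, x2)
    return linear_unit(1, -1, -0.5, at_least_one, both)


if __name__ == "__main__":
    grid = [i / 2 for i in range(-6, 7)]  # hypothetical weights from -3 to 3
    print("single line found:", search_single_line(grid))
    print("two combined lines match XOR:", matches_xor(two_line_xor))
```

The grid search is only a spot check, but the result holds in general: no single straight line separates the XOR outputs, which is why the task counts as nonlinear.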

Their demonstrations are a walk in the park for the devices you use every day. But that’s not the point: Dillavou and his colleagues built this circuit as an exploratory effort to find better computing designs. The computing industry faces an existential challenge as it strives to deliver ever more powerful machines. Between 2012 and 2018, the computing power required for cutting-edge AI models increased 300,000-fold. Now, training a large language model takes the same amount of energy as the annual consumption of more than a hundred US homes. Dillavou hopes that his design offers an alternative, more energy-efficient approach to building faster AI.

Training in pairs

To perform its various tasks correctly, the circuitry requires training, just like contemporary machine-learning models that run on conventional computing chips. ChatGPT, for example, learned to generate human-sounding text after being shown many instances of real human text; the circuit learned to predict which measurements corresponded to which type of iris after being shown flower measurements labeled with their species.

Training the device involves using a second, identical circuit to “instruct” the first device. Both circuits start with the same resistance values for each of their 32 variable resistors. Dillavou feeds both circuits the same inputs—a voltage corresponding to, say, petal width—and adjusts the output voltage of the second circuit to correspond to the correct species. The first circuit receives feedback from that second circuit, and both circuits adjust their resistances so they converge on the same values. The cycle starts again with a new input, until the circuits have settled on a set of resistance levels that produce the correct output for the training examples. In essence, the team trains the device via a method known as supervised learning, where an AI model learns from labeled data to predict the labels for new examples.
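The paired training loop can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the team's implementation: the network is shrunk to a two-resistor voltage divider, and the update rule (compare each resistor's voltage drop in the "free" copy with its drop in the nudged copy) is an assumption in the style of contrastive local learning rules, since the article does not spell out the exact rule. The parameters `eta` and `alpha` are likewise hypothetical.

```python
def divider_output(v_in, g1, g2):
    """Output voltage of a divider: g1 links input to output, g2 links output to ground."""
    return v_in * g1 / (g1 + g2)


def train_divider(v_in, target, g1=1.0, g2=1.0, eta=0.1, alpha=0.1, steps=500):
    """Train two conductances so the divider output approaches `target`.

    eta   -- how far the second circuit's output is nudged toward the target
    alpha -- learning rate for the conductance updates
    """
    for _ in range(steps):
        # First ("free") circuit: the output settles wherever physics puts it.
        v_free = divider_output(v_in, g1, g2)
        # Second circuit: same resistors, output held slightly closer to the target.
        v_nudged = v_free + eta * (target - v_free)

        # Voltage drop across each resistor in each circuit.
        drop1_free, drop2_free = v_in - v_free, v_free
        drop1_nudged, drop2_nudged = v_in - v_nudged, v_nudged

        # Local rule: each resistor compares only its own two voltage drops.
        g1 += (alpha / eta) * (drop1_free ** 2 - drop1_nudged ** 2)
        g2 += (alpha / eta) * (drop2_free ** 2 - drop2_nudged ** 2)

        # Keep conductances physical (strictly positive).
        g1, g2 = max(g1, 1e-6), max(g2, 1e-6)
    return g1, g2


if __name__ == "__main__":
    g1, g2 = train_divider(v_in=1.0, target=0.3)
    print("trained output:", divider_output(1.0, g1, g2))
```

Note that no resistor ever sees the overall error. Each one adjusts using only the voltages across itself, which is the kind of bottom-up behavior the article describes.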

It can help, Dillavou says, to think of the electric current in the circuit as water flowing through a network of pipes. The equations governing fluid flow are analogous to those governing electron flow and voltage. Voltage corresponds to fluid pressure, while electrical resistance corresponds to the pipe diameter, with a narrower pipe resisting flow more. During training, the “pipes” in different parts of the network adjust their diameters in order to achieve the desired output pressure. In fact, early on, the team considered building the circuit out of water pipes rather than electronics.

For Dillavou, one fascinating aspect of the circuit is what he calls its “emergent learning.” In a human, “every neuron is doing its own thing,” he says. “And then as an emergent phenomenon, you learn. You have behaviors. You ride a bike.” It’s similar in the circuit. Each resistor adjusts itself according to a simple rule, but collectively they “find” the answer to a more complicated question without any explicit instructions.

A potential energy advantage

Dillavou’s prototype qualifies as a type of analog computer, one that encodes information along a continuum of values instead of the discrete 1s and 0s used in digital circuitry. The first computers were analog, but their digital counterparts superseded them after engineers developed fabrication techniques to squeeze more transistors onto digital chips to boost their speed. Still, experts have long known that as they increase in computational power, analog computers offer better energy efficiency than digital computers, says Aatmesh Shrivastava, an electrical engineer at Northeastern University. “The power efficiency benefits are not up for debate,” he says. However, he adds, analog signals are much noisier than digital ones, which makes them ill-suited for any computing tasks that require high precision.

In practice, Dillavou’s circuit hasn’t yet surpassed digital chips in energy efficiency. His team estimates that their design uses about 5 to 20 picojoules per resistor to generate a single output, where each resistor represents a single parameter in a neural network. Dillavou says this is about a tenth as efficient as state-of-the-art AI chips. But he says that the promise of the analog approach lies in scaling the circuit up, to increase its number of resistors and thus its computing power.

He explains the potential energy savings this way: Digital chips like GPUs expend energy per operation, so making a chip that can perform more operations per second just means a chip that uses more energy per second. In contrast, the energy usage of his analog computer is based on how long it is on. Should they make their computer twice as fast, it would also become twice as energy efficient.
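The scaling argument comes down to simple arithmetic, sketched below in Python. This is an idealized model of the reasoning as described, not a measurement: the function names and every number in the usage section are hypothetical, and real chips have overheads this ignores.

```python
def digital_energy_per_task(ops_per_task, joules_per_op, speedup=1.0):
    """Digital model: energy is paid per operation, so speed does not change it."""
    return ops_per_task * joules_per_op


def digital_power(ops_per_task, joules_per_op, seconds_per_task, speedup=1.0):
    """Running the same operations in less time draws proportionally more power."""
    return ops_per_task * joules_per_op / (seconds_per_task / speedup)


def analog_energy_per_task(power_watts, seconds_per_task, speedup=1.0):
    """Analog model: energy is power times on-time, so a faster run costs less."""
    return power_watts * (seconds_per_task / speedup)


if __name__ == "__main__":
    # Hypothetical figures, chosen only to make the comparison easy to read.
    ops, j_per_op, seconds, watts = 1_000_000, 1e-12, 1e-3, 1e-3
    for s in (1.0, 2.0):
        print(
            f"speedup {s}: digital {digital_energy_per_task(ops, j_per_op, s):.2e} J "
            f"at {digital_power(ops, j_per_op, seconds, s):.2e} W, "
            f"analog {analog_energy_per_task(watts, seconds, s):.2e} J"
        )
```

In this model, doubling the speed leaves the digital energy per task unchanged while doubling its power draw, whereas the analog energy per task is cut in half.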

Dillavou’s circuit is also a type of neuromorphic computer, meaning one inspired by the brain. Like other neuromorphic schemes, the researchers’ circuitry doesn’t operate according to top-down instruction the way a conventional computer does. Instead, the resistors adjust their values in response to external feedback in a bottom-up approach, similar to how neurons respond to stimuli. In addition, the device does not have a dedicated component for memory. This could offer another energy efficiency advantage, since a conventional computer expends a significant amount of energy shuttling data between processor and memory.

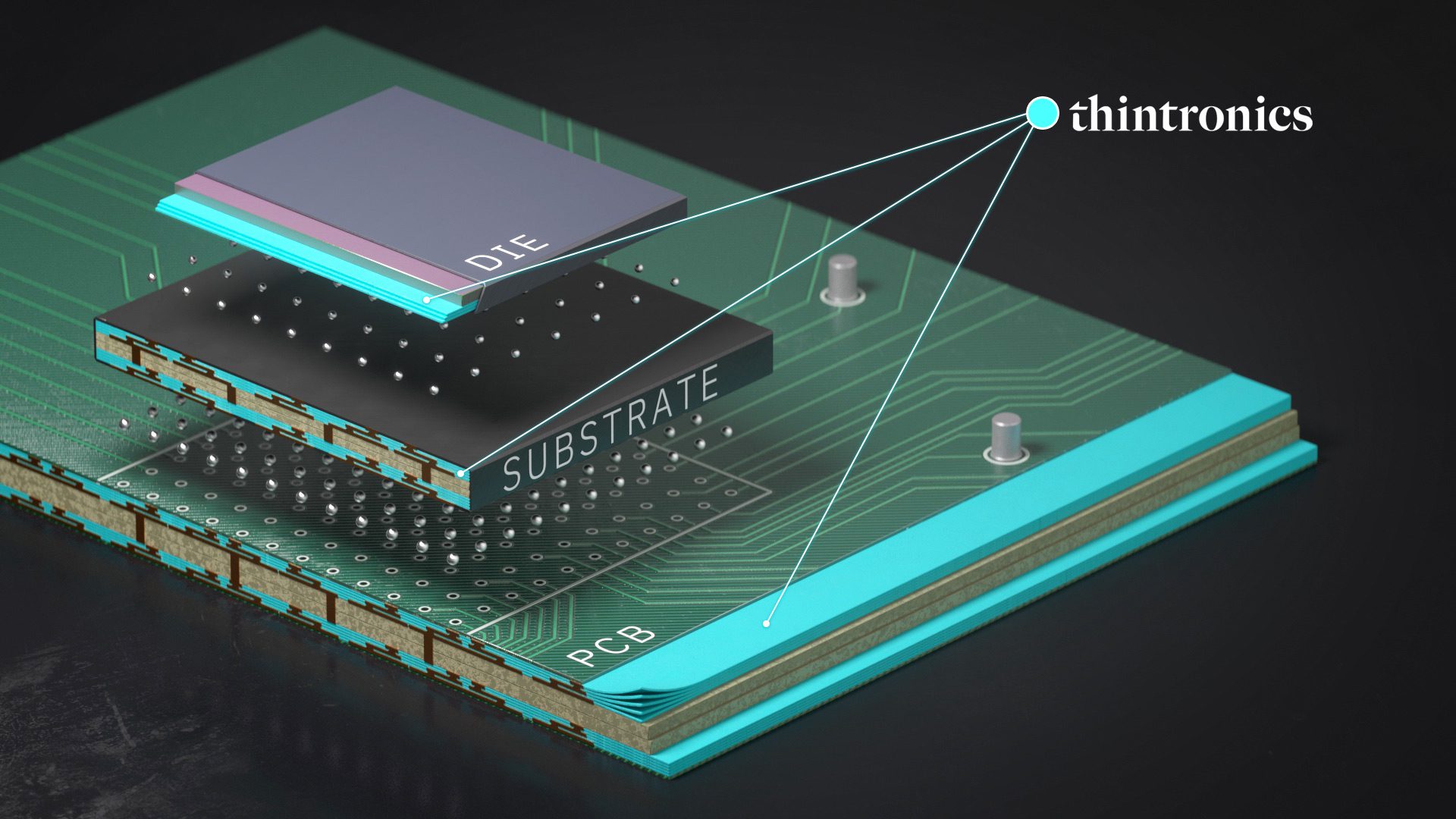

While researchers have already built a variety of neuromorphic machines based on different materials and designs, the most technologically mature designs are built on semiconducting chips. One example is Intel’s neuromorphic computer Loihi 2, to which the company began providing access for government, academic, and industry researchers in 2021. DeepSouth, a chip-based neuromorphic machine at Western Sydney University that is designed to be able to simulate the synapses of the human brain at scale, is scheduled to come online this year.

The machine-learning industry has shown interest in chip-based neuromorphic computing as well, with a San Francisco–based startup called Rain Neuromorphics raising $25 million in February. However, researchers still haven’t found a commercial application where neuromorphic computing definitively demonstrates an advantage over conventional computers. In the meantime, researchers like Dillavou’s team are putting forth new schemes to push the field forward. A few people in industry have expressed interest in his circuit. “People are most interested in the energy efficiency angle,” says Dillavou.

But their design is still a prototype, with its energy savings unconfirmed. For their demonstrations, the team kept the circuit on breadboards because it’s “the easiest to work with and the quickest to change things,” says Dillavou, but the format suffers from all sorts of inefficiencies. They are testing their device on printed circuit boards to improve its energy efficiency, and they plan to scale up the design so it can perform more complicated tasks. It remains to be seen whether their clever idea can take hold outside the lab.