If you’ve been running Google Ads for more than a few years, your job description has changed without your consent. Match types that once signaled precision now target “related intent”; a 2023 rebuild made Broad Match competitive again; and Smart Bidding shifted the focus from keywords to outcomes like return on ad spend (ROAS) and cost-per-action (CPA). Now, with AI Max, keywords are becoming optional in Search campaigns altogether.

I joined Google in 2002 as one of its first few hundred employees and spent a decade as the first AdWords Evangelist. Back then, the keyword was the undisputed foundation of paid search. After 24 years in the industry, my conclusion is simple: Keywords are dead.

This isn’t a slogan. It is a technical reality. The core system is being replaced, even if the legacy interface remains. As users shift from search queries to conversational prompts, the “synthetic keyword” – a distillation of complex intent – is replacing the legacy keyword. We are moving toward an auction that runs on pure intent, with no keyword abstraction required. We aren’t there yet, but if you still define PPC as “picking the right keywords,” the ground is shifting under you.

Here is what we are losing, and gaining, as this transition plays out.

The Original Deal

For most of Google Ads’ history, keywords worked like a contract.

You agreed to put in the work to research relevant keywords for your business so that Google could show useful ads to searchers. You structured your account around them. You wrote ads that spoke to their intent. In return, Google agreed to show your ads only when a query matched one of those keywords under the match type you chose.

- Exact meant exact.

- Phrase meant phrase.

- Broad was the wildcard for advertisers willing to trade precision for reach.

That arrangement gave us something valuable: diagnosability. When a campaign underperformed, you opened the search terms report and saw, line by line, exactly what you were paying for. Bad queries got negatived. Good queries got promoted. Match type was the main lever we had, and we used it carefully.

That’s the world I helped build. It worked for a long time because the technology underpinning search was limited and couldn’t realistically do anything useful with more precise keywords, the kind that would have exceeded the 10-word maximum.

What Changed, One Product Decision At A Time

The deal didn’t break in a single moment. It came apart over a decade of decisions that often raised advertisers’ blood pressure and brought us to where we are now.

Close variants came first. Exact started including misspellings, then plurals, then function-word variations. By the mid-2010s, “exact match” was already a misnomer. The match type hadn’t changed, but the definition of a match had.

Smart Bidding shifted the center of gravity. Once bids were being set against conversion probability, the question of which keyword triggered the auction mattered less than the question of whether this user would convert. Match type became a throttle for how aggressively the system could explore new queries.

The 2023 Broad Match overhaul changed the narrative. Google invested real engineering into making Broad the semantically intelligent match type – and publicly reported ~25% more conversions in Target CPA campaigns. Advertisers who’d spent 15 years feeling Broad was a money pit were now being told Broad was the future.

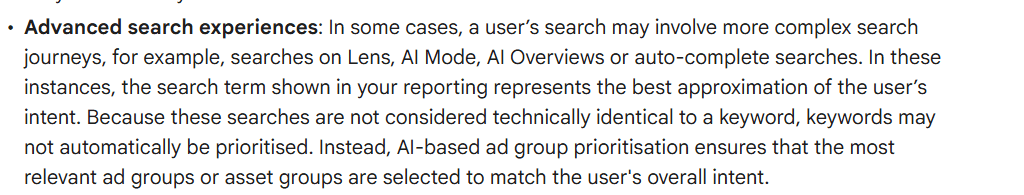

AI Max is where the synthetic keyword shows up. Give Google your URL, your assets, and your business data, and the system finds the intent. From the advertiser’s side, keywords become optional. But the auction itself still runs on a keyword substrate. What’s changed is who picks the keywords that continue to underpin the ads auction and how visible those are to advertisers. Instead of you declaring a keyword list, Google now generates intent matches on the fly from the user’s prompt and your business signals.

And it isn’t just Google.

At Optmyzr, we recently started placing ads on ChatGPT. On OpenAI’s ad surface, keywords are optional from day one. You feed the system signals about your business, and it matches your ad to the shape of the user’s question rather than a phrase you pre-declared.

When the company that defined keyword advertising and the company reinventing search both land on keyword-optional intent matching, that’s a pretty clear signal that intent itself has outgrown the keyword as the unit of targeting. The signals now live in your pages, products, prompts, and context, not in a list you typed into an ad group.

What We’re Losing

I’m not going to pretend this transition is costless. Three things are being taken away.

Granular diagnosability is the first casualty. When a keyword-less campaign underperforms, the old debugging playbook of reading the search terms report, finding the bad queries, adding negatives, and tightening match type only half works. Negative keywords still exist and still matter. But the intent-matching engine is harder to reason about. “Why did my ad show here?” has a fuzzier answer in 2026 than it did in 2016.

The craft of account structure is second. For two decades, one of the hallmarks of a good PPC manager was the ability to architect a campaign. Tight ad groups. Themed structures. Clean branded-versus-non-branded separation. A lot of that structure was a proxy for control. Once the system handles more of the targeting, the strategic value of elaborate structure drops. Some of it was always over-engineering. But the practice of thinking carefully about how intent maps to campaigns was real craft, and it’s at risk of atrophying.

Training is the third. Junior PPC analysts used to build their intuition inside the search terms report. You’d watch queries for a week and start to understand how users phrase problems, how language drifts, how seasonal variations leak into the data. That was a masterclass in consumer psychology. A system that abstracts the keyword away also abstracts away one of the best teaching tools this discipline has ever had. And it removes our ability as marketers to detect shifts in consumer behavior that would normally help us evolve our strategy.

What We’re Gaining

But for all we’re losing in this necessary shift, we’re also gaining a few things.

Coverage of queries no keyword list ever catches. Zero-click queries, brand-new phrases, generational vernacular, localized slang. These are exactly the places where intent-based matching outperforms manual keyword selection. Not because humans are inattentive, but because the space is too big and too dynamic to enumerate.

A lower maintenance tax. Negative keyword lists that stretch into the thousands, endless query audits, SKAG construction, quarterly match type experiments. A lot of that work was overhead imposed by the gap between advertiser language and user language. Closing that gap algorithmically frees up hours for strategy, creative, and measurement.

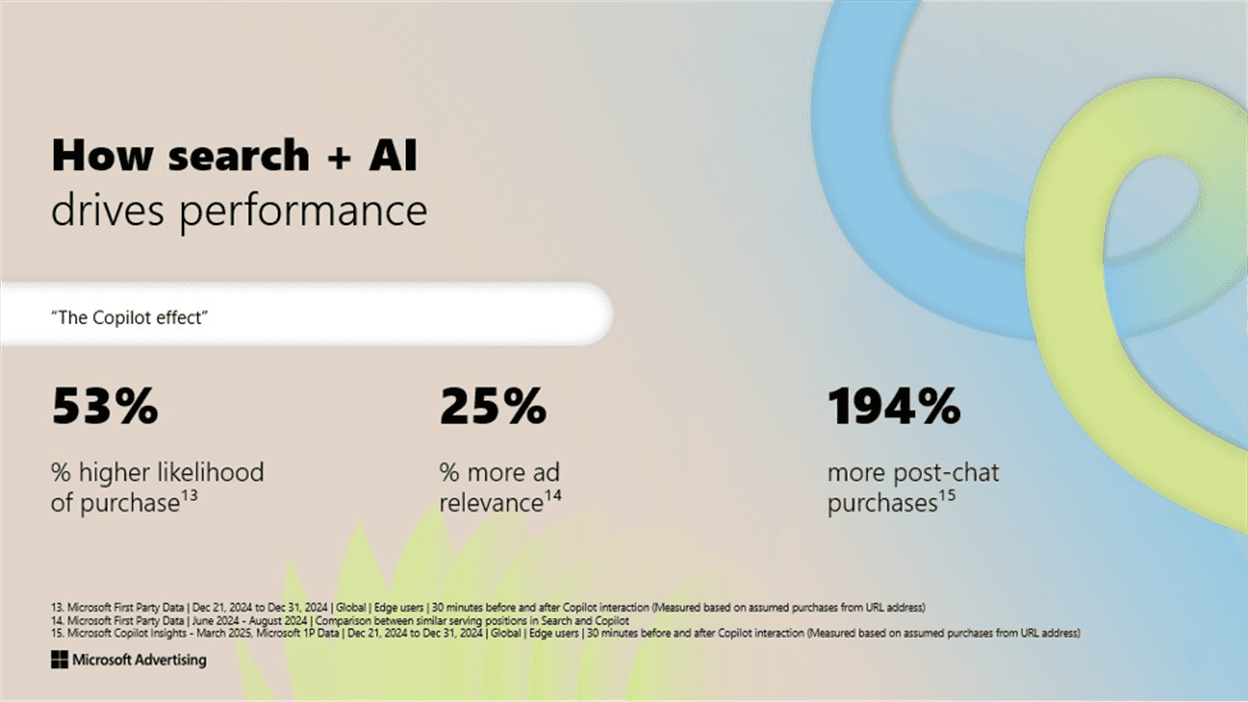

Access to signals no advertiser can match manually. Google’s LLM-driven query understanding sees more of the user’s journey than any keyword list ever will. If you’re unwilling to let those signals into your targeting, you’re choosing to compete in an auction where your opponents have information you don’t.

The Data Already Shows The Shift

We just ran Optmyzr’s 2026 Match Type Study across nearly 130,000 non-branded campaigns in more than 14,000 accounts, totaling roughly $99 million in spend. It’s the clearest quantitative sign I’ve seen that practitioners are already adapting to this new reality, whether they’ve articulated it or not.

A few highlights that matter here:

- Exact Match’s share of non-branded spend has collapsed from 37.1% in 2022 to 27.6% today. Most of that drop happened in the last 24 months. Advertisers haven’t stopped using Exact, but they are shifting towards less control and letting the AI handle more of the targeting.

- On branded terms, the story flips. Exact Match delivers 6.61x ROAS at a $0.90 CPC, nearly double the ROAS of either alternative. Brand intent is known intent, and Exact still owns it.

- Phrase Match is now the workhorse. It drives 40% of non-branded conversions and posts a 15.7% conversion rate, well above Exact’s 10.5% and Broad’s 8.5%. Phrase has become what Exact used to be: the default tool for scalable, intent-respectful discovery.

- Broad Match keeps climbing. It now represents 38.8% of non-branded spend, the single largest bucket. Its ROAS still trails the other two, but its volume contribution makes it no longer optional for most advertisers.

The industry has already been migrating toward looser, more intent-driven matching on non-branded queries while preserving tight control where intent is certain. AI Max just turns the dial further in the same direction.
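To put the Exact Match figures above in perspective, it helps to separate the percentage-point change from the relative change. The sketch below is plain arithmetic on the two shares quoted from the study; the function name is purely illustrative.

```python
def share_change(old_share: float, new_share: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change) between two shares.

    Shares are given in percent, e.g. 37.1 for 37.1%.
    """
    if old_share <= 0:
        raise ValueError("old_share must be positive")
    points = new_share - old_share   # change in percentage points
    relative = points / old_share    # change relative to the starting share
    return points, relative


# Exact Match share of non-branded spend: 37.1% in 2022, 27.6% today.
points, relative = share_change(37.1, 27.6)
print(f"{points:+.1f} points, {relative:+.1%} relative")
```

A drop of about 9.5 points on a 37.1% base means Exact has lost roughly a quarter of its former share of non-branded spend.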

Menachem Ani, who runs the agency JXT Group and joined me on a recent episode of PPC Town Hall, described the same playbook from the trenches. Start new lead gen campaigns on manual CPC and Phrase Match. Collect good-quality traffic for a few weeks. Promote the winners to Exact. Only then layer Broad and Smart Bidding on top.

Exact, he said, is “too specific for a new campaign.” Broad is “overly aggressive right at the beginning.” Phrase is “the sweet middle spot” – flexible enough to find intent the advertiser didn’t think of, tight enough to keep the data usable.

It’s a big shift when the agency practitioners you’d expect to defend keyword-level control now independently arrive at “Phrase first, Exact later, Broad on top.”

5 Shifts Worth Making In The Next 6 Months

1. Separate Branded And Non-Branded Campaigns

This was always best practice. In a world where intent-based matching blurs campaign boundaries, a sloppy brand separation is the difference between 6.61x ROAS and 3.0x. Build a dedicated branded campaign. Lock it to Exact. Stop letting non-branded creep in.
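One way to check how clean your separation really is: export search terms from your non-branded campaigns and flag any that contain a brand term. The sketch below assumes you maintain your own list of brand names and common misspellings. The `BRAND_TERMS` values and function names are hypothetical, and nothing here calls a Google Ads API.

```python
import re

# Hypothetical brand terms; replace with your own brand names and misspellings.
BRAND_TERMS = ["acme", "acme tools", "akme"]


def is_branded(search_term: str, brand_terms=BRAND_TERMS) -> bool:
    """Return True if the search term contains any brand term as a whole word."""
    text = search_term.lower()
    return any(
        re.search(rf"\b{re.escape(term.lower())}\b", text)
        for term in brand_terms
    )


def split_by_brand(search_terms, brand_terms=BRAND_TERMS):
    """Partition search terms into (branded, non_branded) lists, keeping order."""
    branded, non_branded = [], []
    for term in search_terms:
        if is_branded(term, brand_terms):
            branded.append(term)
        else:
            non_branded.append(term)
    return branded, non_branded


terms = ["acme drill pricing", "cordless drill reviews", "Akme login"]
branded, non_branded = split_by_brand(terms)
print(branded)      # terms that belong in the branded campaign
print(non_branded)  # terms that belong in non-branded campaigns
```

Branded terms that turn up in a non-branded campaign's report are candidates for negative keywords in that campaign, so the branded campaign keeps that traffic.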

2. Invest In The Signals Google Actually Reads – Including Offline Conversions

Your landing pages, feed quality, asset library, and business data aren’t just conversion-rate inputs anymore. They’re the targeting inputs AI Max uses to decide when you appear. If you spent 10 years refining keyword lists and one year refining URL and asset hygiene, flip the ratio.

For lead gen specifically, the highest-leverage move is piping qualified-lead data back to Google. Menachem’s rule of thumb was practical: Salesforce, HubSpot, and Zoho have native integrations. For anything else, Make.com or Zapier will send the events back for you. You need roughly 100 leads a month to generate the 30+ qualified conversions Smart Bidding and AI Max want to see before they’ll optimize toward them.

The difference between optimizing to “cost per lead” and “cost per qualified lead” is usually the difference between a campaign that looks good on a dashboard and one that actually grows the business.
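The rule of thumb above implies a qualification rate of roughly 30% (about 100 leads yielding 30 qualified conversions). If your rate is different, the volume you need changes with it. Here is a minimal sketch of that arithmetic; the spend figure in the example is made up for illustration.

```python
import math


def leads_needed(target_qualified: int, qualification_rate: float) -> int:
    """Monthly raw leads needed to reach a target count of qualified conversions."""
    if not 0 < qualification_rate <= 1:
        raise ValueError("qualification_rate must be in (0, 1]")
    # The small epsilon guards against floating-point noise pushing ceil() up by one.
    return math.ceil(target_qualified / qualification_rate - 1e-9)


def cost_per_qualified_lead(spend: float, leads: int, qualification_rate: float) -> float:
    """Spend divided by the expected number of qualified leads."""
    qualified = leads * qualification_rate
    if qualified <= 0:
        raise ValueError("no qualified leads to divide by")
    return spend / qualified


# 30 qualified conversions at a 30% qualification rate needs about 100 leads.
print(leads_needed(30, 0.30))
# At a 20% rate, the same target needs more raw volume.
print(leads_needed(30, 0.20))
# Hypothetical $5,000 monthly spend: $50 per lead, but much more per qualified lead.
print(round(cost_per_qualified_lead(5000, 100, 0.30), 2))
```

The last line is the dashboard-versus-business gap in numbers: the same spend that looks like $50 per lead costs more than three times that per lead worth following up.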

3. Treat Negative Keywords As Your Last Line Of Control

In the AI Max era, negatives still work. They’re the most powerful remaining tool for saying “not that, not ever” to the machine. Maintain them aggressively. Automate the additions. This is where brand safety, budget discipline, and irrelevance prevention now live.
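As a rough illustration of what automating the additions could look like, the sketch below scans exported search term rows and flags terms that spent real money without converting. The row fields and the thresholds are assumptions to tune per account, and the step that actually uploads negatives is deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class SearchTermRow:
    term: str
    cost: float
    clicks: int
    conversions: float


def negative_candidates(rows, min_cost=50.0, min_clicks=20):
    """Return zero-conversion terms above the spend and click thresholds.

    Thresholds are illustrative defaults, not recommendations.
    Results are sorted by cost, most expensive first.
    """
    flagged = [
        r for r in rows
        if r.conversions == 0 and r.cost >= min_cost and r.clicks >= min_clicks
    ]
    return sorted(flagged, key=lambda r: r.cost, reverse=True)


rows = [
    SearchTermRow("free ppc course", cost=120.0, clicks=60, conversions=0),
    SearchTermRow("ppc management software", cost=300.0, clicks=90, conversions=12),
    SearchTermRow("ppc jobs near me", cost=75.0, clicks=25, conversions=0),
    SearchTermRow("ppc meaning", cost=8.0, clicks=4, conversions=0),
]
for row in negative_candidates(rows):
    print(row.term, row.cost)
```

Requiring a minimum number of clicks keeps the rule from blocking terms that simply haven't had enough traffic to prove themselves yet.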

4. Test AI Max Where You’d Already Run PMax – And Test It With A Hold-Out

Menachem put this more cleanly than I could: “Use AI Max when you would use PMax.” The underpinnings are the same. The algorithm is pulling search and shopping signals together and deciding where your ad fits. The prerequisites are the same too: enough conversion volume, clean tracking, and a business the algorithm has been taught to recognize.

The accounts getting the most out of AI Max aren’t the ones that flipped the switch and walked away. They’re the ones that ran it against a proper hold-out, measured incrementality, and kept their keyword-based campaigns running on the traffic where those still had an efficiency edge – usually branded terms and proven high-intent exact queries.
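For readers who haven't run one, the core arithmetic of a hold-out comparison is simple. The sketch below compares conversion rates between a group exposed to AI Max and a held-out group. It assumes the two groups are comparable (for example, randomly assigned users or matched regions) and it does not test for statistical significance. All names and numbers are illustrative.

```python
def incremental_lift(test_conversions: float, test_size: float,
                     control_conversions: float, control_size: float) -> float:
    """Relative lift of the test group's conversion rate over the hold-out's."""
    if test_size <= 0 or control_size <= 0:
        raise ValueError("group sizes must be positive")
    test_rate = test_conversions / test_size
    control_rate = control_conversions / control_size
    if control_rate == 0:
        raise ValueError("control rate is zero; relative lift is undefined")
    return (test_rate - control_rate) / control_rate


def incremental_conversions(test_conversions: float, test_size: float,
                            control_conversions: float, control_size: float) -> float:
    """Conversions in the test group beyond what the hold-out rate would predict."""
    if test_size <= 0 or control_size <= 0:
        raise ValueError("group sizes must be positive")
    expected = (control_conversions / control_size) * test_size
    return test_conversions - expected


# Illustrative numbers: 120 conversions from 1,000 exposed vs. 100 from 1,000 held out.
print(f"{incremental_lift(120, 1000, 100, 1000):.1%}")
print(incremental_conversions(120, 1000, 100, 1000))
```

The point of the hold-out is the second function: only the conversions above the baseline count as something AI Max added.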

5. Upgrade Your Own Skill Stack

The future PPC manager isn’t a keyword picker. They’re an intent engineer: someone who can translate business goals into the signals Google’s system will learn from, debug a semi-black box with query reports and experiments, and explain to a client what’s actually happening inside their account, even when the dashboard only shows aggregate results.

That’s a harder job than picking keywords. It’s also a more defensible one.

The Bigger Picture

I helped build a system designed for control; what’s being built now is a system designed for leverage. Conflating the two is why so many practitioners feel frustrated by tools that are actually performing. Control was about dictating terms to Google. Leverage is about feeding the engine the right signals and letting the auction execute at a scale no human team can match.

Our 2026 data shows the industry is already halfway through this transition. For PPC teams, the question isn’t whether to adapt, but how fast. The keyword as an advertiser artifact is dead. We are moving toward an auction powered by intent alone. Your job is no longer to defend the old interface, but to master the inputs the new one requires.


Featured Image: Accogliente Design/Shutterstock