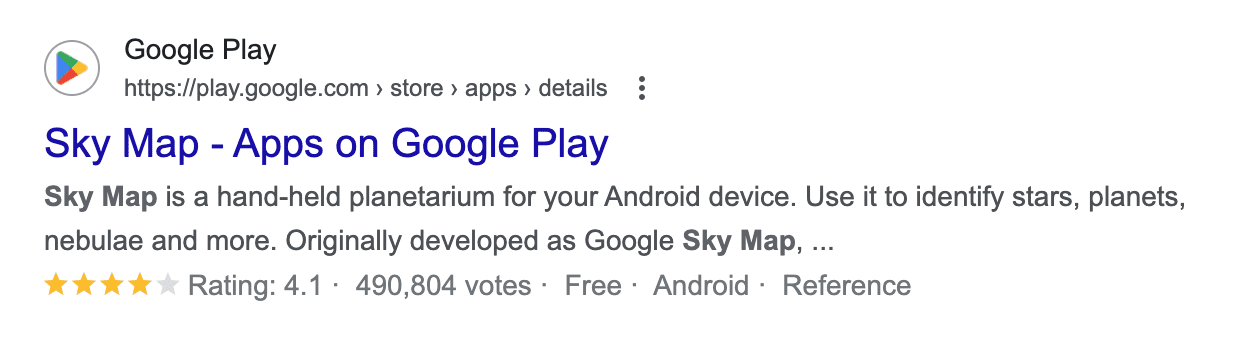

In a series of tweets, Google’s SearchLiaison responded to a question that connected click-through rates (CTR) and HCU (Helpful Content Update) with how Google ranks websites, remarking that if the associated ideas were true it would be impossible for any new website to rank.

Users Are Voting With Their Feet?

SearchLiaison was answering a tweet that quoted a remark Google CEO Sundar Pichai made in an interview: “Users vote with their feet”.

Here is the tweet:

“If the HCU (Navboost, whatever you want to call it) is clicks/user reaction based – how could sites hit by the HCU ever hope to recover if we’re no longer being served to Google readers?

@sundarpichai “Users vote with their feet”,

Okay I’ve changed my whole site – let them vote!”

The above tweet appears to connect Pichai’s statement to Navboost, user clicks and rankings. But as you’ll see below, Sundar’s statement about users voting “with their feet” has nothing to do with clicks or ranking algorithms.

Background Information

Sundar Pichai’s answer about users voting “with their feet” has nothing to do with clicks.

The problem is that both the interview question and Sundar Pichai’s answer were in the context of “AI-powered search and the future of the web.”

The interviewer at The Verge used a site called HouseFresh as an example of a site that’s losing traffic because of Google’s platform shift to the new AI Overviews.

But the HouseFresh site’s complaints predate AI Overviews. Their complaints are about Google ranking low quality “big media” product reviews over independent sites like HouseFresh.

HouseFresh wrote:

“Big media publishers are inundating the web with subpar product recommendations you can’t trust…

Savvy SEOs at big media publishers (or third-party vendors hired by them) realized that they could create pages for ‘best of’ product recommendations without the need to invest any time or effort in actually testing and reviewing the products first.”

Sundar Pichai’s answer has nothing to do with why HouseFresh is losing traffic. His answer is about AI Overviews. HouseFresh’s issues are about low quality big brands outranking them. Two different things.

- The Verge-affiliated interviewer was mistaken to cite HouseFresh in connection with Google’s platform shift to AI Overviews.

- Furthermore, Pichai’s statement has nothing to do with clicks and rankings.

Here is the interview question published on The Verge:

“There’s an air purifier blog that we covered called HouseFresh. There’s a gaming site called Retro Dodo. Both of these sites have said, “Look, our Google traffic went to zero. Our businesses are doomed.”

…Is that the right outcome here in all of this — that the people who care so much about video games or air purifiers that they started websites and made the content for the web are the ones getting hurt the most in the platform shift?”

Sundar Pichai answered:

“It’s always difficult to talk about individual cases, and at the end of the day, we are trying to satisfy user expectations. Users are voting with their feet, and people are trying to figure out what’s valuable to them. We are doing it at scale, and I can’t answer on the particular site—”

Pichai’s answer has nothing to do with ranking websites and no connection at all to the HCU. What Pichai’s answer means is that users are determining whether or not AI Overviews are helpful to them.

SearchLiaison’s Answer

Let’s reset the context of SearchLiaison’s answer. Here is the tweet (again) that started the discussion:

“If the HCU (Navboost, whatever you want to call it) is clicks/user reaction based – how could sites hit by the HCU ever hope to recover if we’re no longer being served to Google readers?

@sundarpichai “Users vote with their feet”,

Okay I’ve changed my whole site – let them vote!”

Here is SearchLiaison’s response:

“If you think further about this type of belief, no one would ever rank in the first place if that were supposedly all that matters — because how would a new site (including your site, which would have been new at one point) ever been seen?

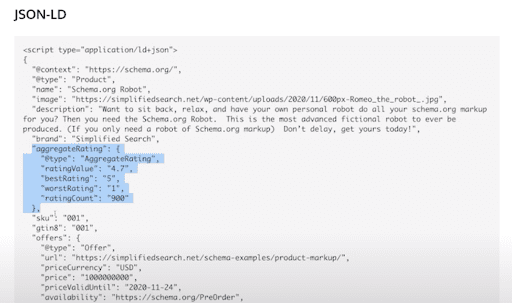

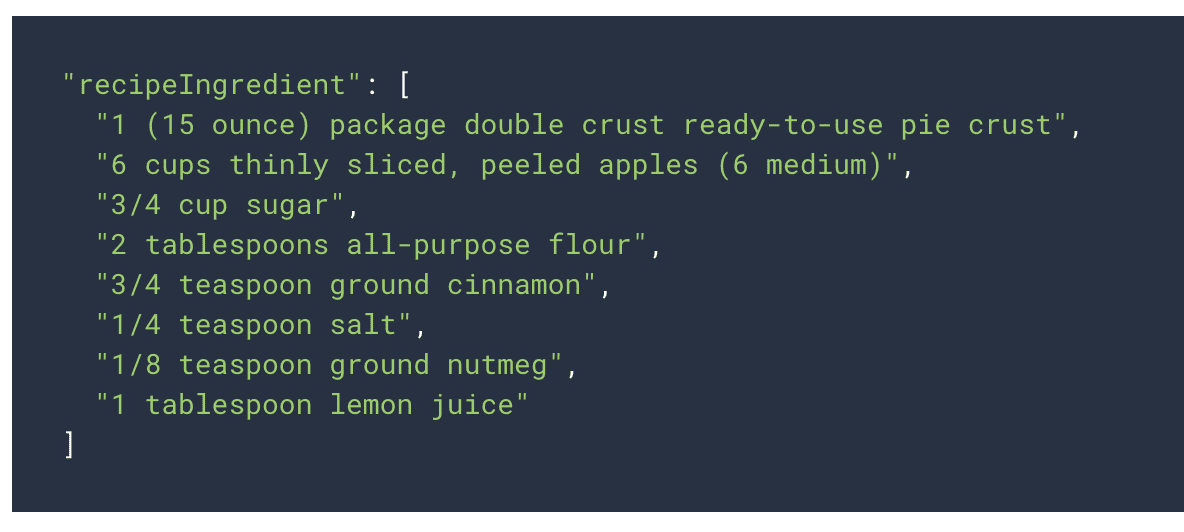

The reality is we use a variety of different ranking signals including, but not solely, “aggregated and anonymized interaction data” as covered here:”

The person who started the discussion responded with:

“Can you please tell me if I’m doing right by focusing on my site and content – writing new articles to be found through search – or if I should be focusing on some off-site effort related to building a readership? It’s frustrating to see traffic go down the more effort I put in.”

When a client says something like “writing new articles to be found through search” I always follow up with questions to understand what they mean. I’m not commenting about the person who made the tweet, I’m just making an observation about past conversations I’ve had with clients. When a client says something like that, they sometimes mean that they’re researching Google keywords and competitor sites and using that keyword data verbatim within their content instead of relying on their own personal expertise and understanding of what the readers want and need.

Here’s SearchLiaison’s answer:

“As I’ve said before, I think everyone should focus on doing whatever they think is best for their readers. I know it can be confusing when people get lots of advice from different places, and then they also hear about all these things Google is supposedly doing, or not doing, and really they just want to focus on content. If you’re lost, again, focus on that. That is your touchstone.”

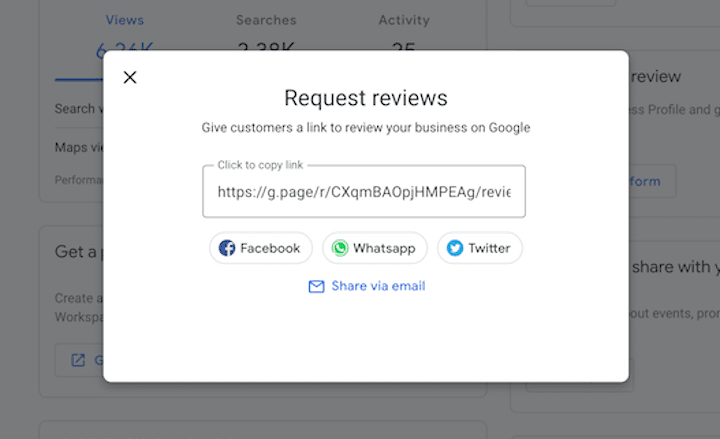

Site Promotion To People

SearchLiaison next addressed the excellent question about off-site promotion, strongly emphasizing a focus on readers. A lot of SEOs focus on promoting sites to Google, which is what link building is all about.

Promoting sites to people is super important. It’s one of the things that I see high ranking sites do and, although I won’t mention specifics, I believe it feeds into higher rankings in an indirect way.

SearchLiaison continued:

“As to the off-site effort question, I think from what I know from before I worked at Google Search, as well as my time being part of the search ranking team, is that one of the ways to be successful with Google Search is to think beyond it.

Great sites with content that people like receive traffic in many ways. People go to them directly. They come via email referrals. They arrive via links from other sites. They get social media mentions.

This doesn’t mean you should get a bunch of social mentions, or a bunch of email mentions because these will somehow magically rank you better in Google (they don’t, from how I know things). It just means you’re likely building a normal site in the sense that it’s not just intended for Google but instead for people. And that’s what our ranking systems are trying to reward, good content made for people.”

What About False Positives?

The phrase false positive is used in many contexts and one of them is to describe the situation of a high quality site that loses rankings because an algorithm erroneously identified it as low quality. SearchLiaison offered hope to high quality sites that may have seen a decrease in traffic, saying that it’s possible that the next update may offer a positive change.

He tweeted:

“As to the inevitable “but I’ve done all these things when will I recover!” questions, I’d go back to what we’ve said before. It might be the next core update will help, as covered here:

It might also be that, as I said here, it’s us in some of these cases, not the sites, and that part of us releasing future updates is doing a better job in some of these cases:”

SearchLiaison linked to a tweet by John Mueller from a month ago where he said that the search team is looking for ways to surface more helpful content.

“I can’t make any promises, but the team working on this is explicitly evaluating how sites can / will improve in Search for the next update. It would be great to show more users the content that folks have worked hard on, and where sites have taken helpfulness to heart.”

Is Your Site High Quality?

Everyone likes to think that their site is high quality and most times it is. But there are also cases where a site publisher will do “everything right” in terms of following SEO practices, unaware that those “good SEO practices” are backfiring on them.

One example, in my opinion, is the widely practiced strategy of copying what competitors are doing but “doing it better.” I’ve been hands-on involved in SEO for well over 20 years and that’s an example of building a site for Google and not for users. It’s a strategy that explicitly begins and ends with the question of “what is Google ranking and how can I create that?”

That kind of strategy can create patterns that overtly signal that a site is not created for users. It’s also a recipe for creating a site that offers nothing new from what Google is already ranking. So before assuming that everything is fine with the site, be certain that everything is indeed fine with the site.

Featured Image by Shutterstock/Michael Vi