How AI Overviews Surface Negative Reviews, Without Anyone Searching for Them

This post was sponsored by Erase.com. The opinions expressed in this article are the sponsor’s own.

Why is my brand appearing in AI comparisons I didn’t ask to be in?

How do I find out what AI tools are saying about my brand?

What’s the difference between traditional reputation management and AI reputation management?

AI now decides which of your brand’s reputation issues to show searchers, unprompted.

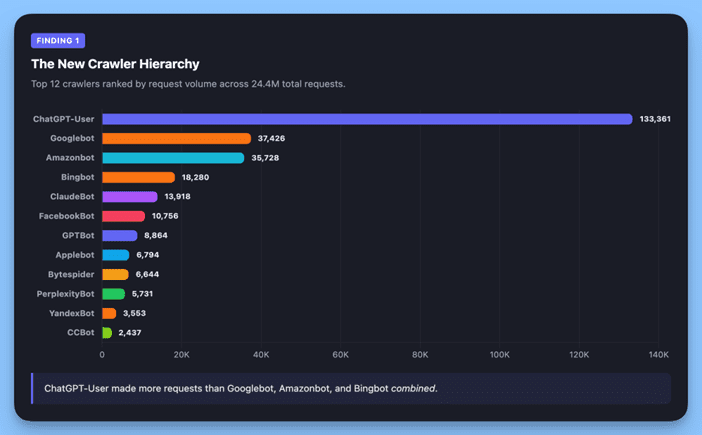

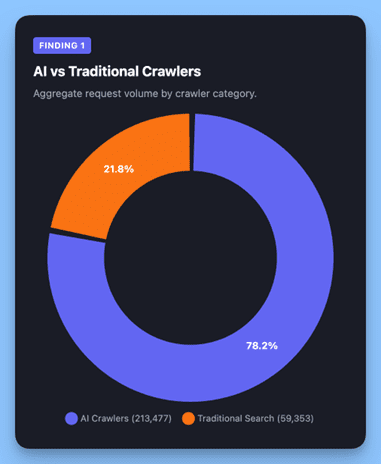

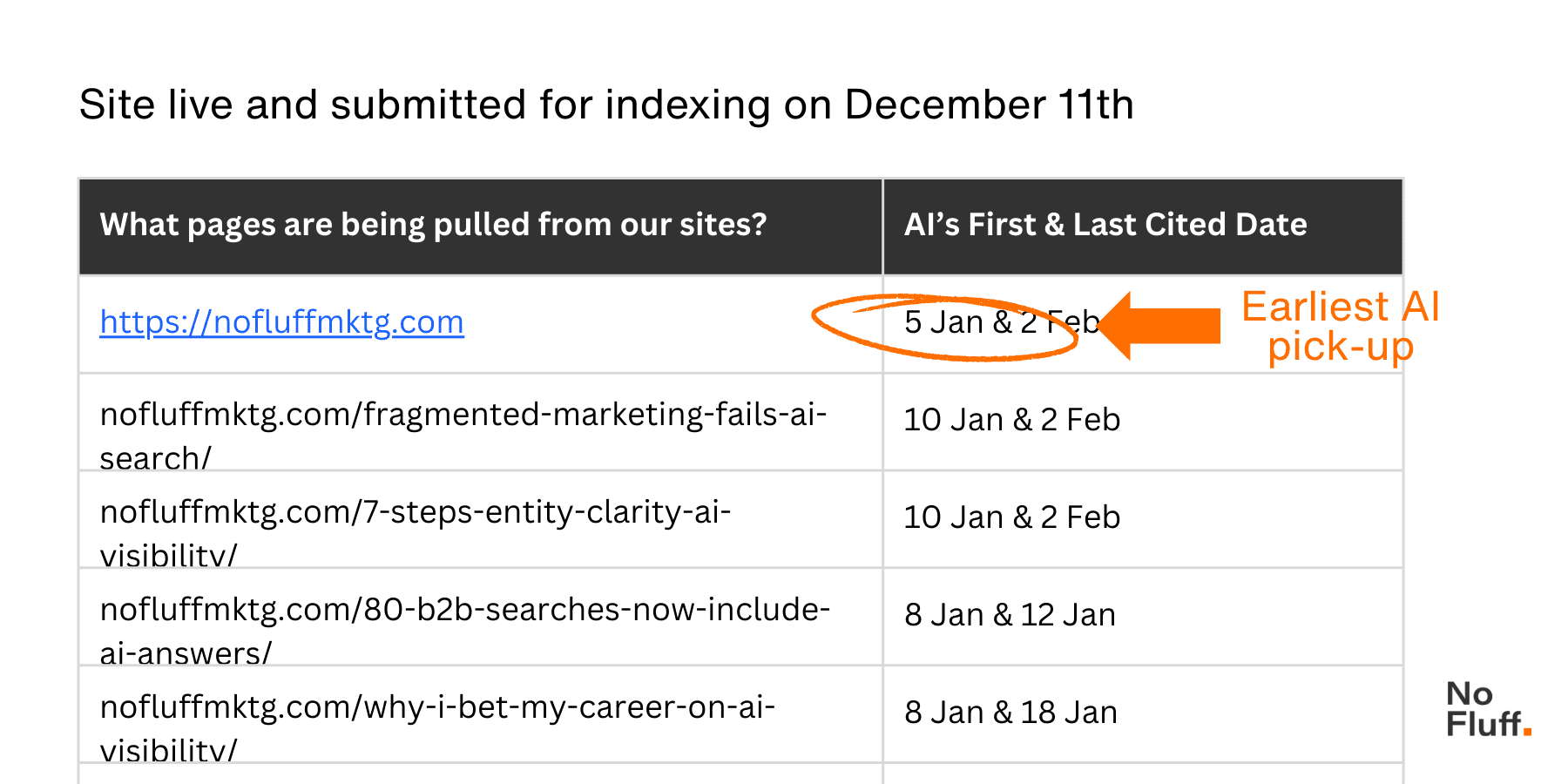

Throughout Q1 2026, we’ve seen a behavioral shift in how prospects discover brand reputation issues. AI-assisted research tools now autonomously surface negative content (reviews, complaints, forum threads, and social media discussions) inside comparison queries, without users deliberately searching for problems.

When someone asks an AI engine such as ChatGPT “which CRM should I choose,” it doesn’t just list features. It pulls in user complaints, Reddit gripes, and years-old forum threads as part of its comparison. Your brand’s negative signal can appear in an answer about your competitor. Even more concerning, as Fast Company recently reported, there’s growing evidence of AI engines misquoting or misrepresenting brand statements, compounding the challenge of maintaining an accurate reputation in AI-generated summaries.

AI Comparison Queries Are Now Reputation Audits. Here’s What That Means.

Traditional reputation management focused on suppressing results when someone searched “[your brand] + reviews.” That’s still important, but it’s no longer sufficient.

It’s time for a reputation audit.

AI Overviews and LLM-powered search engines treat every product comparison as an opportunity to synthesize user sentiment. When evaluating options, these tools actively scan for negative reviews on complaint sites, Reddit discussions, forum threads, gripe site entries, and customer support complaints that made it into public view.

The critical difference: users aren’t asking about problems. They’re asking about solutions. But AI engines interpret “helping” as including negative signals from your brand footprint.

Why Some Complaints Show Up in AI Answers & Others Don’t

Not every negative mention gets pulled into AI-generated answers, but certain patterns increase surfacing likelihood:

- Recency + volume: Fresh complaints with multiple corroborating sources rank high.

- Specificity: Vague posts get filtered out. Detailed complaints that include product names and outcomes are weighted as valuable context.

- Platform authority: Reddit, Trustpilot, G2, and industry forums get treated as trusted sources.

- Recurrence across sources: If the same issue appears in multiple places, AI engines treat it as a verified pattern.

The 4-Step Framework: How to Audit, Prioritize, Remove, and Rebuild Your Brand’s AI Reputation Signals

Success depends on three things: understanding what’s in your negative signal footprint, prioritizing what can and should be addressed, and building a positive content layer that represents your brand accurately when AI tools pull information.

Step 1: Audit Your Negative Signal Footprint

Map what AI engines can access about your brand across platforms where complaints surface.

- Open ChatGPT or Perplexity and type: “What are the pros and cons of [your brand] vs [top competitor]?” Take a screenshot of the response and note any negative claims.

- On Google, search site:[key platform].com “[your brand name]” “scam” OR “complaint”. This restricts results to pages on that platform that mention your brand alongside complaint terms, which is the kind of content AI models are likely to draw on.

- Search for your brand on Google and check featured snippets, People also ask, and other SERP features for negative content or adversarial related searches.
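The site: searches above get repetitive once you have more than a couple of platforms to check. As a minimal sketch, the helper below generates one query string per platform for pasting into Google. The platform list, the complaint terms, and the brand name "Acme CRM" are placeholder assumptions, not recommendations.

```python
# Hypothetical inputs: swap in your own platforms and complaint terms.
PLATFORMS = ["reddit.com", "trustpilot.com", "g2.com"]
COMPLAINT_TERMS = ["scam", "complaint"]


def build_site_queries(brand, platforms=PLATFORMS, terms=COMPLAINT_TERMS):
    """Return one Google query string per platform, restricted with site:."""
    # Quote each term and join with OR so that any one complaint term matches.
    term_clause = " OR ".join(f'"{t}"' for t in terms)
    return [f'site:{p} "{brand}" {term_clause}' for p in platforms]


if __name__ == "__main__":
    for query in build_site_queries("Acme CRM"):
        print(query)
```

The first line printed is `site:reddit.com "Acme CRM" "scam" OR "complaint"`. Run each query by hand and record what you find; this sketch only builds the strings and does not fetch results.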

Key platforms to check:

- Review platforms (Trustpilot, G2, Capterra, Yelp, Google Business Profile).

- Reddit (search your brand name + product category + complaint terms).

- Industry forums (Stack Overflow for tech, niche communities for specialized services).

- Facebook groups and community pages (particularly industry-specific or local groups where your customers congregate).

- Social media (Twitter/X, LinkedIn discussions, TikTok comments).

- Legacy gripe sites (Ripoff Report, ComplaintsBoard); while largely deindexed, their content may still be cited by AI engines.

Document these details:

- Content type and platform.

- Date posted.

- Specific claims made.

- Factual accuracy.

- Current visibility in Google and AI summaries.

Focus on detailed complaints with enough context that AI engines might treat them as credible sources.
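One way to keep the audit consistent is to record every finding in the same shape. The sketch below mirrors the details listed above as a Python dataclass and exports the entries as CSV for a spreadsheet. The field names and the `AuditEntry` type are illustrative assumptions, not a standard format.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class AuditEntry:
    """One negative mention found during the audit (field names are illustrative)."""
    platform: str             # e.g. "Reddit", "Trustpilot"
    content_type: str         # e.g. "review", "forum thread"
    url: str
    date_posted: date
    claims: str               # the specific claims made
    factually_accurate: bool
    in_google_results: bool   # currently visible in Google?
    in_ai_summaries: bool     # currently cited in an AI answer?


def to_csv(entries):
    """Serialize audit entries to CSV text with a header row."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(AuditEntry)])
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buf.getvalue()


if __name__ == "__main__":
    example = AuditEntry(
        platform="Reddit",
        content_type="forum thread",
        url="https://example.com/thread/1",  # placeholder URL
        date_posted=date(2026, 1, 15),
        claims="Billing error after upgrade",
        factually_accurate=True,
        in_google_results=True,
        in_ai_summaries=False,
    )
    print(to_csv([example]))
```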

Step 2: Prioritize Based on Surfacing Likelihood

Sort each item into one of three tiers:

- High priority: Recent complaints with specific details, issues mentioned across multiple platforms, content on high-authority platforms (Reddit, major review sites), complaints naming features or pricing specifically.

- Medium priority: Older complaints (1-2 years) still in search results, isolated reviews without corroboration.

- Low priority: Very old content (3+ years) with low engagement, complaints about discontinued products.
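The tiers above can be approximated with a few rules. This is a rough sketch, not a definitive classifier: the one-year cutoff for "recent", the two-source corroboration threshold, and the platform list are all assumptions, and the function ignores engagement levels for simplicity.

```python
from datetime import date

# Assumption: extend this set with the platforms that matter in your industry.
HIGH_AUTHORITY = {"reddit", "trustpilot", "g2"}


def priority_tier(posted, today, platform, corroborating_sources,
                  has_specifics, discontinued_product=False):
    """Return "high", "medium", or "low" for one piece of negative content."""
    age_years = (today - posted).days / 365.25
    # Low: very old content or complaints about discontinued products.
    if discontinued_product or age_years >= 3:
        return "low"
    # Assumption: "recent" means posted within the last year.
    recent = age_years < 1
    if recent and (has_specifics
                   or corroborating_sources >= 2
                   or platform.lower() in HIGH_AUTHORITY):
        return "high"
    # Everything else: older or isolated content still worth watching.
    return "medium"


if __name__ == "__main__":
    today = date(2026, 3, 1)
    print(priority_tier(date(2025, 12, 1), today, "Reddit", 0, True))
    print(priority_tier(date(2024, 6, 1), today, "Yelp", 0, False))
    print(priority_tier(date(2022, 1, 1), today, "Yelp", 0, False))
```

Treat the output as a first pass for a human reviewer, since a single rule set cannot capture every case the tiers describe.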

How To Create A Priority Matrix

Create a simple scoring matrix to decide what to tackle first:

- High Priority: Content that appears in AI summaries AND has high organic visibility. Check Semrush or Ahrefs for estimated monthly visits to that specific URL, or compare against the query data for the relevant keywords in Search Console. For branded searches, Search Console should give you full visibility.

- Verified Impact: For platform-specific reviews (G2, Trustpilot, Google Business), track how many users are clicking “Helpful” on negative reviews. A review with 50+ “Helpful” votes is a strong signal that AI engines are unlikely to ignore.
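A scoring matrix like this fits in a few lines of code. In the sketch below, the point weights and the monthly-visits threshold are assumptions chosen for illustration; only the 50-vote "Helpful" threshold comes from the guidance above. The visit and vote counts are values you would enter by hand from Semrush, Ahrefs, or the review platform.

```python
from dataclasses import dataclass

VISITS_THRESHOLD = 500   # assumption: what counts as "high organic visibility"
HELPFUL_THRESHOLD = 50   # from the "50+ Helpful votes" guideline


@dataclass
class Signal:
    url: str
    in_ai_summary: bool
    est_monthly_visits: int
    helpful_votes: int = 0


def score(signal):
    """Higher score means tackle sooner. The weights are illustrative."""
    points = 0
    if signal.in_ai_summary:
        points += 2
    if signal.est_monthly_visits >= VISITS_THRESHOLD:
        points += 2
    if signal.helpful_votes >= HELPFUL_THRESHOLD:
        points += 1
    return points


def rank(signals):
    """Return signals sorted from highest to lowest priority."""
    return sorted(signals, key=score, reverse=True)


if __name__ == "__main__":
    items = [
        Signal("https://example.com/a", False, 40),
        Signal("https://example.com/b", True, 1200, helpful_votes=63),
        Signal("https://example.com/c", True, 90),
    ]
    for item in rank(items):
        print(score(item), item.url)
```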

Step 3: Remove or Respond Where Possible

Some negative content can be removed outright. Some deserve a response, and some require both.

How to Get Negative Content Taken Down

If the content violates platform policies (false information, impersonation, harassment), request removal through the platform’s reporting process.

For legacy complaint sites and gripe sites, professional content removal services can often negotiate takedowns based on inaccuracies or policy violations. As reputation defense strategies evolve for AI, however, the focus has shifted from simply removing content to building stronger positive signals.

For content that mentions you but doesn’t necessarily focus on your brand (like a Reddit thread comparing five tools where yours gets one negative mention), removal usually isn’t an option, but you can dilute its impact by ensuring positive mentions appear more frequently in similar discussions.

When Responding Publicly Actually Helps You

Respond to legitimate complaints about real issues, misunderstandings you can clarify with facts, and service failures where an explanation adds credibility. Keep responses factual, non-defensive, and focused on resolution. AI engines can pull your response into summaries, giving you a chance to reframe the narrative.

When Engaging Makes Things Worse — Skip It

Skip fake reviews, emotional rants without substance, old complaints about discontinued products, and situations where engagement will amplify visibility.

Step 4: Build a Positive Content Layer That AI Engines Prefer

This is where ongoing reputation management becomes critical. You need owned and earned content that AI engines will preferentially cite when answering comparison queries.

What Goes Into A Positive Content Layer

- Structured FAQ content: Create pages answering common objections and questions with clear headers and schema markup.

- Case studies: Detailed examples with metrics, timelines, and direct customer quotes give AI engines concrete data to cite.

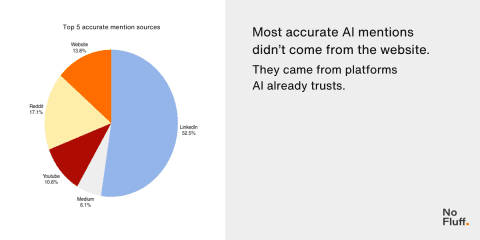

- Community presence: Contribute to Reddit and forums where your audience asks questions. Build credibility through value, not promotion.

- Third-party validation: Get featured in roundups and comparison articles on authoritative sites.

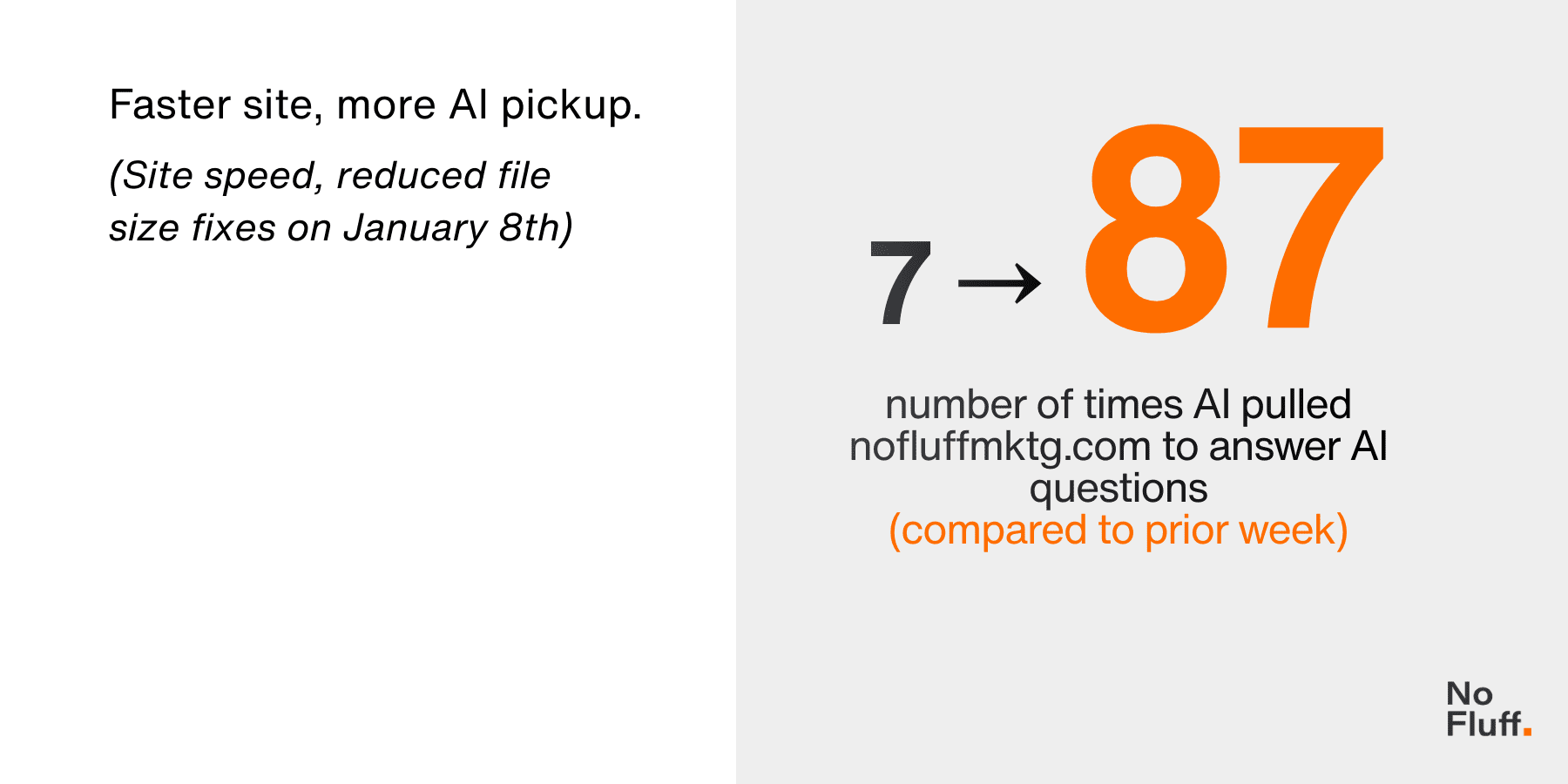

- Regular content updates: AI models prioritize recent content. Keep your owned content fresh.

How this plays into broader online reputation management: what you’re building isn’t just an AI strategy; it’s a defensible reputation infrastructure. Comprehensive, recent, authoritative content across multiple touchpoints creates a buffer that makes it harder for isolated negative signals to dominate.

How To Build A Positive Content Layer

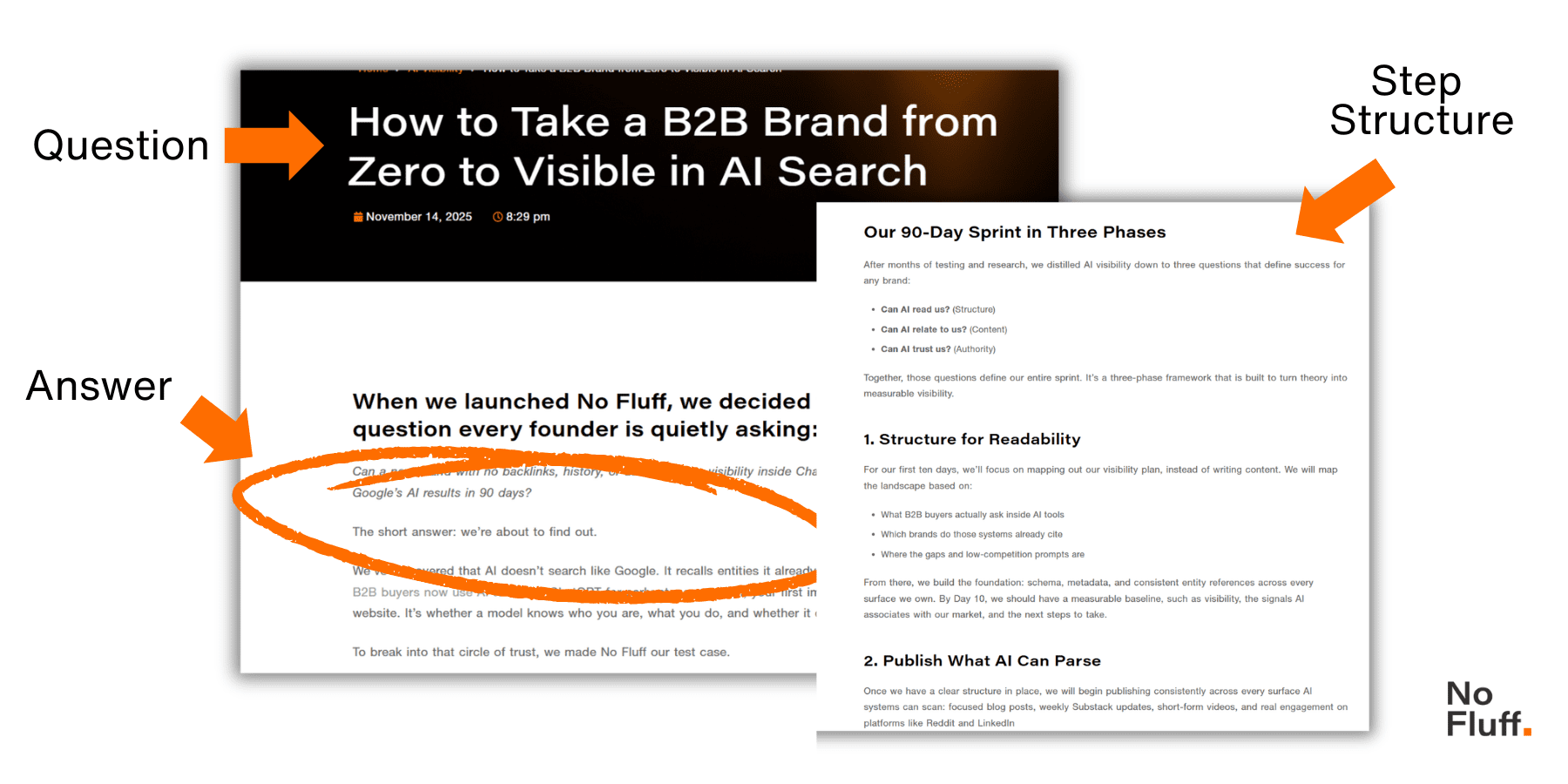

- Turn your FAQ into a knowledge base that addresses common objections (e.g., “Is [your brand] worth the price?”). Depending on your brand’s reach and authority, it can be worthwhile to publish each answer as its own page, with the question as a clear H1 headline. Organize the pages under breadcrumbed URLs such as /faq/[service area]/[objection]; this creates more internal linking opportunities and depth than a single massive FAQ page.

- Reach out to some of your satisfied customers and ask for a 2–3 sentence quote about a specific outcome they achieved. Publish these as a case study snippet on your site. Specificity (metrics, timeframes) helps to ensure LLMs treat content as credible evidence rather than marketing copy. Link to their LinkedIn or business website, if possible, to help reinforce that it is a real review from a real customer.

- Identify high-authority “Best of” lists or industry roundups where your brand is missing and email the editors to provide a unique expert insight or updated product data for inclusion. These seed high-trust citations that AI engines prioritize when synthesizing brand comparisons and reputation summaries. The higher they rank on Google, the better.
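For the FAQ pages described above, the URL path and the schema markup can both be generated from the question itself. The sketch below builds a /faq/[service area]/[objection] path and a schema.org FAQPage JSON-LD snippet for one question. The helper names and the sample question and answer are hypothetical; the JSON-LD property names follow the public schema.org vocabulary.

```python
import json
import re


def slugify(text):
    """Lowercase the text and replace runs of non-alphanumerics with hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def faq_path(service_area, objection):
    """Build a breadcrumbed path like /faq/[service area]/[objection]."""
    return f"/faq/{slugify(service_area)}/{slugify(objection)}"


def faq_jsonld(question, answer):
    """Return schema.org FAQPage markup for a single question, as JSON text."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    question = "Is Acme CRM worth the price?"  # placeholder question
    print(faq_path("CRM Pricing", question))
    print(faq_jsonld(question, "Placeholder answer text for this objection."))
```

The generated JSON would go inside a `<script type="application/ld+json">` tag on the matching page.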

Monitoring becomes essential at this stage. Track which keywords trigger AI Overviews that mention your brand, watch for new complaints surfacing in high-authority platforms, and measure whether your positive content is getting cited in AI-generated comparisons. This isn’t a one-time project; it’s an ongoing program.
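Part of this monitoring can be done with a simple text check. The sketch below assumes you have already saved the text of AI-generated answers by hand, as in the audit step. It flags whether each answer mentions your brand, whether it cites your own domain, and which negative terms appear in sentences that name the brand. The term list, brand, and domain are placeholder assumptions, and plain keyword matching will miss paraphrased criticism.

```python
import re
from dataclasses import dataclass

NEGATIVE_TERMS = ("scam", "complaint", "refund", "outage")  # assumption


@dataclass
class AnswerCheck:
    query: str
    mentions_brand: bool
    cites_own_domain: bool
    negative_terms: list


def check_answer(query, answer_text, brand, own_domain):
    """Inspect one saved AI answer for brand mentions and negative terms."""
    text = answer_text.lower()
    brand_lc = brand.lower()
    found = []
    # Split on sentence-ending punctuation followed by whitespace, then
    # only count negative terms in sentences that also name the brand.
    for sentence in re.split(r"(?<=[.!?])\s+", text):
        if brand_lc in sentence:
            found += [t for t in NEGATIVE_TERMS if t in sentence and t not in found]
    return AnswerCheck(
        query=query,
        mentions_brand=brand_lc in text,
        cites_own_domain=own_domain.lower() in text,
        negative_terms=found,
    )


if __name__ == "__main__":
    saved_answer = (
        "Acme CRM is praised for reporting. "
        "Some Reddit users raised a complaint about Acme CRM billing. "
        "See acme.example/case-studies."
    )
    print(check_answer("best CRM for small teams", saved_answer,
                       "Acme CRM", "acme.example"))
```

Running the same check on answers saved each month gives a rough trend line for whether your positive content is being cited more often.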

Start Here: Your Easy Steps to Managing Your AI Reputation

If you’re dealing with high-stakes reputation issues where missteps could amplify problems, specialized online reputation management services and experts like our team at Erase.com can help you move faster and avoid pitfalls. The goal isn’t just reacting to what’s already out there; it’s building a system where positive signals consistently outweigh isolated negatives when AI engines scan for information.

The shift is already here. The question is whether you’re managing it proactively or discovering it reactively when a prospect mentions “something they saw in ChatGPT.”

Image Credits

Featured Image: Image by Erase.com. Used with permission.