Most SEO failures are not optimization failures. They are reasoning failures that occur before optimization even begins.

In enterprise SEO escalations, the pattern is remarkably consistent. Teams jump straight to causes, debate theories, and assign blame before anyone clearly articulates the actual problem they are trying to understand.

Once blame enters the conversation, problem definition disappears. Teams shift into CYA mode, and without a shared understanding of the problem, every proposed fix becomes guesswork.

The Failure Pattern Everyone Recognizes

If you’ve worked in enterprise SEO long enough, you’ve seen this meeting.

A stakeholder raises an issue. Google is showing the wrong title or site name. Search visibility dropped. A location isn’t represented correctly. The room doesn’t go quiet. It fills with explanations.

Someone points to a lack of internal links. Another suggests Google rewrote the titles. A third brings up yet another CMS defect. Someone blames a recent Google update. And someone inevitably asks whether hreflang is broken.

Each explanation sounds plausible in isolation. Each reflects real experience. But none of them is grounded in a clearly stated problem.

Everyone is trying to be helpful. No one has actually said what outcome the system produced.

SEO discussions often collapse not because teams lack expertise, but because they skip the most important step: precisely describing the system outcome they are trying to explain.

Meeting Two: Activity Without Clarity

What usually follows is a second meeting. On the surface, it feels productive.

Teams arrive having done work. The CMS has been reviewed. A detailed technical SEO audit is complete. Google update trackers and industry forums have been checked for similar impacts, along with LinkedIn commentary. Multiple diagnostic tools have been run.

The evidence presented represents many hours of work. There are screenshots of issues and non-issues, and it all looks like progress toward a resolution. In reality, it is often misdirected effort.

If the original problem was vague or incorrectly framed, all of that analysis is aimed at the wrong target. Only later does the realization set in: the audits detected real issues, but none of them is related to this problem.

Time and attention were spent validating assumptions instead of diagnosing system behavior.

That’s not an execution failure. It’s a problem definition failure.

Why SEO Conversations Go Off The Rails

That failure isn’t accidental. It’s structural, and SEO is uniquely exposed to it.

I have often criticized the search industry for its lack of root cause analysis. That criticism stands, but the gap isn’t there because teams aren’t trying. There is no shortage of audits, checklists, or prescriptive processes when a traffic drop or SERP anomaly appears. The problem is that those tools narrow thinking rather than clarify it. They push teams toward doing something before anyone has agreed on what actually happened.

In many SEO conversations, signals are treated as probabilistic guesses rather than observed outcomes. Rankings fluctuate, a listing looks different, traffic dips, and the discussion quickly drifts toward familiar explanations. Google must have changed something. A ranking factor shifted. An update rolled out.

What gets missed is far more mundane and far more common. Control is spread across teams. Changes are made inside one department and are never communicated to another. Content, templates, navigation, schema, analytics, and infrastructure evolve independently. Cause and effect don’t move in straight lines, and no single team sees the whole system.

When no one clearly states the outcome the system produced, the group defaults to what feels responsible: activity.

Root cause analysis turns into a checklist exercise. Teams start debating causes before agreeing on the outcome itself. Meetings fill with effort, artifacts, and action items, but clarity never quite arrives.

Systems, however, don’t respond to effort. They respond to inputs.

The Missing Skill: Problem Deduction

The most important SEO skill isn’t keyword research, schema, technical audits, GEO, or any other optimization acronym that happens to be in fashion. Those are all processes and tools. Useful ones. But they only matter after the real work has been done. That work is problem deduction.

Problem deduction is the discipline of slowing the conversation down long enough to understand what the system actually produced, not what the team expected it to produce. It requires stepping outside of assumptions, resisting familiar explanations, and describing the outcome in neutral terms before trying to fix anything.

Only then does real analysis begin. Teams can reason backward through the signals that contributed to the outcome, distinguish between inputs they can change and constraints they inherited, and act without blame or superstition driving the discussion.

In practice, problem deduction means the ability to:

- Observe a system outcome without bias, focusing on what the system produced rather than what was intended.

- Describe that outcome precisely and neutrally, without embedding assumptions about cause.

- Reason backward through contributing signals, identifying which inputs could plausibly influence the result.

- Separate fixable inputs from historical constraints, so effort is spent where it can actually matter.

- Act without blame or superstition, keeping decisions grounded in evidence rather than instinct.

This doesn’t replace technical SEO or root cause analysis. It makes them possible.

Problem deduction is systems thinking applied to search. And almost no one teaches it.

A Real-World Enterprise Example

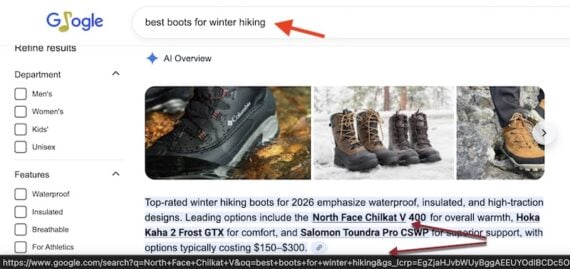

Recently, I reviewed an enterprise case where a client was frustrated that Google consistently displayed a specific location as the site name, regardless of the user’s location or query intent.

The conversation followed a familiar arc. At first, explanations came quickly. Someone pointed to internal linking, noting that this location had accumulated more authority over time. Others suggested Google’s automatic title rewrites were to blame. The CMS came up, along with the possibility of injected or inconsistent code. SEO implementation gaps were also mentioned.

Each explanation sounded reasonable. All of them were based on real experience. But none of them described the outcome.

So we stopped the discussion and reset the conversation by stating the problem plainly:

Google selected a location, not the brand name, as the site name representing the brand in search results.

That single sentence changed the tone of the room. Once the outcome was clearly defined, the reasoning became straightforward. The discussion shifted from speculation to diagnosis, and the signals that led to that result became much easier to trace.

How Google Actually Made That Decision

Google wasn’t confused. It was responding to a consistent set of reinforcing signals.

Once the outcome was clearly defined, the explanation stopped being mysterious. Several independent signals all pointed to the same conclusion, and Google simply followed the strongest, most consistent path.

1. Misapplied WebSite Schema

One issue started at the structural level. Location pages had been marked up as if each were a separate website entity, rather than reinforcing the primary brand domain. Multiple pages effectively claimed to be “the website,” diluting canonical authority and causing the schema signal to cancel itself out through duplication. Google didn’t misunderstand the markup. It received conflicting declarations and discounted them logically.
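One way to surface this kind of conflict is to check, for every crawled page, whether anything other than the homepage declares itself a WebSite. The sketch below is illustrative only, not the audit used in this case. The `pages` mapping, the example.com URLs, and the function name are all hypothetical, and it assumes the JSON-LD has already been extracted and parsed into top-level objects (it does not walk nested `@graph` arrays).

```python
def find_conflicting_website_claims(pages, homepage_url):
    """Return URLs other than the homepage whose JSON-LD declares a WebSite entity."""
    conflicts = []
    for url, jsonld_blocks in pages.items():
        if url == homepage_url:
            continue  # the homepage is the one place a WebSite claim is expected
        for block in jsonld_blocks:
            types = block.get("@type", [])
            if isinstance(types, str):
                types = [types]  # "@type" may be a single string or a list
            if "WebSite" in types:
                conflicts.append(url)
                break
    return conflicts


# Hypothetical crawl output: URL -> parsed JSON-LD objects found on that page.
pages = {
    "https://www.example.com/": [
        {"@type": "WebSite", "name": "Example Brand", "url": "https://www.example.com/"}
    ],
    "https://www.example.com/locations/springfield/": [
        {"@type": "WebSite", "name": "Example Brand Springfield",
         "url": "https://www.example.com/locations/springfield/"}
    ],
    "https://www.example.com/locations/riverton/": [
        {"@type": "LocalBusiness", "name": "Example Brand Riverton"}
    ],
}

conflicts = find_conflicting_website_claims(pages, "https://www.example.com/")
print(conflicts)
```

In this made-up data, only the Springfield page is flagged, because it is the only non-homepage URL claiming to be the website. The check describes what the markup declares; it says nothing yet about why it was generated that way.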

2. Title Tag Dilution

At the same time, title tags failed to reinforce a clear hierarchy. The homepage HTML title tag attempted to carry too much information at once: the marketing tagline first, then the brand and the first location, and finally the other locations, all separated by commas in a single tag. Instead of clarifying the relationship between the brand and its locations, the structure blurred it. Google responded by favoring the location that was most consistently reinforced across signals, not arbitrarily, but logically.

3. External Corroboration Bias

External signals reinforced the same outcome. Inbound links, citations, and references disproportionately pointed to a single location. From Google’s perspective, the broader web corroborated what on-site signals already suggested. One location appeared to represent the brand more clearly than the others. This wasn’t favoritism. It was corroboration.
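The skew in external references can be described as an observed number rather than an impression. The following is a minimal sketch, assuming a backlink export has been reduced to a plain list of target URLs and that location pages live under a `/locations/` path. Both the data and the URL structure are hypothetical and were not part of the case described here.

```python
from collections import Counter
from urllib.parse import urlparse


def location_share(link_targets, location_prefix="/locations/"):
    """Return each location's share of inbound link targets, largest first."""
    counts = Counter()
    for target in link_targets:
        path = urlparse(target).path
        if path.startswith(location_prefix):
            # First path segment after the prefix is treated as the location slug.
            slug = path[len(location_prefix):].strip("/").split("/")[0]
            counts[slug or "(locations index)"] += 1
        else:
            counts["(brand / other)"] += 1
    total = sum(counts.values())
    if total == 0:
        return {}
    return {key: count / total for key, count in counts.most_common()}


# Hypothetical list of URLs that external pages link to.
link_targets = [
    "https://www.example.com/locations/springfield/",
    "https://www.example.com/locations/springfield/",
    "https://www.example.com/locations/springfield/menu/",
    "https://www.example.com/locations/riverton/",
    "https://www.example.com/",
]

shares = location_share(link_targets)
for name, share in shares.items():
    print(f"{name}: {share:.0%}")
```

A table like this does not explain the outcome by itself, but it turns "one location seems to get more links" into a statement the whole room can agree on before anyone debates causes.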

What Could Be Easily Fixed And What Couldn’t

Once the actual problem was clearly identified, the conversation changed. The issue wasn’t that Google was behaving unpredictably. It was that something in the system was consistently telling Google to treat a single location as the site name rather than the brand itself.

With the problem framed that way, analysis became practical. Instead of debating theories, we could examine the systems that contributed to that outcome and begin correcting them. Just as importantly, it allowed us to distinguish between changes that could be made immediately and those that would require sustained effort.

Some corrections were straightforward. Because the schema was generated programmatically, the WebSite markup could be adjusted immediately to reinforce the primary brand entity. The brand team also agreed to simplify the homepage title, focusing it on the brand and tagline, while allowing individual location pages to carry the weight of location-specific signals.
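As a rough illustration of those two corrections, the sketch below generates a single brand-level WebSite declaration for the homepage and a simplified homepage title, and leaves location names to the location pages. The function names, the `|` separator, the brand-then-tagline order, and the example values are assumptions for illustration, not the client's actual templates. The `name` and `url` properties come from schema.org's WebSite type.

```python
import json


def build_homepage_signals(brand, tagline, homepage_url):
    """Return (title, JSON-LD string) for the homepage, naming only the brand."""
    website_jsonld = {
        "@context": "https://schema.org",
        "@type": "WebSite",
        "name": brand,        # the brand, not any single location
        "url": homepage_url,  # the primary brand domain
    }
    title = f"{brand} | {tagline}"  # assumed format: brand first, then tagline
    return title, json.dumps(website_jsonld, indent=2)


def build_location_title(brand, location):
    """Location pages carry the location-specific signal in their own titles."""
    return f"{location} | {brand}"


title, jsonld = build_homepage_signals(
    "Example Brand", "Fresh food, fast", "https://www.example.com/"
)
print(title)
print(jsonld)
print(build_location_title("Example Brand", "Springfield"))
```

The point of the design is separation: the homepage states the brand once and unambiguously, and each location page states its own location, so the two kinds of signal no longer compete inside a single tag or a duplicated entity.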

Other signals were less malleable. External corroboration, built up through years of links and citations pointing to a single location, couldn’t be reversed quickly. That work would take time and consistent reinforcement.

Problem deduction didn’t just tell us what to fix. It told us where to start, what to expect, and how much effort each correction would realistically require.

SEO teams waste enormous effort trying to “fix” things that can only change gradually. Problem deduction helps teams focus on directional correction rather than instant reversal.

Why Root Cause Analysis Often Fails In SEO

Root cause analysis breaks down when teams try to answer “why” before agreeing on “what.”

In enterprise SEO, that failure is amplified by how work is organized. Control is decentralized across content, engineering, analytics, brand, legal, localization, and platform teams. No single group owns the full system, yet everyone is accountable to their own KPIs. When an anomaly appears, the instinct isn’t to describe the outcome carefully. It’s to protect territory.

Conversations shift quickly. Causes are proposed before outcomes are defined. Responsibility is implied, then deflected. Each team points to the part of the system it doesn’t control. The discussion becomes less about understanding behavior and more about avoiding fault.

At the same time, the process itself narrows thinking. Root cause analysis turns into a checklist exercise. Teams reach for audits, tools, and familiar diagnostic steps, not because they are wrong, but because they are safe. Checklists create motion without requiring agreement, and activity becomes a substitute for clarity.

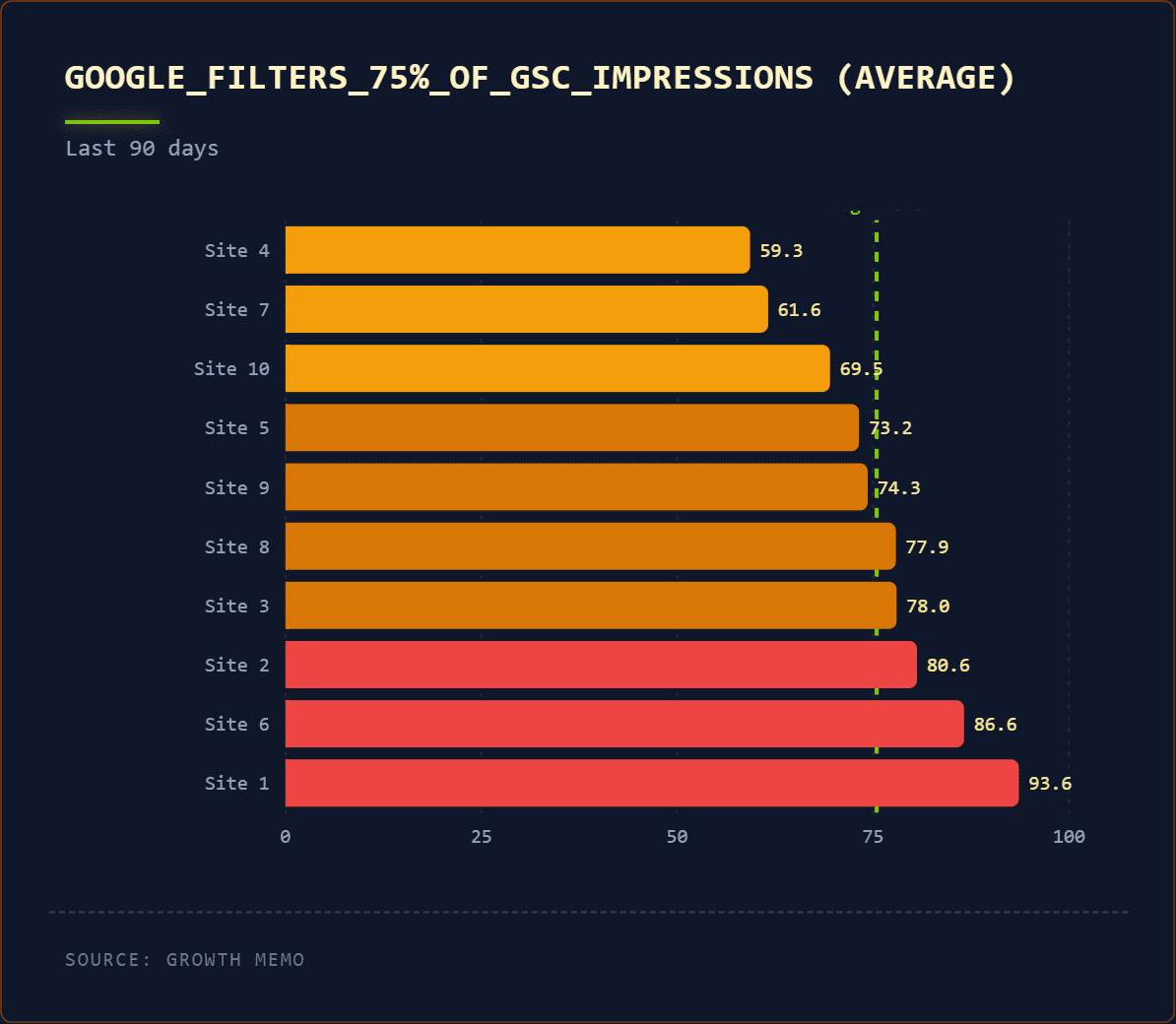

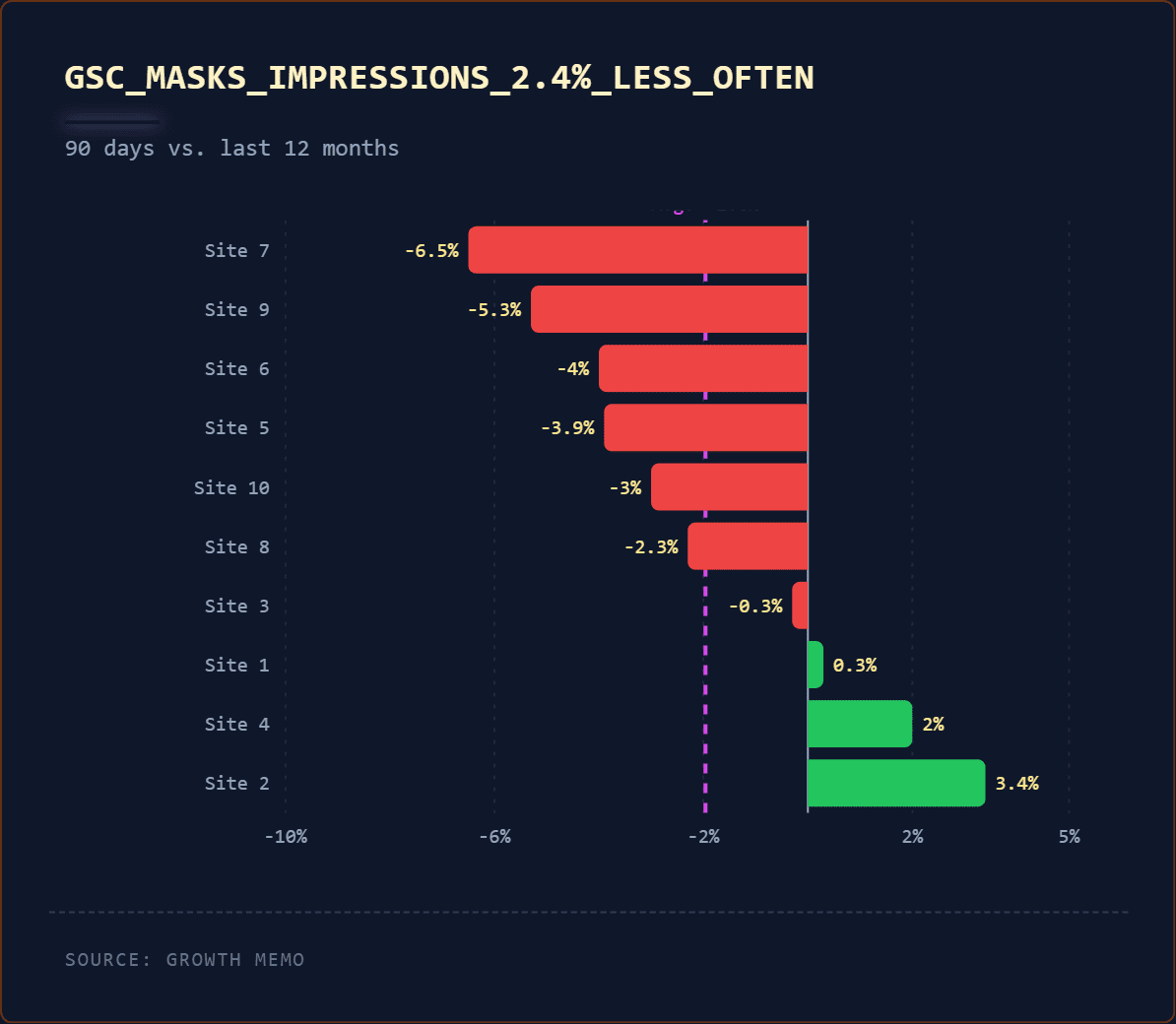

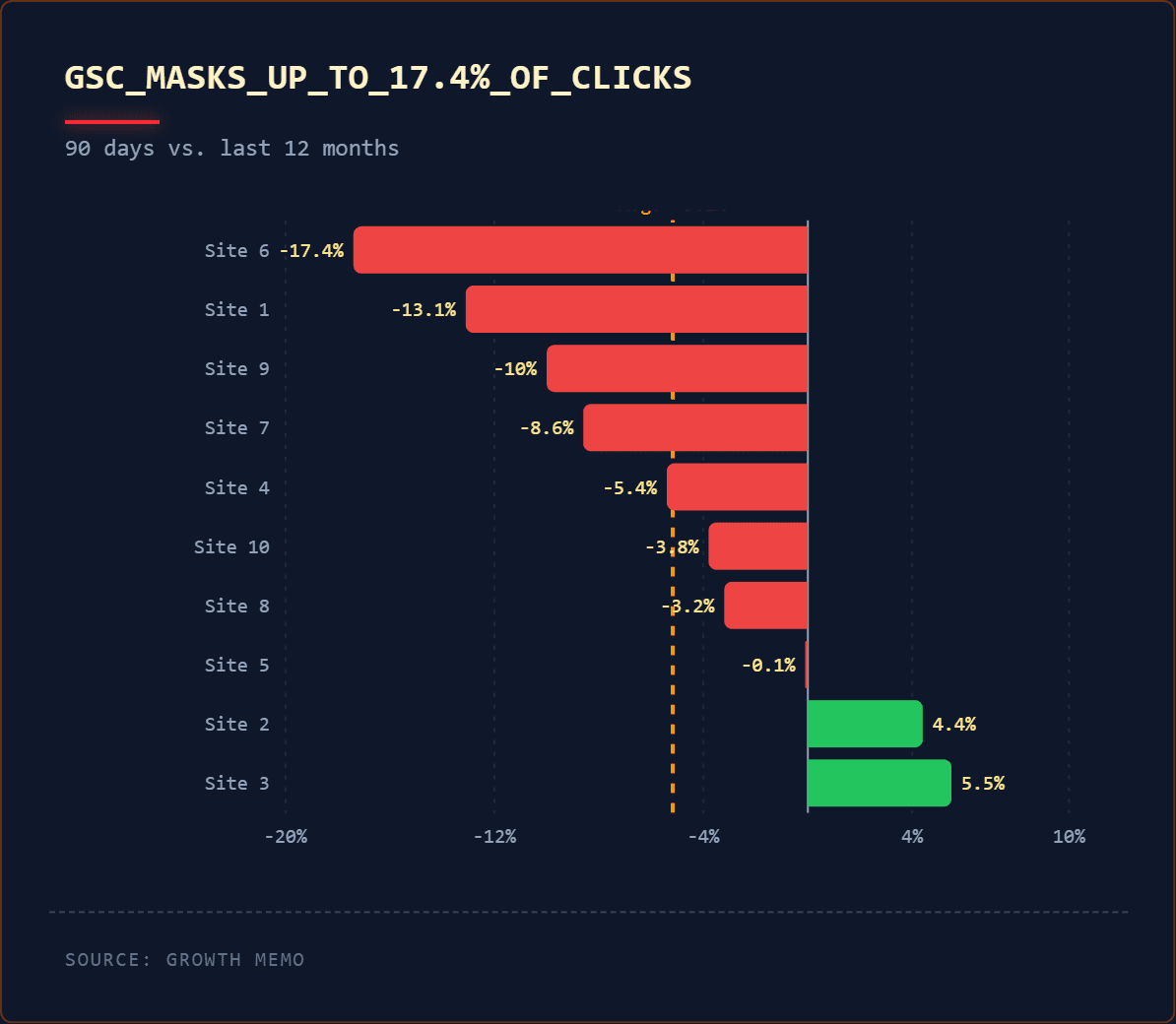

When internal explanations feel uncomfortable or politically risky, attention often shifts outward. Someone cites a recent Google update. Another references a post from a well-known SEO or a chart showing sector-wide volatility. External signals offer a kind of relief. If “everyone” is seeing impact, then no one internally has to explain their system.

But those signals are rarely diagnostic. Used too early, they short-circuit reasoning rather than support it.

The result is a familiar pattern. Meetings generate effort, artifacts, and action items, but the outcome itself remains vaguely defined. Teams stay busy. Nothing really changes.

Problem deduction interrupts that cycle. It forces agreement on what the system actually produced before explanations, defenses, or fixes enter the conversation. Once the outcome is clearly defined, decentralization becomes navigable, blame loses its power, and root cause analysis shifts from performance to purpose.

That’s when it starts working.

The Skill Enterprises Should Be Hiring For First

Not long ago, an advisory client asked me a deceptively simple question while defining a new enterprise search role.

“What is the single most important skill we should hire for?”

They were expecting a familiar answer. Something about technical SEO depth, AI search experience, schema expertise, or platform fluency. That’s usually how these conversations go.

I didn’t give them any of those. Instead, I said critical reasoning.

There was a pause.

Despite what many people in the search industry believe, technical skills are the easy part. Tools can be learned. Platforms change. Gaps get closed. Teams adapt. What’s far harder to teach is the ability to think clearly when the system doesn’t behave the way you expected it to.

Enterprise SEO is full of that kind of ambiguity. Signals conflict. Outcomes are indirect. Ownership is fragmented. And when things go wrong, pressure builds quickly.

In those moments, the people who struggle most aren’t the ones who lack tactical knowledge. They’re the ones who can’t slow the conversation down long enough to reason.

The skill that matters is the ability to observe what the system actually produced without bias, describe it precisely, separate symptoms from causes, reason backward through contributing signals, and resist the urge to jump to conclusions or assign blame.

In other words, problem deduction.

Specifically (as highlighted above), the ability to:

- Observe a system outcome without bias.

- Describe it precisely.

- Separate symptoms from causes.

- Reason backward through contributing signals.

- Resist jumping to conclusions or assigning blame.

I told them plainly: We can teach the mechanics of search. What’s nearly impossible to teach is how to reason critically if that muscle isn’t already there. People either have it or they don’t. Enterprise SEO punishes the absence of that skill more than almost any other digital discipline.

This Is Bigger Than SEO

Once you recognize the pattern, it becomes hard to unsee.

The same failure mode that derails root cause analysis also explains why SEO so often turns political. When outcomes aren’t clearly defined, teams fill the gap with narratives. Best practices harden into superstition. Google updates become a convenient external explanation for internal incoherence. Infrastructure issues quietly masquerade as ranking problems because they’re harder to confront directly.

None of this happens because teams are careless. It happens because modern digital systems are fragmented by design.

As described earlier, control is decentralized across content, engineering, analytics, brand, legal, localization, and platform teams. No one owns the entire system, yet everyone is accountable to their own KPIs. When something goes wrong, describing the outcome precisely feels risky. It invites scrutiny. It raises uncomfortable questions about ownership and handoffs.

So conversations drift. Causes are debated before outcomes are agreed upon. Responsibility is implied, then deflected. Checklists replace reasoning because they allow motion without alignment. And when internal explanations feel politically unsafe, attention shifts outward – to Google updates, industry chatter, or gurus diagnosing sector-wide volatility.

Those external signals provide relief, but not resolution. They describe correlation, not causation. They offer context, not clarity, and they allow organizations to stay busy without ever confronting how their own systems produced the result.

This is where SEO begins to overlap with something broader: findability.

Whether someone encounters a brand through Google, an AI assistant, a marketplace, or a vertical search engine, the underlying questions are the same. Are we present? Are we represented clearly and consistently? Does that representation invite deeper engagement, or does it confuse and fragment trust?

Those outcomes don’t depend on isolated optimizations. They depend on coherent systems that behave predictably across surfaces.

Problem deduction is what makes that coherence possible. By forcing agreement on what the system actually produced before explanations or fixes enter the room, it cuts through decentralization, neutralizes blame, and restores reasoning. Root cause analysis stops being performative and starts serving its purpose.

That’s when the conversation changes. And that’s when progress actually begins.

The Real Takeaway

Google didn’t choose the wrong site name. It chose the only version of the brand the system clearly defined.

The real SEO skill isn’t knowing what to change. It’s knowing what actually happened before you touch anything at all.

Until enterprises teach, hire for, and reward problem deduction, SEO conversations will continue to spin in circles, fixing symptoms while the system quietly reinforces the same outcomes.

And no amount of optimization can fix a problem that was never clearly defined in the first place.

Featured Image: KitohodkA/Shutterstock