Why SEO Roadmaps Break In January (And How To Build Ones That Survive The Year) via @sejournal, @cshel

SEO roadmaps have a lot in common with New Year’s resolutions: They’re created with optimism, backed by sincere intent, and abandoned far sooner than anyone wants to admit.

The difference is that most people at least make it to Valentine’s Day before quietly deciding that daily workouts or dry January were an ambitious, yet misguided, experiment. SEO roadmaps often start unraveling while Punxsutawney Phil is still deep in REM sleep.

By the third or fourth week of the year, teams are already making “temporary” adjustments. A content cadence slips here. A technical initiative gets deprioritized there. A dependency turns out to be more complicated than anticipated. None of this is framed as failure, naturally, but the original plan is already being renegotiated.

This doesn’t happen because SEO teams are bad at planning. It happens because annual SEO roadmaps are still built as if search were a stable environment with predictable inputs and outcomes.

(Narrator: Search is not, and has never been, a stable environment with predictable inputs or outcomes.)

In January, just like that diet plan, the SEO roadmap looks entirely doable. By February, you’re hiding in a dark pantry with a sleeve of Thin Mints, and the roadmap is already in tatters.

Here’s why those plans break so quickly and how to replace them with a planning model that holds up once the year actually starts moving.

The January Planning Trap

Annual SEO roadmaps are appealing because they feel responsible.

- They give leadership something concrete to approve.

- They make resourcing look predictable.

- They suggest that search performance can be engineered in advance.

Except SEO doesn’t operate in a static system, and most roadmaps quietly assume that it does.

By the time Q1 is halfway over, teams are already reacting instead of executing. The plan didn’t fail because it was poorly constructed. It failed because it was built on outdated assumptions about how search works now.

Three Assumptions That Break By February

1. Algorithms Behave Predictably Over A 12-Month Period

Most annual roadmaps assume that major algorithm shifts are rare, isolated events.

That’s no longer true.

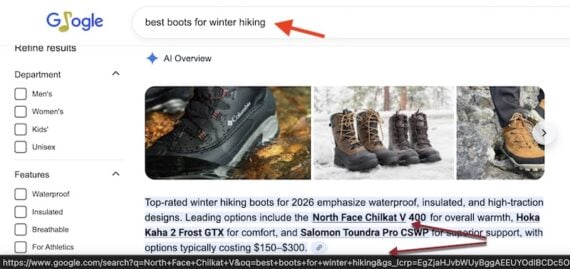

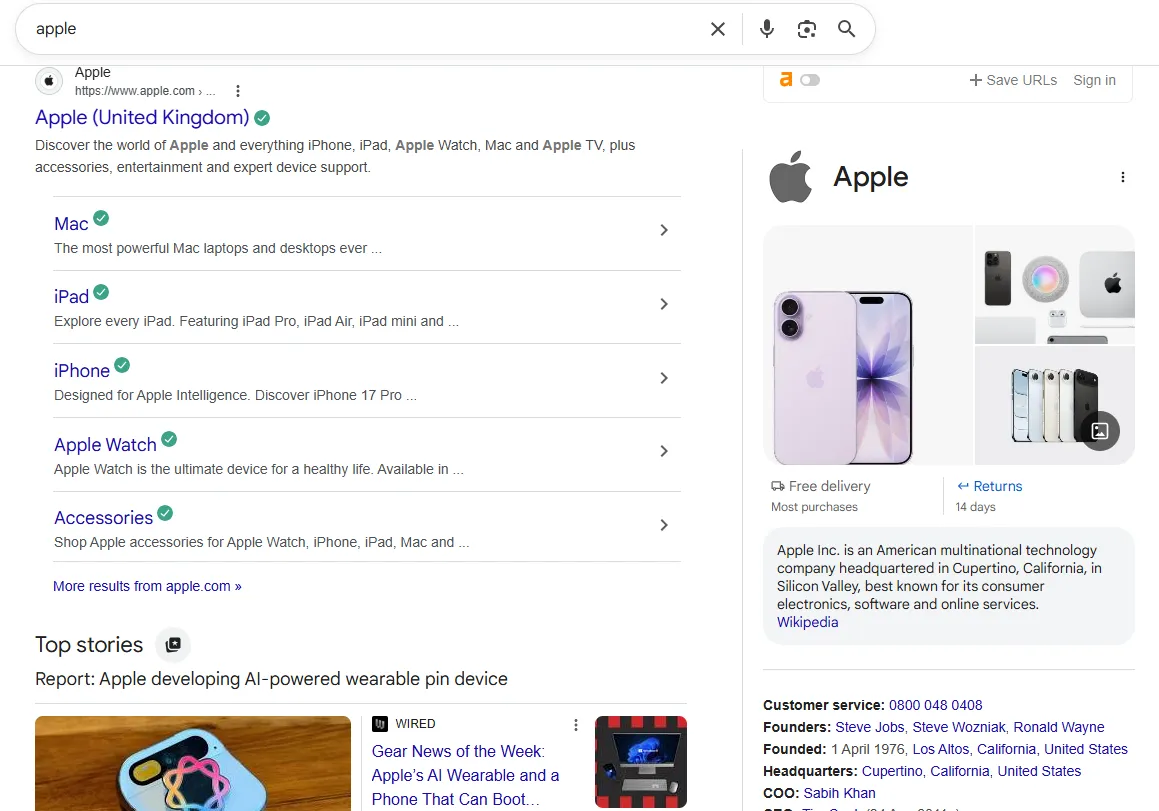

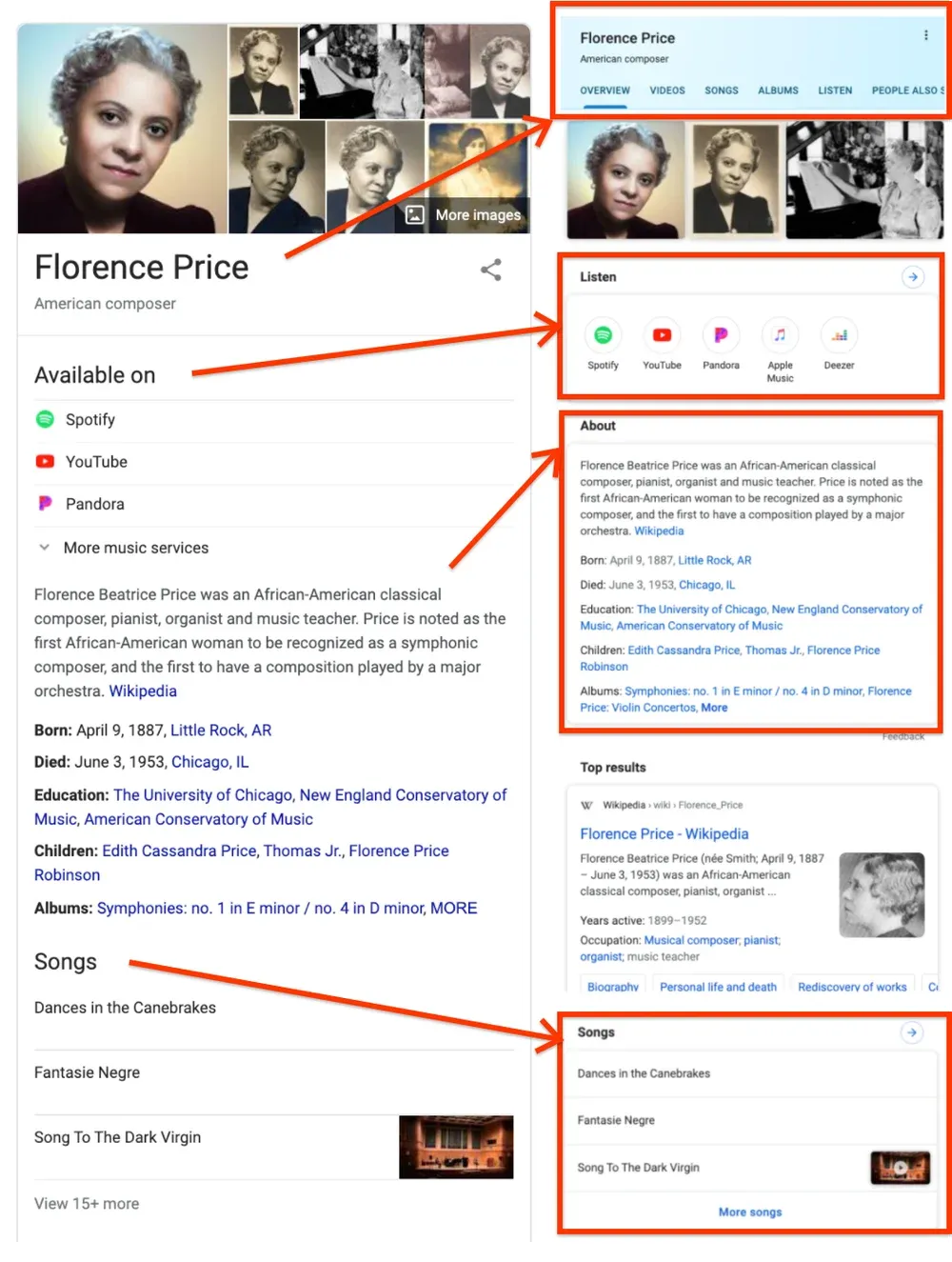

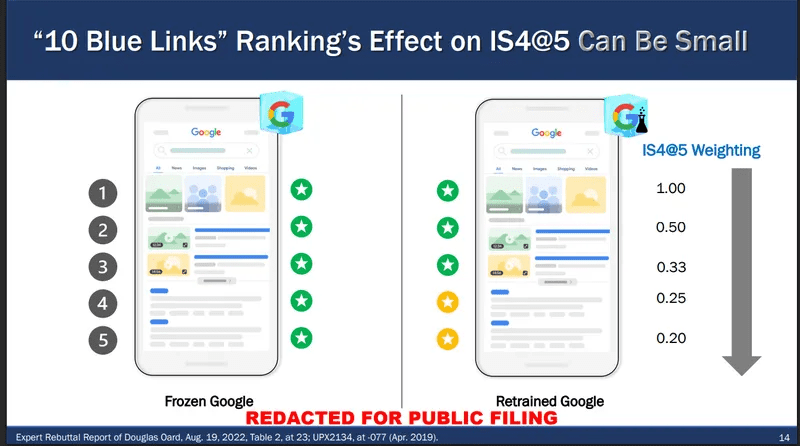

Search systems are now updated continuously. Ranking behavior, SERP layouts, AI integrations, and retrieval logic evolve incrementally – often without a single, named “update” to react to.

A roadmap that assumes stability for even one full quarter is already fragile.

If your plan depends on a fixed set of ranking conditions remaining intact until December, it’s already obsolete.

2. Technical Debt Stays Static Unless Something “Breaks”

January plans usually account for new technical work: migrations, performance improvements, structured data, and internal linking projects.

What they don’t account for is technical debt accumulation.

Every CMS update, plugin change, template tweak, tracking script, and marketing experiment adds friction. Even well-maintained sites slowly degrade over time.

Most SEO roadmaps treat technical SEO as a project with an end date. In reality, it’s a system that requires continuous maintenance.

By February, that invisible debt starts to surface – crawl inefficiencies, index bloat, rendering issues, or performance regressions – none of which were in the original plan.

3. Content Velocity Produces Linear Returns

Many annual SEO plans assume that content output scales predictably:

More content = more rankings = more traffic

That relationship hasn’t been linear for a long time.

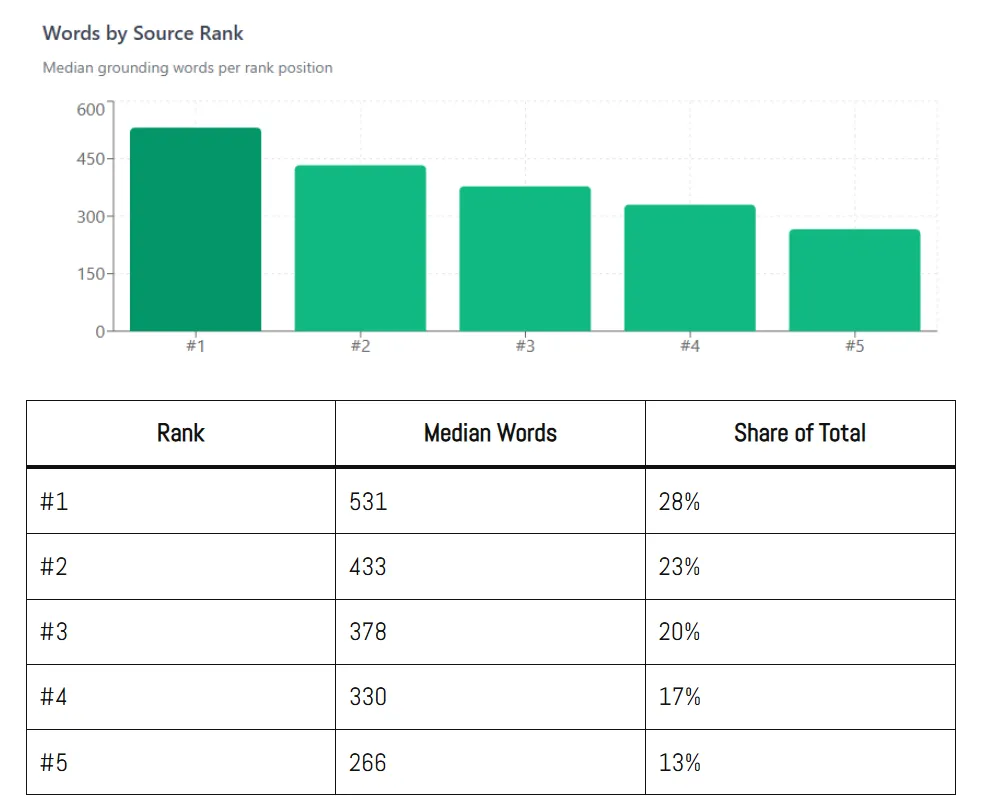

Content saturation, intent overlap, internal competition, and AI-driven summaries all flatten returns. Publishing at the same pace doesn’t guarantee the same impact quarter over quarter.

By February, teams are already seeing diminishing returns from “planned” content and scrambling to justify why performance isn’t tracking to projections.

What Modern SEO Roadmap Planning Actually Looks Like

Roadmaps don’t need to disappear, but they do need to change shape.

Instead of a rigid annual plan, resilient SEO teams operate on a quarterly diagnostic model, one that assumes volatility and builds flexibility into execution.

The goal isn’t to abandon strategy. It’s to stop pretending that January can predict December.

A resilient model includes:

- Quarterly diagnostic checkpoints, not just quarterly goals.

- Rolling prioritization, based on what’s actually happening in search.

- Protected capacity for unplanned technical or algorithmic responses.

- Outcome-based planning, not task-based planning.

This shifts SEO from “deliverables by date” to “decisions based on signals.”

The Quarterly Diagnostic Framework

Instead of locking a yearlong roadmap, break planning into repeatable quarterly cycles:

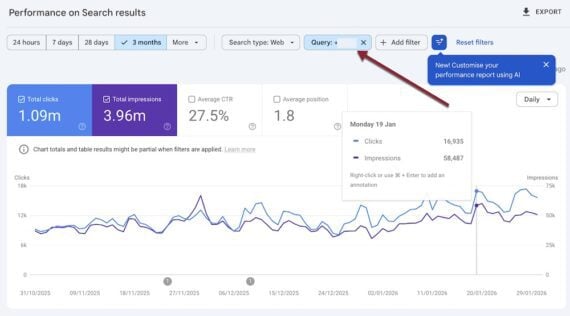

Step 1: Assess (What Changed?)

At the start of each quarter, and ideally again mid-quarter, evaluate:

- Crawl and indexation patterns.

- Ranking volatility across key templates.

- Performance deltas by intent, not just keywords.

- Content cannibalization and decay.

- Technical regressions or new constraints.

This is not a full audit. It’s a focused diagnostic designed to surface friction early.
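One way to keep this check lightweight is to script the comparison. The sketch below is a minimal illustration, not a prescribed tool: the `SegmentSnapshot` type, the segment labels, the click counts, and the 15% threshold are all hypothetical, and the numbers would come from whatever analytics export your team already uses.

```python
from dataclasses import dataclass


@dataclass
class SegmentSnapshot:
    segment: str       # hypothetical label: a template or intent bucket
    clicks_prev: int   # clicks in the previous period
    clicks_curr: int   # clicks in the current period


def flag_deltas(snapshots, threshold=0.15):
    """Return (segment, relative_change) pairs whose absolute change exceeds threshold."""
    flagged = []
    for s in snapshots:
        if s.clicks_prev == 0:
            continue  # no baseline, so a relative change is undefined
        change = (s.clicks_curr - s.clicks_prev) / s.clicks_prev
        if abs(change) > threshold:
            flagged.append((s.segment, round(change, 3)))
    # Largest declines first
    return sorted(flagged, key=lambda pair: pair[1])


# Illustrative data only
snapshots = [
    SegmentSnapshot("product pages", 1000, 800),
    SegmentSnapshot("blog: informational", 500, 510),
    SegmentSnapshot("faq", 200, 260),
]
print(flag_deltas(snapshots))
```

The point is the shape of the output: a short list of segments that moved, sorted so the biggest drops surface first, rather than a full audit report.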

Step 2: Diagnose (Why Did It Change?)

This is where most roadmaps fall apart: They track metrics but skip interpretation.

Diagnosis means asking:

- Is this decline structural, algorithmic, or competitive?

- Did we introduce friction, or did the ecosystem change around us?

- Are we seeing demand shifts or retrieval shifts?

Without this layer, teams chase symptoms instead of causes.
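The demand-versus-retrieval question can be roughed out as a first-pass triage rule. The heuristic below is an assumption for illustration, not an established method: it treats a large impressions change with a roughly steady average position as a demand shift, and a large impressions change accompanied by a position change as a retrieval shift. The function name and both tolerances are hypothetical and would need tuning against your own data.

```python
def demand_or_retrieval(impressions_change, avg_position_change,
                        impressions_tolerance=0.10, position_tolerance=1.0):
    """First-pass label for a performance change (illustrative heuristic only).

    impressions_change: relative change in impressions, e.g. -0.25 for a 25% drop.
    avg_position_change: change in average ranking position, in positions.
    """
    if abs(impressions_change) < impressions_tolerance:
        return "stable"
    if abs(avg_position_change) <= position_tolerance:
        # Visibility held steady, so the change likely came from search volume
        return "possible demand shift"
    # Visibility itself moved, so look at ranking and retrieval behavior
    return "possible retrieval shift"


print(demand_or_retrieval(-0.25, 0.3))
print(demand_or_retrieval(-0.25, 4.0))
```

A label like this is a starting point for a conversation, not a diagnosis. It narrows where to look before anyone reprioritizes work.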

Step 3: Fix (What Actually Matters Now?)

Only after diagnosis should priorities shift. That shift may involve pausing content production, redirecting engineering resources, or deliberately doing nothing while volatility settles. Resilient planning accepts that the “right” work in February may bear little resemblance to what was approved in January.

How To Audit Mid-Quarter Without Panicking

Mid-quarter reviews don’t mean throwing out the plan. They mean stress-testing it.

A healthy mid-quarter SEO check should answer three questions:

- What assumptions no longer hold?

- What work is no longer high-leverage?

- What risk is emerging that wasn’t visible before?

If the answer to any of those questions changes how you execute, that’s not failure. It’s adaptive planning.
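The first question is easier to answer if the plan's assumptions were written down as something checkable. As a minimal sketch, assuming each assumption can be tied to one metric and an expected range (the `Assumption` type, metric names, and ranges here are hypothetical):

```python
from dataclasses import dataclass


@dataclass
class Assumption:
    name: str     # plain-language statement from the January plan
    metric: str   # hypothetical metric key used to test it
    low: float    # lowest value consistent with the assumption
    high: float   # highest value consistent with the assumption


def broken_assumptions(assumptions, observed):
    """Return names of assumptions whose metric is missing or outside its expected range."""
    broken = []
    for a in assumptions:
        value = observed.get(a.metric)
        if value is None or not (a.low <= value <= a.high):
            broken.append(a.name)
    return broken


# Illustrative data only
plan = [
    Assumption("Index coverage stays stable", "indexed_ratio", 0.90, 1.00),
    Assumption("New content keeps growing clicks", "clicks_growth", 0.00, 1.00),
]
observed = {"indexed_ratio": 0.95, "clicks_growth": -0.08}
print(broken_assumptions(plan, observed))
```

Anything this returns is a prompt to revisit the plan, not an automatic change to it.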

The teams that struggle are the ones afraid to admit the plan needs to change.

The Bottom Line

The acceleration introduced by AI-driven retrieval has shortened the gap between planning and obsolescence.

January SEO roadmaps don’t fail because teams lack strategy. They fail because they assume a level of stability that search has not offered in years. If your SEO plan can’t absorb algorithmic shifts, technical debt, and nonlinear content returns, it won’t survive the year. The difference between teams that struggle and teams that adapt is simple: One plans for certainty, the other plans for reality.

The teams that win in search aren’t the ones with the most detailed January roadmap. They’re the ones that can still make good decisions in February.

Featured Image: Anton Vierietin/Shutterstock