Google Research’s ALDRIFT: AI Answers That Do More Than Sound Plausible via @sejournal, @martinibuster

Google Research published a paper that studies how to make generative AI systems produce answers that do more than sound plausible. The researchers say that their ALDRIFT framework “opens exciting avenues” for moving beyond answers that merely have a high probability.

The paper, titled “Sample-Efficient Optimization over Generative Priors via Coarse Learnability,” examines a problem in which generated answers must remain likely under a model while also moving toward a separate goal. The research points toward new avenues for addressing the AI plausibility trap.

Google ALDRIFT

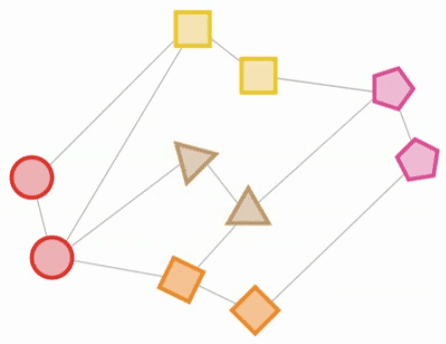

The paper centers on a framework called ALDRIFT (Algorithm Driven Iterated Fitting of Targets). The method repeatedly refines a generative model toward lower-cost answers and uses a correction step to reduce the error that accumulates during the process.

The paper also introduces “coarse learnability.” The term means the learned model does not need to perfectly match the ideal target. It needs to keep enough coverage over important parts of the answer space so useful possibilities are not lost too early. Under that assumption, the authors prove that ALDRIFT can approximate the target distribution with a polynomial number of samples.

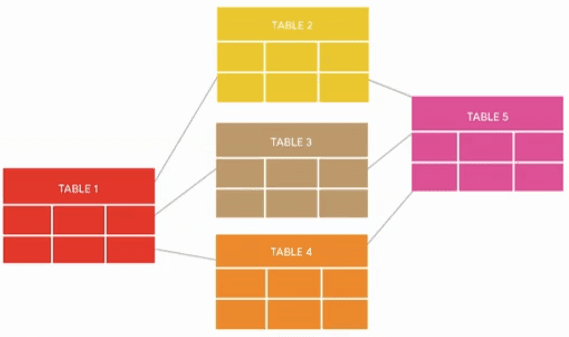

ALDRIFT Operates On A Two-Part Setup

ALDRIFT operates on a two-part setup:

- The generative model represents what kinds of answers remain likely under the model.

- The outside scoring process measures whether a candidate answer performs well against the target goal.

The authors describe that score as a “cost.” The word “cost” refers to the measured penalty assigned to a candidate answer. A lower cost means the candidate did better according to the requirement being checked. ALDRIFT does not simply search for any low-cost answer. It searches for answers that score well while still remaining likely under the generative model.
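The balance between "likely under the model" and "low cost" can be pictured with a toy reweighting. The sketch below is an illustration only, not the paper's formulation: the candidate names, the probabilities, the costs, and the exponential weighting with a `temperature` parameter are all assumptions made for the example.

```python
import math

def tilted_distribution(prior, cost, temperature=1.0):
    """Reweight prior probabilities by exp(-cost / temperature), then renormalize.

    Assumed form for illustration; the article does not state the paper's formula.
    """
    weights = {x: p * math.exp(-cost(x) / temperature) for x, p in prior.items()}
    total = sum(weights.values())
    return {x: w / total for x, w in weights.items()}

# Hypothetical generative model: how likely each candidate answer is.
prior = {"plan_a": 0.6, "plan_b": 0.3, "plan_c": 0.1}

# Hypothetical outside scoring process: lower cost is better.
costs = {"plan_a": 2.0, "plan_b": 0.0, "plan_c": 0.0}

target = tilted_distribution(prior, costs.get, temperature=1.0)
best = max(target, key=target.get)
print(best, {x: round(p, 3) for x, p in target.items()})
```

In this toy setup, `plan_a` is the most likely answer under the model but carries a high cost, while `plan_b` and `plan_c` share the lowest cost. The reweighted distribution favors `plan_b`, the low-cost candidate that the model also considers more likely.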

Some AI Answers Need To Work As A Whole

The researchers focus on problems where the AI's response has to function in the real world. Their examples are route planning and conference planning.

- Route planning: The paper explains that an LLM may evaluate whether individual route segments are scenic, but may struggle to ensure that those segments connect into a valid path.

- Conference planning: An LLM may group sessions by topic, while a classical algorithm may be needed to schedule those sessions into a timetable without conflicts.

These examples show why the paper treats plausible answers as only part of the problem. The harder issue is producing answers that remain coherent when separate parts have to work together as one complete solution.
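The route planning case can be made concrete with a small check. The sketch below is hypothetical: the place names, the scenic scores, and the `is_valid_route` helper are invented for illustration and do not come from the paper. It shows how a set of segments can score well one by one and still fail as a complete route.

```python
def is_valid_route(segments, start, goal):
    """Return True if the segments chain from start to goal without gaps."""
    position = start
    for seg_start, seg_end in segments:
        if seg_start != position:
            return False  # the next segment does not begin where the last one ended
        position = seg_end
    return position == goal

def average_score(route, scores):
    """Average the per-segment scores of a route."""
    return sum(scores[segment] for segment in route) / len(route)

# Hypothetical per-segment scenic scores, standing in for a model's judgment.
scenic = {("A", "B"): 0.9, ("C", "D"): 0.95, ("B", "D"): 0.4}

scenic_but_broken = [("A", "B"), ("C", "D")]  # high scores, but B does not connect to C
connected = [("A", "B"), ("B", "D")]          # lower scores, but a valid path

for route in (scenic_but_broken, connected):
    print(route, average_score(route, scenic), is_valid_route(route, "A", "D"))
```

The first route has the higher average scenic score but cannot be driven, because its segments do not connect. The second scores lower on each part and works as a whole.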

The Coarse Learnability Assumption

The paper treats this as a problem of guiding a generative model toward answers that hold together across all their parts. The authors connect the problem to inference-time alignment, where a model is adjusted during use based on whether a specific answer works as a complete solution. That connection gives the research practical relevance, although the paper’s contribution remains theoretical and depends on the coarse learnability assumption.

The phrase “coarse learnability assumption” means the paper’s theory depends on an assumption that the model can keep enough useful possibilities available while it is being pushed toward better answers.

It does not mean the model has to learn the target perfectly. It means the model has to preserve enough coverage of the answer space so the process does not get stuck too early or lose possible better answers.
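A toy loop can illustrate the general idea of refining a model in stages while preserving coverage. This is a minimal sketch under stated assumptions, not the ALDRIFT algorithm: the four candidates, the `betas` schedule, the importance-weight correction, and the `floor` mixing step are all stand-ins chosen for the example.

```python
import math
import random

def refine(prior, cost, betas, n_samples=2000, floor=0.01, seed=0):
    """Iteratively refit a categorical model toward lower-cost candidates."""
    rng = random.Random(seed)
    items = list(prior)
    model = dict(prior)  # start from the generative prior
    for beta in betas:
        # Sample candidates from the current fitted model.
        draws = rng.choices(items, weights=[model[x] for x in items], k=n_samples)
        # Illustrative correction step: weight each draw by target / model,
        # so errors in earlier fits are not carried into this round.
        totals = {x: 0.0 for x in items}
        for x in draws:
            totals[x] += prior[x] * math.exp(-beta * cost[x]) / model[x]
        z = sum(totals.values())
        fitted = {x: totals[x] / z for x in items}
        # Illustrative coverage floor: mix in a little of the prior so that
        # no candidate's probability drops to zero.
        model = {x: (1 - floor) * fitted[x] + floor * prior[x] for x in items}
    return model

# Hypothetical prior and costs over four candidate answers.
prior = {"a": 0.5, "b": 0.3, "c": 0.15, "d": 0.05}
cost = {"a": 3.0, "b": 1.0, "c": 0.0, "d": 0.0}

model = refine(prior, cost, betas=[0.5, 1.0, 2.0])
print(max(model, key=model.get))
```

The floor is the part that loosely mirrors the coverage idea: every candidate keeps some probability in every round, so a candidate that looks poor early can still be sampled later.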

Existing Optimization Methods Leave Sample-Limited Gaps

The paper identifies several gaps in how existing optimization methods are understood:

- Limitation of existing methods: Classical model-based optimization methods rely on “asymptotic convergence arguments.” This means they are theoretically understood after very large amounts of sampling, but not necessarily in practical settings with limited samples.

- Failure with expressive models: The paper says these classical assumptions “break down” when using expressive generative models like neural networks.

- Gap in understanding: The authors say the “finite-sample behavior” of optimization in this setting is “theoretically uncharacterized.” That means the theory does not fully explain how these methods behave when only limited samples are available.

The paper’s solution is to introduce “coarse learnability” to explain how a generative model can be pushed toward better answers while keeping enough useful possibilities available along the way.

The LLM Evidence Is Limited

The paper’s main proof applies to analytic generative models, which are easier to analyze mathematically than modern LLMs. The LLM evidence is narrower: the authors use GPT-2 in simple scheduling and graph-related problems, showing behavior that supports the idea without proving that the same assumptions hold for modern LLMs.

The Research Points To A Foundation For Future Research

The paper offers a theoretical foundation for studying how generative models could be combined with external checking processes.

The research shows that Google researchers are exploring a framework for addressing the “plausible answer” problem, and the authors write that the “framework opens exciting avenues for future research.” They conclude that this research points “toward a principled foundation for adaptive generative models.”

Takeaways

- The “Coverage” Requirement: Coarse learnability means the model does not have to learn the target perfectly. It needs to avoid losing useful areas of the answer space where better solutions might exist.

- The Correction Step Matters: ALDRIFT uses a correction step to keep the search closer to the intended target as the model is pushed toward better answers.

- Two-Part Approach: The framework uses a division of labor. The generative model handles qualitative or semantic preferences, while a separate process checks whether the answer works as a complete solution.

- Limited LLM Evidence: Tests with GPT-2 showed behavior that supports the idea in simple scheduling and graph-related examples, but not proof that the same assumptions hold for modern LLMs.

- Real-World Use Is The Larger Goal: The research matters to SEOs and businesses because AI answers are increasingly expected to do more than summarize information. They need to support decisions, plans, and actions that hold together outside the chat interface. While the framework is likely not being used in production, it does show Google is making progress on providing answers that are more than plausible.

Read the research paper here:

Sample-Efficient Optimization over Generative Priors via Coarse Learnability (PDF)

Featured Image by Shutterstock/Faizal Ramli