Google On AI Search & Why Browsy Queries Favor Full SERPs via @sejournal, @martinibuster

Google’s Liz Reid recently discussed what goes on behind the scenes of AI Search, particularly with the fragmentation of complex queries into smaller ones and a relatively new concept, Browsy Queries. Her feedback offers insights on what SEOs should be focusing on right now in order to perform better in AI search surfaces.

Search Behavior Is Varied, Not Monolithic

Host Joe Weisenthal asked Liz Reid about user behavior patterns in search: how users choose between classic search and AI search, and how queries differ depending on which one they choose.

Liz Reid answered by first defining what she is talking about, linking classic search and AI Mode together as Search, then positioning Gemini as something else that is fundamentally different.

She also stated that there is a massive number of users whose search behaviors vary across all search surfaces. In essence, she was saying that there isn’t a monolithic user behavior pattern in which people are doing the exact same searches, which is the kind of pattern the interviewer was asking about.

Liz Reid answered:

“There’s sort of your main search page. There’s AI Mode. That’s part of search.

And then there’s the Gemini app.

And I would say there’s a lot of users, so their behavior varies across all of them.”

Search And AI Usage Patterns Are Complex

The SEO and publishing community often thinks about Search as Google but Liz Reid says that user behavior patterns point to a more complex search ecosystem where users are relying on multiple platforms.

She continued her answer:

“But there are some patterns. There’s plenty of people who co-use across them. There’s plenty of people that are actually using several AI products right now, just in general, not even just within Google.

Across Gemini and Search, the more informational ones… Like, if it’s an informational query, then the probability that they’re using Search or AI Mode is going to be higher.

If it’s a creative query, it’s like more of a productivity question like, please rewrite this to make it sound more formal, right? Those type questions are going to be more Gemini-oriented.

Between AI Mode and Search, the main search page, some people use AI Mode mostly via AI overviews. They start in AI overviews and they transition.

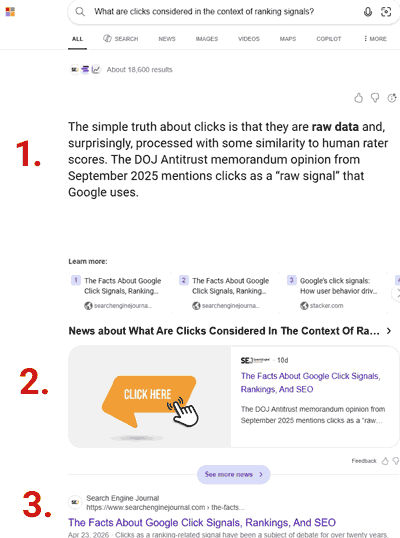

For those who go direct to AI Mode, they tend to do that for queries that they consider sort of more complex, longer questions, questions where they expect that they’re going to do more follow-ups, versus if you’re doing a very browsy query, you might choose to prefer all of the SERP.”

Browsy Queries And Browse Search Intent

When we think about search, it may be useful to consider that people not only search across platforms, but they do it for different reasons.

Takeaways About How People Use AI

- Co-Users: People use multiple platforms simultaneously (co-use).

- Informational Queries: These tend to happen on Classic Search and AI Mode.

- Creative Queries: These tend to happen on Gemini.

- AI Mode Direct: Queries that originate on AI Mode, where people navigate directly to AI Mode, tend to be complex, what was traditionally called longtail.

- Browsy Queries: This is a relatively new phrase that Googlers apparently use.

What Are Browsy Queries?

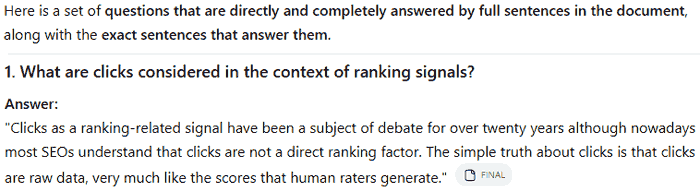

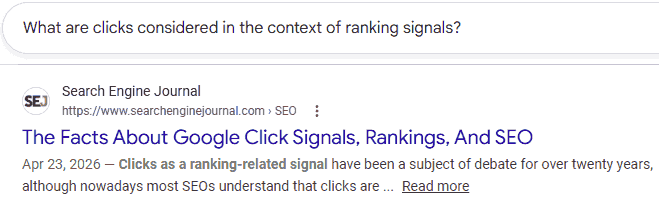

The phrase “browsy queries” appears to be something that Googlers use internally and may be more familiar to people who do Pay Per Click advertising. There aren’t many public instances of the phrase, but here’s how Google uses it.

A software engineer formerly of DeepMind and Google describes in her LinkedIn Profile having created a machine learning model that identifies “browse intention” queries on Google Search, an invention that improved click-through rates by 5%.

She wrote:

“Built a machine learning model to identify ‘browse intention’ query on Google Search, which presents engaging content on search result pages for browsy queries (e.g. “best places to visit in Orlando”). Improved global search result click-through rate by 5%”

The phrase “browsy queries” is also used in a Google job description for a commerce software engineer, placing the phrase in the context of shopping queries.

“Commerce Retrieval researches and develops high-precision algorithms to reduce the search space for product queries by 8 orders of magnitude under tight latency and compute constraints. Our solutions are tailored to the unique complexities of the Shopping domain including browsy queries, a hierarchical schema, and short multimodal documents.”

It’s also used on a Google support page for video ads:

“These new shoppable formats will be shown to potential customers in lower intent, more “browsy” Search placements earlier in their shopping journey.”

What Browsy Queries Means And How To Optimize For It

What’s consistent across all three uses is that “browsy queries” are defined by a discovery-level intent stage.

In each example, Google is identifying what will keep the user exploring:

- The DeepMind example ties browsy queries to engaging content that a user wishes to browse through, not direct answers.

- The commerce job role positions browsy queries as a quality of commerce search.

- The ads example places browsy queries earlier in the shopping journey at about the discovery phase.

The useful takeaway is that Google treats these queries as exploration problems. What makes browsy queries complex is that they have under-specified user intent and are the result of consumers who may be looking for inspiration.

For an SEO or an online merchant, it means that a user has intent but hasn’t narrowed down what they want. That’s where contexts like “Stylish Outfits For Summer” come in handy. Broad keyword phrases are probably useful here. I like a pyramid structure where the deeper a user gets into a page, the more specific it may become.

Keyword Fragmentation In AI Search

Liz Reid explained that users have always wanted to express longer natural language queries but were forced to narrow them down to keywords like “best restaurants in New York” even though what they really wanted may have been more specific like a restaurant with vegan options and an opening for a party of five.

For as long as I’ve been in SEO, and I’m near 30 years in the business, keyword research has been the foundation of digital marketing. You pick the keywords you want to rank for then create the content in a way that is optimized for that keyword. The problem with optimizing for a short keyword phrase is that there are hidden meanings within that keyword and that’s always been the case.

The way Google has addressed the issue of latent meanings within keywords is to use things like clicks to better understand what users meant when they typed ambiguous keyword phrases like “restaurants in New York.” Some SEOs believe that the clicks were used for ranking websites, but another use for clicks is understanding what people mean when they type ambiguous phrases. What Google has done for quite a while now is to rank the most popular meaning of the keyword phrase first. No matter how many links a page received, if the content aligned with a less popular meaning, the page wouldn’t rank.

Liz Reid said that people who use AI-based search are using longer queries that articulate what the problem or information need is, making it easier for Google to fetch the information they’re looking for. That change gets to the heart of the problem with organic search that AI search is solving, and the implications for SEO are profound.

Liz Reid begins:

“We have seen with AI overviews meaningfully longer queries. We see more natural language queries, but it’s also not even something as basic as that.

It can also be like you were searching for restaurants. We used to laugh about the like before I worked on search, I worked on maps and local, some of the intersection with search, and people would just be like, “restaurants New York.”

And you’re like, what do you want me to do with that query? Like, okay, the best restaurants in New York are going to take three months and 99.9% of the population can’t afford to go to them.

Okay, but like, are you picking 10 random ones, etc.?

But like, part of why people would do that is they had a much more complex– I want a restaurant in this location for five people. It can’t be too pricey. I have a vegan member. I also have kids. That was the question they had in their mind.

And in the old world of keyword-ese, that information would be spread throughout the web. And so you wouldn’t feel confident you could just put in the question.

And now with AI Overviews and AI Mode, you can start to actually, and you see people do this, they tell you the real problem, right?

They don’t take their need and translate it to what the computer understands. They try to give the computer their actual need and expect us to do the translation.”

The big ideas to unpack there are:

- A typical complex question asked in AI Search may not be solved by one web page.

- Complex questions may be one-off and rarely, if ever, repeated, which in many cases may lower the value of optimizing for those phrases, because the time used for crafting them could be more profitably spent doing something else.

- Given that a site will likely share the AI Overviews (AIO) space with another site, there is a greater need to optimize other factors, such as brand icons that stand out in a positive way, use of images that are relevant, and even the use of videos, to claim as much AIO space as possible.

- And yet, perhaps the bigger takeaway is that it’s not all longtail. Google breaks down longtail phrases into smaller, highly specific keyword phrases that each reflect a portion of the information need, a process called query fan-out, and fires those off to classic search. Google’s AI then picks from among the top three results for each query and uses them to synthesize an answer.

So it’s not really the case that SEOs should optimize for long-tail queries. Query fan-out uses Classic Search, which brings it all back to the specific queries that web pages are relevant and optimized for.
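To make the fan-out idea concrete, here is a minimal sketch in Python. It is only an illustration of the process as described above, not Google’s implementation: the sub-queries are written by hand (how Google actually splits a long question is not public), the `TOY_INDEX` rankings and `.example` URLs are made up, and the function names are hypothetical.

```python
from typing import Callable, Dict, List

# Hypothetical toy index standing in for classic search rankings.
# Every query and URL here is invented for illustration.
TOY_INDEX: Dict[str, List[str]] = {
    "vegan restaurants new york": [
        "site-a.example/vegan-nyc",
        "site-b.example/vegan-guide",
        "site-c.example/family-dining",
        "site-d.example/vegan-list",
    ],
    "kid friendly restaurants new york": [
        "site-c.example/family-dining",
        "site-e.example/kids-menu",
    ],
    "affordable group dining new york": [
        "site-f.example/cheap-eats",
        "site-a.example/vegan-nyc",
        "site-g.example/groups",
    ],
}


def toy_search(query: str) -> List[str]:
    """Return the ranked URLs for a query, or an empty list if unknown."""
    return TOY_INDEX.get(query, [])


def fan_out(
    sub_queries: List[str],
    search: Callable[[str], List[str]],
    top_k: int = 3,
) -> Dict[str, List[str]]:
    """Send each sub-query to search and keep only the top_k results."""
    return {query: search(query)[:top_k] for query in sub_queries}


def candidate_pages(results: Dict[str, List[str]]) -> List[str]:
    """Merge the per-query results into one de-duplicated list of pages."""
    pages: List[str] = []
    for urls in results.values():
        for url in urls:
            if url not in pages:
                pages.append(url)
    return pages


# Hand-written decomposition of: "a restaurant in New York for five people,
# not too pricey, with vegan options, that works for kids".
SUB_QUERIES = [
    "vegan restaurants new york",
    "kid friendly restaurants new york",
    "affordable group dining new york",
]

results = fan_out(SUB_QUERIES, toy_search)
pages = candidate_pages(results)
print(pages)
```

In this toy example the page ranked fourth for the vegan sub-query never becomes a candidate, while pages that rank in the top three for a specific sub-query do, which is the point made above about specific queries still mattering.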

Addressing Real Needs

Reid didn’t go into detail about this point but it’s interesting anyway because she said that the process of breaking a complex natural language query into smaller queries becomes a quality issue. One of the problems with AI Search is that people aren’t searching with the same keyword phrases which means that Google can’t cache similar queries in the same way it can with organic search.

She explained:

“I think it means you have to do, it’s a harder job on quality, right?

You have to take this question, there’s many parts, and you have to figure out how you break it apart. And you have to do work to think about things like latency, because you can’t just, you know, if everyone uses the same keyword and it’s not personalized, then you can cache it all. If all of a sudden the queries get much more diverse, you know, it has consequences there.

But I think we just see that it’s very empowering people, right? That it takes some of the work out of searching.

A few years ago, they said, What more can you do with Google search? But if you actually ask them, Okay, when was the last time you spent 20 minutes searching when you would have preferred to spend 2? It’s actually not that hard for me. … And so it’s been kind of exciting to just… make people’s lives easier by helping them address their real need.”
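Reid’s caching remark can be illustrated with a small Python sketch. This is an assumption-laden toy, not a description of Google’s systems: it treats a cache as a set of normalized query strings, and the two traffic samples are invented to contrast repeated keyword queries with one-off natural language queries.

```python
from typing import List


def cache_hit_rate(queries: List[str]) -> float:
    """Fraction of queries that were already seen (and so could be served from cache)."""
    if not queries:
        return 0.0
    seen = set()
    hits = 0
    for query in queries:
        key = query.strip().lower()  # simple normalization, assumed for illustration
        if key in seen:
            hits += 1
        else:
            seen.add(key)
    return hits / len(queries)


# Invented sample: many users typing the same short keyword phrases.
keyword_traffic = ["restaurants new york"] * 8 + ["pizza near me"] * 2

# Invented sample: every user phrasing a slightly different, specific need.
natural_traffic = [
    f"restaurant in new york for {n} people with a vegan option"
    for n in range(2, 12)
]

print(cache_hit_rate(keyword_traffic))
print(cache_hit_rate(natural_traffic))
```

With the repeated keyword sample most lookups are repeats, while in the natural language sample none are, which is the latency consequence Reid alludes to when queries become more diverse.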

On the surface, the idea of addressing users’ real needs sounds like one of those unhelpful “be awesome” or “content is king” type slogans. But it’s actually a way that every SEO should be auditing web pages. Rather than limiting the scope to keywords, headings, and technical issues, take a look at how each page is filling some kind of need.

Someone today asked me to look at their website that was having trouble getting indexed. They suspected that it might be a technical issue. My response is that yeah, everyone hopes it’s a technical issue but in many cases, especially for this one I was looking at, the problem becomes apparent when looked at through the lens of asking, “what need is this page filling?” as well as by asking, “How is this not just different from some other page but different and better?”

Watch the Liz Reid interview here:

Google’s Liz Reid on Who Will Own Search in a World of AI

Featured Image by Shutterstock/Summit Art Creations