Nurturing agentic AI beyond the toddler stage

Parents of young children face a lot of fears about developmental milestones, from infancy through adulthood. The number of months it takes a baby to learn to talk or walk is often used as a benchmark for wellness, or an indicator of additional tests needed to properly diagnose a potential health condition. A parent rejoices over the child’s first steps and then realizes how much has changed when the child can quickly walk outside, instead of slowly crawling in a safe area inside. Suddenly safety, including childproofing, takes a completely different lens and approach.

Generative AI hit toddlerhood between December 2025 and January 2026 with the introduction of no-code tools from multiple vendors and the debut of OpenClaw, an open-source personal agent posted on GitHub. No more crawling on the carpet: the generative AI tech baby broke into a sprint, and very few governance principles were operationally prepared.

The accountability challenge: It’s not them, it’s you

Until now, governance has been focused on model output risks with humans in the loop before consequential decisions were made—such as with loan approvals or job applications. Model behavior, including drift, alignment, data exfiltration, and poisoning, was the focus. The pace was set by a human prompting a model in a chatbot format with plenty of back and forth interactions between machine and human.

Today, with autonomous agents operating in complex workflows, the vision and the benefits of applied AI require significantly fewer humans in the loop. The point is to operate a business at machine pace by automating manual tasks that have clear architecture and decision rules. The goal, from a liability standpoint, is no difference in enterprise or business risk between a machine operating a workflow and a human operating a workflow. CX Today summarizes the situation succinctly: “AI does the work, humans own the risk,” and California state law AB 316, which went into effect January 1, 2026, removes the “AI did it; I didn’t approve it” excuse. This is similar to parenting, where an adult is held responsible for a child’s actions that negatively impact the larger community.

The challenge is that without building in code that enforces operational governance aligned to different levels of risk and liability along the entire workflow, the benefit of autonomous AI agents is negated. In the past, governance had been static and aligned to the pace of interaction typical for a chatbot. However, autonomous AI by design removes humans from many decisions, which can affect governance.

Considering permissions

Much like handing a three-year-old child a video game console that remotely controls an Abrams tank or an armed drone, leaving a probabilistic system that can change critical enterprise data operating without real-time guardrails carries significant risks. For instance, agents that integrate and chain actions across multiple corporate systems can drift beyond privileges that a single human user would be granted. To move forward successfully, governance must shift beyond policy set by committees to operational code built into the workflows from the start.
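To make "operational code built into the workflows" concrete, here is a minimal sketch of one possible guardrail: an agent may take an action only if the human it acts for holds that same permission, so a chained workflow cannot drift past a single user's privileges. The `Principal` and `Agent` classes and the `system:action` permission strings are hypothetical names invented for illustration, not part of any product mentioned here.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Principal:
    """A human user and the permissions granted to them (hypothetical model)."""
    user_id: str
    permissions: frozenset[str]


@dataclass
class Agent:
    """An agent acting on behalf of one human sponsor (hypothetical model)."""
    agent_id: str
    sponsor: Principal
    audit_log: list[str] = field(default_factory=list)

    def authorize(self, system: str, action: str) -> bool:
        """Allow an action only if the sponsoring human holds the same permission."""
        needed = f"{system}:{action}"
        allowed = needed in self.sponsor.permissions
        verdict = "ALLOW" if allowed else "DENY"
        # Every decision is recorded so it can be audited later.
        self.audit_log.append(f"{self.agent_id} {needed} {verdict}")
        return allowed

    def run_chain(self, steps: list[tuple[str, str]]) -> list[tuple[str, str]]:
        """Walk a chain of (system, action) steps, stopping at the first denial."""
        completed = []
        for system, action in steps:
            if not self.authorize(system, action):
                break
            completed.append((system, action))
        return completed


analyst = Principal("u-100", frozenset({"crm:read", "erp:read"}))
agent = Agent("agent-7", analyst)
# The second step asks for a write the sponsor does not have, so the chain halts there.
done = agent.run_chain([("crm", "read"), ("erp", "write"), ("erp", "read")])
```

Stopping the whole chain at the first denial is a deliberate, conservative choice in this sketch; a real deployment might instead escalate the denied step to a human reviewer.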

A humorous meme around the behavior of toddlers with toys starts with all the reasons that whatever toy you have is mine and ends with a broken toy that is definitely yours. For example, OpenClaw delivered a user experience closer to working with a human assistant, but the excitement shifted as security experts realized inexperienced users could be easily compromised by using it.

For decades, enterprise IT has lived with shadow IT and the reality that skilled technical teams must take over and clean up assets they did not architect or install, much like the toddler giving back a broken toy. With autonomous agents, the risks are larger: persistent service account credentials, long-lived API tokens, and permissions to make decisions over core file systems. To meet this challenge, it’s imperative to allocate appropriate IT budget and labor up front to sustain central discovery, oversight, and remediation for the thousands of employee- or department-created agents.

Having a retirement plan

Recently, an acquaintance mentioned that she saved a client hundreds of thousands of dollars by identifying and then ending a “zombie project”: a neglected or failed AI pilot left running on a GPU cloud instance. There are potentially thousands of agents that risk becoming a zombie fleet inside a business. Today, many executives encourage employees to use AI, or else, and employees are told to create their own AI-first workflows or AI assistants. With the utility of something like OpenClaw and top-down directives, it is easy to project that the number of build-my-own agents coming to the office with their human employee will explode. Since an AI agent is a program that would fall under the definition of company-owned IP, as an employee changes departments or companies, those agents may be orphaned. There needs to be proactive policy and governance to decommission and retire any agents linked to a specific employee ID and permissions.
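A retirement policy of this kind can be sketched as a periodic sweep that compares an agent registry against current HR data. The example below is illustrative only: `AgentRecord`, `find_orphaned_agents`, and the shape of both inputs are assumptions, and a real system would pull them from an identity provider and an agent inventory.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    """One registered agent and the employee it was created under (hypothetical)."""
    agent_id: str
    owner_employee_id: str
    owner_department: str


def find_orphaned_agents(
    registry: list[AgentRecord],
    active_employees: dict[str, str],
) -> list[AgentRecord]:
    """Return agents whose owner has left the company or changed departments.

    active_employees maps employee ID to that employee's current department.
    """
    orphaned = []
    for record in registry:
        current_department = active_employees.get(record.owner_employee_id)
        owner_left = current_department is None
        owner_moved = not owner_left and current_department != record.owner_department
        if owner_left or owner_moved:
            orphaned.append(record)
    return orphaned


registry = [
    AgentRecord("invoice-bot", "e-001", "finance"),
    AgentRecord("lead-scorer", "e-002", "sales"),
    AgentRecord("ticket-triage", "e-003", "support"),
]
# e-002 moved from sales to marketing; e-003 no longer appears in HR data.
active = {"e-001": "finance", "e-002": "marketing"}
to_review = find_orphaned_agents(registry, active)
```

Flagged agents would then go to a review queue for credential revocation and decommissioning rather than being deleted automatically.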

Financial optimization is governance out of the gate

While autonomous AI sounds to some executives like a way to improve their operating margins by limiting human capital, many are finding that the ROI for human labor replacement is the wrong angle to take. Adding AI capabilities to the enterprise does not mean purchasing a new software tool with predictable instance-per-hour or per-seat pricing. A December 2025 IDC survey sponsored by DataRobot indicated that 96% of organizations deploying generative AI and 92% of those implementing agentic AI reported costs were higher or much higher than expected.

The survey separates the concepts of governance and ROI, but as AI systems scale across large enterprises, financial and liability governance should be architected into the workflows from the beginning. Part of enterprise-class governance stems from predicting and adhering to allocated budgets. Unlike the software financial models of per-seat costs with support and maintenance fees, AI is priced on consumption, and usage costs scale as the workflow scales across the enterprise: the more users, the more tokens or the more compute time, and the higher the bill. Think of it as a tab left open, or an online retailer’s digital shopping cart button unlocked on a toddler’s electronic game device.

Cloud FinOps was deterministic, but generative AI and agentic AI systems built on generative AI are probabilistic. Some AI-first founders are realizing that a single agent’s token costs can be as high as $100,000 per session. Without guardrails built in from the start, chaining complex autonomous agents that run unsupervised for long periods of time can easily blow past the budget for hiring a junior developer.
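One simple form such a guardrail could take is a hard spending cap checked before every model call. The sketch below is an assumption-laden illustration: the `TokenBudget` class, the dollar cap, and the per-1,000-token price are all hypothetical and do not reflect any vendor's actual pricing.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a charge would push a session past its spending cap."""


class TokenBudget:
    """Tracks spend for one agent session against a fixed cap (hypothetical)."""

    def __init__(self, max_usd: float, usd_per_1k_tokens: float) -> None:
        self.max_usd = max_usd
        self.usd_per_1k_tokens = usd_per_1k_tokens
        self.spent_usd = 0.0

    def charge(self, tokens: int) -> float:
        """Record token usage and return the remaining budget in dollars.

        The check happens before the spend is recorded, so a rejected
        call leaves the running total unchanged.
        """
        cost = tokens / 1000 * self.usd_per_1k_tokens
        if self.spent_usd + cost > self.max_usd:
            raise BudgetExceeded(
                f"charge of ${cost:.4f} would exceed the ${self.max_usd:.2f} cap"
            )
        self.spent_usd += cost
        return self.max_usd - self.spent_usd


# Illustrative numbers only: a $1.00 cap at $0.50 per 1,000 tokens.
budget = TokenBudget(max_usd=1.0, usd_per_1k_tokens=0.5)
remaining = budget.charge(1000)  # first call fits within the cap
```

In an agent loop, catching `BudgetExceeded` is the point at which the workflow would pause and hand control back to a human instead of continuing to spend.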

Keeping humans in the loop remains critical

The promise of autonomous agentic AI is acceleration of business operations, product introductions, customer experience, and customer retention. Shifting to machine-speed decisions without humans in or on the loop for these key functions significantly changes the governance landscape. While many of the principles around proactive permissions, discovery, audit, remediation, and financial operations/optimizations are the same, how they are executed has to shift to keep pace with autonomous agentic AI.

This content was produced by Intel. It was not written by MIT Technology Review’s editorial staff.

Why physical AI is becoming manufacturing’s next advantage

For decades, manufacturers have pursued automation to drive efficiency, reduce costs, and stabilize operations. That approach delivered meaningful gains, but it is no longer enough.

Today’s manufacturing leaders face a different challenge: how to grow amid labor constraints, rising complexity, and increasing pressure to innovate faster without sacrificing safety, quality, or trust. The next phase of transformation will not be defined by isolated AI tools or individual robots, but by intelligence that can operate reliably in the physical world.

This is where physical AI—intelligence that can sense, reason, and act in the real world—marks a decisive shift. And it is why Microsoft and NVIDIA are working together to help manufacturers move from experimentation to production at industrial scale.

The industrial frontier: Intelligence and trust, not just automation

Most early AI adoption focused on narrow optimization: automating tasks, improving utilization, and cutting costs. While valuable, that phase often created new friction, including skills gaps, governance concerns, and uncertainty about long‑term impact. Furthermore, the use cases were plentiful but not as strategic.

The industrial frontier represents a different approach. Rather than asking how much work machines can replace, frontier manufacturers ask how AI can expand human capability, accelerate innovation, and unlock new forms of value while remaining trustworthy and controllable.

Across industries, companies that successfully move into this frontier phase share two non‑negotiables:

- Intelligence: AI systems must understand how the business actually handles its data, workflows, and institutional knowledge.

- Trust: As AI begins to act in high‑stakes environments, organizations must retain security, governance, and observability at every layer.

Without intelligence, AI becomes generic. Without trust, adoption stalls.

Why manufacturing is the proving ground for physical AI

Manufacturing is uniquely positioned at the center of this shift.

AI is no longer confined to planning or analytics. It is moving into physical execution: coordinating machines, adapting to real‑world variability, and working alongside people on the factory floor. Robotics, autonomous systems, and AI agents must now perceive, reason, and act in dynamic environments.

This transition exposes a critical gap. Traditional automation excels at repetition but struggles with adaptability. Human workers bring judgment and context but are constrained by scale. Physical AI closes that gap by enabling human‑led, AI‑operated systems, where people set intent and intelligent systems execute, learn, and improve over time. Humans are essential for scaled success.

Microsoft and NVIDIA: Accelerating physical AI at scale

Physical AI cannot be delivered through point solutions. It requires agentic-driven, enterprise-grade development, deployment, and operations toolchains and workflows that connect simulation, data, AI models, robotics, and governance into a coherent system.

NVIDIA is building the AI infrastructure that makes physical AI possible, including accelerated computing, open models, simulation libraries, and robotics frameworks and blueprints that enable the ecosystem to build autonomous robotics systems that can perceive, reason, plan, and take action in the physical world. Microsoft complements this with a cloud and data platform designed to operate physical AI securely, at scale, and across the enterprise.

Together, Microsoft and NVIDIA are enabling manufacturers to move beyond pilots toward production‑ready physical AI systems that can be developed, tested, deployed, and continuously improved across heterogeneous environments spanning the product lifecycle, factory operations, and supply chain.

From intelligence to action: Human-agent teams in the factory

At the industrial frontier, AI is not a standalone system, but a digital teammate.

When AI agents are grounded in the proper operational data, embedded in human workflows, and governed end to end, they can assist with tasks such as:

- Optimizing production lines in real time

- Coordinating maintenance and quality decisions

- Adapting operations to supply or demand disruptions

- Accelerating engineering and product lifecycle decisions

For example, manufacturers are beginning to use simulation‑grounded AI agents to evaluate production changes virtually before deploying them on the factory floor, reducing risk while accelerating decision‑making.

Crucially, frontier manufacturers design these systems so humans remain in control. AI executes, monitors, and recommends, while people provide intent, oversight, and judgment. This balance allows organizations to move faster without losing confidence or control.

The role of trust in scaling physical AI

As physical AI systems scale, trust becomes the limiting factor.

Manufacturers must ensure that AI systems are secure, observable, and operating within policy, especially when they influence safety‑critical or mission‑critical processes. Governance cannot be an afterthought; it must be engineered into the platform itself.

This is why frontier manufacturers treat trust as a first‑class requirement, pairing innovation with visibility, compliance, and accountability. Only then can physical AI move from promising demonstrations to enterprise‑wide deployment.

Why this moment matters—and what’s next

The convergence of AI agents, robotics, simulation, and real‑time data marks an inflection point for manufacturing. What was once experimental is becoming operational. What was once siloed is becoming connected.

At NVIDIA GTC 2026, Microsoft and NVIDIA will demonstrate how this collaboration supports physical AI systems that manufacturers can deploy today and scale responsibly tomorrow. From simulation‑driven development to real‑world execution, the focus is on helping manufacturers cross the industrial frontier with confidence.

For manufacturing leaders, the question is no longer whether physical AI will reshape operations, but how quickly they can adopt it responsibly, at scale, and with trust built in from the start.

Discover more with Microsoft at NVIDIA GTC 2026.

This content was produced by Microsoft. It was not written by MIT Technology Review’s editorial staff.

Building a strong data infrastructure for AI agent success

In the race to adopt and show value from AI, enterprises are moving faster than ever to deploy agentic AI as copilots, assistants, and autonomous task-runners. In late 2025, nearly two-thirds of companies were experimenting with AI agents, while 88% were using AI in at least one business function, up from 78% in 2024, according to McKinsey’s annual AI report. Yet, while early pilots often succeed, only one in 10 companies actually scaled their AI agents.

One major issue: AI agents are only as effective as the data foundation supporting them. Experts argue that most companies are seeing delays in implementing AI not because of shortcomings in the models, but because they lack data architectures that deliver business context humans and agents can reliably use.

Companies need to be ready with the right data architecture, and the next few months — years, at most — will be critical, says Irfan Khan, president and chief product officer of SAP Data & Analytics.

“The only prediction anybody can reliably make is that we don’t know what’s going to happen in the years, months — or even weeks — ahead with AI,” he says. “To be able to get quick wins right now, you need to adopt an AI mindset and … ground your AI models with reliable data.”

While data has always been important for business, it will be even more so in the age of AI. The capabilities of agentic AI will be set more by the soundness of enterprise data architecture and governance, and less by the evolution of the models. To scale the technology, businesses need to adopt a modern data infrastructure that delivers context along with the data.

More business context, not necessarily more data

Traditional views often conflate structured data with high value, and unstructured data with less value. However, AI complicates that distinction. High-value data for agents is defined less by format and more by business context. Data for critical business functions, such as supply-chain operations and financial planning, is context dependent. Fine-grained, high-volume data, such as IoT, logs, and telemetry, can yield value, but only when delivered with business context.

For that reason, the real risk for agentic AI is not lack of data, but lack of grounding, says Khan.

“Anything that is business contextual will, by definition, give you greater value and greater levels of reliability of the business outcome,” he says. “It’s not as simple as saying high-value data is structured data and low-value data is where you have lots of repetition — both can have huge value in the right hands, and that’s what’s different about AI.”

Context can be derived through integration with software, on-site analysis and enrichment, or through the governance pipeline. Data lacking those qualities will likely be untrusted — one reason why two-thirds of business leaders do not fully trust their data, according to the Institute for Data and Enterprise AI (IDEA). The resulting “trust debt” has held back businesses in their quest for AI readiness. Overcoming that lack of trust requires shared definitions, semantic consistency, and reliable operational context to align data with business meaning.

Data sprawl demands a semantic, business-aware layer

Over the past decade, the most important shift in enterprise data architecture has been the separation of compute and storage and the cloud-scale flexibility it enabled, says Khan. Yet, that separation and move to cloud also created sprawl, with data housed in multiple clouds, data lakes, warehouses, and a multitude of SaaS applications.

As companies move to AI, that sprawl does not go away. In fact, the problem is growing with more than two-thirds of companies citing data siloes as a top challenge in adopting AI, with more than half of enterprises struggling with 1,000 data sources or more. While the last era was about laying the foundation on which to build software-as-a-service — separating compute and storage and building lakes — the next era is about delivering the right data to autonomous AI agents tasked with various business functions.

“Probably the biggest innovation that occurred in data management was the separation of compute and store,” Khan says. “But what’s really making a distinction now is the way that we harmonize the data and harvest the value of the data across multiple sources of content.”

To do that requires a semantic or knowledge layer that supports multiple platforms, encodes business rules and relationships, provides a business-contextual and governed view of data, and allows humans and agents to access the data in the appropriate ways. But legacy data architectures cannot power the autonomous AI systems of the future, consultancy Deloitte stated in its State of AI in the Enterprise report. Only four in 10 companies believe their data management process is ready for AI, and that’s down from 43% the previous year, suggesting that as companies explore AI deployment, they are realizing their infrastructure’s shortcomings.

Agentic AI does not replace SaaS

Some investors and technologists speculate that AI agents will make SaaS applications obsolete. Khan strongly disagrees. Over the past 15 years, value has steadily moved up the stack, from on-premises infrastructure to infrastructure as a service (IaaS) to platform as a service (PaaS) to SaaS. Agentic AI is simply the next layer. Agentic AI will have its own layer to access the data and interact with the business logic. The value rises up the stack, but nothing below disappears, he says.

“SaaS doesn’t go away,” he says. “It just means SaaS and these agents will cooperate with one another. Companies are not going to throw away their entire general ledger and replace it with an agent. What’s the agent going to do? It doesn’t know anything without business context and business processing.”

In this emerging model, the software stack is being reshaped so that applications and data provide governed context within which AI can act effectively. SaaS applications remain the systems of record, while the semantic layer becomes the business-context source of truth. AI agents become a new engagement layer, orchestrating across systems, and both humans and agents become “first-class citizens” in how they access business logic, he says.

Critically, agents cannot directly connect to every operational system. “If we’re saying agents are going to take over the world … you can’t have an agent talking to every operational backend system,” Khan warns. “It just doesn’t work that way.”

This further elevates the importance of a semantic or business-fabric layer.
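As a rough illustration of the idea, the sketch below shows agents and humans asking a shared layer for a business term instead of calling each backend directly; the layer attaches business context and enforces access rules. Every name here (`Metric`, `SemanticLayer`, the role strings, the stand-in backend call) is hypothetical and is not drawn from SAP or any other vendor's product.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Metric:
    """A governed business term plus the way to compute it (hypothetical)."""
    name: str
    description: str               # business context returned with the value
    allowed_roles: frozenset[str]  # who may read this metric
    fetch: Callable[[], float]     # wraps whichever backend holds the data


class SemanticLayer:
    """Single access point between callers and operational systems."""

    def __init__(self) -> None:
        self._metrics: dict[str, Metric] = {}

    def register(self, metric: Metric) -> None:
        self._metrics[metric.name] = metric

    def query(self, name: str, role: str) -> dict:
        """Return a value with its context, or refuse if the role is not allowed."""
        metric = self._metrics[name]
        if role not in metric.allowed_roles:
            raise PermissionError(f"role {role!r} may not read {name!r}")
        return {
            "metric": name,
            "value": metric.fetch(),
            "context": metric.description,
        }


layer = SemanticLayer()
layer.register(
    Metric(
        name="open_invoices_total",
        description="Sum of unpaid customer invoices, in USD, from the general ledger",
        allowed_roles=frozenset({"finance_agent", "controller"}),
        fetch=lambda: 1250.0,  # stand-in for a real ledger query
    )
)
answer = layer.query("open_invoices_total", role="finance_agent")
```

The design point is that the agent never holds credentials for the ledger itself; it only knows the shared vocabulary the layer exposes.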

Where to start

Most enterprises need to begin where their data already lives — in platforms like Snowflake, Databricks, Google BigQuery, or an existing SAP environment. Khan says that’s normal, but warns against rebuilding old patterns of vendor lock-in.

He suggests that companies prioritize the data that matters most by focusing on preserving and providing business context to operational and application data. Companies should also invest early in governance and semantics by defining shared policies, access rules, and semantic models before scaling pilots. Finally, businesses should prioritize openness and fabric-style interoperability rather than forcing all data into one stack.

Khan cautions against aiming for full automation too early. “There is a new brave opportunity to really engage in the agentic and AI world,” Khan says. “Fully automating [critical business processes] is maybe a stretch, because there’s going to be a lot of extra oversight necessary.” Early wins will likely come from less-critical processes and from agents that work off fresh, stateful data rather than stale dashboards, he adds. As AI begins to deliver value and adoption increases, leaders must decide how to reinvest those gains to drive top-line efficiency or enter new markets.

Register for “The Fabric of Data & AI” virtual event on March 24, 2026. Hear insights from executives and thought leaders who are shaping the future of data and AI.

This content was produced by Insights, the custom content arm of MIT Technology Review. It was not written by MIT Technology Review’s editorial staff. It was researched, designed, and written by human writers, editors, analysts, and illustrators. This includes the writing of surveys and collection of data for surveys. AI tools that may have been used were limited to secondary production processes that passed thorough human review.

Pragmatic by design: Engineering AI for the real world

The impact of artificial intelligence extends far beyond the digital world and into our everyday lives, across the cars we drive, the appliances in our homes, and medical devices that keep people alive. More and more, product engineers are turning to AI to enhance, validate, and streamline the design of the items that furnish our worlds.

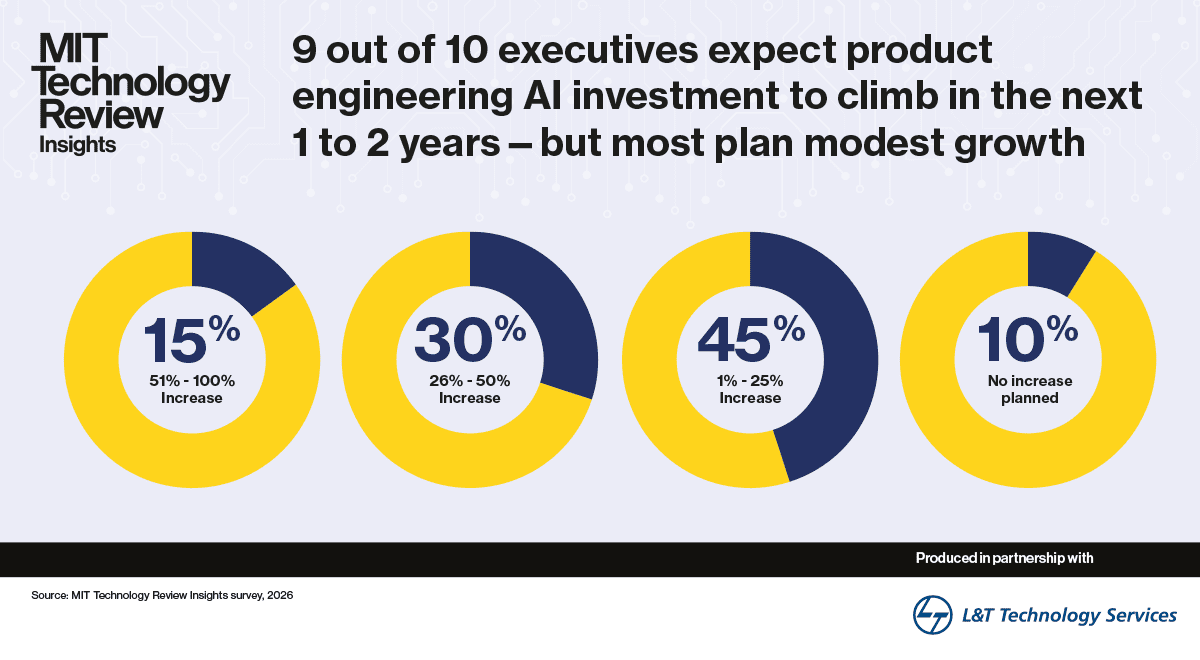

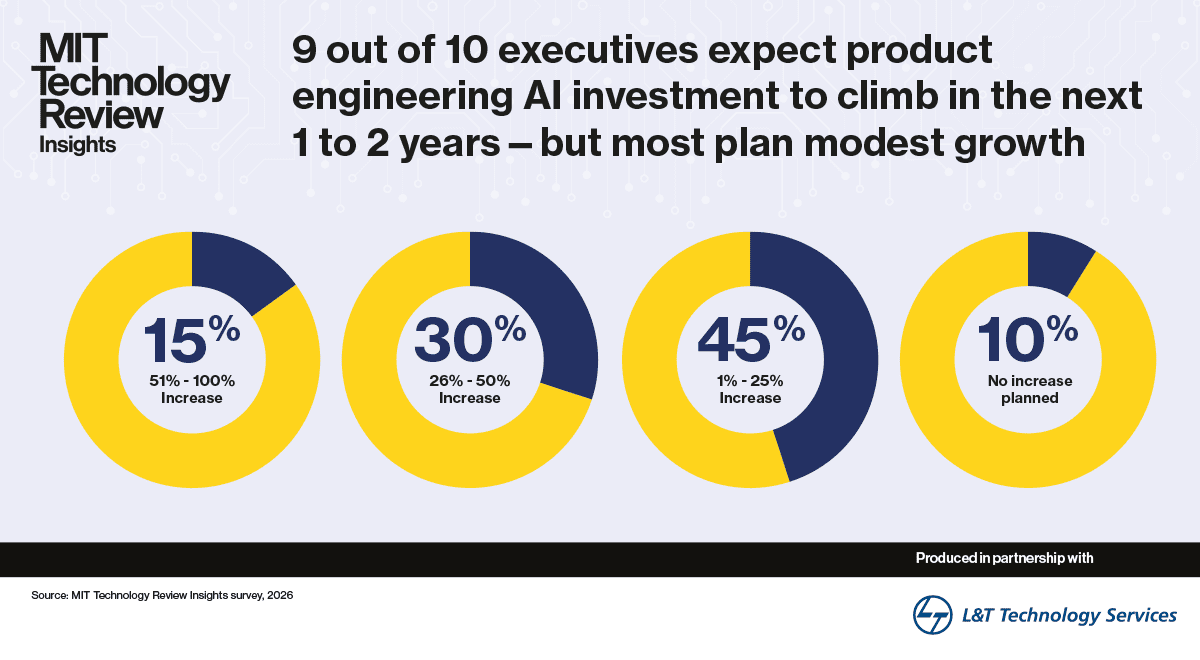

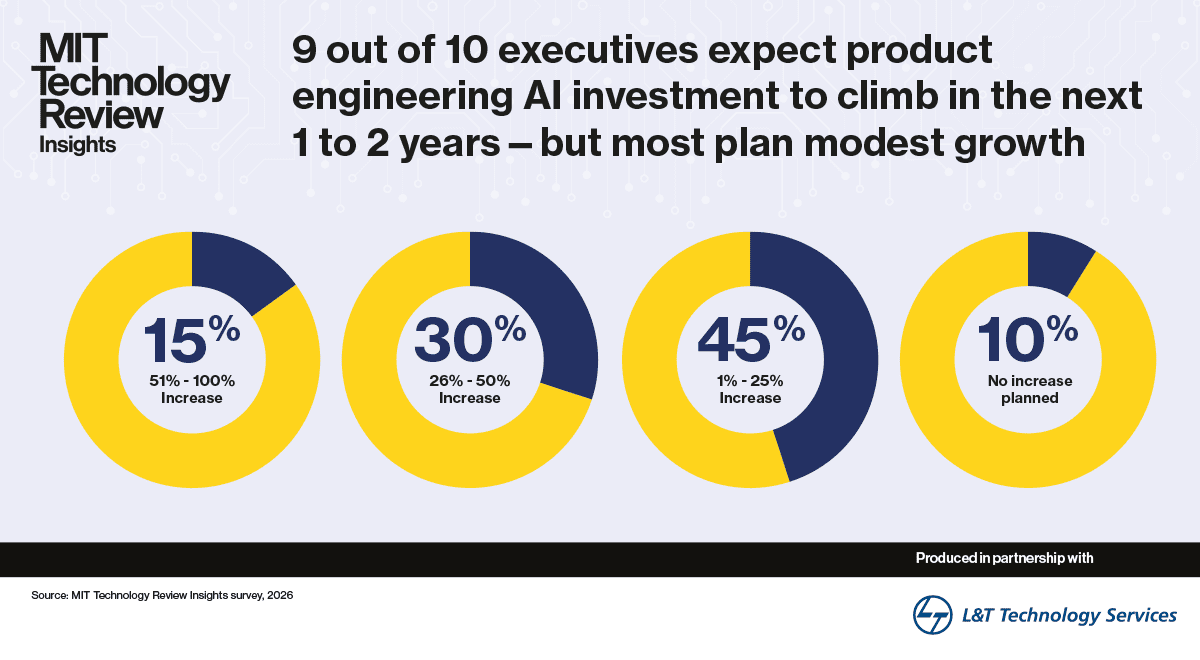

The use of AI in product engineering follows a disciplined and pragmatic trajectory. A significant majority of engineering organizations are increasing their AI investment, according to our survey, but they are doing so in a measured way. This approach reflects the priorities typical of product engineers. Errors have concrete consequences beyond abstract fears, ranging from structural failures to safety recalls and even potentially putting lives at risk. The central challenge is realizing AI’s value without compromising product integrity.

Drawing on data from a survey of 300 respondents and in-depth interviews with senior technology executives and other experts, this report examines how product engineering teams are scaling AI, what is limiting broader adoption, and which specific capabilities are shaping adoption today and will shape it in the future, along with their actual or potential measurable outcomes.

Key findings from the research include:

Verification, governance, and explicit human accountability are mandatory in an environment where the outputs are physical—and the risk high. Where product engineers are using AI to directly inform physical designs, embedded systems, and manufacturing decisions that are fixed at release, product failures can lead to real-world risks that cannot be rolled back. Product engineers are therefore adopting layered AI systems with distinct trust thresholds instead of general-purpose deployments.

Predictive analytics and AI-powered simulation and validation are the top near-term investment priorities for product engineering leaders. These capabilities—selected by a majority of survey respondents—offer clear feedback loops, allowing companies to audit performance, attain regulatory approval, and prove return on investment (ROI). Building gradual trust in AI tools is imperative.

Nine in ten product engineering leaders plan to increase investment in AI in the next one to two years, but the growth is modest. The highest proportion of respondents (45%) plan to increase investment by up to 25%, while nearly a third favor a 26% to 50% boost. And just 15% plan a bigger step change—between 51% and 100%. The focus for product engineers is on optimization over innovation, with scalable proof points and near-term ROI the dominant approach to AI adoption, as opposed to multi-year transformation.

Sustainability and product quality are top measurable outcomes for AI in product engineering. These outcomes, visible to customers, regulators, and investors, are prioritized over competitive metrics like time-to-market and innovation (rated of medium importance) and over internal operational gains like cost reduction and workforce satisfaction, which rank at the bottom. What matters most are real-world signals like defect rates and emissions profiles rather than internal engineering dashboards.

This content was produced by Insights, the custom content arm of MIT Technology Review. It was not written by MIT Technology Review’s editorial staff. It was researched, designed, and written by human writers, editors, analysts, and illustrators. This includes the writing of surveys and collection of data for surveys. AI tools that may have been used were limited to secondary production processes that passed thorough human review.

Prioritizing energy intelligence for sustainable growth

Loudoun County, Virginia, once known for its pastoral scenery and proximity to Washington, DC, has earned a more modern reputation in recent years: The area has the highest concentration of data centers on the planet.

Ten years ago, these facilities powered email and e-commerce. Today, thanks to the meteoric rise in demand for AI-infused everything, local utility Dominion Energy is working hard to keep pace with surging power demands. The pressure is so acute that Dulles International Airport is constructing the largest airport solar installation in the country, a highly visible bid to bolster the region’s power mix.

Data center campuses like Loudoun’s are cropping up across the country to accommodate an insatiable appetite for AI. But this buildout comes at an enormous cost. In the US alone, data centers consumed roughly 4% of national electricity in 2024. Projections suggest that figure could stretch to 12% by 2028. To put this in perspective, a single 100-megawatt data center consumes roughly as much electricity as 80,000 American homes. Data centers being built today are gearing up for gigawatt scale, enough to power a mid-sized city.
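The homes comparison can be checked with simple arithmetic. The sketch below assumes the facility draws its full 100 megawatts around the clock, which is a simplification (real load factors vary), and works out the annual household consumption that the 80,000-home figure implies. The function names are illustrative.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_mwh(power_mw: float, load_factor: float = 1.0) -> float:
    """Energy used in a year by a facility drawing power_mw on average.

    load_factor scales the nameplate power; 1.0 assumes constant full load.
    """
    return power_mw * HOURS_PER_YEAR * load_factor


def implied_mwh_per_home(power_mw: float, homes: int, load_factor: float = 1.0) -> float:
    """Annual consumption per home implied by a 'powers N homes' comparison."""
    return annual_energy_mwh(power_mw, load_factor) / homes


total = annual_energy_mwh(100)                 # 876,000 MWh per year
per_home = implied_mwh_per_home(100, 80_000)   # 10.95 MWh per home per year
print(f"{total:,.0f} MWh per year, or {per_home:.2f} MWh per home")
```

Under that full-load assumption, the comparison implies roughly 11 megawatt-hours per home per year, a figure readers can set against published household averages. A gigawatt-scale campus multiplies the total by ten.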

For enterprise leaders, energy costs associated with AI and data infrastructure are quickly becoming both a budget concern and a potential bottleneck on growth. Meeting this moment calls for a capability most organizations are only beginning to develop: energy intelligence. The emerging discipline refers to understanding where, when, and why energy is consumed, and using that insight to optimize operations and control costs.

These efforts stand to address both immediate financial pressures and longer-term reputational risks, as communities like Loudoun County grow increasingly concerned about the energy demands associated with nearby data center development.

In December 2025, MIT Technology Review Insights conducted a survey of 300 executives to understand how companies are thinking about energy intelligence today, as well as where they’re anticipating challenges in the future.

Here are five of our most notable findings:

- Energy intelligence is becoming a universal business priority. One hundred percent of executives surveyed expect the ability to measure and strategically manage power consumption to become an important business metric in the next two years.

- AI workloads are already driving measurable cost increases, and the surge is just beginning. Two-thirds of executives (68%) report their companies have faced energy cost increases of 10% or more in the past 12 months due to AI and data workloads. Nearly all respondents (97%) anticipate their organization’s AI-related energy consumption will increase over the next 12-18 months.

- Mounting costs are the top energy-related threat to AI innovation. Half of executives (51%) rank rising costs as the single greatest energy-related risk to their digital and AI initiatives. Most companies currently tracking and attempting to optimize data center energy consumption are motivated by cost management.

- Organizations are responding through infrastructure optimization and energy-efficient partnerships. To address mounting energy demands, three in four leaders (74%) are optimizing existing infrastructure, while 69% are partnering with energy-efficient cloud and storage providers. More than half are also implementing AI workload scheduling (61%) and investing in more efficient hardware (56%).

- Closing the measurement gap is the next frontier. Most enterprises still lack the granular data needed for true energy intelligence. This gap is especially pronounced for companies relying on third-party cloud providers and managed services for their data compute and storage needs, where 71% say rising consumption-based costs originate, yet energy metrics are often opaque.

This content was produced by Insights, the custom content arm of MIT Technology Review. It was not written by MIT Technology Review’s editorial staff. It was researched, designed, and written by human writers, editors, analysts, and illustrators. This includes the writing of surveys and collection of data for surveys. AI tools that may have been used were limited to secondary production processes that passed thorough human review.

The usability imperative for securing digital asset devices

When Tony Fadell started working on the iPod, usability often trumped security. The result was an iterative process. Every time someone would find a security weakness or a way to hack the device, the development group would iterate to add measures and fix the issues. Yet, flaws would frequently be found, and the secure design of the product became a moving target.

But when it came to designing a device specifically for security purposes, there could be no iterative process after rolling it out: Security had to be the number one priority.

“As you develop these things, you’re a victim of your own development speed,” says Fadell, who developed Ledger Stax, a signing device for securing digital assets, and is now a board member at digital asset security firm Ledger. “If you introduced these features and functions without the proper review, and now customers are demanding security, you’ll realize that you should have designed it differently from the start, and it’s very hard to undo what you’ve already done.”

A critical aspect of designing secure technology, however, must be ease of use too. Without it, it is all too simple for users to make a mistake or use an unsafe workaround that undermines device protections. Think of a Post-it note stuck to a monitor, or some variation of “123456” or “admin” for passwords.

With digital asset security devices like signers (more commonly called “wallets”), such errors could lead to seriously detrimental outcomes. If, for example, a user’s private key falls into the wrong hands, bad actors can use it to steal their digital assets. Estimates suggest that around 20% of all Bitcoin, worth around $355 billion, is inaccessible to its owners. One likely reason is that they lost their private keys.

In the past, crypto devices have been notoriously difficult to use. As cryptocurrency becomes ever more popular, valuable, and mainstream—attracting greater attention from criminals as the stakes rise—designers and engineers are prioritizing both security and usability when developing digital asset devices, drawing on in-depth research to iterate.

The three components of security

Strong security models for devices like signers, which are used to secure blockchain transactions, require three major components. First, a secure operating system. Second, a secure element to bind the software to the hardware. And third, a secure user interface. Each of these needs to be frequently tested by researchers and white hat hackers, who simulate real-world attacks to improve product resilience and usability.

The first two elements focus on securing the device software and hardware. Secure software has always been a problem, but one that has improved over the last decade, as security architectures and processes have been refined. Meanwhile, hardware security components have become widely available—from trusted platform modules on computers to secure enclaves in smartphones—allowing digital information to essentially be locked to a device.

For crypto signers, hardware must provide encryption capabilities. And the security of the software must be frequently tested. Ledger, for example, has a secure OS and a Secure Element that handles encryption primitives, and a secure display that prevents device takeover.

Security and usability working hand in hand

Asset recovery is a major consideration when designing signers. If recovery options are not easy to use, an owner could lose access. But if recovery processes are not secure enough, attackers could exploit the system. With SIM swapping attacks, for example, attackers can tap into a mobile communications channel used for account recovery and “recover” a victim’s password to steal their assets.

In the digital-asset ecosystem, the creation of the seed phrase, a sequence of 12 to 24 words that can act as a passphrase for wallets, is an example of improving both usability and security. Known more formally as Bitcoin Improvement Proposal 39 (BIP-39), the approach gives users a master password to unlock their hierarchical deterministic (HD) wallets.
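The arithmetic behind those 12 to 24 words can be sketched in a few lines of Python. This is a minimal illustration based on the published BIP-39 specification, not a description of how Ledger or any other vendor implements it. The function names are hypothetical, and the sketch deliberately skips word-list and checksum validation, so it should not be used to handle real keys.

```python
import hashlib
import unicodedata

# Entropy sizes, in bits, that the BIP-39 specification permits.
ALLOWED_ENTROPY_BITS = (128, 160, 192, 224, 256)


def mnemonic_word_count(entropy_bits: int) -> int:
    """Return how many words encode the given amount of entropy.

    BIP-39 appends a checksum of entropy_bits / 32 bits, then splits the
    result into 11-bit groups, each of which indexes a 2,048-word list.
    """
    if entropy_bits not in ALLOWED_ENTROPY_BITS:
        raise ValueError(f"unsupported entropy size: {entropy_bits}")
    checksum_bits = entropy_bits // 32
    return (entropy_bits + checksum_bits) // 11


def seed_from_mnemonic(mnemonic: str, passphrase: str = "") -> bytes:
    """Stretch a mnemonic into the 64-byte seed used by an HD wallet.

    BIP-39 specifies PBKDF2-HMAC-SHA512 with 2,048 iterations, using the
    NFKD-normalized mnemonic as the password and the string "mnemonic"
    plus an optional passphrase as the salt. This hypothetical helper
    does NOT check that the words or their checksum are valid.
    """
    password = unicodedata.normalize("NFKD", mnemonic).encode("utf-8")
    salt = unicodedata.normalize("NFKD", "mnemonic" + passphrase).encode("utf-8")
    return hashlib.pbkdf2_hmac("sha512", password, salt, 2048, dklen=64)


if __name__ == "__main__":
    for bits in ALLOWED_ENTROPY_BITS:
        print(bits, "bits of entropy ->", mnemonic_word_count(bits), "words")
```

The usability gain is that a person only has to copy down a short list of common words, while the wallet software can deterministically rebuild every key from the resulting seed.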

Achieving the proper balance between convenience and safety involves a lot of creative tension between the security team and the UX team, Fadell says, referring to Ledger’s security research team, the Donjon. “We mock things up, we prototype things from a UX UI perspective, we walk through it, then we walk the Donjon team through it,” Fadell explains. “We push back and forth to find the absolute optimal solution to balance the two.”

Through the research the Donjon team has conducted, Ledger designed its Recovery Key—an NFC-based physical card to back up your 24 words—to be both user-friendly and secure. “What we did, as a first in the industry, was include an NFC card,” says Fadell. “Instead of only writing it down, you can also have an NFC card called a Recovery Key. You can have multiple Recovery Keys and store them in a lockbox, a safety deposit box, or give them to someone you trust for safekeeping.”

A number of government initiatives are working to regulate this balance between security and usability. These include the US Cybersecurity and Infrastructure Security Agency’s Secure by Design, which aims to build cybersecurity into the design and manufacture of technology products, and the UK’s National Cyber Security Centre’s Software Security Code of Practice, which outlines security principles expected of all organizations that develop or sell software.

Enterprise security presents distinct challenges

Embedding usability and security into devices for companies adds further complexity as businesses need features such as multi-signature capabilities to protect against single points of failure, whether from external attacks or internal bad actors.

Security design can take these requirements into account, with secure governance using multiple signatures (multisig), hardware security modules (HSMs) for key storage, trusted display systems, and other usable security capabilities.

These technologies are critically important for companies who have roles in the blockchain ecosystem. Failure to establish robust security measures can have dire consequences. In 2024, for example, unknown cybercriminals made off with more than $300 million worth of assets from DMM Bitcoin, leading the Japanese cryptocurrency platform to close six months later. Japan’s Financial Services Agency discovered severe risk management issues, including inadequate oversight, lack of independent audits, and poor security practices.

For companies, allowing a multi-stage process that involves a required number of stakeholders is critical, says Fadell. “It’s making sure that the attack vector is not just one person, and so you need to support multiple people with multiple factors on all of their devices as well,” he says. “It gets to be a real combinatoric problem.”
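The idea of requiring a set number of stakeholders can be illustrated with a small approval policy. The Python sketch below is a simplified model under stated assumptions: the class name, signer names, and threshold are all hypothetical, and it only counts approvals. A real multisig scheme verifies a cryptographic signature from each signer's device, which this example does not attempt.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class ApprovalPolicy:
    """Hypothetical M-of-N policy: `threshold` of `signers` must approve."""

    signers: frozenset[str]
    threshold: int

    def __post_init__(self) -> None:
        # A threshold of zero, or one larger than the signer pool,
        # would make the policy either meaningless or unsatisfiable.
        if not 1 <= self.threshold <= len(self.signers):
            raise ValueError("threshold must be between 1 and the number of signers")

    def is_authorized(self, approvals: Iterable[str]) -> bool:
        """Return True once enough distinct, recognized signers approve."""
        # Converting to a set ignores duplicate approvals; the
        # intersection ignores anyone who is not a registered signer.
        valid = set(approvals) & self.signers
        return len(valid) >= self.threshold


if __name__ == "__main__":
    policy = ApprovalPolicy(frozenset({"alice", "bob", "carol"}), threshold=2)
    print(policy.is_authorized(["alice"]))
    print(policy.is_authorized(["alice", "carol"]))
```

Because duplicates and unknown names are discarded before counting, one compromised account cannot meet the threshold by approving twice or by adding an unregistered approver.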

R&D to stay one step ahead

To keep up with requirements and offer strong security with improved visibility, crypto firms need to invest in research and development, Fadell says. Attack labs, such as Ledger Donjon, can conduct real-world testing on specific enterprise security requirements and create scenarios to educate both management and workers about the potential threats.

Such research and development can support device designers and engineers in their never-ending mission to balance security measures with usability, so that digital asset devices can help users safeguard their holdings in a constantly evolving crypto and cyber landscape.

Learn more about how to secure digital assets in the Ledger Academy.

Bridging the operational AI gap

The transformational potential of AI is already well established. Enterprise use cases are building momentum and organizations are transitioning from pilot projects to AI in production. Companies are no longer just talking about AI; they are redirecting budgets and resources to make it happen. Many are already experimenting with agentic AI, which promises new levels of automation. Yet, the road to full operational success is still uncertain for many. And, while AI experimentation is everywhere, enterprise-wide adoption remains elusive.

Without integrated data and systems, stable automated workflows, and governance models, AI initiatives can get stuck in pilots and struggle to move into production. The rise of agentic AI and increasing model autonomy make a holistic approach to integrating data, applications, and systems more important than ever. Without it, enterprise AI initiatives may fail. Gartner predicts over 40% of agentic AI projects will be cancelled by 2027 due to cost, inaccuracy, and governance challenges. The real issue is not the AI itself, but the missing operational foundation.

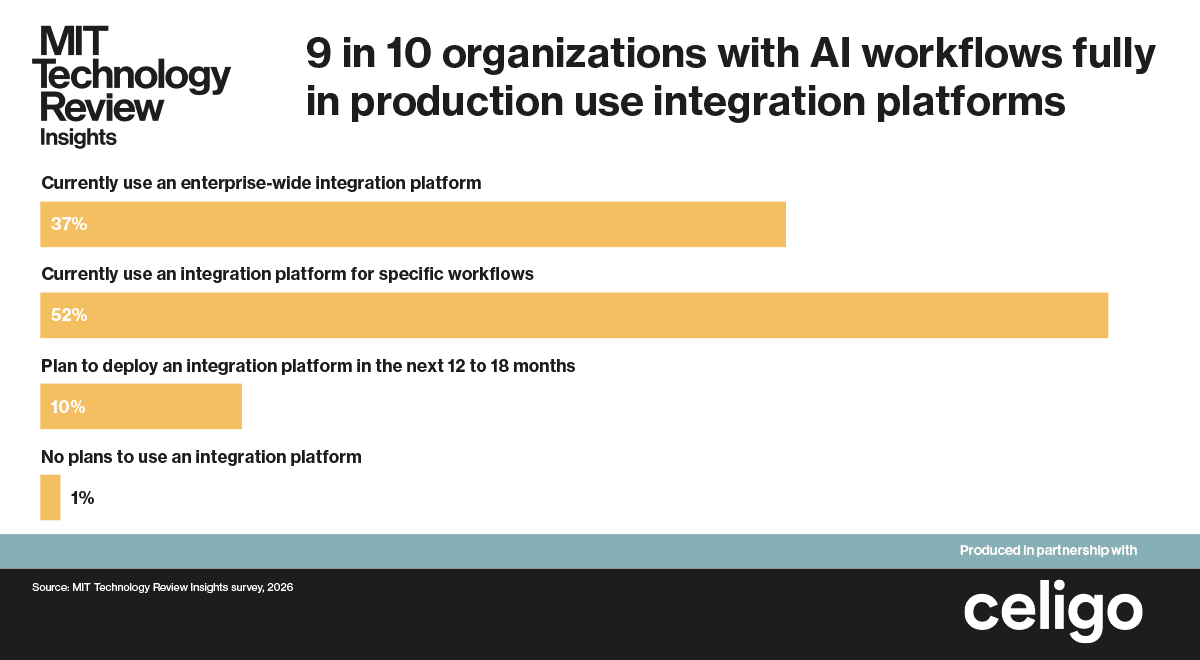

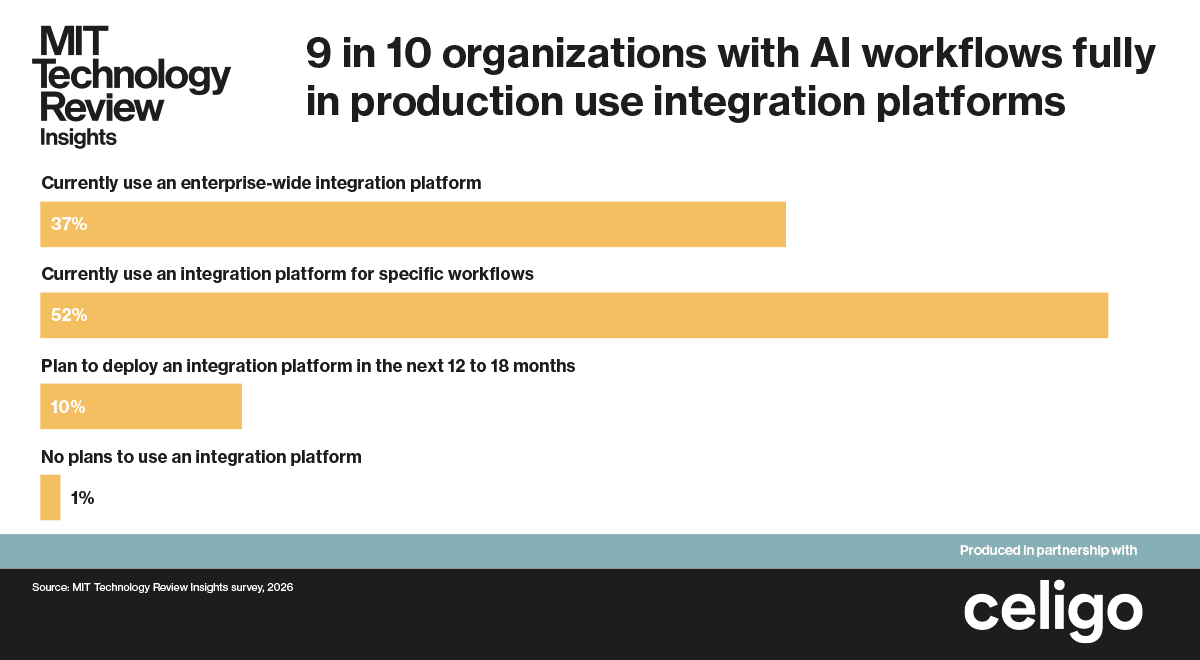

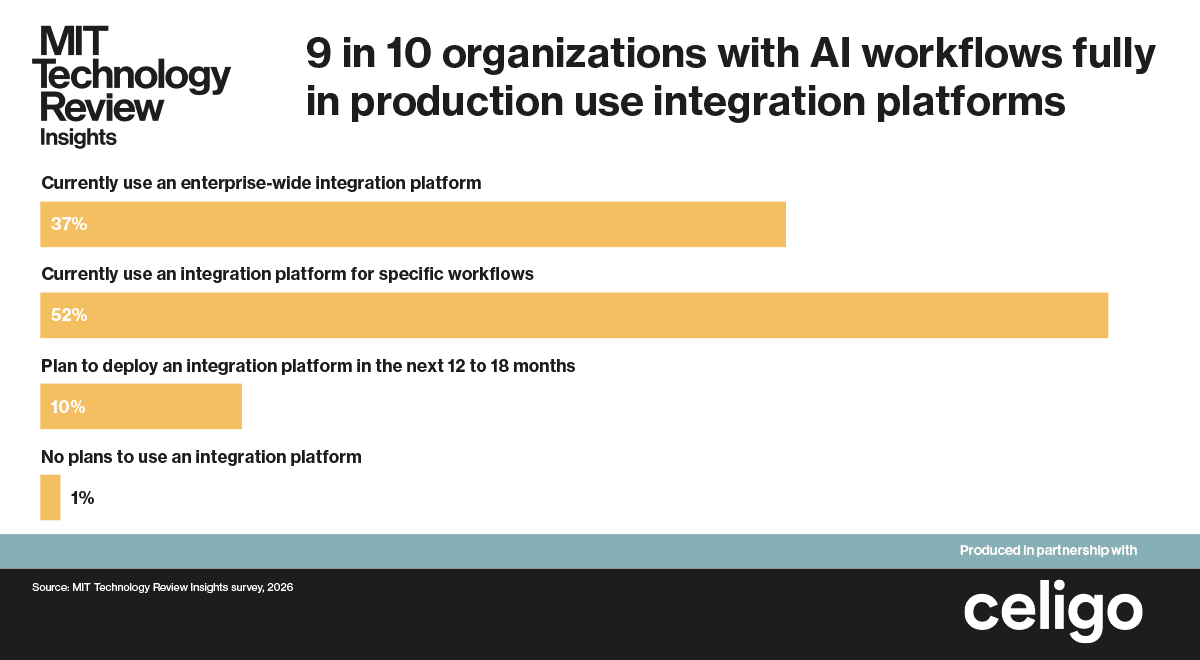

To understand how organizations are structuring their AI operations and how they are deploying successful AI projects, MIT Technology Review Insights surveyed 500 senior IT leaders at mid- to large-size companies in the US, all of which are pursuing AI in some way.

The results of the survey, along with a series of expert interviews, all conducted in December 2025, show that a strong integration foundation aligns with more advanced AI implementations, conducive to enterprise-wide initiatives. As AI technologies and applications evolve and proliferate, an integration platform can help organizations avoid duplication and silos, and have clear oversight as they navigate the growing autonomy of workflows.

Key findings from the report include the following:

Some organizations are making progress with AI. In recent years, study after study has exposed a lack of tangible AI success. Yet, our research finds three in four (76%) surveyed companies have at least one department with an AI workflow fully in production.

AI succeeds most frequently with well-defined, established processes. More than four in 10 (43%) organizations are finding success with AI implementations applied to well-defined and automated processes. A quarter are succeeding with new processes, and about one-third (32%) are applying AI to various processes.

Two-thirds of organizations lack dedicated AI teams. Only one in three (34%) organizations has a team specifically for maintaining AI workflows. One in five (21%) say central IT is responsible for ongoing AI maintenance, and 25% say the responsibility lies with departmental operations. For 19% of organizations, the responsibility is spread out.

Enterprise-wide integration platforms lead to more robust implementation of AI. Companies with enterprise-wide integration platforms are five times more likely to use more diverse data sources in AI workflows. Six in 10 (59%) employ five or more data sources, compared to only 11% of organizations using integration for specific workflows and 0% of those not using an integration platform. Organizations using integration platforms also have more multi-departmental implementation of AI, more autonomy in AI workflows, and more confidence in assigning autonomy in the future.

Finding value with AI and Industry 5.0 transformation

For years, Industry 4.0 transformation has centered on the convergence of intelligent technologies like AI, cloud, the internet of things, robotics, and digital twins. Industry 5.0 marks a pivotal shift from integrating emerging technologies to orchestrating them at scale. With Industry 5.0, the purpose of this interconnected web of technologies is more nuanced: to augment human potential, not just automate work, and enhance environmental sustainability.

Industry 5.0 has ushered in a radically new level of collaboration between humans and machines, one that removes data silos and optimizes infrastructure, operations, and resource use to disrupt business models and create new forms of enterprise value. But without discipline in tracking value creation, investments risk being wasted on incremental efficiency gains rather than strategic growth.

“To realize the promise of Industry 5.0, companies must move beyond cost and efficiency to focus on growth, resilience, and human-centric outcomes,” says Sachin Lulla, EY Americas industrials and energy transformation leader. “This requires not just new technologies, but new ways of working—where people and machines collaborate, and where value is measured not just in dollars saved, but in new opportunities created.”

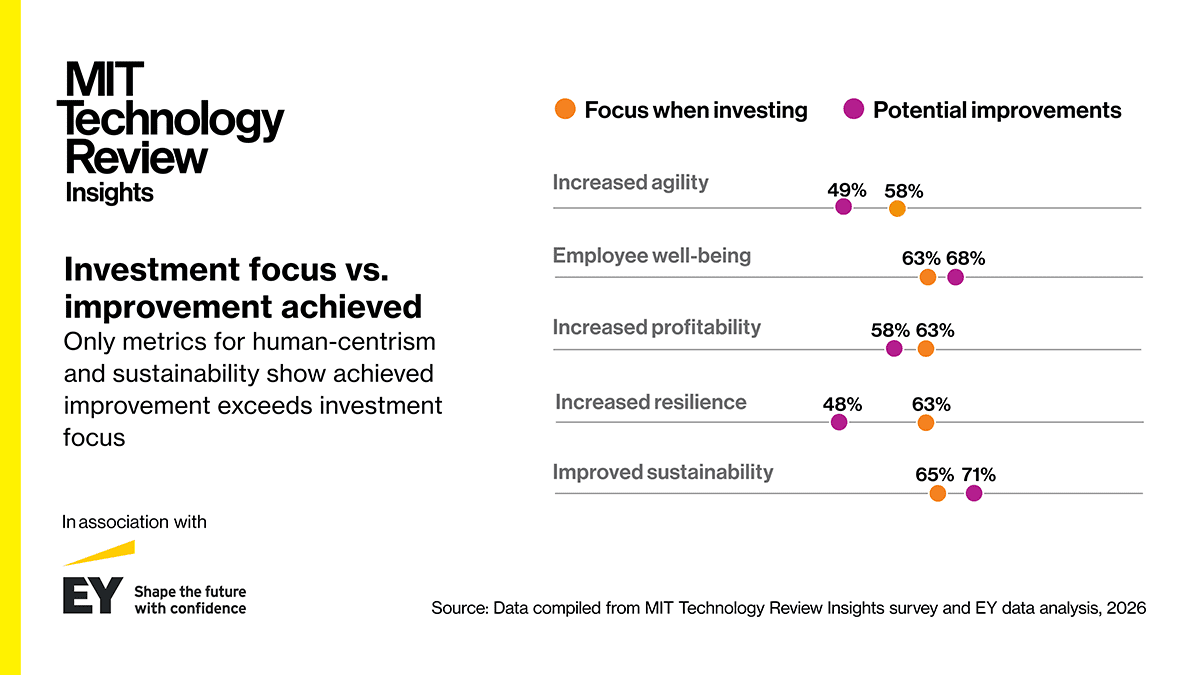

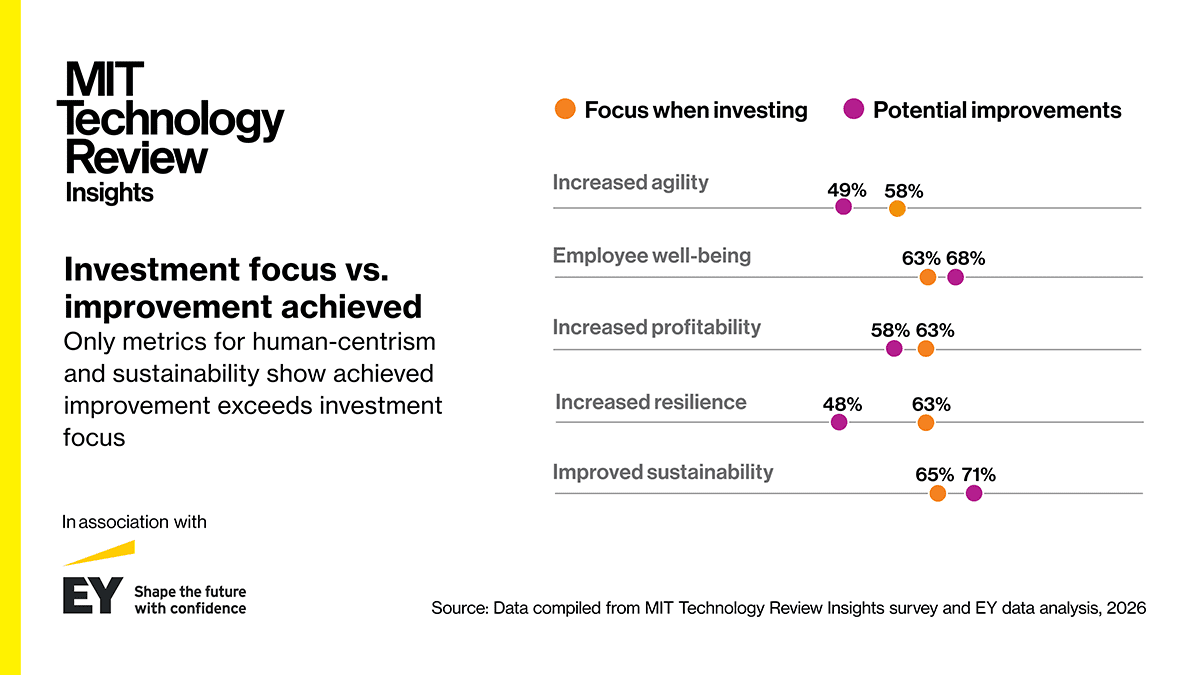

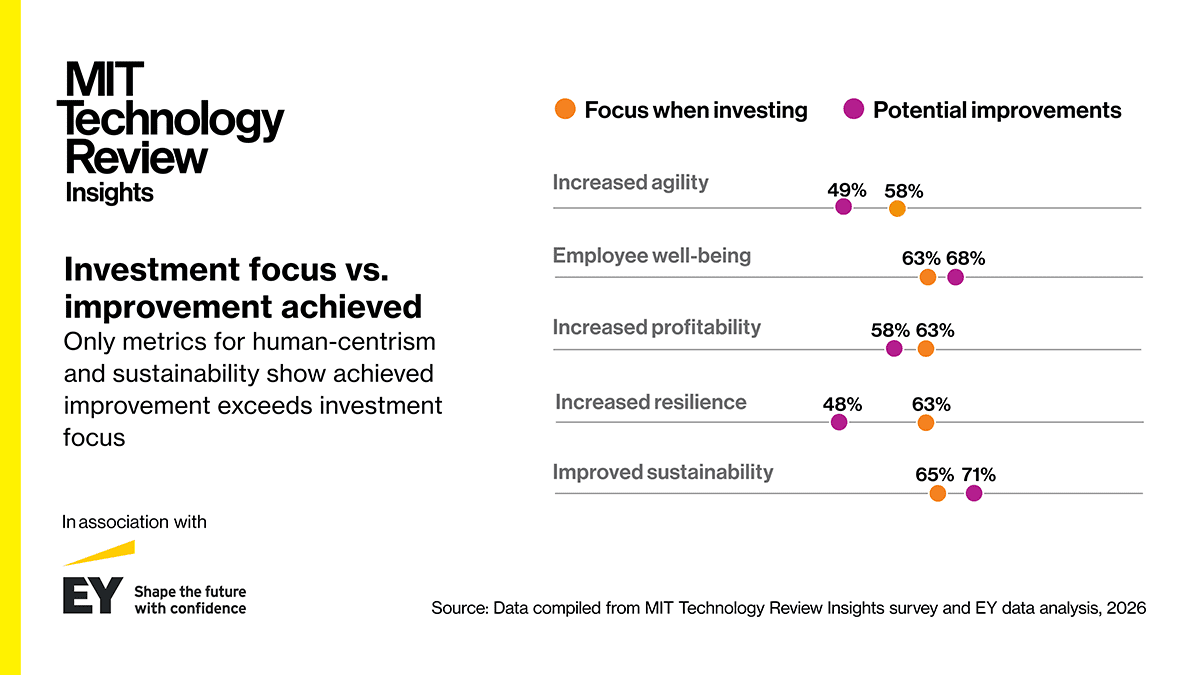

An MIT Technology Review Insights survey of 250 industry leaders from around the world reveals most industrial investments still target efficiency. And while the data shows human-centric and sustainable use cases deliver higher value, they are underfunded. The research shows most organizations are not realizing the full value potential of Industry 5.0 due to a combination of:

• Culture, skills, and collaboration barriers.

• Tactical and misaligned technology investments.

• Use-case prioritization focused on efficiency over growth, sustainability, and well-being.

The barrier to achieving Industry 5.0 transformation is not only about fixing the technology, according to research from EY and Saïd Business School at the University of Oxford. It is also about bolstering human-centric elements like strategy, culture, and leadership. Companies are investing heavily in digital transformation, but not always in ways that unlock the full human potential of Industry 5.0.

“We’re not just doing digital work for work’s sake, what I call ‘chasing the digital fairies,’” says Chris Ware, general manager, iron ore digital, Rio Tinto. “We have to be very clear on what pieces of work we go after and why. Every domain has a unique roadmap about how to deliver the best value.”

From integration chaos to digital clarity: Nutrien Ag Solutions’ post-acquisition reset

Thank you for joining us on the “Enterprise AI hub.”

In this episode of the Infosys Knowledge Institute Podcast, Dylan Cosper speaks with Sriram Kalyan, head of applications and data at Nutrien Ag Solutions, Australia, about turning a high-risk post-acquisition IT landscape into a scalable digital foundation. Sriram shares how the merger of two major Australian agricultural companies created duplicated systems, fragile integrations, and operational risk, compounded by the sudden loss of key platform experts and partners. He explains how leadership alignment, disciplined platform consolidation, and a clear focus on business outcomes transformed integration from an invisible liability into a strategic enabler, positioning Nutrien Ag Solutions for future growth, cloud transformation, and enterprise scale.
