In the fast-paced world of PPC advertising, marketers are constantly seeking ways to streamline their workflows and improve performance.

Managing PPC campaigns efficiently means balancing multiple tasks:

- Analyzing data.

- Optimizing bid strategies.

- Testing creatives.

- Reporting performance.

- And so much more.

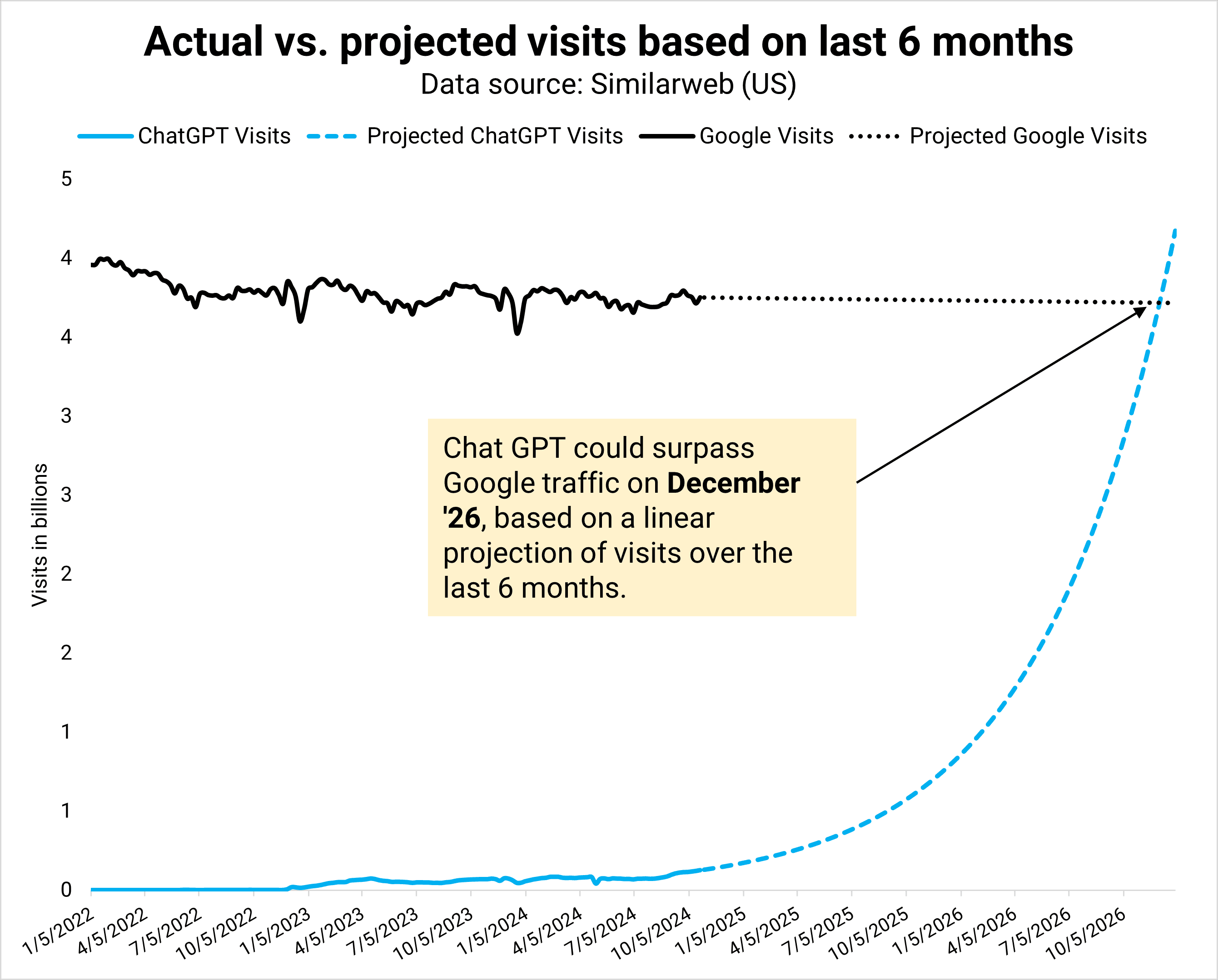

While AI and machine learning have been around in PPC for years, a new wave of AI tools for streamlining productivity and workflows has made its way into the PPC scene.

Whether it’s automating repetitive tasks, enhancing audience targeting, or analyzing vast datasets, AI tools are reshaping how PPC professionals work.

Who doesn’t want to save time on repetitive busywork?

In this article, we’ll explore several unconventional ways AI tools can help PPC marketers save time, increase efficiency, and make smarter decisions.

Using AI To Automate Data Interpretation And Trend Insights

PPC campaigns can generate enormous amounts of data that need to be consistently analyzed and interpreted.

AI tools outside of the standard Google and Microsoft Ads platforms can streamline this process with tasks like:

- Quickly summarizing key trends.

- Looking for patterns in performance data.

- Identifying data anomalies for further analysis.

These insights can enable marketers to move from data to action faster.

Using AI Tools For Trend Identification And Insights

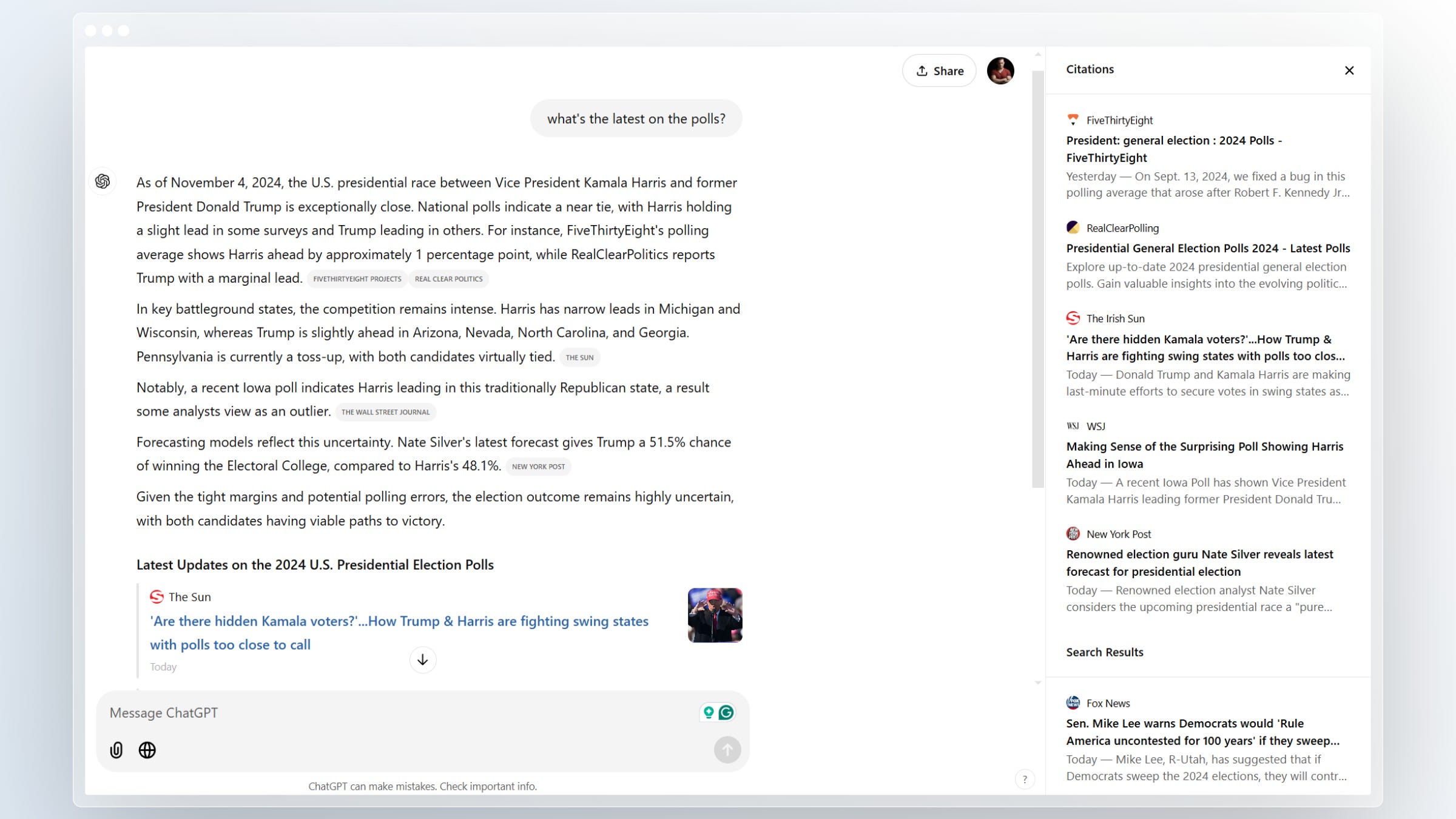

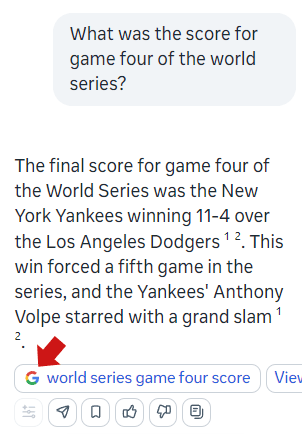

If you’d rather not manually sift through reports to identify changes in performance metrics, you can feed campaign data into ChatGPT (or similar AI tools) to receive summaries that highlight performance trends.

For example, they can help identify seasonal changes in performance or pinpoint potential issues, such as a sudden dip in conversion rate.

Say you run 20 different campaigns in Google Ads and start to see a significant drop in conversion rates from the platform. It can be daunting to immediately pinpoint the cause of the issue.

By processing raw performance data from your campaigns, these AI tools can quickly show where the problem may lie and suggest possible reasons why performance has shifted, like:

- Ad fatigue.

- Increased competition.

- A shift in consumer behavior.

Using AI tools in this capacity helps marketers cut down on analysis time while helping to identify core issues faster, allowing for quicker optimization.

This automation saves hours of manual work, enabling you to focus on more strategic decision-making instead of spending time analyzing large datasets.
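Before handing data to an AI tool, it can help to narrow down which campaigns deserve attention. The sketch below is one simple way to do that, not a feature of any ad platform: it compares conversion rates across two periods and flags large relative drops. The `PeriodStats` class, the `flag_conversion_drops` function, the 20% threshold, and the sample numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    """Clicks and conversions for one campaign in one reporting period."""
    clicks: int
    conversions: int

    @property
    def conversion_rate(self) -> float:
        # Guard against division by zero for campaigns with no clicks.
        return self.conversions / self.clicks if self.clicks else 0.0


def flag_conversion_drops(previous, current, threshold=0.20):
    """Return (campaign, relative_change) pairs for campaigns whose
    conversion rate fell by more than `threshold`, worst drop first."""
    flagged = []
    for name, prev in previous.items():
        cur = current.get(name)
        if cur is None or prev.conversion_rate == 0:
            continue  # Nothing to compare against.
        change = (cur.conversion_rate - prev.conversion_rate) / prev.conversion_rate
        if change < -threshold:
            flagged.append((name, round(change, 3)))
    return sorted(flagged, key=lambda item: item[1])


# Hypothetical example data, not real campaign results.
last_week = {
    "Brand": PeriodStats(clicks=1000, conversions=50),
    "Generic": PeriodStats(clicks=2000, conversions=60),
    "Remarketing": PeriodStats(clicks=500, conversions=40),
}
this_week = {
    "Brand": PeriodStats(clicks=1100, conversions=54),
    "Generic": PeriodStats(clicks=2100, conversions=30),
    "Remarketing": PeriodStats(clicks=480, conversions=25),
}

for campaign, change in flag_conversion_drops(last_week, this_week):
    print(f"{campaign}: conversion rate changed by {change:.1%}")
```

The flagged campaigns, along with their raw data, make a much more focused input for an AI tool than a full account export.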

Enhancing Competitor Analysis And Strategy Development

Keeping up with competitors is crucial in the PPC landscape, but the task at hand can be time-consuming and complex.

AI tools simplify this process by providing insights into competitors’ strategies, allowing you to stay one step ahead.

There are plenty of tools to help drive competitor insights, whether in the Google Ads platform, third-party tools, or AI tools.

If you’re looking to take the analysis a step further, you can input reports from other competitive analysis tools into ChatGPT (or a similar tool) to receive a quick summary that highlights a competitor’s recent actions.

For example, this could include information like:

- Shifts in bidding strategies.

- New ad copy.

- Keywords being targeted.

Based on this data, the AI tools can suggest ways to adjust your own campaigns or suggest counter-strategies to stay competitive.

By automating competitor analysis tasks, you can gain valuable insights faster, which allows for quicker, more informed decision-making and strategic actions.

Simplifying Multi-Account And Cross-Platform Reporting

Managing campaigns across multiple platforms – whether it’s Google Ads, Microsoft Ads, Meta, or others – means compiling huge data sets from different sources.

Trying to put together a compelling, holistic story about your marketing campaigns can take up a lot of time as you navigate from platform to platform.

This is where AI tools can help by aggregating reports and creating cohesive summaries.

Streamlining Cross-Platform Reporting

Multi-channel reporting is often a daunting task, especially when managing accounts across Google, Microsoft, and social platforms.

By inputting performance data from these platforms into ChatGPT, marketers can receive a single, unified report that summarizes key performance indicators (KPIs) across channels.

For example, say you manage several campaigns across Google Ads, Microsoft Ads, and Meta Ads.

Instead of switching between dashboards and manually pulling data, you can input the performance metrics from each platform into your AI tool of choice.

The tool can summarize the top-performing platforms, highlight underperforming campaigns, and suggest where to reallocate budgets to maximize ROI.

AI’s ability to consolidate multi-channel data helps reduce reporting time, enabling marketers to spend more time optimizing campaigns and less time on administrative tasks.
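If you want consistent numbers before asking an AI tool for a narrative summary, a small script can do the consolidation first. The following is a minimal sketch under assumed inputs: the row layout, the `summarize_platforms` function, and the figures are hypothetical, and in practice the rows would come from each platform's own export.

```python
from collections import defaultdict

METRICS = ("spend", "clicks", "conversions", "revenue")


def summarize_platforms(rows):
    """Total the metrics per platform and derive CPA and ROAS so that
    every channel is measured the same way. Sorted by ROAS, best first."""
    totals = defaultdict(lambda: dict.fromkeys(METRICS, 0))
    for row in rows:
        for metric in METRICS:
            totals[row["platform"]][metric] += row[metric]

    summary = []
    for platform, t in totals.items():
        summary.append({
            "platform": platform,
            **t,
            # Avoid dividing by zero when a platform has no conversions or spend.
            "cpa": round(t["spend"] / t["conversions"], 2) if t["conversions"] else None,
            "roas": round(t["revenue"] / t["spend"], 2) if t["spend"] else None,
        })
    return sorted(summary, key=lambda s: s["roas"] or 0, reverse=True)


# Hypothetical campaign rows, one per campaign per platform.
rows = [
    {"platform": "Google Ads", "spend": 1200, "clicks": 900, "conversions": 60, "revenue": 4800},
    {"platform": "Google Ads", "spend": 800, "clicks": 700, "conversions": 40, "revenue": 3200},
    {"platform": "Microsoft Ads", "spend": 500, "clicks": 300, "conversions": 20, "revenue": 1500},
    {"platform": "Meta Ads", "spend": 1000, "clicks": 1500, "conversions": 25, "revenue": 2000},
]

for s in summarize_platforms(rows):
    print(f"{s['platform']}: spend {s['spend']}, CPA {s['cpa']}, ROAS {s['roas']}")
```

Calculating CPA and ROAS in code rather than in the prompt keeps the arithmetic verifiable, leaving the AI tool to handle interpretation and recommendations.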

Keyword Research And Expansion With AI

Keyword research is at the core of every PPC strategy, and expanding keyword lists can be labor-intensive.

AI tools can make the process more efficient by identifying relevant keywords, negative keywords, and keyword variations that are often missed in traditional tools.

While tools like the Google Keyword Planner are great at providing keyword recommendations, AI tools can take it a step further.

They can generate items like long-tail keyword variations and help identify opportunities for new targeting strategies.

Additionally, they can analyze an existing keyword list and suggest related keywords that reflect user intent or emerging trends.

For example, say you manage PPC campaigns for an ecommerce retailer. You input a list of current top-performing keywords with your latest KPI performance data into your AI tool of choice.

From there, the tool can generate suggestions for new long-tail keywords that may have lower volume, but higher intent to purchase.

Additionally, you can ask the tool to suggest negative keywords to eliminate irrelevant traffic, which improves both relevance and cost efficiency.

To really kick this into high gear, you can then ask the tool to format these new keywords and negative keywords into a format that allows you to upload them into Google Ads Editor, saving you hours of manual work adding each one individually.
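You can also do the formatting step yourself once the AI tool has returned its keyword suggestions. This is a rough sketch only: the column headers and the "Negative" match type labels are assumptions about the import layout, so compare them with a CSV exported from your own Google Ads Editor version before uploading. The `build_keyword_csv` function and sample keywords are hypothetical.

```python
import csv
import io

# Assumed column headers. Verify against an export from your own
# Google Ads Editor version, since the expected layout may differ.
COLUMNS = ["Campaign", "Ad Group", "Keyword", "Criterion Type"]


def build_keyword_csv(campaign, ad_group, keywords, negatives):
    """Build CSV text with one row per keyword.

    `keywords` and `negatives` are lists of (text, match_type) pairs,
    where match_type is "Broad", "Phrase", or "Exact".
    """
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(COLUMNS)
    for text, match_type in keywords:
        writer.writerow([campaign, ad_group, text, match_type])
    for text, match_type in negatives:
        # Assumed labeling convention for negative keywords.
        writer.writerow([campaign, ad_group, text, f"Negative {match_type}"])
    return buffer.getvalue()


# Hypothetical suggestions returned by an AI tool.
csv_text = build_keyword_csv(
    campaign="Shoes",
    ad_group="Running",
    keywords=[("trail running shoes for women", "Phrase")],
    negatives=[("free", "Broad")],
)
print(csv_text)
```

Using the `csv` module instead of joining strings by hand means keywords containing commas or quotes are escaped correctly.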

Using AI tools beyond the ad platforms can help marketers discover new opportunities faster, ensuring more comprehensive targeting with minimal manual effort.

AI-Assisted Testing And Creative Optimization

There’s no debate that A/B testing is critical to campaign optimization, but interpreting results and making decisions about the next steps is where most people fall flat.

Using AI tools to streamline this process can help you analyze test data and identify optimizations based on performance.

Say you want to test two different versions of a headline in a PPC campaign. You can upload your test performance data into an AI tool for analysis.

Not only can it summarize which headline performed better, but it can also go a step further and suggest why one headline outperformed the other.

By providing insights into which elements contributed to success, it can save you time in the long run and help keep those driving factors top of mind for the next test.
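Before reading too much into the "why," it is worth checking that the difference between headlines is larger than random noise. One common way to do that is a two-proportion z-test on click-through rate, sketched below. This is a standard statistical check and not something the AI tool is confirmed to run; the function name and sample numbers are hypothetical.

```python
import math


def two_proportion_z_test(clicks_a, impressions_a, clicks_b, impressions_b):
    """Two-sided z-test for a difference in click-through rate.

    Returns (z, p_value). A positive z means variant B has the higher CTR.
    """
    rate_a = clicks_a / impressions_a
    rate_b = clicks_b / impressions_b
    # Pooled rate under the assumption that both headlines perform the same.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    std_error = math.sqrt(
        pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b)
    )
    if std_error == 0:
        raise ValueError("Cannot test: no variation in the data.")
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical test results for two headlines.
z, p = two_proportion_z_test(
    clicks_a=200, impressions_a=10_000,
    clicks_b=260, impressions_b=10_000,
)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (commonly below 0.05) suggests the gap is unlikely to be chance alone, which makes any explanation of the winning headline more trustworthy.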

AI For PPC Budget Allocation And Forecasting

Effective budget management is essential for optimizing PPC performance.

The ad platforms are great at automating tasks like changing daily budgets based on scripts, but what about strategic budget allocation decisions?

AI tools can assist with budget allocation across campaigns or platforms by forecasting potential outcomes from past performance data. This streamlines the process of deciding where to invest, and when.

For example, say a retail client has an upcoming holiday sale and wants to know whether to expect a higher return than last year’s sale.

Inputting last year’s campaign performance into AI tools like ChatGPT can help analyze performance, while also taking into consideration current market trends.

The output could suggest how much of the budget to allocate to high-performing keywords or certain product categories.

It can also provide a forecast of expected returns based on historical data, current CPC trends, and consumer behavior trends to help you make informed budget decisions ahead of time.

AI-driven budget forecasting helps ensure that resources are allocated to the right areas, reducing wasted spend and improving overall campaign performance.
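A simple baseline makes it easier to sanity-check whatever an AI tool proposes. The sketch below is a deliberately naive heuristic, not how any AI tool forecasts: it splits a budget by each category's share of last year's revenue and projects returns assuming last year's ROAS repeats. The `allocate_budget` function, category names, and figures are hypothetical.

```python
def allocate_budget(history, total_budget):
    """Split `total_budget` across categories by last year's revenue share
    and project revenue assuming last year's ROAS holds (a naive baseline).
    """
    total_revenue = sum(h["revenue"] for h in history.values())
    if total_revenue <= 0:
        raise ValueError("History must contain some revenue.")

    plan = {}
    for category, h in history.items():
        budget = total_budget * h["revenue"] / total_revenue
        # ROAS = revenue earned per unit of spend last year.
        roas = h["revenue"] / h["spend"] if h["spend"] else 0.0
        plan[category] = {
            "budget": round(budget, 2),
            "projected_revenue": round(budget * roas, 2),
        }
    return plan


# Hypothetical results from last year's holiday sale.
last_year = {
    "Shoes": {"spend": 4000, "revenue": 20000},
    "Apparel": {"spend": 3000, "revenue": 9000},
    "Accessories": {"spend": 1000, "revenue": 1000},
}

for category, row in allocate_budget(last_year, total_budget=12000).items():
    print(f"{category}: budget {row['budget']}, projected revenue {row['projected_revenue']}")
```

This baseline ignores diminishing returns, CPC changes, and shifts in consumer behavior, which are exactly the factors where an AI-assisted forecast should add value over it.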

Automating Market Trend Exploration And Forecasting

Market trends can shift quickly, and staying ahead of these changes is key to successful PPC campaigns.

AI tools can analyze search trends, consumer behavior, and historical campaign data to predict future shifts in demand and help marketers prepare.

For instance, AI tools can identify trends in consumer searches in real time, helping you adjust your campaign strategies proactively.

For example, say you manage Google Ads campaigns for a fitness brand, and you’re noticing a seasonal uptick in searches for [home workout equipment].

By using AI tools to analyze Google Trends data, you can forecast whether that demand is likely to rise or fall in the coming months, and even whether certain geographical areas are driving the high demand.

This allows you to adjust bids based on location, increase overall budgets if necessary to help capture demand, and create relevant ad copy that speaks directly to the emerging trend.

Conclusion

AI is revolutionizing PPC workflows, allowing marketers to work smarter, not harder.

Whether you’re leveraging Google Ads’ AI capabilities, like Gemini’s conversational ad creation, or integrating third-party tools for deeper insights, AI is becoming indispensable in managing and optimizing PPC campaigns.

From automating bid management and audience targeting to optimizing ad creatives and providing actionable insights, AI offers opportunities to boost efficiency without sacrificing effectiveness.

As AI tools continue to evolve, those who embrace these technologies will find themselves better equipped to deliver superior results, whether managing in-house campaigns or serving clients.

By integrating both Google’s AI features and powerful third-party tools, you can unlock new levels of performance, save time on manual tasks, and focus on strategy and innovation.

Featured Image: 3rdtimeluckystudio/Shutterstock