A Breakdown Of Microsoft’s Guide To AEO & GEO via @sejournal, @martinibuster

Microsoft published a sixteen-page explainer guide about optimizing for AI search and chat. While many of the suggestions can be classified as SEO, some of the other tips relate exclusively to AI search surfaces. Here are the most helpful takeaways.

What AEO and GEO Are And Why They Matter

Microsoft explains that AI search surfaces have created an evolution from “ranking for clicks” to “being understood and recommended by AI.” Traditional SEO still provides a foundation for being cited in AI, but AEO and GEO determine whether content gets surfaced inside AI-driven experiences.

Here is how Microsoft distinguishes AEO and GEO. The first thing to notice is that they define AEO as Agentic Engine Optimization. That’s different from Answer Engine Optimization, which is how AEO is commonly understood.

- AEO (Answer/Agentic Engine Optimization) focuses on making content and product information easy for AI assistants and agents to retrieve, interpret, and present as direct answers.

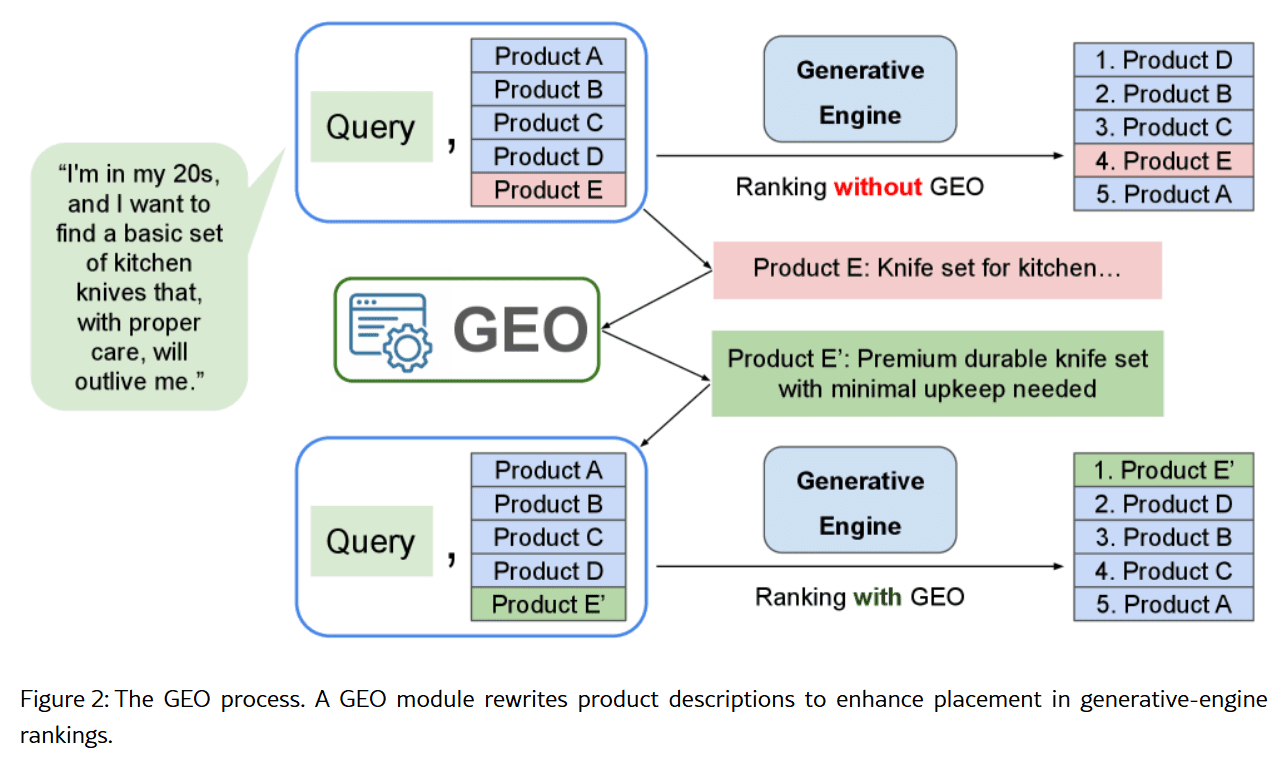

- GEO (Generative Engine Optimization) focuses on making your content discoverable and persuasive inside generative AI systems by increasing clarity, trustworthiness, and authoritativeness.

Microsoft views AEO and GEO as the responsibility of multiple teams within an organization, not just marketing.

The guide says:

“This shift impacts every part of the organization. Marketing teams must rethink brand differentiation, growth teams need to adapt to AI-driven journeys, ecommerce teams must measure success differently, data teams must surface richer signals, and engineering teams must ensure systems are AI-readable and reliable.”

AI shopping is not one channel; it’s really a set of overlapping systems.

Microsoft describes AI shopping as three overlapping consumer touchpoints:

- AI browsers that interpret what’s on a page and surface context while users browse.

- AI assistants that answer questions and guide decisions in conversation.

- AI agents that can take actions, like navigating, selecting options, and completing purchases.

The AI touchpoint matters less than whether the system can access accurate, structured, and trustworthy product information.

SEO Still Plays A Role

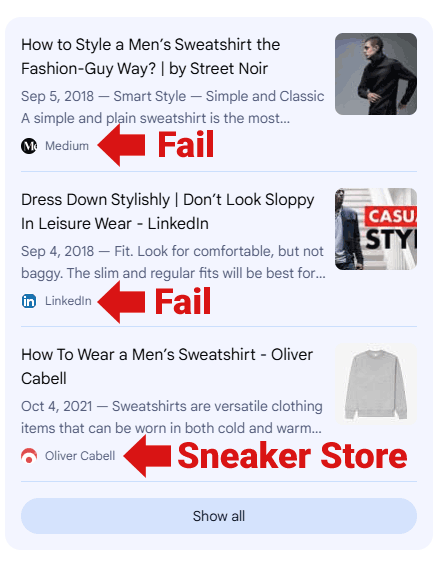

Microsoft’s guide says that with AEO and GEO, competition shifts from discovery to influence. SEO is still important, but it is no longer the whole game.

The new competition is about influencing the AI recommendation layer, not just showing up in rankings.

Microsoft describes it like this:

- SEO helps the product get found.

- AEO helps the AI explain it clearly.

- GEO helps the AI trust it and recommend it.

Microsoft explains:

“Competition is shifting from discovery to influence (SEO to AEO/GEO).

If SEO focused on driving clicks, AEO is focused on driving clarity with enriched, real-time data, while GEO focuses on building credibility and trust so AI systems can confidently recommend your products.

SEO remains foundational, but winning in AI-powered shopping experiences requires helping AI systems understand not just what your product is, but why it should be chosen.”

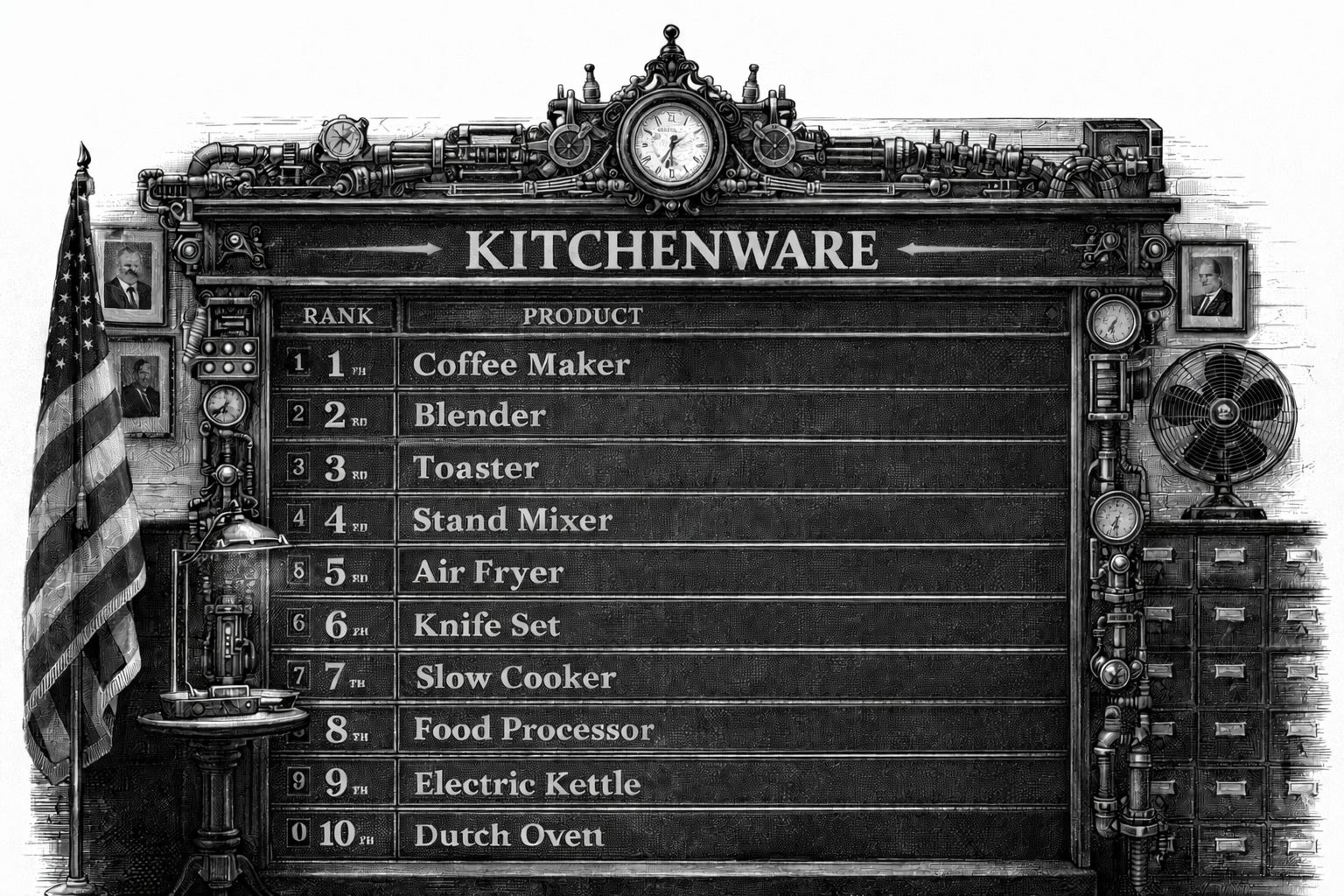

How AI Systems Decide What To Recommend

Microsoft explains how an AI assistant, in this case Copilot, handles a user’s request. When a user asks for a recommendation, the AI assistant goes into a reasoning phase where the query is broken down using a combination of web and product feed data.

The web data provides:

- “General knowledge

- Category understanding

- Your brand positioning”

Feed data provides:

- “Current prices

- Availability

- Key specs”

The AI assistant may, based on the feed data, choose to surface the product with the lowest price that is also in stock. When the user clicks through to the website, the AI assistant scans the page for information that provides context.

Microsoft lists these as examples of context:

- Detailed reviews

- Videos that explain the product

- Current promotions

- Delivery estimates

The agent aggregates this information and gives the user guidance based on the context it found on the page, such as delivery times.
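The feed-based selection step described above (the lowest-priced product that is also in stock) can be sketched in a few lines of Python. This is only an illustration of the idea, not how Copilot actually works; the `FeedItem` type, its fields, and the sample SKUs are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FeedItem:
    """Hypothetical feed row; real product feeds carry many more fields."""
    sku: str
    price: float
    in_stock: bool


def cheapest_in_stock(items: list[FeedItem]) -> Optional[FeedItem]:
    """Return the lowest-priced item that is in stock, or None if none is."""
    available = [item for item in items if item.in_stock]
    if not available:
        return None
    return min(available, key=lambda item: item.price)


# Made-up sample data for illustration only.
feed = [
    FeedItem("A-100", 49.99, True),
    FeedItem("B-200", 39.99, False),  # cheapest overall, but out of stock
    FeedItem("C-300", 44.50, True),
]

choice = cheapest_in_stock(feed)
print(choice.sku if choice else "nothing available")
```

The point of the sketch is that a stale price or availability flag in the feed changes which product gets surfaced, which is why the guide stresses keeping feed data current.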

Microsoft brings it all together like this:

First, there’s crawled data:

The information AI systems learned during training and retrieve from indexed web pages, which shapes your brand’s baseline perception and provides grounding for AI responses, including your product categories, reputation and market position.

Second, there’s product feeds and APIs:

The structured data you actively push to AI platforms, giving you control over how your products are represented in comparisons and recommendations. Feeds provide accuracy, details and consistency.

Third, there’s live website data:

The real-time information AI agents see when they visit your actual site, from rich media and user reviews to dynamic pricing and transaction capabilities. Each data source plays a distinct role in the shopping journey — traditional SEO remains essential because AI systems perform real-time web searches frequently throughout the shopping journey, not just at purchase time, and your site must rank well to be discovered, evaluated, and recommended.

Microsoft Recommends A Three-Part Action Plan

Strategy 1: Technical Foundations

The core idea for this strategy is that your product catalog must be machine-readable, consistent everywhere, and up to date.

Key actions:

- Use structured data (schema) for products, offers, reviews, lists, FAQs, and brand.

- Include dynamic fields like pricing and availability.

- Keep feed data and on-page structured data aligned with what users actually see.

- Avoid mismatches between visible content and what is served to crawlers.
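As a rough illustration of the actions above, the following Python sketch builds schema.org `Product` markup with a nested `Offer` (including the dynamic price and availability fields) and then compares it against a feed row. The schema.org types and properties are real; the function names, the feed row layout, and the sample product are assumptions made for this example and do not come from Microsoft's guide.

```python
import json


def product_jsonld(name, sku, price, currency, in_stock):
    """Build schema.org Product markup with a nested Offer."""
    availability = "InStock" if in_stock else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
    }


def feed_mismatches(feed_row, jsonld):
    """Return the field names where the feed and on-page markup disagree."""
    offer = jsonld["offers"]
    page = {
        "sku": jsonld["sku"],
        "price": offer["price"],
        "currency": offer["priceCurrency"],
        "in_stock": offer["availability"].endswith("/InStock"),
    }
    feed = {
        "sku": feed_row["sku"],
        "price": f"{feed_row['price']:.2f}",
        "currency": feed_row["currency"],
        "in_stock": feed_row["in_stock"],
    }
    return sorted(key for key in page if page[key] != feed[key])


# Hypothetical product and a feed row whose price has gone stale.
markup = product_jsonld("Trail Running Shoe", "TRS-42", 89.0, "USD", True)
stale_feed_row = {"sku": "TRS-42", "price": 79.0, "currency": "USD", "in_stock": True}

print(json.dumps(markup, indent=2))
print(feed_mismatches(stale_feed_row, markup))
```

On a live page, the JSON output would typically be embedded in a `<script type="application/ld+json">` element. A check like `feed_mismatches` could run whenever the feed is regenerated, so that price or stock differences are caught before an AI system sees conflicting data.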

Strategy 2: Optimize Content For Intent And Clarity

This strategy is about optimizing product content so that it answers typical user questions and is easy for AI to reuse.

Key actions:

- Write product descriptions that start with benefits and real use-case value.

- Use headings and phrasing that match how people ask questions.

Add modular content blocks:

- FAQs

- specs

- key features

- comparisons

Add contextual information:

- Support multi-modal interpretation (good alt text, transcripts for video content, structured image metadata).

- Add complementary product context (pairings, bundles, “goes well with”).
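One way to make a modular FAQ block easy for machines to reuse is to publish it as schema.org `FAQPage` markup alongside the visible questions and answers. The sketch below assumes the FAQ content is available as question and answer pairs; the helper name and the sample text are hypothetical, and the guide does not prescribe this exact approach.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Made-up questions phrased the way a shopper might ask them.
faq = faq_jsonld([
    ("Is this shoe waterproof?", "Yes, the upper uses a waterproof membrane."),
    ("Does it run true to size?", "Most buyers report that it fits true to size."),
])

print(json.dumps(faq, indent=2))
```

The markup should mirror the questions and answers that users actually see on the page, in line with the earlier advice to avoid mismatches between visible content and what is served to crawlers.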

Strategy 3: Trust Signals (Authority And Credibility)

The takeaway for this strategy is that AI assistants and agents prioritize content that looks verified and reputable.

Key actions:

- Strengthen review credibility (verified reviews, strong volumes, clear sentiment).

- Reinforce brand authority through real-world signals (press, certifications, partnerships).

- Keep claims grounded and consistent to avoid trust degradation.

- Use structured data to clarify legitimacy and identity.

Microsoft explains it like this:

“AI assistants prioritize content from sources they can trust. Signals such as verified reviews, review volume, and clear sentiment help establish credibility and influence recommendations.

Brand authority is reinforced through consistent identity, real-world validation such as press coverage, certifications, and partnerships, and the use of structured data to clearly define brand entities.

Claims should be factual, consistent, and verifiable, as exaggerated or misleading information can reduce trust and limit visibility in AI-powered experiences.”
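For the point about using structured data to define brand entities, a minimal sketch is schema.org `Organization` markup whose `sameAs` property links the brand to its official profiles. The brand name and URLs below are placeholders, and the helper function is an assumption for illustration rather than something taken from the guide.

```python
import json


def organization_jsonld(name, url, profiles):
    """Build schema.org Organization markup with sameAs profile links."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(profiles),
    }


# Placeholder brand and profile URL; substitute real, verified profiles.
org = organization_jsonld(
    "Example Outfitters",
    "https://www.example.com",
    ["https://www.linkedin.com/company/example-outfitters"],
)

print(json.dumps(org, indent=2))
```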

Takeaways

AI search changes the goal from winning rankings to earning recommendations. SEO still matters, but AEO and GEO determine how well content is interpreted, explained, and chosen inside AI assistants and agents.

AI shopping is not a single channel but an ecosystem of assistants, browsers, and agents that rely on authoritative signals across crawled content, structured feeds, and live site experiences. The brands that win are the ones with consistent, machine-readable data, and clear content that contains useful contextual information that can be easily summarized.

Microsoft published a blog post that is accompanied by a link to the downloadable explainer guide: From Discovery to Influence: A Guide to AEO and GEO.

Featured Image by Shutterstock/Kues