Google updated their spam documentation, adding a new definition of site reputation abuse as the largest single change, followed by additional information about manual action consequences. The remaining updates are a content refresh aimed at making the documentation easier to understand and more concise. Understanding these changes can provide ideas for how to update your own content effectively.

What Changed

There are eight kinds of changes made to the documentation that improve the content. That’s eight ways that older content can be made fresher.

These are the types of changes made:

- More Information About Site Reputation Abuse

- New Details About Manual Action Consequences

- Changed Concept Of Thin Affiliate To Thin Affiliation

- More Appropriate Introductory Sentence

- Consolidation Of Words: Practices & Spam Practices

- Added The Concept Of Spam Abuse

- Improved Conciseness In General

- Improved Topic: Machine-Generated Traffic

More Information About Site Reputation Abuse

The previous documentation stated that site reputation abuse is when a third party publishes content on an authoritative site “with little or no first-party oversight,” but it didn’t explain what “first-party oversight” is. The new version of the spam documentation adds a definition:

“Close oversight or involvement is when the first-party hosting site is directly producing or generating unique content (for example, via staff directly employed by the first-party, or freelancers working for staff of the first-party site). It is not working with third-party services (such as “white-label” or “turnkey”) that focus on redistributing content with the primary purpose of manipulating search rankings.”

New Details About Manual Action Consequences

Google added a new sentence explaining that if a site continues to violate Google’s spam guidelines, Google can escalate its response by removing more sections of the site from the search results. This isn’t a new consequence, but it is new information.

This is the new detail in the context of a site that continues to spam:

“…and taking broader action in Google Search (for example, removing more sections of a site from Search results).”

This is an example of refreshing content by adding additional information that was left out of the original version.

Changed Concept Of Thin Affiliate To Thin Affiliation

Google changed the section about “Thin affiliate pages” so that it is now about “Thin affiliation” and added a definition of what they mean.

The original version about thin affiliate pages started like this:

“Thin affiliate pages are pages with product affiliate links…”

The new version starts like this:

“Thin affiliation is the practice of publishing content with product affiliate links…”

More Appropriate Introductory Sentence

Google’s documentation improved the introductory sentence by making it more appropriate for the context of the topic. It now defines what spam is. The new sentence doesn’t replace the old introductory sentence; the old one simply becomes the second sentence.

Original introductory sentence:

“Our spam policies help protect users and improve the quality of search results.”

New introductory sentence:

“In the context of Google Search, spam is web content that’s designed to deceive users or manipulate our Search systems in order to rank highly. Our spam policies help protect users and improve the quality of search results.”

The new version starts with a definition of spam, which makes sense for documentation about spam.

Consolidation Of Words: Practices & Spam Practices

The following examples show how Google consolidated different ways of referring to the same thing (spam) into a single, consistent phrase: “spam practices.”

This change combines phrases like “content and behaviors” and “forms of spam” into the simpler phrases “practices” and “spam practices.” I’m not sure why Google made this change, but using consistent terminology makes content easier to understand.

Here are some examples of the phrases “practices” and “spam practices” being emphasized:

1. The second paragraph is changed to make it more concise.

This:

“We detect policy-violating content and behaviors both through automated systems….”

Is now this:

“We detect policy-violating practices…”

The sentence becomes easier to understand, and that is important.

2. Around the fourth paragraph:

This:

“Our policies cover common forms of spam, but Google may act against any type of spam we detect.”

Becomes this:

“Our policies cover common spam practices, but Google may act against any type of spam practices we detect.”

The new sentence above is kind of redundant, but it shows a conscious effort to consolidate similar activities into a single category of activity.

Concept Of Spam Abuse

The next change is to increase the use of the word “abuse” in the new version of the spam policies. Abuse is a word that describes a harmful activity. In the case of SEO, Google may be using that word because it describes an activity that intentionally deceives users and search engines.

The old version used the word 11 times and the new version uses that word 17 times. It’s a relatively minor change but it significantly heightens the concept of spam being a form of abuse.

Here are two examples of how Google added the concept of abuse:

- The word “doorways” is now “doorway abuse”

- The phrase “Hidden text and links” is now “Hidden text and links abuse”

There are other places in the documentation where Google adds the word “abuse.” What’s interesting is that introducing a single concept (abuse) ties together a series of seemingly different things. This helps reader comprehension because “hidden text” and “doorways” are now connected to each other through the concept of “abuse” in the sense of spam.
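If you want to run the same kind of term comparison on two versions of your own content, a short script can do the counting. This is a minimal sketch, assuming both versions are available as plain text. The function name `count_term` and the two sample strings are my own illustrations built from the headings quoted above. They are not the full documentation, so the numbers it prints will not match the 11 and 17 mentioned earlier.

```python
import re


def count_term(text: str, term: str) -> int:
    """Count whole-word, case-insensitive occurrences of `term` in `text`."""
    pattern = r"\b" + re.escape(term) + r"\b"
    return len(re.findall(pattern, text, flags=re.IGNORECASE))


# Illustrative stand-ins based on the headings quoted above,
# NOT the full text of either documentation version.
old_sample = "Doorways. Hidden text and links."
new_sample = "Doorway abuse. Hidden text and links abuse."

old_count = count_term(old_sample, "abuse")
new_count = count_term(new_sample, "abuse")
print(f"old: {old_count}, new: {new_count}")
```

Matching on whole words keeps variants like “abused” out of the count, which may or may not be what you want for your own audit.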

Improved Conciseness

Another change which should always be considered in a content refresh is to make phrases more concise.

Google changed the following text:

“Google uses links as a factor in determining the relevancy of web pages. Any links that are intended to manipulate rankings in Google Search results may be considered link spam. This includes any behavior that manipulates links to your site or outgoing links from your site.”

It’s now significantly shorter:

“Link spam is the practice of creating links to or from a site primarily for the purpose of manipulating search rankings.”

Big difference, right? I really like that change because someone probably looked at those original three sentences and asked what core message was trying to get through that thicket.

If you read the original three sentences, it’s kind of a lot of information that doesn’t really stick in the mind. Considering whether a series of sentences communicates effectively is a good way to approach a content rewrite. Just read it and ask, “What does this mean?” If the answer is shorter, consider writing that in place of the sentences.
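To put a rough number on a rewrite like this, you can compare word counts before and after. The following is a minimal sketch using the two link spam passages quoted above. The helper `word_count` is a hypothetical name of my own, and splitting on whitespace is only a crude measure of length, not of clarity.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words in `text`."""
    return len(text.split())


# The original and revised link spam passages, as quoted above.
old_text = (
    "Google uses links as a factor in determining the relevancy of web pages. "
    "Any links that are intended to manipulate rankings in Google Search results "
    "may be considered link spam. This includes any behavior that manipulates "
    "links to your site or outgoing links from your site."
)
new_text = (
    "Link spam is the practice of creating links to or from a site "
    "primarily for the purpose of manipulating search rankings."
)

old_words = word_count(old_text)
new_words = word_count(new_text)
reduction = 1 - new_words / old_words  # fraction of words removed

print(f"old: {old_words} words, new: {new_words} words")
print(f"reduction: {reduction:.0%}")
```

A shorter passage is not automatically a better one, so treat the count as a prompt to reread the text rather than as a target.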

Improved Topic Communication: Machine-Generated Traffic

This next change dramatically improves the machine-generated traffic section because it removes an opening that was about Google and replaces it with a definition of machine-generated traffic.

These sentences:

“Machine-generated traffic consumes resources and interferes with our ability to best serve users. Examples of automated traffic include:”

Are now this:

“Machine-generated traffic (also called automated traffic) refers to the practice of sending automated queries to Google. This includes scraping…”

The part about consuming resources is still there but it’s now moved toward the end of that section.

There are other instances in the documentation where two sentences were shortened into one that gets to the point more directly and concisely.

For example, the section about Misleading Functionality replaces two sentences with one sentence that defines what misleading functionality is:

“Misleading functionality refers to the practice of…”

The section about Scraped Content replaced three long sentences with a sentence that defines what scraped content is:

“Scraping refers to the practice of taking content from other sites…”

Content Refresh Versus A Rewrite

The updated spam documentation is not a rewrite but an incremental refresh with some new information. It suggests ways to update your own content by adding new details and making existing information clearer and more concise.

Read the updated documentation:

Spam policies for Google web search

Featured Image by Shutterstock/Shutterstock AI Generator

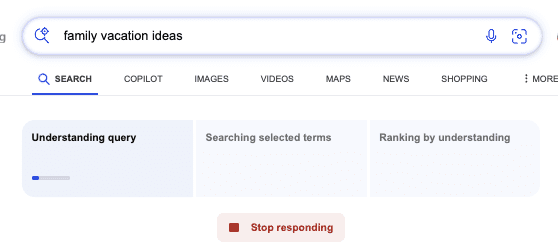

Screenshot from: blogs.bing.com, October 2024.
