WordPress 7.0 Launches With Native AI Integration via @sejournal, @martinibuster

After weeks of delay, WordPress 7.0, named Armstrong, has finally been released. The centerpiece feature was supposed to be real-time collaboration (RTC), but what is shipping is bigger: native AI integration, a watershed moment in the content management system's history. Native AI integration is what will carry WordPress into the future and put more distance between it and its competitors.

Four Building Blocks Form The Foundation Of WordPress AI

WordPress 7.0 introduces four foundational building blocks that together form its native AI architecture. The larger story is that WordPress is building the infrastructure for a future where AI becomes part of how the CMS itself operates.

The Four WordPress 7.0 AI Building Blocks

- WP AI Client

- Client-Side Abilities API

- AI Connectors Screen

- Connectors API

These four features are the pillars of a radical transformation in how information is published and how websites are designed. What makes this especially powerful is the massive worldwide community of developers who can now invent new ways to use themes, build websites, analyze data, and make it easier to run a business online. No other CMS has that people-power behind it.

WordPress explains it like this:

“WordPress 7.0 unlocks AI capabilities right in your website. The new WP AI client adds a central interface that lets plugins communicate with generative AI models while remaining provider-agnostic. WordPress Core handles request routing for you. Managed in the Settings > Connectors screen with API keys funneled through the Connectors API, you can start with some preset models and add your favorites.

As a bonus, the Abilities API is integrated directly into the WP AI Client, delivering new and expansive AI abilities that can be built into workflows that run abilities fluidly, one after another.”

WP AI Client Enables AI Provider Integration

WordPress Core enables users to bring their own AI providers and easily integrate them into the CMS. The WP AI Client makes that possible by giving plugins a central, provider-agnostic interface for sending prompts to AI models and receiving responses through WordPress.

Plugin developers do not have to build separate AI integrations for every provider. They can integrate with the WP AI Client interface instead.

A plugin can describe what it needs, WordPress can route the request to a suitable configured model, and site owners can control which AI providers are available inside WordPress.

The release also introduces model preference ordering, feature detection, advanced configuration controls, and a Prompt Builder class for interacting with models. WordPress says developers can prioritize models based on capabilities, cost, and processing efficiency.
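The preference-ordered routing described above can be illustrated with a small sketch. It is written in Python for readability and is not WordPress's PHP API: the names `ModelInfo` and `choose_model`, the capability strings, and the provider and model names are all hypothetical. It shows one way a provider-agnostic client might walk a preference-ordered list of models, skip any whose provider is not configured, and return the first model that supports every capability the plugin asks for.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ModelInfo:
    """Hypothetical description of one model offered by a provider."""
    provider: str
    name: str
    capabilities: frozenset[str]


def choose_model(
    preferences: list[ModelInfo],
    configured_providers: set[str],
    required: set[str],
) -> ModelInfo | None:
    """Return the first preferred model that is usable and supports every required capability.

    `preferences` is assumed to be sorted already by the site's priorities
    (for example capability, cost, or efficiency). Returns None if nothing fits.
    """
    for model in preferences:
        # Skip models whose provider the site owner has not connected.
        if model.provider not in configured_providers:
            continue
        # Feature detection: the model must offer everything the plugin asked for.
        if required <= model.capabilities:
            return model
    return None


# Example: two hypothetical models, listed in the site's order of preference.
prefs = [
    ModelInfo("provider-a", "model-large", frozenset({"text", "image"})),
    ModelInfo("provider-b", "model-small", frozenset({"text"})),
]
picked = choose_model(prefs, configured_providers={"provider-b"}, required={"text"})
print(picked.name if picked else "no suitable model")  # model-small
```

Keeping the ordering outside the routing function means a site could reorder models by cost or capability without any plugin changing the request it sends.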

Client-Side Abilities API Extends AI Into WordPress Actions

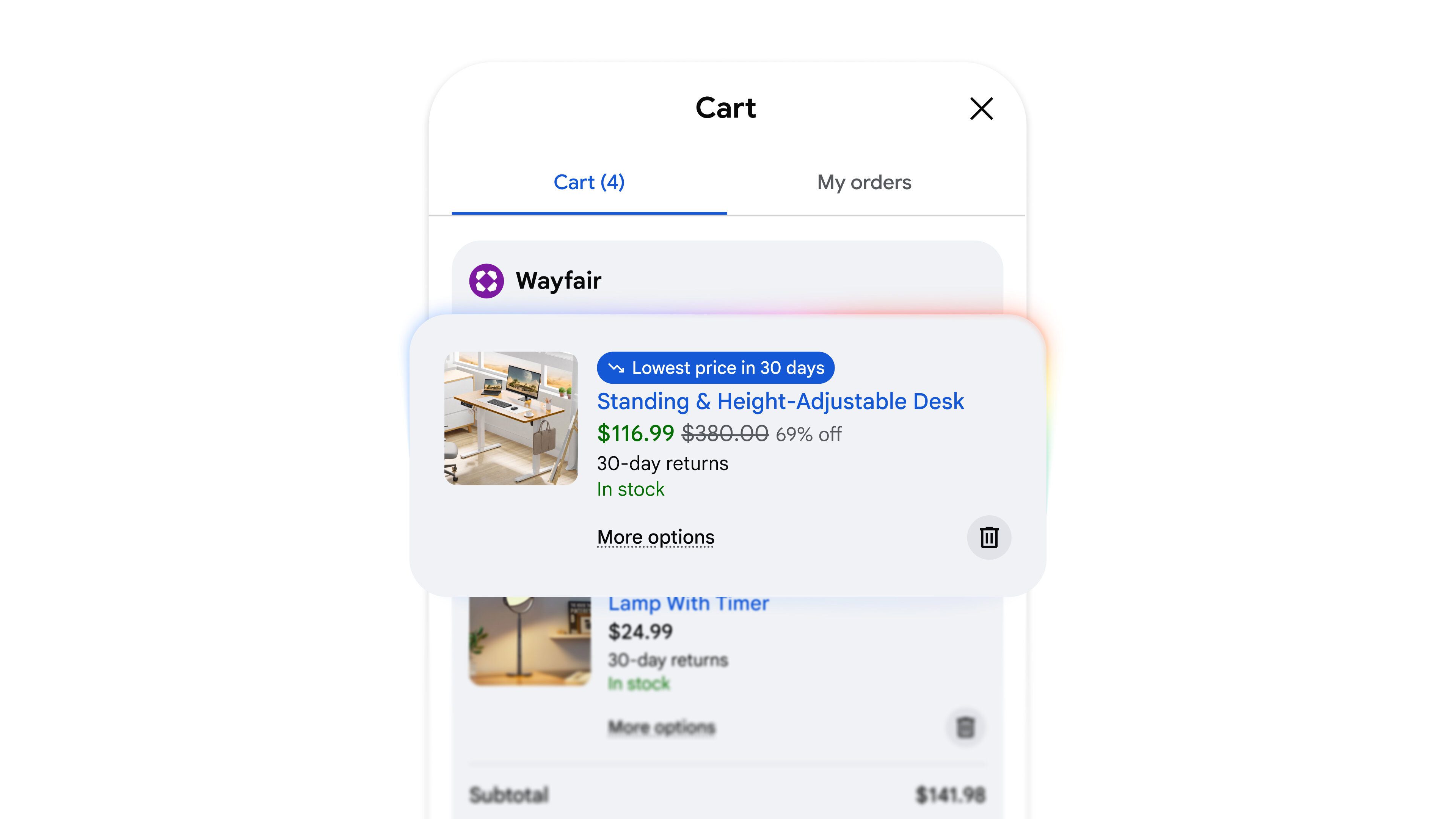

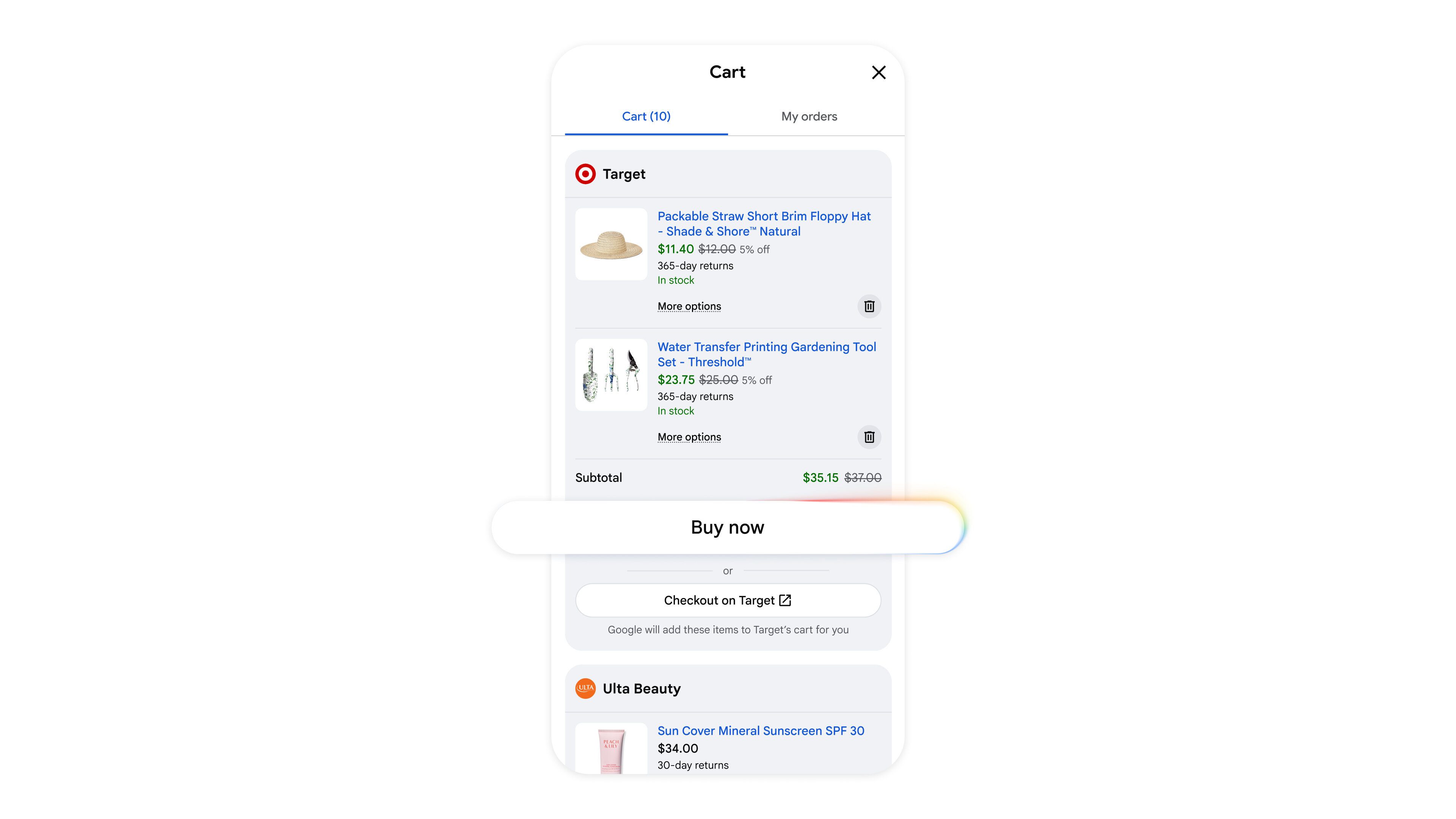

WordPress 7.0 gives AI and automation tools a way to interact with WordPress from inside the browser. That means AI can be connected to actions such as navigating the admin, inserting blocks, running commands, and participating in workflows instead of simply generating text outside the CMS.

This is where the AI story becomes bigger than content creation. WordPress is creating a layer where AI agents, plugins, and automation tools can act on the same set of WordPress capabilities through a shared interface.

The practical effect is that WordPress can become an environment that AI tools operate within, not just a place where AI-generated content is pasted.
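The idea of a shared layer that plugins, automation tools, and AI agents all act through can be sketched as a registry of named abilities plus a helper that runs several of them one after another, feeding each result into the next. This Python sketch assumes nothing about the actual client-side Abilities API; `AbilityRegistry`, `run_workflow`, and the `demo/...` ability names are hypothetical.

```python
from __future__ import annotations

from typing import Any, Callable

# An ability takes a payload and returns an updated payload.
Ability = Callable[[dict[str, Any]], dict[str, Any]]


class AbilityRegistry:
    """Hypothetical shared registry: callers invoke abilities by name, not by implementation."""

    def __init__(self) -> None:
        self._abilities: dict[str, Ability] = {}

    def register(self, name: str, fn: Ability) -> None:
        if name in self._abilities:
            raise ValueError(f"ability already registered: {name}")
        self._abilities[name] = fn

    def run(self, name: str, payload: dict[str, Any]) -> dict[str, Any]:
        if name not in self._abilities:
            raise KeyError(f"unknown ability: {name}")
        return self._abilities[name](payload)


def run_workflow(registry: AbilityRegistry, steps: list[str], payload: dict[str, Any]) -> dict[str, Any]:
    """Run abilities in order, passing each step's output to the next step."""
    for step in steps:
        payload = registry.run(step, payload)
    return payload


# Example abilities (hypothetical): draft a title, then add it as a heading block.
registry = AbilityRegistry()
registry.register("demo/draft-title", lambda p: {**p, "title": f"Notes on {p['topic']}"})
registry.register(
    "demo/insert-heading-block",
    lambda p: {**p, "blocks": p.get("blocks", []) + [("heading", p["title"])]},
)

result = run_workflow(registry, ["demo/draft-title", "demo/insert-heading-block"], {"topic": "connectors"})
print(result["blocks"])  # [('heading', 'Notes on connectors')]
```

Because callers only know ability names, the same registry could serve a plugin button, a command, or an agent without each one reimplementing the underlying action.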

AI Connectors Centralize External AI Services

The new Connectors screen gives site owners one place to manage connections to outside AI services. Instead of scattering API keys and provider settings across individual plugins, WordPress is creating a central location for managing those services.

The Connectors API is the technical layer behind that screen. It handles the provider registry, authentication details, metadata, and future connection types, which gives WordPress a standardized way to recognize and manage external AI services.

That matters because AI will not be limited to one provider or one kind of integration. WordPress is preparing for a future where multiple AI services can be connected, managed, and used across the CMS.

WordPress explains how the Connectors API works behind the scenes:

“The Connectors API is the backbone of the Connectors screen; an extensibility API that facilitates and supports the inclusion of agents.

The API supports two authentication methods (api_key and none) based on provider metadata, and is designed to facilitate additional connector types in future releases. The Connectors API uses the WP AI Client’s default registry to automatically discover providers, and corresponding metadata to generate connectors, while connectors authenticated via other methods are stored in the PHP registry.

You can use the wp_connectors_init action to override connectors metadata, which will be the key for registering new connector types in future releases. The API includes three public functions for querying the registry, and the frontend UI can be customized using client-side JavaScript registration.”
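The behavior in the quoted notes (connectors generated from discovered provider metadata, two authentication methods, and an init action that can override metadata) can be modeled in a few lines. The sketch is Python, not WordPress's PHP; `Connector`, `build_connectors`, and the `on_init` callbacks are hypothetical stand-ins, with the callbacks playing a role only loosely analogous to the `wp_connectors_init` action. It accepts just `api_key` and `none`, the two authentication methods the notes list, and the provider names are made up.

```python
from __future__ import annotations

from dataclasses import dataclass, replace
from typing import Callable

# The two authentication methods named in the WordPress 7.0 notes.
AUTH_METHODS = {"api_key", "none"}


@dataclass(frozen=True)
class Connector:
    """Hypothetical connector record generated from provider metadata."""
    provider_id: str
    label: str
    auth: str


def build_connectors(
    provider_metadata: dict[str, dict[str, str]],
    on_init: list[Callable[[dict[str, Connector]], None]],
) -> dict[str, Connector]:
    """Generate connectors from discovered providers, then let init hooks override them."""
    connectors: dict[str, Connector] = {}
    for provider_id, meta in provider_metadata.items():
        auth = meta.get("auth", "none")
        if auth not in AUTH_METHODS:
            raise ValueError(f"{provider_id}: unsupported auth method {auth!r}")
        connectors[provider_id] = Connector(provider_id, meta.get("label", provider_id), auth)
    # Hooks run after discovery, so they can rewrite metadata for any connector.
    for hook in on_init:
        hook(connectors)
    return connectors


def relabel_local(connectors: dict[str, Connector]) -> None:
    """Example override hook (hypothetical): change one connector's label."""
    if "local-llm" in connectors:
        connectors["local-llm"] = replace(connectors["local-llm"], label="On-site model")


discovered = {
    "example-cloud": {"label": "Example Cloud AI", "auth": "api_key"},
    "local-llm": {"label": "Local LLM", "auth": "none"},
}
connectors_by_id = build_connectors(discovered, on_init=[relabel_local])
print(connectors_by_id["local-llm"].label)  # On-site model
```

Running the hooks after discovery is what lets them override generated metadata in this sketch; how and when WordPress fires its own action is not shown here.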

WordPress Is Building Beyond AI Features

The release is not just about adding AI to WordPress. It is about giving WordPress the internal structure needed for AI-driven work such as publishing, SEO automation, site design, site building, and agent-based workflows.

Each of the four building blocks in WordPress 7.0 plays a distinct role:

- The WP AI Client connects WordPress to models.

- The Abilities API gives AI a way to take action.

- The Connectors screen gives users control over providers.

- The Connectors API gives developers a standard foundation for future integrations.

Real-time collaboration was expected to define WordPress 7.0. Native AI integration may prove to be the feature that defines what WordPress becomes next.