Google’s latest Search Off the Record podcast discussed whether ‘SEO is on a dying path’ because of AI Search. Their assessment sought to explain that SEO remains unchanged by the introduction of AI Search, revealing a divide between their ‘nothing has changed’ outlook for SEO and the actual experiences of digital marketers and publishers.

Google Speculates If SEO Is On A Dying Path

Partway through the podcast, John Mueller introduced the topic of AI’s impact on SEO.

John asked:

“So do you think AI will replace SEO? Is SEO on a dying path?”

Gary Illyes expressed skepticism, asserting that SEOs have been predicting the decline of SEO for decades.

Gary expressed optimism that SEO is not dead, observing:

“I mean, SEO has been dying since 2001, so I’m not scared for it. Like, I’m not. Yeah. No. I’m pretty sure that, in 2025, the first article that comes out is going to be about how SEO is dying again.”

He’s right. Google began putting the screws to the popular SEO tactics of the day around 2004, gaining momentum in 2005 with things like statistical analysis.

It was a shock to SEOs when reciprocal links stopped working. Some refused to believe Google could suppress those tactics, speculating instead about a ‘Sandbox’ that arbitrarily kept sites from ranking. The point is, speculation has always been the fallback for SEOs who can’t explain what’s happening, fueling the decades-long fear that SEO is dying.

What the Googlers avoided discussing are the thousands of large and small publishers that have been wiped out over the last year.

More on that below.

RAG Is How SEOs Can Approach SEO For AI Search

Google’s Lizzi Sassman then asked how SEO is relevant in 2025, and after some off-topic banter John Mueller raised the topic of RAG, Retrieval Augmented Generation. RAG is a technique that helps keep answers generated by a large language model (LLM) up to date and grounded in facts. The system first retrieves information from an external source, such as a search index and/or a knowledge graph, and the LLM then generates the answer from what was retrieved, hence the name. The chatbot interface then provides the answer in natural language.
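The retrieve-then-generate flow described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how Google or any AI search engine actually implements it: the five-document `DOCUMENTS` index, the word-overlap scoring, and the `generate` stub standing in for an LLM call are all hypothetical.

```python
import re

# Hypothetical mini index. A real system would query a search index
# and/or a knowledge graph instead of a hard-coded dictionary.
DOCUMENTS = {
    "doc1": "Crawling is how a search engine discovers pages on the web.",
    "doc2": "Indexing stores the content of crawled pages so it can be searched.",
    "doc3": "Ranking orders indexed pages by relevance to a query.",
    "doc4": "A sitemap lists the URLs a site owner wants crawled.",
    "doc5": "Structured data helps a search engine understand page content.",
}

# Common words ignored when matching (an illustrative list, not exhaustive).
STOPWORDS = {"a", "the", "is", "how", "does", "it", "so", "can", "be",
             "of", "to", "by", "on", "and", "what"}


def tokenize(text):
    """Lowercase the text and return its set of non-stopword terms."""
    return {t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS}


def retrieve(query, documents, top_k=2):
    """Retrieval step: rank documents by how many query terms they share."""
    query_terms = tokenize(query)
    scored = []
    for doc_id, text in documents.items():
        score = len(query_terms & tokenize(text))
        if score > 0:
            scored.append((score, doc_id))
    # Highest score first; ties broken by document id for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:top_k]]


def build_prompt(query, doc_ids, documents):
    """Augmentation step: place the retrieved passages in front of the question."""
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in doc_ids)
    return f"Answer using only these sources:\n{context}\n\nQuestion: {query}"


def generate(prompt):
    """Generation step: placeholder for a call to a large language model."""
    return "(an LLM would write an answer grounded in the sources above)"


if __name__ == "__main__":
    question = "How does indexing work?"
    hits = retrieve(question, DOCUMENTS)
    prompt = build_prompt(question, hits, DOCUMENTS)
    print(prompt)
    print(generate(prompt))
```

The point for SEOs is in the `retrieve` step: a page that was never crawled and indexed is not in `DOCUMENTS`, so it can never reach the prompt that the model answers from.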

When Gary Illyes confessed he didn’t know how to explain it, Googler Martin Splitt stepped in with an analogy: documents (representing the search index or knowledge base), search and retrieval of information from those documents, and an output of the information “out of the bag.”

Martin offered this simplified analogy of RAG:

“Probably nowadays it’s much better and you can just show that, like here, you upload these five documents, and then based on those five documents, you get something out of the bag.”

Lizzi Sassman commented:

“Ah, okay. So this question is about how the thing knows its information and where it goes and gets the information.”

John Mueller picked up this thread of the discussion and started weaving a bigger concept of how RAG is what ties SEO practices to AI search engines, saying that there is still a crawling, indexing and ranking part to an AI search engine. He’s right: even an AI search engine like Perplexity AI uses an updated version of Google’s old PageRank algorithm.

Mueller explained:

“I found it useful when talking about things like AI in search results or combined with search results where SEOs, I feel initially, when they think about this topic, think, ‘Oh, this AI is this big magic box and nobody knows what is happening in there.’

And, when you talk about kind of the retrieval augmented part, that’s basically what SEOs work on, like making content that’s crawlable and indexable for Search and that kind of flows into all of these AI overviews.

So I kind of found that angle as being something to show, especially to SEOs who are kind of afraid of AI and all of these things, that actually, these AI-powered search results are often a mix of the existing things that you’re already doing. And it’s not that it suddenly replaces crawling and indexing.”

Mueller is correct that the traditional process of crawling, indexing, and ranking still exists, keeping SEO relevant and necessary for ensuring websites are discoverable and optimized for search engines.

However, the Googlers avoided discussing the obvious situation today, which is the thousands of large and small publishers in the greater web ecosystem that have been wiped out by Google’s AI algorithms on the backend.

The Real Impacts Of AI On Search

What’s changed (and wasn’t addressed) is that the important part of AI in Search isn’t the one on the front end with AI Overviews. It’s the part on the back end that makes determinations based on opaque signals of authority and topicality, along with the somewhat ironic situation that an artificial intelligence is deciding whether content is made for search engines or humans.

Organic SERPs Are Explicitly Obsolete

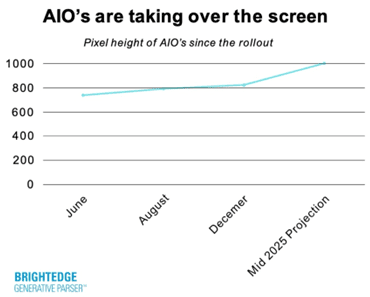

The traditional ten blue links have been implicitly obsolete for about 15 years but AI has made them explicitly obsolete.

Natural Language Search Queries

Search users who ask precise conversational questions over several back-and-forth turns represent a huge change to search queries. Bing claims that this makes it easier to understand search queries and provide increasingly precise answers. That’s the part that unsettles SEOs and publishers because, let’s face it, a significant amount of content was created to rank in the keyword-based query paradigm, which is gradually disappearing as users increasingly shift to more complex queries. How content creators optimize for that is a big concern.

Backend AI Algorithms

The word “capricious” describes a tendency to make sudden and unexplainable changes in behavior. It’s not a quality publishers and SEOs desire in a search engine. Yet capricious back-end algorithms that suddenly throttle traffic and then change their virtual minds months later are a reality.

Is Google Detached From Reality Of The Web Ecosystem?

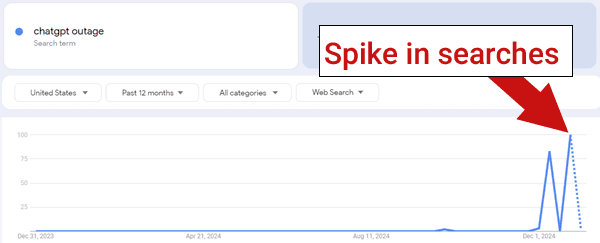

AI-based algorithms that are still “improving” have unquestionably caused industry-wide damage to a considerable segment of the web ecosystem. Immense amounts of traffic to publishers of all sizes have been wiped out since the increased integration of AI into Google’s backend, an issue that the recent Google Search Off The Record episode avoided discussing.

Many hope Google will address this situation in 2025 with greater nuance than its CEO, Sundar Pichai, who struggled to articulate how Google supports the web ecosystem and seemed detached from the plight of thousands of publishers.

Maybe the question isn’t whether SEO is on a dying path but whether publishing itself is in decline because of AI on both the backend and the front of Google’s search box and Gemini apps.


Featured Image by Shutterstock/Shutterstock AI Generator