This week’s Ask An SEO question is from someone who would like to know why their content is no longer “good” or “helpful.”

The person is curious why they no longer rank for “their keywords” when other pages don’t have original photos or content that proves they have real experience.

The person would like to remain anonymous, so I’m respecting their request.

This is a long post. The first section covers how we think about content and why it should rank. The second section shows ways to apply that thinking across multiple niches, from travel to food, roofing, and more.

How We Go About Creating Content

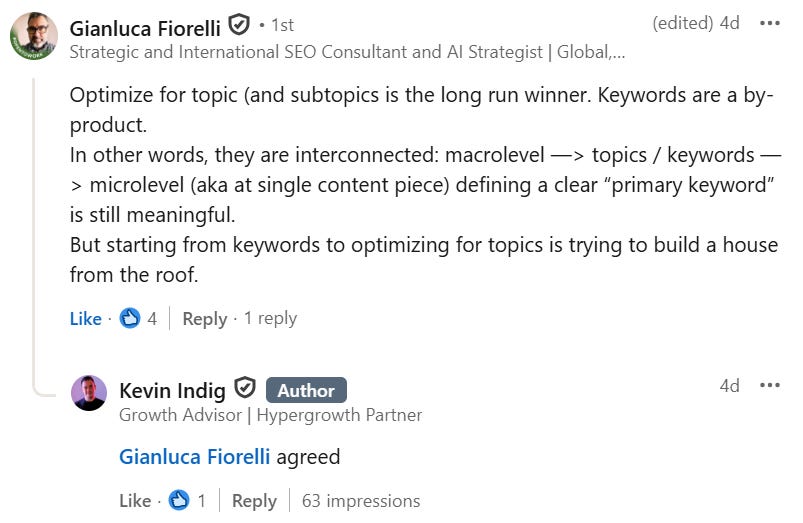

Their argument for why their content should rank is based on a concept called E-E-A-T.

Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) is not a ranking factor or signal; it is a trust builder for readers.

When done well, it can cause your content to get citations and backlinks naturally.

Your goal as a creator should be to show expertise and experience through original thoughts that only a person with first-hand knowledge knows.

That is how E-E-A-T works for SEO. There is no score or metric, and E-E-A-T is not a factor for ranking a page or website; it is an SEO concept.

I reviewed the website from the question submission, and it was similar to the slew of sites submitted for audits when the helpful content update killed niche sites. My feedback was the same.

The content is not original or unique, and it is not helpful. The person was just lucky they had the traffic for as long as they did.

Yes, the information given was from their personal experience, but it could have been generated by a large language model (LLM). Although the images were unique, they were not something unique to the topic or entity, and they did not help the user with a complete solution.

I live in Washington, D.C., and take photos when I go running most days, but I’m not a professional, and I do not sell my shots. It is a hobby. I could technically write a DC photography blog post or guide and try to rank it.

To do so, I need to share not only original photos but also original thoughts and tips that will help someone who wants to take photos of DC: a complete solution. “The complete solution” part is what a lot of the sites I audited that got wiped out were missing.

The first step in providing this solution is to look for what people are asking and find a way to present it.

Questions I get from friends on Facebook and sometimes on Instagram when I post a photo are “how do you get the lighting?” and “what do you use to edit people out?”

These become two topics that can be blog posts or YouTube videos on their own, and tips that only I would know because I’m the person taking these photos over and over with the same results.

This is where I can display E-E-A-T: provide the same information as everyone else, and then provide the information only I would know as the creator.

Instead of saying “take these photos at golden hour” and showing myself at golden hour, I should show what it looks like before, during, and after.

This helps the person know what to look for light-wise, so they can snap their shot around the same time I do, before any processing. But that isn’t enough. I need to go five steps further. I’ll use taking a photo of the monuments on the Mall as the example.

First, I’d share that I don’t edit the people out, then write a section of the article dedicated to “taking photos of XYZ monument without people.”

In this section, I’ll give the instructions on how I calculate when fewer people will be around, the light is still diffused, and you get the glow of golden hour.

If you’re curious, the trick for this is showing up about 10 minutes after peak golden hour and facing east in the morning. People start to leave, and you get a clean shot with sunrise.

Second, I need to add a tip that only someone with a lot of experience and time spent on the craft would know. This could be looking for rainy mornings. There are few to no people out, and when you combine the weather factor with being just past golden hour, you are likely to get a photo with few to no people in it.

Third is to add a tip only I would know. This can be “this does not work for blue hour or sundown because people crowd before the sun sets, and it is dark afterwards, eliminating your light source.”

But that doesn’t fully provide a solution. I need to give an alternative, as they still want a people-free photo. The opportunity here is to give three or four other monuments with examples of why they are better than the XYZ monument for sunset, including getting a photo without anyone in it.

Sounds like a lot of work, right? It is, but only if you’re not an expert in your field. Sites that did not go this far got wiped out. For our clients, we go even further. Here’s how.

Something I have not seen on photography sites is time tracking to let someone know when to show up, specifically.

For the DC monuments, I tracked the time it takes for people to leave after the sun rises during cherry blossoms, so I can get a photo as the sky changes and the trees are in bloom.

After keeping my spreadsheet for a few years, I knew how many days before and after peak bloom people show up and leave. I also learned when they’d be dispersed enough from my favorite shots and angles.

It wasn’t perfect, but the spreadsheet did the job, and I get my favorite photos each year.

For this theoretical article, I could post the spreadsheet to help others, and that is something unique to my site and may get backlinks from travel guides, photographers, and DC tourism companies.
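If you keep a log like this, a short script can turn it into something readers can act on. The following is only a minimal sketch: the column layout (days from peak bloom, minutes after sunrise, people in frame), the sample numbers, and the five-person threshold are all hypothetical placeholders, not the actual spreadsheet.

```python
from collections import defaultdict

# Hypothetical log rows: (days_from_peak_bloom, minutes_after_sunrise, people_in_frame)
LOG = [
    (-2, 0, 40), (-2, 10, 22), (-2, 20, 6), (-2, 30, 3),
    (0, 0, 85), (0, 10, 60), (0, 20, 31), (0, 30, 12),
    (3, 0, 30), (3, 10, 9), (3, 20, 4), (3, 30, 2),
]


def first_quiet_minute(rows, max_people=5):
    """For each day offset, return the earliest logged minute with at most max_people.

    Days that never get that quiet map to None.
    """
    by_day = defaultdict(list)
    for day, minute, people in rows:
        by_day[day].append((minute, people))

    result = {}
    for day, samples in sorted(by_day.items()):
        quiet = [minute for minute, people in sorted(samples) if people <= max_people]
        result[day] = quiet[0] if quiet else None
    return result


if __name__ == "__main__":
    for day, minute in first_quiet_minute(LOG).items():
        when = "never within the logged window" if minute is None else f"{minute} min after sunrise"
        print(f"Day {day:+d} from peak bloom: {when}")
```

A table built from output like this (day relative to peak bloom, earliest quiet minute) is the kind of asset other sites might cite.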

I do this for other locations as well. If you’re a creator in the hobbyist, travel blogger and nomad, or food space, you should be going into this level of detail.

For New Orleans and when I was in St. Maarten last January, I used live streaming cameras from specific locations to track when to show up to take my photos.

For sunrise, I looked at when people left the beach by tracking movement. The beach cams showed sunrise, but the Bourbon Street cams did not, so I used the time when fewer people passed per minute.
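The "fewer people passed per minute" idea can be sketched in a few lines of Python. This assumes you have already tallied passers-by per minute by hand while watching a stream; the function name, the sample tallies, and the three-minute window are hypothetical choices for illustration.

```python
def quietest_window(counts, window=3):
    """Return (start_index, total) for the contiguous window with the fewest passers-by.

    counts is a list of per-minute tallies; window is the number of minutes you
    need for the shot. Ties go to the earliest window.
    """
    if window <= 0 or window > len(counts):
        raise ValueError("window must be between 1 and len(counts)")

    total = sum(counts[:window])
    best_start, best_total = 0, total
    # Slide the window one minute at a time, updating the running total.
    for i in range(window, len(counts)):
        total += counts[i] - counts[i - window]
        if total < best_total:
            best_start, best_total = i - window + 1, total
    return best_start, best_total


if __name__ == "__main__":
    # Hypothetical tallies, one per minute starting at 06:00.
    minutes = ["06:00", "06:01", "06:02", "06:03", "06:04", "06:05", "06:06", "06:07"]
    passers_by = [12, 9, 7, 3, 2, 4, 8, 11]
    start, total = quietest_window(passers_by, window=3)
    print(f"Quietest 3 minutes start at {minutes[start]} ({total} people)")
```

Repeating the tally over several days and comparing the resulting windows is what turns a one-off observation into a recommendation.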

Applying This In The Real World And To Multiple Niches

By being able to see sunrise and sunset, I knew which beaches and angles to go to.

I was also able to figure out when I’d still have the right lighting with fewer chances of people being there by tracking when people show up and leave, and how many people are in each spot by day.

For my photos in the French Quarter, I used the Bourbon Street live cams.

You can see when the streets are less full, when lights go on and off, and capture the mood and setting you want, whether it is Jackson Square, Bourbon St., Canal Street, etc.

I personally like street lamps being lit in my photos, so that’s why I tracked their on and off times.

Now it is time to present the content. Written text is only a portion; the instructions can be presented in:

- An ordered list.

- A spreadsheet that can be downloaded or accessed online with instructions on it.

- Videos sharing the steps visually so the person can follow along.

- A table that lists the steps and what to do with notes, featuring alternatives and examples.

- Infographics that walk through the steps and include reminders and visuals.

This is pretty specific, but it applies to the work we do for clients.

If you write about outdoor sports like hiking or snowboarding, you can do this for specific slopes or trails that are always busy, for the person who wants to enjoy them without congestion.

I was able to apply this to the VelociCoaster at Universal Orlando and not have to wait in a huge line.

The same goes for shopping, fashion, and food sites: When are the slower times, and how can readers verify them?

Google Business Profiles sometimes list heavy and slow traffic hours for stores, restaurants, and entertainment venues by day and hour.

In the case studies I share on my blog, I say we don’t build backlinks anymore, and that is true.

By thinking about how and why our data, skill sets, products, services, etc. are unique and how we can apply our knowledge, the content starts to rank and people cite and source us.

We get the backlinks naturally, and the clients grow.

If you’re a contract attorney, you know the trends that could signal shifts in the markets and how businesses are growing, what their concerns are, and the direction things are heading.

Publish the number of each type of contract you handle as data points, without revealing any client information, and share how it either correlates with or goes against what traditional media and social media are saying.
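One way to prepare numbers like these is to count contracts by year and type and discard everything else. The sketch below is illustrative only: the record fields, the sample matters, and the `min_count` cutoff (which hides very small groups that might point to a single client) are assumptions, not a legal or privacy standard.

```python
from collections import Counter

# Hypothetical matter records. The "client" field is never read by the function below.
MATTERS = [
    {"client": "Client A", "year": 2023, "type": "commercial lease"},
    {"client": "Client B", "year": 2023, "type": "commercial lease"},
    {"client": "Client C", "year": 2023, "type": "NDA"},
    {"client": "Client A", "year": 2024, "type": "commercial lease"},
    {"client": "Client D", "year": 2024, "type": "NDA"},
    {"client": "Client E", "year": 2024, "type": "NDA"},
    {"client": "Client F", "year": 2024, "type": "NDA"},
]


def contract_counts(matters, min_count=1):
    """Count contracts per (year, type), keeping only groups with at least min_count."""
    counts = Counter((m["year"], m["type"]) for m in matters)
    return {key: n for key, n in sorted(counts.items()) if n >= min_count}


if __name__ == "__main__":
    for (year, kind), n in contract_counts(MATTERS, min_count=2).items():
        print(f"{year}: {n} x {kind}")
```

Only the aggregated counts would be published; the raw records stay private.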

Home builders, contractors, and interior designers know what is about to be popular because demand starts to spike, whether people are downsizing or looking for more space and luxury, and how it all compares to previous years. This can get B2B and B2C traffic and backlinks.

- B2C comes from potential customers that want to know what to buy or what is on trend, or to see what was popular five years ago and if it is making a comeback.

- B2B wants to know what materials, colors, and other items they should plan to order and stock as the demand will be coming.
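Spotting a spike against previous years can be as simple as a year-over-year comparison of inquiry counts. This is a rough sketch; the product names, the counts, and the 50% threshold are hypothetical, and real data would need more than two data points before you call anything a trend.

```python
def demand_spikes(previous, current, threshold_pct=50.0):
    """Return {item: percent change} for items whose demand rose by at least threshold_pct.

    Items with no prior-year baseline are skipped, since a percent change
    cannot be computed for them.
    """
    spikes = {}
    for item, now in current.items():
        before = previous.get(item, 0)
        if before <= 0:
            continue  # no baseline to compare against
        change = (now - before) * 100 / before
        if change >= threshold_pct:
            spikes[item] = round(change, 1)
    return spikes


if __name__ == "__main__":
    # Hypothetical inquiry counts for the same quarter in two consecutive years.
    last_year = {"quartz countertops": 40, "metal roofing": 50}
    this_year = {"quartz countertops": 70, "metal roofing": 55, "heat pumps": 12}
    for item, pct in demand_spikes(last_year, this_year).items():
        print(f"{item}: up {pct}% year over year")
```

The flagged items are candidates for the B2C trend piece and the B2B stocking guide alike.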

By creating renderings and solutions for both, you can collect leads and hopefully convert them, whether they’re for weddings, events like a bat mitzvah or quinceañera, or kitchen renovations and roof repairs.

If you’re a retailer or affiliate, optimize your product pages for these before the demand starts vs. having to optimize and compete with the companies and vendors already ranking.

You have the advantage, as you know what will be in demand months in advance.

Travel sites can go five steps further than saying here is a wheelchair-friendly entrance.

Share where the nearest bathrooms to that entrance are and the easiest pathway through the museum that does not require stairs.

You can also share when they’re likely to be less crowded, so you don’t have to fight through crowds to see the exhibits or wait for elevators.

And make sure to post photos of how to find them, not just you at the location. This is how you help the reader and create rank-worthy content.

The same goes for castles in Europe, and beaches or temples in Asia. Help people with more than just saying a place is accessible; give them the resources that only a person with real experience would know.

Show images of what to look for, not just the signature photo from the space. If you don’t share where to take that photo from, the person has to do more searching.

Anyone can show a photo saying they’ve been somewhere. That does not show E-E-A-T, and neither does saying it is “reviewed by” if the content does not have unique and original thoughts by the experts.

Take your content five to 10 times further and make sure the person does not have to do another search after or while performing a task.

This is how you create content that ranks and gets backlinks.

The content in these cases is helpful, as long as you use proper formatting with it so users can thumb through with ease.


Featured Image: Paulo Bobita/Search Engine Journal