The Shift To Zero-Click Searches: Is Traffic Still King? via @sejournal, @wburton27

The world of SEO has changed, especially with the rise of zero-click searches, where users get their answers directly on Google’s search results page without clicking through to any websites.

A 2024 study from SparkToro found that nearly 60% of Google searches ended without a click.

This trend will continue to reshape the digital landscape and force marketers to adapt their strategies, but is traffic still king? Let’s explore.

Before we get into it, here are the most common types of zero-click searches:

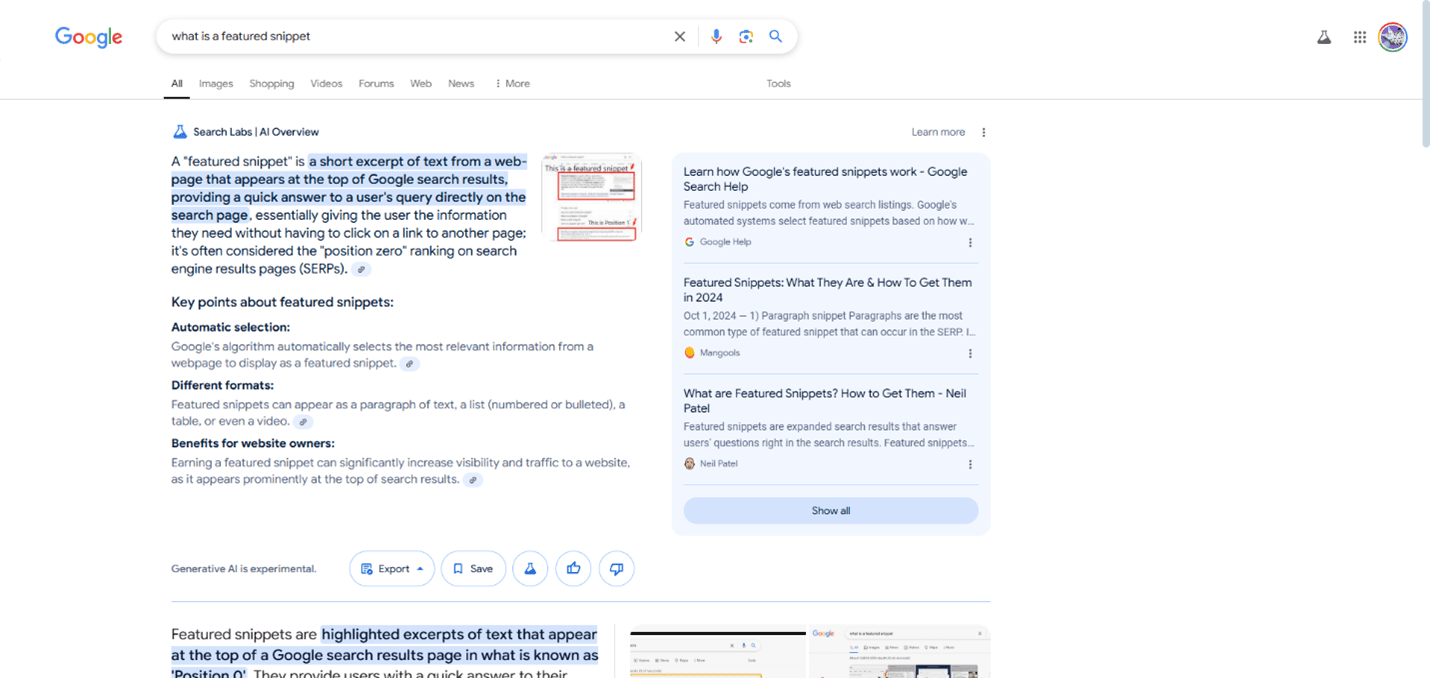

- Featured Snippets: These are snippets of text that appear at the top of the SERP and provide direct answers to specific questions. They can take the form of paragraphs, lists, or tables.

- Knowledge Panels: These information boxes appear on the side of the SERP, providing a quick overview of entities like people, places, or organizations.

- Direct Answers: These are concise answers to simple questions, such as “How hot will it be today?” or “How many feet are in 36 inches?”

- People Also Ask (PAA): This section displays related questions that users frequently ask, with expandable answers shown directly on the SERP.

- Local Packs: For local searches, Google displays a map with business listings and information, allowing users to find what they need without clicking through to individual websites.

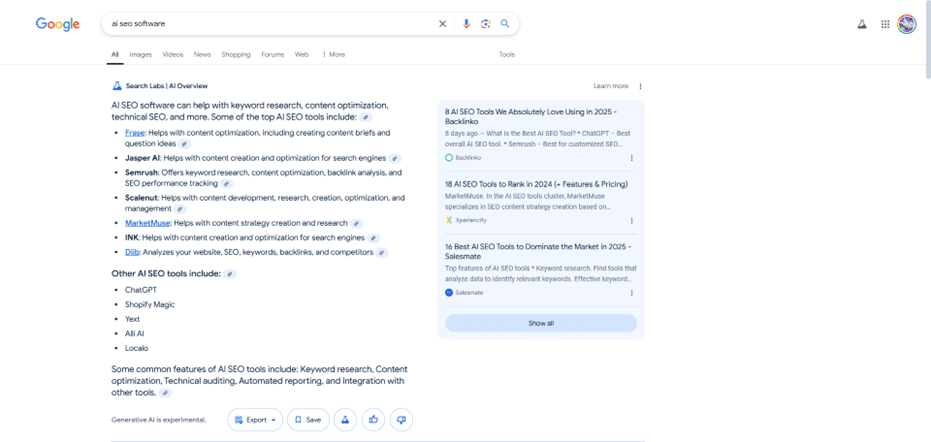

- AI Overviews: These are AI-generated answers that give a quick overview of the topic searched.

- Calculators And Converters: Google provides built-in tools for calculations and conversions, eliminating the need to visit external websites. For example, a search for ‘calculator’ brings up a mathematical calculator in the SERPs.

- Definitions: When searching for the meaning of a word, the dictionary definition is often displayed directly on the SERP.

Here is the evolution of zero-click searches:

| Year | Description |
| --- | --- |
| 2004 | Google Local was introduced. |
| 2007 | Universal Search was launched. |
| 2008 | Google Suggest (Autocomplete) was introduced. |
| 2010 | Google Instant was launched. |
| 2012 | Knowledge Panels/Graphs were introduced. |
| 2013 | Quick Answers were introduced. |
| 2015 | Local Map Packs and People Also Ask were introduced. |
| 2017 | Google enhanced Knowledge Panels, and Google Posts were introduced. |
| 2018 | Featured Snippets and People Also Ask became more prominent. |
| 2019 | Zero-click searches passed the 50% mark in browsers. |
| 2020 | Knowledge Graph and Knowledge Panels were reintroduced. |
| 2021 | Passages Ranking was introduced, and 64.82% of Google searches were zero-click. |
| 2023 | Google refined and expanded zero-click features. |
| 2024 | AI Overviews were introduced. |

Can Zero Click Impact My Organic Traffic?

Yes, the rise of zero-click searches could impact your website traffic, and here is why.

- If a user finds the answer to their query in a featured snippet or AI Overview directly in the SERPs, and the information matches what they were looking for, they don’t need to click through to your website. This could cause a decrease in organic traffic to your site.

- For certain industries, such as news and health, this could have a detrimental impact on site traffic, unless you’re optimized for AI Overviews and users click through to your site when they need more information.

- If you’re a brand that is well optimized, has conversational content and a great content experience, and is optimized for featured snippets, then you may experience an increase in organic traffic. In fact, some publishers report increased traffic from AI Overview citations.

- As AI Overviews (AIOs) expand, their size and in-depth answers take up a large share of organic real estate.

Screenshot from search for [what is a featured snippet], Google, February 2025

Adapting To Zero-Click Marketing

Just because your site may experience a decline in clicks, don’t throw in the towel just yet. It’s time to adapt your SEO strategy, and of course, in today’s landscape, you have to be everywhere your audience is.

Brands need to stop thinking only about Google and also think about social networks like Reddit, Quora, TikTok, YouTube, and others, in addition to optimizing for AI Overviews.

While AIOs may result in fewer clicks to your website, if you show up in an AIO and someone does click through to your website, they are probably more qualified and more likely to convert.

Increase In Traffic From AI Citations

Some brands are reporting an increase in traffic from AI citations because their pages appear as linked sources within AIOs.

An example of this would be a search for [AI SEO software].

Notice that brands like Backlinko benefit from a link in the AIO. Because Backlinko is an authoritative domain that is well optimized for AIOs, the citation can generate more brand awareness and traffic.

Is Traffic Still King?

In my opinion, traffic is not king; it is queen, unless traffic is your main key performance indicator (KPI).

Unlike paid traffic, traffic generated through organic search is still free and can provide long-term results for years to come.

Conversions are king if you have a site that depends upon converting website visitors to customers.

If your site depends upon growing the number of organic visitors, then traffic may be king based on your business model because it can increase members, drive up ad revenue, and increase subscriptions.

I spoke with a client the other day who said they got a lot of traffic from their SEO and paid search campaign. When I looked at the conversions, there were only a few over the last six months, and they are a lead-focused business.

If SEO is not driving leads and conversions and resulting in paying customers, then traffic does not matter. SEO is all about driving high-quality traffic that converts into customers.

Although zero-click searches, in most cases, do not drive users directly to your website, you can still reap the benefits if you show up as an AI citation or as the featured snippet answer itself.

Showing up in zero-click results can improve your brand awareness, so more users recognize your brand, which can potentially lift conversions.

While zero-click results may not directly drive organic traffic to your site, the expertise you demonstrate and the authority gained from that awareness can drive higher conversion rates when users do visit your site.

SEO Is Not Dead

SEO and organic traffic are not dead; they have just evolved.

With the rise of AI Overviews and changing user behavior, end users are asking questions in social discovery channels like TikTok, YouTube, and Reddit as part of their search journey. You need to be everywhere your audience is.

SEO can no longer sit in a vacuum all by itself and must be part of a fully integrated strategy.

How Can I Adapt My Strategy To Win?

A good rule of thumb is to always create high-quality content that people can consume.

Focus on creating content that is conversational, directly answers user questions, is accurate and factual, and is marked up with structured data.

- Continue to optimize for featured snippets and knowledge panels.

- Create more comprehensive and conversational content that answers related questions, i.e., FAQs, etc.

- Focus on branded searches.

- Think outside Google and focus on social discovery channels like Reddit, YouTube, etc.

- Optimize your local SEO and Google Business Profile listings.
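To make the structured data and FAQ suggestions above more concrete, here is a minimal Python sketch that builds schema.org `FAQPage` markup as JSON-LD. The function name `build_faq_jsonld` and the sample question are hypothetical and only for illustration. Adding this markup does not guarantee a featured snippet or AI Overview citation; it simply describes your question-and-answer content in a machine-readable way.

```python
import json


def build_faq_jsonld(faqs):
    """Return a schema.org FAQPage JSON-LD string for (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Hypothetical example content, not taken from any real site.
faqs = [
    (
        "What is a zero-click search?",
        "A search where the user gets the answer on the results page "
        "without clicking through to a website.",
    ),
]

markup = build_faq_jsonld(faqs)
# Wrap the JSON-LD in the script tag that would be placed in the page HTML.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The visible page content should match the questions and answers in the markup, and it is worth validating the output with a structured data testing tool before publishing.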

How To Measure Success

To measure the success of your zero-click strategy, focus on the following:

- Visibility: Most of the main SEO tools provide good reporting on whether you are visible in AI Overviews and other zero-click features.

- Impressions and conversions: As I mentioned, SEO is all about driving traffic that converts into customers.

- Brand mentions and citations: See if you get more brand mentions and citations in AI Overviews and featured snippets.
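The impressions and conversions metrics above can be sketched in a few lines of Python. This is a rough illustration, assuming you have exported per-query impressions, clicks, and conversions from your own analytics tools. The `QueryStats` fields, the sample numbers, and the thresholds (`min_impressions`, `max_ctr`) are all hypothetical and should be tuned to your own data.

```python
from dataclasses import dataclass


@dataclass
class QueryStats:
    query: str
    impressions: int
    clicks: int
    conversions: int


def ctr(row):
    """Click-through rate: clicks divided by impressions."""
    return row.clicks / row.impressions if row.impressions else 0.0


def conversion_rate(row):
    """Share of clicks that turned into conversions."""
    return row.conversions / row.clicks if row.clicks else 0.0


def likely_zero_click(rows, min_impressions=1000, max_ctr=0.01):
    """Flag queries that are seen often but rarely clicked (assumed thresholds)."""
    return [
        r.query
        for r in rows
        if r.impressions >= min_impressions and ctr(r) < max_ctr
    ]


# Hypothetical sample data for illustration only.
rows = [
    QueryStats("what is a featured snippet", 12000, 60, 1),
    QueryStats("ai seo software pricing", 800, 64, 8),
]

for r in rows:
    print(f"{r.query}: CTR {ctr(r):.2%}, conversion rate {conversion_rate(r):.2%}")

print("Likely zero-click queries:", likely_zero_click(rows))
```

A query with many impressions and very few clicks is a candidate for tracking visibility and brand lift rather than traffic, while a low-volume query with a high conversion rate may be worth more to a lead-focused business.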

Wrapping Up

Optimizing for zero-click searches is critical to being competitive today, especially as search engines refine their ability to answer user queries directly.

As zero-click searches rise and become the new standard, especially where AI Overviews appear, SEO professionals and digital marketers must adapt and update their strategies to focus on visibility, brand awareness, and providing value directly within search results and social platforms.

This is especially true as user behavior continues to change and users expect a faster, easier way to satisfy their information needs.


Featured Image: Dean Drobot/Shutterstock