Google published a research paper on how to extract user intent from user interactions that can then be used for autonomous agents. The method they discovered uses on-device small models that do not need to send data back to Google, which means that a user’s privacy is protected.

The researchers discovered they were able to solve the problem by splitting it into two tasks. Their solution worked so well it was able to beat the base performance of multi-modal large language models (MLLMs) in massive data centers.

Smaller Models On Browsers And Devices

The focus of the research is on identifying the user intent through the series of actions that a user takes on their mobile device or browser while also keeping that information on the device so that no information is sent back to Google. That means the processing must happen on the device.

They accomplished this in two stages.

- In the first stage, a model on the device summarizes what the user was doing.

- The sequence of summaries is then sent to a second model that identifies the user intent.

The researchers explained:

“…our two-stage approach demonstrates superior performance compared to both smaller models and a state-of-the-art large MLLM, independent of dataset and model type.

Our approach also naturally handles scenarios with noisy data that traditional supervised fine-tuning methods struggle with.”

Intent Extraction From UI Interactions

Intent extraction from screenshots and text descriptions of user interactions is a technique that was proposed in 2025 using Multimodal Large Language Models (MLLMs). The researchers say they followed this approach for their problem, but with an improved prompt.

The researchers explained that extracting intent is not a trivial problem to solve and that there are multiple errors that can happen along the steps. The researchers use the word trajectory to describe a user journey within a mobile or web application, represented as a sequence of interactions.

The user journey (trajectory) is turned into a formula where each interaction step consists of two parts:

- An Observation

This is the visual state of the screen (screenshot) of where the user is at that step.

- An Action

The specific action that the user performed on that screen (like clicking a button, typing text, or clicking a link).
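The observation and action pairing above can be pictured as a simple data structure. The following is a minimal sketch, not the paper's own representation: the class name `Interaction`, its field names, and the use of a file name as a stand-in for the screenshot are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Interaction:
    """One step of a trajectory: what was on screen and what the user did."""
    observation: str  # hypothetical stand-in for the screenshot (e.g. a file name)
    action: str       # textual description of the user's action


# A trajectory is an ordered sequence of interaction steps.
Trajectory = List[Interaction]


def describe(trajectory: Trajectory) -> List[str]:
    """Render each step as a numbered, human-readable line."""
    return [
        f"step {i}: saw {step.observation!r}, did {step.action!r}"
        for i, step in enumerate(trajectory, start=1)
    ]


# Made-up example data, for illustration only.
trajectory: Trajectory = [
    Interaction("search_page.png", "typed 'running shoes'"),
    Interaction("results_page.png", "clicked first product link"),
]
```

Calling `describe(trajectory)` returns one line per step, in the order the user performed them.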

They described three qualities of a good extracted intent:

- “faithful: only describes things that actually occur in the trajectory;

- comprehensive: provides all of the information about the user intent required to re-enact the trajectory;

- and relevant: does not contain extraneous information beyond what is needed for comprehensiveness.”

Challenging To Evaluate Extracted Intents

The researchers explain that grading extracted intent is difficult for two reasons: user intents contain complex details (like dates or transaction data), and they are inherently subjective and ambiguous. Trajectories are subjective because the motivations behind them are ambiguous.

For example, did a user choose a product because of the price or the features? The actions are visible but the motivations are not. Previous research shows that intents from different people matched 80% on web trajectories and 76% on mobile trajectories, so a given trajectory does not always indicate one specific intent.

Two-Stage Approach

After ruling out other methods like Chain of Thought (CoT) reasoning (because small language models struggled with the reasoning), they chose a two-stage approach that emulated Chain of Thought reasoning.

The researchers explained their two-stage approach:

“First, we use prompting to generate a summary for each interaction (consisting of a visual screenshot and textual action representation) in a trajectory. This stage is prompt-based as there is currently no training data available with summary labels for individual interactions.

Second, we feed all of the interaction-level summaries into a second stage model to generate an overall intent description. We apply fine-tuning in the second stage…”
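The flow described in the quote can be sketched as a small pipeline. This is only an illustration under stated assumptions: the function names `extract_intent`, `stub_summarize`, and `stub_infer_intent` are hypothetical, and the two stubs merely stand in for the prompted first-stage model and the fine-tuned second-stage model, neither of which is reproduced here.

```python
from typing import Callable, List, Tuple

# (screenshot reference, textual action) for one interaction; both are stand-ins.
Interaction = Tuple[str, str]


def extract_intent(
    trajectory: List[Interaction],
    summarize: Callable[[str, str], str],
    infer_intent: Callable[[List[str]], str],
) -> str:
    """Stage 1 summarizes each interaction; stage 2 infers intent from all summaries."""
    summaries = [summarize(screenshot, action) for screenshot, action in trajectory]
    return infer_intent(summaries)


# Hypothetical stubs standing in for the prompted and fine-tuned models.
def stub_summarize(screenshot: str, action: str) -> str:
    return f"On {screenshot}, the user {action}."


def stub_infer_intent(summaries: List[str]) -> str:
    return f"Intent inferred from {len(summaries)} summaries."


result = extract_intent(
    [("search_page", "typed a query"), ("results_page", "clicked a result")],
    stub_summarize,
    stub_infer_intent,
)
```

Passing the two models in as callables keeps the stages separate, which mirrors the decomposition: the second stage only ever sees the summaries, never the raw screenshots.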

The First Stage: Screenshot Summary

In the first stage, the summary of each interaction is divided into two parts, but there is also a third part.

- A description of what’s on the screen.

- A description of the user’s action.

The third component, labeled “speculative intent,” is where the model guesses at what the user is trying to do. The researchers then simply discard it. Surprisingly, allowing the model to speculate and then getting rid of that speculation leads to a higher quality result.
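One way to picture this step is a structured model output with three fields, of which only two are kept. The sketch below is an assumption-laden illustration: the field names (`screen_description`, `user_action`, `speculative_intent`) and the dictionary format are hypothetical and are not taken from the paper's prompt.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class InteractionSummary:
    """The two factual parts of a first-stage summary."""
    screen_description: str
    user_action: str


def keep_factual_parts(model_output: Dict[str, str]) -> InteractionSummary:
    """Keep the two factual fields and discard the speculative one."""
    # "speculative_intent" is deliberately ignored, even when the model fills it in.
    return InteractionSummary(
        screen_description=model_output["screen_description"],
        user_action=model_output["user_action"],
    )


# Made-up model output, for illustration only.
raw = {
    "screen_description": "A product page for running shoes.",
    "user_action": "Tapped 'Add to cart'.",
    "speculative_intent": "The user probably wants to buy shoes for a marathon.",
}
summary = keep_factual_parts(raw)
```

The speculative field still exists in the raw output, so the model has somewhere to put its guesses, but it never reaches the second stage.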

The researchers cycled through multiple prompting strategies and this was the one that worked the best.

The Second Stage: Generating Overall Intent Description

For the second stage, the researchers fine-tuned a model for generating an overall intent description. They fine-tuned the model with training data that is made up of two parts:

- Summaries that represent all interactions in the trajectory

- The matching ground truth that describes the overall intent for each of the trajectories.

The model initially tended to hallucinate because the first part (the input summaries) is potentially incomplete, while the “target intents” are complete. That caused the model to learn to fill in the missing parts in order to make the input summaries match the target intents.

They solved this problem by “refining” the target intents by removing details that aren’t reflected in the input summaries. This trained the model to infer the intents based only on the inputs.
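The idea of refining a target so it contains only what the inputs support can be shown with a toy example. The article does not say how the refinement was carried out, so the sketch below swaps in a crude word-overlap check purely for illustration; the function name `refine_target` and the pre-split list of target details are assumptions.

```python
from typing import List


def refine_target(target_details: List[str], summaries: List[str]) -> List[str]:
    """Keep only target details whose every word appears somewhere in the summaries."""
    # Crude stand-in for the real refinement step: plain lowercase word matching.
    seen = set(" ".join(summaries).lower().split())
    return [
        detail
        for detail in target_details
        if all(word in seen for word in detail.lower().split())
    ]


# Made-up training example, for illustration only.
summaries = ["user searched flights to paris", "user selected a morning flight"]
target_details = ["flights to paris", "morning flight", "window seat"]
refined = refine_target(target_details, summaries)
```

Here "window seat" is dropped because nothing in the summaries mentions it, so a model trained on the refined target is not rewarded for inventing that detail.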

The researchers compared four different approaches and settled on this approach because it performed so well.

Ethical Considerations And Limitations

The research paper ends by summarizing potential ethical issues where an autonomous agent might take actions that are not in the user’s interest and stressed the necessity to build the proper guardrails.

The authors also acknowledged limitations that might reduce the generalizability of the results. For example, the testing was done only on Android and web environments, which means that the results might not generalize to Apple devices. Another limitation is that the research covered only users in the United States using the English language.

There is nothing in the research paper or the accompanying blog post that suggests that these processes for extracting user intent are currently in use. The blog post ends by communicating that the described approach is helpful:

“Ultimately, as models improve in performance and mobile devices acquire more processing power, we hope that on-device intent understanding can become a building block for many assistive features on mobile devices going forward.”

Takeaways

Neither the blog post about this research nor the research paper itself describes the results of these processes as something that might be used in AI search or classic search. They do mention the context of autonomous agents.

The research paper explicitly mentions the context of an autonomous agent on the device that observes how the user is interacting with a user interface and then infers what the goal (the intent) of those actions is.

The paper lists two specific applications for this technology:

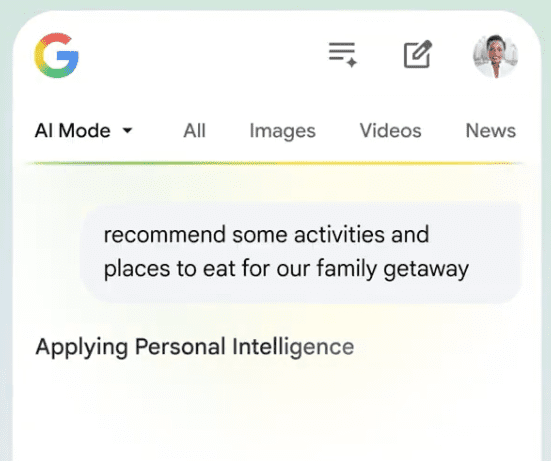

- Proactive Assistance:

An agent that watches what a user is doing for “enhanced personalization” and “improved work efficiency”.

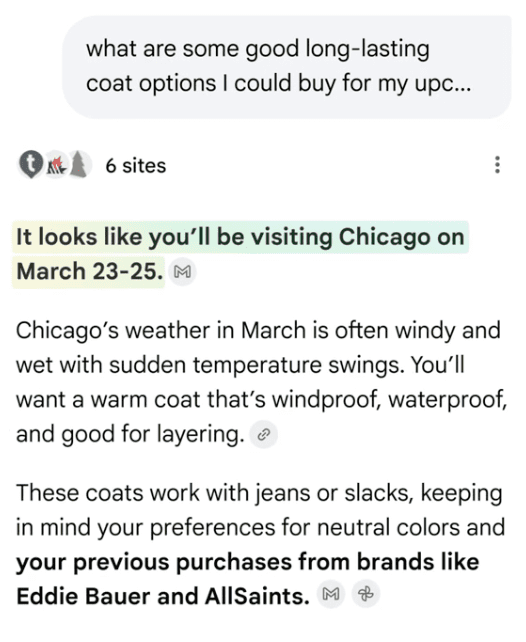

- Personalized Memory

The process enables a device to “remember” past activities as an intent for later.

Shows The Direction Google Is Heading In

While this might not be used right away, it shows the direction that Google is heading, where small models on a device will be watching user interactions and sometimes stepping in to assist users based on their intent. Intent here is used in the sense of understanding what a user is trying to do.

Read Google’s blog post here:

Small models, big results: Achieving superior intent extraction through decomposition

Read the PDF research paper:

Small Models, Big Results: Achieving Superior Intent Extraction through Decomposition (PDF)
