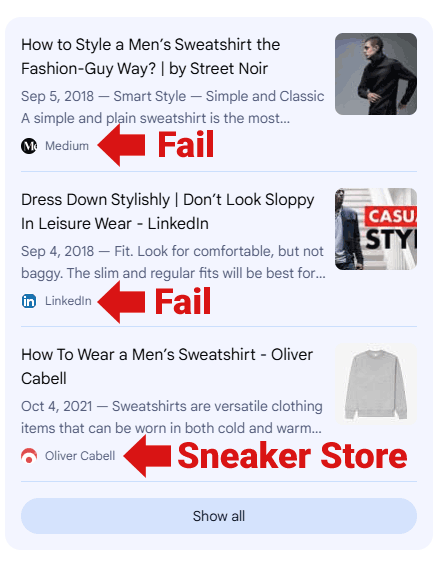

All In One SEO WordPress Vulnerability Affects Over 3 Million Sites via @sejournal, @martinibuster

A security vulnerability in the popular All in One SEO (AIOSEO) WordPress plugin made it possible for low-privileged users to access a site’s global AI access token. With that token, attackers could potentially misuse the plugin’s artificial intelligence features, generating content and consuming the affected site’s AIOSEO AI credits. The plugin is installed on more than 3 million WordPress websites, making the exposure significant.

All in One SEO WordPress Plugin (AIOSEO)

All in One SEO is one of the most widely used WordPress SEO plugins, installed on over 3 million websites. It helps site owners manage search engine optimization tasks such as generating metadata, creating XML sitemaps, and adding structured data. It also provides AI-powered tools that assist with writing titles, descriptions, blog posts, FAQs, and social media posts, and with generating images.

Those AI features rely on a site-wide AI access token that allows the plugin to communicate with the AIOSEO external AI services.

Missing Capability Check

According to Wordfence, the vulnerability was caused by a missing permission check on a specific REST API endpoint used by the plugin, which enabled users with Contributor-level access to view the global AI access token.

In the context of a WordPress website, an API (Application Programming Interface) is like a bridge between the website and other software applications (including external services like AIOSEO’s AI content generator) that enables them to securely communicate and share data with one another. A REST endpoint is a URL that exposes an interface to functionality or data.

The flaw was in the following REST API endpoint:

/aioseo/v1/ai/credits

That endpoint is meant to return information about a site’s AI usage and remaining credits. However, the plugin did not perform a capability check to verify that the logged-in user making the request, such as someone with Contributor-level access, was actually allowed to see that data.

Because of that, any logged-in user with Contributor-level access or higher could call the endpoint and retrieve the site’s global AI access token.

Wordfence describes the flaw like this:

“This makes it possible for authenticated attackers, with Contributor-level access and above, to disclose the global AI access token.”

In other words, the implementation of the REST API endpoint skipped the permission check, which let someone with Contributor-level access see sensitive data.

In WordPress, REST API routes are supposed to include capability checks that ensure only authorized users can access them. In this case, that check was missing, so the plugin treated Contributors the same as administrators when returning the AI token.
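The difference between a route with and without a capability check can be sketched in a few lines. This is a simplified Python model, not AIOSEO’s code and not WordPress’s PHP API (where routes registered with `register_rest_route()` take a `permission_callback`). The function names, the payload, and the choice of `manage_options` as the required capability are all assumptions made for illustration; the source does not say how the real fix was implemented.

```python
from dataclasses import dataclass

# Simplified capability sets. The capability names exist in WordPress,
# but each role's list is trimmed down for illustration.
ROLE_CAPABILITIES = {
    "contributor": {"read", "edit_posts"},
    "administrator": {"read", "edit_posts", "manage_options"},
}


@dataclass
class User:
    name: str
    role: str

    def can(self, capability: str) -> bool:
        """Return True if the user's role grants the capability."""
        return capability in ROLE_CAPABILITIES.get(self.role, set())


class Forbidden(Exception):
    """Raised when the permission check rejects a request."""


# Hypothetical stand-in for the data behind the credits endpoint.
SITE_AI_STATE = {"remaining_credits": 100, "access_token": "example-token"}


def credits_without_check(user: User) -> dict:
    # Flawed pattern: the handler never asks what this user may do,
    # so a Contributor receives the same payload as an administrator.
    return dict(SITE_AI_STATE)


def credits_with_check(user: User) -> dict:
    # Hardened pattern (assumed): require an administrator-level
    # capability before returning anything sensitive.
    if not user.can("manage_options"):
        raise Forbidden(f"{user.name} may not read AI credit data")
    return dict(SITE_AI_STATE)
```

In this sketch, a Contributor calling `credits_without_check` gets the token back, while `credits_with_check` raises `Forbidden` for the same user and still serves an administrator.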

Why The Vulnerability Is Problematic

In WordPress, the Contributor role is one of the lowest privilege levels. Many sites grant Contributor-level access to multiple people so that they can submit article drafts for review and publication.

By exposing the global AI token to those users, the plugin may have effectively handed out a site-wide credential that controls access to its AI features. That token could be misused in two ways:

1. Unauthorized AI Usage

The token functions as a site-wide credential that authorizes AI requests. If an attacker obtains it, they could potentially use it to generate AI content through the affected site’s account, consuming whatever credits or usage limits are associated with that token.

2. Service Depletion

An attacker could automate requests using the exposed token to exhaust the site’s available AI quota. That would prevent site administrators from using the AI features they rely on, effectively creating a denial of service for the plugin’s AI tools.

Even though the vulnerability does not allow direct code execution, leaking a site-wide API token still represents a possible billing risk.

Part Of A Broader Pattern Of Vulnerabilities

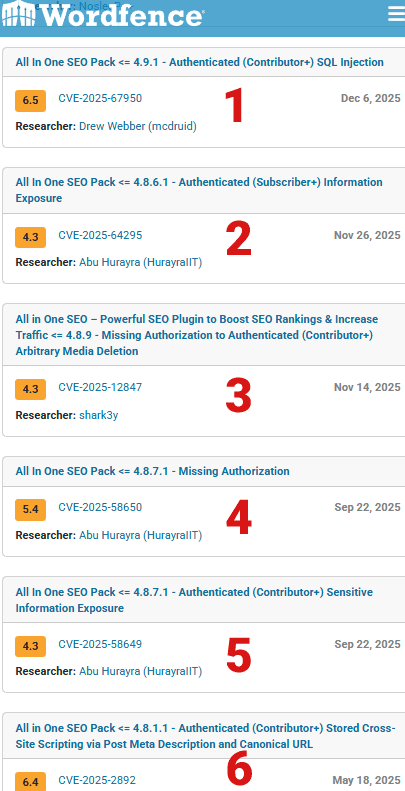

This is not the first time All In One SEO has shipped with vulnerabilities related to missing authorization or low-privilege access. According to Wordfence, the plugin has had six vulnerabilities disclosed in 2025 alone, many of which allowed Contributor or Subscriber level users to access or modify data they should not have been able to access.

Those issues included SQL injection, information disclosure, arbitrary media deletion, missing authorization checks, sensitive data exposure, and stored cross-site scripting. The recurring theme across those reports is improper permission enforcement for low-privilege users, the same underlying class of flaw that led to the AI token exposure in this case.

Six vulnerabilities in one year is a high number for an SEO plugin. By comparison, in 2025 the Yoast SEO plugin had zero vulnerabilities, RankMath had four, and Squirrly SEO had three.

Screenshot Of Six AIOSEO Vulnerabilities In 2025

How The Vulnerability Was Fixed

The vulnerability affects all versions of All in One SEO up to and including 4.9.2. It was addressed in version 4.9.3, which included a security update that the plugin developers describe in the official changelog as:

“Hardened API routes to prevent AI access token from being exposed.”

That change corresponds directly to the REST API flaw identified by Wordfence.

What Site Owners Should Do

Anyone running All in One SEO should update to version 4.9.3 or newer as soon as possible. Sites that allow multiple external contributors are especially exposed since low-privilege accounts could access the site’s AI token on vulnerable versions.
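For anyone auditing several sites, the version boundary above can be checked with a short script. This is a minimal sketch that assumes version strings are purely numeric and dot-separated; `parse_version` and `is_affected` are hypothetical helper names, not part of any WordPress or AIOSEO tooling.

```python
from typing import Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    """Turn a dotted version such as '4.9.2' into (4, 9, 2)."""
    return tuple(int(part) for part in version.strip().split("."))


# Last affected release according to the article; 4.9.3 contains the fix.
LAST_AFFECTED = parse_version("4.9.2")


def is_affected(installed: str) -> bool:
    """Return True if the installed version is 4.9.2 or older."""
    return parse_version(installed) <= LAST_AFFECTED


if __name__ == "__main__":
    for version in ("4.8.0", "4.9.2", "4.9.3", "4.10.0"):
        status = "update needed" if is_affected(version) else "patched"
        print(f"{version}: {status}")
```

Comparing integer tuples rather than raw strings matters here: as plain text, "4.10.0" sorts before "4.9.2", which would wrongly flag a newer release as vulnerable.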

Featured Image by Shutterstock/Shutterstock AI Generator