Google’s Gary Illyes confirmed that AI content is fine as long as the quality is high. He said that “human created” isn’t precisely the right way to describe their AI content policy, and that a more accurate description would be “human curated.”

The questions were asked by Kenichi Suzuki in the context of an exclusive interview with Illyes.

AI Overviews and AI Mode Models

Kenichi asked which AI models power AI Overviews and AI Mode. Illyes answered that both use a custom Gemini model:

“So as you noted, the model that we use for AIO (for AI Overviews) and for AI mode is a custom Gemini model and that might mean that it was trained differently. I don’t know the exact details, how it was trained, but it’s definitely a custom model.”

Kenichi then asked if AI Overviews (AIO) and AI Mode use separate indexes for grounding.

Grounding connects an LLM’s answers to a database or a search index so that they are more reliable, truthful, and based on verifiable facts, which helps cut down on hallucinations. In the context of AIO and AI Mode, grounding generally happens with web-based data from Google’s index.

Suzuki asked:

“So, does that mean that AI Overviews and AI Mode use separate indexes for grounding?”

Google’s Illyes answered:

“As far as I know, Gemini, AI Overview and AI Mode all use Google search for grounding. So basically they issue multiple queries to Google Search and then Google Search returns results for those particular queries.”
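
The flow Illyes describes, where several queries are issued to a search backend and the answer is based on the returned results, can be loosely sketched as follows. This is an illustration only and not Google's implementation: the in-memory `INDEX`, the `search` function, and `build_grounded_prompt` are hypothetical names invented for this example, and the word matching is a toy stand-in for real search.

```python
# Hypothetical toy "search index": URL -> page text. Not a real Google API.
INDEX = {
    "https://example.com/a": "Gemini is a family of AI models from Google.",
    "https://example.com/b": "Grounding ties model answers to retrieved sources.",
    "https://example.com/c": "Robots.txt controls how crawlers access a site.",
}


def search(query: str) -> list[str]:
    """Return URLs whose text contains every word in the query (toy matching)."""
    words = query.lower().split()
    return [url for url, text in INDEX.items()
            if all(w in text.lower() for w in words)]


def build_grounded_prompt(question: str, queries: list[str]) -> str:
    """Fan out several queries, then attach the retrieved text to the prompt."""
    seen: list[str] = []
    for q in queries:
        for url in search(q):
            if url not in seen:  # de-duplicate across queries, keep order
                seen.append(url)
    sources = "\n".join(f"[{url}] {INDEX[url]}" for url in seen)
    return f"Answer using only these sources:\n{sources}\n\nQuestion: {question}"


prompt = build_grounded_prompt(
    "What is grounding?",
    ["grounding", "gemini models"],
)
print(prompt)
```

In this sketch the retrieval step is ordinary search over an index; a model would only come in afterward, when the assembled prompt is used to generate an answer.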

Kenichi was trying to get an answer about Google-Extended, which is a robots.txt control token rather than a separate crawler. Illyes responded by explaining when Google-Extended comes into play.

Suzuki asked:

“So does that mean that the training data are used by AIO and AI Mode collected by regular Google and not Google Extended?”

And Illyes answered:

“You have to remember that when grounding happens, there’s no AI involved. So basically it’s the generation that is affected by the Google extended. But also if you disallow Google Extended then Gemini is not going to ground for your site.”
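
Google-Extended is controlled through robots.txt. As a small sketch, the rules below disallow the `Google-Extended` token while leaving `Googlebot` unrestricted, and Python's standard `urllib.robotparser` module is used to check how each token is treated. The `example.com` URL and the rule set are made up for illustration. The parser only shows how the rules read; it says nothing about how Google applies them internally.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt rules (assumed for this example, not from the interview).
ROBOTS_TXT = """\
User-agent: Google-Extended
Disallow: /

User-agent: Googlebot
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

url = "https://example.com/article"  # hypothetical page
extended_allowed = parser.can_fetch("Google-Extended", url)
googlebot_allowed = parser.can_fetch("Googlebot", url)
print("Google-Extended allowed:", extended_allowed)
print("Googlebot allowed:", googlebot_allowed)
```

With rules like these, regular search crawling stays open while the Google-Extended token is blocked, which per Illyes's comment also means Gemini will not ground on the site.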

AI Content In LLMs And Search Index

The next question that Illyes answered was about whether AI content published online is polluting LLMs. Illyes said that this is not a problem with the search index, but it may be an issue for LLMs.

Kenichi’s question:

“As more content is created by AI, and LLMs learn from that content. What are your thoughts on this trend and what are its potential drawbacks?”

Illyes answered:

“I’m not worried about the search index, but model training definitely needs to figure out how to exclude content that was generated by AI. Otherwise you end up in a training loop which is really not great for training. I’m not sure how much of a problem this is right now, or maybe because how we select the documents that we train on.”

Content Quality And AI-Generated Content

Suzuki then followed up with a question about content quality and AI.

He asked:

“So you don’t care how the content is created… so as long as the quality is high?”

Illyes confirmed that a leading consideration for LLM training data is content quality, regardless of how it was generated. He specifically cited the factual accuracy of the content as an important factor. Another factor he mentioned is that content similarity is problematic, saying that “extremely” similar content shouldn’t be in the search index.

He also said that Google essentially doesn’t care how the content is created, but with some caveats:

“Sure, but if you can maintain the quality of the content and the accuracy of the content and ensure that it’s of high quality, then technically it doesn’t really matter.

The problem starts to arise when the content is either extremely similar to something that was already created, which hopefully we are not going to have in our index to train on anyway.

And then the second problem is when you are training on inaccurate data and that is probably the riskier one because then you start introducing biases and they start introducing counterfactual data in your models.

As long as the content quality is high, which typically nowadays requires that the human reviews the generated content, it is fine for model training.”

Human Reviewed AI-Generated Content

Illyes continued his answer, this time focusing on AI-generated content that is reviewed by a human. He emphasized human review not as something that publishers need to signal in their content, but as something that publishers should do before publishing it.

Again, “human reviewed” does not mean adding wording on a web page that the content is human reviewed; that is not a trustworthy signal, and it is not what he suggested.

Here’s what Illyes said:

“I don’t think that we are going to change our guidance any time soon about whether you need to review it or not.

So basically when we say that it’s human, I think the word human created is wrong. Basically, it should be human curated. So basically someone had some editorial oversight over their content and validated that it’s actually correct and accurate.”

Takeaways

Google’s policy, as loosely summarized by Gary Illyes, is that AI-generated content is fine for search and model training if it is factually accurate, original, and reviewed by humans. This means that publishers should apply editorial oversight to validate the factual accuracy of content and to ensure that it is not “extremely” similar to existing content.


Featured Image by Shutterstock/SuPatMaN

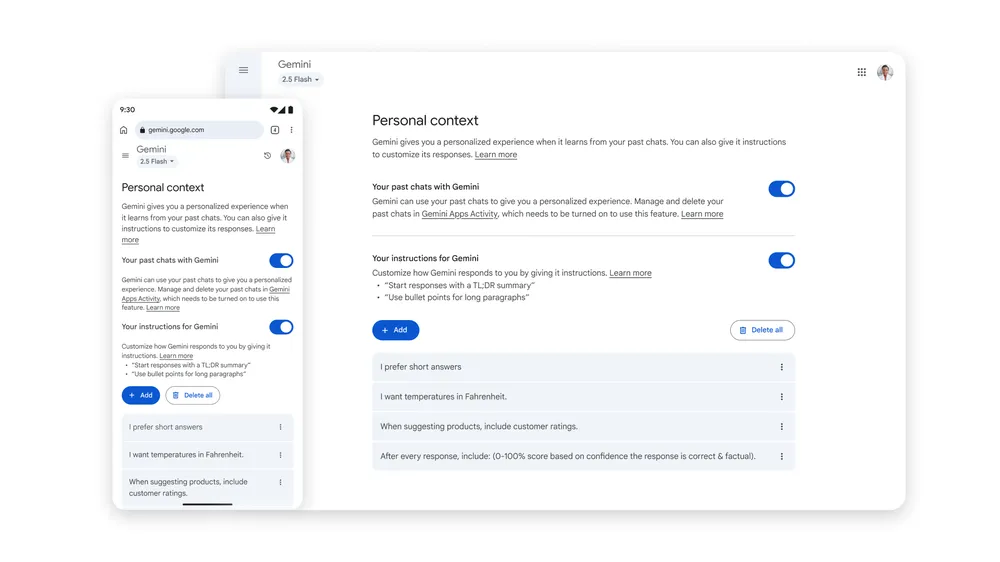

Screenshot from: blog.google/products/gemini/temporary-chats-privacy-controls/, August 2025.
