Does Google’s AI Overviews Violate Its Own Spam Policies? via @sejournal, @martinibuster

Search marketers assert that Google’s new long-form AI Overviews answers have become the very thing Google’s documentation advises publishers against: scraped content lacking originality or added value, at the expense of content creators who are seeing declining traffic.

Why put the effort into writing great content if it’s going to be rewritten into a complete answer that removes the incentive to click the cited source?

Rewriting Content And Plagiarism

Google previously showed Featured Snippets, which were excerpts from published content that users could click on to read the rest of the article. Google’s AI Overviews (AIO) expands on that by presenting entire articles that answer a user’s question, sometimes anticipating follow-up questions and answering those, too.

And it’s not an AI providing answers. It’s an AI repurposing published content. That action is called plagiarism when a student does the same thing by repurposing an existing essay without adding unique insight or analysis.

The thing about AI is that it is incapable of unique insight or analysis, so there is zero value-add in Google’s AIO, which in an academic setting would be called plagiarism.

Example Of Rewritten Content

Lily Ray recently published an article on LinkedIn drawing attention to a spam problem in Google’s AIO. Her article explains how SEOs discovered ways to inject answers into AIO, taking advantage of the lack of fact-checking.

Lily subsequently checked on Google, presumably to see if her article was ranking, and discovered that Google had rewritten her entire article and was providing an answer that was almost as long as her original.

She tweeted:

“It re-wrote everything I wrote in a post that’s basically as long as my original post ☠️”

— Lily Ray 😏 (@lilyraynyc) May 18, 2025

Did Google Rewrite Entire Article?

One approach that search engines and LLMs may use to analyze content is to determine which questions the content answers. This way the content can be annotated according to the answers it provides, making it easier to match a query to a web page.
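To make the idea of question annotations concrete, here is a minimal sketch in Python. It is not how Google or any LLM actually does this. It assumes each page has already been annotated with the questions it answers, and it uses simple word overlap (Jaccard similarity) as a stand-in for whatever matching a real system performs. The page names, the `best_match` helper, and the sample annotations are all hypothetical.

```python
import re


def tokens(text: str) -> set[str]:
    """Return the set of lowercase word tokens in text, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of distinct words that two token sets have in common."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def best_match(query: str, annotations: dict[str, list[str]]) -> tuple[str, float]:
    """Pick the page whose annotated questions best overlap with the query.

    annotations maps a (hypothetical) page id to the questions that page
    is assumed to answer.
    """
    q = tokens(query)
    scored = [
        (max(jaccard(q, tokens(question)) for question in questions), page)
        for page, questions in annotations.items()
        if questions
    ]
    if not scored:
        raise ValueError("no annotated pages to match against")
    score, page = max(scored)
    return page, score


# Hypothetical annotations; the first page reuses questions quoted in this article.
annotations = {
    "page-a": [
        "Is there a spam problem affecting Google AI Overviews?",
        "How are spammers manipulating AI Overviews to promote low-quality content?",
    ],
    "page-b": ["How do I bake sourdough bread at home?"],
}

page, score = best_match("spam in ai overview google", annotations)
print(page, round(score, 2))
```

Word overlap is deliberately crude (it treats “overview” and “overviews” as different words), but it shows why annotating a page with the questions it answers makes query matching straightforward.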

I used ChatGPT to analyze Lily’s content and also AIO’s answer. The number of questions answered by the two documents was almost the same: Lily’s article answered 13 questions, while AIO answered 12.

Both articles answered five similar questions:

- Spam Problem In AI Overviews

AIO: Is there a spam problem affecting Google AI Overviews?

Lily Ray: What types of problems have been observed in Google’s AI Overviews?

- Manipulation And Exploitation of AI Overviews

AIO: How are spammers manipulating AI Overviews to promote low-quality content?

Lily Ray: What new forms of SEO spam have emerged in response to AI Overviews?

- Accuracy And Hallucination Concerns

AIO: Can AI Overviews generate inaccurate or contradictory information?

Lily Ray: Does Google currently fact-check or validate the sources used in AI Overviews?

- Concern About AIO In The SEO Community

AIO: What concerns do SEO professionals have about the impact of AI Overviews?

Lily Ray: Why is the ability to manipulate AI Overviews so concerning?

- Deviation From Principles of E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness)

AIO: What kind of content is Google prioritizing in response to these issues?

Lily Ray: How does the quality of information in AI Overviews compare to Google’s traditional emphasis on E-E-A-T and trustworthy content?

Plagiarizing More Than One Document

Google’s AIO system is designed to answer follow-up and related questions, “synthesizing” answers from more than one original source, and that’s the case with this specific answer.

Whereas Lily’s content argues that Google isn’t doing enough, AIO rewrote the content from another document to say that Google is taking action to prevent spam. Google’s AIO differs from Lily’s original by answering five additional questions with answers that are derived from another web page.

This gives the appearance that Google’s AIO answer for this specific query is “synthesizing” or “plagiarizing” from two documents to answer Lily Ray’s search query, “spam in ai overview google.”

Takeaways

- Google’s AI Overviews is repurposing web content to create long-form content that lacks originality or added value.

- Google’s AIO answers mirror the content they summarize, copying the structure and ideas to answer identical questions inherent in the articles.

- Google’s AIO arguably deviates from Google’s own quality standards, using rewritten content in a manner that mirrors Google’s own definitions of spam.

- Google’s AIO features apparent plagiarism of multiple sources.

The quality and trustworthiness of AIO responses may not reach the quality levels set by Google’s principles of Experience, Expertise, Authoritativeness, and Trustworthiness because AI lacks experience and apparently there is no mechanism for fact-checking.

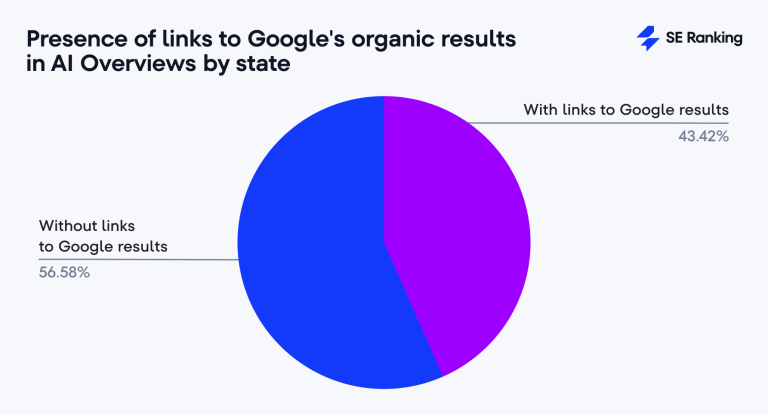

The fact that Google’s AIO system provides essay-length answers arguably removes any incentive for users to click through to the original source and may help explain why many in the search and publisher communities are seeing less traffic. The perception of AIO traffic is so bad that one search marketer quipped on X that ranking #1 on Google is the new place to hide a body, because nobody would ever find it there.

Google could be said to plagiarize content because AIO answers are rewrites of published articles that lack unique analysis or added value, placing AIO firmly within most people’s definition of a scraper spammer.

Featured Image by Shutterstock/Luis Molinero