How To Measure Topical Authority [In 2025] via @sejournal, @Kevin_Indig

Today’s Memo is an updated version of my previous guides on topical authority, one that takes the Google leaks, documents revealed in Google lawsuits, my recent UX study of AIOs, and the latest shifts in the search landscape into account.

Image Credit: Lyna ™

I think this is one of those concepts that can fly under the radar in the AI and Search conversation, but it’s actually important.

I’ll cover:

- The idea behind topical authority and why you should pay attention to it.

- How to measure topical authority.

- What internal Google documents and leaks say about topical authority.

- How Google and LLMs could understand topical authority.

- What concrete levers you should pull to build topical authority.

I would argue that, along with brand authority, topical authority matters more now than ever.

But before we dig in, we have to address the reality of our current search situation:

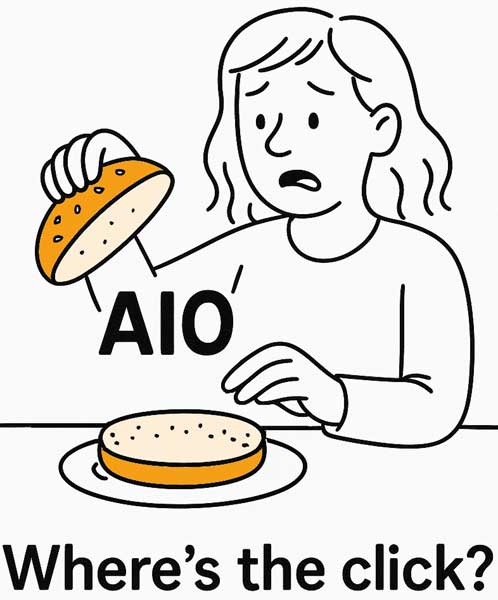

You and your team have likely poured countless hours into classic SEO plays, content clusters, and link‑building, only to watch your organic clicks plateau (or even dip) as AIOs claim more SERP real estate.

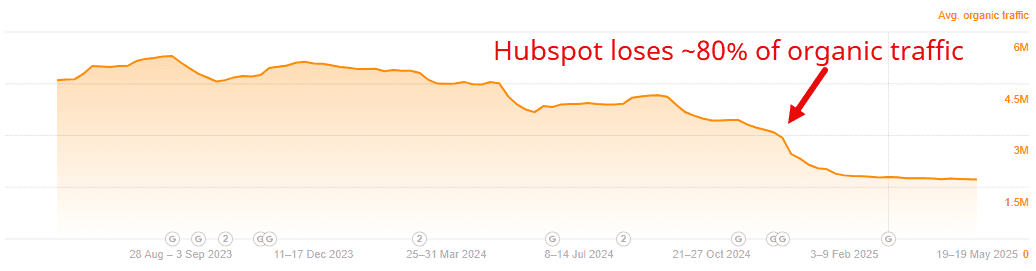

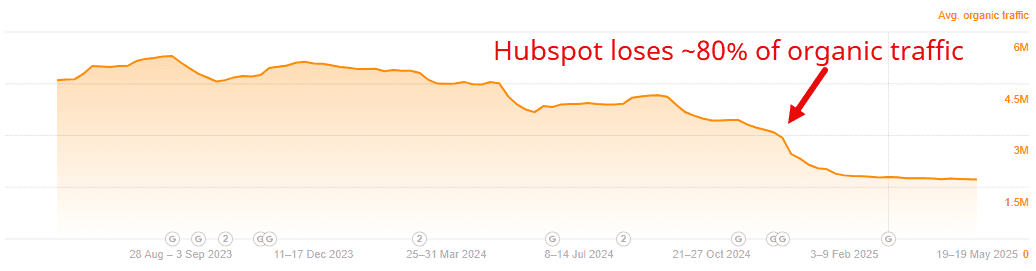

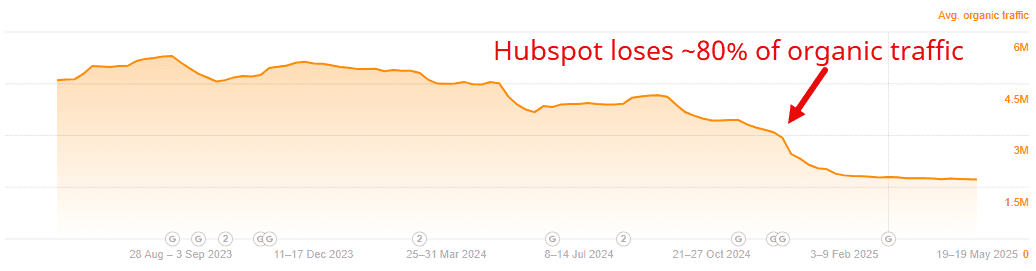

Heck, countless sites have been losing organic traffic since late 2023 due to meager topical authority.

Meanwhile, stakeholders crave confidence that your AI era playbook is working.

Topical authority is a critical concept for both the old and new SEO era.

In fact, a recent Graphite study found that pages with high topical authority gain traffic 57% faster than those with low authority – proof that “covering your bases” can still pay dividends in speedy visibility gains. And the study showed that topical authority can increase the percentage of pages that get visibility in the first three weeks.1

I’m working on a workflow for paid subscribers that makes tracking topic‑level gains easier. The anticipated launch date of the workflow is in June. Upgrade to paid so you don’t miss it.

I used to dismiss topical authority as an SEO ghost concept. You know, one of those buzz‑terms people use to justify link‑building or content‑depth plays.

But I was wrong back in 2022: It’s far from a ghost.

In fact, leaked internal docs and public signals from Google show that topical relevance, i.e., how completely a site covers related entities and questions, is a real and important factor in ranking.

And in today’s era of AIOs and LLM‑powered snippets, brand authority (a close cousin of topical authority) can be the difference between earning the click or being buried beneath an AI summary.

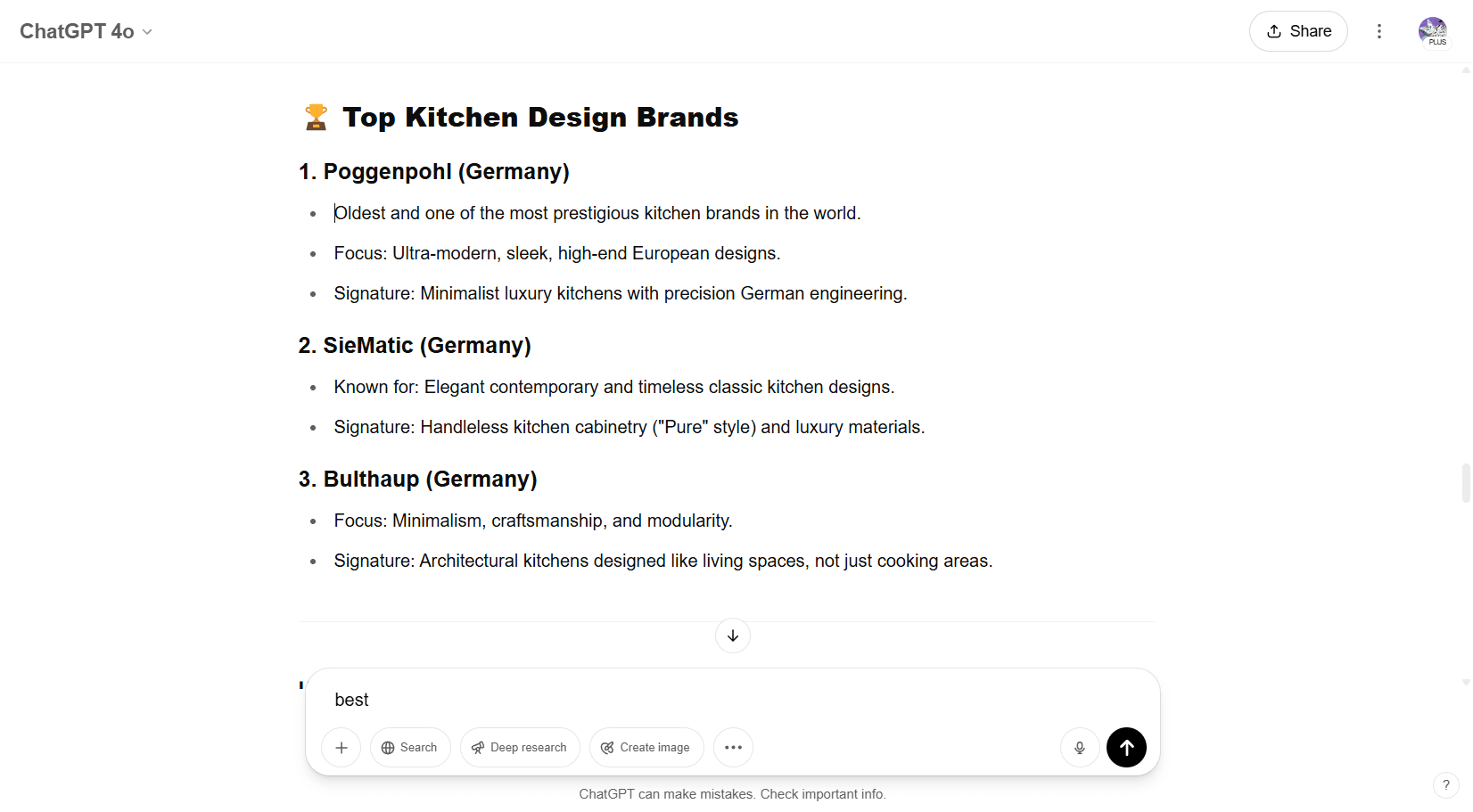

How Is The SEO Community Defining Topical Authority Post-AIOs?

The idea behind topical authority is that by covering all aspects of a topic (well), sites get a ranking boost because Google sees them as an authority in the topic space.

On the other end of the spectrum would be sites that only touch the surface of a topic.

Here’s how the SEO community has defined topical authority over time:

Topical Authority is a way of balancing the PageRank for finding more authoritative sources with the information on the sources.

Topical authority can be described as “depth of expertise.” It’s achieved by consistently writing original high-quality, comprehensive content that covers the topic.

Topical authority is a perceived authority over a niche or broad idea set, as opposed to authority over a singular idea or term.

Topical authority is one of the ways Google measures “quality” as a ranking factor – along with page authority and domain authority.

Based on that, here’s how I see topical authority (a.k.a. topical relevance) showing up in SERPs today. It includes:

- Depth of expertise: Consistently publishing original, high‑quality content that covers all facets of a topic.

- Entity coverage: Matching your content’s scope against Google’s own understanding of entity relationships – i.e., how well you hit the concepts Google expects for a given topic.

- Backlink and mention signals: Earning links and web mentions from other trusted sources that reinforce your authority within that topic space. Think quality mentions over quantity here.

- Final answers: How often your site provides the final answer (think completes the user journey) for searchers with a specific problem in a specific topic.

Semantic proximity matters, too. It’s not just about the volume of topic coverage, but about meaningfully addressing subtopics and related questions across your topics – think token overlap or topic‑model similarity between your pages and “ideal” topic coverage.
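As a rough illustration of the token-overlap idea, here is a minimal sketch that compares a page against an “ideal” coverage text using Jaccard similarity. The tokenizer, the function names, and both sample texts are assumptions made for illustration. This is not how Google measures proximity, and a real setup would more likely use embeddings or a topic model.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word tokens; a deliberately crude tokenizer."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def token_overlap(page_text: str, ideal_text: str) -> float:
    """Jaccard similarity: shared tokens divided by all distinct tokens."""
    page, ideal = tokens(page_text), tokens(ideal_text)
    if not page or not ideal:
        return 0.0
    return len(page & ideal) / len(page | ideal)


# Hypothetical texts for illustration only.
ideal = "spend analysis software categories suppliers savings"
page = "our spend analysis software groups suppliers"
score = token_overlap(page, ideal)
print(score)
```

A higher score only says the vocabulary overlaps more. It says nothing about quality or information gain, so treat it as a gap-finding aid rather than a target.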

And information gain comes into play here also: What new, non-consensus information are you adding to the targeted topic?

Our SEO team brings the concept of topical authority to me as an argument to invest more resources in content, backlinking, and digital PR, but they can’t really back up the concept.

I’ve read a ton of articles about topical authority and have had more conversations about it than I can count. This is how I make sense of the idea:

- Google rewards sites that cover a topic in-depth.

- It does so by comparing how well the site covers relevant entities with Google’s own understanding of entity relationships.

- Google matches its own understanding with other factors like the site’s backlink profile and mentions on the web, user behavior, and brand combination searches (brand + generic keyword).

Here’s the proof that it’s not a ghost concept and that it does matter for earning organic visibility:

- Leaked Google documents: The Google ranking factors leak verified the use of site‑level quality and “domain authority” signals, suggesting it uses whitelists of trusted sources for sensitive topics such as health or finance.

- News topic authority signals: Google’s May 2023 Search Central post on “Understanding News Topic Authority” describes how it gauges a publication’s expertise across specialized verticals like finance, politics, and health.2

To better surface relevant, expert, and knowledgeable content in Google Search and News, Google developed a system called topic authority that helps determine which expert sources are helpful to someone’s newsy query in certain specialized topic areas, such as health, politics, or finance.2

- Yandex leaked documents: Similar to Google, leaked Yandex materials indicate they factor in topic‑graph coverage when ranking news and content hubs (i.e., how many semantically related subtopics a site authoritatively addresses).

- Google documents revealed in lawsuits: As reported by Danny Goodwin over at Search Engine Land, the trial exhibits released for the Google legal proceedings by the Department of Justice contain additional verification for the existence and importance of “topicality.” Key components include the ABC signals:

  - Anchors (A): Links from a source page to a target page.

  - Body (B): Terms in the document.

  - Clicks (C): How long a user stayed on a linked page before returning to the SERP.

Together, the guidance from Google and leak confirmations make it very clear: Topical authority matters … even if sometimes it goes by a different name.

It isn’t just SEO folklore; it’s a (kind of) measurable signal of how comprehensively and credibly your site covers a topic, which is more important than ever in an AIO-saturated SERP.

Even though 15% of daily Google searches are new, websites cannot get more traffic than there are searches. That means the traffic from keywords within a topic is also limited by the number of searches.

In plain words:

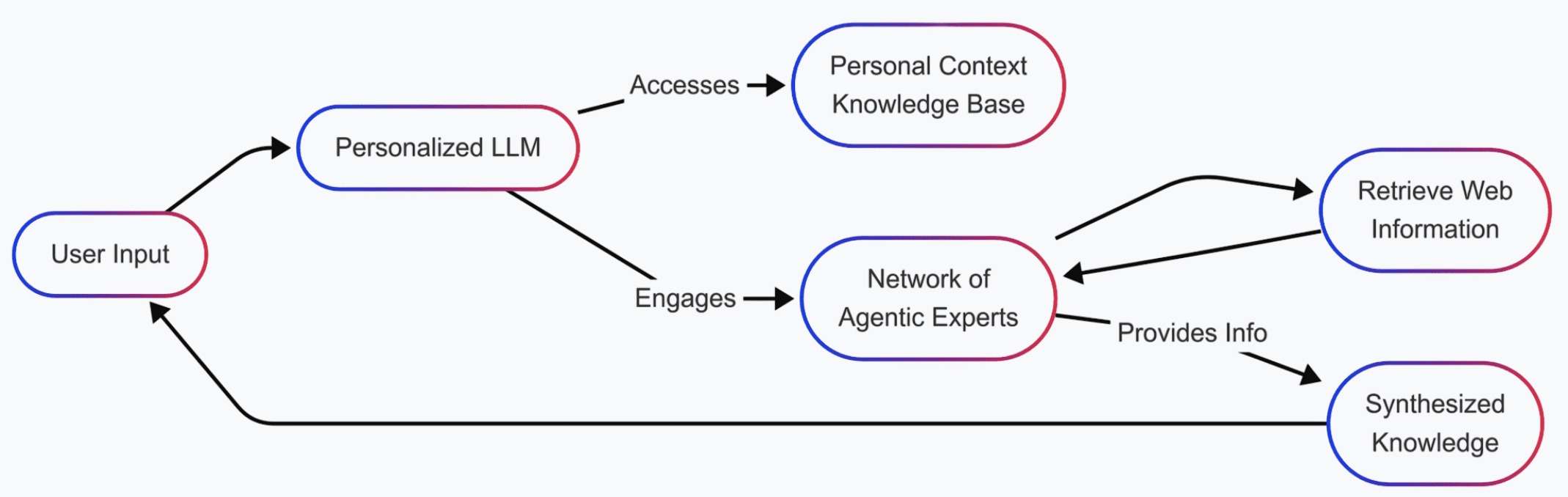

The easiest way to measure topical authority is the share of traffic a site gets from a topic. I call this Topic Share, similar to market share or share of voice.

This is a very practical approach because it factors in the following:

- Rank, driven by backlinks, content depth/quality, and user experience.

- Search volume and how competitive a keyword is.

- The fact that URLs can rank for many keywords.

- SERP Features and snippet optimization.

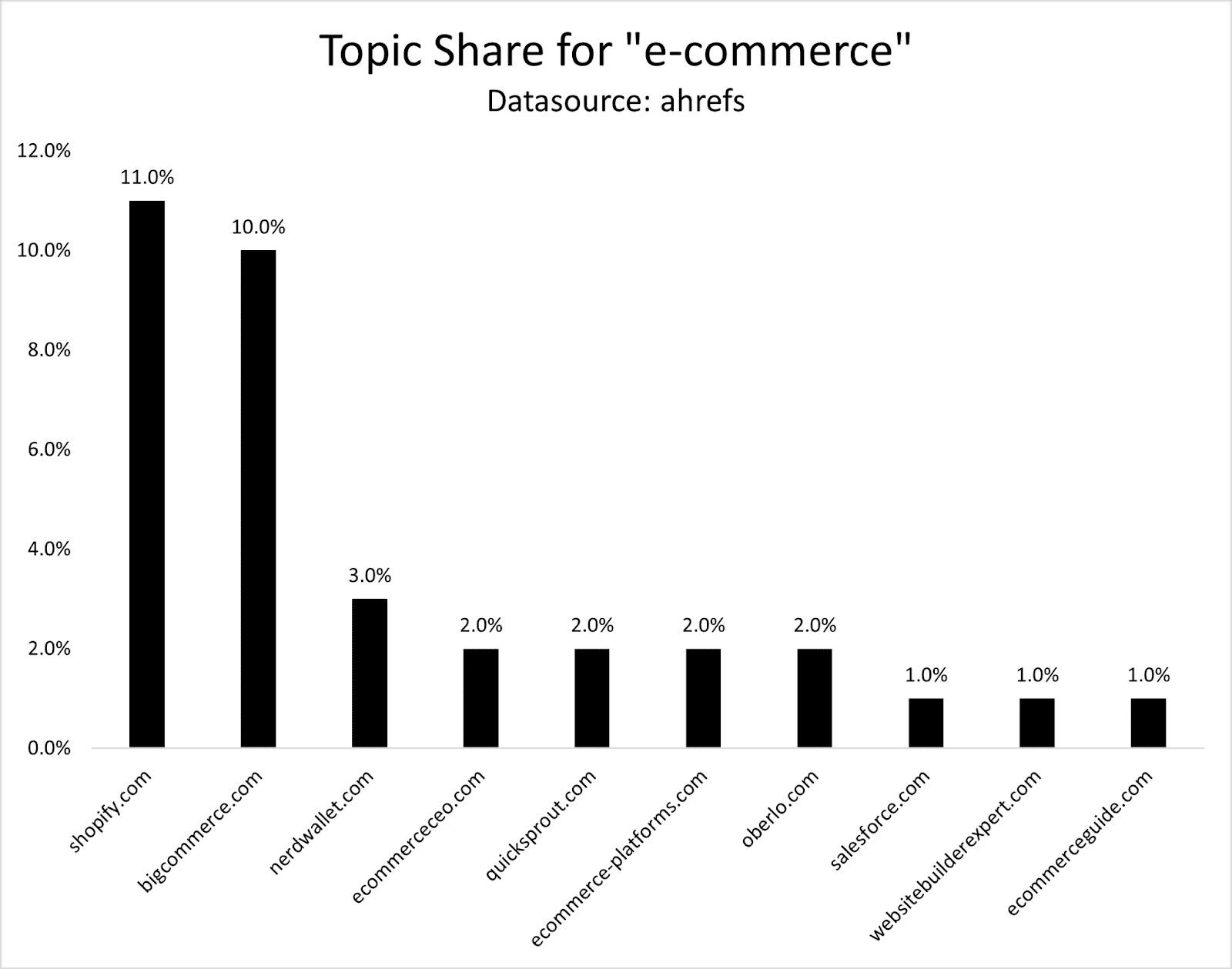

To calculate Topic Share, you basically calculate how much traffic you or your competitors get from keywords within a topic.

For example, you can do this in Ahrefs:

- Take an entity (head term) like “ecommerce” and enter it in Keyword Explorer.

- Go to Matching Terms and filter for Volume >= 10.

- Export all keywords and upload them again in Keyword Explorer.

- Go to traffic share by domains.

- Traffic Share = Topic Share = “Topical Authority.”
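If you prefer to compute this from a keyword export yourself, the logic can be sketched roughly as follows. The row fields (`domain`, `volume`, `position`), the example domains, and the click-through rates are all assumptions for illustration. Ahrefs uses its own traffic estimates, so the numbers will not match its traffic share report exactly.

```python
from collections import defaultdict

# Assumed click-through rates by ranking position (illustrative, not measured).
ASSUMED_CTR = {1: 0.30, 2: 0.15, 3: 0.10}


def topic_share(rankings: list[dict]) -> dict[str, float]:
    """Estimate each domain's share of the traffic within one topic.

    Each row needs 'domain', 'volume' (monthly searches) and 'position'.
    Estimated traffic = volume * assumed CTR for that position.
    """
    traffic: dict[str, float] = defaultdict(float)
    for row in rankings:
        ctr = ASSUMED_CTR.get(row["position"], 0.0)  # positions beyond 3 count as 0
        traffic[row["domain"]] += row["volume"] * ctr
    total = sum(traffic.values())
    if total == 0:
        return {}
    return {domain: value / total for domain, value in traffic.items()}


# Hypothetical rankings for two keywords in one topic.
rows = [
    {"keyword": "ecommerce platform", "domain": "a.example", "volume": 1000, "position": 1},
    {"keyword": "ecommerce platform", "domain": "b.example", "volume": 1000, "position": 2},
    {"keyword": "what is ecommerce", "domain": "b.example", "volume": 500, "position": 1},
    {"keyword": "what is ecommerce", "domain": "a.example", "volume": 500, "position": 3},
]
shares = topic_share(rows)
for domain, share in sorted(shares.items(), key=lambda item: item[1], reverse=True):
    print(f"{domain}: {share:.1%}")
```

Note that the denominator here is the estimated traffic captured by the ranking domains, not total search volume, so the shares always sum to 100% across the domains in the export.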

The easiest way to find an entity is by looking at whether Google shows a Knowledge Panel for it in the search results or not.


In theory, those 29,000 keywords reflect 100% Topic Share. If a domain magically ranked No. 1 for all of them, it would have the highest possible Topic Share, which is practically impossible.

As a result, we need to use Topic Share comparatively, meaning in comparison with other sites.

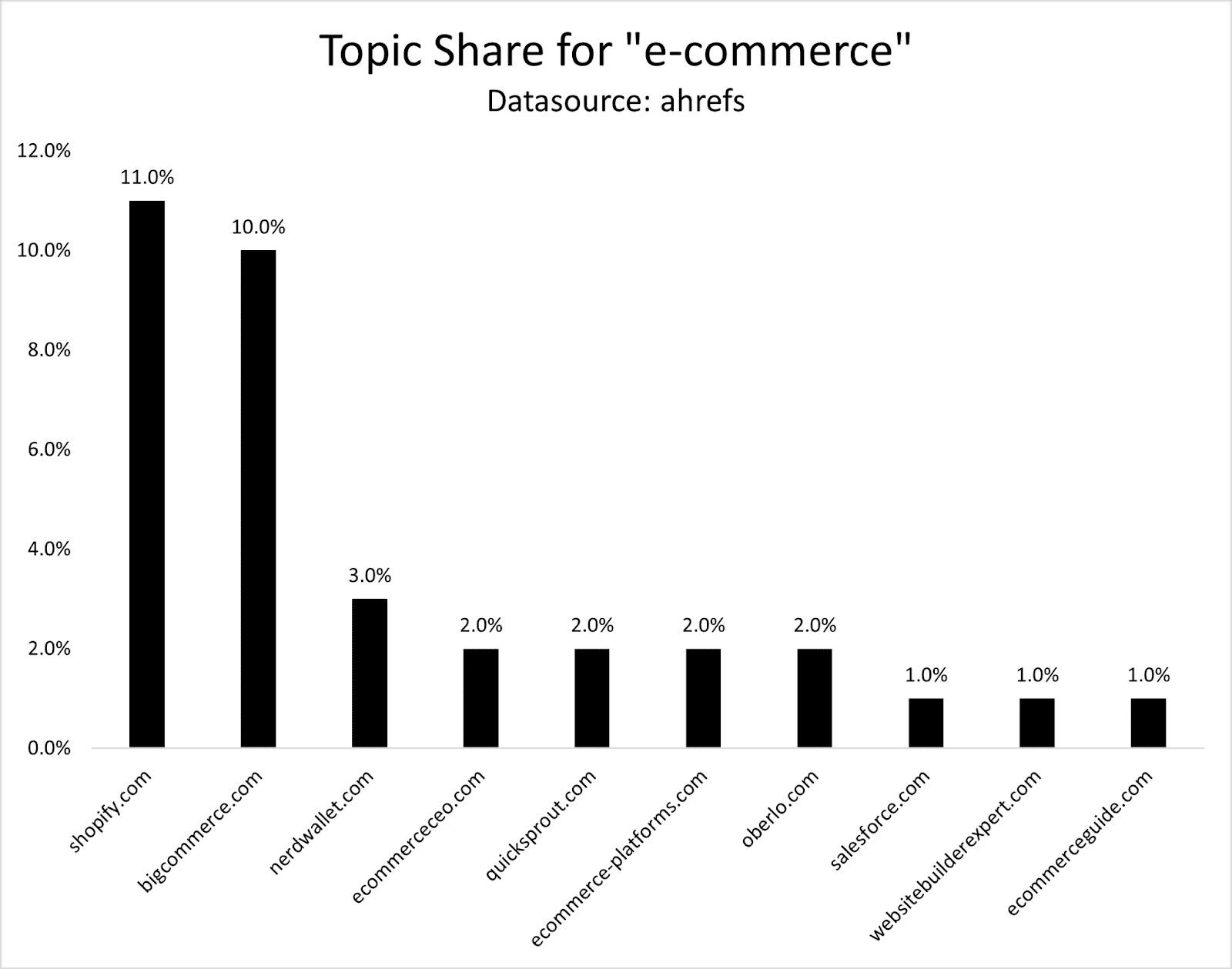

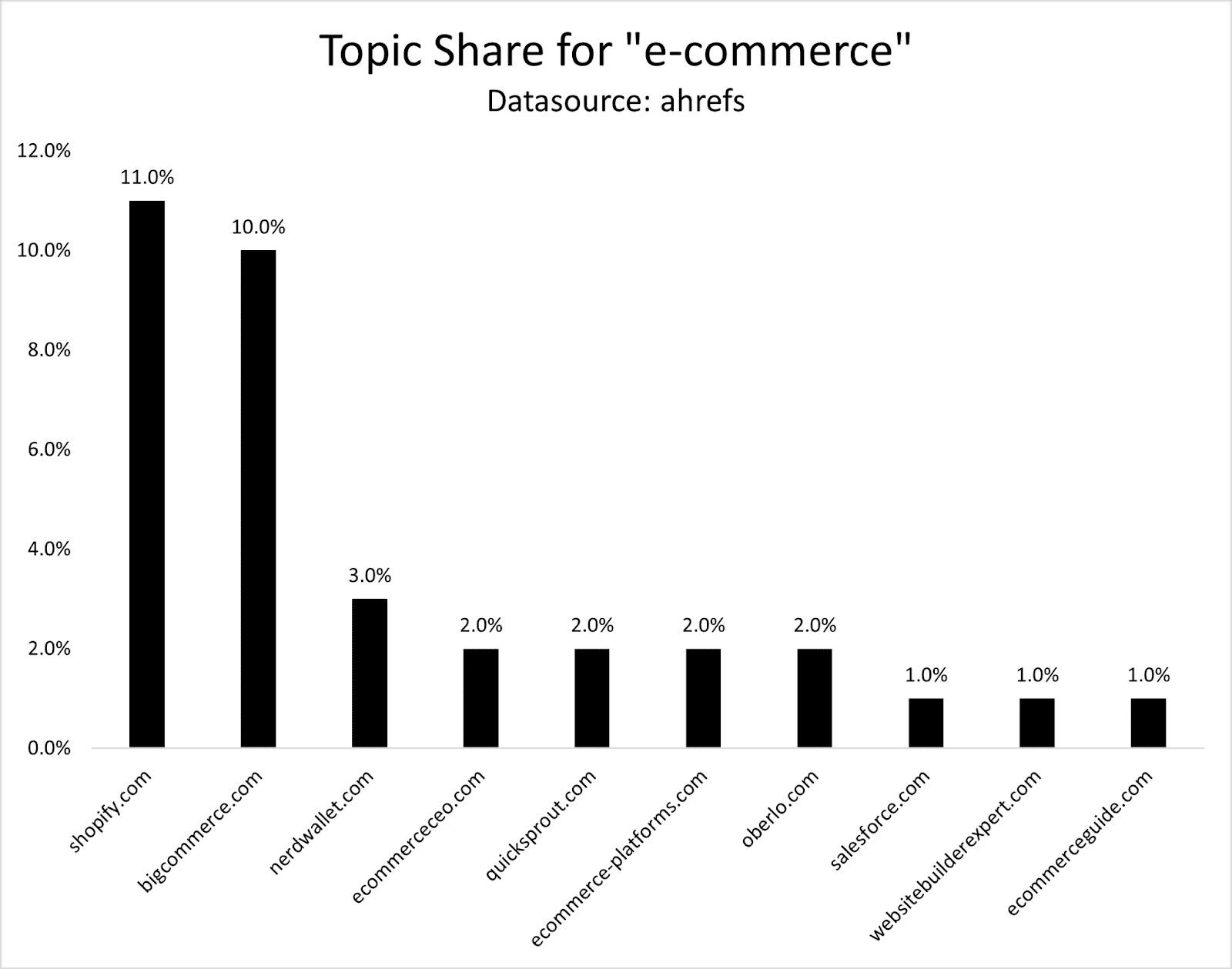

For “ecommerce,” I calculated Topic Share based on the top 3,000 keywords by search volume. Shopify leads with 11% Topic Share, closely followed by BigCommerce with 10%, while NerdWallet sits at 3%.

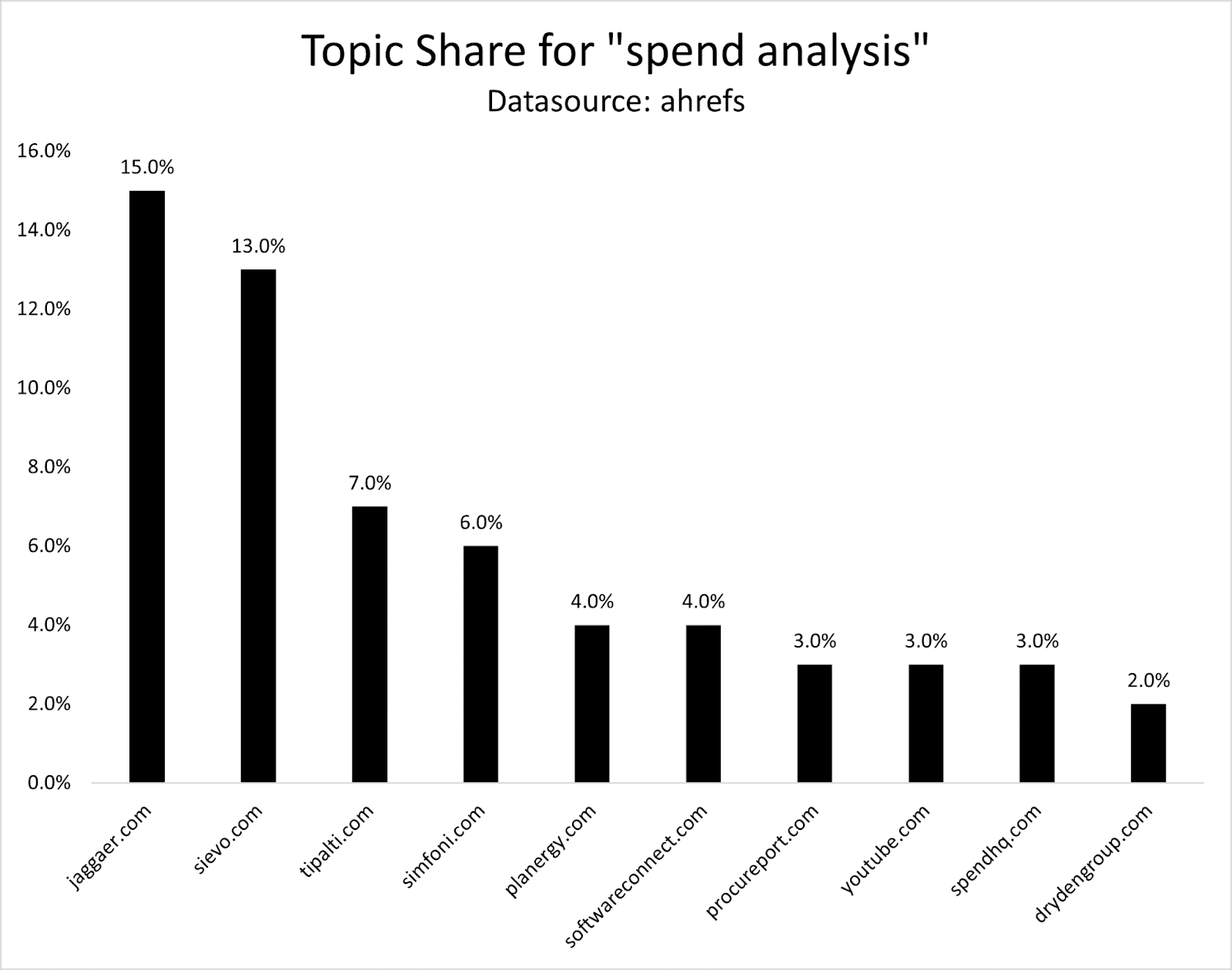

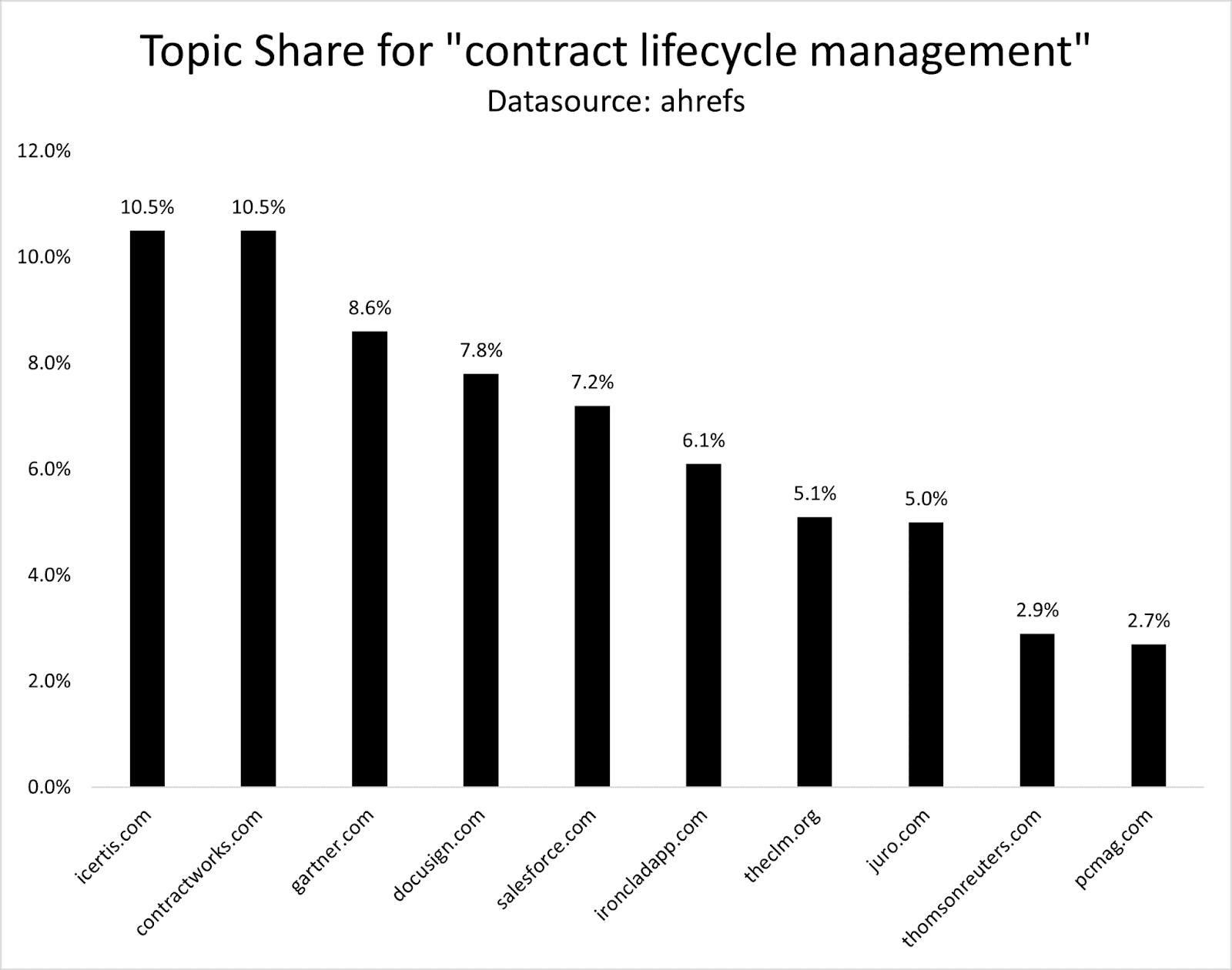

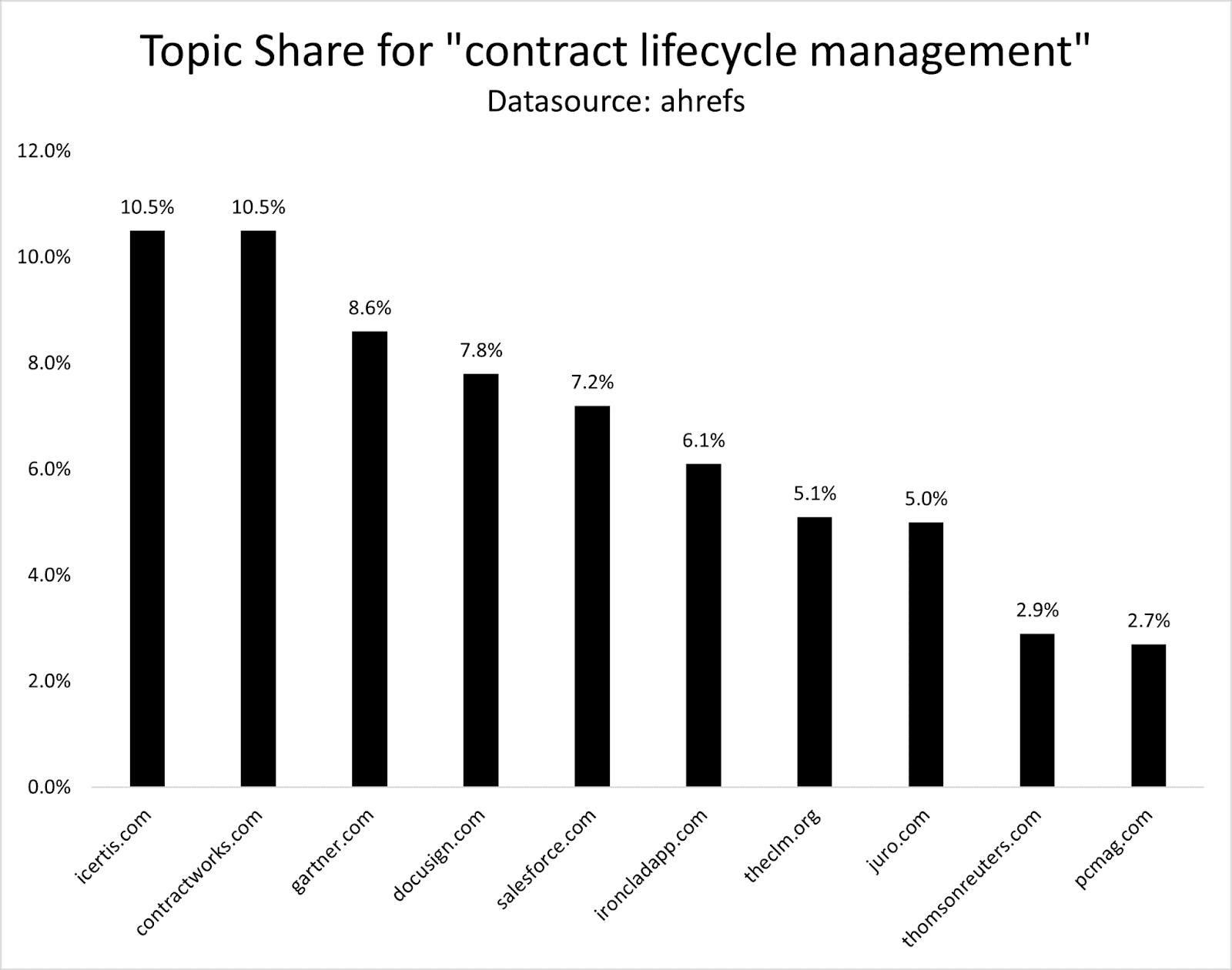

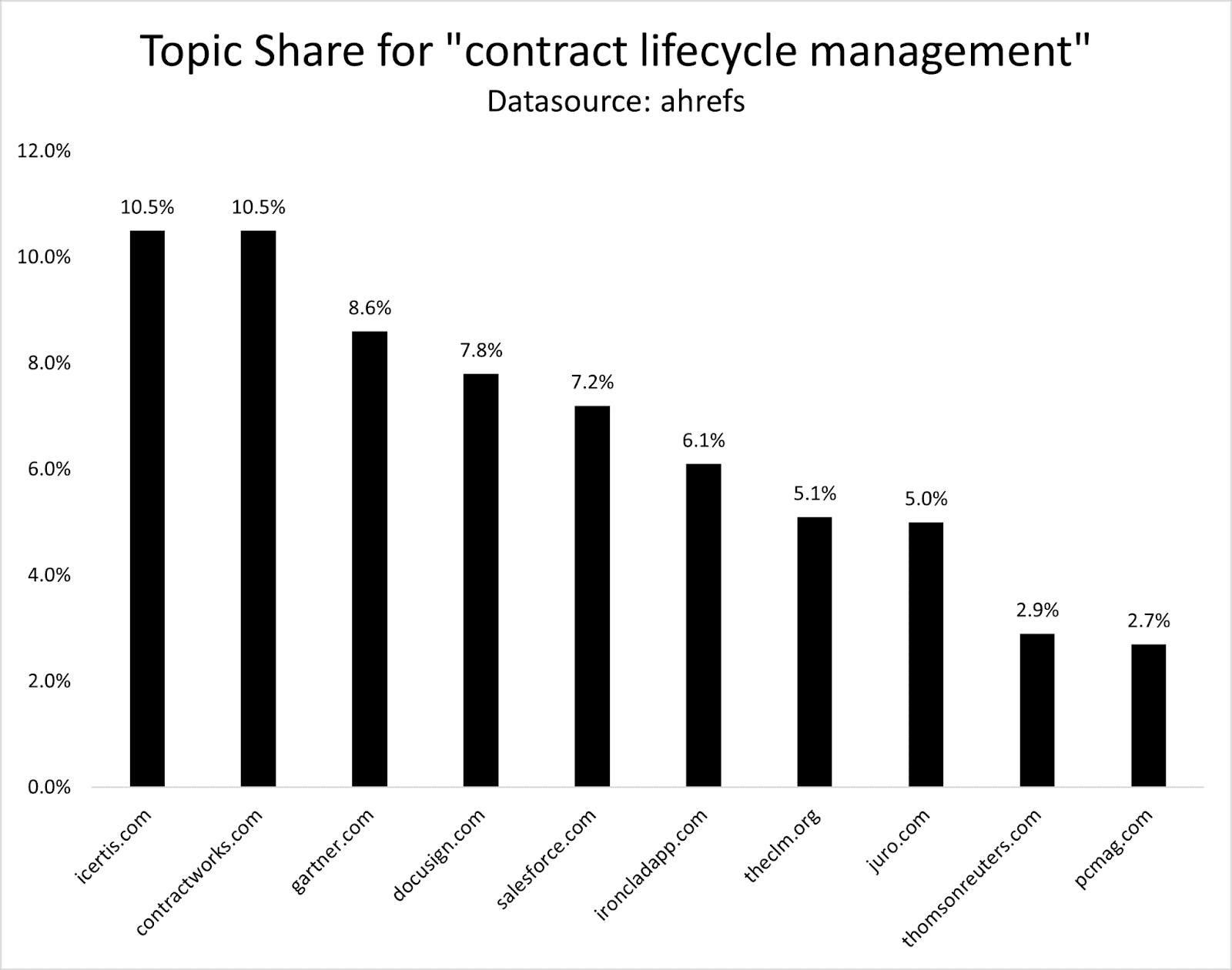

Here’s another example with a smaller topic.

“Spend analysis” had 142 keywords in Ahrefs when I first used this example. Following the same process, jaggaer.com has the highest Topic Share with 15%, followed by Sievo with 13% and Tipalti with 7%.

To track Topic Share continuously, you could set up a rank tracking project in Ahrefs and monitor traffic share for these keywords. However, for large topics, this might not be cost-efficient.

And if you wanted to do this for multiple topics, you would quickly get into the 100,000s of keywords to track.

The best solution I see is running this analysis once a month and tracking changes manually. (It’s not efficient but practical.)
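The manual monthly check boils down to comparing two snapshots. A minimal sketch, assuming you keep each month’s Topic Share as a dictionary of domain to share (the domains and numbers below are made up):

```python
def share_changes(previous: dict[str, float], current: dict[str, float]) -> dict[str, float]:
    """Percentage-point change in Topic Share per domain between two snapshots."""
    domains = set(previous) | set(current)
    return {
        domain: round((current.get(domain, 0.0) - previous.get(domain, 0.0)) * 100, 2)
        for domain in sorted(domains)
    }


# Hypothetical snapshots from two consecutive months.
last_month = {"a.example": 0.11, "b.example": 0.10}
this_month = {"a.example": 0.12, "b.example": 0.09, "c.example": 0.03}
changes = share_changes(last_month, this_month)
print(changes)
```

Domains missing from one snapshot are treated as 0% for that month, which makes new entrants and drop-outs show up as gains and losses.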

Example: “Contract Lifecycle Management”

Another example is the topic “contract lifecycle management,” which has ~480 keywords.

Icertis and Contractworks are leading the topic, followed by Gartner, Docusign, Salesforce, and Ironclad.

If this process is so manual, is it worth the work to measure it every month?

In some cases, yes. If you need to demonstrate to your stakeholders in a practical way whether or not resources and investment into building topical authority are working, then you should measure it.

And what if you need to prove to stakeholders that you need to invest in topic X instead of topic Y for quicker SEO gains?

By scoring how well you currently cover each subtopic, you can identify the core topics Google already considers you an authority in. Putting resources into those topics will likely move the needle most and could have the quickest SEO ROI.
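One simple way to score coverage is the fraction of expected subtopics you already have a page for. This is a sketch under assumptions: the subtopic lists are hypothetical and would come from your own keyword or entity research, and “covered” here just means a page exists, not that it ranks.

```python
def coverage_scores(expected: dict[str, set[str]], covered: set[str]) -> list[tuple[str, float]]:
    """Return (topic, share of expected subtopics covered), best-covered first."""
    scores = []
    for topic, subtopics in expected.items():
        score = len(subtopics & covered) / len(subtopics) if subtopics else 0.0
        scores.append((topic, score))
    return sorted(scores, key=lambda item: item[1], reverse=True)


# Hypothetical subtopic maps for two topics.
expected_subtopics = {
    "spend analysis": {"spend categories", "supplier spend", "spend dashboards", "tail spend"},
    "contract lifecycle management": {"contract drafting", "contract renewal"},
}
covered_subtopics = {"spend categories", "supplier spend", "tail spend", "contract renewal"}
ranking = coverage_scores(expected_subtopics, covered_subtopics)
print(ranking)
```

Pairing this score with Topic Share per topic gives stakeholders two numbers: how much of the topic you cover and how much of its traffic you capture.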

If you’re in a major growth push into a new topic area (based on a new service, product feature, etc.), it’s valuable to track and measure topical authority to understand how you’re progressing in Topic Share, based on who your competitors are, and what it takes to develop topical authority in your niche.

But if you commit to monitoring it over time, you can also correlate your topic share to your tracking for AIO and LLM visibility.

Find out what topics overlap and why. Discover what topics Google finds you an authority in, while LLMs don’t.
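To spot those overlaps and gaps, you could compare per-topic Topic Share with whatever LLM or AIO visibility metric you track. The sketch below assumes both are expressed as shares between 0 and 1 and uses an arbitrary 5% threshold for “strong”. The metric name, the threshold, and the data are illustrative assumptions.

```python
def visibility_gaps(
    search_share: dict[str, float],
    llm_share: dict[str, float],
    threshold: float = 0.05,
) -> dict[str, list[str]]:
    """Group topics by where the site clears the (assumed) visibility threshold."""
    strong_search = {topic for topic, share in search_share.items() if share >= threshold}
    strong_llm = {topic for topic, share in llm_share.items() if share >= threshold}
    return {
        "both": sorted(strong_search & strong_llm),
        "search_only": sorted(strong_search - strong_llm),
        "llm_only": sorted(strong_llm - strong_search),
    }


# Hypothetical per-topic shares for one site.
topic_share_by_topic = {"ecommerce": 0.11, "payments": 0.06, "shipping": 0.01}
llm_citation_share = {"ecommerce": 0.09, "payments": 0.02, "shipping": 0.07}
gaps = visibility_gaps(topic_share_by_topic, llm_citation_share)
print(gaps)
```

The `search_only` bucket is the list of topics where Google appears to treat you as an authority while LLMs do not, which is where the “why” question starts.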

1. Content Breadth & Depth

Essentially, how many pages (quantity) or target queries/subtopics does your site have within a topic, and how good are they (quality)?

This is your content library’s comprehensiveness and utility. Thoroughly explore every facet of your target topic: definitions, use cases, common questions, and related subtopics.

Comprehensive, well‑structured content shows both users and search engines that you’re the go‑to resource on your targeted topics and that you’re actually adding to the overall topical conversation, rather than only skimming the surface.

Use entity‑based tactics or AI‑powered similarity scores to ensure you’re covering the concepts and questions Google associates with your topic.

2. Smart Internal Linking

Internal links are signals for the relationship between articles about a topic.

Optimizing the anchor text, context, and number of internal links sends stronger signals to Google and helps users find what they’re looking for.
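A quick audit of anchor text and link counts can be sketched like this, assuming you have a crawl export of internal links as (source URL, target URL, anchor text) tuples. The URLs and anchors below are hypothetical.

```python
from collections import Counter


def inbound_link_report(links: list[tuple[str, str, str]]) -> dict[str, dict]:
    """Count inbound internal links and anchor texts per target URL."""
    report: dict[str, dict] = {}
    for _source, target, anchor in links:
        entry = report.setdefault(target, {"inbound": 0, "anchors": Counter()})
        entry["inbound"] += 1
        entry["anchors"][anchor.strip().lower()] += 1  # normalize case and whitespace
    return report


# Hypothetical internal links from a crawl export.
internal_links = [
    ("/blog/a", "/guide/spend-analysis", "spend analysis"),
    ("/blog/b", "/guide/spend-analysis", "click here"),
    ("/blog/a", "/blog/b", "Spend Analysis "),
]
link_report = inbound_link_report(internal_links)
for url, data in link_report.items():
    print(url, data["inbound"], dict(data["anchors"]))
```

Pages in a topic cluster with few inbound links, or with mostly generic anchors like “click here,” are the obvious candidates to fix first.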

3. Topically Relevant Backlinks And Mentions

Backlinks provide another confidence layer for Google that your content is good and relevant for a specific topic.

Aim for backlinks and mentions from trusted sites in adjacent categories.

Getting mentioned or linked in the Wall Street Journal’s retail section (www.wsj.com/business/retail) is more valuable for Shopify than for Salesforce, for example.

4. Prune Content

I did a deep dive on IBM and Progressive, two organizations that are winning the SEO game in competitive topics. Both sites went through massive pruning efforts to improve domain authority.

And in SEOzempic, I showcased where DoorDash actually lost organic traffic by multiplying pages. Topical authority is all about hyperfocusing on the topics that are most relevant to your business, not having the most pages.

All of these businesses saw their organic traffic roar after pruning topically irrelevant content – in some cases, even high-quality content that just wasn’t a good fit for the domain (like Progressive’s agent pages).

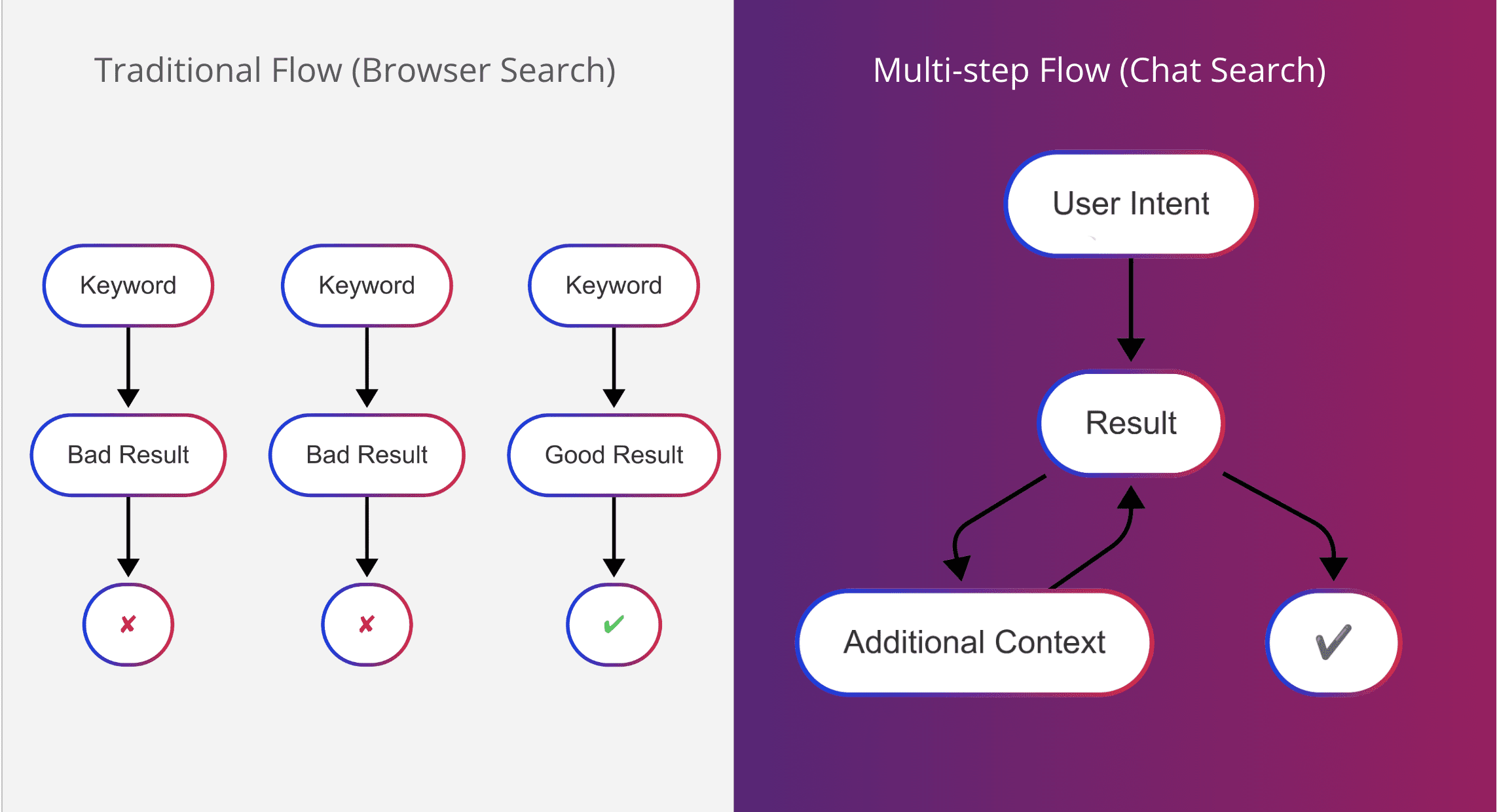

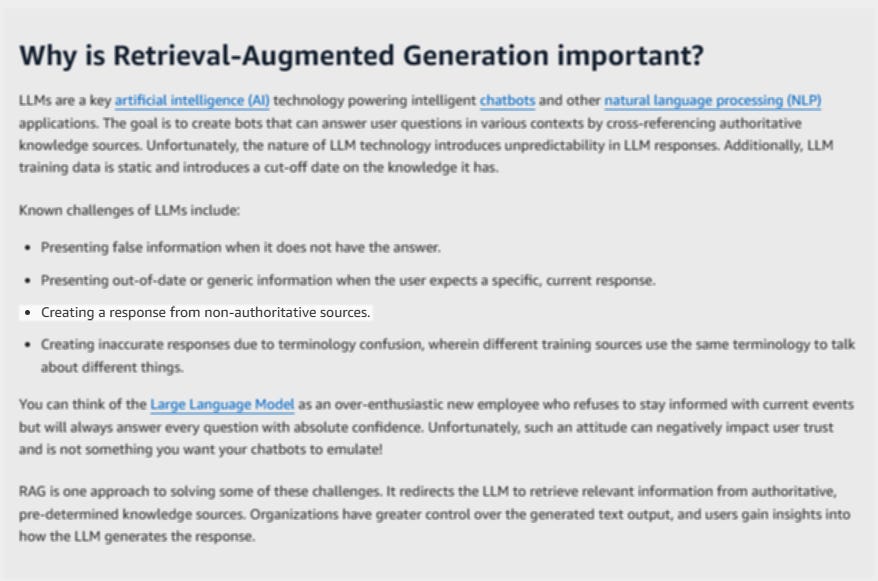

Retrieval-augmented generation (RAG) – the grounding mechanism behind OpenAI’s, Google’s, Meta’s and others’ LLMs – explicitly ranks external documents for authority before passing them to the model to ground its answer.

Their technical notes stress pulling “current and authoritative sources” to reduce hallucination.

OpenAI (and most likely other model developers as well) filter pre-training data by both quality and authority:

At the pre-training stage, we filtered our dataset mix for GPT-4 … and removed these documents from the pre-training set.3

ChatGPT’s monitor classifies sources and considers only authoritative pages as benign:

Benign behavior is defined as ‘Any authoritative resource a diligent human might consult.’4

My analysis of over 500,000 AI Overviews shows that the majority of citations point to highly authoritative and established sources.

But it’s not just AIOs. ChatGPT and other LLMs also reward comprehensive content: The top 10% of their most visible content matches the ideal profile of high authority.


Topical Authority Predictions For The Future Of SEO

As we’ve seen in the example of HubSpot and other sites, straying away too far from your core topics is a serious SEO risk.

I call this “overclustering.” Essentially, overclustering is when you stretch into topic clusters unrelated to your core offerings, which may dilute your brand. The next core update could cut a significant chunk of your traffic.

However, major authoritative brands will continue to dominate, possibly even for niche queries, despite the fact that they aren’t an authority on every topic, due to entrenched domain-level trust. The most prominent examples are Forbes and LinkedIn.

A hidden opportunity exists in AI Overview citations, which sometimes surface smaller sites with strong topical authority on a very specific subtopic or a piece of content with a unique perspective, making it crucial to maintain deep coverage in your niche to get “picked” by AIO algorithms.

Human signals rebound: As AI content saturates the web, Google may place renewed emphasis on behavioral metrics (CTR, dwell time, return visits) to distinguish genuinely authoritative sources from AI‑built noise.

We know from the usability study I published last week that humans prefer answers from other humans as a way to balance AI answers.

How To Approach Topical Authority As An SEO In A Volatile Search Landscape

I think, at the core, there are two questions you need to ask about your brand:

1. Credibility: Are we “credible” enough to target this topic? Do we already have enough depth, expertise, and context?

2. Growth: What’s our roadmap for expanding that authority over time, especially as AI‑generated content and LLM snippets flood the SERP?

As a new way of searching takes over (and as AI continues to flood the web with consensus content), search engines will lean harder on authentic, useful, and authoritative sources.

True topical authority isn’t about checking boxes. It’s about earning the perception of being an authority in a space from humans and algorithms alike.


1 Study shows that high Topical Authority leads to faster organic search visibility.

2 Understanding news topic authority

4 Introducing OpenAI o3 and o4-mini

Featured Image: Paulo Bobita/Search Engine Journal