Standby As Google Cannibalizes Itself (While Also Devouring All Of Us) via @sejournal, @Kevin_Indig

In 2023, I wrote about a provocative “what if”: What if Google added an AI chatbot to Search, effectively cannibalizing itself?

Fast-forward to 2025, and it’s no longer hypothetical.

With AI Overviews (AIOs) and AI Mode, Google has not only eaten into its own search product but has also taken a big bite out of publishers.

Cannibalization is usually framed as a risk. But in the right circumstances, it can be a growth driver – or even a survival tactic.

In today’s Memo, I’m revisiting product cannibalization through a fresh AI-era lens, including:

- What cannibalization really is (and why it’s not always bad).

- Iconic examples from Netflix, Apple, Amazon, Google, and Instagram.

- How Google’s AI shift meets the definition of self-cannibalization – and where it doesn’t.

- The four big marketing implications if your brand cannibalizes itself in the AI-boom landscape (for premium subscribers).

Image Credit: Kevin Indig


Today’s Memo is an updated version of my previous guide on product cannibalization.

Previously, I wrote about how adding an AI chatbot to Google Search would mean that Google would be cannibalizing itself – which only a few companies in history have successfully accomplished.

In this updated Memo, I’ll walk you through successful product cannibalization examples while we revisit how Google has cannibalized itself through a refreshed lens.

Because … Google has effectively cannibalized itself with the incorporation of AI Overviews (AIOs) and AI Mode, but they haven’t found a way to monetize them yet.

And publishers and brands are suffering as a result.

So who wins here? (Does anyone?) Only time will tell.

Product cannibalization is the replacement of a product with a new one from the same company, typically expressed in sales revenue.

Even though most definitions say that cannibalization occurs when revenue is flat while two products trade market share, there are a number of examples that show revenue can grow as a result of cannibalization.

Product cannibalization, or market cannibalization, is often seen as something bad – but it can be good or even necessary.
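To make the definition concrete, here is a minimal Python sketch of the distinction between cannibalization with flat revenue and cannibalization with growth. It is an illustration under stated assumptions, not a standard accounting method: the function name `describe_cannibalization`, its parameters, and the outcome labels are hypothetical, and the numbers in the usage note are made up purely for demonstration.

```python
def describe_cannibalization(old_before, old_after, new_after):
    """Compare total revenue before and after a new product launch.

    All inputs are revenue figures in the same currency and period.
    Hypothetical helper for illustration only.
    """
    # Revenue the old product lost after the launch (never negative).
    lost_from_old = max(old_before - old_after, 0.0)
    total_before = old_before
    total_after = old_after + new_after

    # Revenue lost by the old product per unit of new-product revenue.
    rate = lost_from_old / new_after if new_after > 0 else 0.0

    if lost_from_old == 0:
        outcome = "no cannibalization"
    elif total_after > total_before:
        outcome = "cannibalization with growth"
    else:
        outcome = "cannibalization without growth"

    return {
        "rate": rate,
        "total_change": total_after - total_before,
        "outcome": outcome,
    }
```

For example, `describe_cannibalization(100.0, 60.0, 70.0)` describes an old product that drops from 100 to 60 while the new one brings in 70: the old product loses revenue, but the total still grows by 30, so the outcome is "cannibalization with growth".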

Let’s consider a few examples of product cannibalization you’re likely already familiar with:

- Hardware.

- Retail.

- SaaS/Tech.

Hardware companies, for example, need to bring out better and newer chips on a regular basis. The lifecycle of AI training chips is often less than a year because new architectures and higher processing capabilities quickly make the previous generation obsolete.

Right now, chips are the hottest commodity in tech – companies building and training AI models need them in massive quantities, and as soon as the next generation is released, the old one loses most of its value.

As a result, chipmakers are forced to cannibalize their own products, advancing designs to stay competitive not only with rival manufacturers but also with their own previous breakthroughs.

But there are stark differences between cannibalization in retail and tech.

Retail cannibalization is driven by seasonal changes or consumer trends, while tech product cannibalization is primarily a result of progress.

In fashion, for example, consumers prefer this year’s collection over last season’s. The new collections cannibalize old ones.

In tech, new technology leads companies to replace old products.

New PlayStation consoles, for example, significantly replace sales from older ones – especially since they’re backward compatible with games.

Another example? The growth of the headless content management system (CMS), which increasingly replaces the coupled CMS and pushes content management providers to offer new products and features.

Netflix made several product pivots in its history, but two stand out the most:

- The switch from DVD rental to streaming, and

- The move from subscription-only memberships to ad-supported revenue.

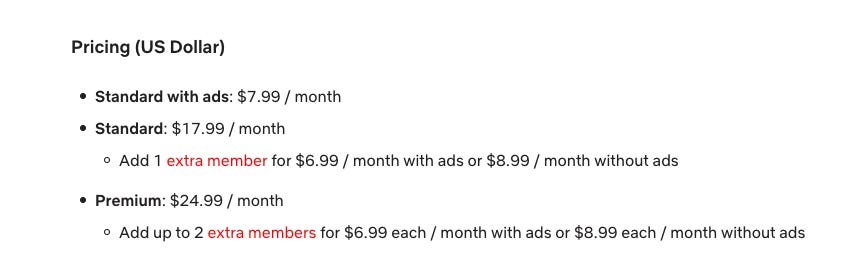

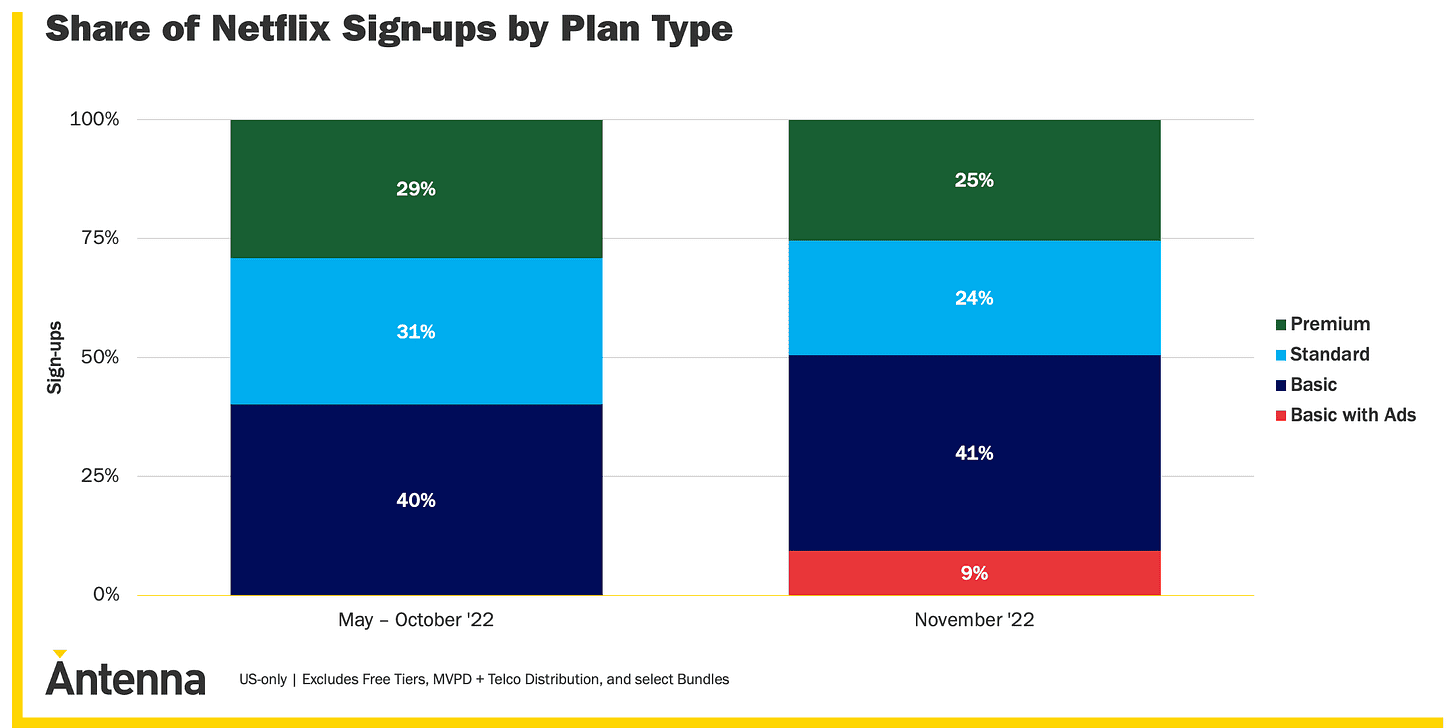

On November 3, 2022, Netflix launched an ad-supported plan for $6.99/month on top of its standard and premium plans. (It has since increased to $7.99/month. See image below.)

During the pandemic, Netflix’s subscriber numbers skyrocketed, but they came back to earth like Falcon 9 when Covid receded: Enter the “Basic with ads” subscription that promoted retention.

Another challenge for Netflix? Competitors. Lots of them – and with legacy media histories.

Initially, the strategy of creating original content and making the experience seamless across many countries resulted in strong growth.

But when competitors like HBO, Disney, and Paramount launched similar products with original content, growth slowed down.

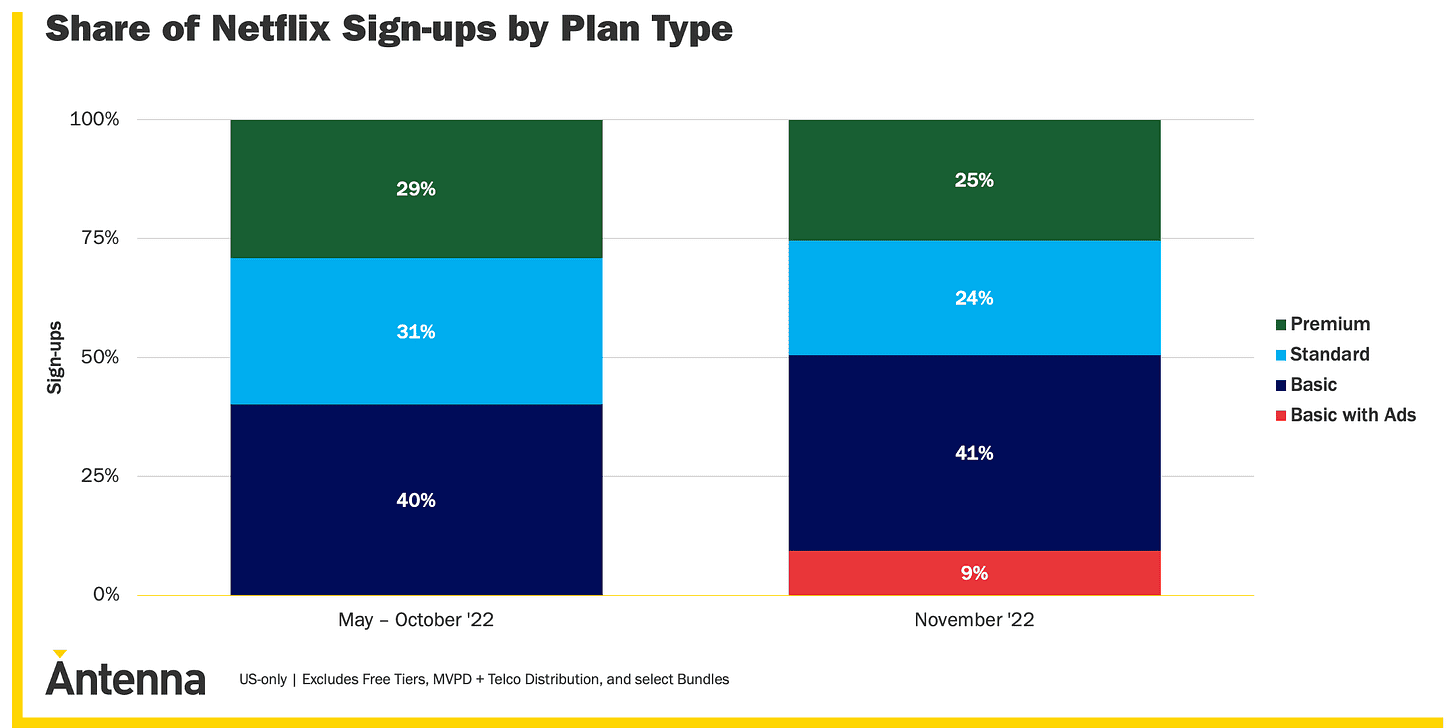

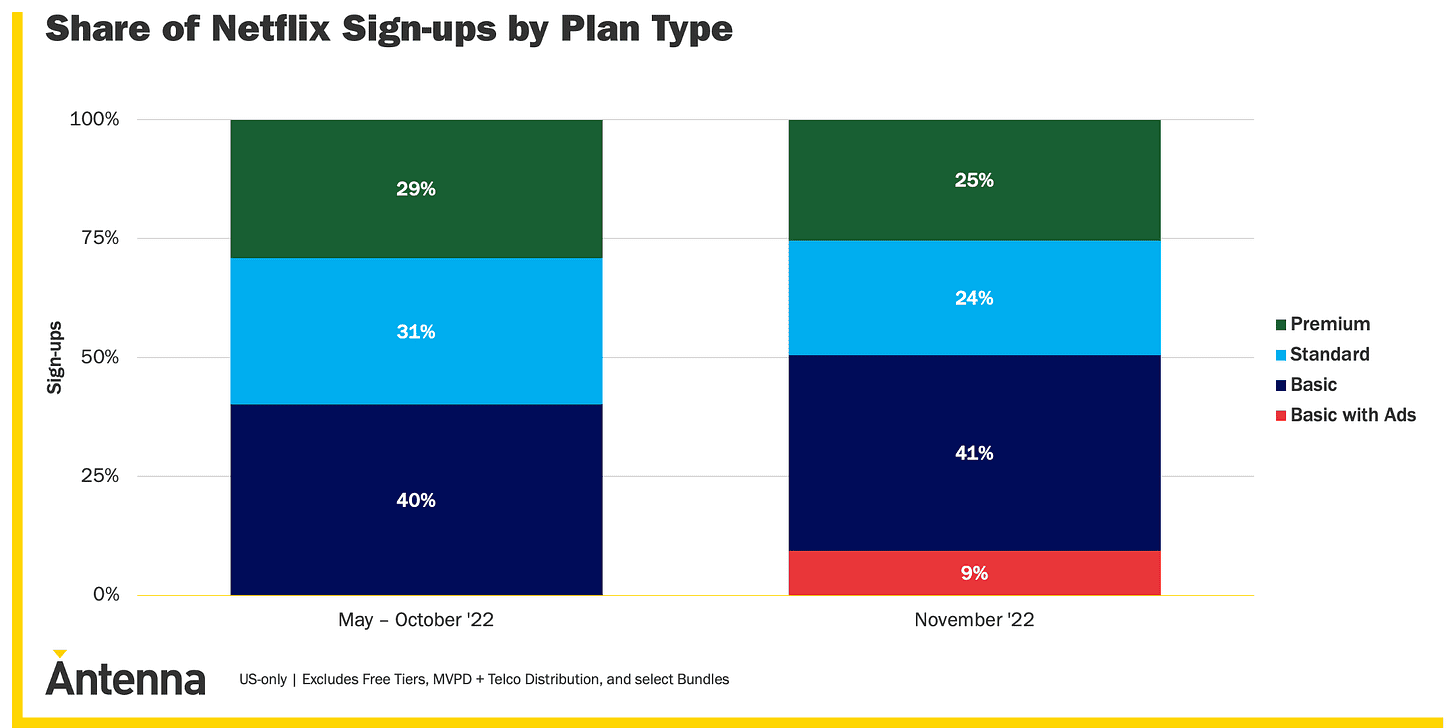

When Netflix launched the ad-supported plan, only 0.1% of existing users made the switch, but 9% of new users chose it (see below).

A look at other streaming platforms suggests the share will increase over time. Here’s a quick look at percentages of subscribers on ad-supported plans across platforms:

- Hulu: 57%.

- Paramount+: 44%.

- Peacock: 76%.

- HBO Max: 21%.

However, Netflix’s new plan is not technically considered product cannibalization but partial cannibalization based on price.

The product is the same, but through the new plan, it’s now accessible to a new customer segment that previously wouldn’t have considered Netflix.

Additionally, it prevents an existing customer segment from churning since the recession shuffles the spending behavior of customer segments.

We can conclude that the new ad-supported Netflix plan is not the same type of cannibalization as its streaming service.

In 2007, internet connections became strong enough to open the door to streaming. Netflix was not the first company to provide movie streaming, but the first one to be successful at it.

The company paved the way by incentivizing DVD rental customers to engage online, for example, with rental queues on Netflix’s website. But ultimately, the pivot was the result of technological progress.

Another product that saw the light of day for the first time in 2007?

The iPhone.

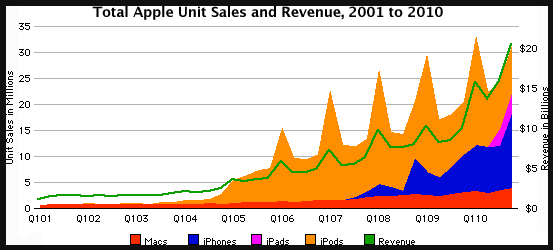

When it launched, the iPhone had all the features of the iPod and more, making it a case of full product cannibalization.

As a result, the share of revenue from the iPod significantly decreased once the iPhone launched (see image below).

Even though you could argue it’s a “regular case” of market cannibalization when looking at revenue streams from each product, it was a technological step-change instead of partial cannibalization based on pricing.

However, big steps in technology don’t always lead to a desired replacement of an old product.

Take the Amazon Kindle, for example.

Launched in 2007 – just like Netflix’s streaming product and the iPhone (something was up that year) – Amazon brought its new ebook reader, Kindle, to market.

It made such an impact that people predicted the death of paper books. (And librarians everywhere laughed while booksellers braced themselves.)

But over 10 years later, ebooks stabilized at 20% market share, while print books captured 80%.

The reason is that publishers got into pricing battles with Amazon and with Apple, which had also started to play an important role in the ebook market. (It’s a long story, but you can read about it here.)

Amazon attempted to cannibalize its core business (books) with the Kindle (ebooks), but couldn’t make product pricing work, which resulted in ebooks often being more expensive than print editions. Yikes.

The technology changed, but consumers weren’t incentivized to use it.

Let’s look at two final examples here. These two companies acquired or copied competitors to control partial cannibalization:

- YouTube videos are technically better answers to many search queries than web results. Google saw this very early on and smartly acquired YouTube. Video results took some time to fill more space in the Google SERP, even though they technically cannibalize web results. But today, they’re often some of the most visually impactful results (and often the first results) that searchers see.

- Instagram saw the success of Snapchat stories and decided to copy the feature in order to mitigate competitor growth. Despite the cannibalization of regular Instagram posts, net engagement with Stories was greater. (And speaking of YouTube, you could argue that YouTube Shorts follow the same principle.)

With all this in mind, we can say there is full and partial cannibalization based on how many features a new product replaces.

Pricing changes, copied features, or acquisitions lead to partial cannibalization that doesn’t result in the same revenue growth as full cannibalization.

Full cannibalization requires two conditions to be true:

- The new product must be built on a technological step change, and

- Customers need to be incentivized to use it.

With this knowledge foundation in place, let’s examine the shifts in the Google Search product over the last 12-24 months.

Let’s apply these product cannibalization principles to the case of Google vs. ChatGPT & Co.

In the original content of this memo (published in 2023), I shared the following:

If Google were to add AI to Search in a similar way as Neeva & Co (see previous article about Early attempts at integrating AI in Search), the following conditions would be true:

- AI Chatbots are a technological step-change.

- Customers are incentivized to use AI Chatbots because they give quick and good answers to most questions.

However, not all conditions are true:

- AI Chatbots don’t provide the full functionality of Google Search.

- It’s not cheaper to integrate an AI Chatbot with Search.

I’ve been clear about my hypothesis for a while now. As I shared in my 2025 Halftime Report:

I personally believe that AI Mode won’t launch [fully in the SERP] before Google has figured out the monetization model. And I predict that searchers will see way fewer ads but much better ones and displayed at a better time.

And I also highlighted this in “Is AI cutting into your conversions?”:

Google won’t show AI Mode everywhere, because adoption is generational (see the UX study of AIOs for more info). I think AI Mode will launch at a broader scale (like showing up for more queries overall) when Google figures out monetization.

Plus, ChatGPT is not yet monetizing, so advertisers go to Google and Meta – for now. And that’s my hypothesis as to why Google Search is continuing to grow.

Keep in mind, to successfully cannibalize your existing product, you need customers to want to use the new one. And according to a recent report from Garrett Sussman over at iPullRank, over 50% of users who tried Google’s AI Mode once didn’t return. [Source] (So it seems Google’s still figuring out the incentivizing part.)

Even with the advancements we’ve seen in the last six to 12 months with AI models – and the inclusion of live web search and product recommendations into AI chats – I’d argue that they’re useful for information-driven or generative queries but lack the databases needed to give good answers to product or service searches.

Let’s take a look at an example:

If you input “best plumber in Chicago” or “best toaster” into ChatGPT, I’d argue you’d actually get lower-quality results – for now – than if you input the same queries into Google. (Go try it for yourself and let me know what you find. Amanda Johnson hops in below with a walk-through to illustrate this.)

At the same time, these product and service queries are the queries that search engines with an ad revenue business model can monetize best.

It was said that ChatGPT costs at least $100,000 per day to run when it first crossed 1 million users in December 2022. By 2023, it was costing about $700,000 a day. [Source]

Today, it’s likely to be a significant multiple of that.
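To put those daily estimates in perspective, here is a rough Python sketch that annualizes them. It assumes the quoted daily figures hold flat for a full year, which is a simplification; the $100,000 figure was reported as a floor ("at least"), so the first result is a lower bound. The variable and function names are hypothetical.

```python
DAYS_PER_YEAR = 365

# Daily run-cost estimates quoted above (USD per day).
daily_cost_dec_2022 = 100_000  # reported as "at least" this much
daily_cost_2023 = 700_000

def annualized(daily_cost):
    """Scale a daily cost to a full year, assuming the daily figure holds flat."""
    return daily_cost * DAYS_PER_YEAR

# How many times larger the 2023 estimate is than the December 2022 one.
growth_multiple = daily_cost_2023 / daily_cost_dec_2022

print(f"2022 estimate: ${annualized(daily_cost_dec_2022):,} per year")
print(f"2023 estimate: ${annualized(daily_cost_2023):,} per year")
print(f"Increase: {growth_multiple:.0f}x")
```

Under that flat-rate assumption, the estimates work out to roughly $36.5 million and $255.5 million per year, a 7x increase.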

Keep in mind, Google Search sees billions of search queries every day.

Even with Google’s advanced infrastructure and talent, AI chatbots are costly.

And they can (still) be slow – even with the advancements they’ve made in the last 12 months. Current and classic Google Search systems (like Featured Snippets and People Also Ask) might provide a much faster answer.

But, alas, here we are in 2025, and Google is cannibalizing its own product via AIOs and AI Mode.

Right now, according to Similarweb data, usage of the AI Mode tab on Google.com in the U.S. has slightly dipped and now sits at just over 1%. [Source, Source]

Google AIOs are now seen by more than 1.5 billion searchers every month, and they sit front and center. But engagement is falling. Users are spending less time on Google and clicking fewer pages. [Source]

But Google has to compete not only with other search engines that provide an AI-chat-forward experience, but also with ChatGPT & Co. themselves.

Below, I’ve listed out important considerations for your brand if you might utilize product cannibalization as a strategy.

You’ll want to:

- Reframe cannibalization as a strategic option for the brand rather than a failure.

- Use the full vs. partial cannibalization lens.

- Test the two success conditions.

- Protect your core offerings while you experiment.

- Use competitive cannibalization defensively.

- Monitor, learn, and adjust.

In the next section, for premium subscribers, I’ll walk you through what to watch out for if you decide to use product cannibalization as a growth strategy.

1. Reframe Cannibalization As A Strategic Option

- Don’t default to seeing product cannibalization as a failure; assess if it can protect market share or accelerate growth.

- Audit your product line and GTM strategy to identify areas where you could self-disrupt before a competitor does.

2. Use The Full Vs. Partial Cannibalization Lens

- Full cannibalization works best when there’s a tech leap and strong customer incentives.

  - Example: Apple iPhone replacing iPod – all iPod features plus far more capability led to the iPod’s rapid decline.

- Partial cannibalization via pricing, features, or acquisitions is less risky but may not deliver big growth.

  - Example: Netflix ad-supported plan – same streaming product, but a lower-cost tier opened the door to new segments and reduced churn risk.

- Map current and future offerings against these two categories to decide your approach.

3. Test The 2 Success Conditions

A cannibalizing product is more likely to succeed when both are true:

- Tech Leap: Offers a meaningfully better way to solve the problem.

  - Example: Netflix DVD → Streaming in 2007 leveraged faster internet speeds to change the delivery model entirely.

- Customer Incentive: Lower cost, better performance, more convenience, or status.

  - Example: YouTube acquisition by Google made richer, more visual answers possible in Search, improving the user experience.

If both apply → pursue full cannibalization.

If one applies → pursue partial cannibalization with controlled scope.
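The decision rule above can be written as a small Python sketch. The function name `recommend_cannibalization` and its boolean inputs are hypothetical, and the fallback for the case where neither condition holds is my own assumption, since the rule above only covers the "both" and "one" cases.

```python
def recommend_cannibalization(tech_leap: bool, customer_incentive: bool) -> str:
    """Map the two success conditions to a suggested approach.

    Hypothetical helper; inputs are judgment calls, not measured values.
    """
    if tech_leap and customer_incentive:
        # Both conditions hold: pursue full cannibalization.
        return "full cannibalization"
    if tech_leap or customer_incentive:
        # Exactly one condition holds: keep the scope controlled.
        return "partial cannibalization with controlled scope"
    # Assumption: with neither condition met, there is no clear case to act.
    return "no clear case for cannibalization"
```

Applied to the earlier examples, the iPhone (tech leap plus customer incentive) maps to `recommend_cannibalization(True, True)`, while the Kindle (tech leap without a price incentive) maps to `recommend_cannibalization(True, False)`.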

4. Protect Your Core While You Experiment

- Identify high-revenue segments and shield them from early disruption.

  - Example: Google keeping AI Mode away from highly monetizable queries like “best credit card” until the ad model is ready.

- Test self-disruption in lower-stakes markets to validate demand before scaling.

  - Example: Instagram Stories rolled out in a way that boosted net engagement while protecting the feed’s ad inventory.

5. Use Competitive Cannibalization Defensively

- When a rival launches a threat, choose between:

  - Acquire: Google acquiring YouTube to control the rise of video as a search answer format.

  - Copy: Instagram adopting Stories from Snapchat to stop user migration and grow engagement.

  - Differentiate: Amazon Kindle – a tech leap that tried to move readers from print to digital, but without a price advantage, adoption plateaued.

6. Monitor, Learn, And Adjust

- Track engagement, revenue mix, and adoption by segment.

  - Example: Similarweb data on AI Mode – U.S. usage holding just over 1%, signaling limits to adoption speed.

- Adjust rollout pace based on generational adoption patterns and competitor moves.

  - Example: Google AIO engagement drop – showing that placement alone doesn’t guarantee sustained user interest.

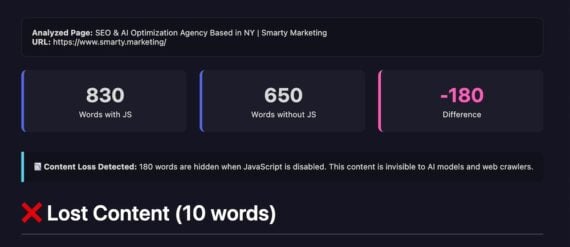

A good example of how to do this is Chegg.

The company has been obliterated by Google’s AI Overviews and even sued Google. Chegg’s value was answers to homework questions, but since almost every student uses ChatGPT for that, their value chain broke. How is the company reacting to this life-ending threat?

In my Q2 Marketplace Deep Dive, I explain that Chegg has found a lifeboat:

Chegg has launched a new tool, Solution Scout, that allows students to compare answers from ChatGPT & Co. with Chegg’s archive.

Instead of trying to beat AI Chatbots, Chegg hits them where it hurts: in the hallucinations.

LLMs can make stuff up, which is especially painful when it comes to learning and taking tests. Imagine you spend hours internalizing the wrong facts!

Solution Scout validates AI answers with Chegg’s archive of human-sourced material. It compares the answer from foundational models and highlights differences and consensus.

Featured Image: Paulo Bobita/Search Engine Journal