5 Ways To Prove The Real Value Of SEO In The AI Era via @sejournal, @wburton27

As SEO evolves with AI optimization, generative engine optimization, and answer engine optimization, brands and marketers must rethink their SEO strategies to stay competitive.

Instead of focusing solely on traditional SEO strategies and tactics, you need to be visible in AI-powered search and answer engines.

Showing the value of SEO in this new world means showcasing how optimized, structured, and intent-driven content can maximize visibility across generative platforms.

It can also enhance user trust and drive qualified engagement in a world where AI chatbots and platforms interpret a user’s intent, retrieve relevant information, and generate clear and concise answers.

In today’s competitive AI-powered results, it can be difficult to maximize your visibility.

With SEO becoming more challenging and the search engine results constantly changing to incorporate AI results, what metrics do you need to track, and how can you show the value of SEO in today’s AI-powered search results?

Let’s explore.

Proving The Value Of SEO

Proving SEO value depends on your client or prospective client’s goals and what will move the needle for them to get visibility in the search engine results pages (SERPs) and in AI chatbots and platforms.

This could include local search, app store optimization, content marketing, technical optimization, AI Overviews, etc.

That said, you must show performance improvements and drive revenue to secure more funding and make your client successful.

In my experience, these are some of the best metrics to track and measure to prove SEO’s value in an AI world:

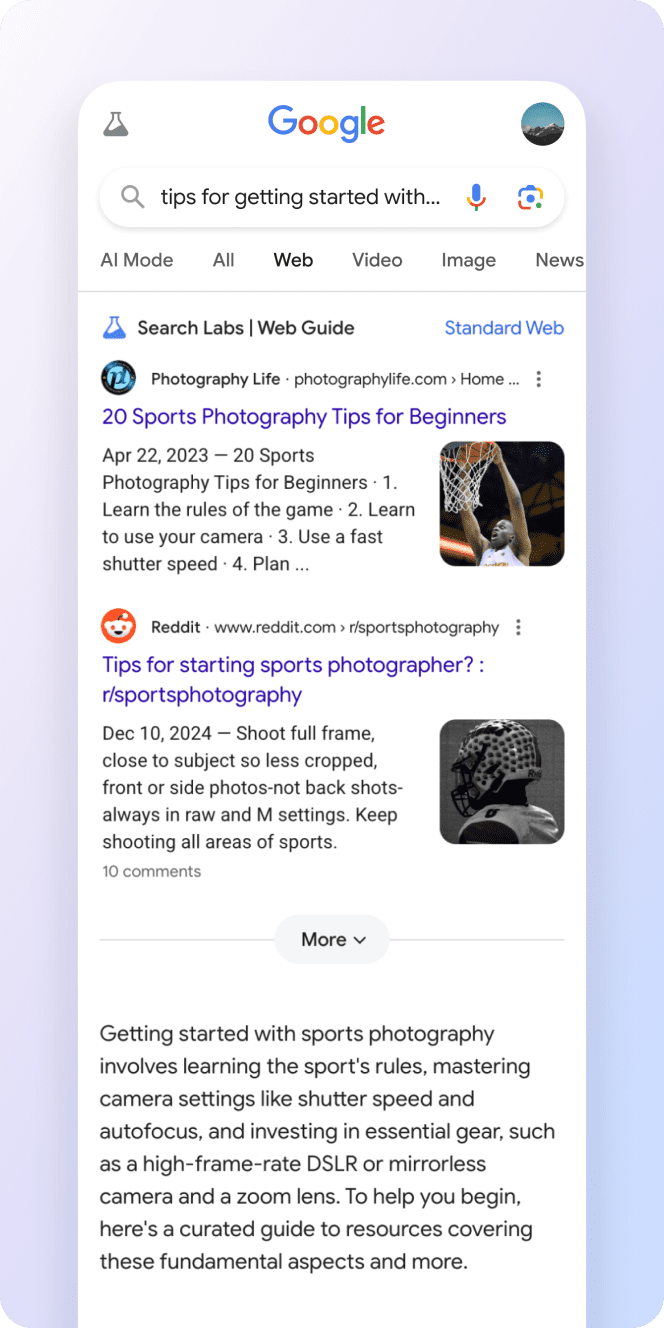

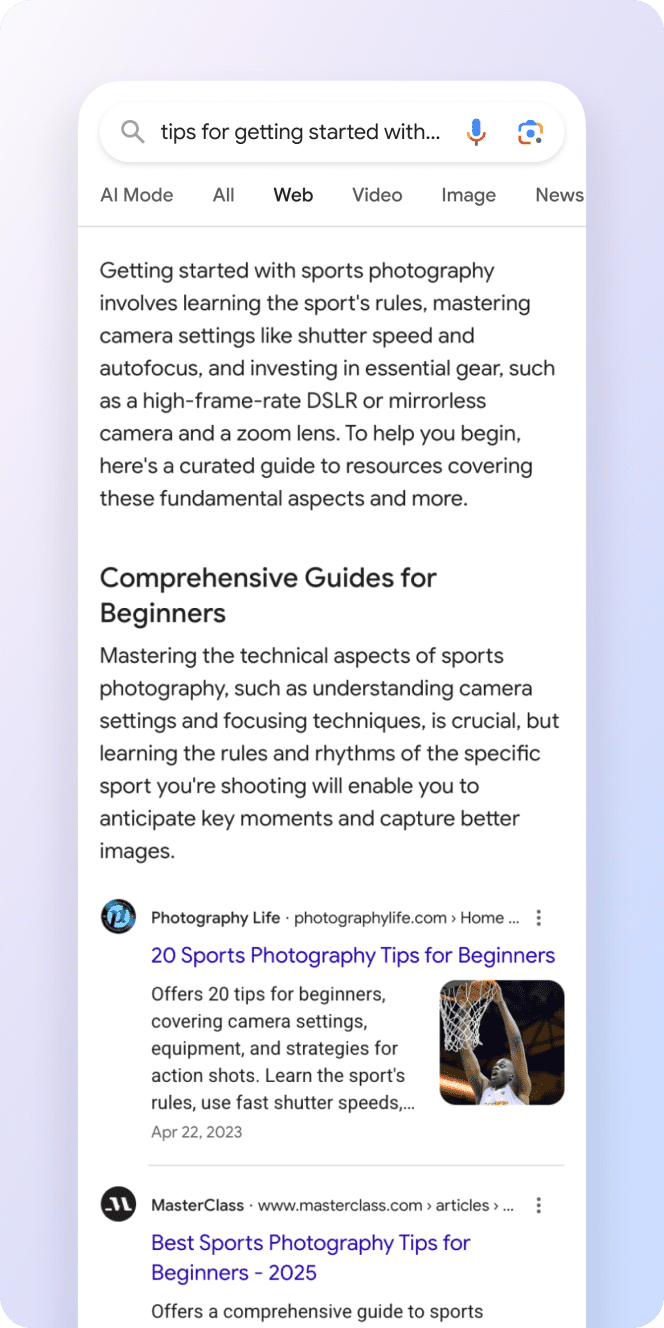

1. Monitor AI Results

With AI Overviews and generative AI changing SEO, it is important to track visibility as we move from ranking to relevance.

AI Overviews are not expected to go anywhere. During I/O 2025, Google announced that AI Overviews were expanding to over 200 countries and more than 40 languages.

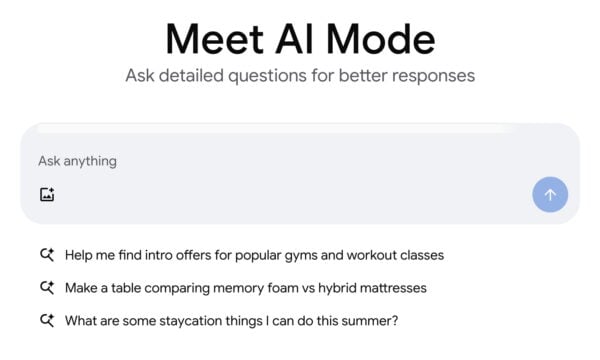

AI Mode is now available to all users in the United States without the need to opt in via Search Labs.

To track AI Overviews:

Identify Which Queries Trigger AI Overviews

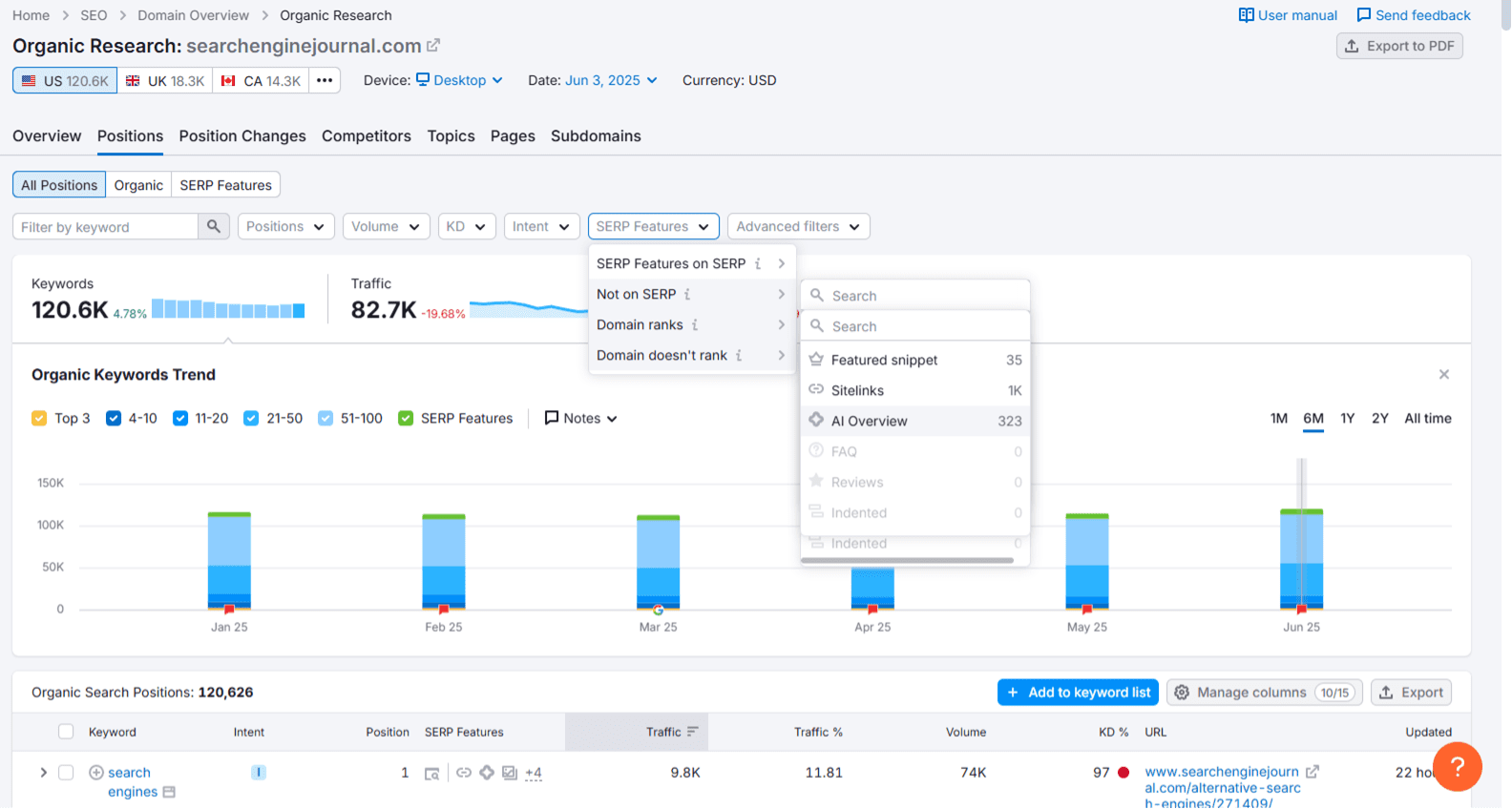

You can use tools like ZipTie.dev or Semrush to track which of your top-performing queries show AI Overviews and whether your site is included in those summaries.

Screenshot from Semrush, June 2025

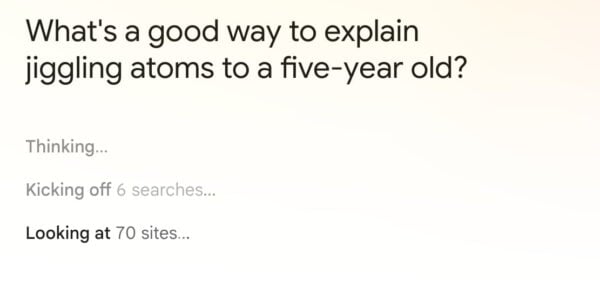

Screenshot from Semrush, June 2025

Track AI Overview Queries

Once you have a list of the queries that trigger an AI Overview, noting whether or not your site appears in each one, track those queries using keyword tracking tools and compare your traffic before and after the AI rollouts.

Strategize To Optimize Your Content For AI Overviews

Segment your traffic based on content type, as many informational queries are experiencing a decline in traffic due to users obtaining answers directly from AI Overviews.

This will help you identify which areas are most impacted and plan your strategy to optimize queries that have the potential to show AI Overviews.
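As a rough illustration of the segmentation described above, the sketch below compares clicks before and after an AI rollout for each content type and sorts the segments from most to least affected. The segment names and click counts are made-up placeholders, not real data, and the `SegmentTraffic` structure is a hypothetical stand-in for whatever export your analytics tool provides.

```python
from dataclasses import dataclass


@dataclass
class SegmentTraffic:
    """Clicks for one content type before and after an AI rollout."""
    content_type: str
    clicks_before: int
    clicks_after: int


def percent_change(before: int, after: int) -> float:
    """Return the percentage change from `before` to `after`."""
    if before == 0:
        raise ValueError("Cannot compute a percent change from zero clicks")
    return (after - before) / before * 100


def rank_by_impact(segments: list[SegmentTraffic]) -> list[SegmentTraffic]:
    """Sort segments so the largest traffic decline comes first."""
    return sorted(
        segments,
        key=lambda s: percent_change(s.clicks_before, s.clicks_after),
    )


# Illustrative numbers only (assumption): replace with your own exports.
segments = [
    SegmentTraffic("informational", 12000, 9000),
    SegmentTraffic("transactional", 4000, 4200),
    SegmentTraffic("navigational", 2500, 2450),
]

for seg in rank_by_impact(segments):
    change = percent_change(seg.clicks_before, seg.clicks_after)
    print(f"{seg.content_type}: {change:+.1f}%")
```

Sorting by percentage change rather than raw click loss keeps small but heavily affected segments from being hidden behind larger ones.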

Consider server-side analytics solutions (e.g., Writesonic’s AI Traffic Analytics) to track AI crawler visits, see which pages are accessed, and monitor trends over time.
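If you want a rough first look before adopting a dedicated tool, AI crawler visits can be approximated from your own server logs. The sketch below is a minimal example that assumes logs in the common "combined" access log format and matches a short, non-exhaustive list of user-agent tokens (GPTBot, ChatGPT-User, OAI-SearchBot, PerplexityBot, ClaudeBot). The sample log lines are invented, and this is not how any particular analytics product works.

```python
import re
from collections import Counter

# Non-exhaustive list of AI crawler user-agent tokens (assumption: extend as needed).
AI_CRAWLER_TOKENS = (
    "GPTBot",
    "ChatGPT-User",
    "OAI-SearchBot",
    "PerplexityBot",
    "ClaudeBot",
)

# Matches the request, status, size, referer, and user-agent fields
# of a "combined" format access log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def count_ai_crawler_hits(lines):
    """Count hits per (crawler token, path) for known AI crawlers."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        agent = match.group("agent").lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in agent:
                hits[(token, match.group("path"))] += 1
                break
    return hits


# Invented sample lines for illustration only.
sample_log = [
    '203.0.113.5 - - [10/Jun/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.9 - - [10/Jun/2025:10:01:00 +0000] "GET /blog/post HTTP/1.1" 200 830 "-" '
    '"Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [10/Jun/2025:10:02:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

for (crawler, path), count in sorted(count_ai_crawler_hits(sample_log).items()):
    print(f"{crawler} {path} {count}")
```

User-agent strings can be spoofed, so treat these counts as an estimate rather than a verified measurement.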

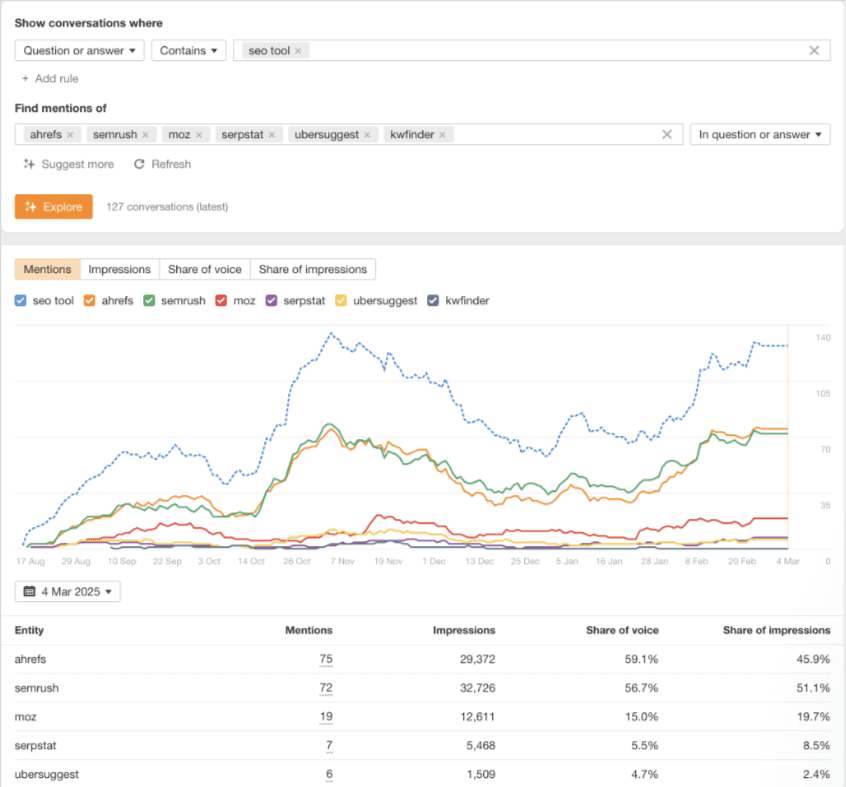

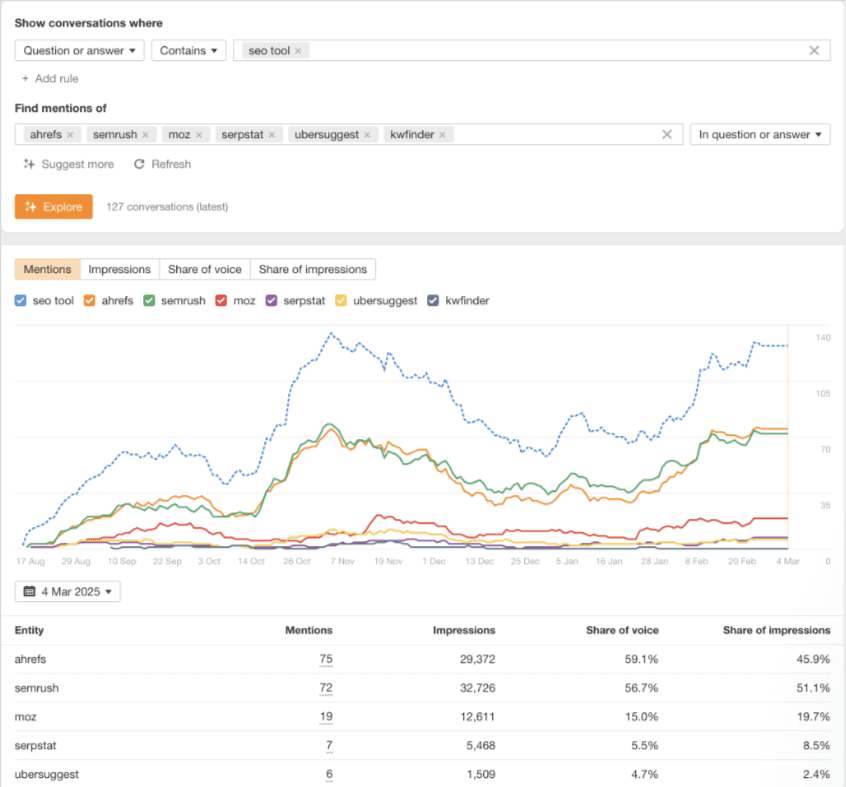

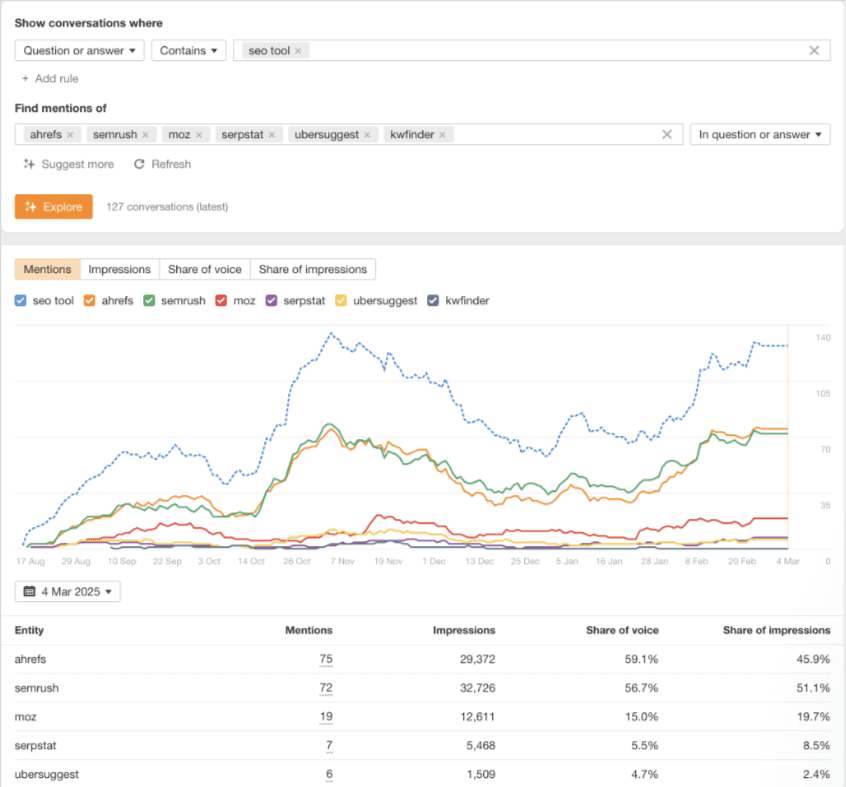

2. Track AI Brand Mentions

Since AI platforms process information differently than traditional search engines, getting mentioned in ChatGPT, Perplexity, Claude, or Google’s AI Mode for relevant queries is a must.

AI platforms like ChatGPT and Google’s AI Overview generate answers from a mix of training data and some real-time retrieval, depending on the platform and setup.

In my experience, brands that are frequently mentioned across various platforms, including PR, blogs, social media, news coverage, YouTube, forums (such as Reddit and Quora), and authoritative sites, tend to be mentioned by AI.

To track AI mentions, several tools like Brand24, Brand Radar from Ahrefs, and Mention.com use AI to monitor online conversations across various platforms, leveraging large datasets to provide insights into your brand’s perception and those of your competitors.

It’s imperative that you find out if your brand is mentioned, what people are saying about your brand (both positive and negative), what queries are used to describe it, and which websites mention your brand.

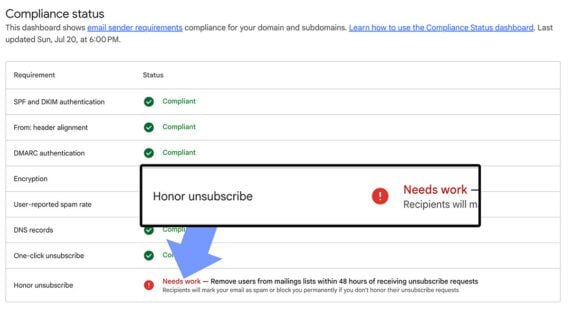

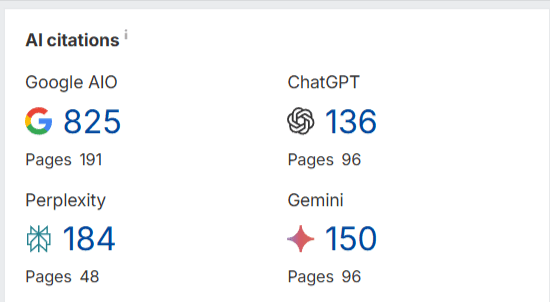

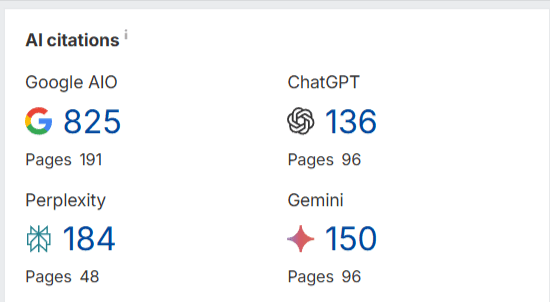

3. Track AI Citations/References

Checking to see if your website is cited by large language models (LLMs) can help brands and marketers understand how their content is being used by AI and assess their brand’s authority and visibility.

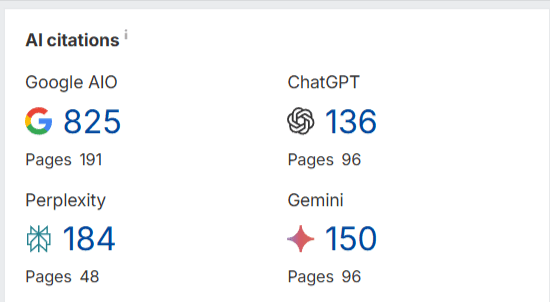

Ahrefs now offers a free tool that tracks when your website is cited in the answers generated by AI-powered search tools like Google AIO, ChatGPT, and Perplexity. In the tool, “AI citations” counts how often a domain was linked in AI results.

“Pages” shows how many unique URLs from that domain were linked.

This is one of my favorite audit tools for checking whether any brand we’re reviewing has citations.

If Ahrefs adds trend analysis to track whether you’re gaining more citations in Google AIO, ChatGPT, and other platforms over time, it would be a valuable way to assess whether your strategies are working.

4. Tracking Branded Searches

It’s extremely important to track your branded searches in this new SEO AI era. AI-powered search results are personalized, and LLMs like Gemini and ChatGPT, to name a few, heavily consider user intent and context.

Having strong brand signals could improve entity recognition, which can improve your visibility for related queries.

Tracking how AI-generated answers (e.g., featured snippets or AI Overviews) treat your brand helps you optimize for entity-driven SEO.

In the AI SEO era, where search engines prioritize context, trust, and relevance, tracking branded searches can help you refine strategies that defend your SERP presence and maximize conversions.

Here are some tips to help enhance branded visibility:

- Create unique, authoritative, factual, and conversational content, because AI models prioritize reliable and accurate information. Focus on content that demonstrates expertise and includes verifiable data.

- Structure content for AI readability by using clear headings (H1, H2, H3), bullet lists, numbered lists, and data tables. Also, create concise paragraphs that directly answer questions.

- Leverage schema markup like Organization, Product, Service, FAQPage, and Review to provide structured data that AI models can easily understand and reference.

- Build brand authority and expertise by earning consistent citations, mentions on authoritative third-party sites, and positive reviews, all of which contribute to AI’s perception of your brand’s credibility.

- Optimize for conversational queries by creating content that directly answers “who, what, why, and how” in your niche.

- Be active on platforms like Reddit and Quora, where AI models often pull information. SEO becomes “Search Engine Everywhere.”

- Regularly review your AI visibility data, identify gaps, and adjust your content and SEO strategies based on insights.
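To make the schema markup tip in the list above more concrete, here is a minimal sketch that generates Organization structured data as a JSON-LD script tag. The brand name, URLs, and the `organization_jsonld` helper are hypothetical placeholders; the property names (`name`, `url`, `logo`, `sameAs`) come from the schema.org Organization type.

```python
import json


def organization_jsonld(name: str, url: str, logo_url: str, same_as: list[str]) -> str:
    """Build a JSON-LD <script> tag describing an Organization."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo_url,
        # Profiles on other sites that refer to the same brand entity.
        "sameAs": same_as,
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values for illustration only.
snippet = organization_jsonld(
    name="Example Brand",
    url="https://www.example.com",
    logo_url="https://www.example.com/logo.png",
    same_as=[
        "https://www.linkedin.com/company/example-brand",
        "https://www.youtube.com/@examplebrand",
    ],
)
print(snippet)
```

The same pattern extends to the other types mentioned in the list, such as Product or FAQPage, by changing `@type` and supplying the properties that type expects.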

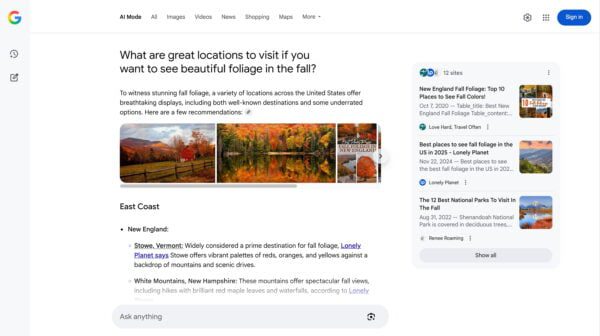

5. Tracking AI Mode Metrics

AI Traffic In GSC

Google has recently provided some data in Google Search Console (GSC) for tracking AI Mode, and marketers can track clicks, impressions, and positions.

AI Mode groups the user’s question into subtopics and searches for each one simultaneously, and users can go deeper.

If a user asks a follow-up question within AI Mode, they are essentially performing a new query. All impression, position, and click data in the new response are counted as coming from this new user query.

AI Traffic In GA4

While Google Analytics 4 doesn’t explicitly label AI traffic, you can look for patterns. Create custom reports with “Session source/medium” and apply regex filters for known AI domains (e.g., .*ChatGPT.*|.*perplexity.*|.*openai.*|.*bard.*).
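To check how a pattern like this behaves before you put it into a report, you can test it against exported data. The sketch below applies the same pattern to a handful of “Session source/medium” values using Python’s `re` module, with case-insensitive matching added as an assumption. The rows are invented, and GA4’s own regex matching rules may differ from Python’s, so treat this as a sanity check only.

```python
import re

# Same alternatives as the example pattern above; IGNORECASE is an added assumption.
AI_SOURCE_PATTERN = re.compile(
    r".*chatgpt.*|.*perplexity.*|.*openai.*|.*bard.*",
    re.IGNORECASE,
)


def is_ai_source(source_medium: str) -> bool:
    """Return True if the source/medium value looks like an AI platform."""
    return AI_SOURCE_PATTERN.fullmatch(source_medium) is not None


def sum_ai_sessions(rows: list[tuple[str, int]]) -> int:
    """Total the sessions for rows whose source/medium matches the pattern."""
    return sum(sessions for source, sessions in rows if is_ai_source(source))


# Invented export rows: (session source/medium, sessions).
rows = [
    ("chatgpt.com / referral", 120),
    ("perplexity.ai / referral", 45),
    ("google / organic", 9800),
    ("(direct) / (none)", 3100),
]

print(sum_ai_sessions(rows))  # 165
```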

For specific content you hope AI will cite, create unique URLs with UTM parameters (e.g., utm_source=chatgpt, utm_medium=ai). This can help attribute some traffic directly.

Ahrefs found that its AI search visitors converted at a rate 23 times higher than traditional organic search traffic, despite representing only 0.5% of total website visits. If you can get more conversions from AI-driven traffic in the same way, you will have discovered a conversion goldmine that makes AI optimization not just worthwhile, but essential for staying competitive.

Final Thoughts

The SEO landscape has shifted from optimizing for search engines and traditional search to optimizing for AI-powered chatbots and solutions, such as ChatGPT, Perplexity, Claude, Google’s AI Overviews, and potentially OpenAI’s web browser, which could launch “in the coming weeks,” according to Reuters.

Google may face increased pressure and potentially lose market share if OpenAI launches an AI-powered web browser that challenges Google Chrome, changing how users access web content.

OpenAI has 500 million weekly active users of ChatGPT and could disrupt a key component of rival Google’s ad-money source.

SEO is no longer about ranking on the first page of Google.

It’s about being relevant and visible across multiple AI platforms, getting mentioned in generative responses, and demonstrating value through AI-focused metrics outside of the traditional metrics like rankings and traffic.

Brands and marketers that prove SEO’s value in this new era can deliver immediate, measurable value while building momentum for larger investments in the future.

Featured Image: Roman Samborskyi/Shutterstock