When Darren Riley moved to Detroit seven years ago, he didn’t expect the city’s air to change his life—literally. Developing asthma as an adult opened his eyes to a much larger problem: the invisible but pervasive impact of air pollution on the health of marginalized communities.

“I was fascinated about why we don’t have the data we need,” Riley recalls, “or why we don’t have the infrastructure to solve these issues, to understand where pollution is coming from, how it’s impacting our communities, so that we can solve these problems and make an equitable breathing environment for everybody.”

That personal reckoning sparked the idea for JustAir, a Michigan-based clean-tech startup building neighborhood-level air quality monitoring tools. The goal is simple but urgent: provide communities with access to hyper-local data so they can better manage pollution and protect public health. As Riley puts it, “JustAir is solving the problem of how to better manage local pollution so that we can make sure our communities, our lifestyles—where we work, where we play, and where we learn—are really protected.”

Founded during the height of the pandemic, when the connection between health disparities and air quality became impossible to ignore, JustAir now partners with local governments, health departments, and community residents to deploy monitoring networks that offer key data relevant to everything from policy to personal decision-making.

From the start, the Michigan Economic Development Corporation (MEDC) offered key support that helped turn JustAir’s bold vision into technical infrastructure. Through the MEDC’s early-stage funding partners and a network of mentorship and resources known as SmartZones, JustAir sharpened its product-market fit and gained critical momentum.

Success for Riley isn’t just about scale; it’s about impact. “It warms my heart, and it shows that we’re doing exactly what we said we wanted to do,” Riley says, “which is to make sure that communities have the data that they deserve to create the future, the clean, healthy future that they desperately need.”

For other burgeoning entrepreneurs, Riley sees a sense of community as the key to lasting and impactful change. “When people are celebrating you with your head up, and then when people are helping you put your chin up when your head’s down, I think it’s so, so critical. I found that here in Michigan, and also found it here in our community, right here in Detroit. Passion and finding a community that’s going to help get you through the journey is all it takes.”

This episode of Business Lab is produced in association with the Michigan Economic Development Corporation.

Full Transcript

Megan Tatum: From MIT Technology Review, I’m Megan Tatum, and this is Business Lab, the show that helps business leaders make sense of new technologies coming out of the lab and into the marketplace.

Today’s episode is brought to you in partnership with the Michigan Economic Development Corporation.

Our topic today is building a technology startup in the U.S. state of Michigan. Taking an innovative idea to a full-fledged product and company requires resources that individuals might not have. That’s why the Michigan Economic Development Corporation, the MEDC, has launched an innovation campaign to support technology entrepreneurs.

Two words for you: startup ecosystem.

My guest is Darren Riley, the co-founder and CEO at JustAir, a clean air startup that began its journey in Michigan.

Welcome, Darren.

Darren Riley: Hi. Thanks for having me.

Megan: Thank you ever so much for being with us. To get us started, let’s just talk a bit about JustAir. How did the idea for the company come about, and what does your company do as well?

Darren: Yeah, absolutely. The real thesis of JustAir is really a combination of, one, my personal experience, but also my professional experience. On the professional side, [I have a] background in software engineering [and] graduated from Carnegie Mellon University, but I was always fascinated by how to use technology to really support and innovate and really push the frontier on issues that are near and dear to my heart. Coming from Houston, Texas, coming from communities that are often restricted by certain issues, systemic issues, is something that I always carried in my heart.

And on the personal side, it was around seven years ago when I moved to Detroit, in Southwest Detroit, where I developed asthma. Not growing up with asthma and not developing any issues, having that disease of the lungs really opened my eyes to just how much our environment impacts our health and well-being.

The combination of those, that pain point and also my background in technology, I was fascinated about why we don’t have the data we need or why we don’t have the infrastructure to solve these issues, to understand where pollution is coming from, how it’s impacting our communities, so that we can solve these problems and make an equitable breathing environment for everybody. That’s kind of what birthed JustAir in a way.

And actually, it was around COVID-19 where we really started to push forward, where we saw all this information and research around health disparities and a lot of the issues of mortality rates around COVID-19, which kind of coincides with COPD, asthma, and other diseases that are often overburdened in communities that look like ours, in Black and brown communities. That’s kind of where we got our start.

And what is JustAir today? JustAir is solving the problem of how to better manage local pollution so that we can make sure our communities, our lifestyles—where we work, where we play, and where we learn—are really protected. And, so, what JustAir does is build hyper-local neighborhood-level air quality monitoring networks. Communities have access to the data, policymakers and decision-makers can use that data to really influence and push things to help protect the community, but also other stakeholders can use the data to move the environment to a healthier state. So that’s where we are, and we’re four years strong, and I’m really excited to be a part of this journey here in Michigan.

Megan: So you launched about four years ago now. Why did you choose to build and grow right there in Michigan?

Darren: Yeah, I think a combination of things, the reason why I chose to start here and be intentional about building our team here. I think first is really around the ecosystem support around Michigan. So the MEDC has a network of what we call SmartZones that really offer funding, resources, mentorship, advisory on the different challenges that can range from capital, legal, and other issues that kind of hold an entrepreneur back from just getting out there and putting their product in the market. First and foremost, I’m super thankful and grateful for just the state really focusing on and putting entrepreneurs first in that regard.

I think secondly is community. I really felt a strong sense of community here in Detroit. [I’m] one of the founding members of an organization called Black Tech Saturdays, which sees hundreds of folks, 500 to 1,000, almost every Saturday of the month, just really sharing and really engaging with tech-curious folks from all different walks of life, but making intentional space for folks who are often left out of those rooms and out of those conversations. And just really seeing a peer network of entrepreneurs who come from a similar cultural background or a similar situation, really going after it together and helping each other navigate some issues.

And then lastly, I talk about this a lot, but problem-solution fit. Being here in Detroit where I developed asthma, where we have many issues and many around the environment that have hit some communities the hardest, right here in Detroit in my own backyard I really want to be very narrowly focused and make sure that I’m building something that actually solves the problem that got me on this journey in the first place. Not thinking about regional-wide, different country, international, et cetera, but how do we build something right here in the backyard that solves the problem for my neighbors and makes sure that we can make a real difference in the community. So, from the community to the problem that I really care about and make sure we solve, and then also just the ecosystem support is why we’re here in Michigan and why we plan to really grow and really be a part of this movement.

Megan: Fantastic. And you’ve touched on a few of those already, but as you were getting started, what specific resources, partnerships, or community support helped you navigate the early-stage research and development stages?

Darren: One example, really early, actually, I forgot about this for a while, but we have a Business Accelerator Fund here in Michigan where there’s funding offered to entrepreneurs for technical assistance. I used that to operationalize some of our technical roadmap processes to build out the infrastructure that we really intended to do. So, that real funding that was non-dilutive that the state provided helped accelerate some of those issues in the early days, where it was just myself and advisors going after this problem. And so now, where we are today, there are funds that receive funding from MEDC, so local funds and venture capital that help you get your first check. Those are really helpful as well. All that to say, it’s basically a combination of funding [as the] primary source, but also, strategically, that funding is going towards product positioning and product-market fit. Those were the two core examples that have been beneficial.

And then, I think the last thing I’ll mention as well, MEDC and a lot of the SmartZones within the state, these SmartZones are just bucketed in different regions and areas, so you have Ann Arbor, you’ve got Detroit, you have Grand Rapids, the whole nine yards, having these events and creating these clusters, if you will, of density of entrepreneurs, I think is super, super critical. I’ve experienced in New York, Chicago, San Francisco, and other bigger ecosystems that density is so critical, where you’re constantly rubbing shoulders with the next entrepreneur, the next investor, the next customer, to really kind of accelerate the velocity of your journey.

Megan: Yeah. Having that ecosystem makes such a difference, doesn’t it?

Darren: Oh yeah, absolutely.

Megan: And tech acumen and business acumen are very different sets of skills. I wonder what the process was like developing your technology whilst also building out a viable business plan.

Darren: I think I have a real unique opportunity. Having a software background, I code all the time, felt I had a lot of ideas, always joked that I had a Google Drive of 30 ideas that never worked, that I never showed anybody. I really felt I had that piece. What I was missing in my journey and why nothing ever came to fruition was just the simple principles of, are you solving a real problem, a real pain point for a customer?

Two things on the business acumen side. [One is] having an affinity for the problem. I truly believe that going on the entrepreneurial journey is lonely, it’s risky, it’s stressful, and tiring. The more I can wake up in the morning and think about [how] the problems that we solve could actually result in a breath of clean air for someone who may not have that awareness or have the tools to advocate on their behalf, just having that extra motivation and having that affinity towards a problem that I feel really deeply, I think does help.

But I think also from the business acumen side of things, I had the opportunity to work at an organization called Endeavor based here in Michigan, where I was on the other side of an entrepreneur resource support organization. I got to see founders from high-growth companies throughout Michigan, series A, series B, retail, fintech, the whole nine yards, health tech, and seeing where are the challenges, where are things going well and where things are going wrong, from co-founder struggles to missing the market timing or going through banking issues from a couple years ago and all that stuff. All those things really help build a muscle memory of, I don’t have all the answers, but being able to pull through those experiences and pattern matching does help as well, from how you actually build a business from zero, from product-market fit to scale and grow.

Megan: Yeah, absolutely. And as you say, it can be a stressful journey, life as an entrepreneur, but I wonder if you could also share some highlights from your journey so far, any partnerships or projects that you’re really excited about at the moment?

Darren: I think the first and foremost highlight [that] I didn’t realize I would come to enjoy so much is certainly my team. Being able to work with people who are aligned in passionate values and just kind of the culture and the focus is immensely valuable. If I’m going to spend this many hours in a week or in a year, I’d love to spend it with folks who are really passionate about it. I want to see them succeed. So I think first and foremost, I think the biggest success is really just the fortunate opportunity to work with people I really enjoy working with.

The others I’ll mention [are] we have one of the largest county-owned monitoring networks in the country within Wayne County. The Wayne County Health Department and County Executive Warren Evans established this partnership where we deployed 100 fixed monitors throughout Wayne County to understand the patterns of local pollution, so that we can help combat some of these issues: we are ranked F for air quality by the American Lung Association, and Detroit is ranked by the Asthma and Allergy Foundation of America as the third-worst place to live with asthma. So, how do we really look at this data and tell the story, and how can we really mitigate these issues, while also giving data to the public so that they can navigate the world that’s happening to them. That’s one of our critical partnerships.

We’re also very excited, we just got announced in Fast Company as one of the most innovative companies of 2025, so woo-hoo to that.

Megan: Congratulations.

Darren: It is really exciting, yeah, in the social impact, social good category. There are many, many more, but I think the last one, I’m so, so grateful for, and I tell our team this all the time, is that we’ve already succeeded. Going to community meetings, hearing people raise their hand, asking questions about the JustAir application or about their data, and I [want] to emphasize that when you hear community members saying ‘our data,’ not as an ask, but as something that they have obtained, it warms my heart, and it shows that we’re doing exactly what we said we wanted to do, which is to make sure that communities have the data that they deserve to create the future, the clean, healthy future that they desperately need.

Megan: Yeah, absolutely, what an incredible achievement. And what advice, finally, would you offer to other burgeoning entrepreneurs?

Darren: Yeah, I think really [do] something you are passionate about. [I’ll] repeat that point again: do something that you feel that you can really go through those pain points and struggles for, [because] you need some extra kick to get you through and navigate these challenges.

The second thing, and the most important thing that a lot of people take away is community, community, community. I wouldn’t be here today if I didn’t have people to call on when I’m at my lowest points, and call on people in my highest points. When people are celebrating you with your head up, and then when people are helping you put your chin up when your head’s down, I think it’s so, so critical. I found that here in Michigan, and also found it here in our community, right here in Detroit. Passion and finding a community that’s going to help get you through the journey is all it takes.

Megan: Fantastic. All great advice. Thank you ever so much, Darren.

Darren: Absolutely.

Megan: That was Darren Riley, the co-founder and CEO at JustAir whom I spoke with from Brighton, England.

That’s it for this episode of Business Lab. I’m your host, Megan Tatum. I’m a contributing editor and host for Insights, the custom publishing division of MIT Technology Review. We were founded in 1899 at the Massachusetts Institute of Technology, and you can find us in print, on the web, and at events each year around the world. For more information about us and the show, please check out our website at technologyreview.com.

This show is available wherever you get your podcasts. And if you enjoyed this episode, we hope you’ll take a moment to rate and review us. Business Lab is a production of MIT Technology Review. This episode was produced by Giro Studios. Thanks for listening.

This content was produced by Insights, the custom content arm of MIT Technology Review. It was not written by MIT Technology Review’s editorial staff.

This content was researched, designed, and written entirely by human writers, editors, analysts, and illustrators. This includes the writing of surveys and collection of data for surveys. AI tools that may have been used were limited to secondary production processes that passed thorough human review.

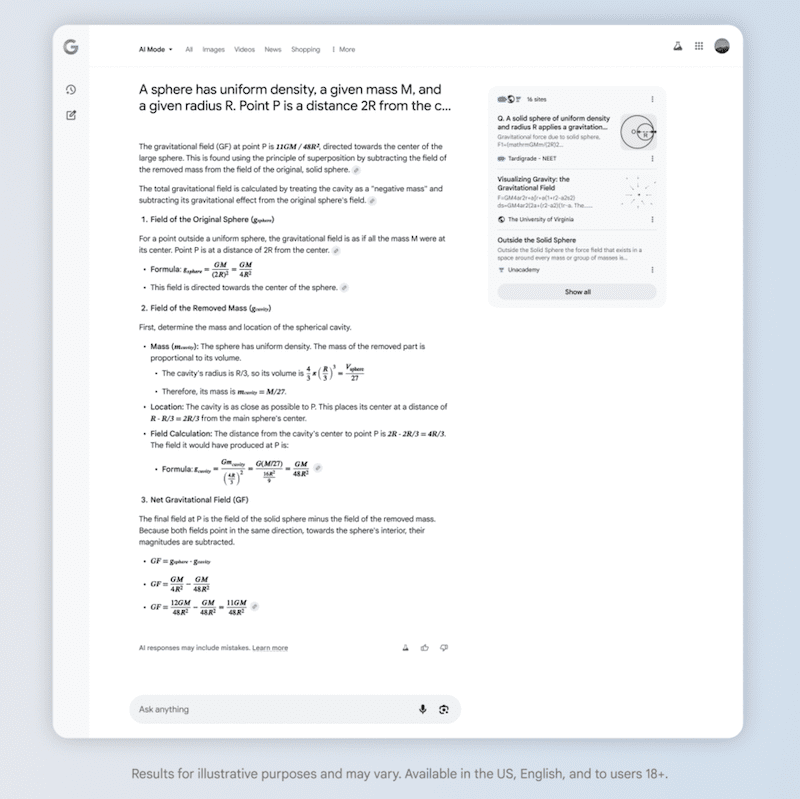

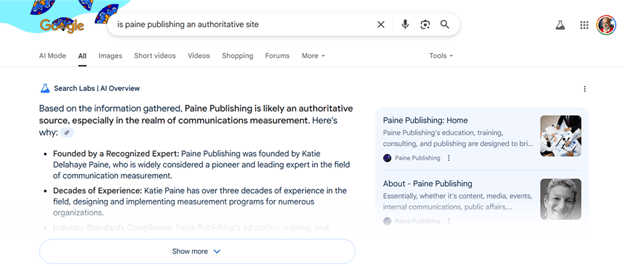

Screenshot from: blog.google/products/search/deep-search-business-calling-google-search, July 2025.

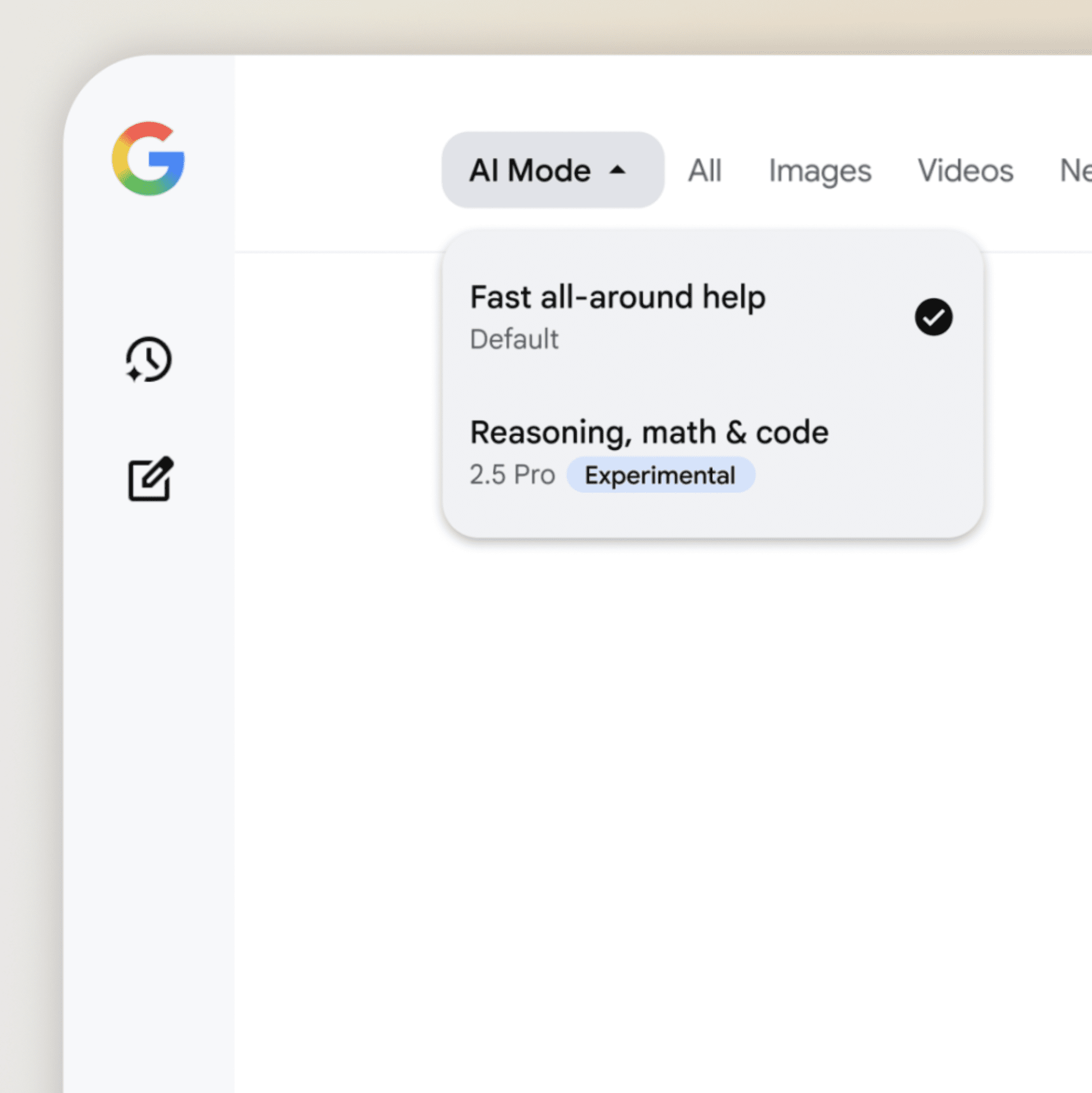

Screenshot from: blog.google/products/search/deep-search-business-calling-google-search, July 2025.