- A US nuclear-powered spacecraft may head to Mars: NASA has announced SR-1, the first-ever nuclear-reactor-powered interplanetary spacecraft, with a planned Mars launch before the end of 2028, a timeline experts call aggressive but exciting.

- Nuclear could beat chemical and solar power: Nuclear electric propulsion is orders of magnitude more efficient than traditional propulsion and doesn’t depend on sunlight, making it better suited for long, fast journeys through the solar system.

- The design is already taking shape: SR-1 will resemble a giant fletched arrow, with a recycled Gateway space station propulsion unit at the rear and a 20-kilowatt uranium reactor up front, cooled by enormous fins that vent excess heat into space.

- The stakes go beyond engineering: With China and Russia pursuing their own deep-space nuclear programs, SR-1 is as much a geopolitical gambit as a scientific one, and success could put the US ahead in the race to land humans on Mars.

MIT Technology Review Explains: Let our writers untangle the complex, messy world of technology to help you understand what’s coming next. You can read more from the series here.

Just before Artemis II began its historic slingshot around the moon, Jared Isaacman, the recently confirmed NASA administrator, made a flurry of announcements from the agency’s headquarters in Washington, DC. He said the US would soon undertake far more regular moon missions and establish the foundations for a base at the lunar south pole before the end of the decade. He also affirmed the space agency’s commitment to putting a nuclear reactor on the lunar surface.

These goals were largely expected, but there was still one surprise. Isaacman also said NASA would build the first-ever nuclear-reactor-powered interplanetary spacecraft and fly it to Mars by the end of 2028. It’s called the Space Reactor-1 Freedom, or SR-1 for short. “After decades of study, and billions spent on concepts that have never left Earth, America will finally get underway on nuclear power in space,” he said at the event. “We will launch the first-of-its-kind interplanetary mission.”

A successful mission would herald a new era in spaceflight, one in which traveling between Earth, the moon, and Mars would—according to a range of experts—be faster and easier than ever. And it might just give the US the edge in the race against China—allowing the country to beat its greatest geopolitical rival to landing astronauts on another planet.

While experts agree the timeline is extremely tight, they’re excited to see if America’s space agency and its industry partners can deliver an engineering miracle. “You wake up to that announcement, and it puts a big smile on your face,” says Simon Middleburgh, co-director of the Nuclear Futures Institute at Bangor University in Wales.

Little detail on SR-1 is publicly available, and NASA’s own spaceflight researchers did not respond to requests for comment. But MIT Technology Review spoke to several nuclear power and propulsion experts to find out how the new nuclear-powered spacecraft might work.

Nuclear propulsion 101

Traditionally, spaceflight has been powered by chemical propulsion. In a typical liquid-fueled engine, liquefied hydrogen and liquefied oxygen are mixed and ignited; the searingly hot exhaust from this combustion is ejected through a nozzle, which propels the rocket forward.

Chemical propulsion offers a significant amount of thrust and will, for the foreseeable future, still be used to launch spacecraft from Earth. But nuclear propulsion would enable spacecraft to fly through the solar system for far longer, and faster, than is currently possible.

“You get more bang per kilogram,” says Middleburgh. A nuclear fuel source is far more energy-dense than its conventional cousin, which means it’s orders of magnitude more efficient. “It’s really, really, really high efficiency,” says Lindsey Holmes, an expert in space nuclear technology and the vice president of advanced projects at Analytical Mechanics Associates, an aerospace company in Virginia.

The approach also removes one other element of the traditional power equation: solar. Spacecraft, including the Artemis II mission’s Orion space capsule, often rely on the sun for power. But this can be a problem, since it doesn’t always shine in space, particularly when a planet or moon gets in its way—and as you head toward the outer solar system, beyond Mars, there’s just less sunlight available.

To circumvent this issue, nuclear energy sources have been used in spacecraft plenty of times before—including on both Voyager missions and the Saturn-interrogating Cassini probe. Known as radioisotope thermoelectric generators, or RTGs, these use plutonium, which radioactively decays and generates heat in the process. That heat is then converted into electricity for the spacecraft to use. RTGs, however, aren’t the same as nuclear reactors; they are more akin to radioactive batteries—more rudimentary and considerably less powerful.
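The “radioactive battery” comparison can be made concrete with a little arithmetic. The sketch below is an illustration, not mission data: it assumes the fuel is plutonium-238, the isotope RTGs typically use, with a half-life of about 87.7 years, and it picks a hypothetical starting heat output of 2,000 watts purely for the example.

```python
PU238_HALF_LIFE_YEARS = 87.7  # approximate half-life of plutonium-238


def rtg_thermal_power(initial_watts: float, years: float) -> float:
    """Heat output remaining after `years` of exponential radioactive decay."""
    return initial_watts * 0.5 ** (years / PU238_HALF_LIFE_YEARS)


if __name__ == "__main__":
    initial = 2000.0  # hypothetical starting heat output, in watts
    for years in (0, 10, 25, 50):
        remaining = rtg_thermal_power(initial, years)
        print(f"after {years:>2} years: {remaining:7.1f} W of heat")
```

The output falls slowly and cannot be turned up or down, which is the key contrast with a reactor, whose fission rate can be controlled.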

So how will a nuclear-reactor-powered spacecraft work?

Despite operational differences, the fundamentals of running a nuclear reactor in space are much the same as they are on Earth. First, get some uranium fuel; then bombard it with neutrons. This ruptures the uranium’s unstable atomic nuclei, which expel a torrent of extra neutrons—and that rapidly escalates into a self-sustaining, roasting-hot nuclear fission reaction. Its prodigious heat output can then be used to produce electricity.

Doing this in space may sound like an act of lunacy, but it’s not: The idea, and even a lot of the basic technology, has been around for decades. The Soviet Union sent dozens of nuclear reactors into orbit (often to power spy satellites), while the US deployed just one, known as SNAP-10A, back in 1965—a technological demonstration to see if it would operate normally in space. The aim was for the reactor to generate electricity for at least a year, but it ran for just over a month before a high-voltage failure in the spacecraft caused it to malfunction and shut down.

Now, more than half a century later, the US wants its second-ever space-based nuclear reactor to do something totally different: power an interplanetary spacecraft.

To be clear, the US has started, and terminated, myriad programs looking into nuclear propulsion. The latest casualty was DRACO, a collaboration between NASA and the Department of Defense, which ended in 2025. Like several previous efforts, DRACO was canceled because of a mix of high experimentation costs, lower prices for conventional rocket propulsion, and the difficulty of ensuring that ground tests could be performed safely and effectively (such tests involve an incredibly powerful nuclear reaction, after all).

But now external considerations may be changing the calculus. The Artemis program has jump-started America’s return to the moon, and the new space race has palpable momentum behind it. The first nation to deploy nuclear propulsion would have a serious advantage navigating through deep space.

“I think it’s a very doable technology,” says Philip Metzger, a spaceflight engineering researcher at the Florida Space Institute. “I’m happy to see them finally doing this.”

One version of this technology is known as nuclear thermal propulsion, or NTP. You start with a nuclear reactor, one that’s cooking at around 5,000°F. Then “you’ve got a cold gas, and you squirt cold gas over the hot reactor,” says Middleburgh. “The gas expands, you shoot it out the back of a nozzle, and you have an impulse. And that impulse drives you forward.”

Because the thrust depends on the speed of the gas being ejected, the propellant gas needs to be light, making hydrogen a popular choice. But hydrogen is a corrosive and explosive substance, so using it in NTP engines can make them precarious to operate. On top of this, NTP doesn’t necessarily have a very long operating life.
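Why a light gas matters can be sketched with the textbook ideal-gas estimate of exhaust speed, which scales with the square root of temperature divided by molar mass. The snippet below is a rough upper bound under stated assumptions, not a model of any real engine: it assumes full expansion into vacuum, uses room-temperature heat-capacity ratios, and includes helium and nitrogen only as hypothetical comparison gases.

```python
import math

GAS_CONSTANT = 8.314462618  # universal gas constant, J/(mol*K)

# name -> (molar mass in kg/mol, heat-capacity ratio).
# The ratios are room-temperature textbook values, a simplifying assumption.
PROPELLANTS = {
    "hydrogen": (0.002016, 1.40),
    "helium": (0.004003, 1.667),
    "nitrogen": (0.028014, 1.40),
}


def fahrenheit_to_kelvin(temp_f: float) -> float:
    """Convert degrees Fahrenheit to kelvin."""
    return (temp_f - 32.0) * 5.0 / 9.0 + 273.15


def ideal_exhaust_velocity(temp_k: float, molar_mass: float, gamma: float) -> float:
    """Upper-bound exhaust speed (m/s) for an ideal gas expanded fully into vacuum."""
    return math.sqrt(2.0 * gamma / (gamma - 1.0) * GAS_CONSTANT * temp_k / molar_mass)


if __name__ == "__main__":
    reactor_temp_k = fahrenheit_to_kelvin(5000.0)  # the reactor temperature cited above
    for name, (molar_mass, gamma) in PROPELLANTS.items():
        speed = ideal_exhaust_velocity(reactor_temp_k, molar_mass, gamma)
        print(f"{name:>8}: about {speed:,.0f} m/s")
```

At the same reactor temperature, hydrogen leaves the nozzle several times faster than nitrogen would, which is why designers accept its handling problems.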

Alternatively, there’s nuclear electric propulsion, or NEP, which “is very low thrust, but very efficient, so you can use it for a long period of time,” says Sebastian Corbisiero, the US Department of Energy’s national technical director of space reactor programs. This method uses heat from a fission reactor to generate power. That power is used to electrify a gas and then blast it out of the spacecraft, generating thrust.
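The “very low thrust, but very efficient” trade-off follows from two standard relations: the thrust an electric thruster can produce from a given power, and the rocket equation for how much propellant a maneuver consumes. The numbers below are assumptions chosen for illustration, not SR-1 specifications: 20 kilowatts of electrical power reaching the thruster, 60% thruster efficiency, an electric exhaust speed of 20,000 meters per second, a chemical exhaust speed of about 4,400 meters per second, and a hypothetical 5,000-meter-per-second maneuver.

```python
import math


def thrust_newtons(electric_power_w: float, efficiency: float, exhaust_velocity_ms: float) -> float:
    """Thrust when a fraction `efficiency` of the power becomes exhaust kinetic energy."""
    return 2.0 * efficiency * electric_power_w / exhaust_velocity_ms


def propellant_fraction(delta_v_ms: float, exhaust_velocity_ms: float) -> float:
    """Share of initial mass that must be propellant (Tsiolkovsky rocket equation)."""
    return 1.0 - math.exp(-delta_v_ms / exhaust_velocity_ms)


if __name__ == "__main__":
    # All values below are illustrative assumptions.
    power_w = 20_000.0
    efficiency = 0.6
    electric_ve = 20_000.0
    chemical_ve = 4_400.0
    delta_v = 5_000.0

    print(f"electric thrust: {thrust_newtons(power_w, efficiency, electric_ve):.2f} N")
    print(f"propellant share, electric: {propellant_fraction(delta_v, electric_ve):.0%}")
    print(f"propellant share, chemical: {propellant_fraction(delta_v, chemical_ve):.0%}")
```

Under these assumptions the push is only about a newton, but the same maneuver needs far less propellant than a chemical engine would, provided the thruster can run for months.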

Both NTP and NEP have been investigated by US researchers, because both have the added benefit of making it easier and safer for human beings to explore the solar system. Astronauts in space are exposed to harmful cosmic radiation, but because nuclear propulsion makes spacecraft speedier and more agile, they’d spend less time exposed to it. “It solves the radiation problem,” says Metzger. “That’s one of the main motivations for inventing better propulsion to and from Mars.”

How to build a nuclear-powered spaceship

For SR-1, NASA has opted for nuclear electric propulsion. NEP is “a much simpler affair” than its thermal counterpart, says Middleburgh. Essentially, you just need to plug a nuclear reactor into a power-and-propulsion system. Luckily for NASA, it’s already got one.

For many years, NASA—along with its space agency partners in Canada, Europe, Japan, and the Middle East—was preparing for Gateway, meant to be humanity’s first space station to orbit around the moon. Isaacman canceled the project in March, but that doesn’t mean its technology will go to waste; the power-and-propulsion element of the nixed space station will be used in SR-1 instead. This contraption was going to be powered by solar energy. It’ll now be attached to an in-development nuclear reactor custom built to survive in space.

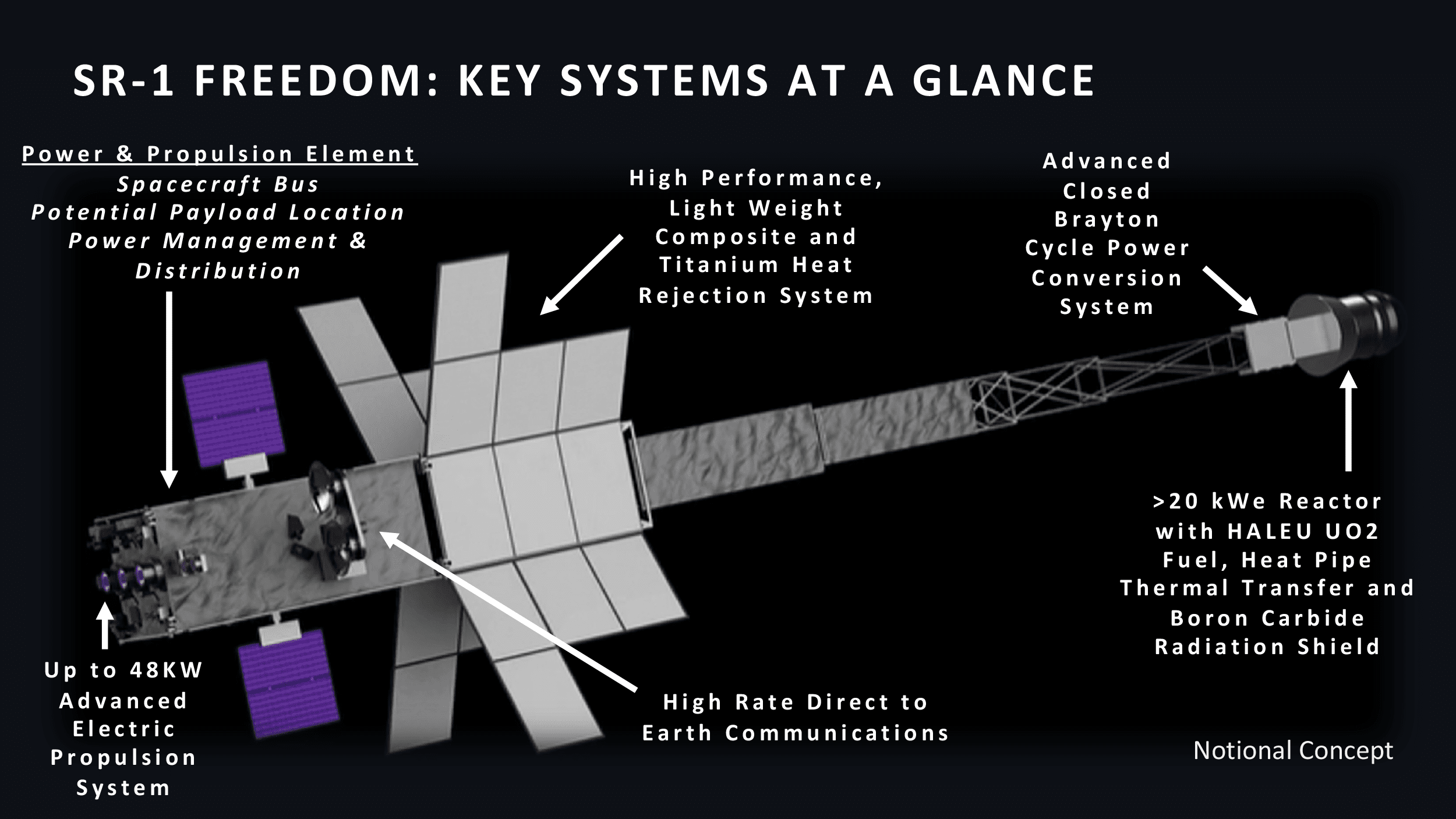

What might the SR-1 look like? MIT Technology Review saw a presentation by Steve Sinacore, program executive of NASA’s Space Reactor Office, that offers some clues. So far, the concept art makes it look like a colossal fletched arrow. At the back will be the power-and-propulsion system, while its tip will hold a 20-kilowatt-or-greater uranium-filled nuclear reactor. (For context, a typical nuclear power plant on Earth produces about a gigawatt, roughly 50,000 times as much as a 20-kilowatt reactor.)

The “fletches” on SR-1 are large fins that allow the reactor to cool down. “You have to have really large radiators,” says Holmes, since the nuclear fission process produces so much heat that much of it has to be vented into space—otherwise, the reactor and spacecraft will melt.
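A back-of-the-envelope estimate shows why the radiators have to be so large. In a vacuum, heat can leave only as radiation, which the Stefan-Boltzmann law ties to surface area and the fourth power of temperature. The figures below are assumptions for illustration, not SR-1 design values: the 20 kilowatts is treated as electrical output (the presentation does not say), the heat-to-electricity conversion efficiency is set at a hypothetical 20%, the radiator runs at 500 kelvin with an emissivity of 0.9, and absorbed sunlight is ignored.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W/(m^2*K^4)


def waste_heat_watts(electric_power_w: float, conversion_efficiency: float) -> float:
    """Heat left over when only part of the reactor's heat becomes electricity."""
    thermal_power_w = electric_power_w / conversion_efficiency
    return thermal_power_w - electric_power_w


def radiator_area_m2(heat_w: float, radiator_temp_k: float, emissivity: float = 0.9) -> float:
    """Radiating surface needed to emit `heat_w`, ignoring absorbed sunlight."""
    return heat_w / (emissivity * STEFAN_BOLTZMANN * radiator_temp_k ** 4)


if __name__ == "__main__":
    # Illustrative assumptions: 20 kW electric, 20% conversion efficiency.
    heat = waste_heat_watts(20_000.0, 0.20)
    for temp_k in (400.0, 500.0, 600.0):
        area = radiator_area_m2(heat, temp_k)
        print(f"radiator at {temp_k:.0f} K: about {area:.0f} square meters")
```

Because emitted power rises with the fourth power of temperature, a hotter radiator can be much smaller, but it also limits how cool the rest of the system can run.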

According to that presentation, the spacecraft’s hardware development is due to start this June. By January 2028, SR-1’s systems should be ready for assembly and testing. And by that October, the spacecraft will arrive at the launch site, ready for liftoff before the year’s end. Will the nuclear reactor manage to hold itself together? “Going through the launch safely is going to be a challenge,” says Middleburgh. “You are being shaken, rattled, and rolled.”

Then, he says, “once you’re up in space, once you’ve got through that few minutes of hell in getting there, it’s zero-gravity considerations you have to worry about.” The question then becomes: Will the mechanics of the reactor, built on terra firma, still work?

For safety reasons, the nuclear reactor will be switched on around two days post-launch, when it’s comfortably in space. Uranium isn’t tremendously dangerous by itself, but that can’t be said of the nuclear waste products that emerge when the reactor is activated, so you don’t want any of that to fall back to Earth.

If this schedule is adhered to, and SR-1 works as planned, it’s expected to reach Mars about a year after launch. “It’s an aggressive timeline,” says Holmes, something she suspects is being driven partly by China’s and Russia’s own deep-space nuclear ambitions. The two countries aim to place their own nuclear reactor on the moon’s surface to power the planned International Lunar Research Station—a jointly operated lunar base—by 2035.

Whether it flies or fails in space, SR-1’s operations should help NASA with putting a nuclear reactor on the moon soon after. “All of the things we’d be learning about how that system operates in space [are] very helpful for a surface application, because basically it’s the same,” says Corbisiero. “There’s still no air on the moon.”

And if SR-1 does triumph, it will be a game-changing victory for NASA. It will also be “a massive win for the human race, frankly,” says Middleburgh. “It will be a marvel of engineering, and it will move the dial in humans potentially taking a step on Mars.” Like many of his colleagues, including Holmes, he remains thrilled by the prospect of the first-ever nuclear-powered interplanetary spacecraft—even with the incredibly ambitious timeline.

“These are the things that get us up in the morning,” he says. “These are the sorts of things we will remember when we’re old.”