In Bengaluru, India, Adithya Kolavi felt a mix of excitement and validation as he watched DeepSeek unleash its disruptive language model on the world earlier this year. The Chinese technology rivaled the best of the West in terms of benchmarks, but it had been built with far less capital in far less time.

“I thought: ‘This is how we disrupt with less,’” says Kolavi, the 20-year-old founder of the Indian AI startup CognitiveLab. “If DeepSeek could do it, why not us?”

But for Abhishek Upperwal, founder of Soket AI Labs and architect of one of India’s earliest efforts to develop a foundation model, the moment felt more bittersweet.

Upperwal’s model, called Pragna-1B, had struggled to stay afloat with tiny grants while he watched global peers raise millions. The multilingual model had a relatively modest 1.25 billion parameters and was designed to reduce the “language tax,” the extra costs that arise because India—unlike the US or even China—has a multitude of languages to support. His team had trained it, but limited resources meant it couldn’t scale. As a result, he says, the project became a proof of concept rather than a product.

“If we had been funded two years ago, there’s a good chance we’d be the ones building what DeepSeek just released,” he says.

Kolavi’s enthusiasm and Upperwal’s dismay reflect the spectrum of emotions among India’s AI builders. Despite its status as a global tech hub, the country lags far behind the likes of the US and China when it comes to homegrown AI. That gap has opened largely because India has chronically underinvested in R&D, institutions, and invention. Meanwhile, since no one native language is spoken by the majority of the population, training language models is far more complicated than it is elsewhere.

Historically known as the global back office for the software industry, India has a tech ecosystem that evolved with a services-first mindset. Giants like Infosys and TCS built their success on efficient software delivery, but invention was neither prioritized nor rewarded. Meanwhile, India’s R&D spending hovered at just 0.65% of GDP ($25.4 billion) in 2024, far behind China’s 2.68% ($476.2 billion) and the US’s 3.5% ($962.3 billion). The muscle to invent and commercialize deep tech, from algorithms to chips, was just never built.

Isolated pockets of world-class research do exist within government agencies like the DRDO (Defense Research & Development Organization) and ISRO (Indian Space Research Organization), but their breakthroughs rarely spill into civilian or commercial use. India lacks the bridges to connect risk-taking research to commercial pathways, the way DARPA does in the US. Meanwhile, much of India’s top talent migrates abroad, drawn to ecosystems that better understand and, crucially, fund deep tech.

So when the open-source foundation model DeepSeek-R1 suddenly outperformed many global peers, it struck a nerve. This launch by a Chinese startup prompted Indian policymakers to confront just how far behind the country was in AI infrastructure, and how urgently it needed to respond.

India responds

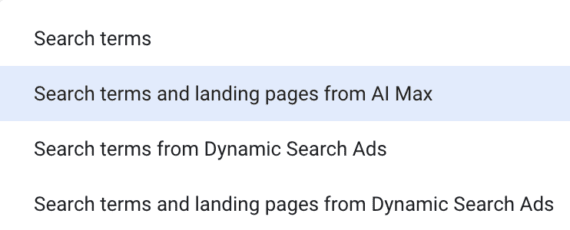

In January 2025, 10 days after DeepSeek-R1’s launch, the Ministry of Electronics and Information Technology (MeitY) solicited proposals for India’s own foundation models, which are large AI models that can be adapted to a wide range of tasks. Its public tender invited private-sector cloud and data‑center companies to reserve GPU compute capacity for government‑led AI research.

Providers including Jio, Yotta, E2E Networks, Tata, AWS partners, and CDAC responded. Through this arrangement, MeitY suddenly had access to nearly 19,000 GPUs at subsidized rates, repurposed from private infrastructure and allocated specifically to foundational AI projects. This triggered a surge of proposals from companies wanting to build their own models.

Within two weeks, MeitY had 67 proposals in hand. That number tripled by mid-March.

In April, the government announced plans to develop six large-scale models by the end of 2025, plus 18 additional AI applications targeting sectors like agriculture, education, and climate action. Most notably, it tapped Sarvam AI to build a 70-billion-parameter model optimized for Indian languages and needs.

For a nation long restricted by limited research infrastructure, things moved at record speed, marking a rare convergence of ambition, talent, and political will.

“India could do a Mangalyaan in AI,” said Gautam Shroff of IIIT-Delhi, referencing the country’s cost-effective, and successful, Mars orbiter mission.

Jaspreet Bindra, cofounder of AI&Beyond, an organization focused on teaching AI literacy, captured the urgency: “DeepSeek is probably the best thing that happened to India. It gave us a kick in the backside to stop talking and start doing something.”

The language problem

One of the most fundamental challenges in building foundational AI models for India is the country’s sheer linguistic diversity. With 22 official languages, hundreds of dialects, and millions of people who are multilingual, India poses a problem that few existing LLMs are equipped to handle.

Whereas a massive amount of high-quality web data is available in English, Indian languages collectively make up less than 1% of online content. The lack of digitized, labeled, and cleaned data in languages like Bhojpuri and Kannada makes it difficult to train LLMs that understand how Indians actually speak or search.

Global tokenizers, which break text into units a model can process, also perform poorly on many Indian scripts, misinterpreting characters or skipping some altogether. As a result, even when Indian languages are included in multilingual models, they’re often poorly understood and inaccurately generated.

And unlike OpenAI and DeepSeek, which achieved scale using structured English-language data, Indian teams often begin with fragmented and low-quality data sets encompassing dozens of Indian languages. This makes the early steps of training foundation models far more complex.

Nonetheless, a small but determined group of Indian builders is starting to shape the country’s AI future.

For example, Sarvam AI has created OpenHathi-Hi-v0.1, an open-source Hindi language model that shows the Indian AI field’s growing ability to address the country’s vast linguistic diversity. The model, built on Meta’s Llama 2 architecture, was trained on 40 billion tokens of Hindi and related Indian-language content, making it one of the largest open-source Hindi models available to date.

Pragna-1B, the multilingual model from Upperwal, is more evidence that India could solve for its own linguistic complexity. Trained on 300 billion tokens for just $250,000, it introduced a technique called “balanced tokenization” to address a unique challenge in Indian AI, enabling a 1.25-billion-parameter model to behave like a much larger one.

The issue is that Indian languages use complex scripts and agglutinative grammar, where words are formed by stringing together many smaller units of meaning using prefixes and suffixes. Unlike English, which separates words with spaces and follows relatively simple structures, Indian languages like Hindi, Tamil, and Kannada often lack clear word boundaries and pack a lot of information into single words. Standard tokenizers struggle with such inputs. They end up breaking Indian words into too many tokens, which bloats the input and makes it harder for models to understand the meaning efficiently or respond accurately.
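A rough way to see this token inflation is to look at the worst case, in which a tokenizer has no learned pieces for a script and falls back to raw UTF-8 bytes. The sketch below is only an illustration under that assumption. It is not Pragna-1B's balanced tokenization or any specific production tokenizer, and the helper name `bytes_per_char` is hypothetical.

```python
# Illustrative only: assumes a tokenizer that emits one token per UTF-8 byte
# when it has no learned vocabulary entries for a script.

def bytes_per_char(text: str) -> float:
    """Average number of UTF-8 bytes needed per Unicode code point."""
    return len(text.encode("utf-8")) / len(text)

ENGLISH = "hello"
# "namaste" in Devanagari, written with escapes so the code points are explicit
HINDI = "\u0928\u092e\u0938\u094d\u0924\u0947"

for label, word in [("English", ENGLISH), ("Hindi", HINDI)]:
    print(
        label,
        len(word), "code points,",
        len(word.encode("utf-8")), "bytes,",
        bytes_per_char(word), "bytes per code point",
    )
```

Under this byte-fallback assumption, Devanagari text costs three tokens per code point where basic Latin text costs one. Tokenizers with learned Indic vocabulary narrow that gap, which is the inefficiency that custom Indic tokenizers aim to reduce.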

With the new technique, however, “a billion-parameter model was equivalent to a 7 billion one like Llama 2,” Upperwal says. This performance was particularly marked in Hindi and Gujarati, where global models often underperform because of limited multilingual training data. It was a reminder that with smart engineering, small teams could still push boundaries.

Upperwal eventually repurposed his core tech to build speech APIs for 22 Indian languages, a more immediate solution better suited to rural users who are often left out of English-first AI experiences.

“If the path to AGI is a hundred-step process, training a language model is just step one,” he says.

At the other end of the spectrum are startups with more audacious aims. Krutrim-2, for instance, is a 12-billion-parameter multilingual language model optimized for English and 22 Indian languages.

Krutrim-2 is attempting to solve India’s specific problems of linguistic diversity, low-quality data, and cost constraints. The team built a custom Indic tokenizer, optimized training infrastructure, and designed models for multimodal and voice-first use cases from the start, crucial in a country where text interfaces can be a problem.

Krutrim’s bet is that its approach will not only enable Indian AI sovereignty but also offer a model for AI that works across the Global South.

Besides public funding and compute infrastructure, India also needs institutional support for talent, research depth, and the long-horizon capital that together produce globally competitive science.

While venture capital still hesitates to bet on research, new experiments are emerging. Paras Chopra, an entrepreneur who previously built and sold the software-as-a-service company Wingify, is now personally funding Lossfunk, a Bell Labs–style AI residency program designed to attract independent researchers with a taste for open-source science.

“We don’t have role models in academia or industry,” says Chopra. “So we’re creating a space where top researchers can learn from each other and have startup-style equity upside.”

Government-backed bet on sovereign AI

The clearest marker of India’s AI ambitions came when the government selected Sarvam AI to develop a model focused on Indian languages and voice fluency.

The idea is that it would not only help Indian companies compete in the global AI arms race but benefit the wider population as well. “If it becomes part of the India stack, you can educate hundreds of millions through conversational interfaces,” says Bindra.

Sarvam was given access to 4,096 Nvidia H100 GPUs for training a 70-billion-parameter Indian language model over six months. (The company previously released a 2-billion-parameter model trained in 10 Indian languages, called Sarvam-1.)
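For a sense of scale, that allocation can be converted into GPU-hours. The sketch below assumes a six-month window of 182 days and round-the-clock use of every GPU, neither of which is stated in the reporting, so the result is an upper bound rather than a reported figure. The function name `max_gpu_hours` is illustrative.

```python
# Assumptions (not from the reporting): 182 days in six months, 100% utilization.
GPUS = 4096
DAYS = 182
HOURS_PER_DAY = 24

def max_gpu_hours(gpus: int, days: int) -> int:
    """Upper bound on GPU-hours if every GPU runs continuously."""
    return gpus * days * HOURS_PER_DAY

print(max_gpu_hours(GPUS, DAYS))  # 17891328
```

That works out to just under 18 million H100 GPU-hours at most. Real training runs lose time to restarts, evaluation, and idle capacity, so the usable figure would be lower.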

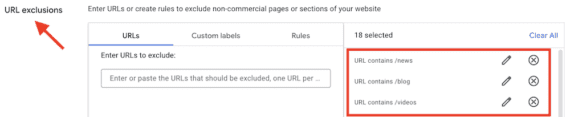

Sarvam’s project and others are part of a larger strategy called the IndiaAI Mission, a $1.25 billion national initiative launched in March 2024 to build out India’s core AI infrastructure and make advanced tools more widely accessible. Led by MeitY, the mission is focused on supporting AI startups, particularly those developing foundation models in Indian languages and applying AI to key sectors such as health care, education, and agriculture.

Under its compute program, the government is deploying more than 18,000 GPUs, including nearly 13,000 high-end H100 chips, to a select group of Indian startups that currently includes Sarvam, Upperwal’s Soket Labs, Gnani AI, and Gan AI.

The mission also includes plans to launch a national multilingual data set repository, establish AI labs in smaller cities, and fund deep-tech R&D. The broader goal is to equip Indian developers with the infrastructure needed to build globally competitive AI and ensure that the results are grounded in the linguistic and cultural realities of India and the Global South.

According to Abhishek Singh, CEO of IndiaAI and an officer with MeitY, India’s broader push into deep tech is expected to raise around $12 billion in research and development investment over the next five years.

This includes approximately $162 million through the IndiaAI Mission, with about $32 million earmarked for direct startup funding. The National Quantum Mission is contributing another $730 million to support India’s ambitions in quantum research. In addition to this, the national budget document for 2025-26 announced a $1.2 billion Deep Tech Fund of Funds aimed at catalyzing early-stage innovation in the private sector.

The rest, nearly $9.9 billion, is expected to come from private and international sources including corporate R&D, venture capital firms, high-net-worth individuals, philanthropists, and global technology leaders such as Microsoft.
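The split quoted by Singh can be checked with simple arithmetic. The sketch below uses only the figures given above, in millions of US dollars, and treats the roughly $12 billion total as exact for illustration. The variable names are illustrative.

```python
# All amounts in millions of US dollars, taken from the figures quoted above.
TOTAL_EXPECTED = 12_000       # "around $12 billion", treated as exact here
INDIAAI_MISSION = 162         # includes the ~$32 million for direct startup funding
QUANTUM_MISSION = 730
DEEP_TECH_FUND_OF_FUNDS = 1_200

public_total = INDIAAI_MISSION + QUANTUM_MISSION + DEEP_TECH_FUND_OF_FUNDS
private_and_international = TOTAL_EXPECTED - public_total

print(public_total)               # 2092
print(private_and_international)  # 9908
```

The three public programs add up to about $2.1 billion, leaving about $9.9 billion to come from private and international sources. The $32 million for startups is not added separately because it is already part of the $162 million.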

IndiaAI has now received more than 500 applications from startups proposing use cases in sectors like health, governance, and agriculture.

“We’ve already announced support for Sarvam, and 10 to 12 more startups will be funded solely for foundational models,” says Singh. Selection criteria include access to training data, talent depth, sector fit, and scalability.

Open or closed?

The IndiaAI program, however, is not without controversy. Sarvam’s model is being built as a closed model, not an open-source one, despite its public tech roots. That has sparked debate about the proper balance between private enterprise and the public good.

“True sovereignty should be rooted in openness and transparency,” says Amlan Mohanty, an AI policy specialist. He points to DeepSeek-R1, which despite its 236-billion-parameter size was made freely available for commercial use.

Its release allowed developers around the world to fine-tune it on low-cost GPUs, creating faster variants and extending its capabilities to non-English applications.

“Releasing an open-weight model with efficient inference can democratize AI,” says Hancheng Cao, an assistant professor of information systems and operations management at Emory University. “It makes it usable by developers who don’t have massive infrastructure.”

IndiaAI, however, has taken a neutral stance on whether publicly funded models should be open-source.

“We didn’t want to dictate business models,” says Singh. “India has always supported open standards and open source, but it’s up to the teams. The goal is strong Indian models, whatever the route.”

There are other challenges as well. In late May, Sarvam AI unveiled Sarvam‑M, a 24-billion-parameter multilingual LLM fine-tuned for 10 Indian languages and built on top of Mistral Small, an efficient model developed by the French company Mistral AI. Sarvam’s cofounder Vivek Raghavan called the model “an important stepping stone on our journey to build sovereign AI for India.” But its download numbers were underwhelming, with only 300 in the first two days. The venture capitalist Deedy Das called the launch “embarrassing.”

And the issues go beyond the lukewarm early reception. Many developers in India still lack easy access to GPUs, and the broader ecosystem for Indian-language AI applications is still nascent.

The compute question

Compute scarcity is emerging as one of the most significant bottlenecks in generative AI, not just in India but across the globe. For countries still heavily reliant on imported GPUs and lacking domestic fabrication capacity, the cost of building and running large models is often prohibitive.

India still imports most of its chips rather than producing them domestically, and training large models remains expensive. That’s why startups and researchers alike are focusing on software-level efficiencies that involve smaller models, better inference, and fine-tuning frameworks that optimize for performance on fewer GPUs.

“The absence of infrastructure doesn’t mean the absence of innovation,” says Cao. “Supporting optimization science is a smart way to work within constraints.”

Yet Singh of IndiaAI argues that the tide is turning on the infrastructure challenge thanks to the new government programs and private-public partnerships. “I believe that within the next three months, we will no longer face the kind of compute bottlenecks we saw last year,” he says.

India also has a cost advantage.

According to Gupta, building a hyperscale data center in India costs about $5 million, roughly half what it would cost in markets like the US, Europe, or Singapore. That’s thanks to affordable land, lower construction and labor costs, and a large pool of skilled engineers.

For now, India’s AI ambitions seem less about leapfrogging OpenAI or DeepSeek and more about strategic self-determination. Whether its approach takes the form of smaller sovereign models, open ecosystems, or public-private hybrids, the country is betting that it can chart its own course.

While some experts argue that the government’s action, or reaction (to DeepSeek), is performative and aligned with its nationalistic agenda, many startup founders are energized. They see the growing collaboration between the state and the private sector as a real opportunity to overcome India’s long-standing structural challenges in tech innovation.

At a Meta summit held in Bengaluru last year, Nandan Nilekani, the chairman of Infosys, urged India to resist chasing a me-too AI dream.

“Let the big boys in the Valley do it,” he said of building LLMs. “We will use it to create synthetic data, build small language models quickly, and train them using appropriate data.”

His view that India should prioritize strength over spectacle had a divided reception. But it reflects a broader, growing debate over whether India should play a different game altogether.

“Trying to dominate every layer of the stack isn’t realistic, even for China,” says Shobhankita Reddy, a researcher at the Takshashila Institution, an Indian public policy nonprofit. “Dominate one layer, like applications, services, or talent, so you remain indispensable.”

Correction: We amended Reddy’s name.