If you lead a marketing team, chances are you’ve had this conversation:

“How are the campaigns doing?”

“Well, our ROAS is 4:1.”

The room breathes a collective sigh of relief. The good news: the marketing budget is justified (for the time being).

But here’s the problem: that number might not actually tell you anything useful.

Return on ad spend (ROAS) has long been the go-to metric for measuring paid media performance. It’s clean. It’s easy to calculate.

And let’s be honest: it looks great in a boardroom slide deck. But that simplicity can be deceiving.

When CMOs treat ROAS as the be-all and end-all, it can create a warped view of what’s actually driving meaningful growth.

It often rewards short-term wins, punishes necessary investment periods, and leaves internal and agency teams chasing vanity benchmarks instead of business results.

This article isn’t a hit piece on ROAS. It’s a reality check on meaningful key performance indicators (KPIs). ROAS can be useful, but it’s not your North Star.

And if you’re serious about long-term revenue growth, customer lifetime value, and competitive market share, it’s time to rethink what success really looks like.

Why ROAS Isn’t Always What It Seems

On paper, ROAS is straightforward: revenue divided by ad spend. Spend $10,000 and generate $40,000 in sales, and you’ve got a 4:1 ROAS.
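As a quick illustration, the calculation can be sketched in a few lines of Python. The function name `roas` is just a label chosen for this example, and the figures are the ones from the paragraph above.

```python
def roas(revenue: float, ad_spend: float) -> float:
    """Return on ad spend: attributed revenue divided by ad spend."""
    if ad_spend <= 0:
        raise ValueError("ad_spend must be positive")
    return revenue / ad_spend


# The example above: $40,000 in sales on $10,000 of spend.
print(f"{roas(40_000, 10_000):.0f}:1")
```

Note that the only inputs are revenue and spend. Everything else about the business is invisible to this number.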

But, under the hood, it’s not so simple.

Here are a few reasons why ROAS can often mislead:

- It favors existing customers. Your branded campaigns and remarketing lists usually show sky-high ROAS, but they’re mostly capturing people already in your funnel. That’s not growth; it’s maintenance.

- It ignores profit margins. A $40 cost-per-acquisition (CPA) might look great in one product line and catastrophic in another. ROAS doesn’t account for your cost of goods, fulfillment, or operational costs.

- It limits (actual) growth. If your only goal is to “hit ROAS,” you’ll throttle spend on upper-funnel or exploratory campaigns that could fuel future revenue.

- It can be gamed. Agencies and internal teams might optimize for ROAS simply because that’s the KPI they’re judged on, even if it means saying no to high-potential but lower-efficiency campaigns.
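To make the margin point concrete, here is a rough sketch of how the same 4:1 ROAS can mean very different things for profit. The gross margins (60% and 20%) and the function names are hypothetical, and the sketch only subtracts cost of goods and ad spend; fulfillment and operational costs would lower the result further.

```python
def contribution_profit(revenue: float, ad_spend: float, gross_margin: float) -> float:
    """Profit left after cost of goods and ad spend (other costs ignored)."""
    return revenue * gross_margin - ad_spend


def break_even_roas(gross_margin: float) -> float:
    """ROAS at which gross profit exactly covers ad spend."""
    return 1 / gross_margin


# Hypothetical product lines, both reporting the same 4:1 ROAS.
product_lines = {"high_margin": 0.60, "low_margin": 0.20}
for name, margin in product_lines.items():
    profit = contribution_profit(40_000, 10_000, margin)
    print(f"{name}: profit {profit:,.0f}, break-even ROAS {break_even_roas(margin):.1f}:1")
```

Under these assumptions, the high-margin line earns roughly $14,000 while the low-margin line loses roughly $2,000, even though both report a 4:1 ROAS.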

And perhaps most importantly, ROAS often ignores timing.

You might lose money on day 1, break even by day 14, and profit significantly by day 90. But ROAS, by default, only tells you what happened in the reporting window you chose.

That’s not a North Star. That’s a snapshot in time.
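The timing effect is easy to see with a small sketch. The cohort below is invented for illustration: $1,000 of spend, with purchases recorded as (days since the ad click, revenue). The function `roas_at_window` is a hypothetical helper, not a platform feature.

```python
def roas_at_window(purchases, ad_spend, window_days):
    """ROAS counting only revenue that arrived within the reporting window."""
    revenue = sum(amount for day, amount in purchases if day <= window_days)
    return revenue / ad_spend


# Hypothetical cohort: (days since click, revenue) for $1,000 of spend.
purchases = [(1, 400.0), (7, 300.0), (14, 300.0), (45, 600.0), (90, 900.0)]
ad_spend = 1_000.0

for window in (1, 14, 90):
    print(f"Day {window:>2}: ROAS {roas_at_window(purchases, ad_spend, window):.1f}:1")
```

The same campaign looks like a loss at day 1, break-even at day 14, and clearly profitable at day 90. Nothing about the campaign changed, only the window.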

ROAS Is Still Useful, If You Know When & How To Use It

Let’s be clear: ROAS isn’t bad to report on. It just needs additional context.

There are plenty of scenarios where ROAS is helpful:

- Comparing performance between campaigns, channels, and platforms.

- Evaluating high-volume SKU efficiency in ecommerce.

- Reporting on short-term promotional campaigns.

- Reviewing the efficiency of remarketing or loyalty campaigns.

The key is to treat ROAS like a diagnostic tool, not a destination. It’s one piece of the story, not the whole narrative.

When CMOs and marketing leaders make ROAS the only metric that matters, they end up over-indexing on campaigns that drive immediate revenue, often at the cost of sustainable growth.

What Should Be Your North Star Metric?

If it’s not ROAS, then what should it be?

The truth is, your North Star depends on your business model and goals. Here are a few KPI candidates that typically give a better long-term signal of paid media health.

1. Customer Lifetime Value (CLV) To CAC Ratio

This is arguably the best lens through which to evaluate your investment. If you’re acquiring customers who buy once and never return, you’ll never scale profitably.

Tracking your customer acquisition cost (CAC) against lifetime value forces you to think beyond the first purchase.

Why does this ratio matter?

CLV:CAC shows whether you’re building a sustainable business model. A healthy ratio is often around 3:1 or better, depending on your margins.

To put this metric to use, calculate CAC at the campaign level and model projected CLV by channel or audience.

If you’re seeing CLV gains over time from specific campaigns, that’s a strong sign of durable growth.
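A minimal sketch of that comparison might look like the following. The campaign names and numbers are made up, and `projected_clv` is assumed to come from whatever CLV model your team already uses; the 3:1 threshold is the rough benchmark mentioned above, not a universal rule.

```python
from dataclasses import dataclass


@dataclass
class CampaignStats:
    name: str
    ad_spend: float
    new_customers: int
    projected_clv: float  # per customer, from your own CLV model (assumed input)

    @property
    def cac(self) -> float:
        """Customer acquisition cost: spend per new customer."""
        return self.ad_spend / self.new_customers

    @property
    def clv_to_cac(self) -> float:
        return self.projected_clv / self.cac


# Hypothetical campaigns for illustration only.
campaigns = [
    CampaignStats("prospecting_video", 20_000, 200, 350.0),
    CampaignStats("branded_search", 5_000, 250, 50.0),
]

TARGET_RATIO = 3.0  # the rough 3:1 benchmark; adjust for your margins
for c in campaigns:
    status = "meets" if c.clv_to_cac >= TARGET_RATIO else "below"
    print(f"{c.name}: CAC {c.cac:.0f}, CLV:CAC {c.clv_to_cac:.1f}:1 ({status} target)")
```

In this invented data, the campaign with the cheaper CAC is the one that falls short, because the customers it brings in are worth less over time.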

2. Incremental Revenue

Not all revenue is created equal. Incrementality helps you understand what your paid media efforts are truly adding, not just capturing right now.

Why does this metric matter?

Paid campaigns often get credit for conversions that might have happened anyway. Branded search is a classic example. Measuring incrementality filters out that noise.

Some examples of how to use this metric include:

- Set up geo-holdout tests.

- Use audience exclusions.

- Use the incrementality testing tools offered by Google and Meta.

Incrementality is not always easy to measure, but it brings clarity to where your dollars are actually making a difference.
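For a sense of how a geo-holdout readout works, here is a deliberately simplified sketch. It assumes a new campaign runs in the test regions and is withheld from the holdout regions, that neither group saw the campaign in the pre-period, and that both periods are the same length. All figures and names are hypothetical, and a real test would also need well-matched regions and a significance check.

```python
def incremental_revenue(test_pre, test_post, control_pre, control_post):
    """Estimate the revenue the ads added in the test regions.

    The holdout (control) regions show how revenue would have moved
    without ads; that trend is applied to the test regions' baseline.
    """
    expected_without_ads = test_pre * (control_post / control_pre)
    return test_post - expected_without_ads


# Hypothetical figures for equal-length pre-test and test periods.
added = incremental_revenue(
    test_pre=100_000, test_post=130_000,
    control_pre=50_000, control_post=55_000,
)
ad_spend = 10_000
platform_reported_revenue = 40_000  # what the ad platform attributed to itself

print(f"Platform ROAS:    {platform_reported_revenue / ad_spend:.1f}:1")
print(f"Incremental ROAS: {added / ad_spend:.1f}:1")
```

With these numbers, about half of the revenue the platform claimed would likely have happened anyway, which is exactly the noise incrementality testing is meant to filter out.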

3. Payback Period

This metric measures how long it takes for a campaign or customer to break even.

Why does this metric matter as a potential North Star?

Not every investment has to pay off instantly. But, leadership should be aligned on how long you’re willing to wait before seeing a return on investment (ROI). That transparency allows you to fund top-of-funnel efforts with more confidence.

To use this metric in practice, try tagging customer cohorts by acquisition source or campaign. Then, track how long it takes to recoup their acquisition cost through future purchases or subscription value.
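One way to sketch that tracking is shown below. The cohort names, costs, and purchase values are invented, and `payback_days` is a hypothetical helper. It treats each purchase value as the amount that counts toward recouping cost, so you may prefer to feed it margin rather than revenue.

```python
from typing import Iterable, Optional, Tuple


def payback_days(acquisition_cost: float,
                 purchases: Iterable[Tuple[int, float]]) -> Optional[int]:
    """First day (since acquisition) on which cumulative value covers the cost.

    Returns None if the cohort has not paid back yet.
    """
    cumulative = 0.0
    for day, value in sorted(purchases):
        cumulative += value
        if cumulative >= acquisition_cost:
            return day
    return None


# Hypothetical cohorts: acquisition cost and (day, value) purchase history.
cohorts = {
    "paid_social": (5_000.0, [(0, 1_500.0), (30, 2_000.0), (60, 2_000.0)]),
    "branded_search": (2_000.0, [(0, 2_500.0)]),
    "display": (3_000.0, [(0, 500.0), (45, 800.0)]),
}

for source, (cost, history) in cohorts.items():
    days = payback_days(cost, history)
    print(f"{source}: {'not yet paid back' if days is None else f'paid back on day {days}'}")
```

A readout like this gives leadership something specific to agree on, such as "we accept up to 60 days to payback on prospecting," instead of a single ROAS target.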

4. New Customer Revenue Growth

Instead of optimizing for cheapest clicks or best ROAS, try optimizing for the growth of your new customer base.

Why does this metric matter?

It keeps your marketing focused on expanding market share, not just retargeting people who are already in your orbit.

To use this metric, start segmenting campaigns by new and returning users. You can use customer relationship management (CRM) data or post-purchase tagging to see how many new users are coming in from each campaign.
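As a rough sketch of that segmentation, the snippet below splits each campaign’s revenue into new and returning customers. It assumes you can export orders in chronological order with a customer ID and campaign attached, plus a list of customers who already existed in your CRM; the field names and data are hypothetical.

```python
from collections import defaultdict


def revenue_by_customer_type(orders, existing_customers):
    """Split each campaign's revenue into new vs. returning customers.

    `orders` must be in chronological order; a customer counts as new
    only on their first-ever order.
    """
    seen = set(existing_customers)
    totals = defaultdict(lambda: {"new": 0.0, "returning": 0.0})
    for order in orders:
        segment = "returning" if order["customer_id"] in seen else "new"
        totals[order["campaign"]][segment] += order["revenue"]
        seen.add(order["customer_id"])
    return dict(totals)


# Hypothetical export: c1 was already in the CRM before these orders.
orders = [
    {"customer_id": "c1", "campaign": "remarketing", "revenue": 100.0},
    {"customer_id": "c2", "campaign": "prospecting", "revenue": 80.0},
    {"customer_id": "c2", "campaign": "remarketing", "revenue": 50.0},
    {"customer_id": "c3", "campaign": "prospecting", "revenue": 120.0},
]

for campaign, split in revenue_by_customer_type(orders, {"c1"}).items():
    print(campaign, split)
```

Here the remarketing campaign generates revenue only from people who had already bought, while all of the prospecting revenue comes from new customers.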

The Real Problem: Misalignment Between Leadership And Execution

One of the most common breakdowns in paid media performance isn’t technical misalignment. It’s organizational misalignment.

CMOs often set ROAS goals because they’re easy to track and easy to report. But, if those goals aren’t communicated with nuance to the teams or agencies executing the campaigns, the output becomes distorted.

Here’s how this usually plays out:

- A marketing leader tells the agency or in-house team they need a 5:1 ROAS to justify the budget.

- The team optimizes for what’s most efficient: branded search, bottom-of-funnel retargeting, and low-risk campaigns.

- Top-of-funnel campaigns get throttled, experimental audiences never see the light of day, and new customer growth stalls.

- Eventually, performance plateaus. And leadership is left wondering why they’re not seeing growth, despite “great” ROAS.

This is why setting the right KPIs, and clearly communicating their intent, is not optional. Every team, from ideation to execution, needs to be working toward the same goals.

Rethinking Your KPI Framework: What Does “Good” Look Like?

Once you move away from ROAS as your main performance indicator, the natural next question is: What do we track instead?

It’s not about throwing out the metrics you’ve used for years. You need to put them in the right order and context.

A well-thought-out KPI framework helps everyone, from your C-suite to your campaign managers, stay aligned on what you’re optimizing for and why.

Think Of KPIs As Layers, Not Silos

Not all metrics serve the same purpose. Some help guide day-to-day decisions. Others reflect long-term strategic impact. The problem starts when we treat every metric as equally important or try to roll them into one number.

ROAS might help optimize a remarketing campaign. But it tells you very little about whether your brand is growing, reaching new audiences, or acquiring customers that actually stick.

That’s why the best KPI frameworks break metrics out into three categories:

1. Short-Term KPIs: Optimization & Efficiency

These are the metrics your media buyers use every day to adjust bids, pause underperformers, and keep spend in check.

They’re meant to be directional, not definitive.

Examples include:

- ROAS (by campaign or platform).

- Cost per acquisition (CPA).

- Click-through rate (CTR).

- Conversion rate.

- Impression share.

These KPIs are most useful for weekly or even daily reporting. But, they should never be the only numbers presented in a quarterly business review. They help you stay efficient, but they don’t reflect bigger outcomes.

If these metrics are the only thing being reported or discussed, your team may fall into a cycle of only optimizing what’s already working. That means missed opportunities to test, expand, or learn.

2. Mid-Term KPIs: Growth Momentum

These metrics show whether your marketing is actually building toward something. They’re tied to broader business goals but can still be influenced in the current quarter or campaign cycle.

Examples include:

- Payback period (days to recoup CAC).

- New customer revenue.

- Net-new customer acquisition.

- Micro conversions (demo requests, app installs, newsletter signups, etc.).

Mid-term KPIs are great for monthly reviews and identifying how top- or mid-funnel investments are performing. They help you evaluate whether you’re fueling growth beyond existing audiences.

Mid-term metrics can sometimes get ignored because they’re harder to track or take longer to show impact. Don’t let imperfect data stop you from establishing benchmarks and looking at trends over time.

3. Long-Term KPIs: Strategic Business Health

This is where your true North Star lives.

These KPIs take longer to measure but reflect the outcomes that matter most: customer loyalty, sustainable revenue, and profitability.

Examples include:

- Customer lifetime value (CLV).

- CLV to CAC ratio.

- Churn or retention rate.

- Repeat purchase rate.

- Gross margin by channel.

Use these metrics to evaluate the success of your marketing investments across quarters or even years. They should influence annual planning and resource allocation.

These metrics are often siloed inside CRM or finance teams. Make sure your paid media or acquisition teams have access and visibility so they can understand their long-term impact.

A KPI Framework Doesn’t Work Without Context

Even with the right metrics in place, your team won’t succeed unless they understand how to prioritize them and what success looks like.

For example, if your team knows ROAS is important, but also understands it’s not the deciding factor for scaling budget, they’re more likely to take healthy risks and test growth-oriented campaigns.

On the other hand, if they’re unsure which KPI matters most, they’ll default to optimizing what they can control, often at the expense of progress.

You don’t need a perfect attribution model to start here. You just need a shared understanding across your team and partners.

When everyone knows which KPIs matter most at each stage of the funnel, it becomes much easier to align strategy, set goals, and evaluate performance with nuance.

What CMOs Can Do Differently Starting Tomorrow

Changing how your organization approaches paid media measurement doesn’t require a complete overhaul.

But, it does take intentional conversations and a willingness to zoom out from the usual dashboard metrics.

Here are six steps you can take to shift your team (or agency) toward a more aligned and strategic direction.

1. Audit What You’re Optimizing For

Start with a gut-check: what are your internal teams or agencies truly prioritizing day to day?

Ask them to show you not just results, but the actual goals entered in-platform. Are they optimizing for purchases, leads, or something vague like clicks? Are they setting ROAS targets in Smart Bidding or manually prioritizing ROAS in their reporting?

You might be surprised how often the tactical goals don’t match the business strategy. A quick audit of campaign objectives and KPIs can uncover a lot about where misalignment begins.

If your goal is to grow market share, but your team is focused on protecting branded search ROAS, that’s a disconnect worth addressing.

2. Reset Internal Expectations

This step often gets overlooked, but it’s a big one. CFOs tend to like ROAS because it looks like a clean efficiency ratio: spend in, revenue out.

But, they don’t always see the nuance of long sales cycles, customer value over time, or the lag between impression and conversion.

Take time to walk your finance partners through your updated KPI framework. Show them examples of campaigns that had a low short-term ROAS but brought in high-value, repeat customers over time.

When leadership understands how marketing performance compounds, they’re less likely to cut budgets based on a one-week dip in return.

This is especially helpful if you’re advocating for top-of-funnel investments that take longer to pay off.

3. Educate Your Team Or Agency

Once you’ve reset internal expectations, don’t forget to bring your team or agency into the loop.

It’s not enough to just say, “We’re no longer using ROAS as our North Star.” You have to explain what you’re prioritizing instead, and why.

That might sound like:

- “We’re shifting to focus on acquiring net-new customers and reducing payback period.”

- “This quarter, we’re okay with lower ROAS on prospecting campaigns if we’re growing CLV in the right audience segments.”

- “Let’s break out CLV:CAC reporting by campaign group so we can identify what’s really delivering long-term value.”

When you frame KPIs as tools to hit bigger business goals, your team can make smarter decisions without fear of getting penalized for not hitting an arbitrary ROAS number.

4. Separate Performance Expectations By Funnel Stage

A common mistake is holding every campaign to the same performance goal.

But the truth is, a prospecting campaign will never look as efficient as a remarketing one, and that’s fine.

Give your team or agency space to evaluate performance based on where in the funnel the campaign sits. Set realistic benchmarks for awareness, engagement, or assisted conversions, and evaluate them alongside lower-funnel ROAS or CPA.

Not only does this help you spend more confidently across the full funnel, but it also encourages the kind of creative testing that often gets squeezed out when efficiency metrics dominate.

5. Invest In Stronger Data Modeling

You don’t need to have a perfect attribution system in place to start moving beyond ROAS. You do need to improve your visibility into how customers behave over time.

Work with your team to build even a basic model of customer payback and CLV across channels.

Use what you already have (Google Analytics 4, CRM exports, or even Shopify data) to start segmenting users by acquisition source and repeat value.

Over time, this will help you answer key questions like:

- Which campaigns actually bring in our best long-term customers?

- What’s our average time to first, second, and third purchase?

- Are we over-investing in short-term wins at the expense of lifetime value?

Even directional insights can shape much better budgeting and strategy decisions over time.
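A basic version of the purchase-timing question can be answered with nothing more than an export of acquisition and purchase dates. The sketch below uses invented customers and a hypothetical helper, `avg_days_to_nth_purchase`. Keep in mind that the average for the second or third purchase only includes customers who got that far, so it is directional rather than precise.

```python
from datetime import date
from statistics import mean


def avg_days_to_nth_purchase(customers, n):
    """Average days from acquisition to the nth purchase.

    Only customers with at least n purchases are counted;
    returns None if nobody has reached that purchase yet.
    """
    gaps = [
        (sorted(c["purchases"])[n - 1] - c["acquired"]).days
        for c in customers
        if len(c["purchases"]) >= n
    ]
    return mean(gaps) if gaps else None


# Hypothetical customers from a CRM or ecommerce export.
customers = [
    {"acquired": date(2025, 1, 1),
     "purchases": [date(2025, 1, 3), date(2025, 2, 2), date(2025, 3, 4)]},
    {"acquired": date(2025, 1, 10),
     "purchases": [date(2025, 1, 15)]},
]

for n in (1, 2, 3):
    print(f"Purchase {n}: {avg_days_to_nth_purchase(customers, n)} days on average")
```

Grouping the same calculation by acquisition source is a natural next step once the data is in place.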

6. Lead By Example In How You Talk About Performance

As a marketing leader, the way you talk about performance will set the tone for your entire team.

If you ask, “What’s our ROAS this week?” in every meeting, your team will naturally default to chasing it, regardless of whether it reflects progress toward the bigger picture.

Instead, consider asking:

- “Are we growing our base of high-value customers?”

- “What are we seeing with new user acquisition?”

- “Which campaigns have the strongest long-term value, even if short-term ROAS is lower?”

These types of questions signal that you’re interested in more than just this week’s dashboard metrics.

They give your team permission to think bigger, experiment, and optimize for actual business growth.

Stop Letting ROAS Be The Only Metric That Matters

It makes sense that ROAS gets so much attention. It’s familiar, easy to explain, and shows up nicely on a dashboard. But when it becomes the only thing your team is aiming for, you risk missing the bigger picture.

If your real goals are growth, better margins, and stronger customer relationships, then you need to look at more than just the numbers that look good in a report.

Start by defining the KPIs that support the way your business actually operates, and make sure your team understands why those metrics matter.

This isn’t about ignoring ROAS. It’s about putting it in its proper place: as one part of a much larger story.

Featured Image: SvetaZi/Shutterstock

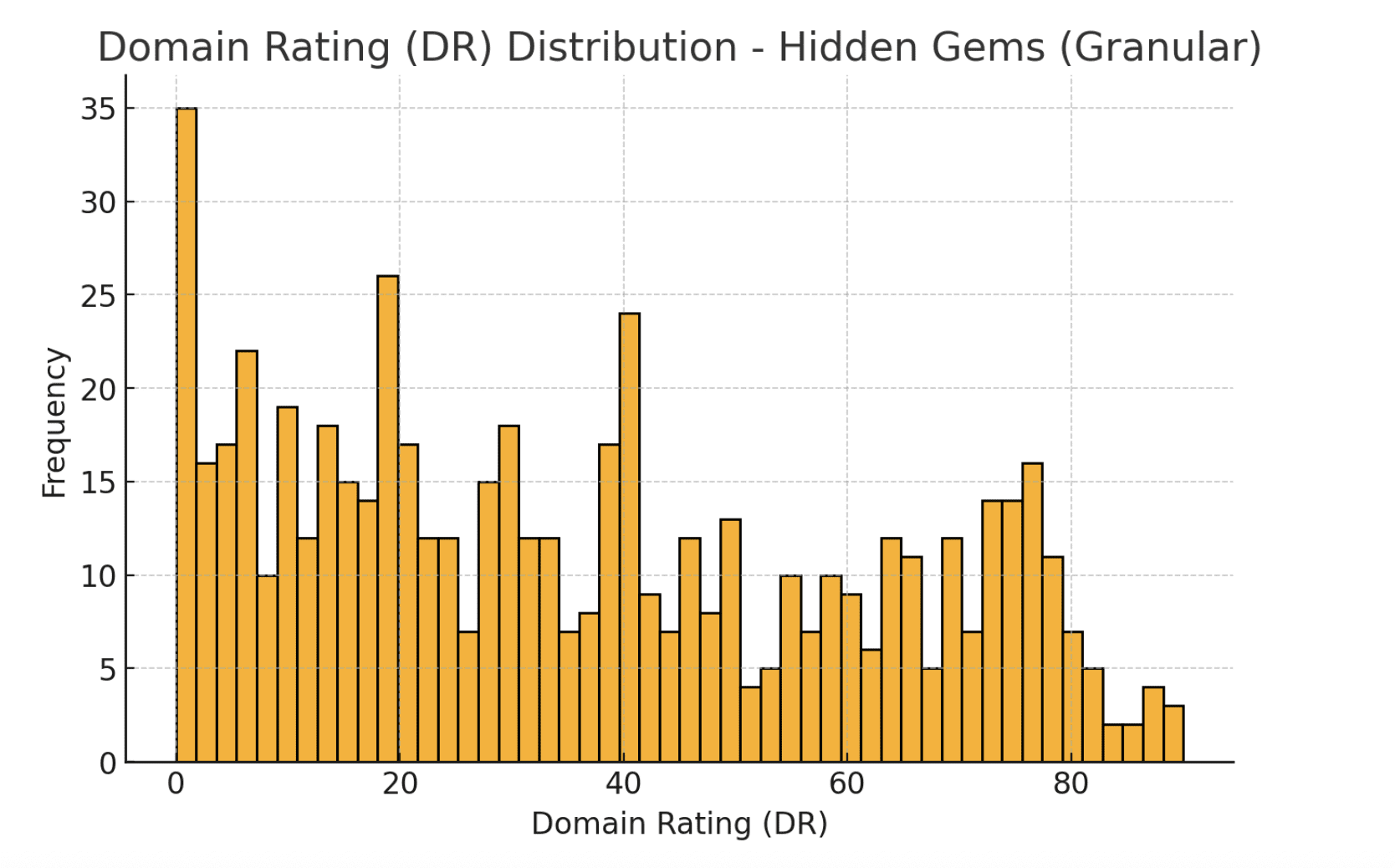

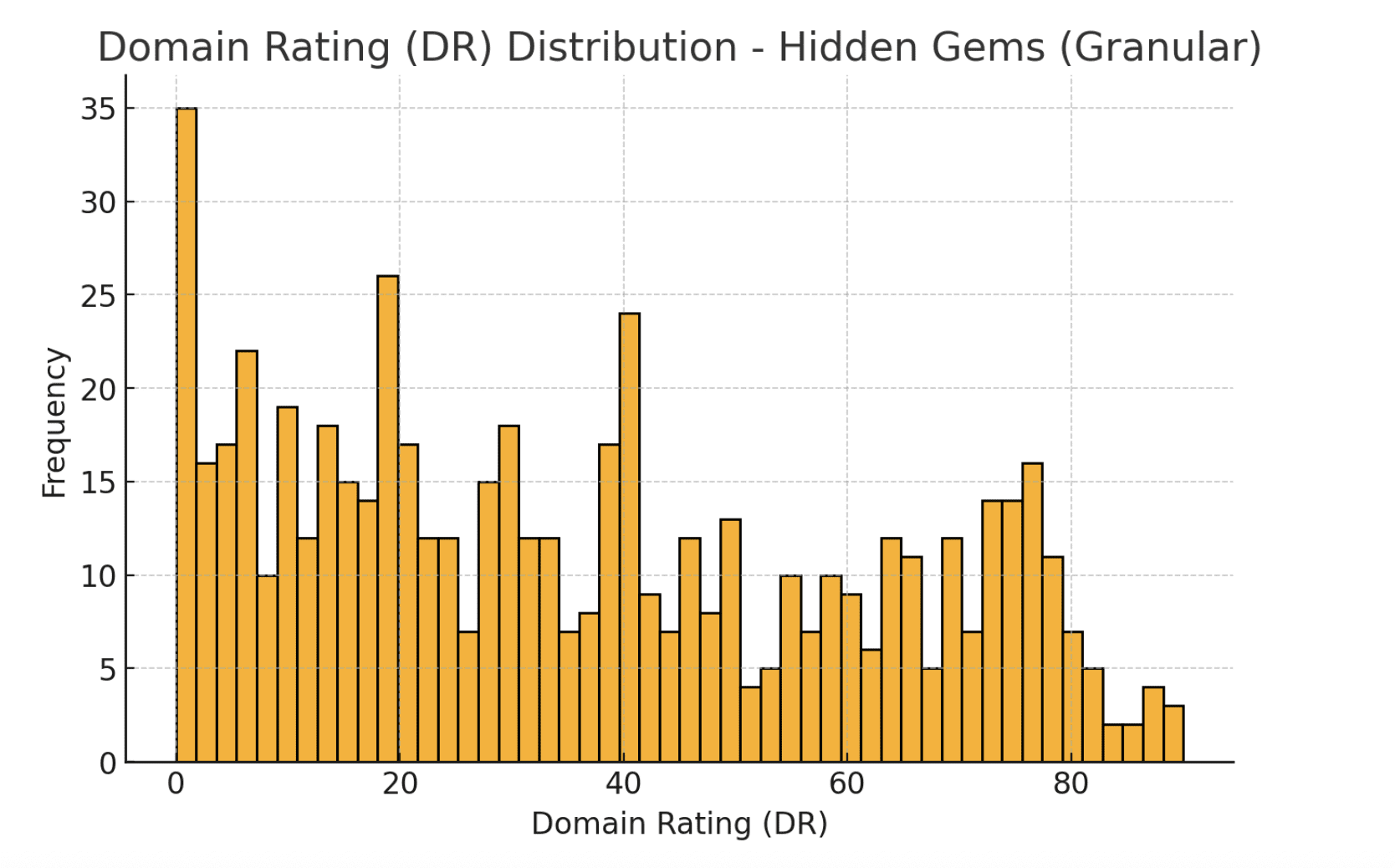

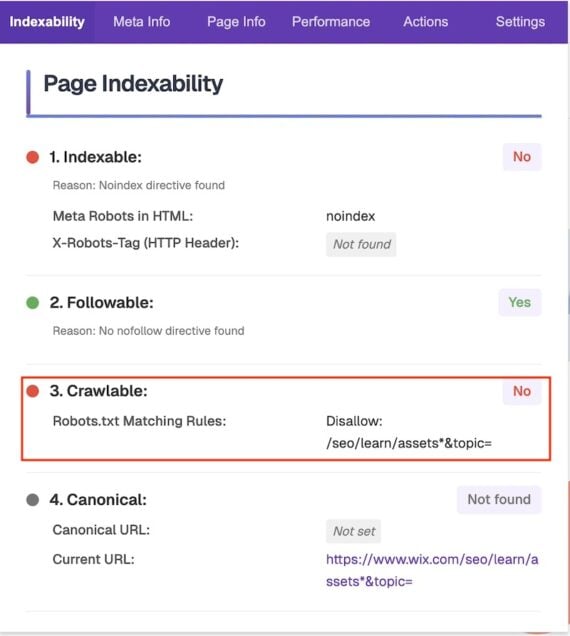

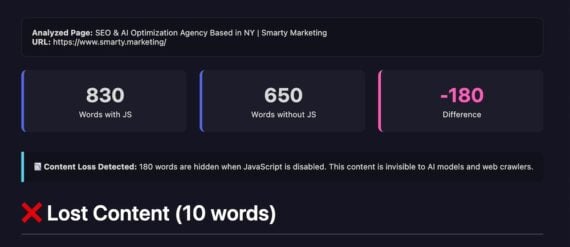

Image from author, August 2025

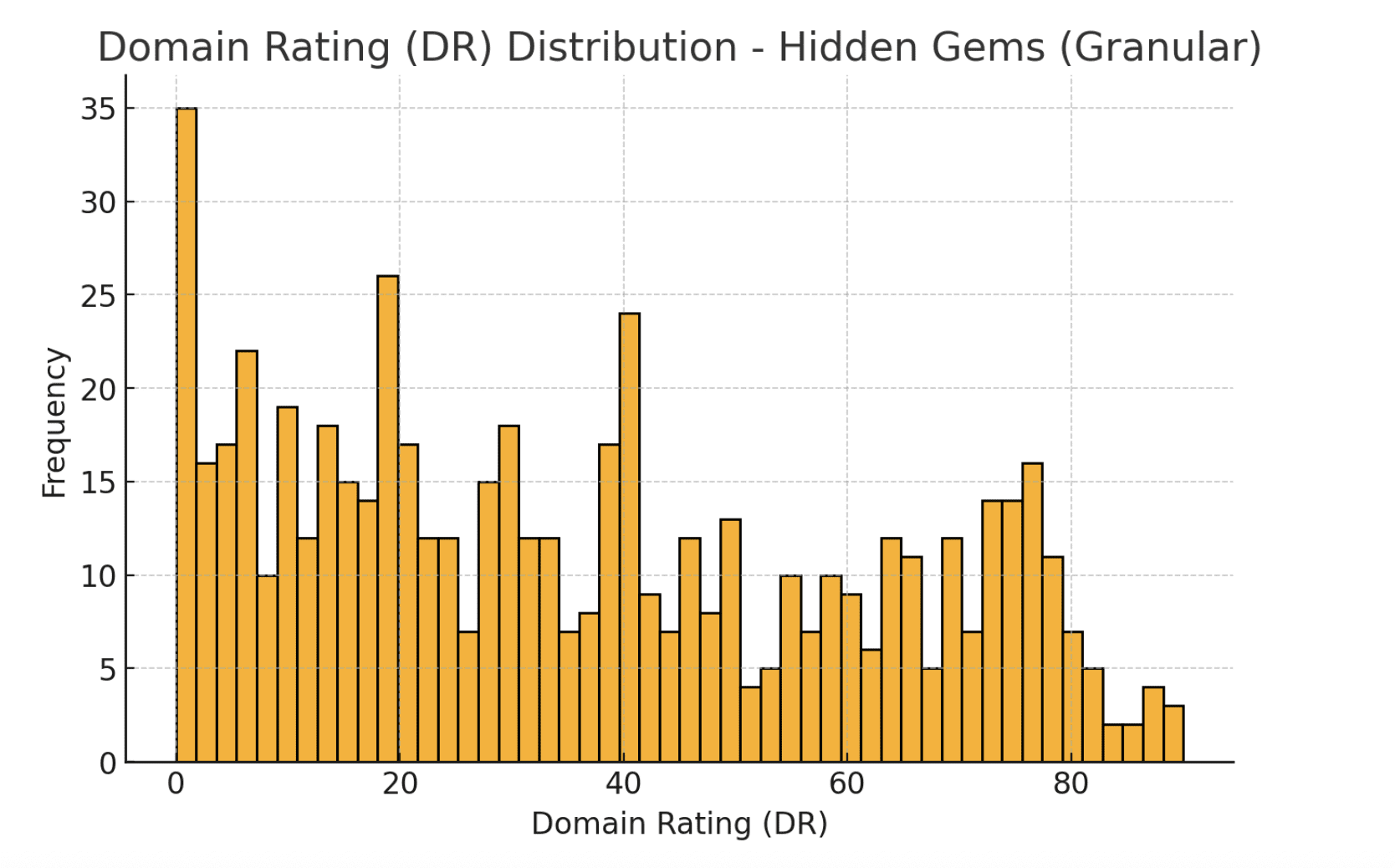

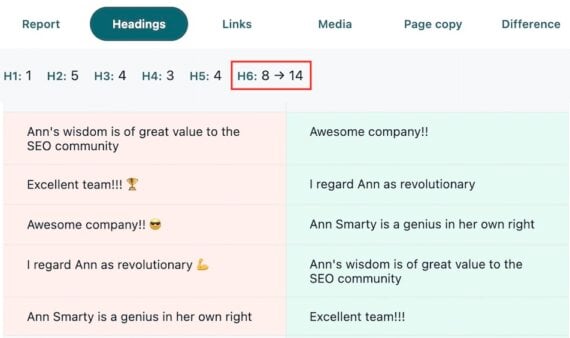

Image from author, August 2025