Why physical AI is becoming manufacturing’s next advantage

For decades, manufacturers have pursued automation to drive efficiency, reduce costs, and stabilize operations. That approach delivered meaningful gains, but it is no longer enough.

Today’s manufacturing leaders face a different challenge: how to grow amid labor constraints, rising complexity, and increasing pressure to innovate faster without sacrificing safety, quality, or trust. The next phase of transformation will not be defined by isolated AI tools or individual robots, but by intelligence that can operate reliably in the physical world.

This is where physical AI—intelligence that can sense, reason, and act in the real world—marks a decisive shift. And it is why Microsoft and NVIDIA are working together to help manufacturers move from experimentation to production at industrial scale.

The industrial frontier: Intelligence and trust, not just automation

Most early AI adoption focused on narrow optimization: automating tasks, improving utilization, and cutting costs. While valuable, that phase often created new friction, including skills gaps, governance concerns, and uncertainty about long‑term impact. The use cases were plentiful, but few of them were strategic.

The industrial frontier represents a different approach. Rather than asking how much work machines can replace, frontier manufacturers ask how AI can expand human capability, accelerate innovation, and unlock new forms of value while remaining trustworthy and controllable.

Across industries, companies that successfully move into this frontier phase share two non‑negotiables:

- Intelligence: AI systems must understand how the business actually operates, including its data, workflows, and institutional knowledge.

- Trust: As AI begins to act in high‑stakes environments, organizations must retain security, governance, and observability at every layer.

Without intelligence, AI becomes generic. Without trust, adoption stalls.

Why manufacturing is the proving ground for physical AI

Manufacturing is uniquely positioned at the center of this shift.

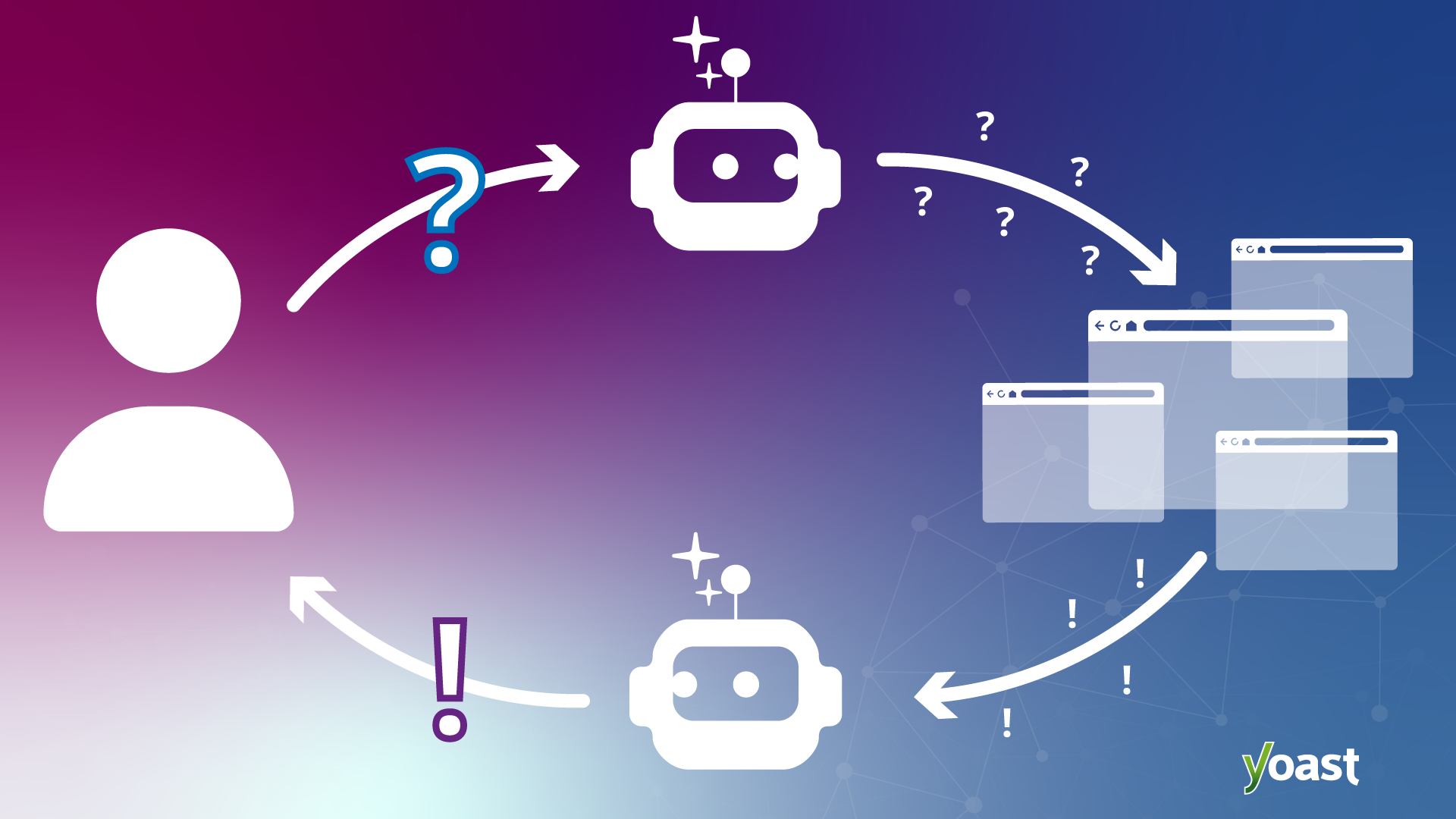

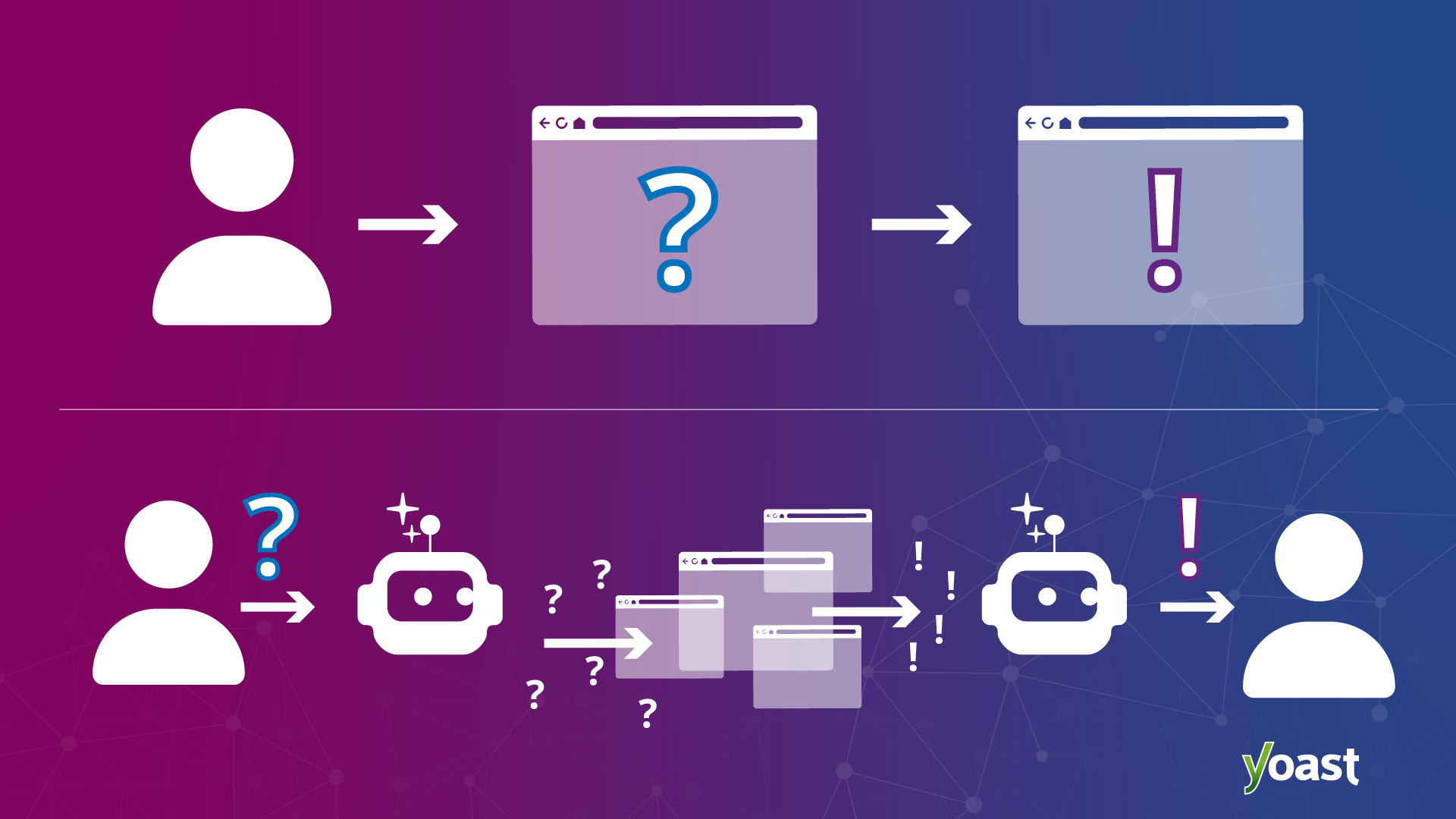

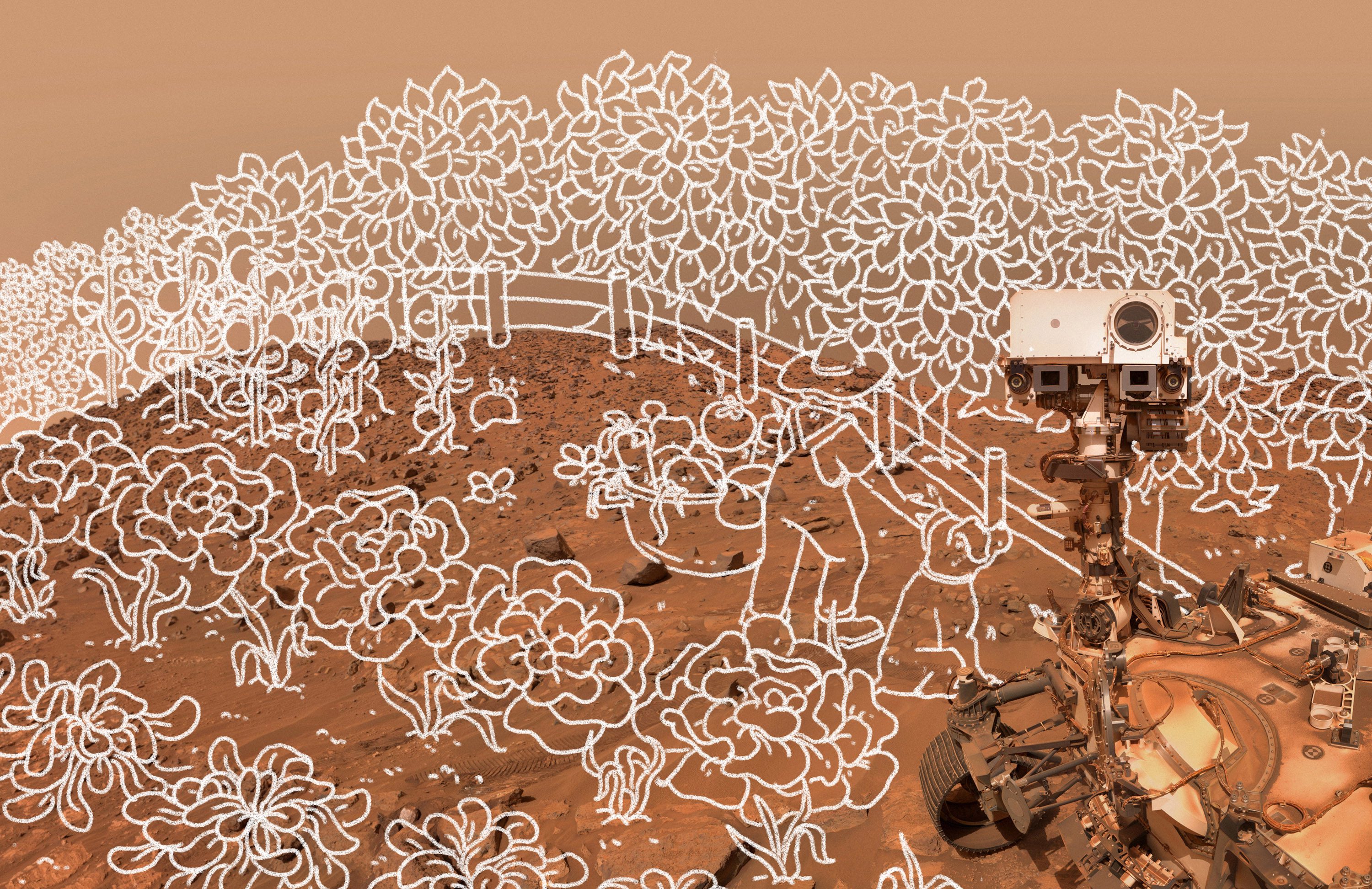

AI is no longer confined to planning or analytics. It is moving into physical execution: coordinating machines, adapting to real‑world variability, and working alongside people on the factory floor. Robotics, autonomous systems, and AI agents must now perceive, reason, and act in dynamic environments.

This transition exposes a critical gap. Traditional automation excels at repetition but struggles with adaptability. Human workers bring judgment and context but are constrained by scale. Physical AI closes that gap by enabling human‑led, AI‑operated systems, where people set intent and intelligent systems execute, learn, and improve over time. People remain essential to success at scale.

Microsoft and NVIDIA: Accelerating physical AI at scale

Physical AI cannot be delivered through point solutions. It requires agent-driven, enterprise-grade toolchains and workflows for development, deployment, and operations that connect simulation, data, AI models, robotics, and governance into a coherent system.

NVIDIA is building the AI infrastructure that makes physical AI possible, including accelerated computing, open models, simulation libraries, and robotics frameworks and blueprints. These enable the ecosystem to build autonomous robotic systems that can perceive, reason, plan, and take action in the physical world. Microsoft complements this with a cloud and data platform designed to operate physical AI securely, at scale, and across the enterprise.

Together, Microsoft and NVIDIA are enabling manufacturers to move beyond pilots toward production‑ready physical AI systems that can be developed, tested, deployed, and continuously improved across heterogeneous environments spanning the product lifecycle, factory operations, and supply chain.

From intelligence to action: Human-agent teams in the factory

At the industrial frontier, AI is not a standalone system, but a digital teammate.

When AI agents are grounded in the right operational data, embedded in human workflows, and governed end to end, they can assist with tasks such as:

- Optimizing production lines in real time

- Coordinating maintenance and quality decisions

- Adapting operations to supply or demand disruptions

- Accelerating engineering and product lifecycle decisions

For example, manufacturers are beginning to use simulation‑grounded AI agents to evaluate production changes virtually before deploying them on the factory floor, reducing risk while accelerating decision‑making.

Crucially, frontier manufacturers design these systems so humans remain in control. AI executes, monitors, and recommends, while people provide intent, oversight, and judgment. This balance allows organizations to move faster without losing confidence or control.

The role of trust in scaling physical AI

As physical AI systems scale, trust becomes the limiting factor.

Manufacturers must ensure that AI systems are secure, observable, and operating within policy, especially when they influence safety‑critical or mission‑critical processes. Governance cannot be an afterthought; it must be engineered into the platform itself.

This is why frontier manufacturers treat trust as a first‑class requirement, pairing innovation with visibility, compliance, and accountability. Only then can physical AI move from promising demonstrations to enterprise‑wide deployment.

Why this moment matters—and what’s next

The convergence of AI agents, robotics, simulation, and real‑time data marks an inflection point for manufacturing. What was once experimental is becoming operational. What was once siloed is becoming connected.

At NVIDIA GTC 2026, Microsoft and NVIDIA will demonstrate how this collaboration supports physical AI systems that manufacturers can deploy today and scale responsibly tomorrow. From simulation‑driven development to real‑world execution, the focus is on helping manufacturers cross the industrial frontier with confidence.

For manufacturing leaders, the question is no longer whether physical AI will reshape operations, but how quickly they can adopt it responsibly, at scale, and with trust built in from the start.

Discover more with Microsoft at NVIDIA GTC 2026.

This content was produced by Microsoft. It was not written by MIT Technology Review’s editorial staff.