Ask An SEO: How To Implement Faceted Navigation Without Hurting Crawl Efficiency via @sejournal, @kevgibbo

This week’s question tackles the potential SEO fallout from implementing faceted navigation:

“How can ecommerce sites implement SEO-friendly faceted navigation without hurting crawl efficiency or creating index bloat?”

Faceted navigation is a game-changer for user experience (UX) on large ecommerce sites. It helps users quickly narrow down what they’re looking for, whether it’s a size 8 pair of red road running trainers for women, or a blue, waterproof winter hiking jacket for men.

For your customers, faceted navigation makes huge inventories feel manageable and, when done right, enhances both UX and SEO.

However, when these facets create a new URL for every possible filter combination, they can cause significant SEO issues: if not managed properly, they can harm your rankings and waste valuable crawl budget.

How To Spot Faceted Navigation Issues

Faceted navigation issues often fly under the radar – until they start causing real SEO damage. The good news? You don’t need to be a tech wizard to spot the early warning signs.

With the right tools and a bit of detective work, you can uncover whether filters are bloating your site, wasting crawl budget, or diluting rankings.

Here’s a step-by-step approach to auditing your site for faceted SEO issues:

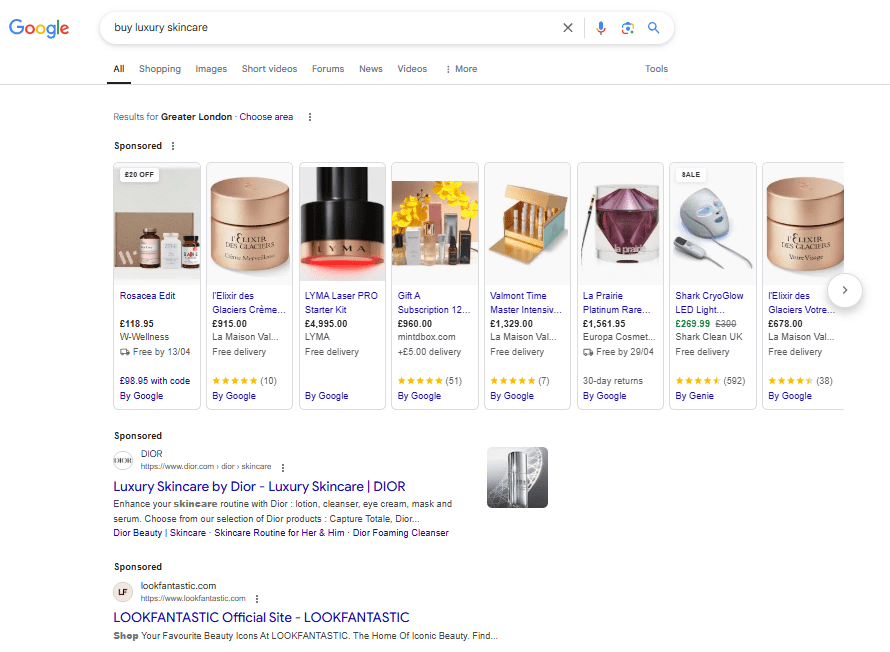

1. Do A Quick “Site:” Search

Start by searching on Google with this query: site:yourdomain.com.

This shows a sample of the URLs Google has indexed for your site. The result count is a rough estimate, not an exact total. Review the list:

- Does the number seem higher than the total pages you want indexed?

- Are there lots of similar URLs, like ?color=red&size=8?

If so, you may have index bloat.

2. Dig Into Google Search Console

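Check Google Search Console (GSC) for a clearer picture. Look at the Page indexing report (formerly “Coverage”) to see how many pages are indexed.

Pay attention to pages that are indexed but not submitted in your sitemap (shown as “Indexed, not submitted in sitemap” in the older Coverage report), as these often turn out to be unintended filter-generated pages.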

3. Understand How Facets Work On Your Site

Not all faceted navigation behaves the same. Make sure you understand how filters work on your site:

- Are they present on category pages, search results, or blog listings?

- How do filters stack in the URL (e.g., ?brand=ASICS&color=red)?

4. Compare Crawl Activity To Organic Visits

Some faceted pages drive traffic; others burn crawl budget without returns.

Use tools like Botify, Screaming Frog, or Ahrefs to compare Googlebot’s crawling behavior with actual organic visits.

If a page gets crawled a lot but doesn’t attract visitors, it’s a sign that it’s consuming crawl resources unnecessarily.

5. Look For Patterns In URL Data

Run a crawler to scan your site’s URLs. Check for repetitive patterns, such as endless combinations of parameters like ?price=low&sort=best-sellers. These are potential crawler traps and unnecessary variations.
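To spot these patterns at scale, you can group crawled URLs by the set of parameter names they use. The Python sketch below is a minimal example, assuming you have exported your crawler's URL list as plain strings; the sample URLs and the `summarize_parameter_patterns` name are hypothetical.

```python
from collections import Counter
from urllib.parse import urlsplit, parse_qsl


def summarize_parameter_patterns(urls):
    """Count how many URLs share each combination of query parameter names."""
    patterns = Counter()
    for url in urls:
        query = urlsplit(url).query
        # Sort the names so ?price=low&sort=x and ?sort=x&price=high count as one pattern
        names = tuple(sorted({name for name, _ in parse_qsl(query, keep_blank_values=True)}))
        patterns[names] += 1
    return patterns


if __name__ == "__main__":
    # Hypothetical crawler export; replace with your own URL list
    crawled = [
        "https://example.com/jackets",
        "https://example.com/jackets?price=low&sort=best-sellers",
        "https://example.com/jackets?sort=best-sellers&price=high",
        "https://example.com/jackets?color=red&size=8",
    ]
    for names, count in summarize_parameter_patterns(crawled).most_common():
        print(count, "&".join(names) or "(no parameters)")
```

Parameter sets with very high counts, especially ones mixing sort or price with several filters, are the first places to look for crawler traps.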

6. Match Faceted Pages With Search Demand

To decide which SEO tactics to use for faceted navigation, assess the search demand for specific filters and whether unique content can be created for those variations.

Use keyword research tools like Google Keyword Planner or Ahrefs to check for user demand for specific filter combinations (SV = search volume). For example:

- White running shoes (SV 1000; index).

- White waterproof running shoes (SV 20; index).

- Red trail running trainers size 9 (SV 0; noindex).

This helps prioritize which facet combinations should be indexed.

If there’s enough value in targeting a specific query, such as product features, a dedicated URL may be worthwhile.

However, low-value filters like price or size should remain noindexed to avoid index bloat.

The decision should balance the effort needed to create new URLs against the potential SEO benefits.
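One way to make these rules repeatable is to encode them. The following is a hedged Python sketch, assuming you have a search-volume figure for each filter combination from your keyword tool. The threshold of 10, the set of low-value facet types, and the facet-type labels are placeholders to tune for your own site, not rules from the examples above.

```python
from dataclasses import dataclass

# Hypothetical: facet types treated as low value, following the price/size guidance
LOW_VALUE_FACETS = {"price", "size"}
# Hypothetical minimum search volume that justifies an indexable URL
MIN_SEARCH_VOLUME = 10


@dataclass
class FacetCombination:
    label: str
    facet_types: frozenset
    search_volume: int


def indexing_decision(combo: FacetCombination) -> str:
    """Return "index" or "noindex" for a filter combination."""
    if combo.facet_types & LOW_VALUE_FACETS:
        return "noindex"  # low-value filters stay out of the index regardless of demand
    if combo.search_volume >= MIN_SEARCH_VOLUME:
        return "index"
    return "noindex"


if __name__ == "__main__":
    # Search volumes taken from the examples above; facet type labels are assumptions
    examples = [
        FacetCombination("white running shoes", frozenset({"color"}), 1000),
        FacetCombination("white waterproof running shoes", frozenset({"color", "feature"}), 20),
        FacetCombination("red trail running trainers size 9", frozenset({"color", "terrain", "size"}), 0),
    ]
    for combo in examples:
        print(combo.label, "->", indexing_decision(combo))
```

Checking the low-value facets first means a popular query that happens to include a size filter still stays noindexed. Reorder the checks if your demand data says otherwise.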

7. Log File Analysis For Faceted URLs

Log files record every request, including those from search engine bots.

By analyzing them, you can track which URLs Googlebot is crawling and how often, helping you identify wasted crawl budget on low-value pages.

For example, if Googlebot is repeatedly crawling deep-filtered URLs like /jackets?size=large&brand=ASICS&price=100-200&page=12 with little traffic, that’s a red flag.

Key signs of inefficiency include:

- Excessive crawling of multi-filtered or deeply paginated URLs.

- Frequent crawling of low-value pages.

- Googlebot getting stuck in filter loops or parameter traps.

By regularly checking your logs, you get a clear picture of Googlebot’s behavior, enabling you to optimize crawl budget and focus Googlebot’s attention on more valuable pages.
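A minimal Python sketch of this kind of log check, assuming your server writes the common Apache/Nginx "combined" log format. It identifies Googlebot by user-agent string only, which can be spoofed, so verify important findings with a reverse DNS lookup. The thresholds for "multi-filtered" (three or more parameters) and "deep" pagination (page 11 and beyond) are arbitrary assumptions.

```python
import re
from collections import Counter
from urllib.parse import urlsplit, parse_qs

# Captures the request target and user agent from a "combined" format log line
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<target>\S+) HTTP/[\d.]+" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

MAX_PARAMS = 2  # assumption: three or more parameters counts as "multi-filtered"
MAX_PAGE = 10   # assumption: page 11+ counts as "deep" pagination


def googlebot_hits(lines):
    """Count requests per URL (path + query) whose user agent claims to be Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("target")] += 1
    return hits


def is_suspect(target):
    """Flag multi-filtered or deeply paginated URLs."""
    params = parse_qs(urlsplit(target).query)
    page = params.get("page", ["1"])[0]
    too_deep = page.isdigit() and int(page) > MAX_PAGE
    return len(params) > MAX_PARAMS or too_deep


if __name__ == "__main__":
    # Hypothetical log line using a documentation IP address
    sample = [
        '192.0.2.1 - - [10/Oct/2024:13:55:36 +0000] '
        '"GET /jackets?size=large&brand=ASICS&price=100-200&page=12 HTTP/1.1" 200 5120 "-" '
        '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"'
    ]
    for target, count in googlebot_hits(sample).most_common():
        print(count, target, "SUSPECT" if is_suspect(target) else "ok")
```

Cross-referencing the suspect URLs against organic landing-page data then shows which ones are crawled heavily but never visited.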

Best Practices To Control Crawl And Indexation For Faceted Navigation

Here’s how to keep things under control, so your site stays crawl-efficient and search-friendly.

1. Use Clear, User-Friendly Labels

Start with the basics: Your facet labels should be intuitive. “Blue,” “Leather,” “Under £200” – these need to make instant sense to your users.

Confusing or overly technical terms can lead to a frustrating experience and missed conversions. Not sure what resonates? Check out competitor sites and see how they’re labeling similar filters.

2. Don’t Overdo It With Facets

Just because you can add 30 different filters doesn’t mean you should. Too many options can overwhelm users and generate thousands of unnecessary URL combinations.

Stick to what genuinely helps customers narrow down their search.
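To see how quickly facets multiply, take a rough count under simple assumptions: each facet allows one value at a time (or none), and facet order is normalized. The number of filtered URLs is then the product of (values + 1) across all facets, minus the unfiltered page itself. With multi-select facets, each facet contributes 2^values options instead. The facet sizes in this Python sketch are hypothetical.

```python
from math import prod


def url_combinations(facet_sizes, multi_select=False):
    """Count distinct filtered URLs, excluding the unfiltered category page.

    facet_sizes: number of values per facet (hypothetical figures).
    multi_select: if True, any subset of each facet's values can be combined.
    """
    per_facet = [(2 ** v) if multi_select else (v + 1) for v in facet_sizes]
    return prod(per_facet) - 1


if __name__ == "__main__":
    # Five hypothetical facets with ten values each
    print(url_combinations([10] * 5))
    print(url_combinations([10] * 5, multi_select=True))
```

With five facets of ten values each, single-select filtering already allows 161,050 filtered URLs. Multi-select pushes that past a quadrillion, before sort orders or pagination are even counted.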

3. Keep URLs Clean When Possible

If your platform allows it, use clean, readable URLs for facets like /sofas/blue rather than messy query strings like ?color[blue].

Reserve query parameters for optional filters (e.g., sort order or availability), and don’t index those.

4. Use Canonical Tags

Use canonical tags to point similar or filtered pages back to the main category/parent page. This helps consolidate link equity and avoid duplicate content issues.

Just remember, canonical tags are suggestions, not commands. Google may ignore them if your filtered pages appear too different or are heavily linked internally.

Any faceted page you want indexed should carry a self-referencing canonical. Any you don’t want indexed should be canonicalized to the parent page.
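A hedged Python sketch of that rule, assuming clean path-based facet URLs like /trainers/blue/leather, where the first path segment is the parent category. It also assumes a hand-maintained allowlist of indexable facet paths. The `INDEXABLE_PATHS` set and the `example.com` domain are hypothetical.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit

# Hypothetical allowlist of faceted pages that should be indexed
INDEXABLE_PATHS = {"/trainers/blue/leather"}


def canonical_url(url: str) -> str:
    """Self-canonical for allowlisted facet pages; parent category otherwise."""
    parts = urlsplit(url)
    path = parts.path.rstrip("/") or "/"
    if path not in INDEXABLE_PATHS:
        # Assumes the first path segment is the parent category, e.g. /trainers
        segments = [s for s in path.split("/") if s]
        path = "/" + segments[0] if segments else "/"
    # Query strings and fragments are dropped from the canonical URL
    return urlunsplit((parts.scheme, parts.netloc.lower(), path, "", ""))


def canonical_tag(url: str) -> str:
    """Render the <link rel="canonical"> element for a page."""
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'


if __name__ == "__main__":
    for url in ["https://example.com/trainers/blue/leather?sort=price",
                "https://example.com/trainers/blue_black"]:
        print(canonical_tag(url))
```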

5. Create Rules For Indexing Faceted Pages

Break your URLs into three clear groups:

- Index (e.g., /trainers/blue/leather): Add a self-referencing canonical, keep them crawlable, and internally link to them. These pages represent valuable, unique combinations of filters (like color and material) that users may search for.

- Noindex (e.g., /trainers/blue_black): Use a meta robots noindex tag (<meta name="robots" content="noindex">) to remove them from the index while still allowing crawling. This is suitable for less useful or low-demand filter combinations (e.g., overly niche color mixes).

- Block Crawl (e.g., filters with query parameters like /trainers?color=blue&sort=popularity): Use robots.txt, JavaScript, or parameter handling to prevent crawling entirely. These URLs are often duplicate or near-duplicate versions of indexable pages and don’t need to be crawled.
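These three groups can be expressed as a simple classification function. The Python sketch below follows the examples in the list: query-string filters are blocked from crawling, and path-based pages are indexed only if they appear in a hand-maintained allowlist. The `INDEXABLE` set is hypothetical, and real sites usually need more nuanced rules than this.

```python
from enum import Enum
from urllib.parse import urlsplit


class FacetGroup(Enum):
    INDEX = "index"        # self-referencing canonical, crawlable, internally linked
    NOINDEX = "noindex"    # meta robots noindex, still crawlable
    BLOCK_CRAWL = "block"  # kept out of the crawl, e.g. via robots.txt


# Hypothetical allowlist: category pages plus valuable filter combinations
INDEXABLE = {"/trainers", "/trainers/blue/leather"}


def classify(url: str) -> FacetGroup:
    """Assign a URL to one of the three groups."""
    parts = urlsplit(url)
    if parts.query:
        # Query-parameter filters such as ?color=blue&sort=popularity
        return FacetGroup.BLOCK_CRAWL
    if parts.path.rstrip("/") in INDEXABLE:
        return FacetGroup.INDEX
    return FacetGroup.NOINDEX


if __name__ == "__main__":
    for url in ["https://example.com/trainers/blue/leather",
                "https://example.com/trainers/blue_black",
                "https://example.com/trainers?color=blue&sort=popularity"]:
        print(url, "->", classify(url).value)
```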

6. Maintain A Consistent Facet Order

No matter the order in which users apply filters, the resulting URL should be consistent.

For example, whether a user picks “blue” then “leather” or “leather” then “blue,” the result should be a single URL such as /trainers/blue/leather. If /trainers/leather/blue also resolves, you’ll end up with duplicate content that dilutes SEO value.
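One way to enforce this is to rebuild the path from the selected values in a fixed facet order. The Python sketch below assumes a hypothetical lookup table (`FACET_OF_VALUE`) that maps each URL value to its facet type, and an arbitrary fixed order of color before material.

```python
# Hypothetical mapping from URL value to facet type
FACET_OF_VALUE = {"blue": "color", "black": "color", "leather": "material", "mesh": "material"}
# Fixed order in which facets appear in the URL (assumption)
FACET_ORDER = ["color", "material"]


def normalize_facet_path(path: str) -> str:
    """Rewrite /category/<values...> so values always follow FACET_ORDER.

    Unknown values raise KeyError; a real site would 404 or redirect instead.
    """
    segments = [s for s in path.split("/") if s]
    if not segments:
        return "/"
    category, *values = segments
    rank = {facet: i for i, facet in enumerate(FACET_ORDER)}
    # Sort by facet position first, then alphabetically within a facet
    ordered = sorted(values, key=lambda v: (rank[FACET_OF_VALUE[v]], v))
    return "/" + "/".join([category, *ordered])


if __name__ == "__main__":
    print(normalize_facet_path("/trainers/leather/blue"))
    print(normalize_facet_path("/trainers/blue/leather"))
```

In practice, one option is to generate only normalized links in the first place and 301-redirect any other ordering to the normalized path.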

7. Use Robots.txt To Conserve Crawl Budget

One way to reduce unnecessary crawling is by blocking faceted URLs through your robots.txt file.

That said, it’s important to know that robots.txt is more of a polite request than a strict rule. Search engines like Google typically respect it, but not all bots do, and some may interpret the syntax differently.

To prevent search engines from crawling pages you don’t want indexed, it’s also smart to ensure those pages aren’t linked to internally or externally (e.g., backlinks).

If search engines discover those pages through links, they might still index the URLs (without crawling their content) even with a disallow rule in place, and bots that ignore robots.txt may crawl them anyway.

Here’s a basic example of how to block a faceted URL pattern using the robots.txt file. Suppose you want to stop crawlers from accessing URLs that include a color parameter:

User-agent: *
Disallow: /*color*

In this rule:

- User-agent: * targets all bots.

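- The * wildcard matches any sequence of characters, so this tells bots not to crawl any URL containing the string “color” anywhere in its path or query string, including unrelated pages such as /colorful-rugs.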

However, if your faceted navigation requires a more nuanced approach, such as blocking most color options but allowing specific ones, you’ll need to mix Disallow and Allow rules.

For instance, to block all color parameters except for “black,” your file might include:

User-agent: *
Disallow: /*color*
Allow: /*color=black*

A word of caution: This strategy only works well if your URLs follow a consistent structure. Without clear patterns, it becomes harder to manage, and you risk accidentally blocking key pages or leaving unwanted URLs crawlable.

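If you’re working with complex URLs or an inconsistent setup, consider combining this with other techniques, such as meta noindex tags. Note that Google Search Console’s URL Parameters tool, which once handled parameter settings, was retired in 2022, so it’s no longer an option.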
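Because mistakes here are easy to make, it can help to test your patterns before deploying them. Python's built-in `urllib.robotparser` doesn't understand `*` wildcards, so the sketch below implements its own simplified matcher. In it, `*` matches any run of characters, a trailing `$` anchors the end, and the longest matching rule wins, with Allow winning ties. That approximates the precedence described in RFC 9309 and Google's robots.txt documentation. It assumes a single `User-agent: *` group and ignores everything else.

```python
import re

ROBOTS_TXT = """\
User-agent: *
Disallow: /*color*
Allow: /*color=black*
"""


def parse_rules(text):
    """Collect (directive, pattern) pairs, assuming a single User-agent: * group."""
    rules = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        field, sep, value = line.partition(":")
        if sep and field.strip().lower() in ("allow", "disallow"):
            rules.append((field.strip().lower(), value.strip()))
    return rules


def pattern_to_regex(pattern):
    """Translate a robots.txt path pattern into a regex anchored at the start."""
    anchored = pattern.endswith("$")
    body = pattern[:-1] if anchored else pattern
    regex = "".join(".*" if ch == "*" else re.escape(ch) for ch in body)
    return re.compile(regex + ("$" if anchored else ""))


def is_allowed(url_path, rules):
    """Longest matching pattern wins; Allow wins ties; no match means allowed."""
    best_length, allowed = -1, True
    for directive, pattern in rules:
        if not pattern:
            continue  # an empty Disallow blocks nothing
        if pattern_to_regex(pattern).match(url_path):
            length = len(pattern)
            if length > best_length or (length == best_length and directive == "allow"):
                best_length, allowed = length, directive == "allow"
    return allowed


if __name__ == "__main__":
    rules = parse_rules(ROBOTS_TXT)
    for path in ["/trainers?color=blue", "/trainers?color=black", "/trainers", "/colorful-rugs"]:
        print(path, "allowed" if is_allowed(path, rules) else "blocked")
```

Running a list of your real URLs through a check like this (paths plus query strings) quickly shows whether a pattern is blocking pages you meant to keep, such as the /colorful-rugs case.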

8. Be Selective With Internal Links

Internal links signal importance to search engines. So, if you link frequently to faceted URLs that are canonicalized or blocked, you’re sending mixed signals.

Consider using rel="nofollow" on links you don’t want crawled, but be cautious: Google treats nofollow as a hint, not a rule, so results may vary.

Wherever possible, link internally only to canonical URLs. That means dropping any parameters and slugs that aren’t needed for the URL to work.

You should also prioritize pillar pages: the more internal links a page receives, the more important search engines are likely to consider it.

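Keeping filter states you don’t want indexed in the URL fragment (everything after the #) is one way to avoid creating new crawlable URLs, because Google doesn’t treat fragments as separate URLs. In 2019, Google’s John Mueller said: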

“In general, we ignore everything after hash… So things like links to the site and the indexing, all of that will be based on the non hash URL. And if there are any links to the hashed URL, then we will fold up into the non hash URL.”
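You can see the effect with Python's standard `urllib.parse.urldefrag`, which splits off everything after the `#`. Assuming a hypothetical site that stores filter state in the fragment, every filtered view resolves to the same base URL, which is the URL the quote says links and indexing are based on.

```python
from urllib.parse import urldefrag

# Hypothetical filter URLs that keep facet state after the '#'
filtered_views = [
    "https://example.com/trainers#color=blue",
    "https://example.com/trainers#color=blue&size=8",
    "https://example.com/trainers#size=8&color=blue",
]

# urldefrag returns (url_without_fragment, fragment); keep only the URL part
base_urls = {urldefrag(view).url for view in filtered_views}
print(base_urls)
```

The trade-off is that fragment-based filter states can't rank on their own, so this approach suits only the combinations you've decided not to index.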

9. Use Analytics To Guide Facet Strategy

Track which filters users actually engage with, and which lead to conversions.

If no one ever uses the “beige” filter, it may not deserve crawlable status. Use tools like Google Analytics 4 or Hotjar to see what users care about and streamline your navigation accordingly.
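If you export filter usage from your analytics tool, a few lines of code can flag candidates for pruning. The Python sketch below assumes a hypothetical export giving, per filter, how many sessions applied it and how many of those sessions converted. The 1% usage cutoff is an arbitrary placeholder.

```python
from dataclasses import dataclass


@dataclass
class FilterStats:
    name: str
    uses: int         # sessions that applied this filter (hypothetical export field)
    conversions: int  # of those sessions, how many converted


def low_value_filters(stats, total_sessions, min_usage_share=0.01):
    """Return (name, usage share, conversion rate) for rarely used filters."""
    flagged = []
    for s in stats:
        share = s.uses / total_sessions if total_sessions else 0.0
        if share < min_usage_share:
            rate = s.conversions / s.uses if s.uses else 0.0
            flagged.append((s.name, share, rate))
    return flagged
```

Reporting the conversion rate alongside usage keeps a rarely used but high-converting filter from being pruned by accident.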

10. Deal With Empty Result Pages Gracefully

When a filtered page returns no results, respond with a 404 status. The exception is a temporary out-of-stock issue: in that case, show a friendly message explaining so and return a 200.

This helps avoid wasting crawl budget on thin content.
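The rule boils down to a small decision. Here is a hedged Python sketch, assuming your application knows both the number of matching products and whether matching products exist but are temporarily out of stock. The `temporarily_out_of_stock` flag is hypothetical.

```python
from http import HTTPStatus


def status_for_filtered_page(result_count: int, temporarily_out_of_stock: bool) -> HTTPStatus:
    """Choose the HTTP status for a filtered listing page."""
    if result_count > 0:
        return HTTPStatus.OK
    if temporarily_out_of_stock:
        # Keep the page live (200) and show a friendly "back soon" message
        return HTTPStatus.OK
    # No matching products at all: a thin page, so return 404
    return HTTPStatus.NOT_FOUND
```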

11. Using AJAX For Facets

When you interact with a page – say, filtering a product list, selecting a color, or typing in a live search box – AJAX lets the site fetch or send data behind the scenes, so the rest of the page stays put.

It can be really effective to implement facets client-side via AJAX, because filter changes don’t create a new URL for every combination. That reduces the number of URL variations crawlers can request and avoids full page reloads, which can improve performance.

12. Handling Pagination In Faceted Navigation

Faceted navigation often leads to large sets of results, which naturally introduces pagination (e.g., ?category=shoes&page=2).

But when combined with layered filters, these paginated URLs can balloon into thousands of crawlable variations.

Left unchecked, this can create serious crawl and index bloat, wasting search engine resources on near-duplicate pages.

So, should paginated URLs be indexed? In most cases, no.

Pages beyond the first page rarely offer unique value or attract meaningful traffic, so it’s best to prevent them from being indexed while still allowing crawlers to follow links.

The standard approach here is to use noindex, follow on all pages after page 1. This ensures your deeper pagination doesn’t get indexed, but search engines can still discover products via internal links.

When it comes to canonical tags, you’ve got two options depending on the content.

If pages 2, 3, and so on are simply continuations of the same result set, it makes sense to canonicalize them to page 1. This consolidates ranking signals and avoids duplication.

However, if each paginated page features distinct content or meaningful differences, a self-referencing canonical might be the better fit.

The key is consistency: don’t canonicalize page 2 to page 1 while page 3 points to itself, for example.

As for rel="next" and rel="prev": Google no longer uses these signals for indexing, but they still offer UX benefits and remain valid HTML markup.

They also help communicate page flow to accessibility tools and browsers, so there’s no harm in including them.

To help control crawl depth, especially in large ecommerce sites, it’s wise to combine pagination handling with other crawl management tactics:

- Block excessively deep pages (e.g., page=11+) in robots.txt.

- Use internal linking to surface only the first few pages.

- Monitor crawl activity with log files or tools like Screaming Frog.

For example, a faceted URL like /trainers?color=white&brand=asics&page=3 would typically:

- Canonicalize to /trainers?color=white&brand=asics (page 1).

- Include noindex, follow.

- Use rel="prev" and rel="next" where appropriate.
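Putting those three points together, here's a Python sketch that builds the `<head>` tags for a paginated faceted URL. It follows the approach described above: a canonical pointing to page 1, `noindex, follow` after page 1, and prev/next links. It assumes the page number lives in a `page` query parameter and that you know the total page count. The function names are hypothetical.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_page(url, page):
    """Return url with its page parameter set; page 1 drops the parameter entirely."""
    parts = urlsplit(url)
    params = [(key, value) for key, value in parse_qsl(parts.query) if key != "page"]
    if page > 1:
        params.append(("page", str(page)))
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(params), ""))


def pagination_head_tags(url, total_pages):
    """Build canonical, robots, and prev/next tags for a paginated faceted URL."""
    page = int(dict(parse_qsl(urlsplit(url).query)).get("page", "1"))
    # Canonical always points to page 1 of the same filter combination
    tags = [f'<link rel="canonical" href="{escape(with_page(url, 1))}">']
    if page > 1:
        tags.append('<meta name="robots" content="noindex, follow">')
        tags.append(f'<link rel="prev" href="{escape(with_page(url, page - 1))}">')
    if page < total_pages:
        tags.append(f'<link rel="next" href="{escape(with_page(url, page + 1))}">')
    return tags


if __name__ == "__main__":
    url = "https://example.com/trainers?color=white&brand=asics&page=3"
    for tag in pagination_head_tags(url, total_pages=5):
        print(tag)
```

Note that `html.escape` turns `&` into `&amp;` inside the attribute values, which is the correct way to write query strings in HTML.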

Handling pagination well is just as important as managing the filters themselves. It’s all part of keeping your site lean, crawlable, and search-friendly.

Final Thoughts

When properly managed, faceted navigation can be an invaluable tool for improving user experience, targeting long-tail keywords, and boosting conversions.

However, without the right SEO strategy in place, it can quickly turn into a crawl efficiency nightmare that damages your rankings.

By following the best practices outlined above, you can enjoy all the benefits of faceted navigation while avoiding the common pitfalls that often trip up ecommerce sites.


Featured Image: Paulo Bobita/Search Engine Journal