How I Edit AI Content: A Workflow For The New Age Of Content Creation via @sejournal, @Kevin_Indig

In last week’s Memo, I explained how, just as digital DJing transformed music mixing, AI is revolutionizing how we create content by giving us instant access to diverse expressions and ideas.

Instead of fighting this change, we writers should embrace AI as a starting point while focusing our energy on adding the uniquely human elements that machines can’t replicate, like personal experiences, moral judgment, and cultural understanding.

Last week, I identified seven distinctly human writing capabilities and 11 telltale signs of AI-generated content.

Today, I want to show you how I personally apply these insights in my editing process.

Image Credit: Lyna ™

Rather than seeing AI as my replacement, I advocate for thoughtful collaboration between human creativity and AI efficiency, much like how skilled DJs don’t just play songs but transform them through artistic mixing.

As someone who’s spent countless hours editing and tinkering with AI-generated drafts, I’ve noticed most people get stuck on grammar fixes while missing what truly makes writing connect with readers.

They overlook deeper considerations like:

- Purposeful imperfection: Truly human writing isn’t perfectly polished. Natural quirks, occasional tangents, and varied sentence structures create authenticity that perfect grammar and flawless organization can’t replicate.

- Emotional intelligence: AI content often lacks the intuitive emotional resonance that comes from lived experience. Editors frequently correct grammar but overlook opportunities to infuse genuine emotional depth.

- Cultural context: Humans naturally reference shared cultural touchpoints and adapt their tone based on context. This awareness is difficult to edit into AI content without completely reframing passages.

In today’s Memo, I explain how to turn these edits into a recurring workflow for you or your team, so you can leverage the power of AI, accelerate content output, and drive more organic revenue.


Turning AI-Editing Into A Workflow

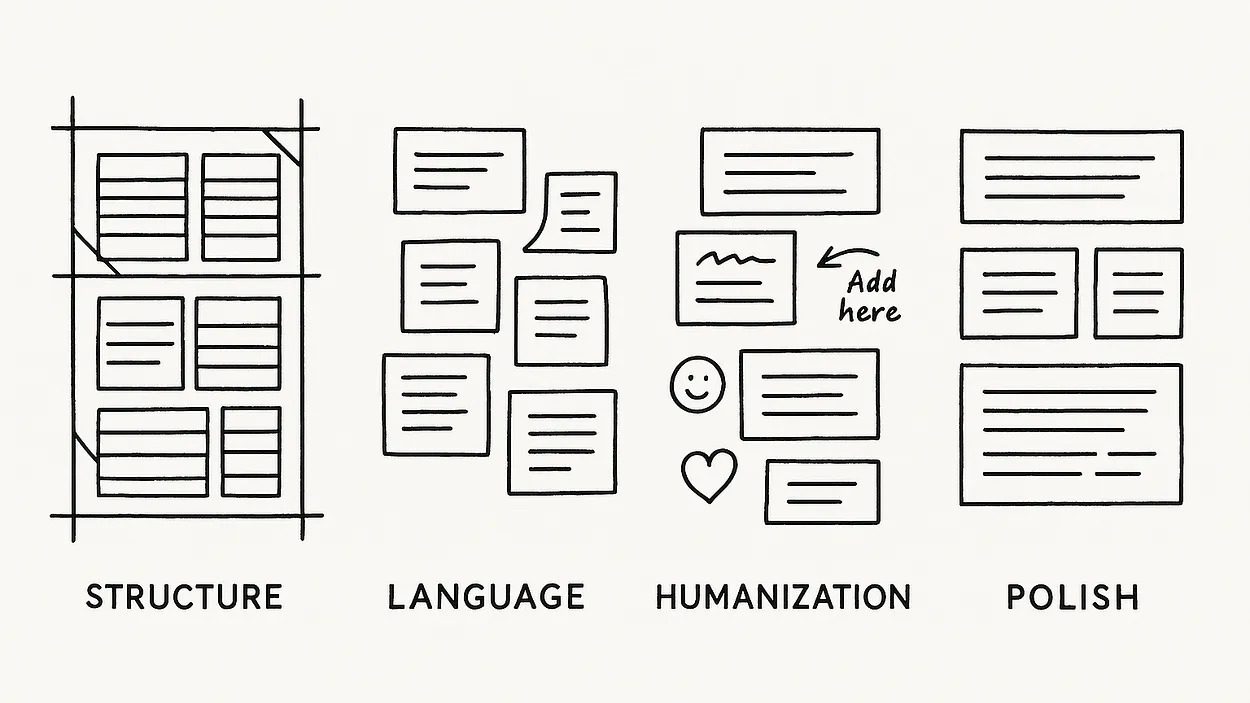

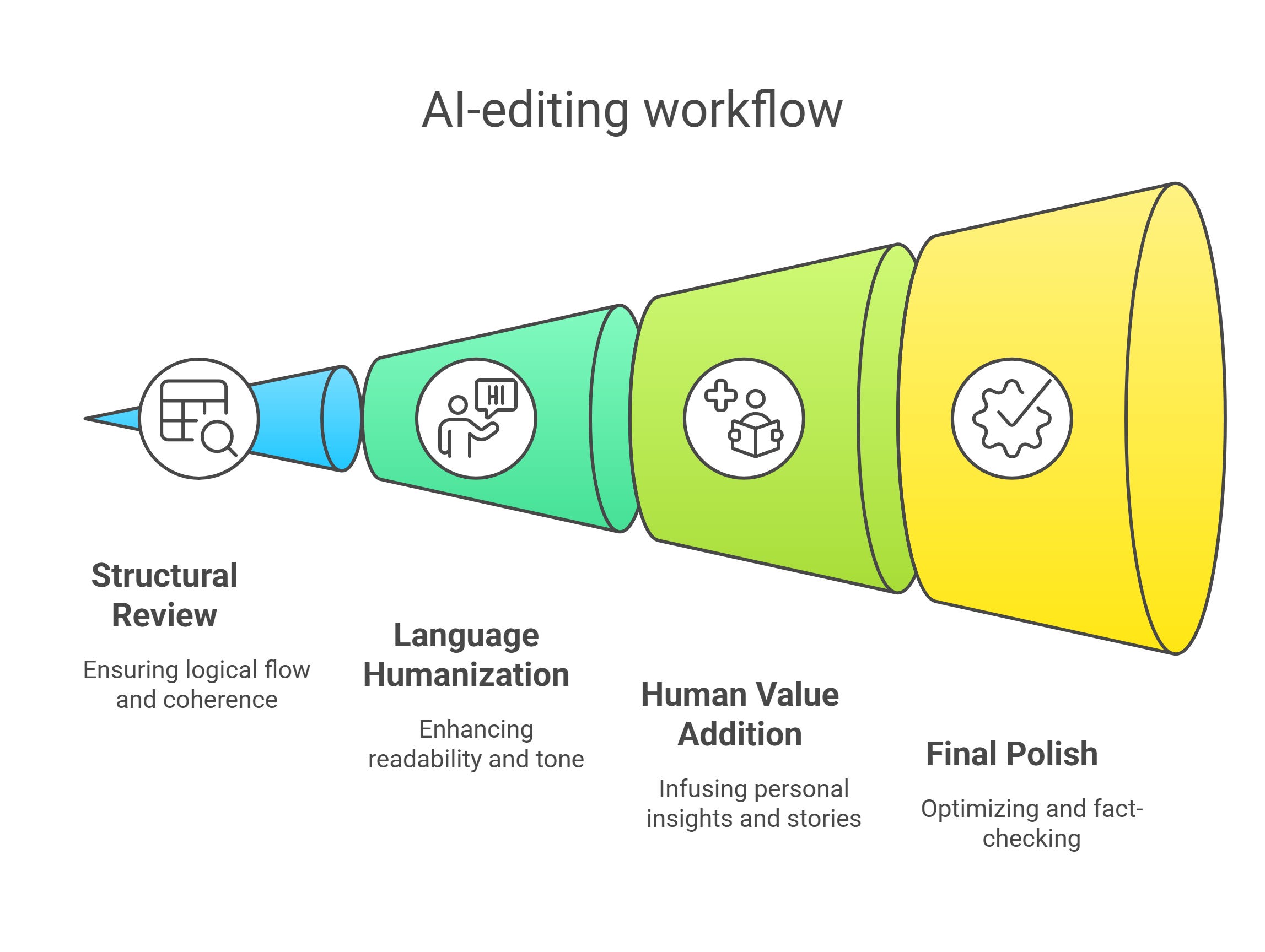

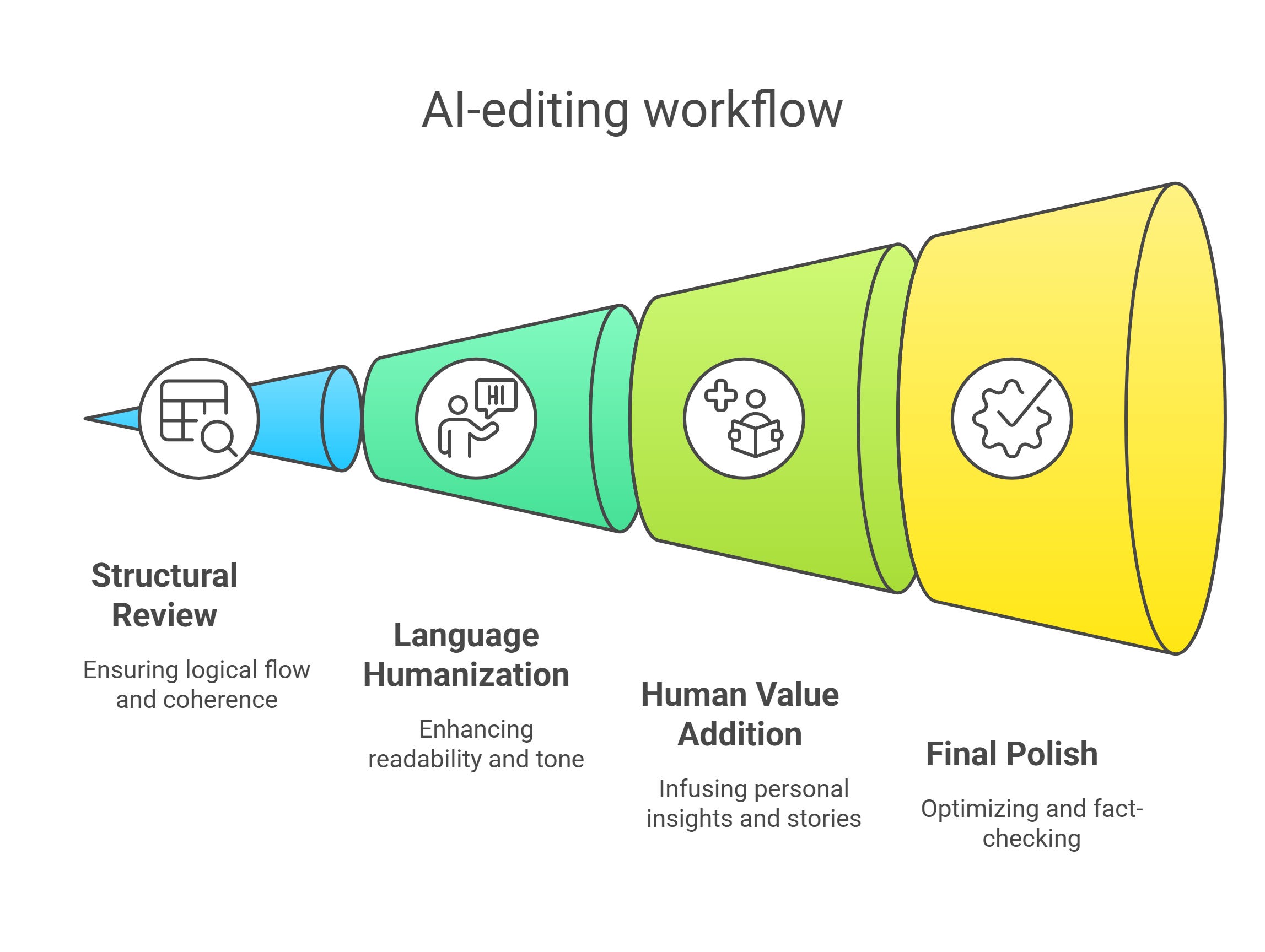

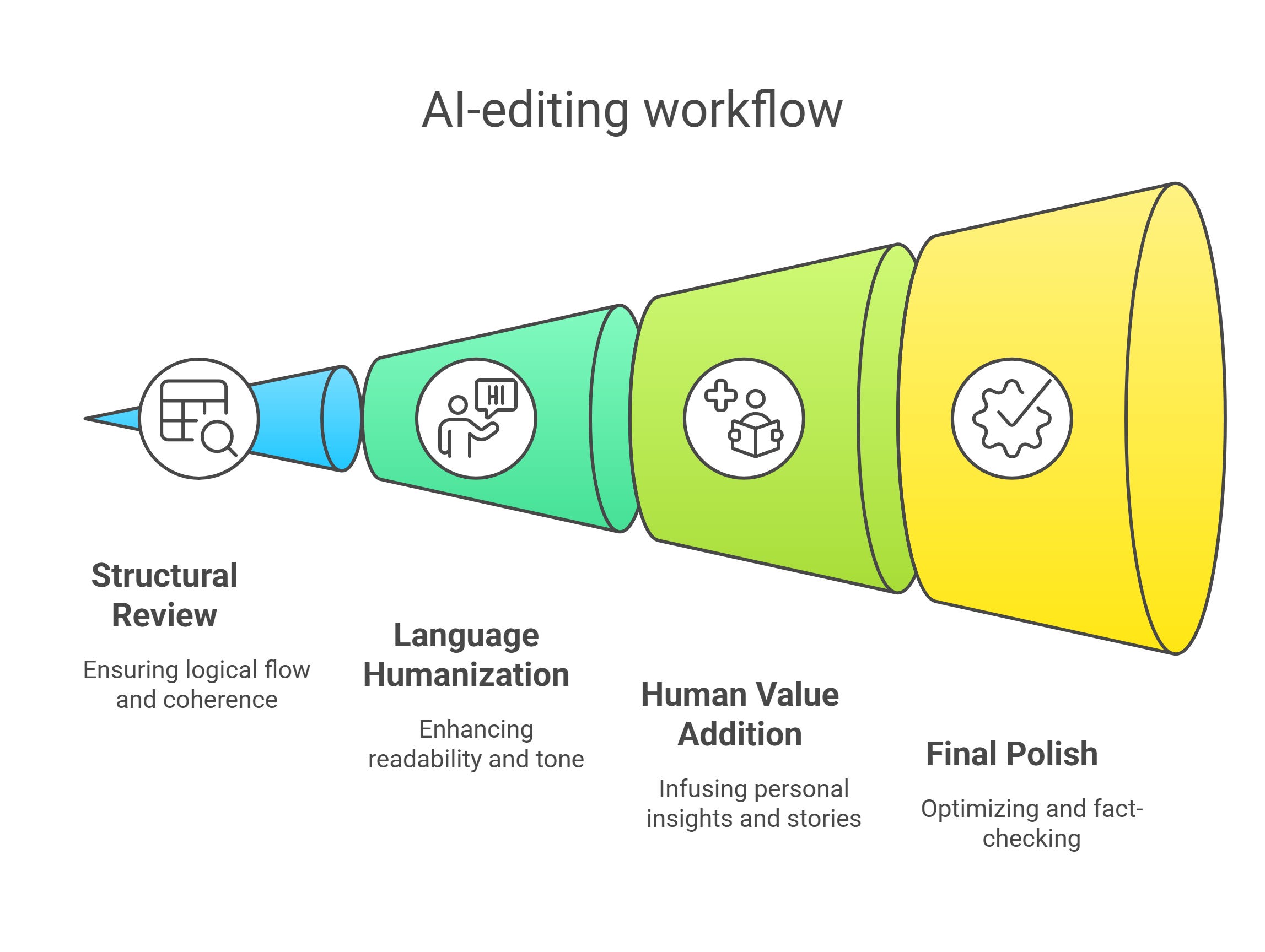

I like to edit AI content in several passes, each with a specific focus:

- Round 1: Structure.

- Round 2: Language.

- Round 3: Humanization.

- Round 4: Polish.

Not every type of content needs the same amount of editing:

- You can be more hands-off with supporting content on category or product pages, while editorial content for blogs or content hubs needs significantly more editing.

- In the same way, evergreen topics need less editing while thought leadership needs a heavy editorial hand.

Round 1: Structure & Big-Picture Review

First, I read the entire draft like a skeptical reader would.

I’m looking for logical flow issues, redundant sections, and places where the AI went on unhelpful tangents.

This is about getting the bones right before polishing sentences.

Rather than nitpicking grammar, I ask: “Does this piece make sense? Would a human actually structure it this way?”

But the most important question is: “Does this piece meet user intent?” Make sure the structure gets readers to the insight quickly and helps them solve the problem implied by their search.

If sections feel out of order or disconnected, I rearrange them.

If the AI repeats the same point in multiple places (AI models love doing this), I consolidate.
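Spotting repeated points by eye gets harder in long drafts, so a rough script can help with a first pass. The sketch below is not part of my actual toolkit. It assumes a draft split into paragraphs and uses simple word overlap (Jaccard similarity) as a stand-in for "the same point." The function name `find_repeated_points` and the 0.6 threshold are hypothetical choices for illustration.

```python
import re
from itertools import combinations


def _word_set(paragraph: str) -> set:
    """Lowercase the paragraph and return its set of distinct words."""
    return set(re.findall(r"[a-z]+", paragraph.lower()))


def find_repeated_points(paragraphs, threshold=0.6):
    """Return (i, j, score) for paragraph pairs whose word overlap meets the threshold.

    The score is Jaccard similarity: shared words divided by all distinct words.
    The 0.6 default is an assumed starting point, not a tested value.
    """
    hits = []
    for (i, a), (j, b) in combinations(enumerate(paragraphs), 2):
        words_a, words_b = _word_set(a), _word_set(b)
        union = words_a | words_b
        if not union:
            continue  # skip pairs where both paragraphs are empty
        score = len(words_a & words_b) / len(union)
        if score >= threshold:
            hits.append((i, j, round(score, 2)))
    return hits


draft = [
    "Internal links help readers find related pages.",
    "Write a punchy opening.",
    "Internal links help readers find related content.",
]
print(find_repeated_points(draft))
```

Word overlap only catches near-verbatim repetition. A point restated in different words will slip through, so this narrows down where to look rather than replacing the read-through.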

Round 2: Humanize The Language & Flow

Next, I tackle that sterile AI tone that makes readers’ eyes glaze over.

I break up the robotic rhythm by:

- Consciously varying sentence lengths (Watch this. I’m doing it right now. Different lengths create natural cadence.).

- Replacing corporate-speak with how humans actually talk (“use” instead of “utilize,” “start” instead of “commence”).

- Cutting those meaningless filler sentences AI loves to add (“It’s important to note that…” or “As we can see from the above…”).

For example, I’d transform this AI-written line:

Utilizing appropriate methodologies can facilitate enhanced engagement among target demographics.

Into this:

Use the right approach, and people will actually care about what you’re saying.
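If you edit a lot of drafts, parts of this round can be pre-flagged automatically before a human rewrites anything. Here is a minimal sketch, assuming a small hand-maintained word list and filler list seeded from the examples above. The names `PLAIN_WORDS`, `FILLER_PHRASES`, and `flag_language_issues` are hypothetical, and the sentence splitter is deliberately naive.

```python
import re
from statistics import pstdev

# Hypothetical lists, seeded from the examples in this section. Extend as needed.
PLAIN_WORDS = {"utilize": "use", "utilizing": "using", "commence": "start"}
FILLER_PHRASES = ["it's important to note that", "as we can see from the above"]


def flag_language_issues(text: str) -> dict:
    """Flag corporate-speak and filler phrases, and measure sentence-length spread."""
    # Normalize case and curly apostrophes so phrase matching is consistent.
    normalized = text.lower().replace("\u2019", "'")
    words = re.findall(r"[a-z']+", normalized)
    swaps = {w: PLAIN_WORDS[w] for w in words if w in PLAIN_WORDS}
    fillers = [p for p in FILLER_PHRASES if p in normalized]
    # Naive split on ., !, ? (abbreviations like "e.g." will be miscounted).
    sentences = [s for s in re.split(r"[.!?]+\s*", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    # A low spread suggests the uniform, robotic rhythm described above.
    spread = pstdev(lengths) if len(lengths) > 1 else 0.0
    return {
        "swaps": swaps,
        "fillers": fillers,
        "sentence_lengths": lengths,
        "length_spread": spread,
    }


sample = (
    "Utilizing appropriate methodologies can facilitate enhanced engagement "
    "among target demographics. It's important to note that we commence soon."
)
print(flag_language_issues(sample))
```

The script only flags candidates. Deciding whether “utilize” is actually wrong in context, and what the rewritten sentence should sound like, is still the editor’s job.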

Round 3: Add The Human Value Only You Can Provide

Here’s where I earn my keep.

I infuse the piece with:

- Opinions where appropriate.

- Personal stories or examples.

- Unique metaphors or cultural references.

- Nuanced insights that come from my expertise.

One of the shifts we have to make – and that I made – is to be more deliberate about collecting stories and opinions that we can share.

In his book “Storyworthy,” Matthew Dicks shares how he saves stories from everyday life in a spreadsheet. He calls this habit Homework For Life, and it’s the most effective way to collect relatable stories that you can use for your content. It’s also a way to slow down time:

As you begin to take stock of your days, find those moments — see them and record them — time will begin to slow down for you. The pace of your life will relax.

Round 4: Final Polish & Optimization

Finally, I do a last pass focusing on:

- A punchy opening that hooks the reader.

- Removing any lingering AI patterns (overly formal language, repetitive phrases).

- Search optimization (user intent, headings, keywords, internal links) without sacrificing readability.

- Fact-checking every statistic, date, name, and claim.

- Adding calls to action or questions that engage readers.

I know I’ve succeeded when I read the final piece and genuinely forget that an AI was involved in the drafting process.

The ultimate test: “Would I be proud to put my name on this?”

AI Content Editing Checklist

Before you hit “Publish,” run through this checklist to make sure you’ve covered all bases:

- ✅ User Intent: The content is organized logically and addresses the intended topic or keyword completely, without off-topic detours.

- ✅ Tone & Voice: The writing sounds consistently human and aligns with brand voice (e.g., friendly, professional, or witty).

- ✅ Readability: Sentences and paragraphs are concise and easy to read. Jargon is explained or simplified. The formatting (headings, lists, etc.) makes it skimmable.

- ✅ Repetition: No overly repetitive phrases or ideas. Redundant content is trimmed. The language is varied and interesting.

- ✅ Accurate: All facts, stats, names, and claims have been verified. Any errors are corrected. Sources are cited for important or non-obvious facts, lending credibility. There are no unsupported claims or outdated information.

- ✅ Original Value: The content contains unique elements (experiences, insights, examples, opinions) that did not come from AI.

- ✅ SEO: The primary keyword and relevant terms are included naturally. Title and headings are optimized and clear. Internal links to related content are added where relevant. External links to authoritative sources support the content.

- ✅ Polish: The introduction is compelling. The content includes elements that engage the reader (questions, conversational bits) and a call to action. It’s free of typos and grammatical errors. All sentences flow well.

If you can check off all (or most) of these items, you’ve likely turned the AI draft into a high-quality piece that can confidently be published.
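For teams, the checklist can live in a small script or a publishing tool so nothing gets skipped. The sketch below is one possible shape, not a prescribed implementation. The item names mirror the list above, while `review_checklist` and the 75% cutoff for “most” are assumptions made for illustration.

```python
# Item names mirror the checklist above.
CHECKLIST_ITEMS = [
    "User Intent",
    "Tone & Voice",
    "Readability",
    "Repetition",
    "Accurate",
    "Original Value",
    "SEO",
    "Polish",
]


def review_checklist(checked, min_share=0.75):
    """Report which items are still open and whether the draft clears the bar.

    min_share is an assumed reading of "all (or most)"; adjust it to your own standard.
    """
    missing = [item for item in CHECKLIST_ITEMS if item not in checked]
    share = (len(CHECKLIST_ITEMS) - len(missing)) / len(CHECKLIST_ITEMS)
    return {"missing": missing, "share": share, "ready": share >= min_share}


done = {"User Intent", "Tone & Voice", "Readability", "Repetition", "Accurate", "Original Value"}
print(review_checklist(done))
```

You may want to treat some items, such as accuracy, as hard requirements rather than counting them toward a share. That is a policy choice this sketch leaves open.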

AI Content Editing = Remixing

We’ve come full circle.

Just as digital technology transformed DJing without eliminating the need for human creativity and curation, AI is reshaping writing while still requiring our uniquely human touch.

The irony I mentioned at the start of this article – trying to make AI content more human – becomes less ironic when we view AI as a collaborative tool rather than a replacement for human creativity.

Just as DJs evolved from vinyl crates to digital platforms without losing their artistic touch, writers are adapting to use AI while maintaining their unique value.

You can raise the chances of creating high-performing content that stands out by selecting the right models, inputs, and direction:

- The newest models produce noticeably better content than older (cheaper) ones. Don’t try to save money here.

- Spend a lot of time getting style guides and examples right so the models work in the right lanes.

- The more unique your data sources are, the more defensible your AI draft becomes.

The key insight is this: AI content editing is about enhancing the output with the irreplaceable human elements that make content truly engaging.

Whether that’s through adding lived experience, cultural understanding, emotional depth, or purposeful imperfection, our role is to be the bridge between AI’s computational efficiency and human connection.

The future belongs not to those who resist AI but to those who learn to dance with it, knowing exactly when to lead with their uniquely human perspective and when to follow the algorithmic beat.

Back in my DJ days, the best sets weren’t about the equipment I used but about the moments of unexpected connection I created.

The same holds true for writing in this new era.

Featured Image: Paulo Bobita/Search Engine Journal