AI Is Changing Buying Behavior, Study Finds

Artificial intelligence is driving global shopping experiences, according to Capgemini Research Institute’s annual trends report.

“What matters to today’s consumer 2025,” published Jan. 9, recaps the firm’s survey in October and November 2024 of 12,000 consumers in Australia, Canada, France, Germany, India, Italy, Japan, the Netherlands, Spain, Sweden, the United Kingdom, and the United States.

The 100-page report focuses on how consumers discover products, how they shop, and why they switch brands.

Product Discovery

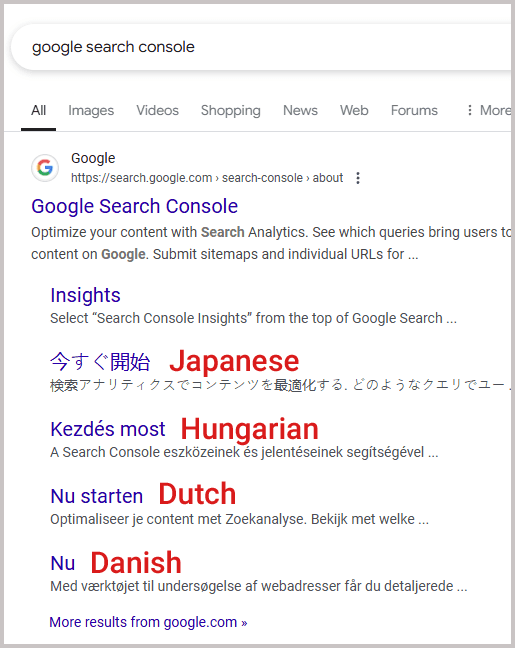

ChatGPT and other generative AI platforms have largely replaced traditional search engines for product recommendations, according to the survey. Nearly two-thirds of Gen Zs (ages 18-25), Millennials (26-41), and Gen Xs (42-57) prefer genAI for that purpose. Only Boomers (58 and over) still favor Google and other search engines for recommendations.

Moreover, genAI is transforming seemingly every touchpoint of the shopping journey. Consumers now ask genAI to curate images and aggregate product searches from multiple platforms. Some have even found virtual assistants more adept than sales associates at making fashion, home décor, and travel recommendations.

In December we addressed the power of influencers and social media for gift recommendations. An Adobe survey found that 20% of all U.S. Cyber Monday sales came from influencer endorsements. The Capgemini survey confirms those findings and more, reporting:

- 32% of consumers purchased products through social media.

- 68% of Gen Zs have discovered a product or brand through social.

Shopping

Consumers are responding to retail media, according to the survey.

- 67% of respondents notice ads on retailer sites.

- 35% found the ads helpful.

- 22% discovered products from those ads.

Despite the recent cost-of-living improvements, consumers still seek in-store and online discounts.

- 64% visit multiple physical stores seeking deals.

- 65% buy private-label or low-cost brands.

Consumers also value “quick commerce,” the hyper-fast delivery of online goods. Approximately two-thirds of respondents stated that 2-hour or 10-minute delivery was important to their purchase decisions. Forty-two percent valued the option to order online and pick up in-store.

“Demand for quick commerce is on the rise, with consumers from some geographies increasingly willing to pay for speed and efficiency,” researchers wrote, adding that merchants continue to invest in AI and logistics to improve infrastructures.

Switching Brands

Brand loyalty is increasingly rare among consumers, according to the survey. Researchers advised brands to (i) augment genAI tools to become more consumer-centric, (ii) use technology to lower prices, and (iii) leverage social and retail media networks.