Why Now’s The Time To Adopt Schema Markup via @sejournal, @marthavanberkel

There is no better time for organizations to prioritize Schema Markup.

Why is that so, you might ask?

First of all, Schema Markup (aka structured data) is not new.

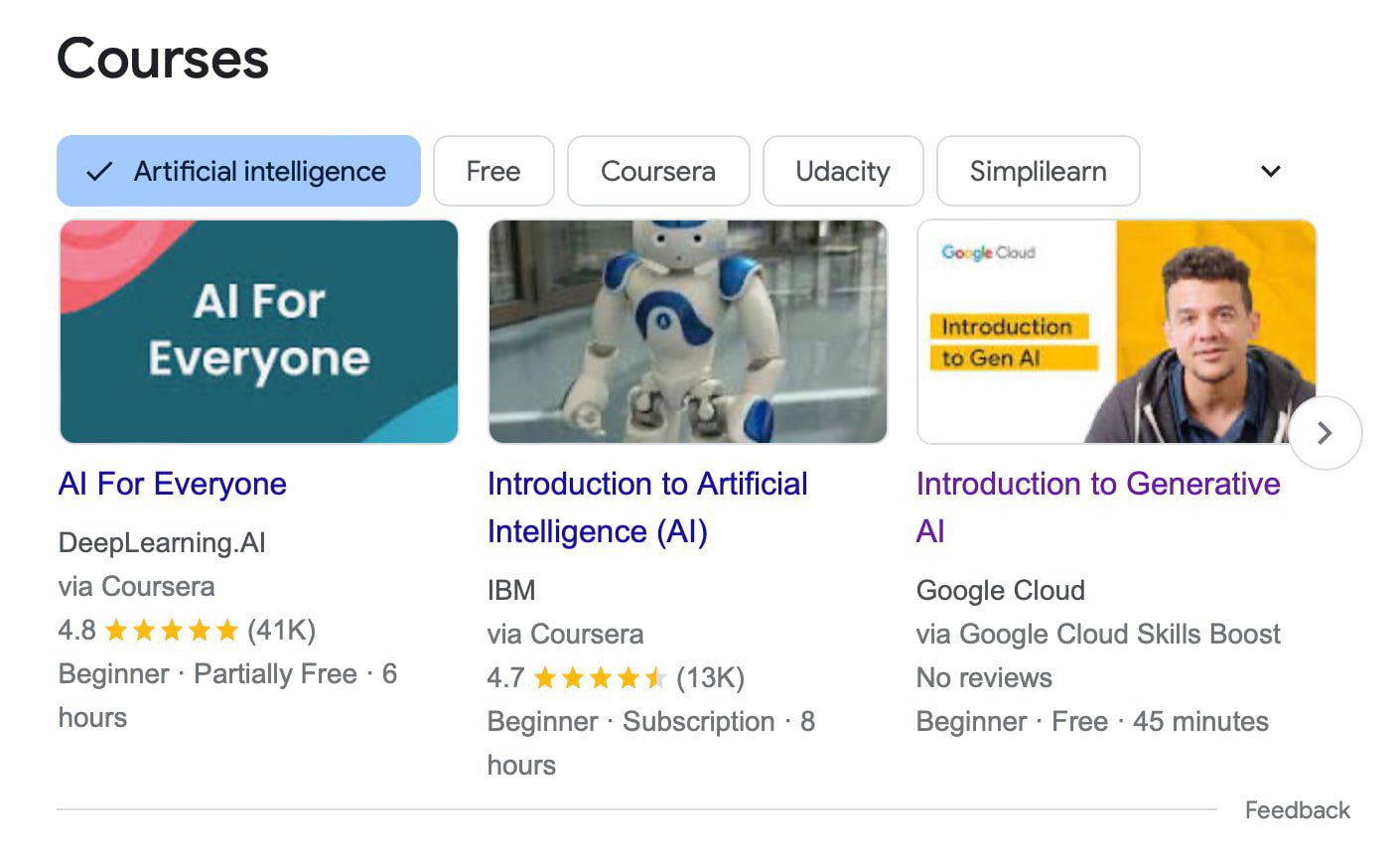

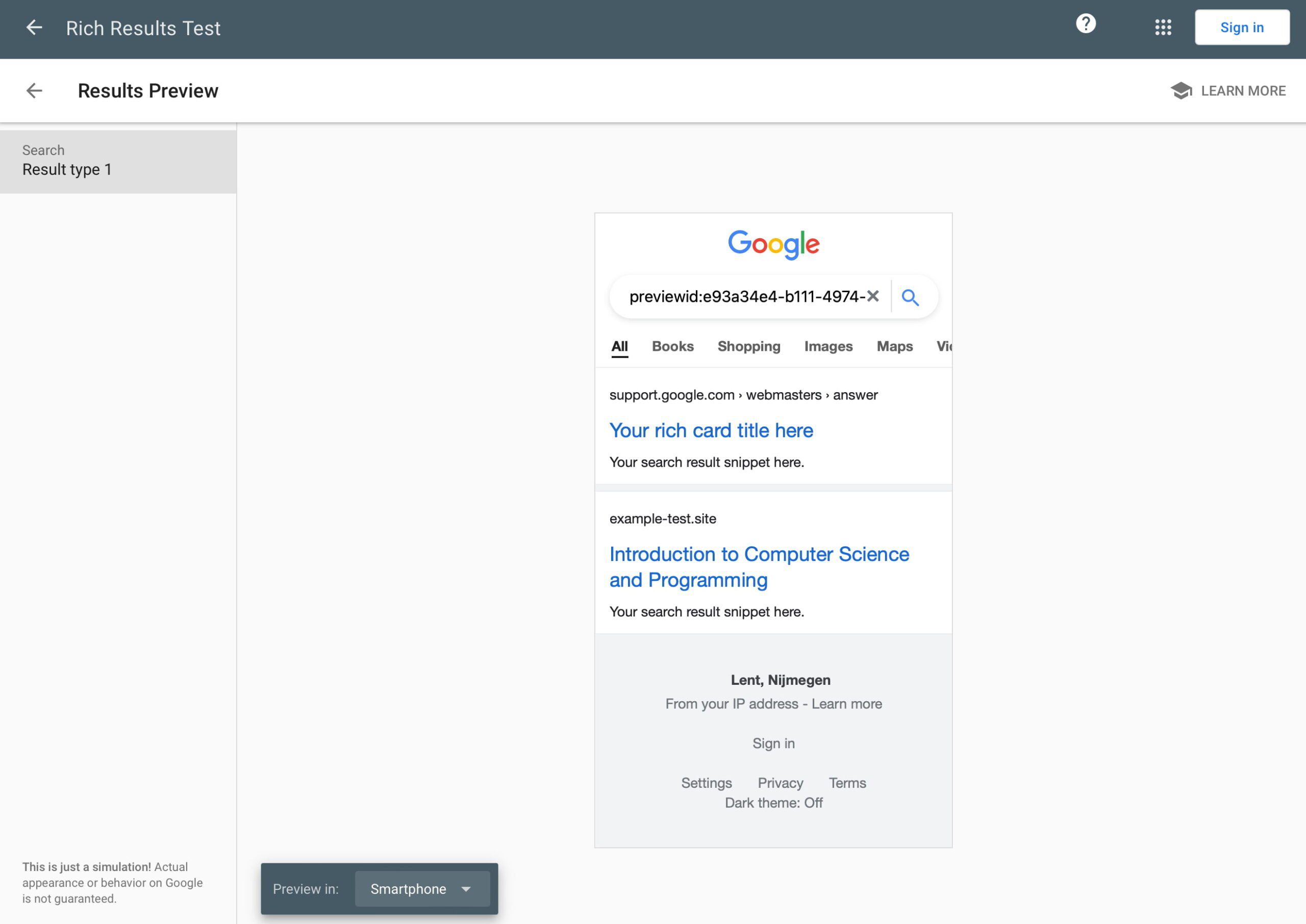

Google has been rewarding sites that implement structured data with rich results. If you haven’t yet taken advantage of rich results, now is the time to earn the higher click-through rates these visual search features can bring.

Secondly, now that search is primarily driven by AI, helping search engines understand your content is more important than ever.

Schema Markup allows your organization to clearly articulate what your content means and how it relates to other things on your website.

The final reason to adopt Schema Markup is that, when done correctly, you can build a content knowledge graph, which is a critical enabler in the age of generative AI. Let’s dig in.

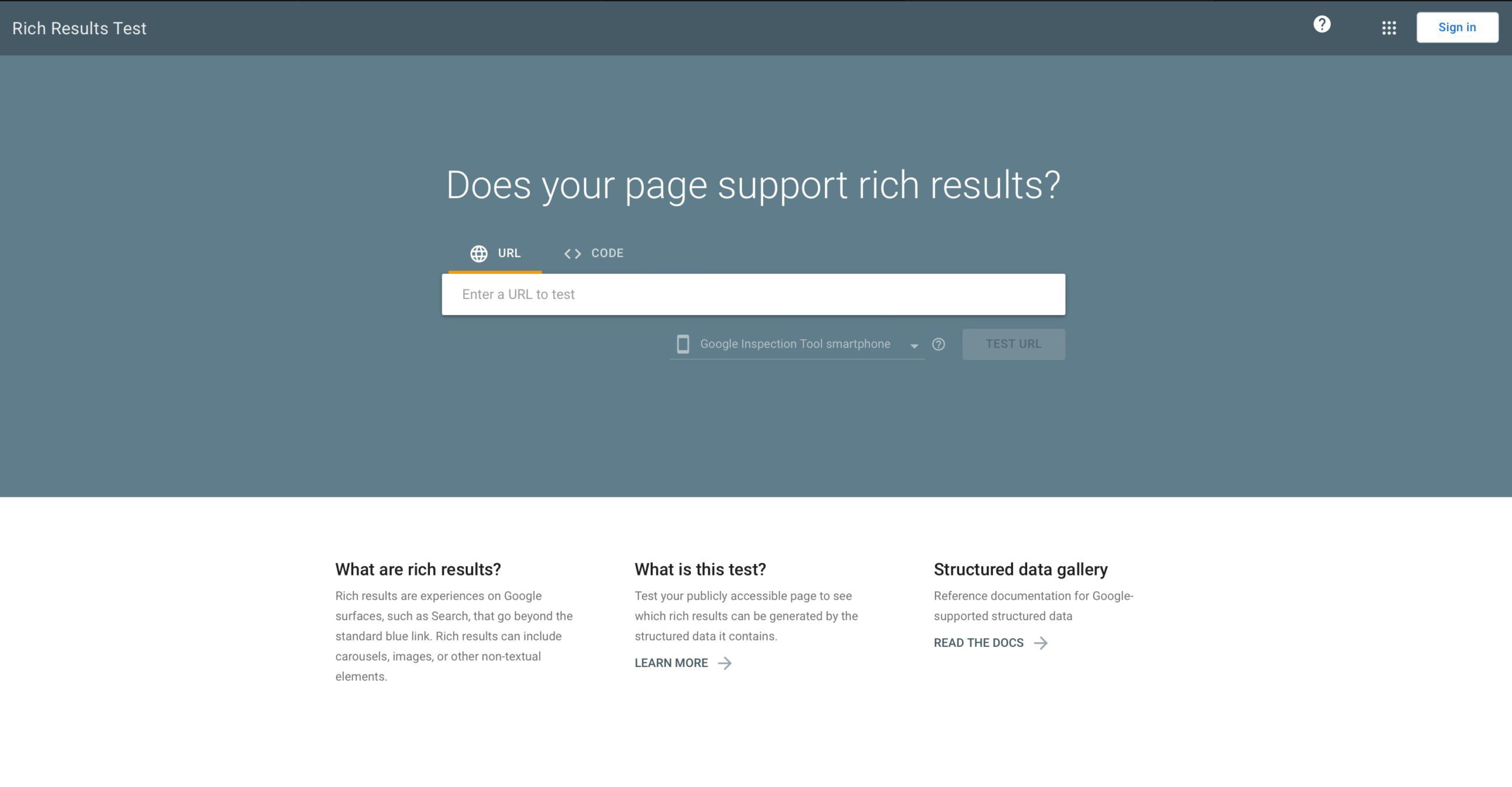

Schema Markup For Rich Results

Schema.org has been around since 2011. Back then, Google, Bing, Yahoo, and Yandex worked together to create the standardized Schema.org vocabulary to enable website owners to translate their content to be understood by search engines.

Since then, Google has incentivized websites to implement Schema Markup by awarding rich results to websites with certain types of markup and eligible content.

Websites that achieve these rich results tend to see higher click-through rates from the search engine results page.

In fact, Schema Markup is one of the best-documented SEO tactics that Google itself tells you to use. While so much of SEO has to be reverse-engineered, this tactic is straightforward and highly recommended.

You might have delayed implementing Schema Markup due to the lack of applicable rich results for your website. That might have been true at one point, but I’ve been working with Schema Markup since 2013, and the number of available rich results keeps growing.

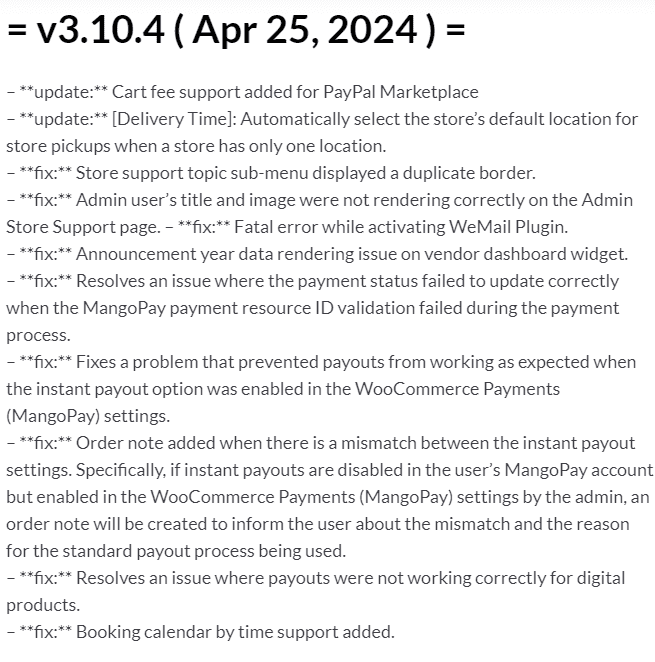

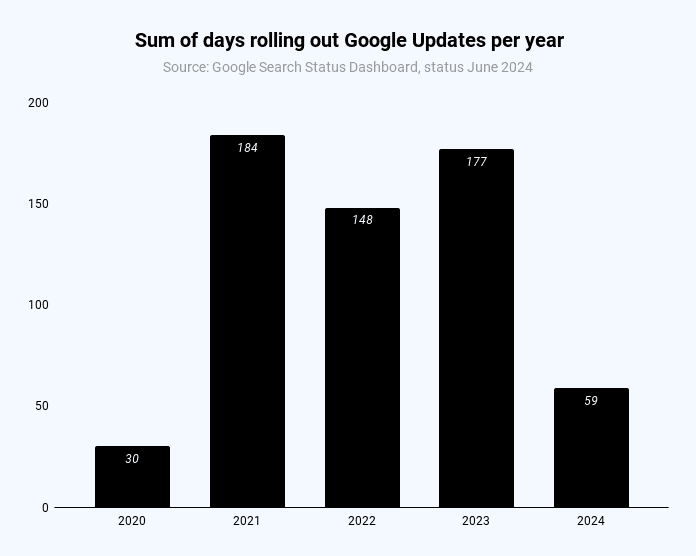

Although Google deprecated how-to rich results and restricted the eligibility of FAQ rich results in August 2023, it introduced seven new rich results in the months that followed, the most introduced in a single year!

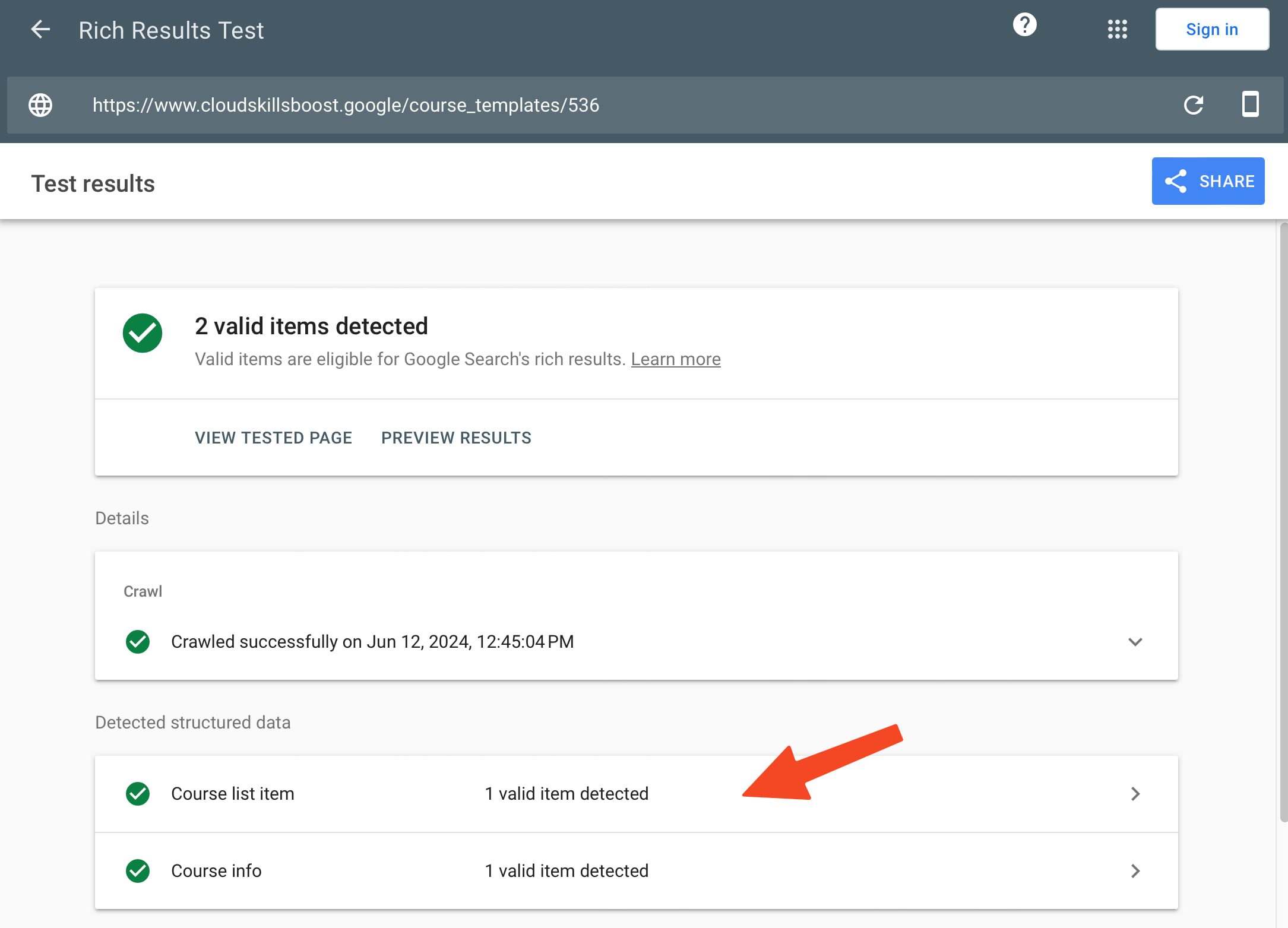

These rich results include vehicle listing, course info, profile page, discussion forum, organization, vacation rental, and product variants.

There are now 35 rich results that you can use to stand out in search, and they apply to a wide range of industries such as healthcare, finance, and tech.

Here are some widely applicable rich results you should consider utilizing:

- Breadcrumb.

- Product.

- Reviews.

- JobPosting.

- Video.

- Profile Page.

- Organization.

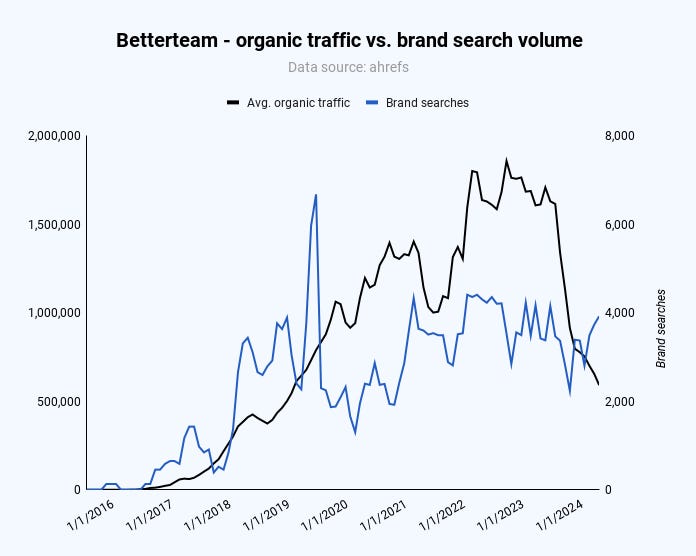

With so many opportunities to take control of how you appear in search, it’s surprising that more websites haven’t adopted Schema Markup.

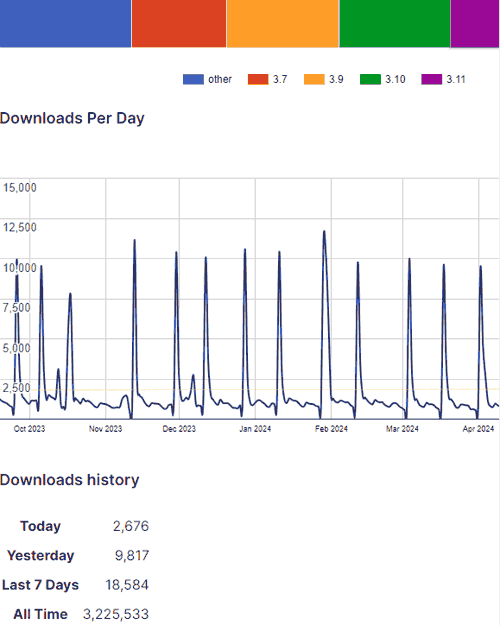

A statistic from Web Data Commons’ October 2023 Extractions Report showed that only 50% of pages had structured data.

Among the pages with JSON-LD markup, these were the most common entity types:

- http://schema.org/ListItem (2,341,592,788 Entities)

- http://schema.org/ImageObject (1,429,942,067 Entities)

- http://schema.org/Organization (907,701,098 Entities)

- http://schema.org/BreadcrumbList (817,464,472 Entities)

- http://schema.org/WebSite (712,198,821 Entities)

- http://schema.org/WebPage (691,208,528 Entities)

- http://schema.org/Offer (623,956,111 Entities)

- http://schema.org/SearchAction (614,892,152 Entities)

- http://schema.org/Person (582,460,344 Entities)

- http://schema.org/EntryPoint (502,883,892 Entities)

(Source: October 2023 Web Data Commons Report)

Most of the types on the list are related to the rich results mentioned above.

For example, ListItem and BreadcrumbList are required for the Breadcrumb Rich Result, SearchAction is required for Sitelink Search Box, and Offer is required for the Product Rich Result.

This tells us that most websites are using Schema Markup for rich results.
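To make the Breadcrumb example concrete, here is a minimal Python sketch that builds BreadcrumbList markup out of ListItem entities and prints it as JSON-LD. The helper name `breadcrumb_jsonld` and the example.com URLs are assumptions made for illustration; the required and recommended properties for your pages should be checked against Google’s structured data documentation.

```python
import json


def breadcrumb_jsonld(trail):
    """Build a BreadcrumbList from (name, url) pairs ordered from the root page down."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            # Each step in the trail becomes a ListItem with a 1-based position.
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(trail, start=1)
        ],
    }


# Hypothetical page trail; replace with your own site structure.
trail = [
    ("Home", "https://www.example.com/"),
    ("Blog", "https://www.example.com/blog/"),
    ("Schema Markup", "https://www.example.com/blog/schema-markup/"),
]

markup = breadcrumb_jsonld(trail)

# The printed JSON is what would go inside a <script type="application/ld+json"> tag.
print(json.dumps(markup, indent=2))
```

Notice how little this markup says about the page itself: it describes where the page sits in the site, not what the page means.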

Although these Schema.org types can help your site achieve rich results and stand out in search, they don’t necessarily tell search engines in detail what each page is about, nor do they make your site more semantic.

Help AI Search Engines Understand Your Content

Have you ever seen your competitors’ sites using specific Schema.org types that are not found in Google’s structured data documentation (e.g., MedicalClinic, IndividualPhysician, Service)?

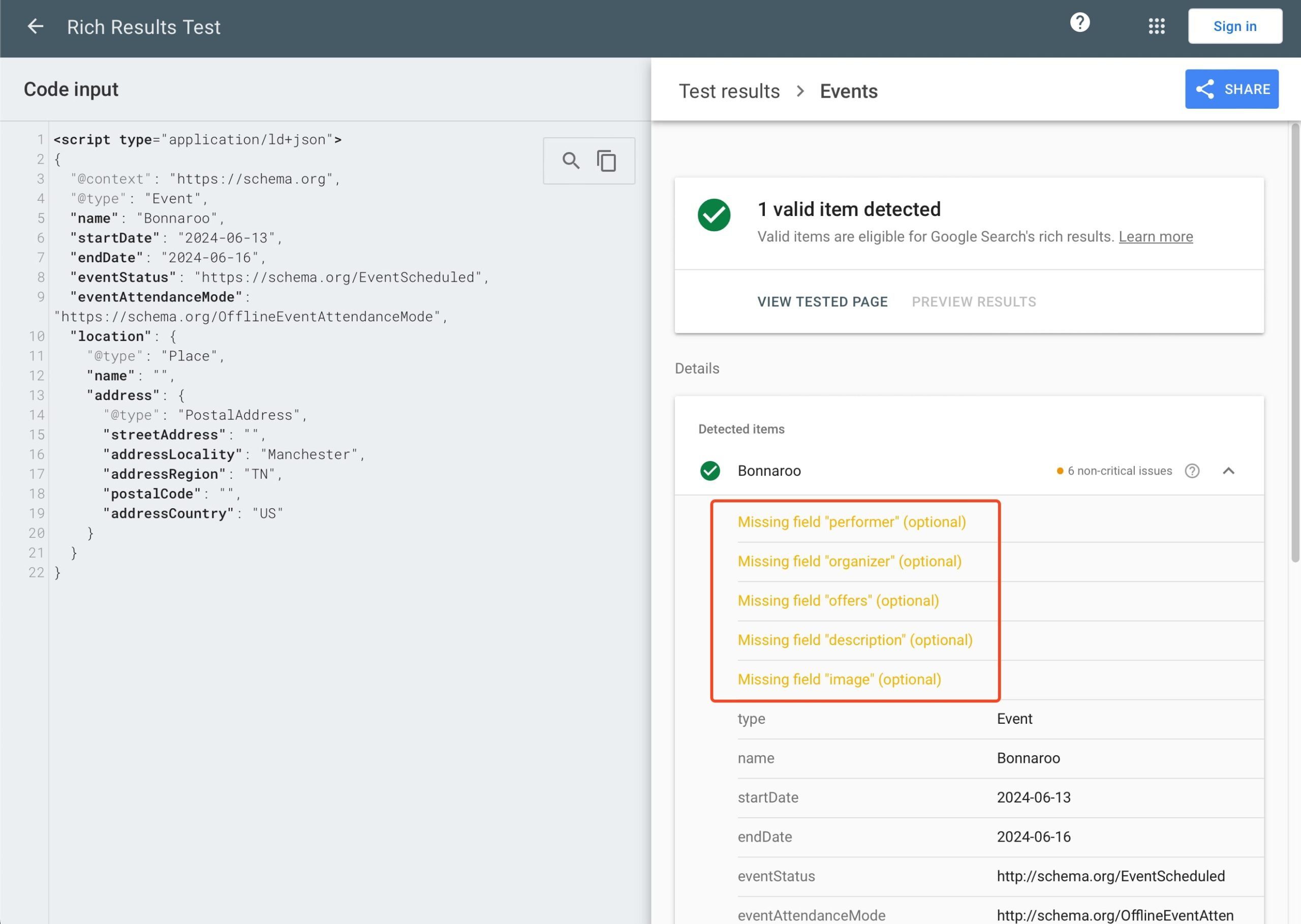

The Schema.org vocabulary has over 800 types and properties to help websites explain what the page is about. However, Google’s structured data features only require a small subset of these properties for websites to be eligible for a rich result.

Many websites that solely implement Schema Markup to get rich results tend to be less descriptive with their Schema Markup.

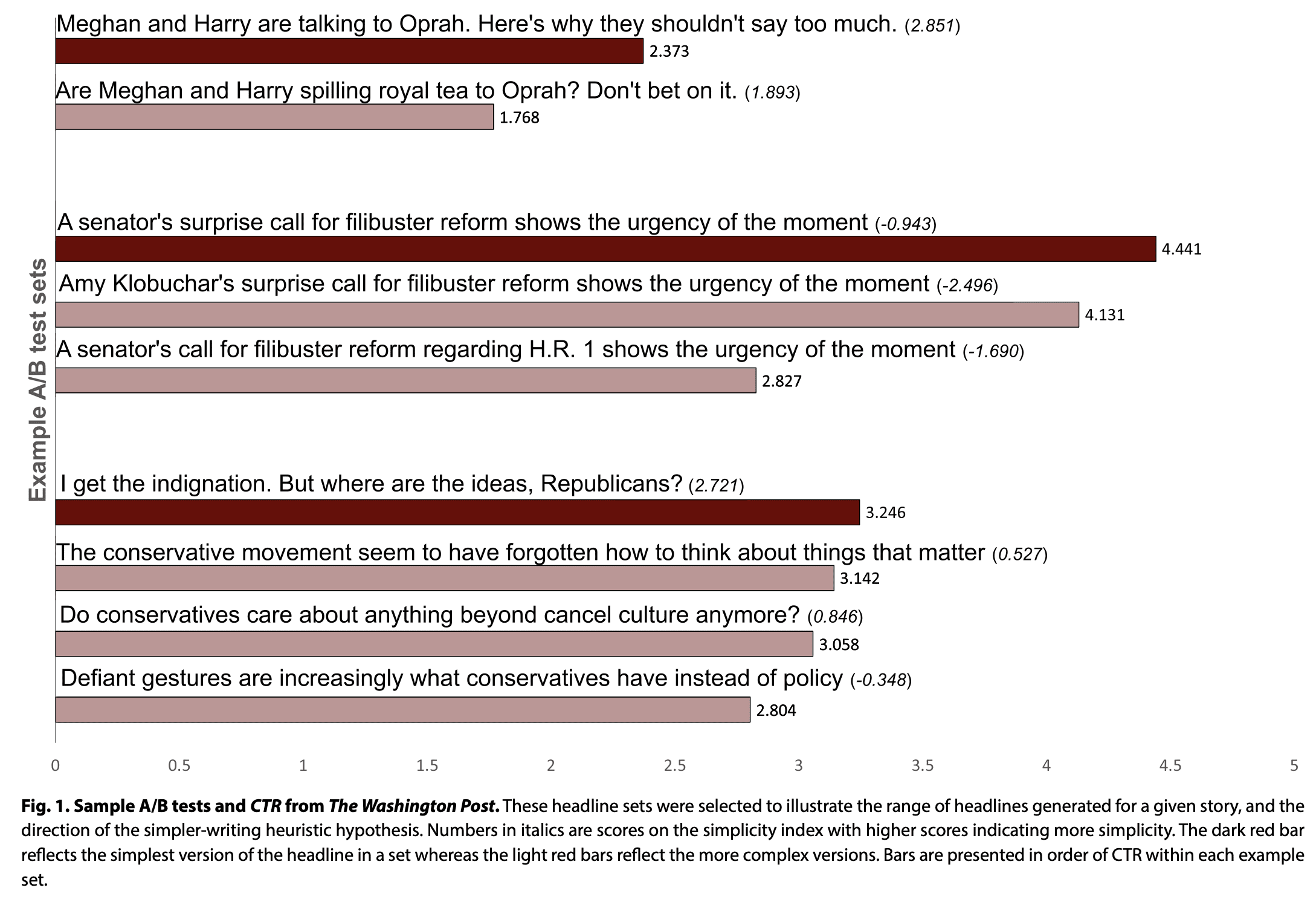

AI search engines now look at the meaning and intent behind your content to provide users with more relevant search results.

Therefore, organizations that want to stay ahead should use more specific Schema.org types and leverage appropriate properties to help search engines better understand and contextualize their content. You can be descriptive with your content while still achieving rich results.

For example, many types in the Schema.org vocabulary (e.g., Article and Person) have 40 or more properties to describe the entity.

The properties are there to help you fully describe what the page is about and how it relates to other things on your website and the web. In essence, it’s asking you to describe the entity or topic of the page semantically.
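As a rough illustration of the difference, the Python sketch below contrasts markup that uses a generic type and a handful of properties with markup that uses the more specific MedicalClinic type and additional Schema.org properties. Every name, URL, and value is a hypothetical placeholder, and `extra_properties` is a helper invented for this example; treat it as a sketch of the idea, not a recommended template.

```python
import json

# Generic markup: a broad type and only a few properties (all values hypothetical).
minimal = {
    "@context": "https://schema.org",
    "@type": "LocalBusiness",
    "name": "Example Pediatric Clinic",
    "address": "123 Main St, Springfield",
    "telephone": "+1-555-0100",
}

# Descriptive markup: a specific type, structured values, and links to other entities.
descriptive = {
    "@context": "https://schema.org",
    "@type": "MedicalClinic",
    "@id": "https://www.example.com/clinic/#clinic",
    "name": "Example Pediatric Clinic",
    "url": "https://www.example.com/clinic/",
    "telephone": "+1-555-0100",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Main St",
        "addressLocality": "Springfield",
        "addressCountry": "US",
    },
    "medicalSpecialty": "https://schema.org/Pediatric",
    # Points at another entity on the same (hypothetical) site by its @id.
    "parentOrganization": {"@id": "https://www.example.com/#organization"},
    "sameAs": ["https://www.linkedin.com/company/example-clinic"],
}


def extra_properties(base, richer):
    """Return properties found only in the richer markup, ignoring JSON-LD keywords."""
    return sorted(key for key in richer.keys() - base.keys() if not key.startswith("@"))


print(extra_properties(minimal, descriptive))
print(json.dumps(descriptive, indent=2))
```

The extra properties are where the meaning lives: they state what kind of clinic this is, which organization it belongs to, and where else on the web it is described.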

The word ‘semantic’ is about understanding the meaning of language.

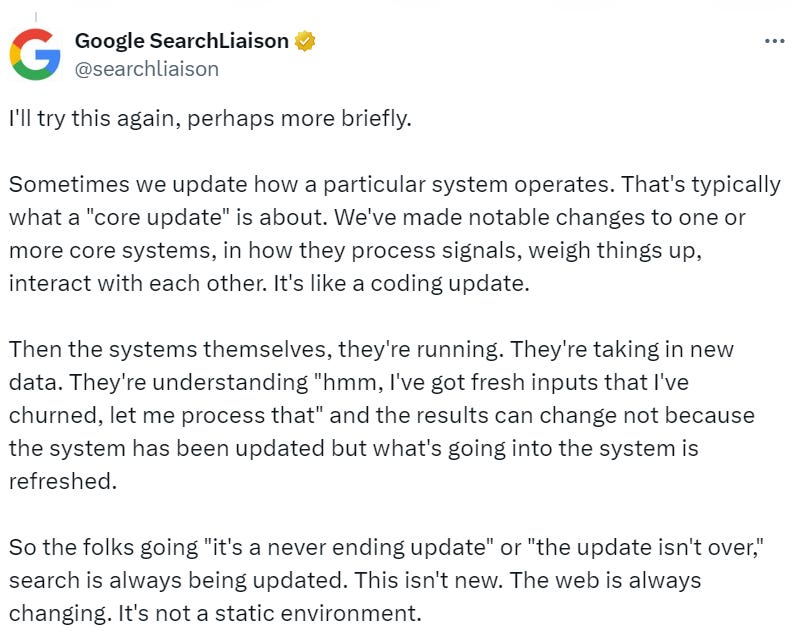

Note that the word “understanding” is part of the definition. Funnily enough, in October 2023, John Mueller at Google released a Search Update video. In this six-minute video, he leads with an update on Schema Markup.

For the first time, Mueller described Schema Markup as “a code you can add to your web pages, which search engines can use to better understand the content.”

While Mueller has historically spoken a lot about Schema Markup, he typically talked about it in the context of rich result eligibility. So, why the change?

This shift in thinking about Schema Markup for enhanced search engine understanding makes sense. With AI’s growing role and influence in search, we need to make it easy for search engines to consume and understand the content.

Take Control Of AI By Shaping Your Data With Schema Markup

If being understood and standing out in search are not reason enough to get started, then helping your enterprise take control of its content and prepare it for artificial intelligence should be.

In February 2024, Gartner published a report on “30 Emerging Technologies That Will Guide Your Business Decisions,” highlighting generative AI and knowledge graphs as critical emerging technologies companies should invest in within the next 0-1 years.

Knowledge graphs are collections of relationships between entities defined using a standardized vocabulary that enables new knowledge to be gained by way of inferencing.

Good news! When you implement Schema Markup to define and connect the entities on your site, you are creating a content knowledge graph for your organization.
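Here is a small Python sketch of what “define and connect the entities” can look like in practice. It models four hypothetical entities that reference one another by `@id`, flattens those references into subject-property-object triples, and then runs a simple two-hop lookup as a stand-in for inferencing. The URLs, names, and both helper functions are assumptions made for illustration; a production knowledge graph would typically rely on a proper graph store and reasoner.

```python
# Hypothetical entities from one website; every URL and name is a placeholder.
ORG = "https://www.example.com/#organization"
PERSON = "https://www.example.com/team/jane-doe/#person"
ARTICLE = "https://www.example.com/blog/sleep-basics/#article"
SERVICE = "https://www.example.com/services/sleep-clinic/#service"

GRAPH = [
    {"@id": ORG, "@type": "Organization", "name": "Example Health"},
    {"@id": PERSON, "@type": "Person", "name": "Jane Doe", "worksFor": {"@id": ORG}},
    {"@id": SERVICE, "@type": "Service", "name": "Sleep Clinic", "provider": {"@id": ORG}},
    {
        "@id": ARTICLE,
        "@type": "Article",
        "headline": "Sleep Basics",
        "author": {"@id": PERSON},
        "about": {"@id": SERVICE},
    },
]


def triples(nodes):
    """Yield (subject, property, object) for each property that references another node by @id."""
    for node in nodes:
        for prop, value in node.items():
            if isinstance(value, dict) and "@id" in value:
                yield node["@id"], prop, value["@id"]


def organizations_behind_articles(nodes):
    """Two-hop lookup: article -> author -> worksFor, giving the employer of each article's author."""
    edges = list(triples(nodes))
    works_for = {subj: obj for subj, prop, obj in edges if prop == "worksFor"}
    return {
        subj: works_for[obj]
        for subj, prop, obj in edges
        if prop == "author" and obj in works_for
    }


for triple in triples(GRAPH):
    print(triple)

print(organizations_behind_articles(GRAPH))
```

The article’s markup never mentions the organization directly. That connection only becomes available because the entities are linked, which is the kind of derived knowledge a connected graph makes possible.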

Thus, your organization gains a critical enabler for generative AI adoption while reaping its SEO benefits.

Learn more about building content knowledge graphs in my article, Extending Your Schema Markup From Rich Results to Knowledge Graphs.

We can also look at other experts in the knowledge graph field to understand the urgency of implementing Schema Markup.

In a LinkedIn post, Tony Seale, Knowledge Graph Architect at UBS in the UK, said:

“AI does not need to happen to you; organizations can shape AI by shaping their data.

It is a choice: We can allow all data to be absorbed into huge ‘data gravity wells’ or we can create a network of networks, each of us connecting and consolidating our data.”

The “network of networks” Seale refers to is the concept of knowledge graphs: the same kind of knowledge graph that can be built from your web data using semantic Schema Markup.

The AI revolution has only just begun, and there is no better time than now to shape your data, starting with your web content through the implementation of Schema Markup.

Use Schema Markup As The Catalyst For AI

In today’s digital landscape, organizations must invest in new technology to keep pace with the evolution of AI and search.

Whether your goal is to stand out on the SERP or ensure your content is understood as intended by Google and other search engines, the time to implement Schema Markup is now.

With Schema Markup, SEO pros can become heroes, enabling generative AI adoption through content knowledge graphs while delivering tangible benefits, such as increased click-through rates and improved search visibility.

Featured Image by author