Unlocking The Secrets Of Google Ad Auctions via @sejournal, @siliconvallaeys

In the world of search marketing, ad auction dynamics play a crucial role in determining ad placements and costs.

Since the DOJ trial against Google, a few elements of the ad auction have gained visibility in the advertising community.

Due to the nature of the trial, the nuances of the auction have been portrayed as serving primarily to increase ad costs. But while higher costs per click (CPCs) are rightfully viewed with skepticism, consider that they may be a side effect of something advertisers would actually want.

I believe nobody should care about CPC.

Instead, the focus should be on cost per action (CPA), return on ad spend (ROAS), return on investment (ROI), or another metric more closely related to business outcomes than CPC.

If you disagree with that premise, you will disagree with the rest of my post. But if you are willing to consider that a higher CPC is not always a bad thing, read on to learn how to explain it to a boss or client who is always on your case about CPCs being too high.

We’ll explore key components of ad auctions, including ad rank thresholds and reserve prices, out-of-order promotions, Randomized Generalized Second-Price (RGSP) mechanisms, and pCTR normalizers to understand how these elements work to create a more effective advertising ecosystem.

But first, let’s cover some of the basics of the ad auction.

The Importance Of Ad Rank

Ad Rank is a fundamental component of ad auctions, balancing bid amounts with ad quality to determine ad placement on the search results page. The basic formula is:

Ad Rank = Max CPC × predicted CTR

This formula ensures that both bid amount and ad quality are considered when determining ad placement.

Predicted CTR (pCTR) is an estimate of how likely it is that an ad will be clicked when shown for a particular search query. This metric is critical because it reflects the ad’s relevance and expected performance.
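To make the formula concrete, here is a minimal Python sketch of ranking two ads by Ad Rank. The `Ad` class, the function names, and the bid and pCTR values are hypothetical and chosen only for illustration; this is the simplified formula from this post, not Google's production logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ad:
    name: str
    max_cpc: float  # advertiser's maximum bid per click, in dollars
    pctr: float     # predicted click-through rate as a fraction (0.05 = 5%)


def ad_rank(ad: Ad) -> float:
    """Ad Rank = Max CPC x predicted CTR, the basic formula above."""
    return ad.max_cpc * ad.pctr


def rank_ads(ads: list[Ad]) -> list[Ad]:
    """Return the ads sorted from highest to lowest Ad Rank."""
    return sorted(ads, key=ad_rank, reverse=True)


# Hypothetical example: the lower bid wins because its pCTR is three times higher.
ads = [
    Ad("high bid, weak ad", max_cpc=4.00, pctr=0.02),   # Ad Rank of about 0.08
    Ad("low bid, strong ad", max_cpc=2.00, pctr=0.06),  # Ad Rank of about 0.12
]
ranked = rank_ads(ads)
```

In this sketch, the ad with half the bid still ranks first, which is the balance between bid and quality that the formula is meant to achieve.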

pCTR Impacts Actual CPC

The actual cost-per-click (CPC) that advertisers pay in an ad auction is influenced by the predicted click-through rate (pCTR) of their ads.

Essentially, ads with higher pCTR can achieve better ad positions at a lower actual CPC compared to ads with lower pCTR.

This encourages advertisers to create highly relevant and engaging ads that align with user intent, as improving pCTR can lead to more efficient spending and better ad placements.
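A rough way to see why a higher pCTR lowers the actual CPC is the textbook generalized second-price rule: you pay just enough for your Ad Rank to match that of the ad below you. The sketch below assumes that simplified rule and ignores thresholds and the one-cent increment; the function name and the numbers are hypothetical.

```python
def actual_cpc(next_ad_rank: float, own_pctr: float, max_cpc: float) -> float:
    """Simplified second-price CPC.

    Assumption: the price is the competitor's Ad Rank divided by your own
    pCTR, and it never exceeds your Max CPC.
    """
    return min(max_cpc, next_ad_rank / own_pctr)


# Hypothetical competitor directly below you with an Ad Rank of 0.10.
competitor_rank = 0.10

cpc_weak_ad = actual_cpc(competitor_rank, own_pctr=0.05, max_cpc=5.00)
cpc_strong_ad = actual_cpc(competitor_rank, own_pctr=0.10, max_cpc=5.00)
```

Under these assumptions, doubling pCTR from 5% to 10% cuts the CPC needed to hold the same position roughly in half.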

Google Ranks Ads Based On CPM

You read that right, and I haven’t gone mad. Since we’re exploring the dynamics of ad auctions and how they influence costs, a helpful point for advertisers to understand is that Google’s ad auction is not a CPC auction but rather a cost-per-thousand-impressions (CPM) auction.

It should be obvious that this is not a CPC auction. After all, pCTR is an equally important factor, and the ad with the highest MaxCPC doesn’t automatically win.

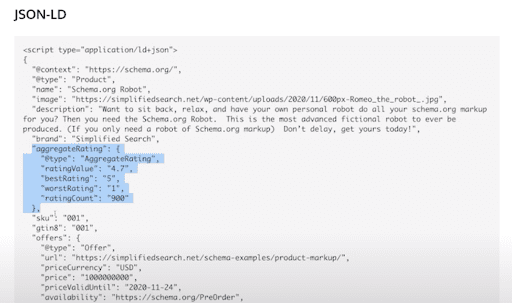

Advertisers bid a maximum CPC (or set a tROAS or tCPA, which gets turned into a MaxCPC at the time of each auction), and when that is combined with pCTR, you get an estimated CPM (eCPM).

The ad with the highest eCPM wins the auction. Since the ad with the highest ad rank wins the auction, we can see that ad rank and eCPM are interchangeable.

And by the way, any publisher can tell you that the best way to monetize a finite number of web visits is by maximizing the CPM, so it should make sense that Google wants to sell ads to the advertisers with the highest CPMs. I explain this in a video.
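The link between Ad Rank and eCPM is easy to check with a few lines of Python. This is a sketch with hypothetical bidders; the only formula used is Max CPC × pCTR, scaled to 1,000 impressions.

```python
def ecpm(max_cpc: float, pctr: float) -> float:
    """Expected revenue per 1,000 impressions: Max CPC x pCTR x 1,000."""
    return max_cpc * pctr * 1000


# Hypothetical bidders: (name, Max CPC in dollars, pCTR as a fraction).
bidders = [
    ("highest bid", 5.00, 0.02),  # eCPM of about $100
    ("best pCTR", 3.00, 0.05),    # eCPM of about $150
]

# The winner is the bidder with the highest eCPM, not the highest Max CPC.
winner = max(bidders, key=lambda b: ecpm(b[1], b[2]))
```

Because eCPM is just Ad Rank multiplied by a constant, sorting by either one produces the same order.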

The Role Of pCTR In Ad Auctions

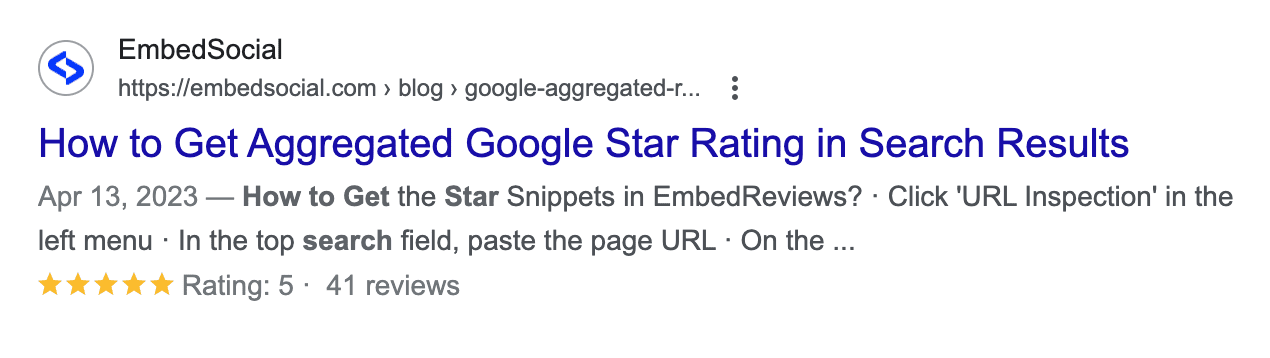

pCTR is a dynamic metric that influences ad placement and cost. It is calculated for each auction based on the specific context of the search query.

Advertisers with high pCTR benefit from lower CPCs and better ad positions, as the system rewards ads that are more relevant and provide a better user experience.

Understanding and optimizing relevance is crucial for advertisers. High-quality ads that resonate with users are more likely to achieve higher pCTR, reducing overall costs and improving campaign effectiveness.

This dynamic nature of pCTR ensures that advertisers continuously strive to improve ad quality, benefiting both users and advertisers.

Quality Score Is Not pCTR

Quality Score (QS) and predicted click-through rate (pCTR) are both critical components for advertisers, but they are not the same.

QS is a 1-10 integer representing the quality and relevance of an ad, taking into account factors such as ad relevance, landing page experience, and historical performance. It is a key performance indicator to help advertisers navigate their way to more relevant ads.

On the other hand, pCTR is a dynamic metric that estimates the likelihood of an ad being clicked for a specific search query.

It varies with each auction and reflects the ad’s expected performance in real time. While QS provides a broad assessment of ad quality, pCTR focuses specifically on predicting user engagement for individual auctions.

Now that I’ve covered the foundation of the ad auction, let’s explore the nuanced aspects that surfaced during the trial.

Thresholds And Reserve Prices

What Are Thresholds And Reserve Prices?

The ad auction is not as simple as ranking ads and then showing them from highest to lowest rank. There are thresholds that determine a number of things, including an ad’s eligibility for a more prominent location on the page and the reserve price for it to be shown at all.

These thresholds vary based on factors such as ad quality, position, user signals, and the specific topic of the search.

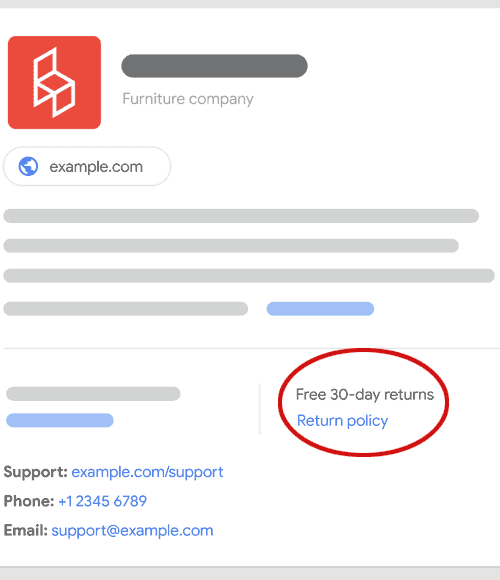

Google believes ads are information, too, and should help answer questions. So, there is a quality threshold an ad must meet before it can be shown above organic results.

This is why many searches have fewer than 4 ads above the search results. According to Google’s internal data, as of 2020, fewer than 2% of all searches on Google had 4 or more ads, regardless of position on the page.

How Thresholds And Reserve Prices Impact Costs

To explain this, we need to introduce the notion of an ad’s long-term value (LTV), a measure of the economic benefit of showing the ad minus the expected cost of showing it.

The economic benefit is the ad rank, or pCTR × Max CPC, i.e., how much Google predicts it will earn from showing the ad.

The cost of showing the ad is a prediction of how likely the ad is to harm the user experience, causing users to start avoiding future ads or develop ad blindness.

The predicted negative impact is the threshold, or reserve price, for an ad. Only if its economic benefit exceeds the expected cost can the ad be shown. So if LTV > 0, the ad may show.

This means that ads may need to pay more than $0.01 (or the equivalent lowest currency unit in other markets) in order to appear, and that raises prices.
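The LTV test can be sketched in a few lines. The trial material does not say how the expected cost is measured, so the sketch below assumes it is expressed in the same units as Ad Rank (dollars per impression); the function names and values are hypothetical.

```python
def long_term_value(max_cpc: float, pctr: float, expected_cost: float) -> float:
    """LTV = economic benefit (pCTR x Max CPC) minus the expected cost.

    Assumption: expected_cost is in dollars per impression, like Ad Rank.
    """
    return max_cpc * pctr - expected_cost


def may_show(max_cpc: float, pctr: float, expected_cost: float) -> bool:
    """An ad is eligible only when its LTV is greater than zero."""
    return long_term_value(max_cpc, pctr, expected_cost) > 0


# Hypothetical threshold of $0.04 per impression and a pCTR of 5%.
eligible = may_show(max_cpc=2.00, pctr=0.05, expected_cost=0.04)    # benefit of about 0.10
ineligible = may_show(max_cpc=0.50, pctr=0.05, expected_cost=0.04)  # benefit of about 0.025
```

The second ad fails the test even though nobody outbid it, which is why the threshold can set the price floor on its own.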

How Do Thresholds And Reserve Prices Benefit Advertisers?

If all second-price auction prices were determined by the next competitor, many advertisers would fall below the LTV > 0 threshold even though they have a Max CPC that could get them above the threshold.

Google honors the advertiser’s wish to show their ad by collecting the CPC necessary to offset the predicted negative value of showing the ad.

You can think of the threshold as a hidden participant in the ad auction whose ad is tied to the position of the threshold. Beating this threshold raises the effective CPC an advertiser pays, but it also enables the advertiser to get their ads to show in scenarios where they otherwise may not have shown while paying no more than their maximum bid.

For example, in a scenario where your ad is the sole eligible contender, you may be required to pay the reserve price, which is influenced by the thresholds.

In a scenario without strong competition, a very good ad with high quality and a high MaxCPC could find itself unable to meet the threshold. To ensure the advertiser gets what they want, Google bumps their effective CPC so that they meet the threshold and their ad can be shown (LTV > 0).
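One way to picture the "hidden participant" is to treat the threshold as one more Ad Rank that has to be beaten. The sketch below assumes the simplified second-price rule (price = Ad Rank to beat ÷ own pCTR) and a $0.01 minimum CPC; the function name, parameters, and numbers are hypothetical.

```python
from typing import Optional

MIN_CPC = 0.01  # lowest possible price per click, in dollars


def cpc_with_reserve(
    max_cpc: float,
    pctr: float,
    threshold: float,
    next_ad_rank: float = 0.0,
) -> Optional[float]:
    """Return the CPC paid, or None if the ad cannot clear the threshold.

    Assumption: the threshold behaves like a hidden competitor whose
    Ad Rank must be beaten, and the price never exceeds Max CPC.
    """
    if max_cpc * pctr <= threshold:
        return None  # LTV is not above zero, so the ad is not shown
    rank_to_beat = max(threshold, next_ad_rank)
    return max(MIN_CPC, min(max_cpc, rank_to_beat / pctr))


# Sole eligible contender: no competitor, so the threshold sets the price.
sole_contender_cpc = cpc_with_reserve(max_cpc=2.00, pctr=0.05, threshold=0.04)

# Same ad with no threshold at all would pay only the minimum CPC.
no_threshold_cpc = cpc_with_reserve(max_cpc=2.00, pctr=0.05, threshold=0.0)
```

In this sketch the sole contender pays about $0.80 instead of $0.01, but that is still well under the $2.00 maximum bid, and the ad gets to show.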

Out-Of-Order Ad Promotion

Now that we understand reserve prices and thresholds, let’s look at a particular example that involves the threshold for ads to be shown at the top of the page.

What Is Out-Of-Order Ad Promotion?

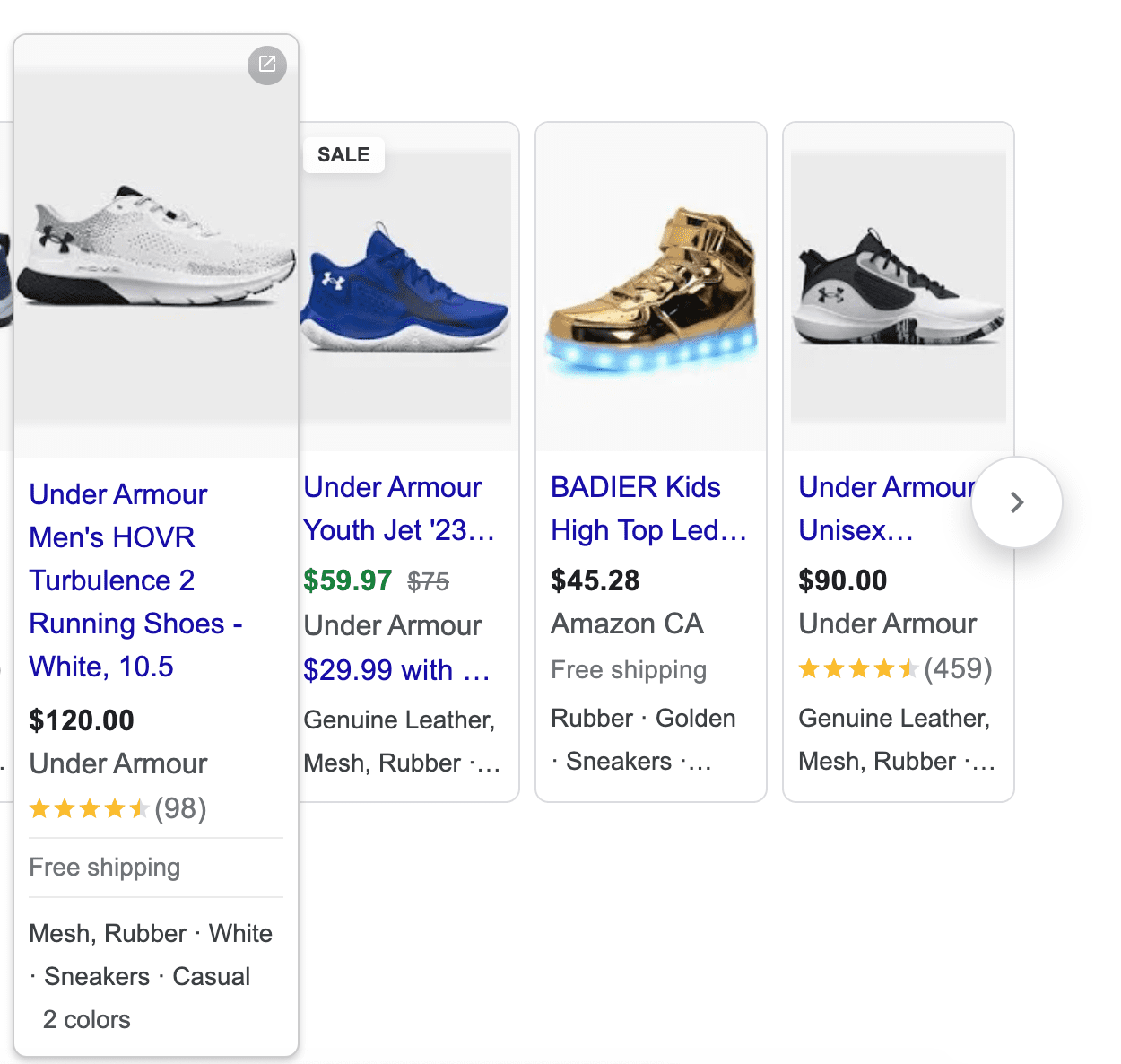

Out-of-order ad promotion is when an ad with a lower Ad Rank is allowed to be promoted above an ad with a higher Ad Rank.

Let’s dive into this.

The thresholds have a relevance component; for example, Google may say that an ad can only be promoted to the top of the page if it has at least a certain level of relevance (pCTR).

Because Ad Rank is made up of MaxCPC and pCTR, it is possible that a lower-ranked ad (Ad B) could have a better pCTR but be stuck at the bottom of the page behind a higher-ranked ad (Ad A) with a lower pCTR.

If the pCTR promotion threshold were 5% and Ad Rank were strictly honored, neither of these ads could appear at the top of the page, even though Ad B has high enough quality. Ad B would be forced to stay behind Ad A in order to honor Ad Rank.

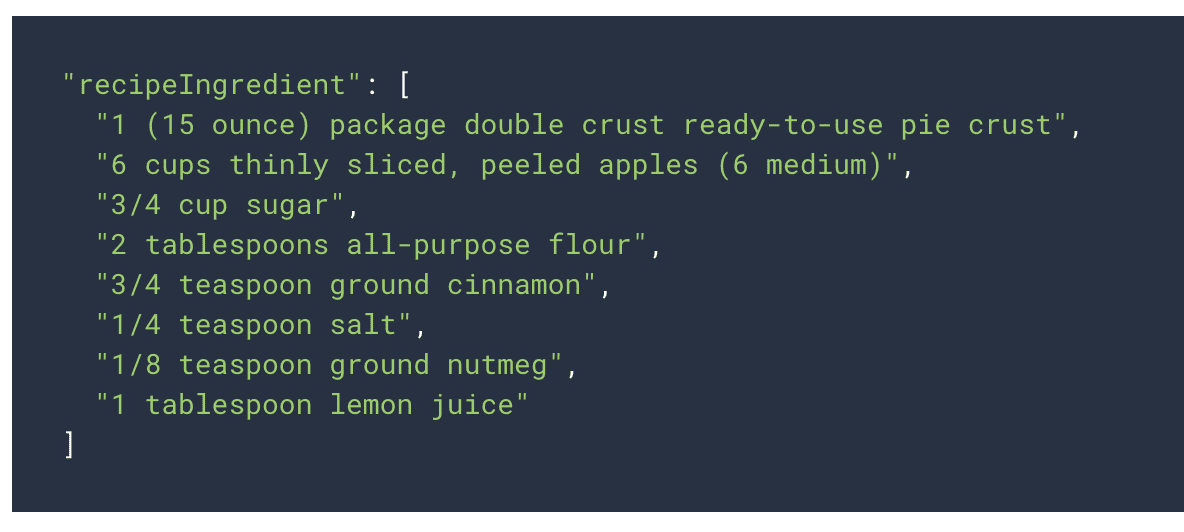

| Ad | MaxCPC | pCTR (%) | Ad Rank |
| --- | --- | --- | --- |
| A | 10 | 3 | 30 |
| B | 2 | 10 | 20 |

In out-of-order promotion, ad B is allowed to jump over ad A.
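The example with Ad A and Ad B can be replayed in code. This sketch uses the values from the table and the 5% promotion threshold from the text; the `place_ads` function and its strict versus out-of-order logic are my own simplified illustration, not Google's implementation.

```python
# (name, MaxCPC, pCTR in percent), taken from the table above.
ADS = [("A", 10, 3), ("B", 2, 10)]
PROMOTION_THRESHOLD = 5  # minimum pCTR (%) to be shown at the top of the page


def place_ads(ads, threshold, out_of_order):
    """Split ads into (top of page, bottom of page), each in Ad Rank order."""
    ranked = sorted(ads, key=lambda ad: ad[1] * ad[2], reverse=True)
    if out_of_order:
        # Any ad that meets the threshold may be promoted, regardless of rank.
        top = [ad for ad in ranked if ad[2] >= threshold]
    else:
        # Strict Ad Rank order: stop promoting at the first ad that fails.
        top = []
        for ad in ranked:
            if ad[2] < threshold:
                break
            top.append(ad)
    bottom = [ad for ad in ranked if ad not in top]
    return [ad[0] for ad in top], [ad[0] for ad in bottom]


strict_top, strict_bottom = place_ads(ADS, PROMOTION_THRESHOLD, out_of_order=False)
promoted_top, promoted_bottom = place_ads(ADS, PROMOTION_THRESHOLD, out_of_order=True)
```

With strict ordering, both ads stay at the bottom because Ad A blocks the way; with out-of-order promotion, Ad B moves to the top and Ad A stays at the bottom.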

How Out-Of-Order Ad Promotion Impacts Costs

When advertiser A’s low quality doesn’t meet the promotion threshold but advertiser B does meet it, rather than pushing both advertisers to the bottom of the page, advertiser B is allowed to be promoted out of order above advertiser A.

Now, advertiser B pays the CPC needed to beat the top-of-page threshold (reserve price), which is more than if they were left at the bottom of the page. It can also be more than if they had to beat the Ad Rank of Ad A.

How Out-Of-Order Ad Promotion Benefits Advertisers

Out-of-order ad promotion, where ads are promoted based on factors beyond just the bid amount, benefits advertisers. This approach considers various thresholds, including ad relevance, ensuring that high-quality ads have a chance to appear in top positions even if their Ad Ranks are not the highest.

This can help smaller advertisers with highly relevant ads compete effectively against larger competitors with bigger budgets.

By promoting ads based on relevance and quality, advertisers are incentivized to create more engaging and useful ads, ultimately leading to better user experiences and higher conversion rates.

Randomized Generalized Second-Price (RGSP)

What Is RGSP?

In a traditional second-price auction, the highest bidder wins the ad spot at the price of the second-highest bid.

But remember that the second price depends on pCTR, a number predicted with machine learning. Predictions are not precise, and it can happen that multiple advertisers are competing very closely, and the only thing that sets them apart is an ML-generated pCTR.

To ensure that inaccurate predictions don’t become self-reinforcing truths, ads can be randomly re-ordered. This introduces chances for experimentation that the ML algorithm can use to evaluate its accuracy and improve future predictions.

RGSP is a system to help ensure normalization is handled correctly. It’s hard to gather the data needed for normalization if ad positions never vary. You need to see the same ad’s performance when it wins and when it loses to be able to identify how much of its performance is due to its inherent quality vs. external factors like where it showed.
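The exact randomization Google uses was not spelled out, so treat the following as a toy model of the idea rather than the real mechanism. It assumes that two neighboring ads are "close" when their Ad Ranks differ by no more than a small percentage, and that close neighbors are swapped with some probability. The `closeness` and `swap_probability` parameters are hypothetical.

```python
import random


def rgsp_order(ranked, closeness=0.05, swap_probability=0.5, rng=None):
    """Randomly swap neighboring ads whose Ad Ranks are nearly tied.

    ranked: list of (name, ad_rank) tuples sorted from highest to lowest.
    closeness: maximum relative gap for two ads to count as nearly tied.
    swap_probability: chance that a nearly tied pair trades places.
    """
    rng = rng or random.Random()
    result = list(ranked)
    i = 0
    while i < len(result) - 1:
        higher, lower = result[i][1], result[i + 1][1]
        nearly_tied = (higher - lower) <= closeness * higher
        if nearly_tied and rng.random() < swap_probability:
            result[i], result[i + 1] = result[i + 1], result[i]
            i += 2  # skip the swapped pair so no ad moves more than one slot
        else:
            i += 1
    return result


# Hypothetical auction: A and B are nearly tied, C is far behind.
auction = [("A", 0.100), ("B", 0.098), ("C", 0.050)]
always_swap = rgsp_order(auction, swap_probability=1.0)
never_swap = rgsp_order(auction, swap_probability=0.0)
```

In this toy model, only A and B can ever trade places; C is never promoted because its Ad Rank is not close to B's. Over many auctions, that gives the prediction system observations of both A and B in each position.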

How RGSP Impacts Costs

RGSP introduces an element of unpredictability, which encourages advertisers to bid their true value rather than strategically underbidding.

When ads are re-ordered and don’t follow the pure ad ranking mechanism, CPCs will be different, and that can raise prices for some advertisers.

How RGSP Helps Advertisers

This mechanism helps prevent ads with high predicted relevance from consistently hogging top positions, promoting a diverse range of ads. By fostering a competitive environment, RGSP mechanisms encourage advertisers to focus on ad quality and relevance, which can lead to better performance and higher return on investment (ROI).

It prevents ads with incorrectly high predicted pCTRs from unfairly remaining in top positions and beating newer ads with inaccurately low pCTRs.

Normalization Techniques

What Are Normalization Techniques?

Google’s normalization techniques ensure that ad rankings reflect relevance rather than being influenced by external factors like ad format or position.

By incorporating metrics such as predicted click-through rate (pCTR) and adjusting for factors like ad format, the system creates a level playing field for all advertisers.

Ad rank is partially based on pCTR. But we know that CTR depends on a lot more than just the text of the ad itself. For example, all else being equal, ads in higher positions will get a higher CTR than those in lower positions. Ads with more visible lines of ad text will get higher CTRs than those with fewer lines of text.

Project Momiji works to normalize pCTRs so that a more appealing ad format doesn’t unfairly penalize advertisers whose ads didn’t get the same visual treatment.

How Normalization Techniques Impact Costs

When pCTR is normalized for ad formats and page position, some advertisers with high pCTRs will see a downward adjustment. This is to say that the high pCTR was driven in part by the inherent benefit of a more appealing ad format or a higher page position.

Advertisers should compete on a level playing field, so when this normalization happens, some advertisers will pay more than if the normalization hadn’t happened.

For example, an ad shown in position 1 with a pCTR of 10% may only have had a pCTR of 8% if it had been shown in position 2. There’s an underlying ad relevance pCTR that can be estimated by removing everything that boosts pCTR but is outside the advertiser’s control, like ad formats, position on the page, number of additional ads, etc.

Google can then price all ads based on their normalized pCTR. So, in our example, if the pCTR for the auction is 10% but only 8% after normalizing for all factors, the advertiser’s effective CPC will be higher.
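Here is the 10% versus 8% example as a small calculation. It assumes that position and format effects can be modeled as simple multipliers on pCTR and that the price follows the simplified second-price rule (competitor's Ad Rank ÷ own pCTR). Both assumptions, along with the competitor's Ad Rank of 0.12, are hypothetical.

```python
def normalized_pctr(observed_pctr: float, position_boost: float,
                    format_boost: float = 1.0) -> float:
    """Strip out the lift that comes from position and format.

    Assumption: each external factor multiplies pCTR by a fixed boost.
    """
    return observed_pctr / (position_boost * format_boost)


def second_price_cpc(next_ad_rank: float, pctr: float) -> float:
    """Simplified CPC: the competitor's Ad Rank divided by your pCTR."""
    return next_ad_rank / pctr


# From the example: 10% in position 1 corresponds to 8% in position 2,
# so position 1 carries a 1.25x boost over the position 2 baseline.
raw = 0.10
normalized = normalized_pctr(raw, position_boost=1.25)

competitor_rank = 0.12  # hypothetical Ad Rank of the next ad
cpc_raw = second_price_cpc(competitor_rank, raw)
cpc_normalized = second_price_cpc(competitor_rank, normalized)
```

Under these assumptions, the CPC rises from about $1.20 to about $1.50 once the position boost is removed, which is the price increase described above.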

How Normalization Techniques Help Advertisers

Normalization techniques prevent unfair advantages stemming from superior positions or ad treatments, ensuring that ad pricing reflects true relevance. This approach benefits advertisers by promoting fair competition and encouraging investment in high-quality ads that align with user intent.

Focus Less On CPC

Understanding the intricacies of ad auction dynamics is crucial for advertisers seeking to optimize their campaigns and achieve better outcomes.

While higher CPCs might initially appear disadvantageous, they often result from mechanisms designed to promote ad quality, relevance, and a better user experience.

By focusing on metrics that truly matter, such as CPA, ROAS, and ROI, advertisers can better appreciate the benefits of these dynamics.

The components of the ad auction, from ad rank thresholds to out-of-order promotions and RGSP mechanisms, work together to create a competitive yet fair environment.

This encourages advertisers to continuously improve their ads, ultimately benefiting both their business and the users they aim to reach. By embracing these complexities and striving for high-quality, relevant ads, advertisers can navigate the ad auction landscape more effectively and achieve greater success in their digital marketing efforts.


Featured Image: ImageFlow/Shutterstock