The Download: AI to measure pain, and how to deal with conspiracy theorists

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology.

AI is changing how we quantify pain

Researchers around the world are racing to turn pain—medicine’s most subjective vital sign—into something a camera or sensor can score as reliably as blood pressure.

The push has already produced PainChek—a smartphone app that scans people’s faces for tiny muscle movements and uses artificial intelligence to output a pain score—which has been cleared by regulators on three continents and has logged more than 10 million pain assessments. Other startups are beginning to make similar inroads.

The way we assess pain may finally be shifting, but when algorithms measure our suffering, does that change the way we treat it? Read the full story.

—Deena Mousa

This story is from the latest print issue of MIT Technology Review magazine, which is full of fascinating stories about our bodies. If you haven’t already, subscribe now to receive future issues once they land.

How to help friends and family dig out of a conspiracy theory black hole

—Niall Firth

Someone I know became a conspiracy theorist seemingly overnight.

It was during the pandemic. They suddenly started posting daily on Facebook about the dangers of covid vaccines and masks, warning of an attempt to control us.

As a science and technology journalist, I felt that my duty was to respond. I tried, but all I got was derision. Even now I still wonder: Are there things I could have done differently to talk them back down and help them see sense?

I gave Sander van der Linden, professor of social psychology in society at the University of Cambridge, a call to ask: What would he advise if family members or friends show signs of having fallen down the rabbit hole? Read the full story.

This story is part of MIT Technology Review’s series “The New Conspiracy Age,” on how the present boom in conspiracy theories is reshaping science and technology. Check out the rest of the series here. It’s also part of our How To series, giving you practical advice to help you get things done.

If you’re interested in hearing more about how to survive in the age of conspiracies, join our features editor Amanda Silverman and executive editor Niall Firth for a subscriber-exclusive Roundtable conversation with conspiracy expert Mike Rothschild. It’s at 1pm ET on Thursday November 20—register now to join us!

Google is still aiming for its “moonshot” 2030 energy goals

—Casey Crownhart

Last week, we hosted EmTech MIT, MIT Technology Review’s annual flagship conference in Cambridge, Massachusetts. As you might imagine, some of this climate reporter’s favorite moments came in the climate sessions. I was listening especially closely to my colleague James Temple’s discussion with Lucia Tian, head of advanced energy technologies at Google.

They spoke about the tech giant’s growing energy demand and the technologies the company is turning to in order to meet it. In case you weren’t able to join us, let’s dig into that session and consider how the company is thinking about energy in the face of AI’s rapid rise. Read the full story.

This article is from The Spark, MIT Technology Review’s weekly climate newsletter. To receive it in your inbox every Wednesday, sign up here.

The must-reads

I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology.

1 ChatGPT is now “warmer and more conversational”

But it’s also slightly more willing to discuss sexual and violent content. (The Register)

+ ChatGPT has a very specific writing style. (WP $)

+ The looming crackdown on AI companionship. (MIT Technology Review)

2 The US could deny visas to visitors with obesity, cancer or diabetes

As part of its ongoing efforts to stem the flow of people trying to enter the country. (WP $)

3 Microsoft is planning to create its own AI chip

And it’s going to use OpenAI’s internal chip-building plans to do it. (Bloomberg $)

+ The company is working on a colossal data center in Atlanta. (WSJ $)

4 Early AI agent adopters are convinced they’ll see a return on their investment soon

Mind you, they would say that. (WSJ $)

+ An AI adoption riddle. (MIT Technology Review)

5 Waymo’s robotaxis are hitting American highways

Until now, they’ve typically gone out of their way to avoid them. (The Verge)

+ Its vehicles will now reach speeds of up to 65 miles per hour. (FT $)

+ Waymo is proving long-time detractor Elon Musk wrong. (Insider $)

6 A new Russian unit is hunting down Ukraine’s drone operators

It’s tasked with killing the pilots behind Ukraine’s successful attacks. (FT $)

+ US startup Anduril wants to build drones in the UAE. (Bloomberg $)

+ Meet the radio-obsessed civilian shaping Ukraine’s drone defense. (MIT Technology Review)

7 Anthropic’s Claude successfully controlled a robot dog

It’s important to know what AI models may do when given access to physical systems. (Wired $)

8 Grok briefly claimed Donald Trump won the 2020 US election

As reliable as ever, I see. (The Guardian)

9 The Northern Lights are playing havoc with satellites

Solar storms may look spectacular, but they make it harder to keep tabs on space. (NYT $)

+ Seriously though, they look amazing. (The Atlantic $)

+ NASA’s new AI model can predict when a solar storm may strike. (MIT Technology Review)

10 Apple users can now use digital versions of their passports

But it’s strictly for domestic flights within the US. (TechCrunch)

Quote of the day

“I hope this mistake will turn into an experience.”

—Vladimir Vitukhin, chief executive of the company behind Russia’s first anthropomorphic robot AIDOL, offers a philosophical response to the machine falling flat on its face during a reveal event, the New York Times reports.

One more thing

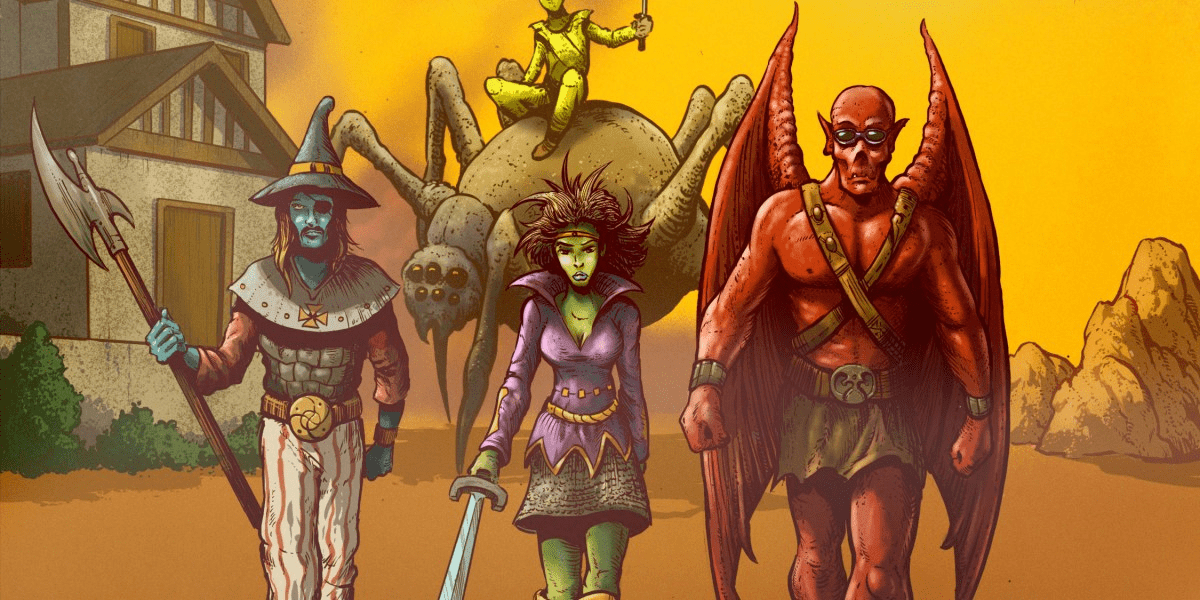

Welcome to the oldest part of the metaverse

Headlines treat the metaverse as a hazy dream yet to be built. But if it’s defined as a network of virtual worlds we can inhabit, its oldest corner has already been running for 25 years.

It’s a medieval fantasy kingdom created for the online role-playing game Ultima Online. It was the first to simulate an entire world: a vast, dynamic realm where players could interact with almost anything, from fruit on trees to books on shelves.

Ultima Online has already endured a quarter-century of market competition, economic turmoil, and political strife. So what can this game and its players tell us about creating the virtual worlds of the future? Read the full story.

—John-Clark Levin

We can still have nice things

A place for comfort, fun and distraction to brighten up your day. (Got any ideas? Drop me a line or skeet ’em at me.)

+ Unlikely duo Sting and Shaggy are starring together in a New York musical.

+ Barry Manilow was almost in Airplane!? That would be an entirely different kind of flying, altogether.

+ What makes someone sexy? Well, that depends.

+ Keep an eye on those pink dolphins: they’re notorious thieves.