The holiday season this year brings more competition than ever, and the shopper journey is shifting too. Consumers start researching weeks earlier and rely on conversational AI or chatbot-style searches to compare products. Microsoft’s holiday insights show that shopping activity kicks off in October, with many November and December conversions originating from clicks made weeks earlier.

The funnel is changing shape: wider at the top as more shoppers browse early, but shorter at the bottom as they move quickly once urgency kicks in. The key lesson is that PPC strategy must nurture intent early and be ready for compressed buying cycles when urgency arrives.

Holiday shoppers are beginning earlier, researching longer, and converting later. The funnel is wider than ever, but also shorter once the urgency hits.

Bidding: Winning The Ad Auction

Don’t Fear Expensive Clicks, Fear Unprofitable Ones

Holiday auctions bring higher costs per click (CPCs), a natural result of more advertisers competing for limited inventory. Success is not about avoiding CPC increases but about maintaining a strong return on ad spend (ROAS) and protecting profit margins. Teikametrics’ Black Friday and Cyber Monday (BFCM) data confirms that CPCs climb seasonally, especially on those two days.
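A quick way to see why an expensive click can beat a cheap one is to compare profit per click instead of CPC. The sketch below uses a simplified model in which profit equals revenue times gross margin minus click cost; every CPC, conversion rate, order value, and margin in it is a hypothetical figure for illustration, not benchmark data.

```python
from dataclasses import dataclass


@dataclass
class ClickScenario:
    """Hypothetical per-click economics for one period (simplified model)."""
    cpc: float              # cost per click
    conv_rate: float        # conversions per click
    avg_order_value: float  # revenue per conversion
    gross_margin: float     # share of revenue kept after cost of goods

    def revenue_per_click(self) -> float:
        return self.conv_rate * self.avg_order_value

    def roas(self) -> float:
        # Revenue returned per unit of ad spend
        return self.revenue_per_click() / self.cpc

    def profit_per_click(self) -> float:
        # Gross profit a click generates, minus what the click cost
        return self.revenue_per_click() * self.gross_margin - self.cpc


def break_even_roas(gross_margin: float) -> float:
    # Below this ROAS, ad spend exceeds the gross profit it brings in
    return 1.0 / gross_margin


# Hypothetical comparison: cheap off-season click vs. pricier holiday click
off_season = ClickScenario(cpc=0.80, conv_rate=0.02, avg_order_value=60.0, gross_margin=0.40)
holiday = ClickScenario(cpc=1.40, conv_rate=0.06, avg_order_value=70.0, gross_margin=0.40)

for name, s in (("off-season", off_season), ("holiday", holiday)):
    print(f"{name}: ROAS {s.roas():.2f}, profit per click {s.profit_per_click():.2f}")
print(f"break-even ROAS at 40% margin: {break_even_roas(0.40):.2f}")
```

With these numbers, the cheaper click loses about 0.32 per click, while the click that costs 75% more clears the 2.5 break-even ROAS and earns about 0.28.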

Smart Bidding goals should be tied to profitability, not just revenue, and portfolio bidding can help balance volatility across campaigns. Microsoft and Google also recommend applying seasonality bid adjustments before major holidays so automation anticipates conversion spikes.

Pro Tip: Set seasonality adjustments 24-48 hours before and after Black Friday and Cyber Monday to help Smart Bidding avoid over- or under-reacting.
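Seasonality adjustments are entered with explicit start and end times, so it helps to compute the windows rather than eyeball a calendar. The sketch below reads the tip as "start the adjustment a buffer of 24-48 hours before the event day and end it the same buffer after"; that interpretation, the function names, and the choice of one window per event are assumptions. It relies on the US rule that Thanksgiving falls on the fourth Thursday of November.

```python
from datetime import date, datetime, time, timedelta


def thanksgiving_us(year: int) -> date:
    # US Thanksgiving is the fourth Thursday of November (weekday(): Monday=0, Thursday=3)
    nov_first = date(year, 11, 1)
    first_thursday = nov_first + timedelta(days=(3 - nov_first.weekday()) % 7)
    return first_thursday + timedelta(weeks=3)


def black_friday(year: int) -> date:
    return thanksgiving_us(year) + timedelta(days=1)


def cyber_monday(year: int) -> date:
    return black_friday(year) + timedelta(days=3)


def adjustment_window(event_day: date, buffer_hours: int = 24) -> tuple[datetime, datetime]:
    """Start `buffer_hours` before the event day begins and end `buffer_hours` after it ends."""
    day_start = datetime.combine(event_day, time.min)
    day_end = day_start + timedelta(days=1)
    return day_start - timedelta(hours=buffer_hours), day_end + timedelta(hours=buffer_hours)


for label, day in (("Black Friday", black_friday(2025)), ("Cyber Monday", cyber_monday(2025))):
    start, end = adjustment_window(day, buffer_hours=24)
    print(f"{label} {day}: adjust from {start} to {end}")
```

With a 24-hour buffer in 2025, the two windows meet at midnight on November 30, so they could also run as one continuous adjustment; with a 48-hour buffer they overlap.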

Smart Bidding With Guardrails: Train The Machine

Automation is powerful, but it is not infallible; it needs monitoring and guardrails. Trust target ROAS (tROAS) or target CPA (tCPA) bidding when conditions are stable, but set bid limits (available through portfolio bid strategies) and alerts that flag unusual performance during peak periods, when volatility spikes.

Real-World Example: Last BFCM, a large retailer client of ours using offline conversion import (OCI) saw conversions suddenly vanish. Optmyzr automation flagged the anomaly right away, revealing a Google-side glitch in OCI reporting. Without that safeguard, Smart Bidding would have assumed conversions had dried up and slashed bids during the most important shopping week of the year. Guardrails prevented disaster.

Key Take: Automation doesn’t eliminate risk; it changes the type of risk. Without guardrails, a data glitch can quietly sabotage your bids. With guardrails, you catch it before it becomes a disaster.
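A guardrail like the one in the example can be as simple as comparing today's conversions with what recent conversion rates predict for today's click volume. The sketch below is a hypothetical illustration, not Optmyzr's implementation: the function name, the 50% threshold, and the 100-click minimum are assumptions to tune, and the inputs would come from whatever reporting export you already use.

```python
def conversion_drop_alert(
    history_clicks: list[int],
    history_conversions: list[float],
    today_clicks: int,
    today_conversions: float,
    min_ratio: float = 0.5,  # hypothetical: alert below 50% of expected conversions
    min_clicks: int = 100,   # hypothetical: too little traffic to judge below this
) -> bool:
    """Return True when conversions fall far below what recent conversion rates predict.

    Clicks arriving normally while conversions vanish points to a tracking or
    import problem rather than a real drop in demand.
    """
    if today_clicks < min_clicks or sum(history_clicks) == 0:
        return False
    baseline_rate = sum(history_conversions) / sum(history_clicks)
    expected = today_clicks * baseline_rate
    return today_conversions < min_ratio * expected


# Hypothetical week: 1,000 clicks and 30 conversions per day (3% conversion rate)
clicks = [1000] * 7
conversions = [30.0] * 7
print(conversion_drop_alert(clicks, conversions, today_clicks=2500, today_conversions=4.0))   # True
print(conversion_drop_alert(clicks, conversions, today_clicks=2500, today_conversions=60.0))  # False
```

Because offline imports arrive with a delay, a check like this works best on a window where uploads have normally landed (for example, the previous day); run on the current hour, conversion lag alone would trigger false alarms. The alert is meant to prompt a human to investigate, not to change bids on its own.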

Inventory And Feed-Aware Bidding: Don’t Burn Budget On Out-Of-Stock

Holiday shoppers expect items to be in stock, competitively priced, and available with fast delivery. Automating feed hygiene to pause out-of-stock products is essential. Structuring campaigns by margin lets you set different tROAS targets that achieve your profitability goals.
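One way to turn margin-based structure into numbers is to derive each tier's tROAS from the ROAS at which a chosen share of gross margin survives as profit. If profit must equal a share p of the gross margin m, then spend = (1 - p) * m * revenue, so tROAS = 1 / ((1 - p) * m). The sketch below applies that after dropping out-of-stock SKUs. The tier boundaries, the 20% profit share, and the field names are hypothetical, and each tier's target is based on its lowest margin so every SKU in the tier stays profitable.

```python
from dataclasses import dataclass


@dataclass
class Product:
    sku: str
    in_stock: bool
    gross_margin: float  # share of price kept after cost of goods


def target_roas(gross_margin: float, profit_share: float) -> float:
    # profit = m*R - S = p*m*R  =>  S = (1 - p)*m*R  =>  R/S = 1 / ((1 - p)*m)
    return 1.0 / ((1.0 - profit_share) * gross_margin)


def margin_tier(gross_margin: float) -> str:
    # Hypothetical tier boundaries; replace with your own unit economics
    if gross_margin >= 0.5:
        return "high"
    if gross_margin >= 0.3:
        return "mid"
    return "low"


def plan_tiers(products: list[Product], profit_share: float = 0.2) -> dict[str, dict]:
    tiers: dict[str, dict] = {}
    for p in products:
        if not p.in_stock:
            continue  # feed hygiene: out-of-stock SKUs receive no spend
        tier = tiers.setdefault(margin_tier(p.gross_margin), {"skus": [], "margins": []})
        tier["skus"].append(p.sku)
        tier["margins"].append(p.gross_margin)
    for tier in tiers.values():
        # Base the target on the tier's lowest margin so every SKU in it clears the goal
        tier["troas"] = round(target_roas(min(tier["margins"]), profit_share), 2)
    return tiers


catalog = [
    Product("A", True, 0.60),
    Product("B", True, 0.50),
    Product("C", False, 0.55),  # out of stock, so excluded
    Product("D", True, 0.35),
    Product("E", True, 0.20),
]
for name, tier in plan_tiers(catalog).items():
    print(name, tier["skus"], tier["troas"])
```

Lower-margin tiers need a higher tROAS (6.25 for the 20% tier versus 2.5 for the 50% tier). Google Ads displays tROAS as a percentage, so a ratio of 2.5 corresponds to 250%.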

Pro Tip: If your price is not competitive, shift spend toward SKUs where you can compete on both offer and margin.

And before you worry about bids, ensure the feed can win the impression. Tighten mobile-friendly titles and human-readable attributes (e.g., use “light brown,” not obscure color names), add seasonal terms like “Black Friday deals,” and fix disapprovals early so you don’t lose visibility when auctions heat up.

Create label taxonomies that align with your profit strategy, like “hero products,” “doorbusters,” “low-margin,” “last-chance,” so you can direct bids and budgets to what actually drives profits.
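In Google Merchant Center, taxonomies like these usually live in the feed's custom label attributes (`custom_label_0` through `custom_label_4`), which campaigns can then split on. The rule set below is a hypothetical sketch: the thresholds, the rule order, the input fields, and the use of `custom_label_0` are assumptions, and the first matching rule wins.

```python
from dataclasses import dataclass


@dataclass
class FeedItem:
    item_id: str
    gross_margin: float  # share of price kept after cost of goods
    discount: float      # current promotional discount as a share of list price
    units_in_stock: int
    bestseller: bool


def profit_label(item: FeedItem) -> str:
    # Hypothetical rules, checked in order; the first match wins
    if item.units_in_stock <= 5:
        return "last-chance"
    if item.discount >= 0.30:
        return "doorbusters"
    if item.gross_margin < 0.20:
        return "low-margin"
    if item.bestseller:
        return "hero products"
    return "standard"


def label_rows(items: list[FeedItem]) -> list[dict[str, str]]:
    # One supplemental-feed row per item, writing the label into custom_label_0
    return [{"id": i.item_id, "custom_label_0": profit_label(i)} for i in items]


items = [
    FeedItem("sku-1", 0.45, 0.00, 200, True),
    FeedItem("sku-2", 0.35, 0.40, 500, False),
    FeedItem("sku-3", 0.15, 0.10, 80, False),
    FeedItem("sku-4", 0.50, 0.00, 3, True),
]
for row in label_rows(items):
    print(row)
```

Rule order is a business decision: here a nearly sold-out bestseller is treated as "last-chance" rather than "hero products", so bids can wind down before stock runs out.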

Case Study Insight: When Amazon briefly exited the Google Ads auction, Optmyzr’s analysis showed other advertisers gained clicks at lower CPCs, but ROAS did not improve. Shoppers were expecting Amazon, and when they did not find it, they often failed to convert with alternatives. Winning an auction is meaningless if the offer and expectations do not align.

Budgeting: Flexibility Wins

Turn On Campaigns Now And Control Delivery With Budgets

I normally recommend pausing campaigns that are not needed, rather than reducing their budgets to a very low amount to keep them active. Advertisers sometimes use budget rather than status to “pause” a campaign because they fear the dreaded learning period that may kick in when a campaign is enabled after an extensive period of inactivity.

Pausing does not erase Google’s memory, since “learning” reflects new auction contexts rather than forgotten history. Longer pauses, however, risk drift as consumer behavior shifts. The bigger issue is that paused campaigns with new ads will not undergo review until they are re-enabled, which can delay serving during crucial moments.

So during BFCM, there are good reasons to control delivery with budgets rather than status: doing so keeps campaigns learning about shifts in consumer behavior and ensures new creatives go through the approval process.

Holiday Pitfall Alert: Do not pause campaigns with unapproved creatives close to Black Friday. Get ads reviewed in advance.

Intraday Pacing: Don’t Get Fooled By Conversion Lag

Static daily budgets can be damaging in volatile holiday conditions. Dynamic pacing using scripts or APIs is a better approach, especially when aligned with key milestones like Black Friday, Cyber Monday, shipping cutoffs, and last-minute windows.
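Dynamic pacing boils down to comparing actual spend with how much of the day's budget should be gone by now, based on how spend is usually distributed across the hours. The sketch below assumes you have a historical hourly spend curve (for example, from last year's BFCM hourly reports); the function names and the 15% tolerance are hypothetical, and whatever acts on the result (a script or an API call) is left out.

```python
def expected_spend_to_date(daily_budget: float, hourly_curve: list[float], hour: int) -> float:
    """Budget expected to be spent by the end of `hour` (0-23), given relative hourly weights."""
    return daily_budget * sum(hourly_curve[: hour + 1]) / sum(hourly_curve)


def pace_ratio(spend_so_far: float, daily_budget: float, hourly_curve: list[float], hour: int) -> float:
    expected = expected_spend_to_date(daily_budget, hourly_curve, hour)
    return spend_so_far / expected if expected > 0 else 0.0


def pace_status(ratio: float, tolerance: float = 0.15) -> str:
    # Hypothetical tolerance band around "on pace"
    if ratio > 1 + tolerance:
        return "ahead"
    if ratio < 1 - tolerance:
        return "behind"
    return "on pace"


# Hypothetical curve: light overnight, steady daytime, evening peak (relative weights)
curve = [1] * 6 + [3] * 12 + [5] * 6
ratio = pace_ratio(spend_so_far=1400.0, daily_budget=3000.0, hourly_curve=curve, hour=11)
print(round(ratio, 2), pace_status(ratio))
```

The curve matters: the same 1,400 spent by noon looks on pace against a flat curve, but 40% ahead against a curve that saves much of the day's spend for the evening.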

On Black Friday and Cyber Monday, pacing must be monitored throughout the day. Hourly reporting in Google Ads makes this possible, but advertisers must also account for conversion lag.

When you look at last year’s data, hourly conversions appear smooth because the lag has long since resolved. On the day itself, however, conversions will often appear behind pace even when clicks and impressions are on track. Saving hourly reports as the day unfolds provides a baseline for analyzing lag in future years.
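Saved hourly snapshots make it possible to correct for lag on the day: if past snapshots show what share of a click hour's eventual conversions had typically been reported after a given number of hours, dividing today's observed conversions by that share estimates where they will land. The completion shares and the function name below are hypothetical placeholders for values you would derive from your own saved reports.

```python
def lag_adjusted_conversions(
    observed_by_hour: list[float], completion_by_age: list[float], current_hour: int
) -> float:
    """Estimate eventual conversions from today's clicks so far.

    observed_by_hour[h]: conversions reported so far for clicks in hour h
    completion_by_age[a]: share of eventual conversions typically reported
        `a` hours after the click hour; the last entry is reused for older hours
    """
    estimate = 0.0
    for h in range(current_hour + 1):
        age = current_hour - h
        share = completion_by_age[min(age, len(completion_by_age) - 1)]
        if share <= 0:
            raise ValueError("completion shares must be positive")
        estimate += observed_by_hour[h] / share
    return estimate


# Hypothetical: three hours of clicks, with the most recent hour only half reported
observed = [10.0, 10.0, 5.0]
completion = [0.5, 0.8, 1.0]
print(sum(observed), lag_adjusted_conversions(observed, completion, current_hour=2))
```

Here 25 reported conversions imply about 32.5 once the lag resolves, which is why a midday shortfall is not, on its own, a reason to cut budgets.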

Pro Tip: Do not confuse lag with poor performance. Cutting budgets midday can mean missing the evening conversion surge.

Lock in your total Q4 budget and earmark a supplemental pool for Black Friday, Cyber Monday, and the biggest shopping weekends. Expect higher CPCs and raise daily budgets accordingly so campaigns don’t run out of budget by noon. Finally, audit your automations: safety scripts that pause campaigns or cap spend are helpful, but if they fire at the wrong time during BFCM, they can suppress profitable traffic.
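Because spend is clicks times CPC, raising daily caps accordingly means scaling the baseline by both expected CPC inflation and expected click growth, then funding any gap from the supplemental pool. The sketch below is a hypothetical illustration; the function names are made up, and the inflation and growth figures are placeholders for your own forecasts, not benchmarks.

```python
def needed_daily_cap(baseline_spend: float, cpc_inflation: float, click_growth: float) -> float:
    # Spend = clicks x CPC, so both forecasts scale the baseline multiplicatively
    return baseline_spend * (1 + cpc_inflation) * (1 + click_growth)


def top_up_from_pool(base_budget: float, needed_cap: float, pool_remaining: float) -> tuple[float, float]:
    """Return (daily cap to set, pool left) after drawing any shortfall from the supplemental pool."""
    shortfall = max(0.0, needed_cap - base_budget)
    granted = min(shortfall, pool_remaining)
    return base_budget + granted, pool_remaining - granted


# Hypothetical Black Friday forecast: CPCs up 30%, clicks up 50% over a 1,000/day baseline
cap = needed_daily_cap(baseline_spend=1000.0, cpc_inflation=0.30, click_growth=0.50)
day_cap, pool_left = top_up_from_pool(base_budget=1200.0, needed_cap=cap, pool_remaining=5000.0)
print(round(cap, 2), round(day_cap, 2), round(pool_left, 2))
```

When the pool cannot cover the full shortfall, the cap stops at the base budget plus whatever remains, which makes the trade-off between peak days explicit instead of leaving it to a campaign that runs dry at noon.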

Targeting

Audience Signals Are Your Multiplier

First-party data goes beyond CRM lists. It includes your business’s unit economics, such as pricing and profit margins, which can guide automation toward profitability rather than vanity ROAS.

Key Take: First-party data is not only about who your customers are; it also includes your business data, such as how you price. Use it to guide when you run ads and how much you bid.
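To see how pricing and margin data change the picture, compare two campaigns with identical ROAS. The sketch below computes gross profit from order line items (price minus unit cost, times quantity) and reports profit on ad spend (POAS) next to ROAS; all figures are hypothetical. Sending that profit figure as the conversion value, rather than revenue, is one way to let value-based bidding optimize toward profit.

```python
from dataclasses import dataclass


@dataclass
class LineItem:
    price: float
    unit_cost: float
    quantity: int


def revenue(items: list[LineItem]) -> float:
    return sum(i.price * i.quantity for i in items)


def gross_profit(items: list[LineItem]) -> float:
    return sum((i.price - i.unit_cost) * i.quantity for i in items)


def roas_and_poas(ad_spend: float, items: list[LineItem]) -> tuple[float, float]:
    # ROAS divides revenue by spend; POAS divides gross profit by spend
    return revenue(items) / ad_spend, gross_profit(items) / ad_spend


# Hypothetical campaigns with the same spend and the same revenue
low_margin = [LineItem(price=50.0, unit_cost=45.0, quantity=8)]
high_margin = [LineItem(price=80.0, unit_cost=40.0, quantity=5)]
for name, items in (("low-margin", low_margin), ("high-margin", high_margin)):
    roas, poas = roas_and_poas(100.0, items)
    print(f"{name}: ROAS {roas:.1f}, POAS {poas:.1f}")
```

Both campaigns report a 4.0 ROAS, but only one returns more profit than it costs: the low-margin campaign spends 100 to earn 40 in gross profit, a POAS below 1.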

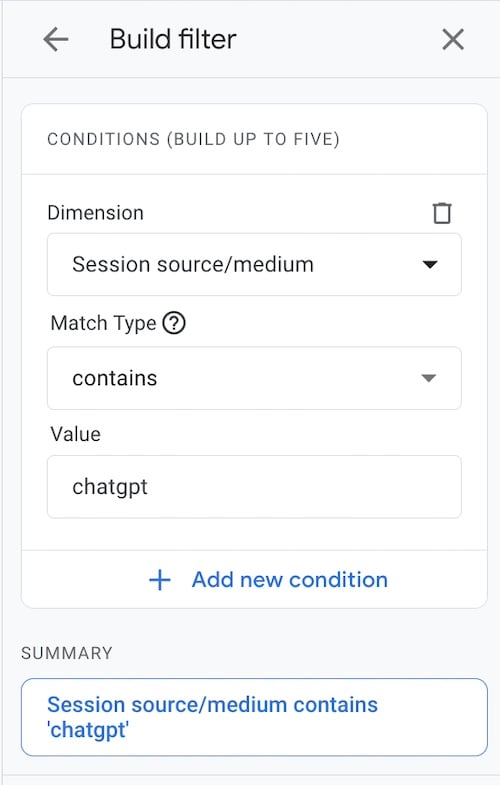

Microsoft Advertising offers a feature Google Ads lacks: impression-based remarketing, which lets advertisers retarget users who saw their ads but did not click. This expands reach to pre-qualified audiences and often reduces costs. Combining CRM imports, impression-based remarketing, and profit-based bidding gives automation richer signals.

Keywords And Keywordless Targeting

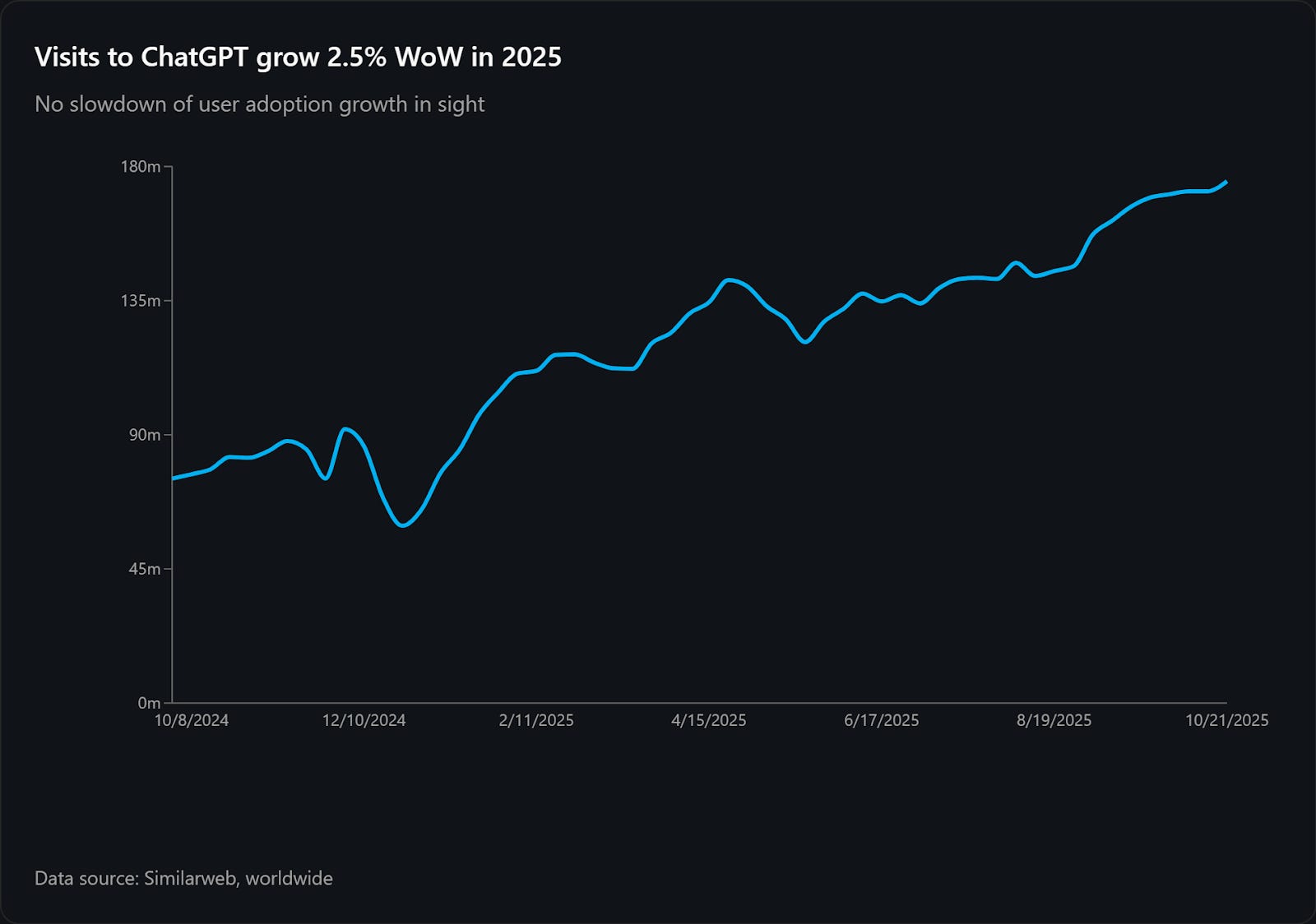

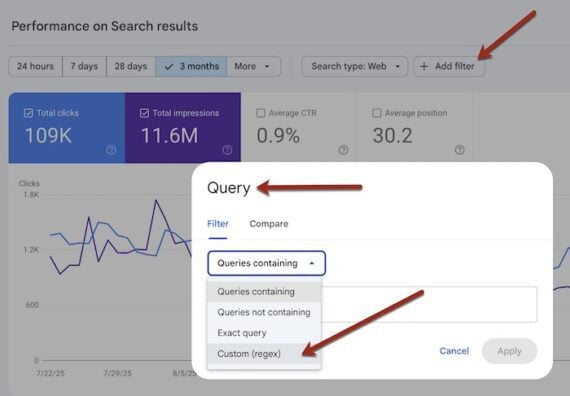

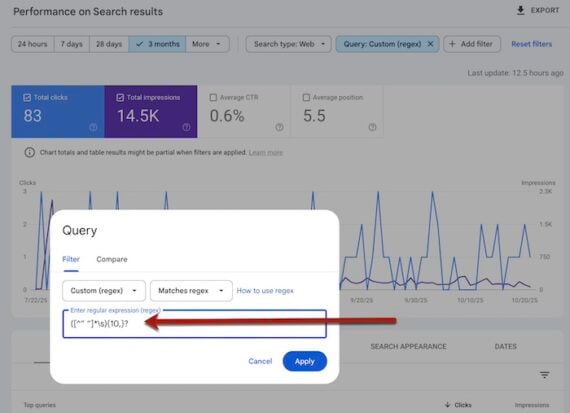

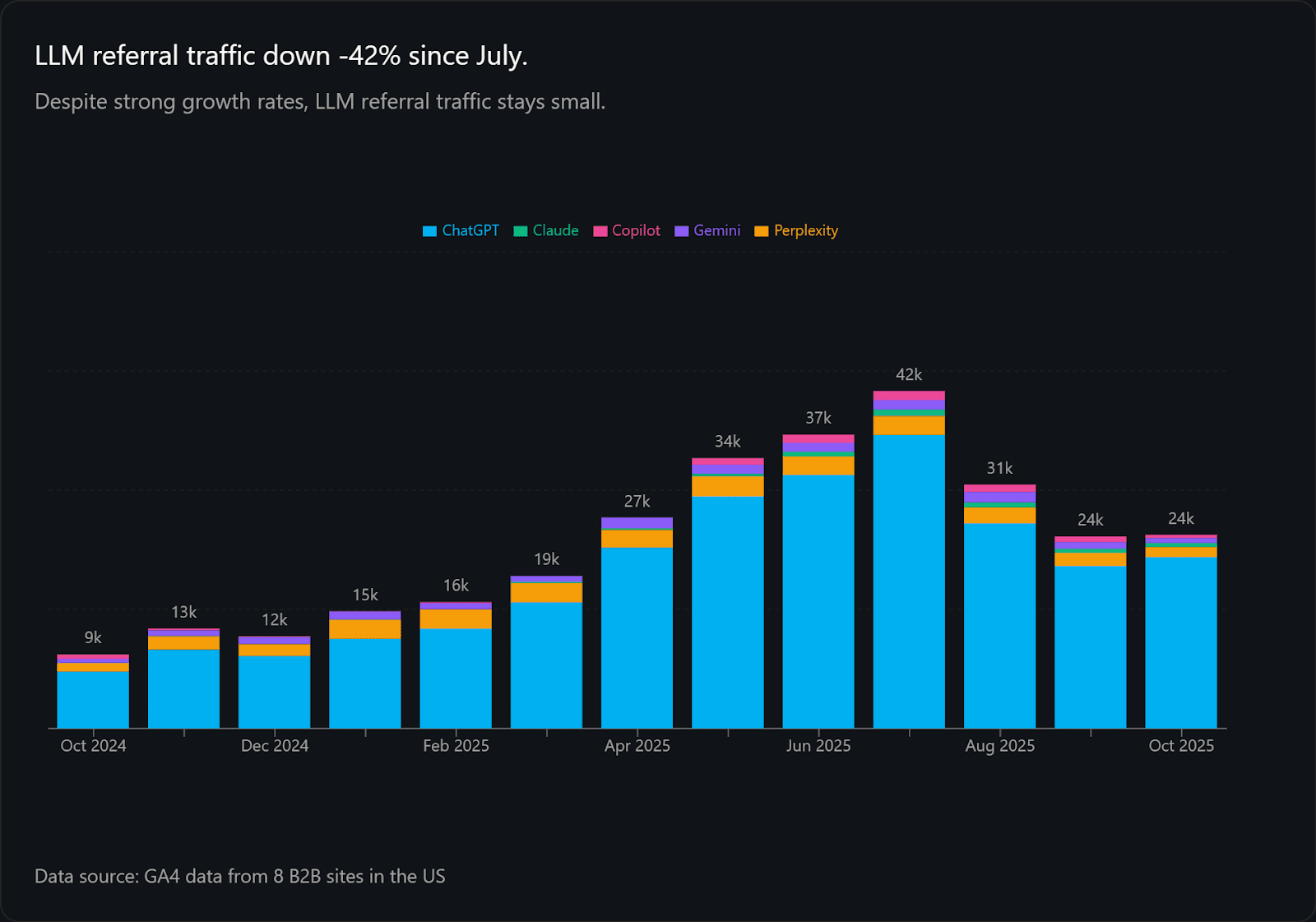

With match types getting broader every year, and the growth in keywordless campaign types like Performance Max, advertiser control over queries is eroding. This trend will continue as users shift from keyword searches to prompting, and Google eventually replaces synthetic keywords with a more precise targeting system.

Performance Max is performing well, and our PMax study details which trends are working best. AI Max, on the other hand, doesn’t feel quite ready for primetime, though there is unverified speculation that a September 2025 algorithm update improved its performance significantly. Test AI Max using Experiments before setting it loose on your BFCM traffic this year.

Creative: Stand Out In Crowded Auctions

Ads That Win Auctions: CTR Beats Clever Copy

Auctions for bottom-of-the-funnel search ads reward click-through rate (CTR) and predicted CTR, not witty copy. Coverage and clarity matter most. Ad headlines, descriptions, and assets (formerly ad extensions) should be updated with current promotions, shipping cutoffs, and urgency messaging.

However, because Google can choose from up to 15 headlines for your ad, pinning is how you make sure your most important BFCM messages actually appear.

Optmyzr’s soon-to-be-published 2025 Responsive Search Ads (RSA) study shows that advertisers who pin multiple variations to the same position achieve better ROAS. Pinning one element restricts the machine too much, while no pinning gives it too much freedom. Multi-asset pinning balances human guidance with algorithmic optimization. Google’s RSA guidance confirms that variation improves performance.
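Some simple arithmetic shows how much each approach constrains the system. The sketch below counts possible ordered headline combinations under a simplified model that is an assumption, not Google's documented serving logic: every ad shows exactly three headlines, only headlines pinned to position 1 can appear there, and the remaining headlines fill positions 2 and 3. It says nothing about which option performs better; that is what the study measured.

```python
from math import perm


def headline_orderings(total_headlines: int, pinned_to_position_1: int) -> int:
    """Ordered 3-headline combinations, assuming only pinned headlines may fill position 1."""
    if pinned_to_position_1 == 0:
        return perm(total_headlines, 3)  # any headline can fill any of the three slots
    unpinned = total_headlines - pinned_to_position_1
    # One of the pinned headlines fills slot 1; unpinned headlines fill slots 2 and 3
    return pinned_to_position_1 * perm(unpinned, 2)


for pins in (0, 1, 2, 3):
    print(pins, headline_orderings(15, pins))
```

Under this model, pinning a single headline cuts 2,730 possible orderings to 182, while pinning two or three variations to the same position restores some room (312 and 396) and still guarantees that one of your chosen lead messages fills position 1.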

Pro Tip: Plan RSAs in waves and use multi-asset pinning to balance brand strategy with system optimization.

Keep It Fresh: Creative Burnout Happens Faster In Q4

Shoppers tire quickly of repetitive ads, especially in Demand Gen campaigns. Even search ads should be kept fresh, with creative staged in waves: first to appeal to Black Friday and Cyber Monday shoppers, then to reflect shipping cutoffs, last-minute gifts, and post-holiday clearance as the season progresses.

Pre-loading assets ensures they are reviewed and ready to serve. Countdown customizers and promotion assets can reinforce urgency, but messaging must stay consistent with on-site offers to maintain trust.

Pro Tip: Schedule creative waves in advance. Do not wait until Cyber Monday morning to swap assets.
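Scheduling waves in advance can be as simple as a dated calendar that tells you which wave should be live and which upcoming wave's assets should already be in review. The dates below are hypothetical placeholders (your shipping cutoffs will differ), as are the wave names, the function names, and the five-day review lead time.

```python
from datetime import date
from typing import Optional

# Hypothetical 2025 wave calendar: (start date, wave name)
WAVES = [
    (date(2025, 11, 24), "black-friday-cyber-monday"),
    (date(2025, 12, 15), "shipping-cutoff"),
    (date(2025, 12, 19), "last-minute-gifts"),
    (date(2025, 12, 26), "post-holiday-clearance"),
]


def active_wave(today: date, waves=WAVES) -> Optional[str]:
    # The live wave is the latest one whose start date has arrived
    current = None
    for start, name in sorted(waves):
        if today >= start:
            current = name
    return current


def waves_to_submit(today: date, lead_days: int = 5, waves=WAVES) -> list[str]:
    # Waves starting within `lead_days` should already have their assets in review
    return [name for start, name in sorted(waves) if 0 < (start - today).days <= lead_days]


print(active_wave(date(2025, 11, 28)), waves_to_submit(date(2025, 12, 12)))
```

Running a check like this daily turns "do not wait until Cyber Monday morning" into a concrete to-do list of assets to submit for review.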

Competitive Insights

Competitor Surge Alerts: Auction Insights As A Warning

Google’s Auction Insights report is a powerful diagnostic tool that reveals shifts in competitor behavior, such as surges in impression share. Monitoring these trends in November helps advertisers react quickly, whether by increasing brand defense or positioning directly against rivals.

Auction Insights is your battlefield radar for Q4. Ignore it, and you could be blindsided.
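Turning Auction Insights into an alert mostly means comparing impression share across periods and flagging large jumps. The sketch below assumes you have already exported two periods and converted impression share to fractions; exports can show values such as "< 10%" that need cleaning first. The domains, the 10-point threshold, and the function name are hypothetical.

```python
def impression_share_surges(
    last_period: dict[str, float], this_period: dict[str, float], min_jump: float = 0.10
) -> list[tuple[str, float]]:
    """Competitors whose impression share rose by at least `min_jump` (shares as fractions)."""
    surges = []
    for domain, share in this_period.items():
        jump = share - last_period.get(domain, 0.0)  # new entrants count from zero
        if jump >= min_jump:
            surges.append((domain, round(jump, 4)))
    return sorted(surges, key=lambda item: item[1], reverse=True)


# Hypothetical week-over-week impression share from two Auction Insights exports
last_week = {"rival-a.example": 0.20, "rival-b.example": 0.35}
this_week = {"rival-a.example": 0.38, "rival-b.example": 0.36, "rival-c.example": 0.15}
print(impression_share_surges(last_week, this_week))
```

Treating a competitor that was absent last period as starting from zero means new entrants surface as surges, which is often the most useful warning of all.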

Post-Holiday: Turn December Buyers Into January Fans

January Is Your PPC Lab: Retain, Don’t Just Acquire

Holiday buyers are the most expensive to acquire but can become the most profitable if nurtured in Q1. Segment holiday-only versus year-round buyers using customer relationship management (CRM) and ad data, then run loyalty and cross-sell campaigns. Feeding these learnings back into bidding and audience systems helps automation improve over time.

Holiday buyers are the most expensive you will ever acquire. Retarget them in January to make them more profitable.
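A first-pass segmentation only needs each customer's order dates: customers whose every order falls inside the holiday window are holiday-only, and everyone else is year-round. The sketch below assumes a simple (customer_id, order_date) export from your CRM and a November-December window; both the window and the data layout are hypothetical. The holiday-only segment is the natural starting list for January loyalty and cross-sell campaigns.

```python
from collections import defaultdict
from datetime import date


def in_holiday_window(d: date) -> bool:
    # Hypothetical window: November 1 through December 31
    return d.month in (11, 12)


def segment_buyers(orders: list[tuple[str, date]]) -> dict[str, str]:
    """Label each customer 'holiday-only' or 'year-round' from (customer_id, order_date) pairs."""
    dates_by_customer: dict[str, list[date]] = defaultdict(list)
    for customer_id, order_date in orders:
        dates_by_customer[customer_id].append(order_date)
    return {
        cid: "holiday-only" if all(in_holiday_window(d) for d in dates) else "year-round"
        for cid, dates in dates_by_customer.items()
    }


# Hypothetical CRM order export
orders = [
    ("c1", date(2024, 11, 29)),
    ("c1", date(2024, 12, 2)),
    ("c2", date(2024, 11, 29)),
    ("c2", date(2025, 3, 14)),
    ("c3", date(2024, 12, 18)),
]
print(segment_buyers(orders))
```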

Final Thoughts

Holiday PPC is the ultimate stress test. CPC inflation, automation, budgets, audiences, creative, competition, and fraud all converge at once. Winning requires guiding automation with better inputs, protecting profitability with strong signals, and owning your message at a time when keyword precision is fading. Prepare early, pace carefully, and place guardrails everywhere they matter most.

Checklist Summary

- Expect CPC inflation in Q4. Optimize for profit and ROAS, not cheap clicks.

- Set seasonality bid adjustments and add guardrails so Smart Bidding doesn’t misfire on BFCM.

- Treat budgets as fluid with intraday pacing. Don’t confuse conversion lag with underperformance.

- Use first-party data beyond CRM lists. Profit margins and pricing strategy are key signals.

- Microsoft’s impression-based remarketing lets you retarget high-intent searchers who never clicked.

- Make creative your control lever in a PMax and broad-match world. Use multi-asset RSA pinning.

- Monitor Auction Insights, watch for fraud/MFA, and turn expensive Q4 buyers into Q1 loyalists.


Featured Image: Roman Samborskyi/Shutterstock

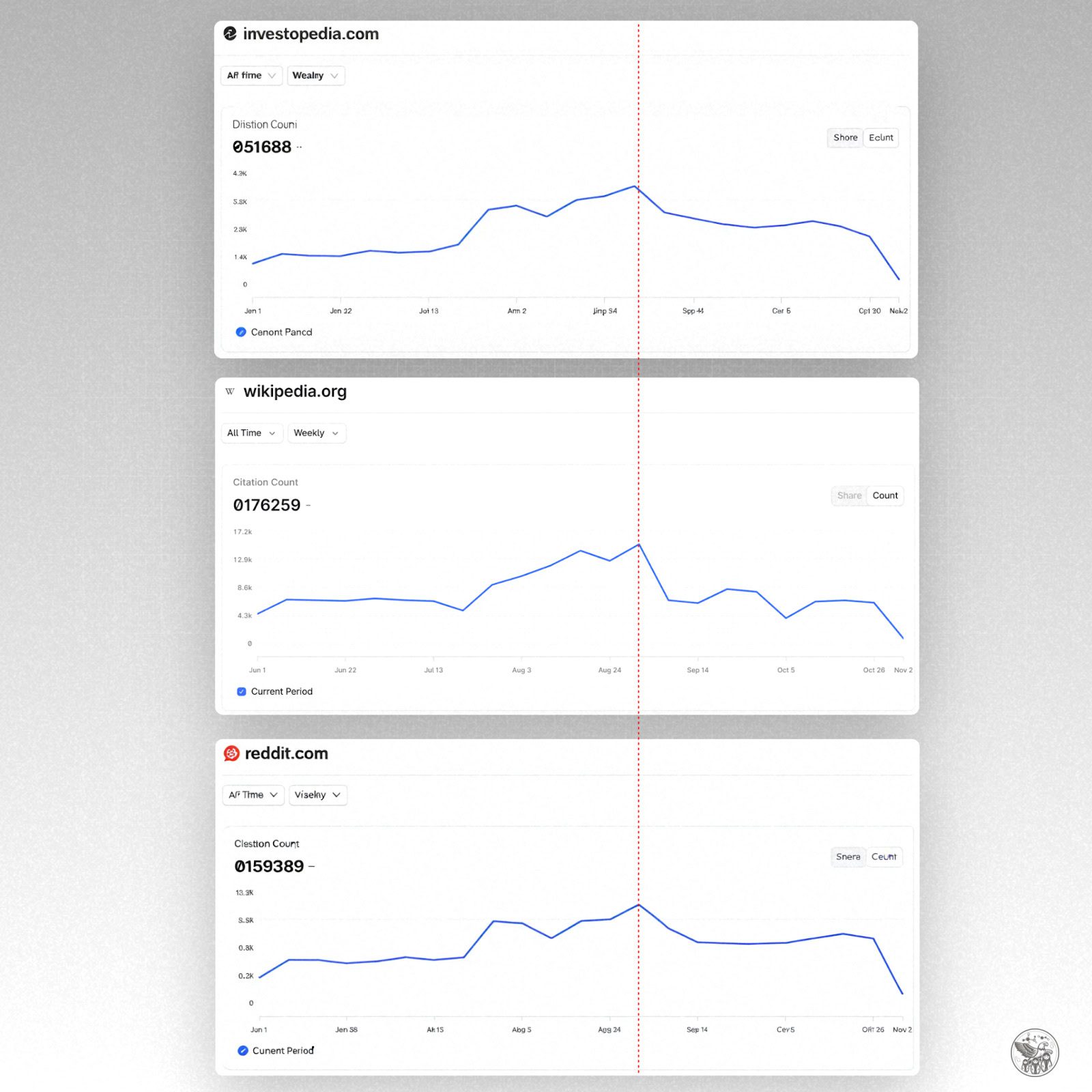

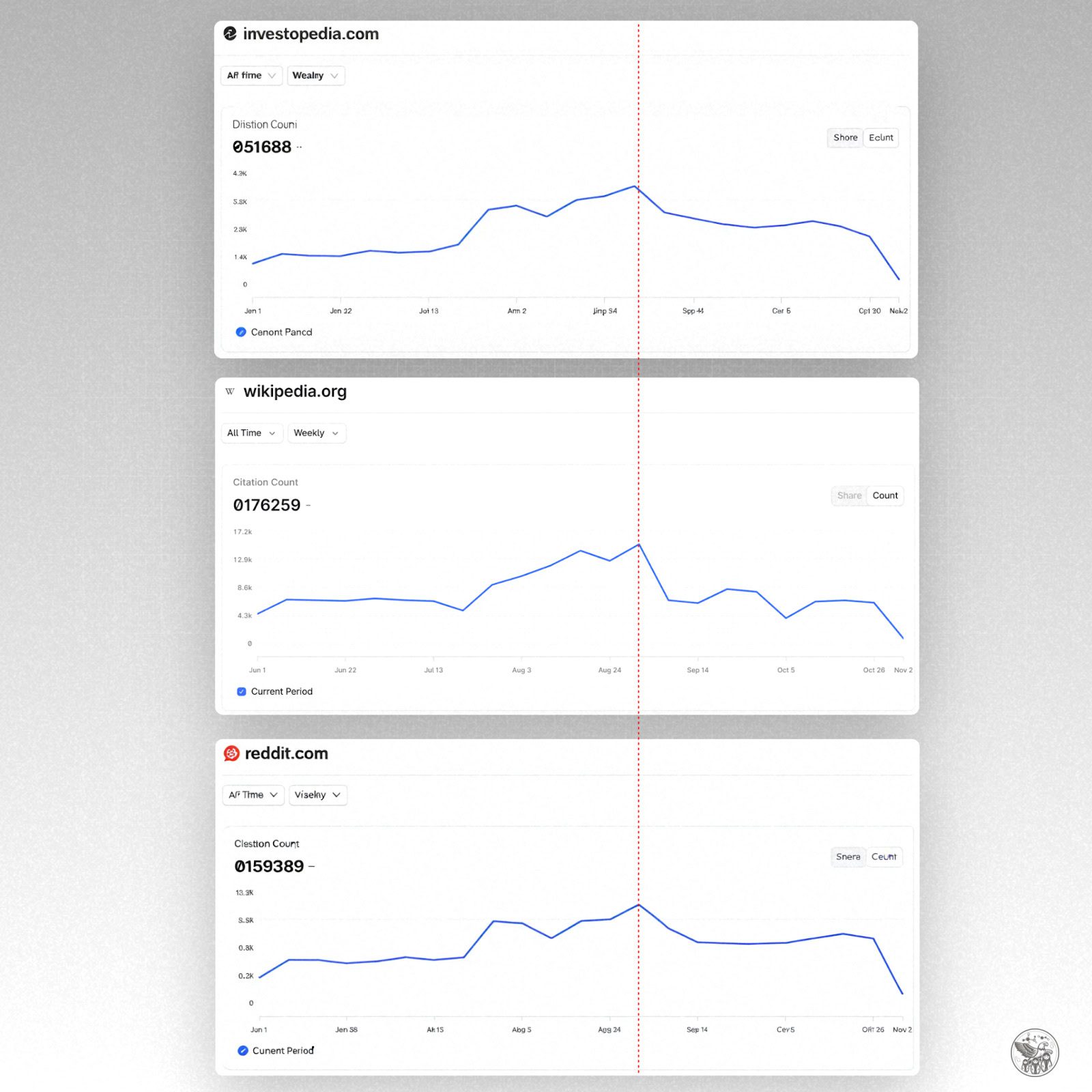

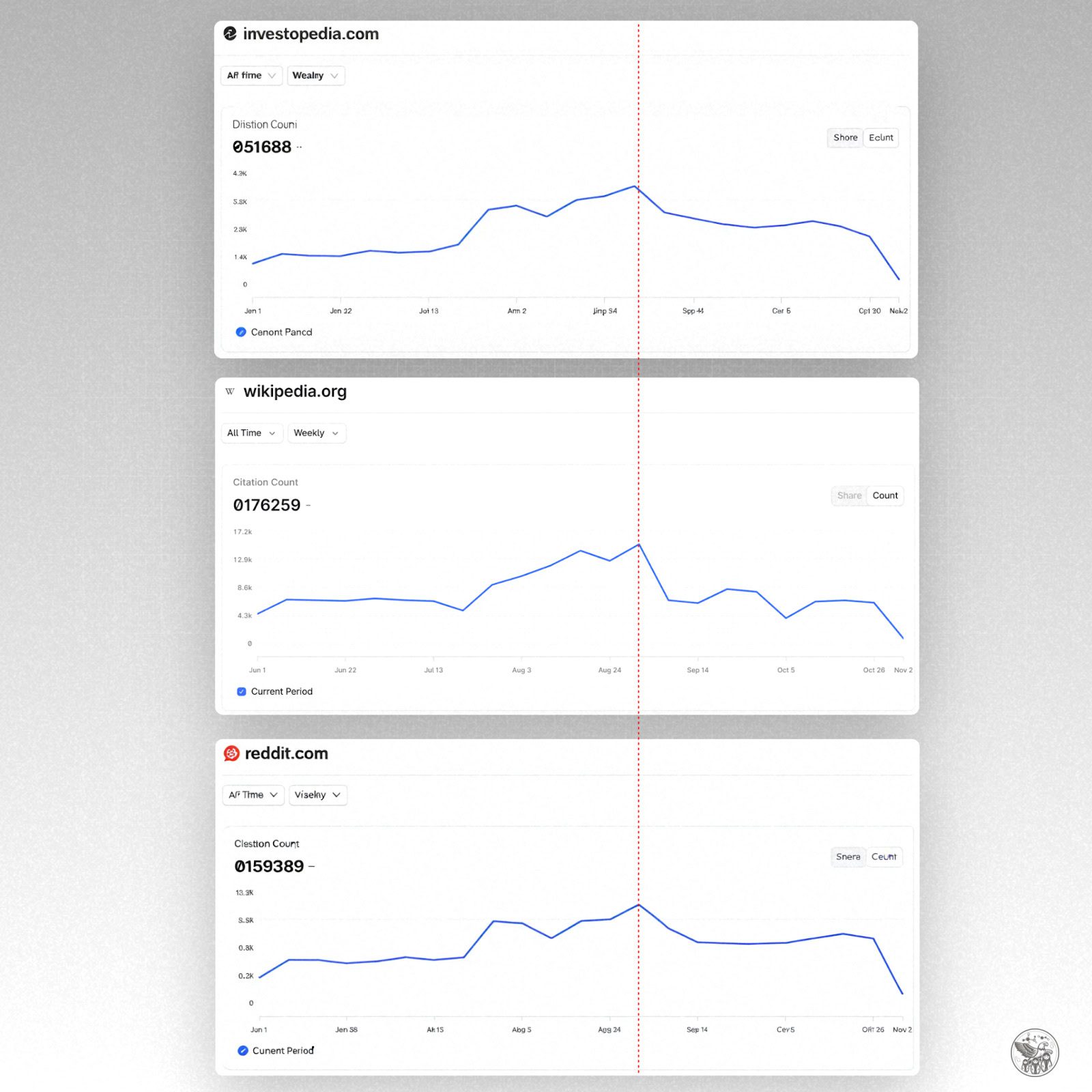

Image Credit: Kevin Indig
