The astronaut training tourists to fly in the world’s first commercial space station

For decades, space stations have been largely staffed by professional astronauts and operated by a handful of nations. But that’s set to change in the coming years, as companies including Axiom Space and Sierra Space launch commercial space stations that will host tourists and provide research facilities for nations and other firms.

The first of those stations could be Haven-1, which the California-based company Vast aims to launch in May 2026. If all goes to plan, its earliest paying visitors will arrive about a month later. Drew Feustel, a former NASA astronaut, will help train them and get them up to speed ahead of their historic trip. Feustel has spent 226 days in space across three missions: two to the International Space Station (ISS) and one to service the Hubble Space Telescope.

Feustel is now lead astronaut for Vast, which he advised on the new station’s interior design. He also created a months-long program to prepare customers to live and work there. Crew members (up to four at a time) will arrive at Haven-1 via a SpaceX Dragon spacecraft, which will dock to the station and remain attached throughout each 10-day stay. (Vast hasn’t publicly said who will fly on its first missions or announced the cost of a ticket, though competing firms have charged tens of millions of dollars for similar trips.)

Haven-1 is intended as a temporary facility, to be followed by a bigger, permanent station called Haven-2. Vast will begin launching Haven-2’s modules in 2028 and says it will be able to support a crew by 2030. That’s about when NASA will start decommissioning the ISS, which has operated for almost 30 years. Instead of replacing it, NASA and its partners intend to carry out research aboard commercial stations like those built by Vast, Axiom, and Sierra.

I recently caught up with Feustel in Lisbon at the tech conference Web Summit, where he was speaking about his role at Vast and the company’s ambitions.

Responses have been edited and condensed.

What are you hoping this new wave of commercial space stations will enable people to do?

Ideally, we’re creating access. The paradigm that we’ve seen for 25 years is primarily US-backed missions to the International Space Station, and [NASA] operating that station in coordination with other nations. But [it’s] still limited to 16 or 17 primary partners in the ISS program.

Following NASA’s intentions, we are planning to become a service provider to not only the US government, but other sovereign nations around the world, to allow greater access to a low-Earth-orbit platform. We can be a service provider to other organizations and nations that are planning to build a human spaceflight program.

Today, you’re Vast’s lead astronaut after you were initially brought on to advise the company on the design of Haven-1 and Haven-2. What are some of the things that you’ve weighed in on?

Some of the things where I can see tangible evidence of my work is, for example, in the sleep cores and sleep system—trying to define a more comfortable way for astronauts to sleep. We’ve come up with an air bladder system that provides distributed forces on the body that kind of emulate, or I believe will emulate, the gravity field that we feel in bed when we lie down, having that pressure of gravity on you.

Oh, like a weighted blanket?

Kind of like a weighted blanket, but you’re up against the wall, so you have to create, like, an inflatable bladder that will push you against the wall. That’s one of the very tangible, obvious things. But I work with the company on anything from crew displays and interfaces and how notifications and system information come through to how big a window should be.

How big should a window be? I feel like the bigger the better—but what are the factors that go into that, from an astronaut’s perspective?

The bigger the better. And the other thing to think about is—what do you do with the window? Take pictures. The ability to take photos out a window is important—the quality of the window, which direction it points. You know, it’s not great if it’s just pointing up in space all the time and you never see the Earth.

You’re also in charge of the astronaut training program at Vast. Tell me what that program looks like, because in some cases you’ll have private citizens paying for their trip who have no experience whatsoever.

A typical training flow for two weeks on our space station is extended out to about an 11-month period with gaps in between each of the training weeks. And so if you were to press that down together, it probably represents about three to four months of day-to-day training.

I would say half of it’s devoted to learning how to fly on the SpaceX Dragon, because that’s our transportation, and the greatest risk for anybody flying is on launch and landing. We want people to understand how to operate in that spacecraft, and that component is designed by SpaceX. They have their own training plans.

What we do is kind of piggyback on those weeks. If a crew shows up in California to train at SpaceX, we’ll grab them that same week and say, “Come down to our facility. We will train you to operate inside our spacecraft.” Much of that is focused on emergency response. We want the crew to be able to keep themselves safe. In case anything happens on the vehicle that requires them to depart, to get back in the SpaceX Dragon and leave, we want to make sure that they understand all of the steps required.

Another part is day-to-day living, like—how do you eat? How do you sleep, how do you use the bathroom? Those are really important things. How do you download the pictures after you take them? How do you access your science payloads that are in our payload racks that provide data and telemetry for the research you’re doing?

We want to practice every one of those things multiple times, including just taking care of yourself, before you go to space so that when you get there, you’ve built a lot of that into your muscle memory, and you can just do the things you need to do instead of every day being like a really steep learning curve.

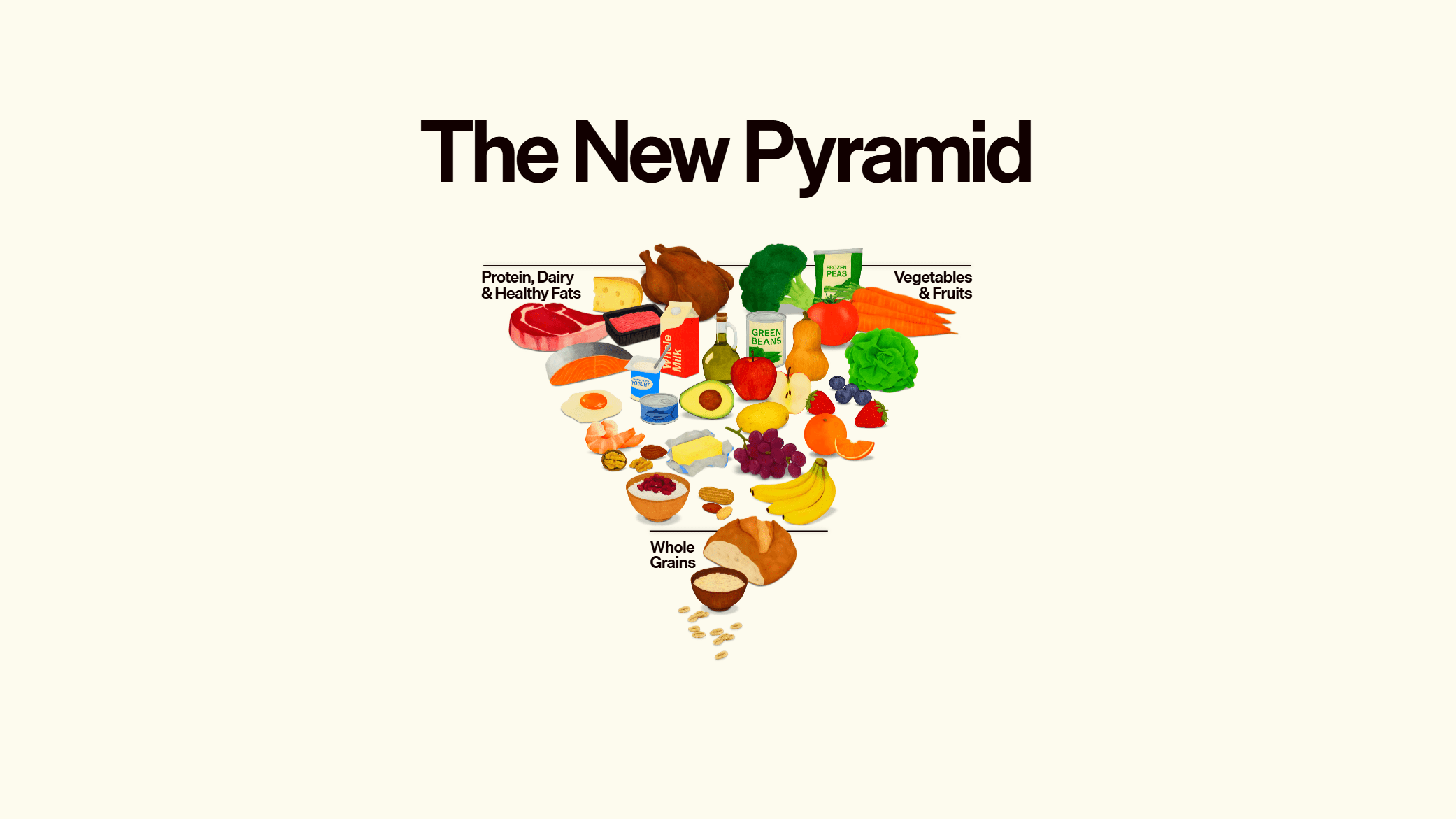

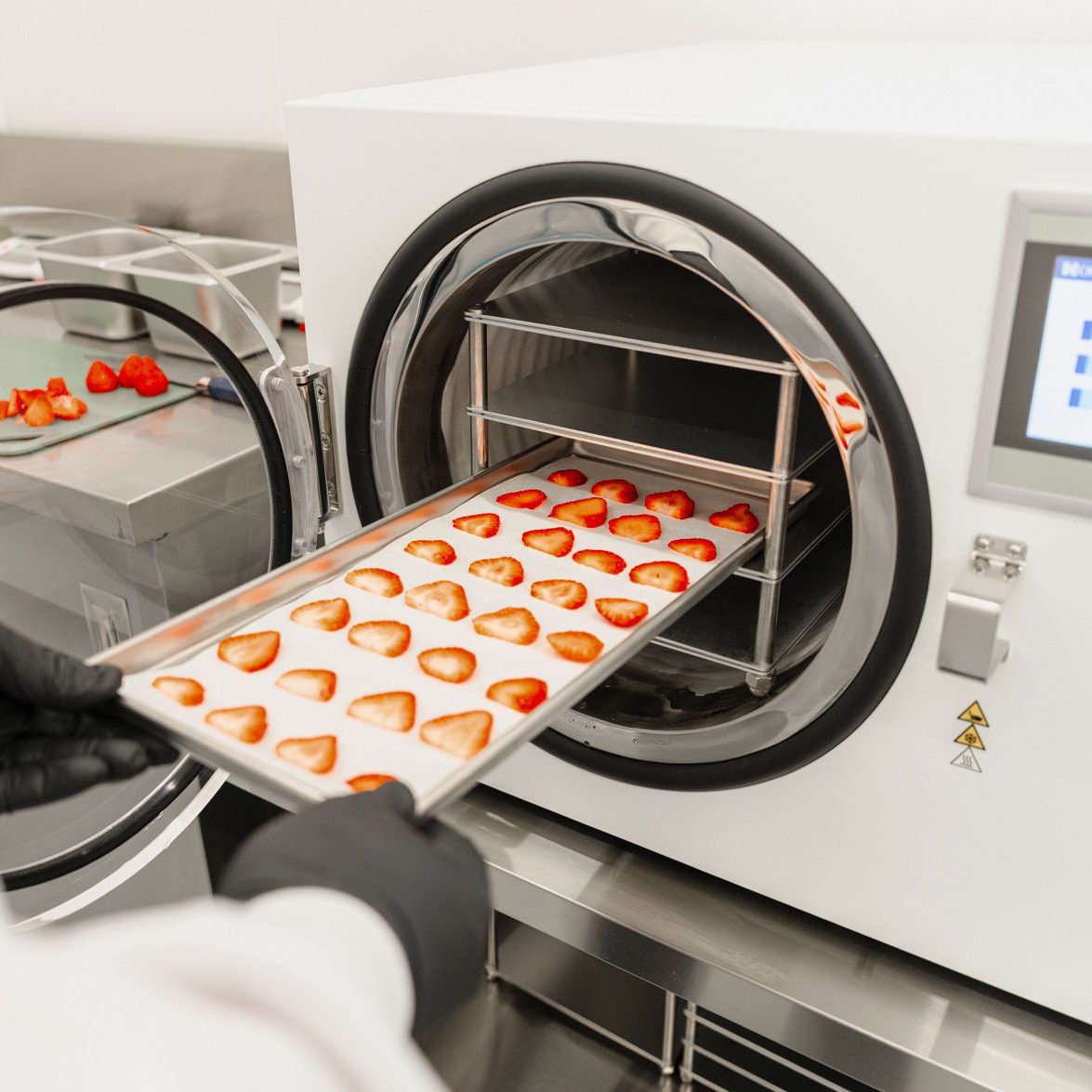

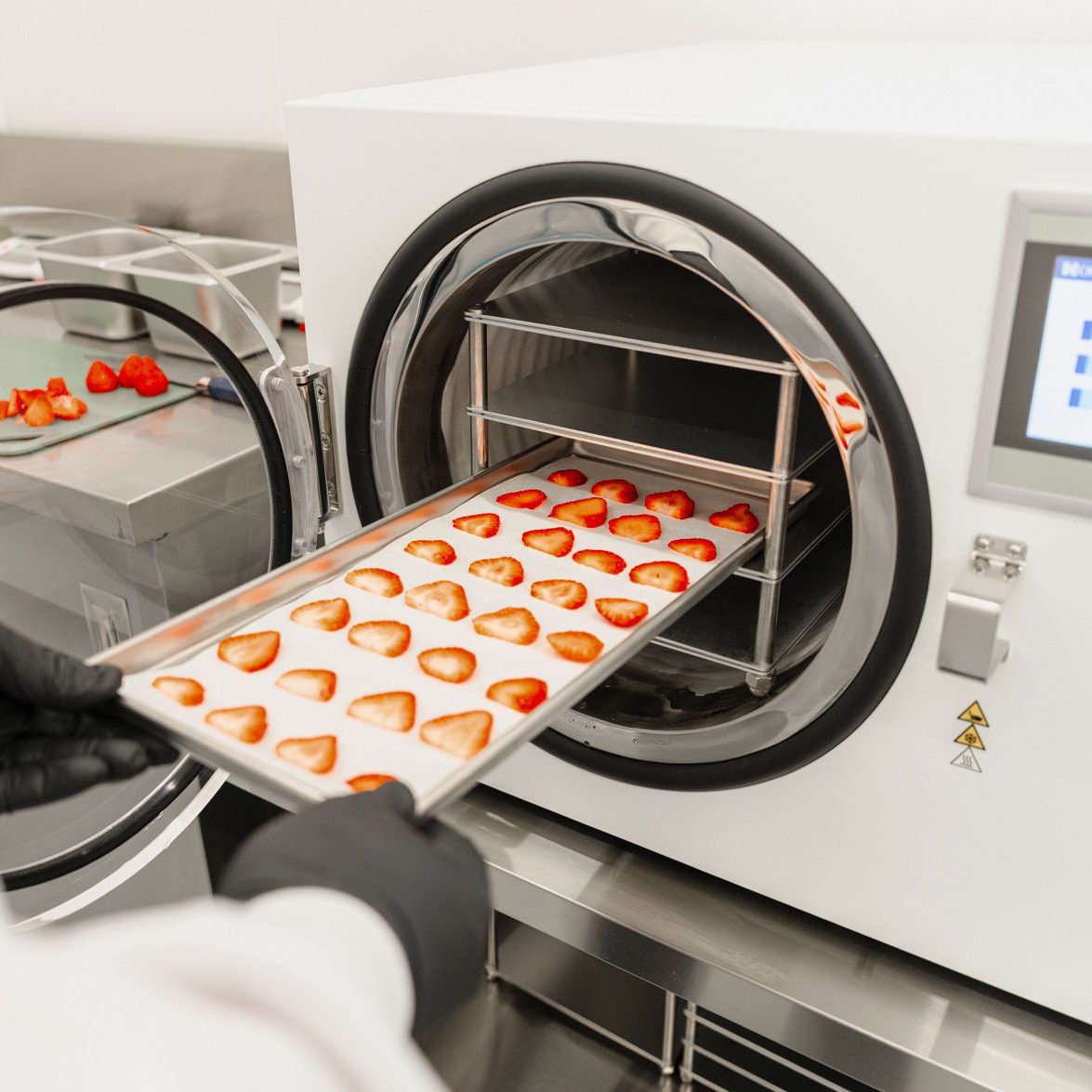

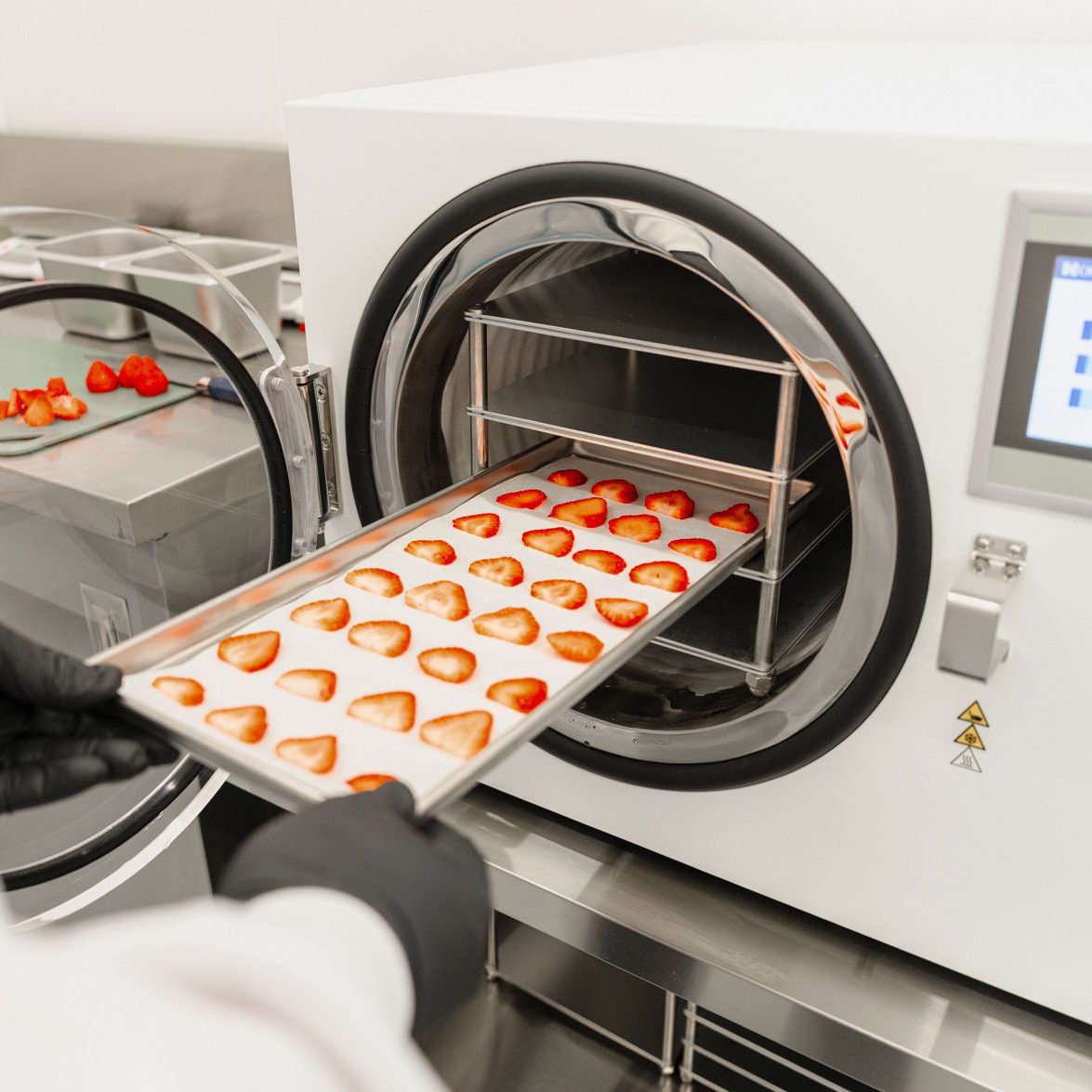

Strawberries and other perishable foods are freeze-dried by the Vast Food Systems team to prepare them for missions.

Do you have a facility where you’ll take people through some of these motions? Or a virtual simulation of some kind?

We have built a training mock-up, an identical vehicle to what people will live in in space. But it’s not in a zero-gravity environment. The only way to get any similar training is to fly on what we call a zero-g airplane, which does parabolas in the atmosphere: it climbs up and then falls toward the Earth. Its nickname is the vomit comet.

But otherwise, there’s really no way to train for microgravity. You just have to watch videos and talk about it a lot, and try to prepare people mentally for what that’s going to be like. You can also train underwater, but that’s more related to spacewalking, and it’s much more advanced.

How do you expect people will spend their time in the station?

If history is any indication, they will be quite busy and probably oversubscribed. Their time will be spent basically caring for themselves, and trying to execute their experiments, and looking out the window. Those are the three big categories of what you’re going to do in space. And public relations activities like outreach back to Earth, to schools or hospitals or corporations.

This new era means that many more everyday people—though mostly wealthy ones at the beginning, because of ticket prices—will have this interesting view of Earth. How do you think the average person will react to that?

A good analogy is to say, how are people reacting to suborbital flights? Blue Origin and Virgin Galactic offer suborbital flights, [which are] basically three or four minutes of floating and looking down at the Earth from an altitude that’s about a third or a fifth of the altitude that actual orbital and career astronauts achieve when they circle the planet.

If you look at the reaction of those individuals and what they perceive, it’s amazing, right? It’s like awe and wonder. It’s the same way that astronauts react and talk when we see Earth—and say if more humans could see Earth from space, we’d probably be a little bit better about being humans on Earth.

The hope is that we create that access and more people can understand what it means to live on this planet. [Earth is] essentially a spacecraft. It’s got its own environmental control system that keeps us alive, and that’s a big deal.

Some people have expressed ambitions for this kind of station to enable humans to become a multiplanetary species. Do you share that ambition for our species? If so, why?

Yeah, I do. I just believe that humans need to have the ability to live off of the planet. I mean, we’re capable of it, and we’re creating that access now. So why wouldn’t we explore space and go further and farther and learn to live in other areas?

Not to say that we should deplete everything here and deplete everything there. But maybe we take some of the burden off of the place that we call home. I think there’s a lot of reasons to live and work in space and off our own planet.

There’s not really a backup plan for no Earth. We know that there are risks from the space around us—dinosaurs fell prey to space hazards. We should be aware of those and work harder to extend our capabilities and create some backup plans.