The timing was eerie.

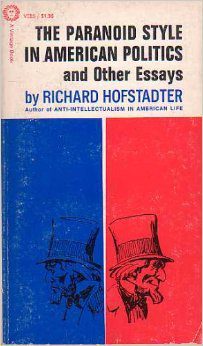

On November 21, 1963, Richard Hofstadter delivered the annual Herbert Spencer Lecture at Oxford University. Hofstadter was a professor of American history at Columbia University who liked to use social psychology to explain political history, the better to defend liberalism from extremism on both sides. His new lecture was titled “The Paranoid Style in American Politics.”

“I call it the paranoid style,” he began, “simply because no other word adequately evokes the qualities of heated exaggeration, suspiciousness, and conspiratorial fantasy that I have in mind.”

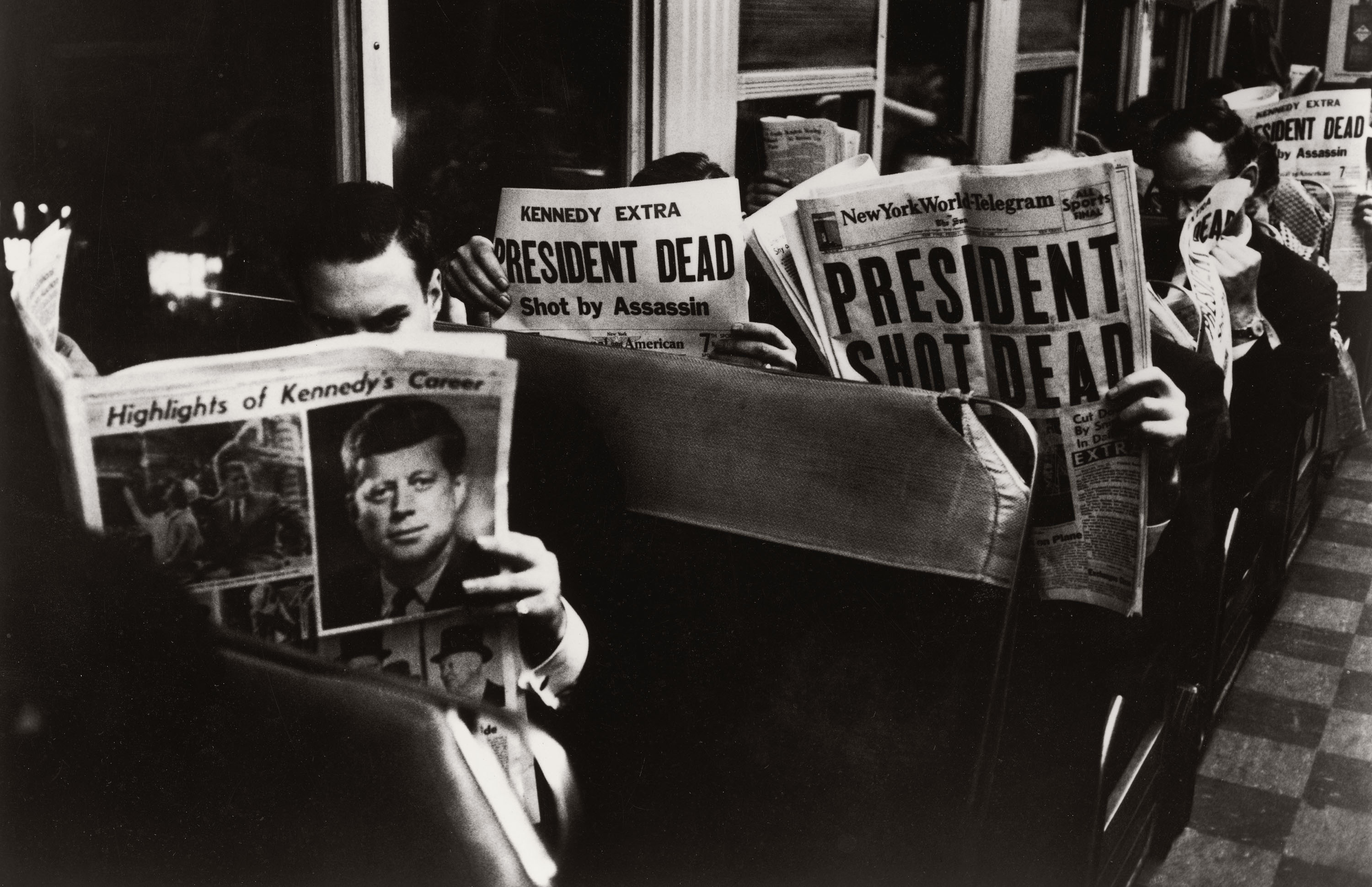

Then, barely 24 hours later, President John F. Kennedy was assassinated in Dallas. This single, shattering event, and subsequent efforts to explain it, popularized a term for something that is clearly the subject of Hofstadter’s talk though it never actually figures in the text: “conspiracy theory.”

This story is part of MIT Technology Review’s series “The New Conspiracy Age,” on how the present boom in conspiracy theories is reshaping science and technology.

Hofstadter’s lecture was later revised into what remains an essential essay, even after decades of scholarship on conspiracy theories, because it lays out, with both rigor and concision, a historical continuity of conspiracist politics. “The paranoid style is an old and recurrent phenomenon in our public life which has been frequently linked with movements of suspicious discontent,” he writes, tracing the phenomenon back to the early years of the republic. Though each upsurge in conspiracy theories feels alarmingly novel—new narratives disseminated through new technologies on a new scale—they all conform to a similar pattern. As Hofstadter demonstrated, the names may change, but the fundamental template remains the same.

His psychological reading of politics has been controversial, but it is psychology, rather than economics or other external circumstances, that best explains the flourishing of conspiracy theories. Subsequent research has indeed shown that we are prone to perceive intentionality and patterns where none exist—and that this helps us feel like people of consequence. To identify and expose a secret plot is to feel heroic and gain the illusion of control over the bewildering mess of life.

Like many pioneering theories exposed to the cold light of hindsight, Hofstadter’s has flaws and blind spots. His key oversight was to downplay the paranoid style’s role in mainstream politics up to that point and underrate its potential to spread in the future.

In 1963, conspiracy theories were still a fringe phenomenon, not because they were inherently unusual but because they had limited reach and were stigmatized by people in power. Now that neither factor holds true, it is obvious how infectious they are. Hofstadter could not, of course, have imagined the information technologies that have become stitched into our lives, nor the fractured media ecosystem of the 21st century, both of which have allowed conspiracist thinking to reach more and more people—to morph, and to bloom like mold. And he could not have predicted that a serial conspiracy theorist would be elected president, twice, and that he would staff his second administration with fellow proponents of the paranoid style.

But Hofstadter’s concept of the paranoid style remains useful—and ever relevant—because it also describes a way of reading the world. As he put it, “The distinguishing thing about the paranoid style is not that its exponents see conspiracies or plots here or there in history, but they regard a ‘vast’ or ‘gigantic’ conspiracy as the motive force in historical events. History is a conspiracy, set in motion by demonic forces of almost transcendent power, and what is felt to be needed to defeat it is not the usual methods of political give-and-take, but an all-out crusade.”

Needless to say, this mystically unified version of history is not just untrue but impossible. It doesn’t make sense on any level. So why has it proved so alluring for so long—and why does it seem to be getting more popular every day?

What is a conspiracy theory, anyway?

The first person to define the “conspiracy theory” as a widespread phenomenon was the Austrian-British philosopher Karl Popper, in his 1948 lecture “Towards a Rational Theory of Tradition.” He was not referring to a theory about an individual conspiracy. He was interested in “the conspiracy theory of society”: a particular way of interpreting the course of events.

He later defined it as “the view that an explanation of a social phenomenon consists in the discovery of the men or groups who are interested in the occurrence of this phenomenon (sometimes it is a hidden interest which has first to be revealed), and who have planned and conspired to bring it about.”

Take an unforeseen catastrophe that inspires fear, anger, and pain—a financial crash, a devastating fire, a terrorist attack, a war. The conventional historian will try to unpick a tangle of different factors, of which malice is only one, and one that may be less significant than dumb luck.

The conspiracist, however, will perceive only sinister calculation behind these terrible events—a fiendishly intricate plot conceived and executed to perfection. Intent is everything. Popper’s observation chimes with Hofstadter’s: “The paranoid’s interpretation of history is … distinctly personal: decisive events are not taken as part of the stream of history, but as the consequences of someone’s will.”

According to Michael Barkun in the 2003 book A Culture of Conspiracy, the conspiracist interpretation of events rests on three assumptions: Everything is connected, everything is premeditated, and nothing is as it seems. Following that third assumption means that widely accepted and documented history is, by definition, suspect, and alternative explanations, however outré, are more likely to be true. As Hannah Arendt wrote in The Origins of Totalitarianism, the purpose of conspiracy theories in 20th-century dictatorships “was always to reveal official history as a joke, to demonstrate a sphere of secret influences in which the visible, traceable, and known historical reality was only the outward façade erected explicitly to fool the people.” (Those dictators, of course, were conspirators themselves, projecting their own love of secret plots onto others.)

Still, it’s important to remember that “conspiracy theory” can mean different things. Barkun describes three varieties, nesting like Russian dolls.

The “event conspiracy theory” concerns a specific, contained catastrophe, such as the Reichstag fire of 1933 or the origins of covid-19. These theories are relatively plausible, even if they cannot be proved.

The “systemic conspiracy theory” is much more ambitious, purporting to explain numerous events as the poisonous fruit of a clandestine international plot. Far-fetched though they are, they do at least fixate on named groups, whether the Illuminati or the World Economic Forum.

Finally, the “superconspiracy theory” is that impossible fantasy in which history itself is a conspiracy, orchestrated by unseen forces of almost supernatural power and malevolence. The most extreme variants of QAnon posit such a universal conspiracy. A theory of this kind seeks to encompass and explain nothing less than the entire world.

These are very different genres of storytelling. If the first resembles a detective story, then the other two are more akin to fables. Yet one can morph into the other. Take the theories surrounding the Kennedy assassination. The first wave of amateur investigators created event conspiracy theories—relatively self-contained plots with credible assassins such as Cubans or the Mafia.

But over time, event conspiracy theories have come to seem parochial. By the time of Oliver Stone’s 1991 movie JFK, once-popular plots had been eclipsed by elaborate fictions of gigantic long-running conspiracies in which the murder of the president was just one component. One of Stone’s primary sources was the journalist Jim Marrs, who went on to write books about the Freemasons and UFOs.

Why limit yourself to a laboriously researched hypothesis about a single event when one giant, dramatic plot can explain them all?

The theory of everything

In every systemic or superconspiracy theory, the world is corrupt and unjust and getting worse. An elite cabal of improbably powerful individuals, motivated by pure malignancy, is responsible for most of humanity’s misfortunes. Only through the revelation of hidden knowledge and the cracking of codes by a righteous minority can the malefactors be unmasked and defeated. The morality is as simplistic as the narrative is complex: It is a battle between good and evil.

Notice anything? This is not the language of democratic politics but that of myth and of religion. In fact, it is the fundamental message of the Book of Revelation. Conspiracist thinking can be seen as an offshoot, often but not always secularized, of apocalyptic Christianity, with its alluring web of prophecies, signs, and secrets and its promise of violent resolution. After studying several millenarian sects for his 1957 book The Pursuit of the Millennium, the historian Norman Cohn itemized some common traits, among them “the megalomaniac view of oneself as the Elect, wholly good, abominably persecuted yet assured of ultimate triumph; the attribution of gigantic and demonic powers to the adversary; the refusal to accept the ineluctable limitations and imperfections of human experience.”

Popper similarly considered the conspiracy theory of society “a typical result of the secularization of religious superstition,” adding: “The gods are abandoned. But their place is filled by powerful men or groups … whose wickedness is responsible for all the evils we suffer from.”

QAnon’s mutation from a conspiracy theory on an internet message board into a movement with the characteristics of a cult makes explicit the kinship between conspiracy theories and apocalyptic religion.

This way of thinking facilitates the creation of dehumanized scapegoats—one of the oldest and most consistent features of a conspiracy theory. During the Middle Ages and beyond, political and religious leaders routinely flung the name “Antichrist” at their opponents. During the Crusades, Christians falsely accused Europe’s Jewish communities of collaborating with Islam or poisoning wells and put them to the sword. Witch-hunters implicated tens of thousands of innocent women in a supposed satanic conspiracy that was said to explain everything from illness to crop failure. “Conspiracy theories are, in the end, not so much an explanation of events as they are an effort to assign blame,” writes Anna Merlan in the 2019 book Republic of Lies.

But the systemic conspiracy theory as we know it—that is, the ostensibly secular variety—was established three centuries later, with remarkable speed. Some horrified opponents of the French Revolution could not accept that such an upheaval could be simply a popular revolt and needed to attribute it to sinister, unseen forces. They settled on the Illuminati, a Bavarian secret society of Enlightenment intellectuals influenced in part by the rituals and hierarchy of Freemasonry.

The group was founded by a young law professor named Adam Weishaupt, who used the alias Brother Spartacus. In reality, the Illuminati were few in number, fractious, powerless, and, by the time of the revolution in 1789, defunct. But in the imaginations of two influential writers who published “exposés” of the Illuminati in 1797—Scotland’s John Robison and France’s Augustin Barruel—they were everywhere. Each man erected a wobbling tower of wild supposition and feverish nonsense on a platform of plausible claims and verifiable facts. Robison alleged that the revolution was merely part of “one great and wicked project” whose ultimate aim was to “abolish all religion, overturn every government, and make the world a general plunder and a wreck.”

The Illuminati’s bogeyman status faded during the 19th century, but the core narrative persisted and proceeded to underpin the notorious hoax The Protocols of the Elders of Zion, first published in a Russian newspaper in 1903. The document’s anonymous author reinvented antisemitism by grafting it onto the story of the one big plot and positing Jews as the secret rulers of the world. In this account, the Elders orchestrate every war, recession, and so on in order to destabilize the world to the point where they can impose tyranny.

You might ask why, if they have such world-bending power already, they would require a dictatorship. You might also wonder how one group could be responsible for both communism and monopoly capitalism, anarchism and democracy, the theory of evolution, and much more besides. But the vast, self-contradicting incoherence of the plot is what made it impossible to disprove. Nothing was ruled out, so every development could potentially be taken as evidence of the Elders at work.

In 1921, the Protocols were exposed as what the London Times called a “clumsy forgery,” plagiarized from two obscure 19th-century novels, yet they remained the key text of European antisemitism—essentially “true” despite being demonstrably false. “I believe in the inner, but not the factual, truth of the Protocols,” said Joseph Goebbels, who would become Hitler’s minister of propaganda. In Mein Kampf, Hitler claimed that efforts to debunk the Protocols were actually “evidence in favor of their authenticity.” He alleged that Jews, if not stopped, would “one day devour the other nations and become lords of the earth.” Popper and Hofstadter both used the Holocaust as an example of what happens when a conspiracy theorist gains power and makes the paranoid style a governing principle.

The prominent role of Jewish Bolsheviks like Leon Trotsky and Grigory Zinoviev in the Russian Revolution of 1917 enabled a merger of antisemitism and anticommunism that survived the fascist era. Cold War red-baiters such as Senator Joseph McCarthy and the John Birch Society assigned to communists uncanny degrees of malice and ubiquity, far beyond the real threat of Soviet espionage. In fact, they presented this view as the only logical one. McCarthy claimed that a string of national security setbacks could be explained only if George C. Marshall, the secretary of defense and former secretary of state, was literally a Soviet agent. “How can we account for our present situation unless we believe that men high in this government are concerting to deliver us to disaster?” he asked in 1951. “This must be the product of a great conspiracy so immense as to dwarf any previous such venture in the history of man.”

This continuity between antisemitism, anticommunism, and 18th-century paranoia about secret societies isn’t hard to see. General Francisco Franco, Spain’s right-wing dictator, claimed to be fighting a “Judeo-Masonic-Bolshevik” conspiracy. The Nazis persecuted Freemasons alongside Jews and communists. Nesta Webster, the British fascist sympathizer who laundered the Protocols through the British press, revived interest in Robison and Barruel’s books about the Illuminati, which the pro-Nazi Baptist preacher Gerald Winrod then promoted in the US. Even Winston Churchill was briefly persuaded by Webster’s work, citing it in his claims of a “world-wide conspiracy for the overthrow of civilization … from the days of Spartacus-Weishaupt to the days of Karl Marx.”

To follow the chain further, Webster and Winrod’s stew of anticommunism, antisemitism, and anti-Illuminati conspiracy theories influenced the John Birch Society, whose publications would light a fire decades later under the Infowars founder Alex Jones, perhaps the most consequential conspiracy theorist of the early 21st century.

The villains behind the one big plot might be the Illuminati, the Elders of Zion, the communists, or the New World Order, but they are always essentially the same people, aspiring to officially dominate a world that they already secretly control. The names can be swapped around without much difficulty. While Winrod maintained that “the real conspirators behind the Illuminati were Jews,” the anticommunist William Guy Carr conversely argued that antisemitic paranoia “plays right into the hands of the Illuminati.” These days, it might be the World Economic Forum or George Soros; liberal internationalists with aspirations to change the world are easily cast as the new Illuminati, working toward establishing one world government.

Finding connection

The main reason that conspiracy theorists have lost interest in the relatively hard work of micro-conspiracies in favor of grander schemes is that it has become much easier to draw lines between objectively unrelated people and events. Information technology is, after all, also misinformation technology. That’s nothing new.

The witch craze could not have traveled as far or lasted as long without the printing press. Malleus Maleficarum (Hammer of the Witches), a 1486 screed by the German witch-hunter Heinrich Kramer, became the best-selling witch-hunter’s handbook, going through 28 editions by 1600. Similarly, it was the books and pamphlets “exposing” the Illuminati that allowed those ideas to spread everywhere following the French Revolution. And in the early 20th century, the introduction of the radio facilitated fascist propaganda. During the 1930s, the Nazi-sympathizing Catholic priest and radio host Charles Coughlin broadcast his antisemitic conspiracy theories to tens of millions of Americans on dozens of stations.

The internet has, of course, vastly accelerated and magnified the spread of conspiracy theories. It is hard to recall now, but in the early days it was sweetly assumed that the internet would improve the world by democratizing access to information. While this initial idealism survives in doughty enclaves such as Wikipedia, most of us vastly underestimated the human appetite for false information that confirms the consumer’s biases.

Politicians, too, were slow to recognize the corrosive power of free-flowing conspiracy theories. For a long time, the more fantastical assertions of McCarthy and the Birchers were kept at arm’s length from the political mainstream, but that distance began to diminish rapidly during the 1990s, as right-wing activists built a cottage industry of outrageous claims about Bill and Hillary Clinton to advance the idea that they were not just corrupt or dishonest but actively evil and even satanic. This became an article of faith in the information ecosystem of internet message boards and talk radio, which expanded over time to include Fox News, blogs, and social media. So when Democrats nominated Hillary Clinton in 2016, a significant portion of the American public saw a monster at the heart of an organized crime ring whose activities included human trafficking and murder.

Nobody could make the same mistake about misinformation today. One could hardly design a more fertile breeding ground for conspiracy theories than social media. The algorithms of YouTube, Facebook, TikTok, and X, which operate on the principle that rage is engaging, have turned into radicalization machines. When these platforms took off during the second half of the 2010s, they offered a seamless system in which people were able to come across exciting new information, share it, connect it to other strands of misinformation, and weave them into self-contained, self-affirming communities, all without leaving the house.

It’s not hard to see how the problem will continue to grow as AI burrows ever deeper into our everyday lives. Elon Musk has tinkered with the AI chatbot Grok to produce information that conforms to his personal beliefs rather than to actual facts. This outcome does not even have to be intentional. Chatbots have been shown to validate and intensify some users’ beliefs, even if they’re rooted in paranoia or hubris. If you believe that you’re the hero in an epic battle between good and evil, then your chatbot is inclined to agree with you.

It’s all this digital noise that has brought about the virtual collapse of the event conspiracy theory. The industry produced by the JFK assassination may have been pseudo-scholarship, but at least researchers went through the motions of scrutinizing documents, gathering evidence, and putting forward a somewhat consistent hypothesis. However misguided the conclusions, that kind of conspiracy theory required hard work and commitment.

Today’s online conspiracy theorists, by contrast, are shamelessly sloppy. Events such as the attack on Paul Pelosi, husband of former US House Speaker Nancy Pelosi, in October 2022, or the murders of Minnesota House speaker Melissa Hortman and her husband Mark in June 2025, or even more recently the killing of Charlie Kirk, have inspired theories overnight, which then evaporate just as quickly. The point of such theories, if they even merit that label, is not to seek the truth but to defame political opponents and turn victims into villains.

Before he even ran for office, Trump was notorious for promoting false stories about Barack Obama’s birthplace or vaccine safety. Heir to Joseph McCarthy, Barry Goldwater, and the John Birch Society, he is the lurid incarnation of the paranoid style. He routinely damns his opponents as “evil” or “very bad people” and speaks of America’s future in apocalyptic terms. It is no surprise, then, that every member of the administration must subscribe to Trump’s false claim that the 2020 election was stolen from him, or that celebrity conspiracy theorists are now in charge of national intelligence, public health, and the FBI. Former Democrats who hold such roles, like Tulsi Gabbard and Robert F. Kennedy Jr., have entered Trump’s orbit through the gateway of conspiracy theories. They illustrate how this mindset can create counterintuitive alliances that collapse conventional political distinctions and scramble traditional notions of right and left.

The antidemocratic implications of what’s happening today are obvious. “Since what is at stake is always a conflict between absolute good and absolute evil, the quality needed is not a willingness to compromise but the will to fight things out to the finish,” Hofstadter wrote. “Nothing but complete victory will do.”

Meeting the moment

It’s easy to feel helpless in the face of this epistemic chaos, because one other foundational feature of religious prophecy is that it can be disproved without being discredited: Perhaps the world does not come to an end on the predicted day, but that great day will still come. The prophet is never wrong—he is just not proven right yet.

The same flexibility is enjoyed by systemic conspiracy theories. The plotters never actually succeed, nor are they ever decisively exposed, yet the theory remains intact. Recently, claims that covid-19 was either exaggerated or wholly fabricated in order to crush civil liberties did not wither away once lockdown restrictions were lifted. Surely the so-called “plandemic” was a complete disaster? No matter. This type of conspiracy theory does not have to make sense.

Scholars who have attempted to methodically repudiate conspiracy theories about the 9/11 attacks or the JFK assassination have found that even once all the supporting pillars have been knocked away, the edifice still stands. It is increasingly clear that “conspiracy theory” is a misnomer and what we are really dealing with is conspiracy belief—as Hofstadter suggested, a worldview buttressed with numerous cognitive biases and impregnable to refutation. As Goebbels implied, the “factual truth” pales in comparison to the “inner truth,” which is whatever somebody believes it to be.

But at the very least, what we can do is identify the entirely different realities constructed by believers and recognize and internalize their common roots, tropes, and motives.

Those different realities, after all, have proved remarkably consistent in shape if not in their details. What we saw then, we see now. The Illuminati were Enlightenment idealists whose liberal agenda to “dispel the clouds of superstition and of prejudice,” in Weishaupt’s words, was demonized as wicked and destructive. If they could be shown to have fomented the French Revolution, then the whole revolution was a sham. Similarly, today’s radical right recasts every plank of progressive politics as an anti-American conspiracy. The far-right Great Replacement Theory, for instance, posits that immigration policy is a calculated effort by elites to supplant the native population with outsiders. This all flows directly from what thinkers such as Hofstadter, Popper, and Arendt diagnosed more than 60 years ago.

What is dangerously novel, at least in democracies, is conspiracy theories’ ubiquity, reach, and power to affect the lives of ordinary citizens. So understanding the paranoid style better equips us to counteract it in our daily existence. At minimum, this knowledge empowers us to spot the flaws and biases in our own thinking and stop ourselves from tumbling down dangerous rabbit holes.

On November 18, 1961, President Kennedy—almost exactly two years before Hofstadter’s lecture and his own assassination—offered his own definition of the paranoid style in a speech to the Democratic Party of California. “There have always been those on the fringes of our society who have sought to escape their own responsibility by finding a simple solution, an appealing slogan, or a convenient scapegoat,” he said. “At times these fanatics have achieved a temporary success among those who lack the will or the wisdom to face unpleasant facts or unsolved problems. But in time the basic good sense and stability of the great American consensus has always prevailed.”

We can only hope that the consensus begins to see the rolling chaos and naked aggression of Trump’s two administrations as weighty evidence against the conspiracy theory of society. The notion that any group could successfully direct the larger mess of this moment in the world, let alone the course of history for decades, undetected, is palpably absurd. The important thing is not that the details of this or that conspiracy theory are wrong; it is that the entire premise behind this worldview is false.

Not everything is connected, not everything is premeditated, and many things are in fact just as they seem.

Dorian Lynskey is the author of several books, including The Ministry of Truth: The Biography of George Orwell’s 1984 and Everything Must Go: The Stories We Tell About the End of the World. He cohosts the podcast Origin Story and co-writes the Origin Story books with Ian Dunt.