The State of AI: How war will be changed forever

Welcome back to The State of AI, a new collaboration between the Financial Times and MIT Technology Review. Every Monday, writers from both publications debate one aspect of the generative AI revolution reshaping global power.

In this conversation, Helen Warrell, FT investigations reporter and former defense and security editor, and James O’Donnell, MIT Technology Review’s senior AI reporter, consider the ethical quandaries and financial incentives around AI’s use by the military.

Helen Warrell, FT investigations reporter

It is July 2027, and China is on the brink of invading Taiwan. Autonomous drones with AI targeting capabilities are primed to overpower the island’s air defenses as a series of crippling AI-generated cyberattacks cut off energy supplies and key communications. In the meantime, a vast disinformation campaign enacted by an AI-powered pro-Chinese meme farm spreads across global social media, deadening the outcry at Beijing’s act of aggression.

Scenarios such as this have brought dystopian horror to the debate about the use of AI in warfare. Military commanders hope for a digitally enhanced force that is faster and more accurate than human-directed combat. But there are fears that as AI assumes an increasingly central role, these same commanders will lose control of a conflict that escalates too quickly and lacks ethical or legal oversight. Henry Kissinger, the former US secretary of state, spent his final years warning about the coming catastrophe of AI-driven warfare.

Grasping and mitigating these risks is the military priority—some would say the “Oppenheimer moment”—of our age. One emerging consensus in the West is that decisions around the deployment of nuclear weapons should not be outsourced to AI. UN secretary-general António Guterres has gone further, calling for an outright ban on fully autonomous lethal weapons systems. It is essential that regulation keep pace with evolving technology. But in the sci-fi-fueled excitement, it is easy to lose track of what is actually possible. As researchers at Harvard’s Belfer Center point out, AI optimists often underestimate the challenges of fielding fully autonomous weapon systems. It is entirely possible that the capabilities of AI in combat are being overhyped.

Anthony King, Director of the Strategy and Security Institute at the University of Exeter and a key proponent of this argument, suggests that rather than replacing humans, AI will be used to improve military insight. Even if the character of war is changing and remote technology is refining weapon systems, he insists, “the complete automation of war itself is simply an illusion.”

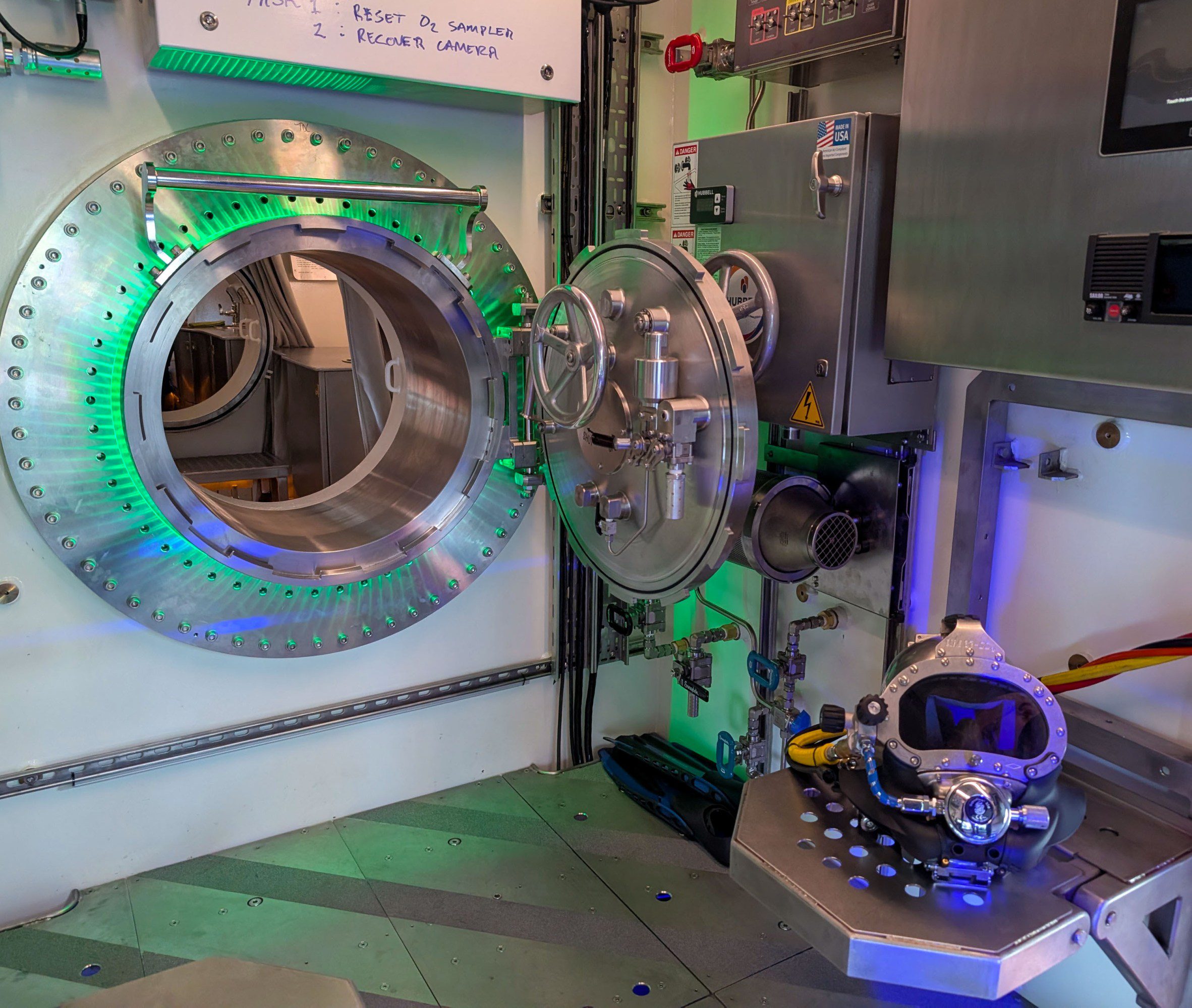

Of the three current military use cases of AI, none involves full autonomy. It is being developed for planning and logistics; for cyber warfare (in sabotage, espionage, hacking, and information operations); and, most controversially, for weapons targeting, an application already in use on the battlefields of Ukraine and Gaza. Kyiv’s troops use AI software to direct drones able to evade Russian jammers as they close in on sensitive sites. The Israel Defense Forces have developed an AI-assisted decision support system known as Lavender, which has helped identify around 37,000 potential human targets within Gaza.

There is clearly a danger that the Lavender database replicates the biases of the data it is trained on. But military personnel carry biases too. One Israeli intelligence officer who used Lavender claimed to have more faith in the fairness of a “statistical mechanism” than that of a grieving soldier.

Tech optimists designing AI weapons even deny that specific new controls are needed to control their capabilities. Keith Dear, a former UK military officer who now runs the strategic forecasting company Cassi AI, says existing laws are more than sufficient: “You make sure there’s nothing in the training data that might cause the system to go rogue … when you are confident you deploy it—and you, the human commander, are responsible for anything they might do that goes wrong.”

It is an intriguing thought that some of the fear and shock about use of AI in war may come from those who are unfamiliar with brutal but realistic military norms. What do you think, James? Is some opposition to AI in warfare less about the use of autonomous systems and really an argument against war itself?

James O’Donnell replies:

Hi Helen,

One thing I’ve noticed is that there’s been a drastic shift in the attitudes of AI companies toward military applications of their products. At the beginning of 2024, OpenAI unambiguously forbade the use of its tools for warfare, but by the end of the year, it had signed an agreement with Anduril to help it take down drones on the battlefield.

This step—not a fully autonomous weapon, to be sure, but very much a battlefield application of AI—marked a drastic change in how much tech companies could publicly link themselves with defense.

What happened along the way? For one thing, it’s the hype. We’re told AI will not just bring superintelligence and scientific discovery but also make warfare sharper, more accurate and calculated, less prone to human fallibility. I spoke with US Marines, for example, who, while patrolling the South Pacific, tested a type of AI that was advertised as able to analyze foreign intelligence faster than a human could.

Secondly, money talks. OpenAI and others need to start recouping some of the unimaginable amounts of cash they’re spending on training and running these models. Few have deeper pockets than the Pentagon, and Europe’s defense heads seem keen to splash the cash too. Meanwhile, the amount of venture capital funding for defense tech this year has already doubled the total for all of 2024, as VCs hope to cash in on militaries’ newfound willingness to buy from startups.

I do think the opposition to AI warfare falls into a few camps, one of which simply rejects the idea that more precise targeting (if it’s actually more precise at all) will mean fewer casualties rather than just more war. Consider the first era of drone warfare in Afghanistan. As drone strikes became cheaper to carry out, can we really say they reduced carnage? Or did they merely enable more destruction per dollar?

But the second camp of criticism (and now I’m finally getting to your question) comes from people who are well versed in the realities of war but have very specific complaints about the technology’s fundamental limitations. Missy Cummings, for example, is a former fighter pilot for the US Navy who is now a professor of engineering and computer science at George Mason University. She has been outspoken in her belief that large language models, specifically, are prone to making huge mistakes in military settings.

The typical response to this complaint is that AI’s outputs are human-checked. But if an AI model relies on thousands of inputs for its conclusion, can that conclusion really be checked by one person?

Tech companies are making extraordinarily big promises about what AI can do in these high-stakes applications, all while pressure to implement them is sky high. For me, this means it’s time for more skepticism, not less.

Helen responds:

Hi James,

We should definitely continue to question the safety of AI warfare systems and the oversight to which they’re subjected—and hold political leaders to account in this area. I am suggesting that we also apply some skepticism to what you rightly describe as the “extraordinarily big promises” made by some companies about what AI might be able to achieve on the battlefield.

There will be both opportunities and hazards in what the military is being offered by a relatively nascent (though booming) defense tech scene. The danger is that in the speed and secrecy of an arms race in AI weapons, these emerging capabilities may not receive the scrutiny and debate they desperately need.

Further reading:

Michael C. Horowitz, director of Perry World House at the University of Pennsylvania, explains the need for responsibility in the development of military AI systems in this FT op-ed.

The FT’s tech podcast asks what Israel’s defense tech ecosystem can tell us about the future of warfare.

This MIT Technology Review story analyzes how OpenAI completed its pivot to allowing its technology on the battlefield.

MIT Technology Review also uncovered how US soldiers are using generative AI to help scour thousands of pieces of open-source intelligence.