OpenAI Search Crawler Passes 55% Coverage In Hostinger Study via @sejournal, @MattGSouthern

Hostinger analyzed 66 billion bot requests across more than 5 million websites and found that AI crawlers are following two different paths.

LLM training bots are losing access to the web as more sites block them. Meanwhile, AI assistant bots that power search tools like ChatGPT are expanding their reach.

The analysis draws on anonymized server logs from three 6-day windows, with bots categorized according to the AI.txt project’s classifications.

Training Bots Are Getting Blocked

The starkest finding involves OpenAI’s GPTBot, which collects data for model training. Its website coverage dropped from 84% to 12% over the study period.

Meta’s ExternalAgent was the largest training-category crawler by request volume in Hostinger’s data. Hostinger says this training-bot group shows the strongest declines overall, driven in part by sites blocking AI training crawlers.

These numbers align with patterns I’ve tracked through multiple studies. BuzzStream found that 79% of top news publishers now block at least one training bot. Cloudflare’s Year in Review showed GPTBot, ClaudeBot, and CCBot had the highest number of full disallow directives across top domains.

Hostinger’s data quantifies what those studies suggested. Hostinger interprets the drop in training-bot coverage as a sign that more sites are blocking those crawlers, even when request volumes remain high.

Assistant Bots Tell a Different Story

While training bots face resistance, the bots that power AI search tools are expanding access.

OpenAI’s OAI-SearchBot, which fetches content for ChatGPT’s search feature, reached 55.67% average coverage. TikTok’s bot grew to 25.67% coverage with 1.4 billion requests. Apple’s bot reached 24.33% coverage.

These assistant crawls are user-triggered and more targeted. They serve users directly rather than collecting training data, which may explain why sites treat them differently.

Classic Search Remains Stable

Traditional search engine crawlers held steady throughout the study. Googlebot maintained 72% average coverage with 14.7 billion requests. Bingbot stayed at 57.67% coverage.

The stability contrasts with changes in the AI category. Google’s main crawler is in a unique position, since blocking it affects search visibility.

SEO Tools Show Decline

SEO and marketing crawlers saw declining coverage. Ahrefs maintained the largest footprint at 60% coverage, but the category overall shrank. Hostinger attributes this to two factors: these tools increasingly focus on sites actively doing SEO work, and website owners are blocking resource-intensive crawlers.

I reported on the resource concerns when Vercel data showed GPTBot generating 569 million requests in a single month. For some publishers, the bandwidth costs became a business problem.

Why This Matters

The data confirms a pattern that’s been building over the past year. Site operators are drawing a line between AI crawlers they’ll allow and those they won’t.

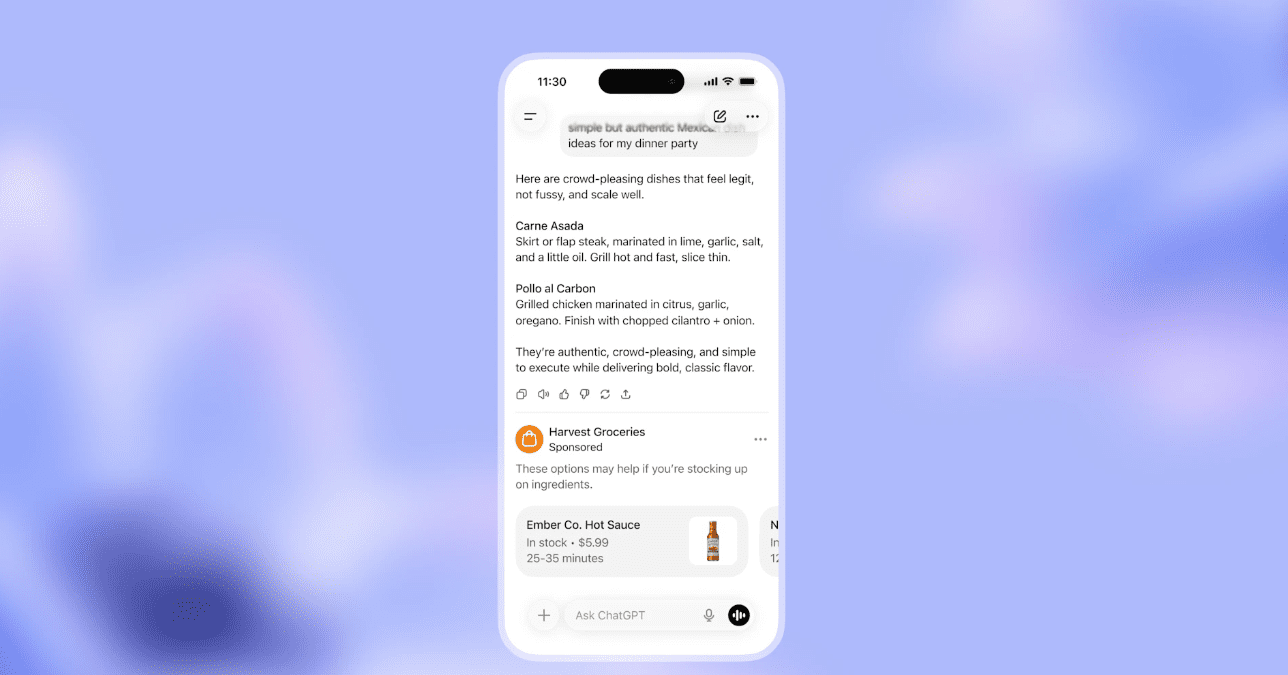

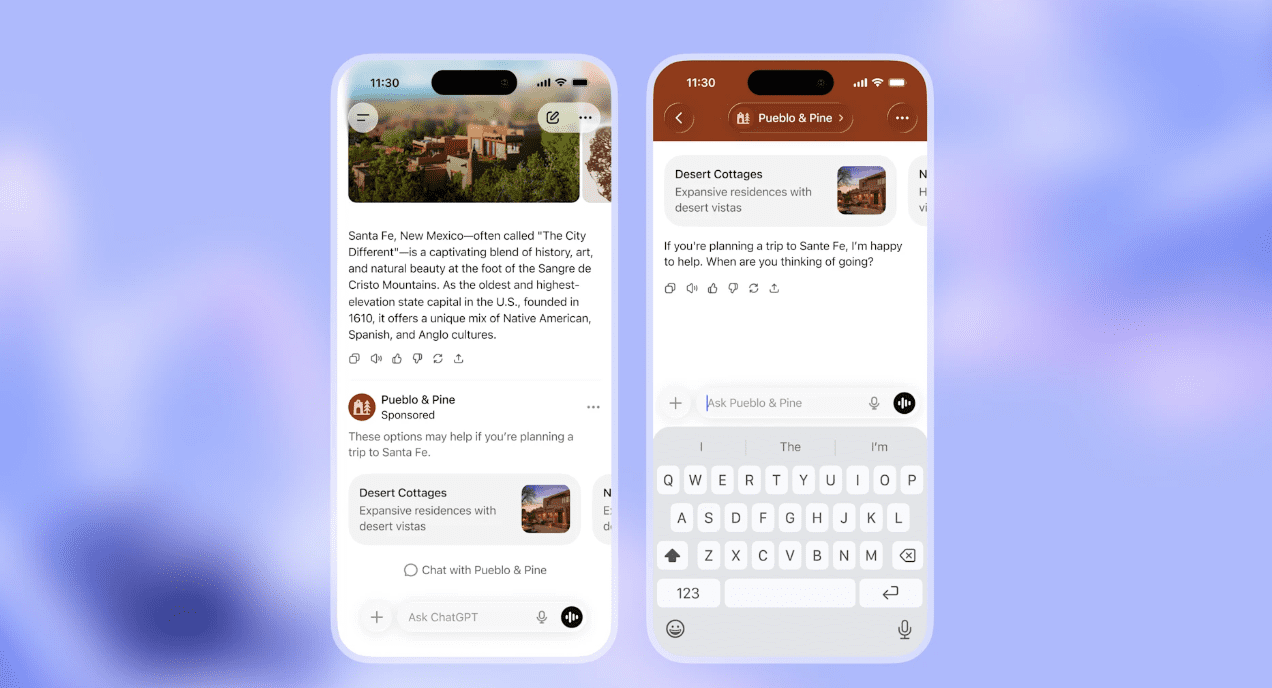

The decision comes down to function. Training bots collect content to improve models without sending traffic back. Assistant bots fetch content to answer specific user questions, which means they can surface your content in AI search results.

Hostinger suggests a middle path: block training bots while allowing assistant bots that drive discovery. This lets you participate in AI search without contributing to model training.
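
As a rough illustration of that middle path, the sketch below checks a hypothetical robots.txt policy with Python’s standard-library parser. The policy text, the choice of GPTBot and CCBot as the blocked training bots, and the example.com URL are assumptions for illustration, not rules taken from Hostinger or OpenAI. Confirm current user agent tokens against each vendor’s documentation before relying on anything like this.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: block two training crawlers, allow everything else,
# including OpenAI's search crawler. The bot selection is an assumption.
ROBOTS_TXT = """\
User-agent: GPTBot
User-agent: CCBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: *
Allow: /
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text in memory, without fetching anything."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
TEST_URL = "https://example.com/article"  # placeholder URL

for agent in ("GPTBot", "CCBot", "OAI-SearchBot", "Googlebot"):
    verdict = "allowed" if parser.can_fetch(agent, TEST_URL) else "blocked"
    print(f"{agent}: {verdict}")
```

Under this policy the parser reports GPTBot and CCBot as blocked and the other two as allowed. Keep in mind that robots.txt is a request that well-behaved crawlers honor, not an enforcement mechanism.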

Looking Ahead

OpenAI recommends allowing OAI-SearchBot if you want your site to appear in ChatGPT search results, even if you block GPTBot.

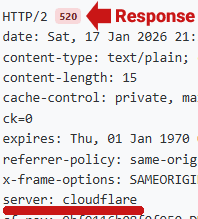

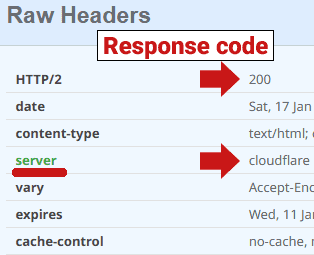

OpenAI’s documentation clarifies the difference. OAI-SearchBot controls inclusion in ChatGPT search results and respects robots.txt. ChatGPT-User handles user-initiated browsing and may not be governed by robots.txt in the same way.

Hostinger recommends checking server logs to see what’s actually hitting your site, then making blocking decisions based on your goals. If you’re concerned about server load, you can use CDN-level blocking. If you want to potentially increase your AI visibility, review current AI crawler user agents and allow only the specific bots that support your strategy.
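
One way to approach the log check is sketched below. It assumes access logs in the common "combined" format, where the user agent is the last quoted field. The token list, the function name, and the sample lines are made up for illustration and are not real traffic or exact vendor user agent strings.

```python
import re
from collections import Counter

# Substrings to look for in the user agent field. This list is an
# assumption; extend it with the crawlers you care about.
AI_CRAWLER_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "CCBot")

# In the combined log format, the user agent is the final quoted field.
UA_PATTERN = re.compile(r'"([^"]*)"\s*$')


def count_ai_crawler_hits(log_lines):
    """Count requests per known AI crawler token across access log lines."""
    counts = Counter()
    for line in log_lines:
        match = UA_PATTERN.search(line)
        if not match:
            continue  # skip lines that do not end in a quoted field
        user_agent = match.group(1).lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in user_agent:
                counts[token] += 1
                break  # attribute each request to one crawler only
    return counts


# Fabricated sample lines, for illustration only.
SAMPLE_LOG = [
    '203.0.113.5 - - [01/Jan/2025:00:00:01 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [01/Jan/2025:00:00:02 +0000] "GET /a HTTP/1.1" 200 128 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
    '203.0.113.5 - - [01/Jan/2025:00:00:03 +0000] "GET /b HTTP/1.1" 200 256 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.9 - - [01/Jan/2025:00:00:04 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

hits = count_ai_crawler_hits(SAMPLE_LOG)
for token, total in hits.most_common():
    print(f"{token}: {total}")
```

To run it against a real file, pass an open file handle instead of the sample list, for example `count_ai_crawler_hits(open("access.log"))`, where the path is a placeholder. User agent strings can be spoofed, so treat these counts as a first pass rather than proof of which crawler sent a request.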

Featured Image: BestForBest/Shutterstock