ChatGPT To Begin Testing Ads In The United States via @sejournal, @brookeosmundson

Just today, OpenAI confirmed it will begin testing advertising in the United States for ChatGPT Free and ChatGPT Go users in the coming weeks, marking the first time ads will appear inside the ChatGPT experience.

The test coincides with the U.S. launch of ChatGPT Go, a low-cost subscription tier priced at $8 per month that has been available internationally since August.

The details reveal a cautious approach, with clear limits on where ads can appear, who will see them, and how they will be separated from ChatGPT’s responses.

Here’s what OpenAI shared, how the tests will work, and why this shift matters for users and advertisers alike.

What OpenAI Is Testing

ChatGPT ads are not being introduced as part of a broader redesign or monetization overhaul. Instead, OpenAI is framing this as a limited test, with narrow placement rules and clear separation from ChatGPT’s core function.

Ads will appear at the bottom of a response, and only when there is a relevant sponsored product or service tied to the active conversation. They will be clearly labeled, visually distinct from organic answers, and dismissible.

Users will also be able to see why a particular ad is being shown and turn off ad personalization entirely if they choose.

Just as important is where ads will not appear.

OpenAI stated that ads will not be shown to users under 18 and will not be eligible to run near sensitive or regulated topics, including health, mental health, and politics. Conversations will not be shared with advertisers, and user data will not be sold.

Timing Ad Testing with the Go Tier Launch

The timing of the announcement doesn’t seem accidental.

Alongside the ad testing plans, OpenAI confirmed that ChatGPT Go is now available in the United States.

Priced at $8 per month, Go sits between the free tier and higher-cost subscriptions, offering expanded access to messaging, image generation, file uploads, and memory.

Ads are positioned as a way to support both Free and Go users, allowing more people to use ChatGPT with fewer restrictions without being forced to upgrade.

At the same time, OpenAI made it clear that Pro, Business, and Enterprise subscriptions will remain ad-free, reinforcing that paid tiers are still the preferred path for users who want an uninterrupted experience.

Explaining the Guardrails of Early Ad Testing

OpenAI spent as much time explaining what ads will not do as what they will.

The company was explicit that advertising will not influence ChatGPT’s responses. According to OpenAI, answers are optimized for usefulness, not commercial outcomes, and there is no intent to optimize for time spent, engagement loops, or other metrics commonly associated with ad-driven platforms.

This is a notable departure from how advertising has historically been introduced elsewhere on the internet. Rather than retrofitting ads into an existing product and adjusting incentives later, OpenAI is attempting to define the rules up front.

Whether those rules hold over time is an open question. But the clarity of the initial framework suggests OpenAI understands the risk of getting this wrong.

What Early Ad Formats Tell Us

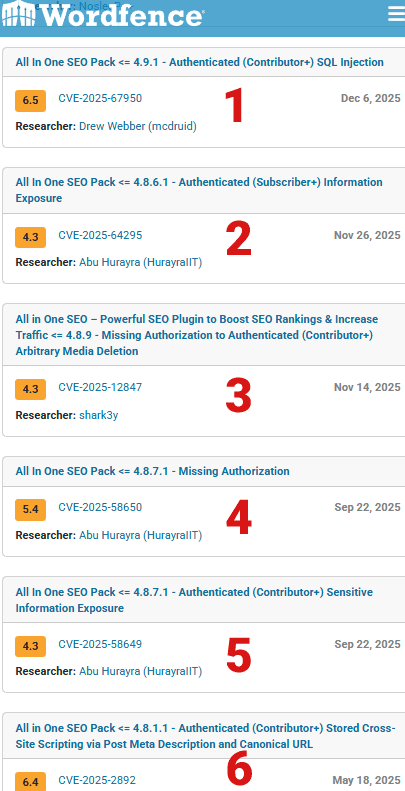

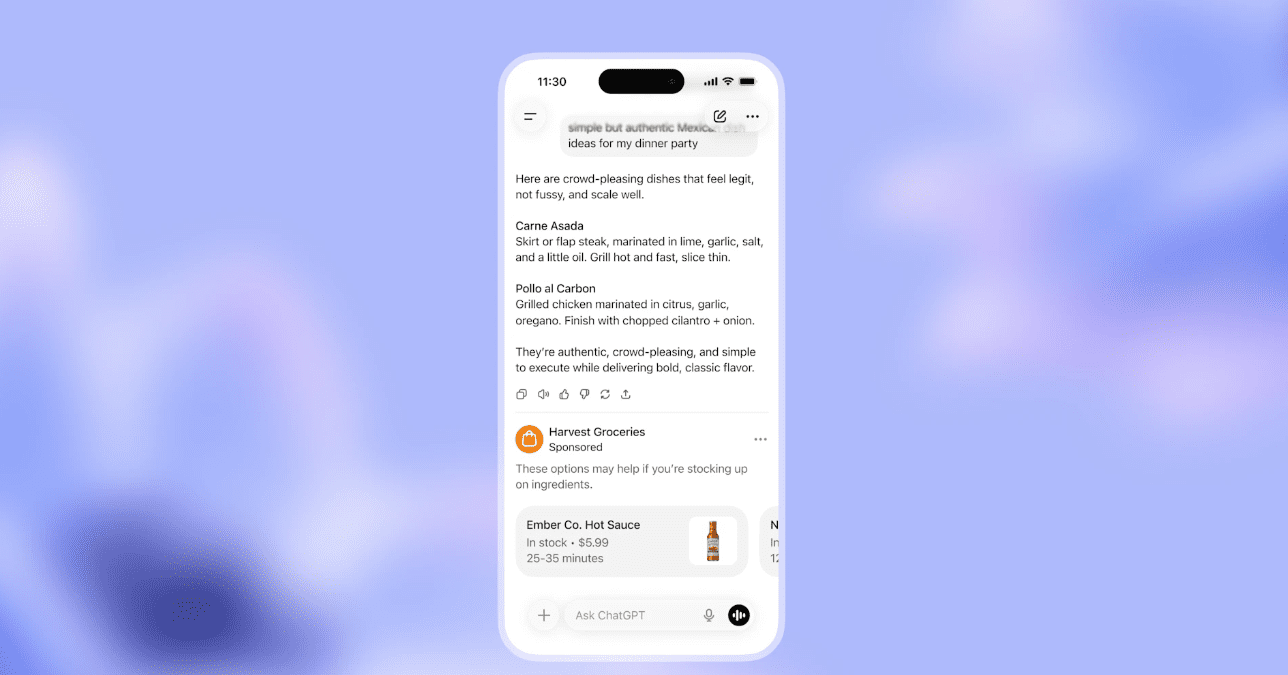

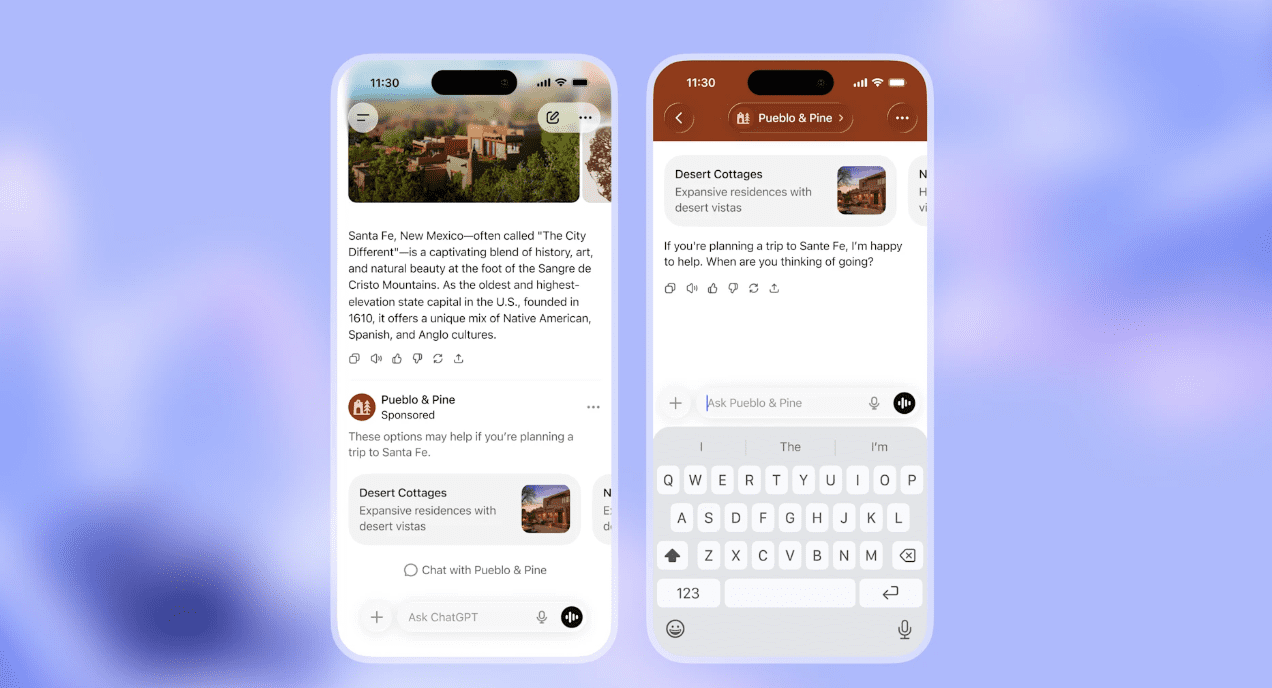

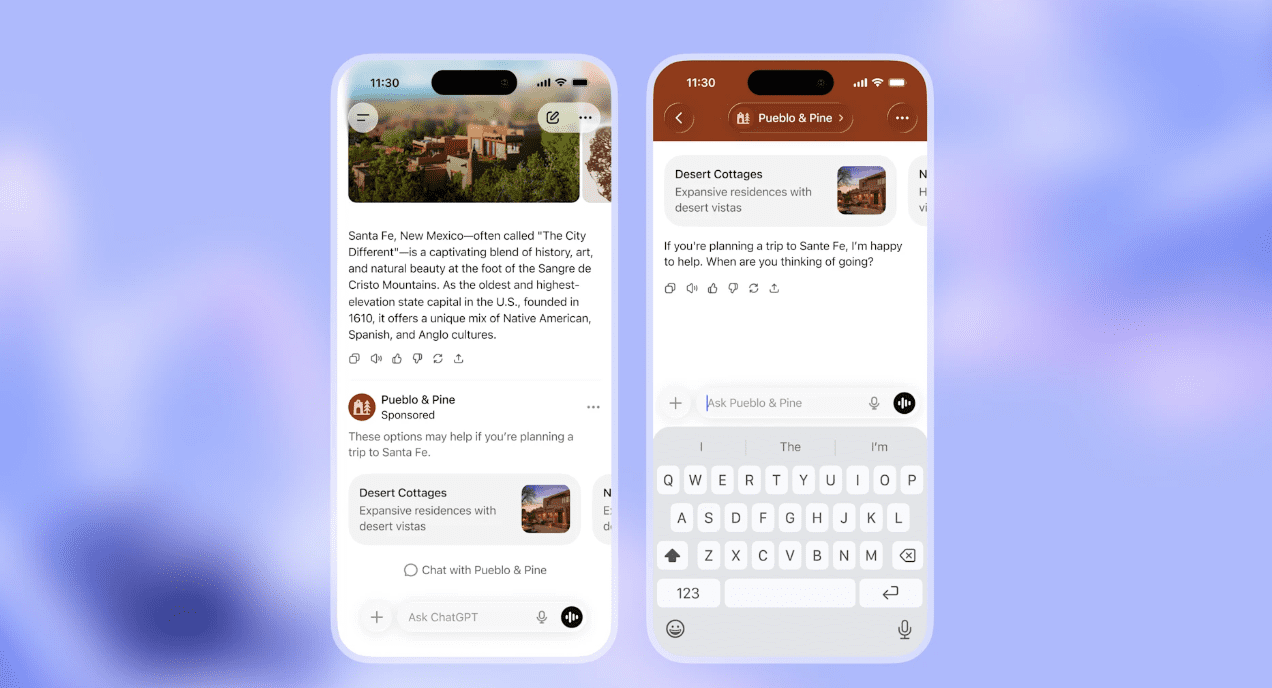

OpenAI shared two examples of the ad formats it plans to test inside ChatGPT.

In the first example, a ChatGPT response provides recipe ideas for a Mexican dinner party. Below the response, a sponsored product recommendation appears for a grocery item. The ad is clearly labeled and visually separated from the organic answer.

In the second example, ChatGPT responds to a conversation about traveling to Santa Fe, New Mexico. A sponsored lodging listing appears below the response, labeled as sponsored. The example also shows a follow-up chat screen, indicating that users can continue interacting with ChatGPT after seeing the ad.

In both examples, the ads appear at the bottom of ChatGPT’s responses and are presented as separate from the main answer. OpenAI stated that these formats are part of its initial ad testing and may change as testing progresses.

Why This Matters for Advertisers

This is not something advertisers can plan for just yet.

There are no announced buying models, no targeting details, no measurement framework, and no indication of when access might expand beyond testing. OpenAI has been clear that this is not an open marketplace at the moment.

Still, the implications are hard to ignore. Ads placed alongside high-intent, problem-solving conversations could eventually represent a different kind of discovery environment, one where usefulness matters more than volume and where poor creative or loose targeting would feel immediately out of place.

If this becomes a real channel, it is unlikely to reward the same tactics that work in search or social today.

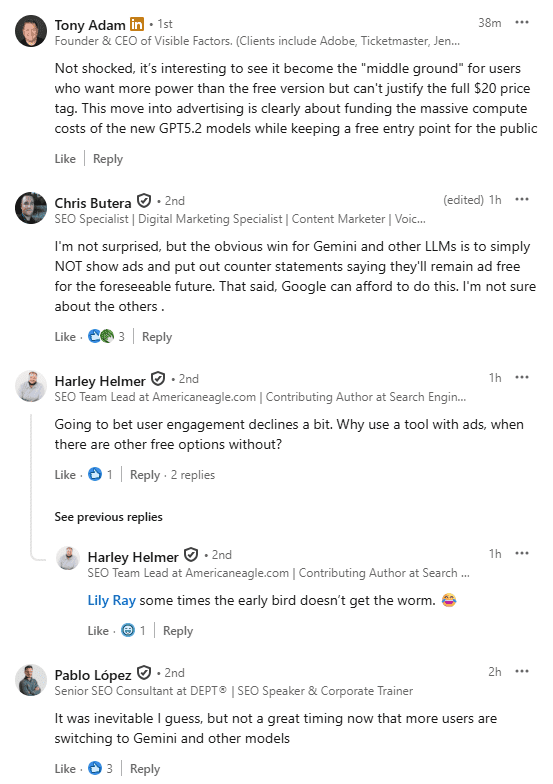

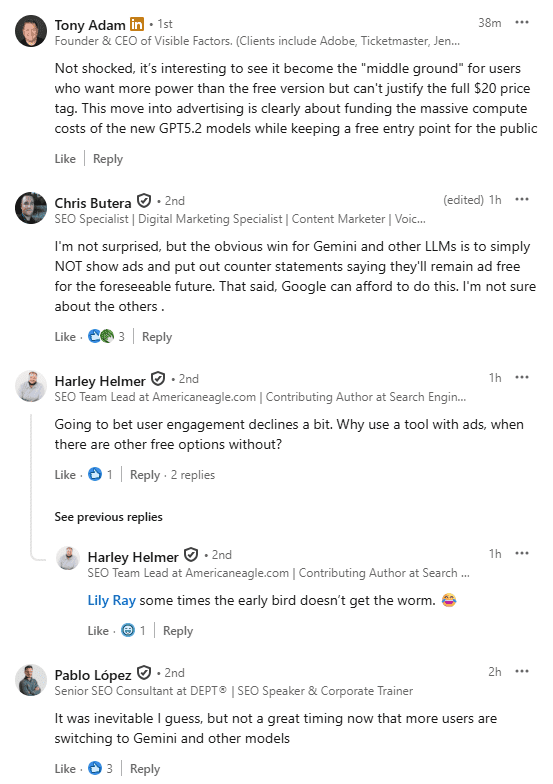

How Marketers Are Reacting So Far

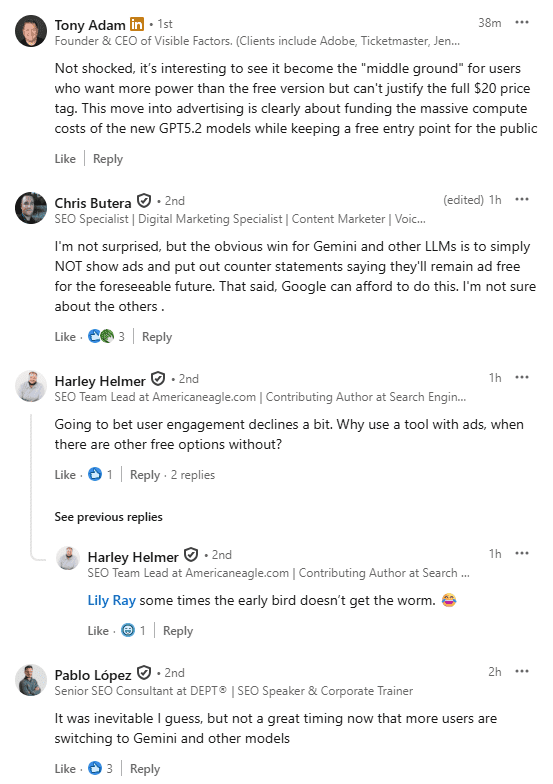

Early industry reaction has been measured, not alarmist.

Most commentary acknowledges that advertising inside ChatGPT was inevitable at this scale.

Lily Ray said she is curious to “see how this change impacts user experience.”

Most people in the comments of her post were not shocked by the news.

There is also skepticism, particularly around whether relevance can be maintained over time without pressure to expand inventory. That skepticism is warranted. History suggests that once ads work, the temptation to scale them follows.

For now, though, this feels less like an ad platform launch and more like OpenAI testing whether ads can exist inside a conversational interface without changing how people trust the product.

The Bigger Signal for AI Platforms

For users, OpenAI is expanding access while trying to preserve the trust that has made ChatGPT widely used. Introducing ads without blurring the line between answers and monetization sets a high bar, especially for a product people rely on for personal and professional tasks.

Outside of ChatGPT itself, this update shows how AI-first products may think about revenue differently than search or social networks. Ads are positioned as a way to support access, not as the product, with paid tiers remaining central.

OpenAI says it will adjust how ads appear based on user feedback once testing begins in the U.S.

For now, this is a limited test rather than a full advertising launch. Whether its boundaries hold will matter, not just for ChatGPT but for how monetization inside conversational interfaces is expected to work.