Google Ads Now Being Mixed In With Organic Results via @sejournal, @brodieseo

Google has an incentive to encourage users to click its sponsored ads – but this should not be to the detriment of user experience.

This aspect of Search seems to have gone awry in recent years, with Google engaging in activities that negatively impacted users.

Historically, search engine users are accustomed to ads either being placed at the top or the bottom of a SERP, with the page itself either being purely organic results or having the organic results placed in between the ads. Search features are often mixed in, too.

This has now changed.

A change was recently added to Google’s documentation, stating that:

“Top ads may show below the top organic results on certain queries.”

The documentation goes on to detail how placement for top ads is dynamic and may change.

In this article, we explore this change and its impact on users and organic search results.

Timeline Of Changes

Leading up to the change, Google had been testing mixing sponsored ads within organic listings in various capacities over a 10-month period.

Here is a timeline of the changes leading up to the official launch.

June 17th, 2023: Initial Testing

This was the first time the test appeared in Google’s search results, showing only on mobile devices at the time. During this initial testing period, it appeared for very few users, with a more discreet inclusion that users could easily mistake for an organic listing.

October 23rd, 2023: Heavier Testing

This testing period was the first time the broader SEO community started to notice ad labels appearing within organic listings, with the ads visible across both mobile and desktop.

This testing period was more prolonged in the lead-up to launch.

March 28th, 2024: Launch

On this date, Google’s Ads Liaison announced that the change would be a permanent one, with a new definition being added to the “top ads” documentation. From this date, users could expect ads to be mixed in with organic results beyond limited testing.

Different Types Of Placements

Now that Google has been mixing sponsored ads within organic results for almost two months, we’re able to gain a better understanding of the extent of the change and how the sponsored ads are appearing.

Based on my research, there are two common situations where Google is presenting ads within organic listings.

Mixed With Organic Results

The standard approach involves a simple ad placement within the top organic results.

Based on my experience, it is common for one or two ads to be placed together in this situation. It is rare to see the maximum of four ads in a row.

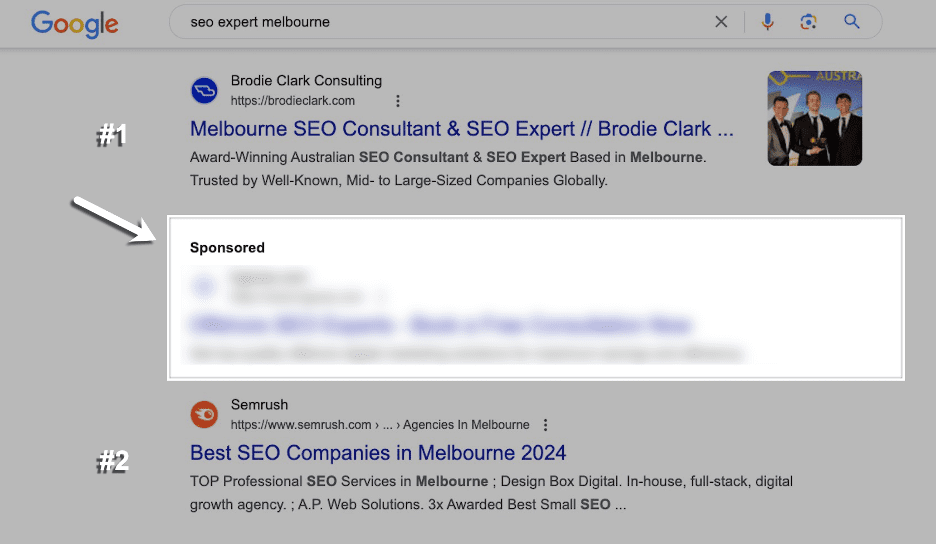

An example of this can be found below:

Screenshot from search for [seo expert melbourne], Google, May 2024

In this example, the sponsored ad technically appears in position #2 on the page. Normally, the ad would have appeared above my page, but in this instance, it is below.

For the Semrush page, visibility on the SERP would be the same if the ad had appeared above my page, but my page gains an advantage in terms of ranking visibility.

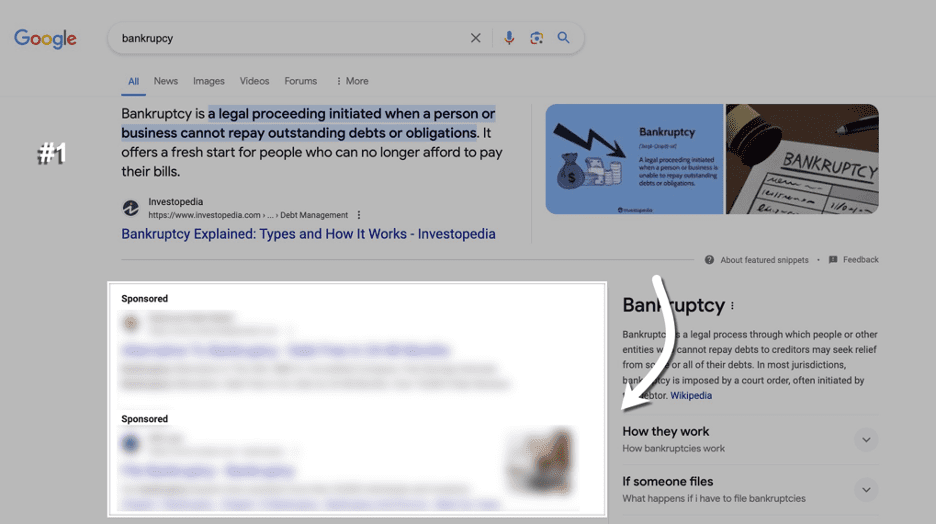

Directly Below Featured Snippets

What seems to be the most common way ads are mixed in with organic listings is by placing them directly below a featured snippet.

In cases like this, it is common for the full set of four ads to appear below the featured snippet. In this example, two ads are appearing.

In the past, ads would always be placed directly above the featured snippet, and they can still appear there now.

This could have been perceived as a poor user experience, considering featured snippets tend to show when an answer to a query can be explained with a short description from the page.
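The two placement types described above can be told apart programmatically once a SERP is reduced to an ordered list of result types. The following is a minimal sketch under stated assumptions: the labels `"ad"`, `"organic"`, and `"featured_snippet"`, the function name `classify_ad_placement`, and the returned category names are all hypothetical and do not come from Google or Semrush. It only looks at the first block of consecutive ads on the page.

```python
from typing import List


def classify_ad_placement(serp: List[str]) -> str:
    """Classify where the first block of ads sits on a SERP.

    `serp` is a hypothetical top-to-bottom list of result types,
    using the assumed labels "ad", "organic" and "featured_snippet".
    """
    if "ad" not in serp:
        return "no_ads"

    first_ad = serp.index("ad")

    # Find the end of the first block of consecutive ads.
    end = first_ad
    while end < len(serp) and serp[end] == "ad":
        end += 1

    if first_ad == 0:
        # Nothing ranks above the ads: the traditional top placement.
        return "ads_at_top"
    if end == len(serp):
        # Nothing follows the ad block: the traditional bottom placement.
        return "ads_at_bottom"
    if serp[first_ad - 1] == "featured_snippet":
        # The ad block starts directly under a featured snippet.
        return "ads_below_featured_snippet"
    # Otherwise the ads sit between other results.
    return "ads_mixed_with_organic"


if __name__ == "__main__":
    example = ["organic", "ad", "organic", "organic"]
    print(classify_ad_placement(example))
```

Running a classifier like this over a set of recorded SERPs would give a rough count of how often each placement occurs, though the result depends entirely on how the SERP data was collected and labeled.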

What Are Google’s Intentions?

Each of the situations explained in the previous section could be interpreted differently.

The first situation (mixed within organic results) is pretty clear about Google’s intentions: to encourage more clicks on ads and desensitize users to ads appearing at the top, with users mistaking ads for organic listings.

In contrast, the second situation with featured snippets could be perceived differently. While ads continue to appear in the viewport on desktop, the answer to the user’s query is prominently displayed at the top of search results without ads getting in the way.

I can’t see this being a bad thing for users or SEO, as Google is making the organic listing more visible across these instances.

In general, I’m aware of Google’s need to prioritize ad revenue with changes to ad placement. While there are certainly arguments to be made from both angles with this change, my perception is that the outcome is fairly neutral from both sides.

Ads mixed in with organic results are still exceptionally rare, but featured snippet placements are a more common use case, and there are some clear upsides to this.

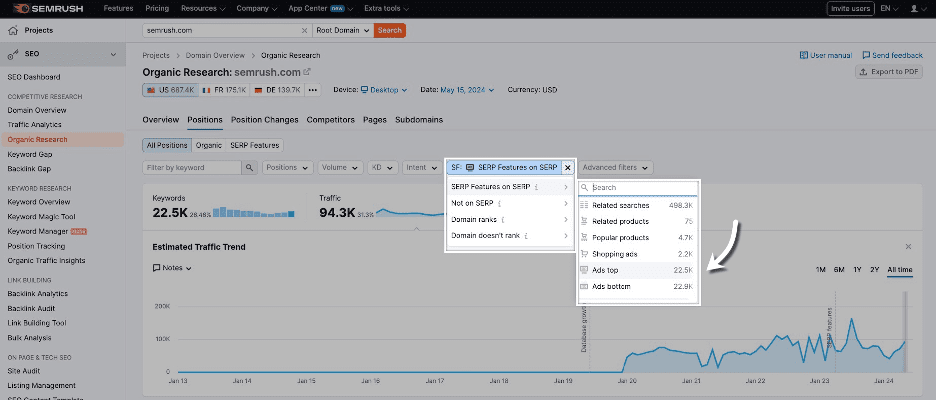

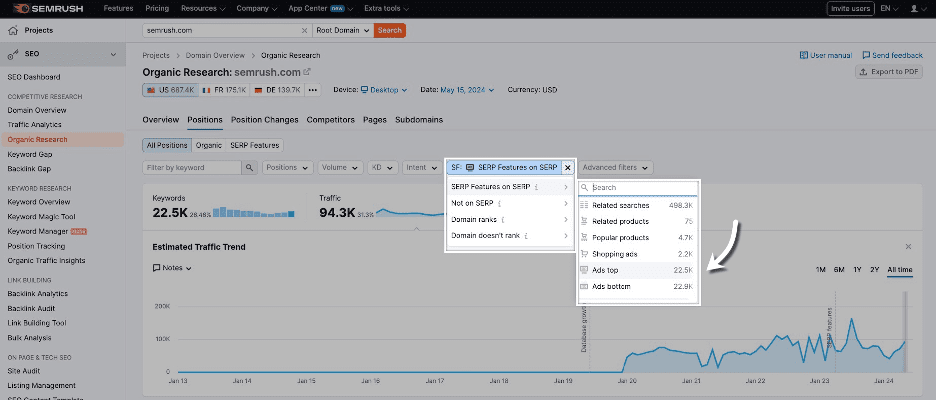

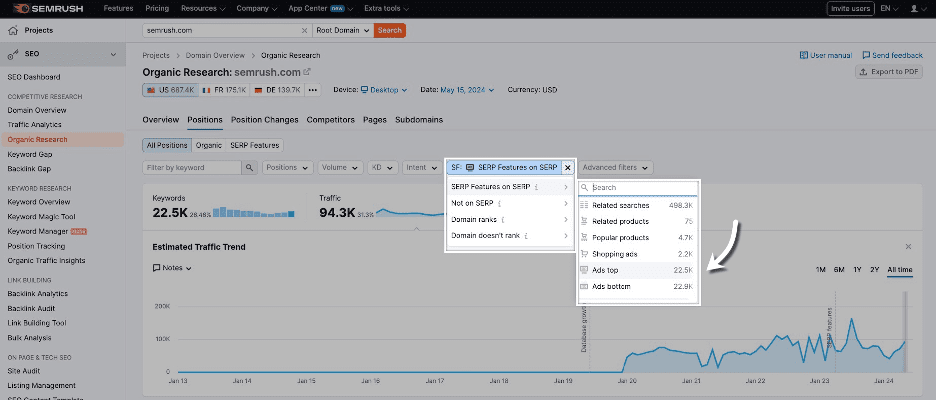

How To Analyze With Semrush

While Semrush does have an Advertising Research tool that shows you the position of your ads across various queries, I found that the data wasn’t being collected in a way that allows you to compare ad position relative to organic listings.

As an alternative, I found the best approach for analysis to be through using “Ads top” as a SERP feature filter through Organic Research to locate instances where ads were being mixed with organic listings.

Here’s where this filter is located:

This filter doesn’t allow you to filter by URLs for a specific domain; instead, it shows instances where “top ads” are a SERP feature across the Semrush index.

Using this method, I’m able to review historical top ad inclusions since the launch in March and conclude that ads being mixed in with organic results is still exceptionally rare.

Final Thoughts

Overall, based on how Google currently operates, I’m not particularly concerned about this ad placement change.

While the change is an official one based on the update to Google’s documentation, it still operates more like a test, with ads continuing to appear in their normal positions in the vast majority of instances.

Based on my research, I believe the change should be perceived as neutral for Google users and SEO. If you see ads being mixed with organic listings in the wild, keep your wits about you.

I’ll be keeping an eye on this change to make sure Google’s ad placements don’t get too carried away.

Google’s ad testing has more recently reverted to using the “ad” labeling instead of “sponsored” on mobile, which was the treatment in place until recent years.

We can certainly expect these types of tests to continue into the future, so there is never a boring day within our industry.

Featured Image: BestForBest/Shutterstock