APAC Search Strategy Goes Beyond Google & Baidu via @sejournal, @motokohunt

If you approach Asia-Pacific search strategy as simply an extension of your U.S. or European Google strategy, you will miss how discovery actually works across the region. Google is still dominant in many markets, but the landscape is far more fragmented than most global teams assume.

Japan is a clear example. Bing holds 31.63% of search share alongside Google’s 59.58%, which is enough to materially influence both SEO and paid performance.

South Korea tells a different story, but leads to the same conclusion. Google (46.81%) and Naver (43.96%) operate at near parity, making any Google-only strategy incomplete by design.

Even in Southeast Asia, where Google is often assumed to be universal, local engines still matter. In Vietnam, CocCoc holds a meaningful 5.34% share of the market, which is enough to influence visibility in some competitive categories.

These are not anomalies. They reflect a broader shift.

Information discovery is changing with AI-driven interfaces shortening the path from question to decision. Super-apps and platform ecosystems are also changing where that discovery happens. In many cases, users are no longer moving through the web step by step. They are interacting with systems that interpret, summarize, and guide decisions within a single experience. Put together, fragmentation and interface change are creating a very different competitive landscape.

The advantage in APAC is no longer about understanding a single algorithm or the top-ranking factors. It is about understanding how distribution works across multiple systems, each with its own logic, constraints, and opportunities. That shift requires a different mindset. The question is no longer "How do we rank?" but "Where do we need to exist?"

The Forces Reshaping Discovery In APAC

To understand how search is evolving in APAC, it helps to step back from individual search engines and look at broader behavior patterns. Across Asian markets, four patterns are consistently changing how discovery happens.

The First Is The Rise Of AI-Driven Answer Systems

Search used to require effort. Users entered a query, reviewed results, compared options, and formed their own conclusions. That process is being compressed. A question goes in, and a synthesized answer comes back, often with built-in recommendations.

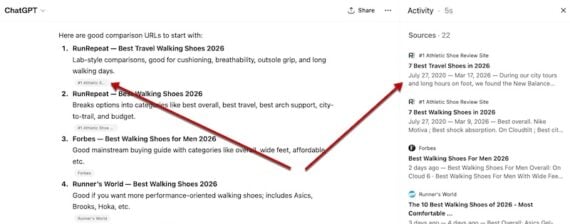

Visibility changes significantly in this new environment. Simply ranking in SERPs is no longer enough. Going forward, content needs to be structured so it can be selected, understood, and cited.

The Second Force Is The Role Of Super-Apps

In markets like South Korea and Japan, discovery is not limited to a browser. It happens inside messaging platforms, content ecosystems, and integrated services. KakaoTalk and LINE are not just communication tools. They are environments where users search, evaluate, and act.

In Japan, it is common to see TV commercials directing users to a LINE account rather than a standalone app or website. For many brands, LINE has become the primary interface for engagement, offering promotions, customer service, and loyalty programs in one place.

That shift matters because users are not always navigating to a site or downloading a brand app. They are interacting within platforms they already use daily. For brands, being present on the web is no longer enough if the decision is made elsewhere.

The Third Force Is Distribution Through Telcos

This is one of the least discussed but most impactful changes. When telecom providers bundle AI tools into their offerings, they accelerate adoption at a scale that traditional product growth cannot match.

In India, Bharti Airtel partnered with Perplexity to provide its Pro offering to roughly 360 million users.

Reliance Jio has taken a similar approach, distributing access to Google’s Gemini AI across more than 500 million users through bundled plans.

In South Korea, SK Telecom also partnered with Perplexity to bring AI-powered search directly into its ecosystem, positioning it alongside traditional engines rather than outside them.

In these cases, adoption is not driven by users seeking new tools. It’s happening because those tools are already there. Pre-installed, bundled, or built into services people use every day.

Instead of gradual growth, usage can scale almost overnight, significantly changing the adoption curve. And because these tools are positioned as assistants rather than search engines, they reshape how users interact with information without requiring them to consciously change behavior.

For search teams, this creates a different kind of competitive dynamic. It’s no longer just about ranking in search engines. The real competition is for inclusion in systems being rolled out to millions of users via existing platforms they are already comfortable using.

The Fourth Force Is The Evolution Of Portal-Based Search

In South Korea and Japan, portals like Naver and Yahoo! are popular and function less like lists of links and more like structured environments, with commerce modules, local listings, media, and knowledge panels built directly into the experience. Increasingly, these platforms are adding AI-generated summaries to answer questions without sending users elsewhere.

That changes what success looks like. Ranking still matters, but it’s not the whole story anymore. You also need to show up within these environments.

Once you look at it that way, the objective shifts. It’s less about visibility in one engine and more about being present wherever people are actually finding answers.

Market Realities That Change The Playbook

Once you recognize that APAC is a distributed landscape, the idea of a single “regional strategy” starts to break down. Each market introduces its own constraints and opportunities, and those differences materially affect how search should be approached.

Japan often gets rolled into global strategy, but the numbers don’t really support that. Bing’s share is high enough to affect both organic and paid performance, driven in part by default browser settings and enterprise environments. It’s one of those gaps that’s easy to miss until you look for it.

South Korea is a different kind of challenge. Naver sits at the center of how people discover content, and it doesn’t behave like a typical search engine. The formats, the way results are surfaced, and even what users expect to see all differ. If you approach it with a Google-first mindset, things start to break down pretty quickly.

Vietnam highlights a different kind of opportunity. CocCoc’s share isn’t huge, but it doesn’t need to be. Even a smaller share for a local engine can create an advantage if competitors ignore it, because that neglect alone leaves room to gain visibility. In markets like this, where local behavior diverges from global assumptions, those gaps can be leveraged relatively quickly.

India and Indonesia don’t follow the same pattern. Google still dominates, but something else is happening alongside it. AI tools are picking up faster than most teams expect. In some cases, that push isn’t coming from users at all. It’s coming through telco partnerships, bundled access, and tools showing up inside services people already use.

So the way discovery shifts in these markets can feel uneven. It doesn’t necessarily track with what we’ve seen in more mature regions.

The common thread across these markets is that the opportunity is not just in understanding each engine, but in recognizing where competitors are underinvesting.

Where Most SEO Strategies Fall Short

In APAC, the issue usually isn’t a lack of optimization knowledge. It’s how that knowledge gets applied. Most global teams are set up around a centralized model. The tools, processes, and reporting tend to revolve around Google by default. Regional differences are recognized, but they don’t always make it into how work actually gets done.

That’s where things start to drift.

Alternative engines are often pushed aside. Even when the data shows a meaningful share, they’re treated as secondary priorities. Over time, that creates an opening. Teams that do invest, even at a basic level, can pick up visibility that others leave behind.

Additionally, structured data and technical capabilities are not adapted to local ecosystems. What works for Google is assumed to work everywhere, even in environments where search behaves very differently.

Experimentation is often limited or not allowed. Many of the platforms that matter in APAC provide APIs, feeds, and tooling that enable more advanced strategies. These capabilities often go unused because they fall outside standard workflows.

None of these gaps is particularly complex to address. But they require a shift in how teams think about ownership and execution.

The Shift To Answer-Layer Visibility

One of the more subtle but important changes is the emergence of what can be described as the answer layer. Users are increasingly interacting with systems that provide direct responses rather than lists of options. In these environments, visibility is determined by whether your content is selected as a source, not just whether it ranks.

This changes how content should be created, requiring information to be structured in a way that is easy to extract and interpret. Clear definitions, comparisons, and step-by-step explanations become more valuable because they align with how AI systems assemble answers. At the same time, attribution becomes more important. Content that is well-organized, clearly sourced, and easy to validate is more likely to be used and cited.

This is not a replacement for traditional SEO. It is an extension of it. But it does require a different level of intentionality in how content is designed.

Measurement Needs To Catch Up

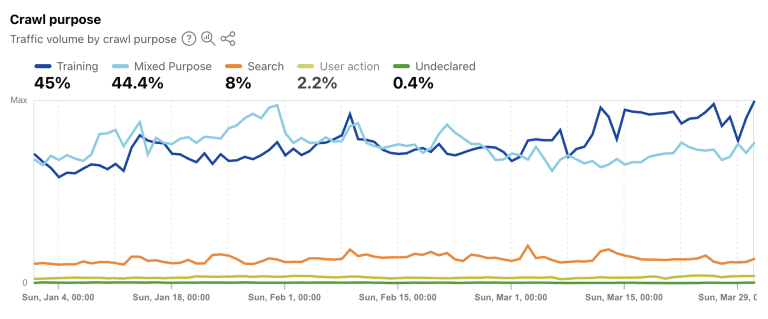

One of the challenges in adapting to this landscape is that measurement has not kept pace with behavior. Many teams still report on organic search as a single channel. In APAC, that approach obscures more than it reveals.

At a minimum, performance should be segmented by engine and by discovery type. Google, Bing, portal ecosystems, local engines, and AI-driven referrals each behave differently and should be evaluated separately.
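
As a rough illustration of that segmentation, the sketch below classifies referrer URLs by engine and discovery type and counts sessions per segment. The rule table, the hostname fragments, the segment labels, and the function names `classify` and `segment` are all assumptions made for this example, not an official or complete list. A real setup would need to be checked against the referrers that actually appear in your analytics data.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical rules: (hostname fragment, engine, discovery type).
# Order matters: more specific fragments come first, so that
# "gemini.google" is checked before the generic "google" rule.
RULES = [
    ("gemini.google", "Gemini", "AI assistant"),
    ("perplexity", "Perplexity", "AI assistant"),
    ("naver", "Naver", "portal"),
    ("yahoo", "Yahoo!", "portal"),
    ("coccoc", "CocCoc", "local engine"),
    ("bing", "Bing", "global engine"),
    ("google", "Google", "global engine"),
]


def classify(referrer_url):
    """Return (engine, discovery_type) for a referrer URL.

    Matching is done on whole hostname labels, so "google" matches
    www.google.co.jp but not notgoogle.example.com. URLs without a
    scheme or hostname fall through to ("Other", "unclassified").
    """
    host = (urlparse(referrer_url).hostname or "").lower()
    labels = host.split(".")
    for fragment, engine, discovery_type in RULES:
        wanted = fragment.split(".")
        n = len(wanted)
        # Look for the fragment's labels as a contiguous run in the hostname.
        if any(labels[i:i + n] == wanted for i in range(len(labels) - n + 1)):
            return engine, discovery_type
    return "Other", "unclassified"


def segment(referrer_urls):
    """Count sessions per (engine, discovery_type) segment."""
    return Counter(classify(url) for url in referrer_urls)


if __name__ == "__main__":
    sample = [
        "https://www.google.co.jp/",
        "https://www.bing.com/search?q=example",
        "https://search.naver.com/search.naver?query=example",
        "https://coccoc.com/search?query=example",
        "https://www.perplexity.ai/",
        "https://gemini.google.com/app",
    ]
    for (engine, discovery_type), sessions in sorted(segment(sample).items()):
        print(f"{discovery_type:15} {engine:12} {sessions}")
```

Reporting on these segments separately, rather than on one blended organic number, is what makes it possible to see whether Bing in Japan or Naver in South Korea is actually contributing. Note that many AI-driven visits arrive without a referrer at all, so a sketch like this will undercount them.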

Without this level of visibility, it becomes difficult to justify investment or identify where opportunities exist. This is particularly important as AI-driven traffic grows. Early data suggest that referrals from AI systems are increasing rapidly, but in many cases, they are not being tracked or attributed correctly, if at all.

The result is a blind spot in performance reporting at the exact moment when new discovery channels are emerging.

Regulation As A Strategic Constraint And Opportunity

Regulation is increasingly shaping how search and discovery operate across APAC.

Privacy laws in markets like Japan, South Korea, India, and Vietnam are tightening what teams can collect and how they can use it. At the same time, countries like Australia are putting more pressure on AI systems, especially regarding age verification and platform responsibility.

Most organizations still treat this as a compliance task. Something to deal with once it becomes unavoidable. But it doesn’t really work that way anymore.

The teams that plan for these constraints early tend to move faster. Their measurement holds up. Their content strategies translate more easily across markets. They don’t have to keep reworking things every time a new requirement shows up.

Others take a different path. They push harder on data collection, lean into short-term gains, and then end up rebuilding parts of their stack under pressure.

So regulation ends up doing more than limiting what’s possible. It quietly separates the teams that can adapt to this new ecosystem from the ones that will struggle.

What To Do Next

For teams trying to adapt, the next steps don’t need to be dramatic. Most of the gains come from getting the basics right in the markets that matter.

A good place to start is how you define search. In Japan, Bing deserves to be integrated as a primary channel given its market share. In South Korea, Naver needs to be approached as its own ecosystem rather than an alternative to Google. And in places like Vietnam, it’s worth taking a closer look at platforms like CocCoc to understand whether they contribute meaningful visibility for your category.

At the same time, begin building content that is designed for extraction and citation. This does not require a complete overhaul of your content strategy, but it does require more intentional structuring of key information.

Content that performs well in AI-driven environments tends to be clear, well-organized, and easy to interpret. Definitions, comparisons, step-by-step guidance, and well-supported claims are more likely to be selected and reused because they align with how answer systems assemble responses.

This is where many global teams overlook a significant advantage. Rather than creating entirely new content for each APAC market, there is often an opportunity to extend what already works in the U.S. or Europe. Content that has earned visibility, links, and engagement in one market has already demonstrated its value. When that content is adapted thoughtfully, not just translated, it can carry those strengths into new markets.

The key is in how that adaptation happens. Instead of treating localization as a linguistic exercise, it should be treated as a structural one. Core concepts, definitions, and frameworks can remain consistent, while local relevance is introduced through examples, regulatory context, and market-specific details.

This approach does two things.

First, it accelerates content development by building on proven assets rather than starting from scratch.

Second, it increases the likelihood that content will be recognized, interpreted, and cited across markets, particularly in AI-driven systems that prioritize clarity, consistency, and corroboration.

In a landscape where visibility increasingly depends on being selected as a source, not just ranked, this becomes a meaningful competitive advantage.

Finally, recognize that distribution is now a core part of both market-specific and regional search strategy. Whether through platforms, partnerships, or new interfaces, where your content appears is becoming just as important as how it ranks.

Closing Thought

APAC is often described as complex. That is true, but complexity is not the most important characteristic. Search is no longer defined by a single engine or a single interface. It is shaped by a network of systems that influence how users discover, evaluate, and act.

The teams that succeed will not be the ones that adapt their Google strategy to new markets, but the ones that understand how discovery actually works and build their presence accordingly.

Featured Image: ktsdesign/Shutterstock