For well over a decade, SEOs and marketers have debated the importance of high-quality, original content. After just about every major update, the message from Google was clear: If you want to rank, cut it out with the derivative listicles and other quick-churn assets that are big on keywords and light on substance.

More recently, our current understanding of how LLMs select which sources to cite in responses has SEOs and content marketers championing high-quality, original, and in-depth content with renewed fervor. If you want AI to identify your content as the best source with which to answer a user’s query, logically, it must be among the best online content available on the topic.

While that’s all great in theory, I’m sure many of you have experienced the crushing disappointment of publishing a piece you’re proud of, only for it to sink like a stone with barely a ripple. Somehow, your magnum opus languishes on page 4 of the relevant search results, outranked by content that, in your humble opinion, isn’t that remarkable.

Can we really call something high quality if it doesn’t achieve the strategic outcome that led us to create it?

Even when our content succeeds, there’s still the nagging worry that we might perhaps be investing too much time and money trying to achieve content perfection. Did that white paper really need to be 10 pages? Or would a simpler, five-page version have done just as well?

Might it be possible to achieve the same results with a little less quality? How do we find the sweet spot? In short, what’s the minimal viable product?

I’m not going to pretend to have the answer. And that’s because the question isn’t clear on what we mean by quality content.

A Question Of Quality

I’m as guilty as anyone of writing about the need for high-quality content as if it’s obvious what it is and how to achieve it without any further explanation. It’s a form of industry shorthand that has become increasingly meaningless through overuse.

Ask 10 CMOs, SEOs, and content marketers to define what they mean by high-quality content, and you’ll probably get 15 different answers.

Is “quality” determined by thought leadership and subject matter expertise? Or can a few average thoughts be elevated to high quality with skilled writing, a strong layout, and some clever design work?

Is “depth” characterized by longer word counts and more detailed research? Or is it really about demonstrating a superior understanding of a topic by exploring more nuanced or highfalutin’ ideas? Never mind the graphs, can you somehow weave in some Ancient Greek philosophy to get the point across?

And how much originality adds up to “original”? If you reference someone else’s work, are you somehow detracting from your own originality score?

While I can’t confidently give you a single, unambiguous definition of what high quality is, I can tell you what it isn’t: High-quality content may be important, but it is no silver bullet.

Just because your content is meticulously researched and extremely well executed doesn’t mean it’s somehow entitled to high rankings.

Does Original Content Actually Perform Better?

I tasked my team with conducting some qualitative research to answer the question: Does original content perform better than repurposed, unoriginal content, in both traditional search and AI-generated responses?

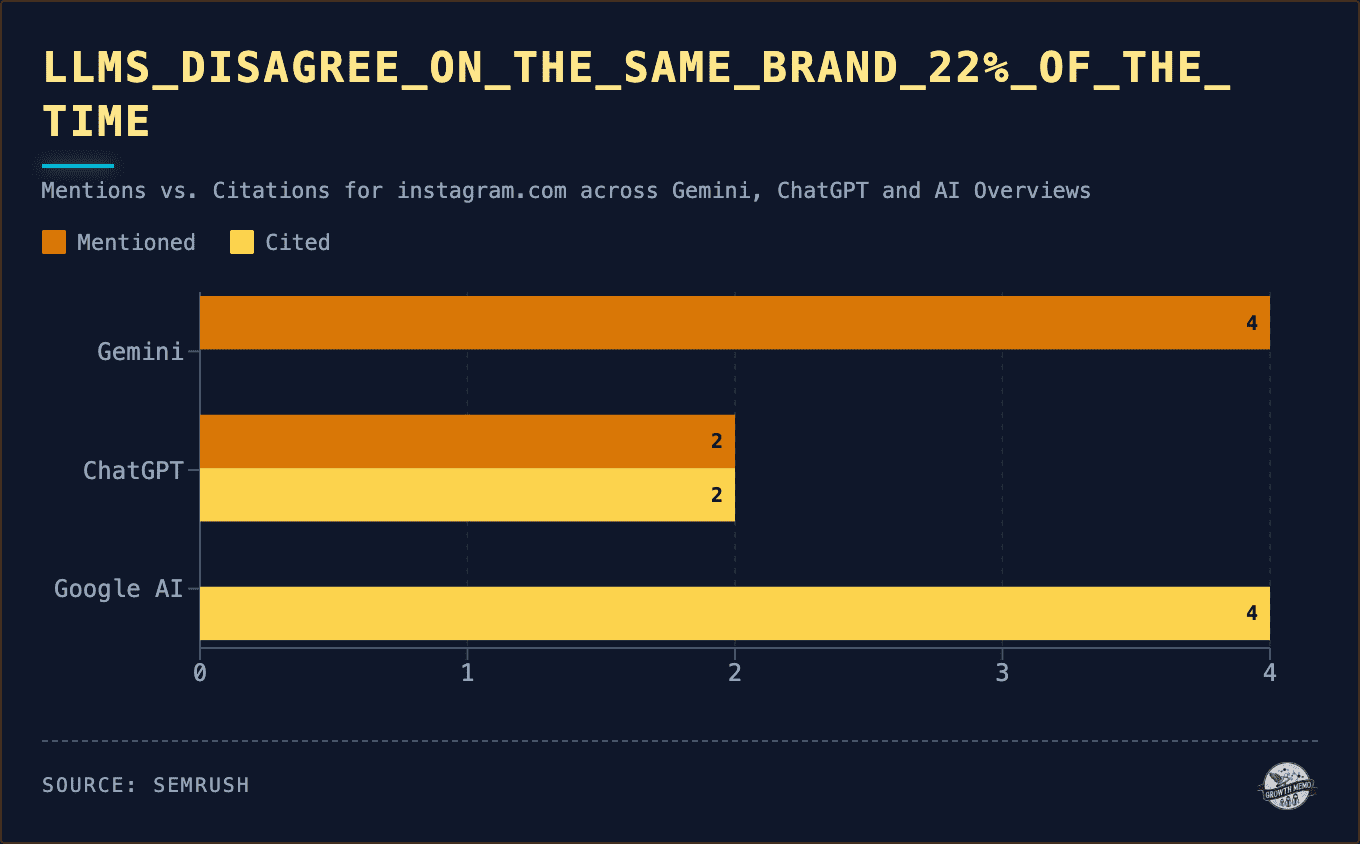

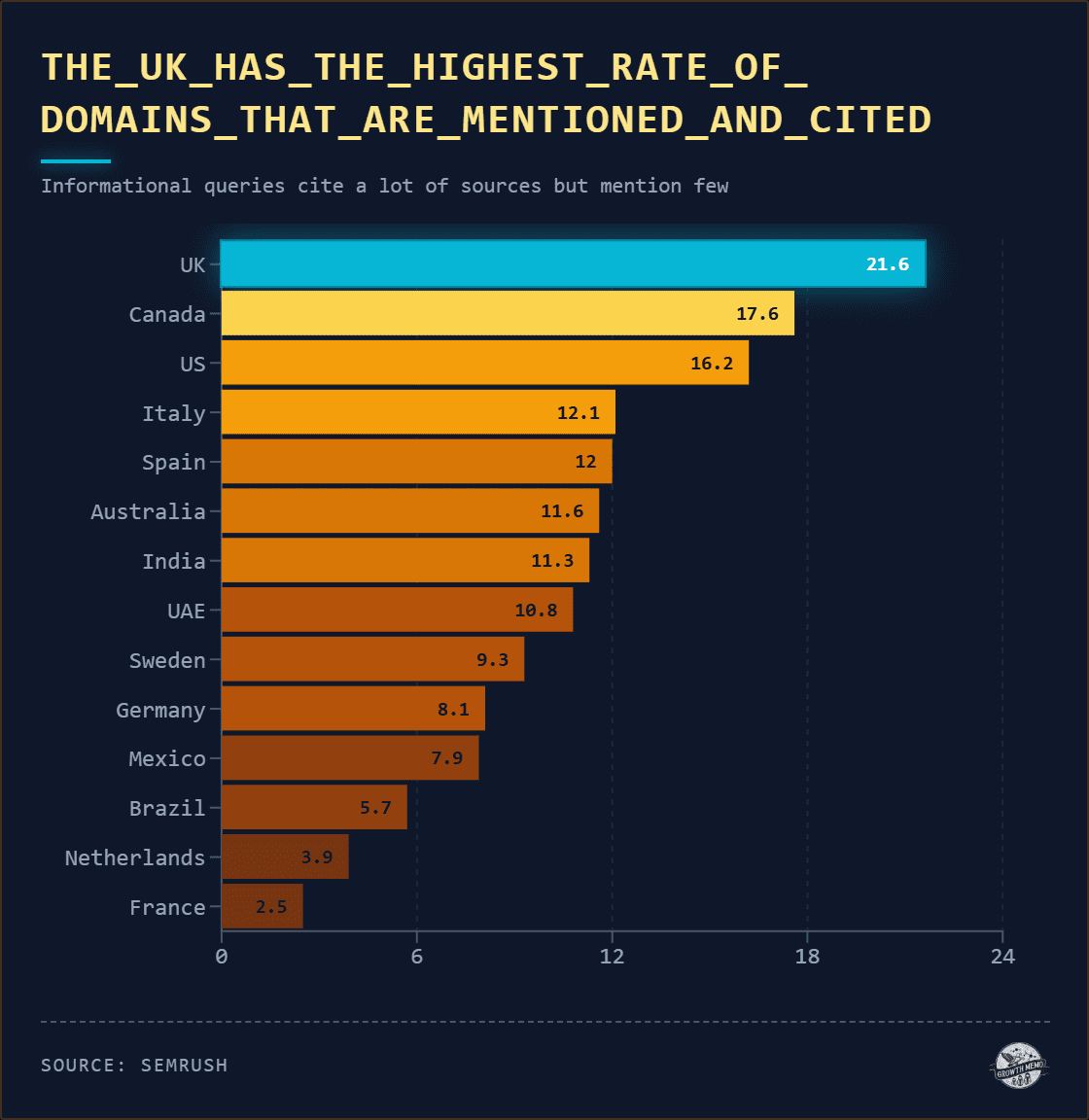

Of course, the internet is a big place (who knew?). So, for the purposes of this study, we restricted the definition of “search” to Google’s search results and to citations within AI platforms Gemini, ChatGPT, and Perplexity.

Similarly, because you’ve got to compare apples with apples, the team focused on popular search queries in the B2B SaaS and professional services space: mid-funnel, informational queries like “marketing automation tools” and “email deliverability tools.”

The team then identified and analyzed the top-ranking URLs for each query before assigning each one a score from 0 to 3 in five different categories.

- Primary contribution.

- Structural novelty.

- Interpretive depth.

- Source dependence.

- Contextual insight.

With a maximum total score of 15, each page was then classified as follows:

- 12-15: Group A (Original).

- 7-11: Borderline (Excluded).

- 0-6: Group B (Repurposed).
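For anyone who wants to try the rubric on their own pages, the scoring and banding above can be sketched in a few lines of Python. This is only an illustration: the function name, the dictionary keys, and the assumption that a higher score in every category (including source dependence) means "more original" are mine, not details taken from the study.

```python
# Hypothetical sketch of the rubric described above: five categories,
# each scored 0 to 3, summed to a total out of 15, then banded.
CATEGORIES = (
    "primary_contribution",
    "structural_novelty",
    "interpretive_depth",
    "source_dependence",   # assumed: higher score = less reliant on other sources
    "contextual_insight",
)


def classify(scores: dict) -> str:
    """Return the group label for one URL's rubric scores."""
    missing = set(CATEGORIES) - set(scores)
    if missing:
        raise ValueError(f"missing categories: {sorted(missing)}")
    for name in CATEGORIES:
        if not 0 <= scores[name] <= 3:
            raise ValueError(f"{name} must be between 0 and 3")

    total = sum(scores[name] for name in CATEGORIES)
    if total >= 12:
        return "Group A (Original)"
    if total >= 7:
        return "Borderline (Excluded)"
    return "Group B (Repurposed)"


# Example: a page scoring 3, 2, 3, 2, 3 totals 13 and lands in Group A.
example = dict(zip(CATEGORIES, (3, 2, 3, 2, 3)))
label = classify(example)
```

Excluding the borderline band keeps the two groups clearly separated, at the cost of throwing away the pages in the middle.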

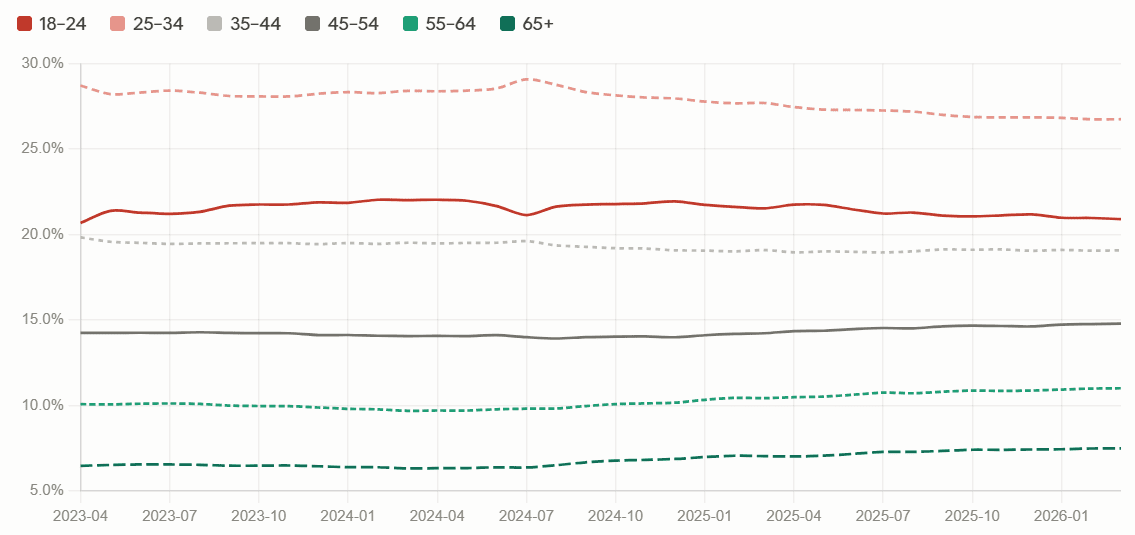

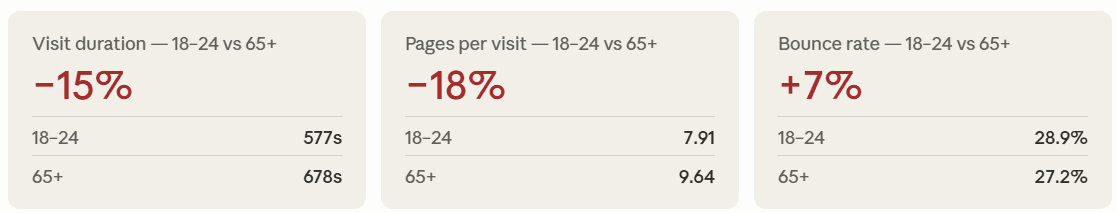

When the data came back, it appeared at first glance that URLs with higher originality scores (Group A) do tend to rank more consistently in Google and appear more frequently in AI responses than repurposed or derivative content (Group B).

However, before all the content marketers scream “I told you so” at anyone in earshot, you might want to read this next bit first.

Data analysts are notoriously skeptical of knee-jerk, first-glance conclusions (again, who knew?). The team crunched the data further, using data sciency techniques involving far more Greek letters than I’m used to seeing. They concluded that, while the correlation exists, it’s weak. Strong performance in one part of the dataset doesn’t reliably predict strong performance elsewhere in the dataset. The relationship simply isn’t consistent enough to say with any confidence that highly original content performs better every time.
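I won't claim to know exactly which of those Greek letters the team used. As one plausible illustration only, here is a minimal sketch of Spearman's rank correlation (the ρ kind), a common way to check whether two sets of numbers, such as originality scores and search positions, tend to move together. The function names are my own and the numbers at the bottom are invented purely for demonstration; they are not the study's data.

```python
def ranks(values):
    """Return 1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one input is constant")
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman's rho: the Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Invented example values, NOT the study's data.
originality_scores = [14, 13, 12, 5, 4, 3]
google_positions = [3, 9, 1, 4, 8, 2]   # lower position = better ranking
rho = spearman(originality_scores, google_positions)
```

Because a lower position number is better, a clearly negative `rho` would suggest that more original pages rank higher, while a value near zero would mean the relationship is weak or absent.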

Even so, while the correlation may be weak, it doesn’t appear to be entirely random. Looking at the overall averages, stripped of extreme cases that might skew the results, we did detect a pattern.

For example, original content appeared to perform better in relation to queries requiring interpretation or judgment, such as “benefits of marketing automation” or “email marketing best practices.” But that relationship virtually disappeared for more straightforward requests for information like “what is marketing automation.”

This makes sense. When the answer is factual, being original matters less than being accurate. When the answer requires perspective or judgment, originality becomes more valuable.

So, where does that leave us? We can’t confidently prove that original content always outperforms repurposed content. On the other hand, we can rule out the idea that originality has no impact at all. Therefore, what we can say is that original insight helps in some contexts, for some query types. It just isn’t a guaranteed lever you can pull for predictable results.

When Mediocre Content Has The Edge

Back in the 2010s, the API industry was booming. And that meant lots of content being published on every aspect of how APIs function. At the very least, a software company would need to publish detailed documentation for each of its APIs, from technical specifications and structures to implementation guides and walkthroughs.

This created a problem for one of our clients, a small startup of 10 people: How could they compete for visibility in search, let alone attract positive attention, when the entire conversation around APIs appeared to be dominated by industry giants? The competitors already had massive online footprints, larger content budgets, established domain authority, and significantly more comprehensive resources. How could we ever outrank them?

Conventional wisdom might have seen us attempt to fight quantity with quality by creating the best possible online resource on the topic of APIs. If we could publish content that goes far deeper and offers more value than the competition, we might gradually earn trust and authority through original, detailed research and thought leadership.

With enough budget and a long-term commitment, you could definitely build a strategy around such an approach. Except, of course, we would have needed both quality and quantity to have any chance of overtaking our client’s competitors.

Trying to compete for visibility in every relevant subtopic and keyword on the subject of APIs would mean fighting on way too many fronts at once. How could we find an original angle on a topic that’s already well served online? How could we talk about APIs in a way that would differentiate our client’s software from everyone else’s?

Short answer: We couldn’t. So, we flipped the problem. What if, instead of being last to join the race for the most relevant keywords today, we could be first out of the blocks in the race for whichever keyword might become relevant tomorrow?

I sent out a survey to the relevant audience, asking a bunch of typical users what search terms they would use in certain scenarios. The results revealed a plethora of short- and long-tail keywords, but when we looked for any common themes, two words stood out. One was “API,” naturally. The other was “design.”

“API design” hadn’t cropped up in our initial keyword research as a potential opportunity. But as the search volume for “API design” was practically zero, that’s hardly surprising. Yet we now had clear evidence that, as the industry matured, so too would the search terms people used.

And because very few people were searching for “API design” at the time, none of the competitors appeared to be targeting the keyword or publishing content on the topic at all.

This was our window of opportunity. Never mind original content: We had an original keyword, an entire topic niche, to ourselves.

However, we also knew the value of that keyword would evaporate overnight if one or more competitors got there before us.

Forget spending six months developing an award-winning whitepaper series. We didn’t need perfection – with all the time, expense, and effort that entails – because we were staring at the SEO equivalent of an open goal.

In just a few days, we threw together a simple landing page focused on API design. It wasn’t exceptional. At only about 1,500 words, it wasn’t comprehensive. As content goes, it was pretty mediocre. But that’s all it took.

About 12 months later, just as predicted, the search volume materialized. Our single modest page continued to outrank every major competitor, even when they started chasing that new search volume with their own landing pages and content hubs.

Within two years, the keyword “API design” was worth approximately £200 per click. But our client didn’t need to pay for clicks. In effect, we won the space before anyone else even realized there was a space worth winning.

Perfection Is The Enemy Of Good

Striving to achieve the best possible iteration of your content, endlessly refining and polishing and second-guessing every detail, can get in the way of just getting it out there. Sometimes, good enough really is good enough.

I’m not arguing that we should stop striving for excellence in our content. As I hope our little study demonstrated, there are situations where well-researched, original content can give you an advantage. And, of course, success doesn’t end with rankings, citations, and clicks. Once they land on your content, you still want visitors to be wowed, persuaded, and motivated into action.

But like so many things in life, success depends on timing at least as much as it does on quality or originality. In a way, that’s what originality is all about: not necessarily being best but being first.

The API design landing page didn’t succeed because it was mediocre. It succeeded because we got there first. Quality mattered, but not in the way most content strategies define it.

This matters even more in AI search. LLMs can curate ideas and summarize information, but they can’t have original thoughts, provide firsthand experiences, or offer up fresh perspectives (as of now). While there are no guarantees, as our limited research shows, in AI at least, being the original source has influence.

Start asking what your content can say that hasn’t already been said, and then say it before someone else does.

Featured Image: ImageFlow/Shutterstock