What Are Google’s Core Topicality Systems? via @sejournal, @martinibuster

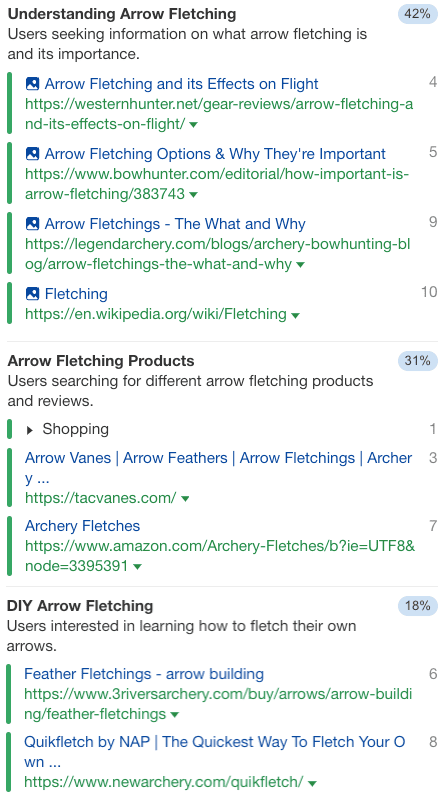

Topicality, as it relates to search ranking algorithms, has become of interest to SEOs after a recent Google Search Off The Record podcast mentioned the existence of Core Topicality Systems as a part of the ranking algorithms. It may be useful to think about what those systems could be and what they mean for SEO.

Not much is known about what could be a part of those core topicality systems, but it is possible to make inferences about them. Google’s documentation for its commercial cloud search offers a definition of topicality. Although that definition is not given in the context of Google’s own search engine, it still provides a useful idea of what Google might mean when it refers to Core Topicality Systems.

This is how that cloud documentation defines topicality:

“Topicality refers to the relevance of a search result to the original query terms.”

That’s a good explanation of the relationship of web pages to search queries in the context of search results. There’s no reason to make it more complicated than that.

How To Achieve Relevance?

A starting point for understanding what might be a component of Google’s Topicality Systems is how search engines understand search queries and represent topics in web page documents.

- Understanding Search Queries

- Understanding Topics

Understanding Search Queries

Understanding what users mean can be said to be about understanding the topic a user is interested in. There’s a taxonomic quality to how people search in that a search engine user might use an ambiguous query when they really mean something more specific.

The first AI system Google deployed in Search was RankBrain, which was introduced to better understand the concepts inherent in search queries. The word concept is broader than the word topic because concepts are abstract representations. A system that understands concepts in search queries can then help the search engine return relevant results on the correct topic.

Google explained the job of RankBrain like this:

“RankBrain helps us find information we weren’t able to before by more broadly understanding how words in a search relate to real-world concepts. For example, if you search for ‘what’s the title of the consumer at the highest level of a food chain,’ our systems learn from seeing those words on various pages that the concept of a food chain may have to do with animals, and not human consumers. By understanding and matching these words to their related concepts, RankBrain understands that you’re looking for what’s commonly referred to as an ‘apex predator.’”

BERT is a deep learning model that helps Google understand the context of words in queries to better understand the overall topic of the text.
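To see why the concept matching in Google’s food chain example matters, it can help to look at what a purely word-based comparison does with that query. The following is a minimal sketch, not a description of how Google scores anything: the two page snippets are invented for illustration, and cosine similarity over raw word counts is just a stand-in for classical keyword matching.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def lexical_similarity(query, document):
    """Cosine similarity between the raw word-count vectors of two texts."""
    q_counts = Counter(tokenize(query))
    d_counts = Counter(tokenize(document))
    # Only words that appear in both texts contribute to the dot product.
    shared = q_counts.keys() & d_counts.keys()
    dot = sum(q_counts[t] * d_counts[t] for t in shared)
    q_norm = math.sqrt(sum(c * c for c in q_counts.values()))
    d_norm = math.sqrt(sum(c * c for c in d_counts.values()))
    if q_norm == 0 or d_norm == 0:
        return 0.0
    return dot / (q_norm * d_norm)


# The query comes from Google's RankBrain example; both snippets are hypothetical.
query = "what's the title of the consumer at the highest level of a food chain"
pages = {
    "apex_predator": "An apex predator is an animal at the top of a food chain "
                     "with no natural predators.",
    "consumer_titles": "The highest level consumer title at the retail chain "
                       "is chief customer officer.",
}
scores = {name: lexical_similarity(query, text) for name, text in pages.items()}
best_match = max(scores, key=scores.get)
```

With these made-up snippets, the retail page shares more words with the query than the page about apex predators does, so word counting alone picks the wrong topic. That gap between matching words and matching concepts is what systems like RankBrain and BERT are meant to close.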

Understanding Topics

I don’t think that modern search engines use Topic Modeling anymore, because of deep learning and AI. In the past, however, search engines used this statistical modeling technique to understand what a web page is about and to match it to search queries. Latent Dirichlet Allocation (LDA) was a breakthrough technology around the mid-2000s that helped search engines understand topics.

Around 2015, researchers published papers about the Neural Variational Document Model (NVDM), which was an even more powerful way to represent the underlying topics of documents.

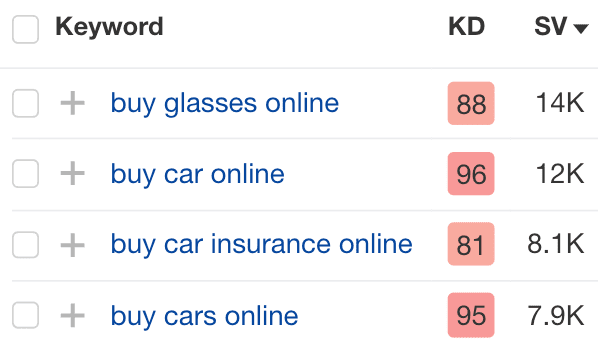

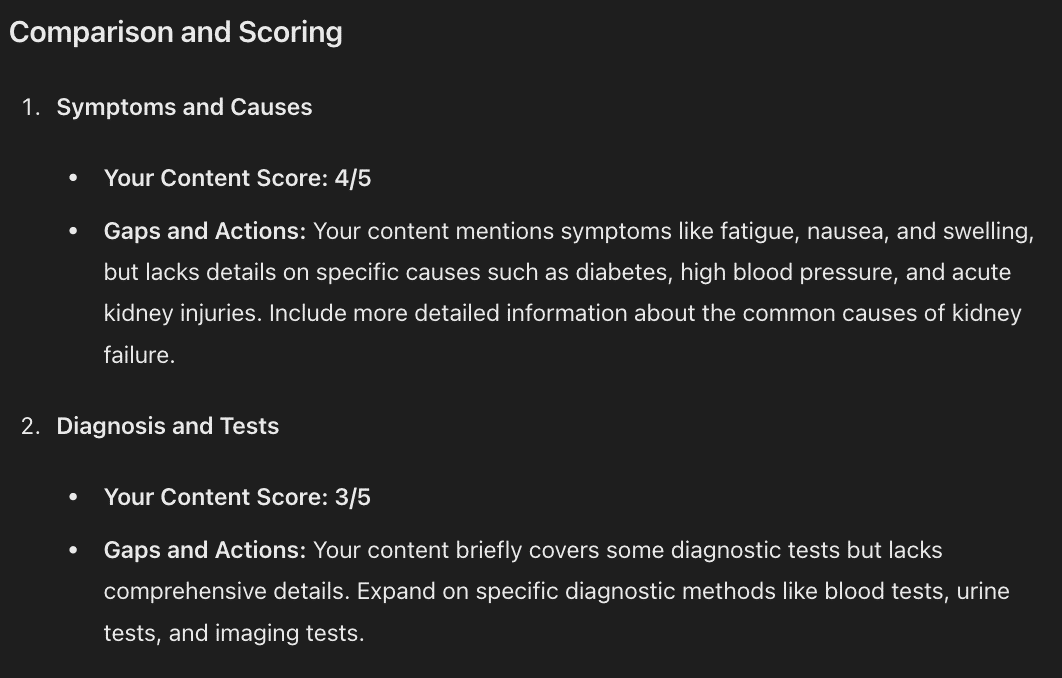

One of the more recent research papers is titled Beyond Yes and No: Improving Zero-Shot LLM Rankers via Scoring Fine-Grained Relevance Labels. That research paper is about enhancing the use of Large Language Models to rank web pages, a process of relevance scoring. It involves going beyond a binary yes or no judgment to a more precise approach that uses labels like “Highly Relevant”, “Somewhat Relevant” and “Not Relevant”.

This research paper states:

“We propose to incorporate fine-grained relevance labels into the prompt for LLM rankers, enabling them to better differentiate among documents with different levels of relevance to the query and thus derive a more accurate ranking.”
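As a rough illustration of how graded labels could feed a ranking, the sketch below turns per-label probabilities into a single score by taking a probability-weighted average. Everything specific here is an assumption made for the example: the numeric value given to each label, the probabilities (which stand in for whatever an LLM might assign), and the page names. It is one simple way to combine graded labels, not necessarily the exact formula used in the paper.

```python
# Hypothetical numeric values for each label (an assumption for illustration).
LABEL_VALUES = {
    "Highly Relevant": 2.0,
    "Somewhat Relevant": 1.0,
    "Not Relevant": 0.0,
}


def expected_relevance(label_probs):
    """Probability-weighted average of the label values for one document."""
    total = sum(label_probs.values())
    if total <= 0:
        raise ValueError("label probabilities must sum to a positive number")
    weighted = sum(LABEL_VALUES[label] * p for label, p in label_probs.items())
    # Dividing by the total tolerates probabilities that do not sum exactly to 1.
    return weighted / total


def rank(candidates):
    """Return document names ordered from most to least relevant."""
    return sorted(
        candidates,
        key=lambda name: expected_relevance(candidates[name]),
        reverse=True,
    )


# Made-up probabilities standing in for an LLM's output on three pages.
candidates = {
    "page_a": {"Highly Relevant": 0.6, "Somewhat Relevant": 0.3, "Not Relevant": 0.1},
    "page_b": {"Highly Relevant": 0.2, "Somewhat Relevant": 0.7, "Not Relevant": 0.1},
    "page_c": {"Highly Relevant": 0.1, "Somewhat Relevant": 0.2, "Not Relevant": 0.7},
}
ranking = rank(candidates)
```

In this toy data, page_a and page_b are equally unlikely to be judged “Not Relevant”, so a yes or no judgment alone would struggle to separate them. The graded labels place page_a ahead of page_b, which is the kind of finer distinction the paper is after.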

Avoid Reductionist Thinking

Search engines are going beyond information retrieval and have been (for a long time) moving in the direction of answering questions, a trend that has accelerated in recent years and months. This was predicted in a 2021 paper titled Rethinking Search: Making Domain Experts out of Dilettantes, in which the authors proposed the necessity of engaging fully in returning human-level responses.

The paper begins:

“When experiencing an information need, users want to engage with a domain expert, but often turn to an information retrieval system, such as a search engine, instead. Classical information retrieval systems do not answer information needs directly, but instead provide references to (hopefully authoritative) answers. Successful question answering systems offer a limited corpus created on-demand by human experts, which is neither timely nor scalable. Pre-trained language models, by contrast, are capable of directly generating prose that may be responsive to an information need, but at present they are dilettantes rather than domain experts – they do not have a true understanding of the world…”

The major takeaway is that it’s self-defeating to apply reductionist thinking to how Google ranks web pages by doing something like putting an exaggerated emphasis on keywords, title elements and headings. The underlying technologies are rapidly moving toward understanding the world, so if one is to think about Core Topicality Systems then it’s useful to put that into a context that goes beyond the traditional “classical” information retrieval systems.

The methods Google uses to understand topics on web pages that match search queries are increasingly sophisticated and it’s a good idea to get acquainted with the ways Google has done it in the past and how they may be doing it in the present.

Featured Image by Shutterstock/Cookie Studio