Search has always been a moving target.

From the days when keyword match types and manual cost-per-click (CPC) bids gave advertisers a sense of control, to the rise of Shopping ads, automated bidding, and Performance Max, Google has never stopped reshaping how search works.

Every step has chipped away at some level of control for marketers while making it easier for Google to monetize intent.

But what we’re seeing now with AI Overviews and AI Mode is not just another product update. It is a structural rewrite of how search itself functions, with serious implications for paid ads.

Instead of sending people to a list of blue links, Google is using AI to generate answers and guide users through multi-step, conversational journeys. Ads are being pulled directly into these experiences, sometimes above or below AI summaries, other times embedded right inside them.

Google calls this a way to “shorten the path from discovery to decision.” For advertisers, it means budgets are being funneled into surfaces that look and behave very differently from the SERPs we’ve optimized around for years.

The stakes are clear: If fewer people click through to websites, advertisers face tighter competition for attention, rising CPCs, brand safety concerns, and limited transparency into where money is going.

Marketing leaders can’t afford to treat AI Mode as a side experiment. This is the future of Google search, and your ads will either adapt to it or be left behind.

Google’s AI Search Vision And Ad Strategy

Google has been explicit about where it wants to go. At Google Marketing Live 2025, executives described AI Overviews as “one of the most successful launches in Search in the past decade,” citing increases in commercial queries in markets like the U.S. and India.

AI Mode builds on that success by creating a conversational environment where users can refine, compare, and act without returning to the static list of links that defined Google for 20 years.

The company frames this as a win-win: Users get answers more efficiently, and advertisers get placements where intent is clearer and actions are closer at hand.

Google explains that ads are pulled seamlessly into these surfaces from Search, Shopping, Performance Max, and App campaigns.

For the user, the ad is “just part of the journey.” For the advertiser, there is no opting out, no special campaign type, and no reporting that shows which impressions or clicks came from AI Mode versus traditional search.

This approach is not new. Every major change to Google’s results has tilted the balance toward monetization.

Shopping ads once displaced text ads. Featured Snippets and the Knowledge Graph began answering questions directly, cutting down on organic clicks. Performance Max combined inventory into a single system, obscuring where impressions were served.

AI Mode is the culmination of these shifts: Ads are not just on the page; they are woven into the answers themselves.

Competition is another driver. Microsoft has already integrated ads into Copilot. OpenAI is experimenting with sponsored results in ChatGPT. Perplexity, the AI search upstart, has raised millions while building advertiser interest in native placements.

Google cannot afford to sit back while others monetize AI-first search. Ads inside AI Mode aren’t an experiment; they’re an existential business necessity.

Industry experts see this direction clearly. Cindy Krum of MobileMoxie has argued that Google is merging AI Overviews, Discover, and conversational flows into a single journey-first system. She believes ads will become highly targeted to users within that journey.

Krum elaborated on what she sees as Google’s intention for ads in AI Mode:

You’ll have to be logged in to access AI Mode and when you’re logged in, they [Google] can collect all kinds of behavioral data and serve you incredibly personalized ads—ones you’re actually likely to click and convert on. That’s valuable to advertisers. Google can say, “We only show your ads to people who will convert.”

What I find concerning, though, is that advertisers are being asked to play along without the transparency they need to measure value. Seamless for users often means opaque for marketers, and this transition is no exception.

How AI Mode Changes User Behavior And Why It Matters For Ads

It’s easy to assume AI Mode is just another SERP redesign. But the data suggests it is changing how users behave, and those changes have direct implications for paid ads.

According to Pew Research, when an AI Overview appears:

- Only 8% of visits result in clicks on traditional results, compared to 15% when no overview is present.

- Only about 1% of visits include clicks on the links embedded inside the AI box.

Similarweb has tracked a sharp rise in zero-click searches, reaching nearly 70% of all queries by mid-2025, up from 56% the year before.

Authoritas found that in news-related queries, traffic to a top-ranking result dropped by almost 80% when an AI Overview appeared above it.

For advertisers, the math is simple.

- If fewer people leave Google, the competition for the remaining clicks intensifies.

- CPCs rise because ad real estate is scarcer.

- Campaign budgets have to stretch further just to maintain the same level of visibility.

- Organic traffic has always acted as a counterweight to paid spend.

- If that counterweight shrinks, paid budgets take on more pressure.
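To make that math concrete, here is a minimal sketch. The two click rates are the Pew figures cited above; the budget and CPC values are hypothetical placeholders, not benchmarks, and the 25% CPC increase is an assumption chosen only to show the mechanics.

```python
# Click rates on traditional results, as reported by Pew Research (cited above).
ctr_without_overview = 0.15
ctr_with_overview = 0.08


def relative_decline(before: float, after: float) -> float:
    """Fractional drop when a rate moves from `before` to `after`."""
    return (before - after) / before


def clicks_for_budget(budget: float, cpc: float) -> float:
    """Number of clicks a fixed budget buys at a given average CPC."""
    return budget / cpc


decline = relative_decline(ctr_without_overview, ctr_with_overview)

# Hypothetical inputs, for illustration only.
budget = 10_000.0
baseline_cpc = 2.00
inflated_cpc = 2.50  # assumed 25% CPC increase

baseline_clicks = clicks_for_budget(budget, baseline_cpc)
inflated_clicks = clicks_for_budget(budget, inflated_cpc)

# Budget needed to keep buying the baseline click volume at the higher CPC.
budget_to_hold_clicks = baseline_clicks * inflated_cpc

print(f"Relative decline in click rate: {decline:.1%}")
print(f"Clicks at baseline CPC: {baseline_clicks:.0f}")
print(f"Clicks at inflated CPC: {inflated_clicks:.0f}")
print(f"Budget needed to hold click volume: {budget_to_hold_clicks:.0f}")
```

Swapping in your own account's CPC trend gives a quick read on how much extra budget it would take just to stand still.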

The effects differ by vertical. Ecommerce and travel sometimes see AI summaries spark more exploration of products, which can benefit Shopping ads.

Finance and insurance face mixed outcomes. Simplified comparisons may increase clicks in some cases but reduce brand-specific exposure in others.

News, health, and publishers are hit hardest, with traffic losses so steep that paid ads often become the only reliable way to reach audiences at scale.

Industry experts have not been shy about voicing their concerns.

Lily Ray, SEO director at Amsive, expressed her view after click-through rate data came out on AI Overviews:

“It was only a matter of time before new data & studies started to contradict Google’s messaging around the impact of AIOs on traffic.”

Rand Fishkin of SparkToro has been even more blunt:

“Zero click is taking over everything. Google is trying to answer searches without clicks. Facebook is trying to keep people on Facebook. LinkedIn wants to keep people on LinkedIn.”

I share that unease. This is a classic supply-and-demand problem. As free clicks shrink, advertisers will be forced to compete harder and pay more. Google benefits from this compression; advertisers absorb the costs.

Marketing leaders should stop treating this as a temporary adjustment. CPC inflation is becoming a structural reality of AI-powered search.

Ads Inside AI Journeys: Auctions, Costs, And Creative Implications

Google’s marketing spin around AI Mode is that ads are “a logical and natural next action to consumers exploring any topic.” That might be true from a user perspective, but from an advertiser’s perspective, the auction mechanics have changed in ways that deserve scrutiny.

Ads in AI Mode are not a distinct product. They are pulled from Search, Shopping, Performance Max, and App campaigns.

That means the inventory is blended, and advertisers don’t know whether impressions came from a standard SERP or an AI-generated summary.

Larger brands with broad match strategies, comprehensive product feeds, and robust budgets will have the advantage. Smaller or more niche advertisers risk being squeezed out, not because of poor strategy, but because the system is designed to privilege scale.

This dynamic almost guarantees CPC pressure. We saw the same thing when Shopping ads rose to prominence a decade ago.

As more real estate was given to paid placements, the remaining inventory became more competitive, and CPCs rose for the survivors. AI Mode is likely to trigger a similar cycle: fewer outbound clicks, fiercer bidding, higher costs.

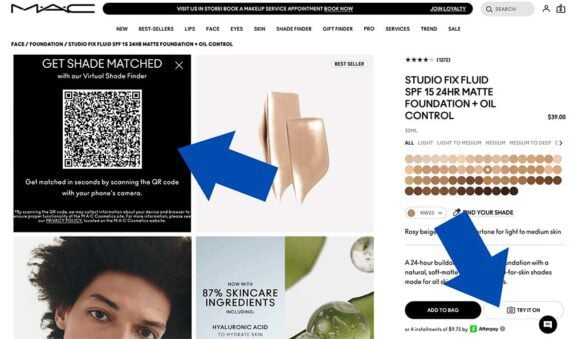

Google is also testing outcome-based formats that push this further. For example, in the retail vertical, early experiments allow users to use virtual try-on or track prices without ever leaving the AI journey.

By embedding ads as actions, Google can move from CPC toward cost-per-action pricing.
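The relationship between the two pricing models is simple arithmetic: the effective cost per action is the CPC divided by the conversion rate. The sketch below assumes that definition; the function names and the sample numbers are hypothetical and do not describe how Google prices any format.

```python
def effective_cpa(cpc: float, conversion_rate: float) -> float:
    """Cost per action implied by paying `cpc` per click at a given conversion rate."""
    if conversion_rate <= 0:
        raise ValueError("conversion_rate must be positive")
    return cpc / conversion_rate


def break_even_cpc(target_cpa: float, conversion_rate: float) -> float:
    """Highest CPC that still meets a target cost per action."""
    if conversion_rate <= 0:
        raise ValueError("conversion_rate must be positive")
    return target_cpa * conversion_rate


# Hypothetical inputs: a $2.00 CPC, a 4% conversion rate, and a $40 CPA target.
current_cpa = effective_cpa(cpc=2.00, conversion_rate=0.04)
max_cpc = break_even_cpc(target_cpa=40.0, conversion_rate=0.04)

print(f"Effective CPA: {current_cpa:.2f}")
print(f"Break-even CPC for the target: {max_cpc:.2f}")
```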

Fred Vallaeys of Optmyzr stated:

I have no doubt that Google and other ad platforms will find ways to appropriately monetize these advertising opportunities, even if there will be fewer impressions for each consumer journey.

He sees a potential upside for advertisers. I agree, but only if advertisers can prove that the actions driven inside AI Mode are incremental, not cannibalized from existing campaigns.
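One common way to test for incrementality is a holdout comparison. The sketch below assumes you can withhold the campaigns that feed AI surfaces from a comparable region or audience, which is an assumption on my part since AI Mode itself offers no opt-out. All counts are hypothetical.

```python
def incremental_lift(
    test_conversions: int,
    test_users: int,
    control_conversions: int,
    control_users: int,
) -> tuple[float, float]:
    """Return (incremental conversions in the test group, relative lift over control)."""
    test_rate = test_conversions / test_users
    control_rate = control_conversions / control_users
    # Conversions the test group would not have produced at the control rate.
    incremental = (test_rate - control_rate) * test_users
    # Lift expressed as a fraction of the control conversion rate.
    lift = (test_rate - control_rate) / control_rate
    return incremental, lift


# Hypothetical holdout: exposed region vs. a matched region with campaigns paused.
incremental, lift = incremental_lift(
    test_conversions=1_200,
    test_users=100_000,
    control_conversions=1_000,
    control_users=100_000,
)

print(f"Incremental conversions: {incremental:.0f}")
print(f"Relative lift: {lift:.1%}")
```

A lift near zero would suggest the actions are being cannibalized from existing campaigns rather than added to them. A real test would also need a significance check, which this sketch omits.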

Creative expectations are also shifting. Conversational journeys demand conversational ads.

A blunt “Sign up today” may feel jarring inside a multi-step dialogue. Phrasing like “Find the right plan for your family” or “See how much you could save in minutes” fits better into the AI-driven flow.

I see opportunity here, but also risk. AI Mode could deliver more relevant matches between ad and intent. But without transparency into where ads appear and how they perform, advertisers are bidding blind. Google will extract more value from each interaction. Whether advertisers see the same value in return is far less certain.

The Transparency And Measurement Gap Of AI Mode

Perhaps the most glaring problem with AI Mode is measurement. Advertisers cannot see how their ads perform specifically in AI Overviews or AI Mode.

There is no column in Google Ads. Search Console offers no separate reporting. All performance is collapsed into existing campaigns.

This is more than a technical gap. For CMOs and CFOs, modeled attribution is not enough. Boards want to know where money is going and what it is producing.

If ad spend is being redirected into AI surfaces but not disclosed separately, how can leaders defend their budgets?

We’ve seen this before. Performance Max launched with almost no reporting visibility. Advertisers pushed back, and Google eventually provided more insights.

Transparency tends to lag product launches, and history suggests it arrives only after sustained pressure from advertisers and agencies.

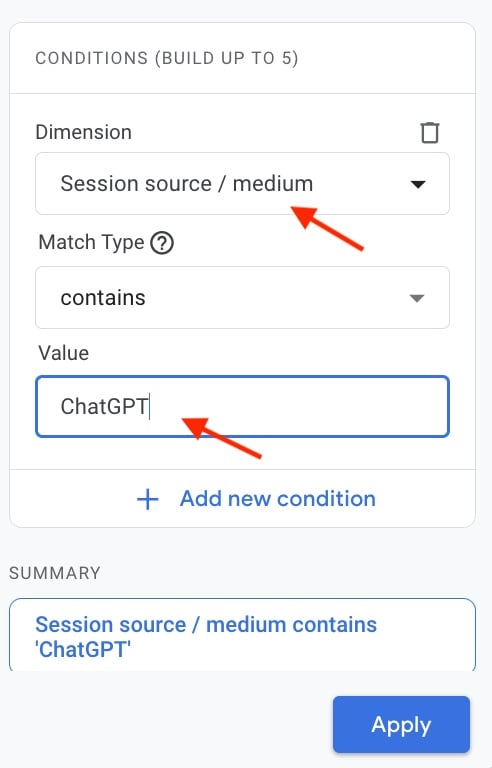

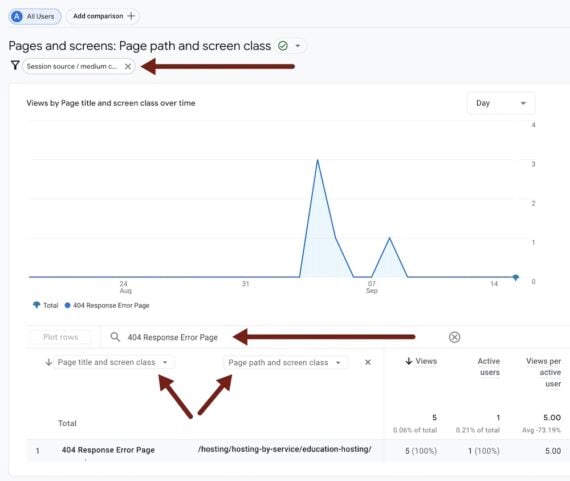

In the meantime, marketers have to fill the gap themselves. Some are building marketing mix models to estimate AI’s contribution. Others are connecting CRM systems more tightly to campaign spend.

Tracking mid-funnel events like demos or downloads is also becoming essential, since these signals often reveal whether AI-driven impressions are assisting conversion paths.

Modeled attribution can provide directional value, but it cannot replace true visibility.

Until Google surfaces AI-specific reporting, marketers should approach performance claims with skepticism and invest in their own measurement frameworks to avoid flying blind.

The Brand Safety And Trust Challenge With AI Overviews

AI Overviews have already produced embarrassing results, suggesting people put glue on pizza or eat rocks.

Google has since upgraded its models, grounding them in Gemini 2.5 and using “query fan-out” to cross-check responses. Accuracy has improved, but hallucinations still occur.

For advertisers, the risk goes beyond bad answers. It’s about adjacency. If your brand’s ad appears alongside a flawed or misleading AI-generated response, the reputational fallout could be significant.

This is a new kind of brand safety risk for search. In Display, adjacency concerns are expected. In search, ads have traditionally been “safe.” AI Mode changes that equation.

Regulators are also paying attention. The FTC and DOJ have already scrutinized Google’s dominance in search advertising.

If AI-driven ads blur the line between editorial and commercial, new antitrust challenges are possible. In Europe, the AI Act may impose stricter standards for how AI-generated content and ads are labeled.

Avoiding AI surfaces altogether isn’t realistic. The opportunity is too large. But brands must prepare frameworks to protect themselves.

That means actively monitoring where ads appear, setting internal thresholds for unacceptable contexts, and establishing escalation paths with Google when placements cross the line.

Trust cannot be outsourced. Advertisers must take responsibility for brand safety in AI environments, even if it means creating new workflows and raising difficult questions with their Google reps.

What Should Marketers Prioritize In The Face Of AI Mode And Overviews?

It’s tempting to wait until reporting improves and best practices become clearer. But hesitation is risky. The brands that adapt early will set the standards others follow.

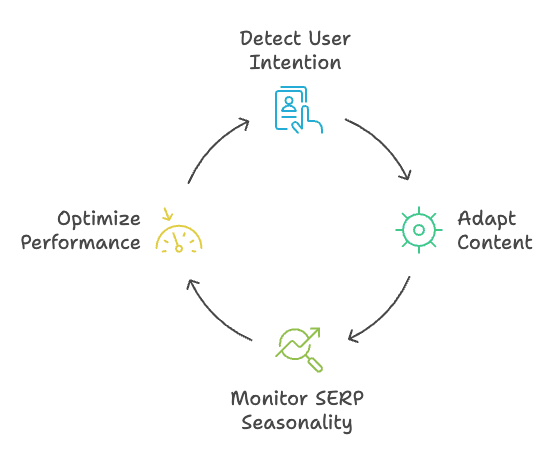

The most important shift is reframing search around journeys, not keywords.

AI Mode thrives on follow-ups and refinements. Campaigns should be designed with multi-step customer paths in mind.

An insurance company, for example, shouldn’t stop at “compare rates.” It should also anticipate “how to switch providers” or “what coverage works best for families.”

Automation is another reality. Performance Max and broad match are the engines of eligibility for AI surfaces. But these tools need guardrails.

Negative keywords, audience signals, and clean product feeds help prevent waste and maintain some level of control.
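As a rough illustration of one such guardrail, the sketch below reviews an exported search terms report and flags queries that either contain a negative keyword or have spent past a threshold without converting. The row structure, the negative list, and the threshold are all hypothetical; this does not call any Google Ads API.

```python
from typing import NamedTuple


class SearchTerm(NamedTuple):
    term: str
    cost: float
    conversions: int


def flag_terms(
    rows: list[SearchTerm],
    negatives: set[str],
    max_cost_without_conversion: float,
) -> list[tuple[str, str]]:
    """Return (term, reason) pairs that deserve a manual review."""
    flagged = []
    for row in rows:
        words = set(row.term.lower().split())
        hits = sorted(words & negatives)
        if hits:
            flagged.append((row.term, f"matches negative: {', '.join(hits)}"))
        elif row.conversions == 0 and row.cost > max_cost_without_conversion:
            flagged.append((row.term, "spend without conversions"))
    return flagged


# Hypothetical report rows for an insurance advertiser.
rows = [
    SearchTerm("free car insurance quotes", 42.0, 0),
    SearchTerm("compare family car insurance", 310.0, 9),
    SearchTerm("car insurance jobs", 18.5, 0),
    SearchTerm("what is an insurance deductible", 95.0, 0),
]

flagged = flag_terms(rows, negatives={"free", "jobs"}, max_cost_without_conversion=50.0)
for term, reason in flagged:
    print(f"{term}: {reason}")
```

Running a check like this on a schedule keeps broad match and Performance Max from quietly drifting into queries you would never have chosen.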

Tinuiti has emphasized media accountability and measurement tools to ensure campaigns optimize what works and limit waste.

Agencies like Seer Interactive have published data showing paid click-through rates drop significantly when AI Overviews are present, and recommend careful monitoring and automation guardrails so advertisers don’t get caught by surprise.

Asset quality also matters more than ever. Structured data, schema markup, and entity-rich product feeds aren’t optional. They determine whether ads are eligible to show inside AI responses at all. Poor data means invisibility.
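For readers less familiar with schema markup, here is a minimal sketch that builds schema.org Product markup as JSON-LD. The product values are hypothetical, and the helper name `product_jsonld` is mine. The sketch shows only the general shape of the markup; it makes no claim about which fields Google uses for AI Mode eligibility.

```python
import json


def product_jsonld(
    name: str, sku: str, brand: str, price: float, currency: str, in_stock: bool
) -> dict:
    """Build a schema.org Product with a single Offer."""
    availability = (
        "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    )
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "brand": {"@type": "Brand", "name": brand},
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": availability,
        },
    }


# Hypothetical product, for illustration only.
markup = json.dumps(
    product_jsonld("Trail Running Shoe", "TRS-001", "ExampleBrand", 89.5, "USD", True),
    indent=2,
)
print(markup)
```

The output would typically be placed in a script tag of type application/ld+json on the product page, with the same values kept consistent in the product feed.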

Measurement, too, must evolve. Last-click cost-per-acquisition (CPA) no longer tells the whole story. Marketing leaders need to evaluate mid-funnel signals like lead quality, sales cycle speed, and assisted revenue.

These key performance indicators (KPIs) reveal whether AI-driven impressions are helping move customers forward.
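A minimal sketch of how those three signals could be computed from CRM records follows. The `Lead` structure, the channel labels, and the sample data are hypothetical. Here I define assisted revenue as closed revenue where the channel appears in the path but is not the last touch, which is one common definition rather than a standard.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class Lead:
    created: date
    qualified: bool
    closed: Optional[date]  # None if the deal has not closed
    revenue: float          # 0.0 until the deal closes
    touches: list[str]      # ordered channel touchpoints; last item is the last touch


def qualified_rate(leads: list[Lead]) -> float:
    """Share of leads that sales marked as qualified (lead quality)."""
    return sum(lead.qualified for lead in leads) / len(leads)


def median_cycle_days(leads: list[Lead]) -> float:
    """Median days from lead creation to close, over closed deals only."""
    return median((lead.closed - lead.created).days for lead in leads if lead.closed)


def assisted_revenue(leads: list[Lead], channel: str) -> float:
    """Closed revenue where `channel` touched the path but was not the last touch."""
    return sum(
        lead.revenue
        for lead in leads
        if lead.closed
        and channel in lead.touches[:-1]
        and lead.touches[-1] != channel
    )


# Hypothetical CRM export.
leads = [
    Lead(date(2025, 1, 6), True, date(2025, 2, 5), 12_000.0, ["paid_search", "email", "direct"]),
    Lead(date(2025, 1, 10), True, date(2025, 3, 1), 8_000.0, ["organic", "paid_search"]),
    Lead(date(2025, 1, 15), False, None, 0.0, ["paid_search"]),
    Lead(date(2025, 2, 3), True, date(2025, 2, 23), 5_000.0, ["paid_search", "organic"]),
]

print(f"Qualified rate: {qualified_rate(leads):.0%}")
print(f"Median sales cycle (days): {median_cycle_days(leads)}")
print(f"Revenue assisted by paid search: {assisted_revenue(leads, 'paid_search'):.0f}")
```

Tracked over time, a rising assisted figure alongside a flat last-click CPA is the kind of pattern last-click reporting would miss.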

Creative strategy is another frontier. Ads inside AI journeys need to read like natural next steps, not jarring interruptions.

Early tests in Microsoft Copilot and Perplexity show conversational CTAs, such as “Estimate your monthly cost in seconds,” outperform blunt directives. Marketers should begin experimenting now to build a playbook before these surfaces scale further.

Adaptation is non-negotiable. This isn’t about abandoning SEM fundamentals. It’s about extending them into a search environment where AI defines the journey. CMOs who build strategies around these realities will not just survive the shift; they’ll gain a competitive edge.

The Future Of Paid Search In An AI World

AI search complicates the three pillars paid search has relied on for decades:

- Transparency.

- Predictable intent.

- Measurable outcomes.

Ads are shifting from placements that sit beside results to actions that live inside AI-generated answers.

This isn’t unique to Google. Microsoft has integrated ads into Copilot. OpenAI is piloting sponsored answers in ChatGPT. Amazon and TikTok are testing AI-driven search monetization.

The entire industry is converging on the same model: AI-assisted journeys with ads embedded at critical decision points.

The outlook can be framed in scenarios.

In the best case, AI ads deliver more qualified clicks and higher efficiency, creating a win for advertisers.

In the middle case, some verticals see gains while frustrations over transparency persist.

In the worst case, CPCs inflate significantly, brand safety incidents mount, and ROI weakens, pushing advertisers to question their reliance on Google.

My conclusion is clear: This is not a passing experiment. It’s a structural shift. CMOs should treat AI search as a permanent change to the foundation of paid advertising.

That means reframing PPC as journey management, not keyword bidding. It means doubling down on first-party data and building attribution systems that don’t rely on Google’s word alone. And it means pressing Google for accountability at every step.

Because when ads become the answer, the brands that prepare early will be the ones that still get found.


Featured Image: Masha_art/Shutterstock