Can quantum computers now solve health care problems? We’ll soon find out.


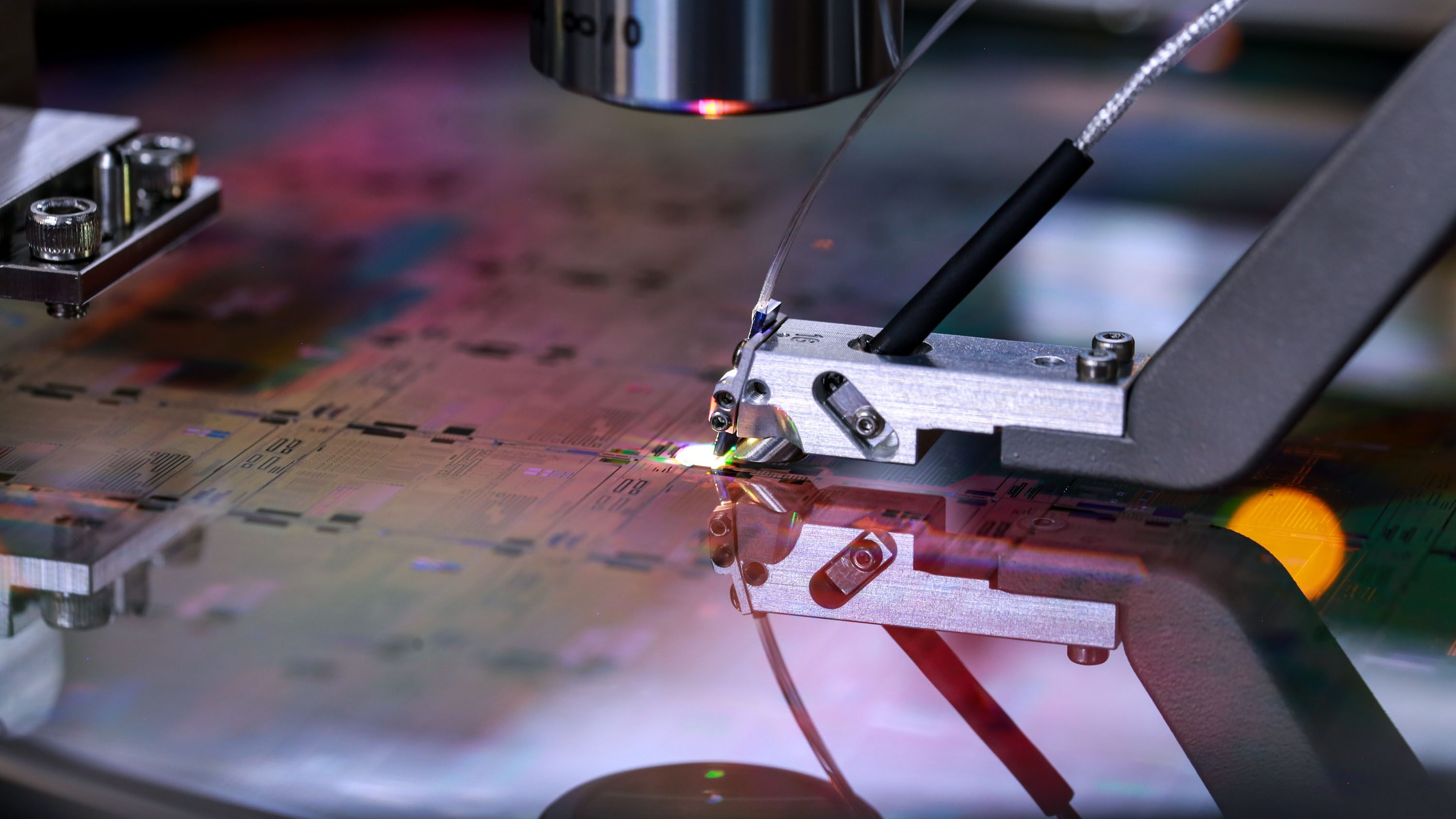

I’m standing in front of a quantum computer built out of atoms and light at the UK’s National Quantum Computing Centre on the outskirts of Oxford. On a laboratory table, a complex matrix of mirrors and lenses surrounds a Rubik’s Cube–size cell where 100 cesium atoms are suspended in grid formation by a carefully manipulated laser beam.

The cesium atom setup is so compact that I could pick it up, carry it out of the lab, and put it on the backseat of my car to take home. I’d be unlikely to get very far, though. It’s small but powerful—and so it’s very valuable. Infleqtion, the Colorado-based company that owns it, is hoping the machine’s abilities will win $5 million next week, at an event to be held in Marina del Rey, California.

Infleqtion is one of six teams that have made it to the final stage of a 30-month-long quantum computing competition called Quantum for Bio (Q4Bio). Run by the nonprofit Wellcome Leap, it aims to show that today’s quantum computers, though messy and error-prone and far from the large-scale machines engineers hope to build, could actually benefit human health. Success would be a significant step forward in proving the worth of quantum computers. But for now, it turns out, that worth seems to be linked to harnessing and improving the performance of conventional (also called classical) computers in tandem, creating a quantum-classical hybrid that can exceed what’s possible on classical machines by themselves.

There are two prize categories. A prize of $2 million will go to any and all teams that can run a significantly useful health care algorithm on computers with 50 or more qubits (a qubit is the basic processing unit in a quantum computer). To win the $5 million grand prize, a team must successfully run a quantum algorithm that solves a significant real-world problem in health care, and the work must use 100 or more qubits. Winners have to meet strict performance criteria, and they must solve a health care problem that can’t be solved with conventional computers—a tough task.

Despite the scale of the challenge, most of the teams think some of this money could be theirs. “I think we’re in with a good shout,” says Jonathan D. Hirst, a computational chemist at the University of Nottingham, UK. “We’re very firmly within the criteria for the $2 million prize,” says Stanford University’s Grant Rotskoff, whose collaboration is investigating the quantum properties of the ATP molecule that powers biological cells.

The grand prize is perhaps less of a sure thing. “This is really at the very edge of doable,” Rotskoff says. Insiders say the challenge is so difficult, given the state of quantum computing technology, that much of the money could stay in Wellcome Leap’s account.

With most of the Q4Bio work unpublished and protected by NDAs, and the quantum computing field already rife with claims and counterclaims about performance and achievements, only the judges will be in a position to decide who’s right.

A hybrid solution

The idea behind quantum computers is that they can use small-scale objects that obey the laws of quantum mechanics, such as atoms and photons of light, to simulate real-world processes too complex to model on our everyday classical machines.

Researchers have been working for decades to build such systems, which could deliver insights for creating new materials, developing pharmaceuticals, and improving chemical processes such as fertilizer production. But dealing with quantum stuff like atoms is excruciatingly difficult. The biggest, shiniest applications require huge, robust machines capable of withstanding the environmental “noise” that can very easily disrupt delicate quantum systems. We don’t have those yet—and it’s unclear when we will.

Wellcome Leap wanted to find out if the smaller-scale machines we have today can be made to do something, anything, useful for health care while we wait for the era of powerful, large-scale quantum computers. The group started the competition in 2024, offering $1.5 million in funding to each of 12 selected teams.

The six Q4Bio finalists have taken a range of approaches. Crucially, they’ve all come up with ingenious ways to overcome quantum computing’s drawbacks. Faced with noisy, limited machines, they have learned how to outsource much of the computational load to classical processors running newly developed algorithms that are, in many cases, better than the previous state of the art. The quantum processors are then required only for the parts of the problem where classical methods don’t scale well enough as the calculation gets bigger.

For example, a team led by Sergii Strelchuk of Oxford University is using a quantum computer to map genetic diversity among humans and pathogens on complex graph-based structures. These will—the researchers hope—expose hidden connections and potential treatment pathways. “You can think about it as a platform for solving difficult problems in computational genomics,” Strelchuk says.

The corresponding classical tools struggle with even modest scale-up to large databases. Strelchuk’s team has built an automated pipeline that determines whether classical solvers will struggle with a particular problem, and how a quantum algorithm might reformulate the data so that the problem becomes solvable on a classical computer or tractable on a noisy quantum one. “You can do all this before you start spending money on computing,” Strelchuk says.

In collaboration with Cleveland Clinic, Helsinki-based Algorithmiq has used a superconducting quantum computer built by IBM to simulate a cancer drug that is triggered by specific types of light. “The idea is you take the drug, and it’s everywhere in your body, but it’s doing nothing, just sitting there, until there’s light on it of a certain wavelength,” says Guillermo García-Pérez, Algorithmiq’s chief scientific officer. Then it acts as a molecular bullet, attacking the tumor only at the location in the body where that light is directed.

The drug with which Algorithmiq began its work is already in phase II clinical trials for treating bladder cancers. The quantum-computed simulation, which adapts and improves on classical algorithms, will allow it to be redesigned for treating other conditions. “It has remained a niche treatment precisely because it can’t be simulated classically,” says Sabrina Maniscalco, Algorithmiq’s CEO and cofounder.

Maniscalco, who is also confident of walking away from the competition with prize money, believes the methods used to create the algorithm will have wide applications: “What we’ve done in the period of the Q4Bio program is something unique that can change how to simulate chemistry for health care and life sciences.”

Infleqtion’s entry, running on its cesium-powered machine, is an effort to improve the identification of cancer signatures in medical data. Together with collaborators at the University of Chicago and MIT, the company’s scientists have developed a quantum algorithm that mines huge data sets such as the Cancer Genome Atlas.

The aim is to find patterns that allow clinicians to determine factors such as the likely origin of a patient’s metastasized cancer. “It’s very important to know where it came from because that can inform the best treatment,” says Teague Tomesh, a quantum software engineer who is Infleqtion’s Q4Bio project lead.

Unfortunately, those patterns are hidden inside data sets so large that they overwhelm classical solvers. Infleqtion uses the quantum computer to find correlations in the data that can reduce the size of the computation. “Then we hand the reduced problem back to the classical solver,” Tomesh says. “I’m basically trying to use the best of my quantum and my classical resources.”

The Nottingham-based team, meanwhile, is using quantum computing to nail down a drug candidate that can cure myotonic dystrophy, the most common adult-onset form of muscular dystrophy. One member of the team, David Brook, played a role in identifying the gene behind this condition in 1992. Over 30 years later, Brook, Hirst, and the others in their group (which includes QuEra, a Boston company developing a quantum computer based on neutral atoms) have now quantum-computed a way in which drugs can form chemical bonds with the protein that brings on the disease, blocking the mechanism that causes the problem.

Low expectations

The entrants’ confidence might be high, but Shihan Sajeed’s is much lower. Sajeed, a quantum computing entrepreneur based in Waterloo, Ontario, is program director for Q4Bio. He believes the error-prone quantum machines the researchers must work with are unlikely to deliver on all the grand prize criteria. “It is very difficult to achieve something with a noisy quantum computer that a classical machine can’t do,” he says.

That said, he has been surprised by the progress. “When we started the program, people didn’t know about any use cases where quantum can definitely impact biology,” he says. But the teams have found promising applications, he adds: “We now know the fields where quantum can matter.”

And the developments in “hybrid quantum-classical” processing that the entrants are using are “transformational,” Sajeed reckons.

Will it be enough to make him part with Wellcome Leap’s money? That’s down to a judging panel, whose members’ identities are a closely guarded secret to ensure that no one tailors their presentation to a particular kind of approach. But we won’t know the outcome for a while; the winner, or winners, will be announced in mid-April.

If it does turn out that there are no winners, Sajeed has some words of comfort for the competitors. The goal has always been about running a useful algorithm on a machine that exists today, he points out; missing the mark doesn’t mean your algorithm won’t be useful on a future quantum computer. “It just means the machine you need doesn’t exist yet.”