PPC Automation Layering: How Smart Advertisers Combine Automation With Strategy via @sejournal, @brookeosmundson

Automation has been part of PPC management for longer than many marketers realize.

Bid adjustments, keyword expansion, and audience targeting have been guided by machine learning inside platforms like Google Ads for years. What has changed is the depth of automation now influencing campaign performance.

Smart Bidding, automated assets, dynamic targeting, and recommendation engines now handle many tasks that used to require daily manual management.

That shift has changed the job of a PPC manager.

This is where PPC automation layering becomes useful. Instead of relying on a single automated feature, marketers combine multiple tools and signals to shape how campaigns perform.

Read on to learn more about automation layering and helpful use cases to make your job easier.

What Is Automation Layering?

PPC automation layering is the strategic use of multiple automation tools and rules to manage and optimize PPC campaigns.

The main goal of PPC automation layering is to improve the efficiency and effectiveness of your PPC efforts.

In practice, advertisers use several layers of automation working together. Each layer contributes different inputs, signals, or guardrails.

Some examples of automation layering include:

- Smart Bidding strategies: Ad platforms take care of keyword bidding based on goals input within campaign settings. Examples of Smart Bidding include target CPA, target ROAS, maximize conversions, and more.

- Automated PPC rules: Ad platforms can run specific account rules on a schedule based on the goal of the rule. An example would be to have Google Ads pause time-sensitive sale ads on a specific day and time.

- PPC scripts: These are blocks of code that give ad platforms certain parameters to look out for and then have the platform take a specific action if those parameters are met.

- Google Ads Recommendations tab: Google reviews campaign performance and puts together recommendations for PPC marketers to either take action on or dismiss if irrelevant.

- Third-party automation tools: Tools such as Optmyzr and Adalysis, along with Google’s own desktop application, Google Ads Editor, can take PPC management to the next level with their automated software and additional insights.

- AI-powered analysis tools: Platforms like ChatGPT, Copilot, Claude, and Gemini all have different capabilities, from campaign analysis to keyword research, that can streamline your workflow and improve efficiency.

See the pattern here?

In every case, automation and machine learning turn the inputs PPC marketers provide into outputs. The better the inputs, the better the campaign results.
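To make the automated-rule example from the list above more concrete, here is a minimal Python sketch of the logic behind a rule that pauses time-sensitive sale ads at a set day and time. It is not Google Ads code: the `Ad` class, the `spring-sale` label, and the dates are all hypothetical stand-ins, and in a real account the same idea would be configured as an automated rule or a script inside the platform.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Ad:
    """Hypothetical stand-in for an ad in an account."""
    name: str
    labels: set[str]
    status: str = "ENABLED"


def pause_expired_sale_ads(ads: list[Ad], now: datetime, sale_end: datetime,
                           label: str = "spring-sale") -> list[str]:
    """Pause every enabled ad carrying `label` once `now` reaches `sale_end`.

    Returns the names of the ads paused on this run.
    """
    if now < sale_end:
        return []  # Sale still running: the rule does nothing.
    paused = []
    for ad in ads:
        if label in ad.labels and ad.status == "ENABLED":
            ad.status = "PAUSED"
            paused.append(ad.name)
    return paused


ads = [
    Ad("Spring Sale - 20% Off", {"spring-sale"}),
    Ad("Evergreen - Free Shipping", set()),
]
sale_end = datetime(2025, 4, 30, 23, 59)  # hypothetical end of the promotion

print(pause_expired_sale_ads(ads, datetime(2025, 4, 30, 12, 0), sale_end))  # []
print(pause_expired_sale_ads(ads, datetime(2025, 5, 1, 0, 0), sale_end))    # ['Spring Sale - 20% Off']
```

The marketer supplies the inputs (which ads count as sale ads, and when the sale ends), and the rule handles the repetitive action on schedule.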

How Has Automation Changed PPC Management?

Automation has gradually reshaped how paid media accounts are managed.

Ten to fifteen years ago, many PPC managers (including myself) spent most of their time adjusting bids, expanding keyword lists and negatives, and refining campaign structures. Success often came from tightly controlling every lever in the account.

Today, many of those levers are controlled by algorithms and automation.

Platforms automatically adjust bids in real time, assemble ad combinations dynamically, and expand targeting beyond the parameters advertisers originally set. These systems are designed to find conversions more efficiently than manual management.

In many cases, they do.

But automation introduces a new challenge. Algorithms are only as effective as the signals they receive.

A few of the automation features now built into the Google Ads platform include:

- Keyword and campaign bid management.

- Audience expansion.

- Automated ad asset creation.

- Keyword expansion.

- And much more.

Automation has essentially taken over many of the day-to-day management tasks that PPC advertisers were used to doing.

While everyone can agree that easier paid media management sounds great, the learning curve for marketers has been far from smooth.

This leads us to the next big question: Will automation replace PPC marketers?

Does Automation Replace PPC Experts?

Job layoffs and restructuring due to automation are certainly a sensitive topic.

In reality, automation has already replaced many repetitive tasks that once filled a marketer’s day. Bid adjustments, keyword expansion, and ad rotation are increasingly handled by machine learning systems.

But it’s time to settle this debate once and for all.

Automation will not replace the need for PPC marketers.

What we have seen, and will continue to see, is a shift in the role of PPC experts.

Since automation and machine learning are taking over day-to-day management, PPC experts will spend more time doing things such as:

- Analyzing data and data quality.

- Strategic decision making.

- Reviewing and optimizing outputs from automation.

- Identifying growth opportunities.

Automation and machines are great at pulling levers, making overall campaign management more efficient.

But automation tools alone cannot replace the human touch needed to build a story from data and insights.

This is the beauty of PPC automation layering.

Lean into what automation tools have to offer, which leaves you more time to become a more strategic PPC marketer.

PPC Automation Layering Use Cases

There are many ways that PPC marketers and automation technologies can work together for optimal campaign results.

Below are just a few examples of how to use automation layering to your advantage.

1. Make The Most Of Smart Bidding Capabilities

As mentioned earlier in this guide, Smart Bidding is one of the most useful PPC automation tools.

Google Ads has developed its own automated bidding strategies to take the guesswork out of manual bid management. These have been around since 2016, so this isn’t necessarily a “new” automation tool compared to others.

However, Smart Bidding is not foolproof and certainly not a “set and forget” strategy.

Smart Bidding outputs can only be as effective as the inputs given to the machine learning system.

So, how should you use automation layering for Smart Bidding?

First, pick the Smart Bidding strategy that best fits each campaign’s goal. Options include Target CPA, Target ROAS, Maximize conversions, and Maximize conversion value.

Whenever starting a Smart Bidding strategy, it’s important to put some safeguards in place to reduce the volatility in campaign performance.

This could mean setting up an automated rule to alert you whenever significant volatility is reported, such as:

- Spike in cost per click (CPC) or cost.

- Dip in impressions, clicks, or cost.

Either of these scenarios could be due to learning curves in the algorithm, or it could be an indicator that your bids are too low or too high.

For example, say a campaign has a set target CPA goal of $25, but then all of a sudden, impressions and clicks fall off a cliff.

This could mean that the target CPA is set too low, and the algorithm has throttled ad serving, showing ads only to the individual users it thinks are most likely to purchase.

Without having an alert system in place, campaign volatility could go unnoticed for hours, days, or even weeks if you’re not checking performance in a timely manner.
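The kind of check such an alert performs can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a platform feature: the metric names, the trailing-average baseline, and the 50% threshold are hypothetical choices, and the daily numbers are made up to mirror the target CPA scenario above.

```python
from statistics import mean

# Metrics to watch for spikes and dips, following the two bullet points above.
SPIKE_METRICS = ("cpc", "cost")
DIP_METRICS = ("impressions", "clicks", "cost")


def volatility_alerts(history: list[dict], today: dict,
                      threshold: float = 0.5) -> list[str]:
    """Compare today's metrics with the trailing average of `history`.

    `threshold` is a fractional change: 0.5 flags moves of 50% or more.
    """
    alerts = []
    for metric in ("cpc", "cost", "impressions", "clicks"):
        baseline = mean(day[metric] for day in history)
        if baseline == 0:
            continue  # No baseline to compare against.
        change = (today[metric] - baseline) / baseline
        if metric in SPIKE_METRICS and change >= threshold:
            alerts.append(f"{metric} spiked {change:+.0%} vs. trailing average")
        elif metric in DIP_METRICS and change <= -threshold:
            alerts.append(f"{metric} dipped {change:+.0%} vs. trailing average")
    return alerts


# Hypothetical week of stable performance, then a day where delivery collapses.
history = [{"cpc": 1.25, "cost": 500.0, "impressions": 10000, "clicks": 400}] * 7
today = {"cpc": 1.30, "cost": 91.0, "impressions": 2000, "clicks": 70}

for alert in volatility_alerts(history, today):
    print(alert)
```

With these sample numbers, cost, impressions, and clicks are all flagged as dips while CPC stays within range. That pattern points toward throttled delivery rather than rising auction prices.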

2. Interact With Recommendations & Insights To Improve Automated Outputs

The goal of the ad algorithms is to get smarter every day and improve campaign performance.

But again, automated outputs are only as good as the input signals the algorithms have been given.

Many experienced PPC marketers tend to write off the Google Ads Recommendations or Insights tab due to perceptions of receiving irrelevant suggestions.

However, these systems were designed to learn from marketers’ input so they can optimize more effectively.

Just because a recommendation is given on the platform does not mean you have to implement it.

The beauty of this tool is you have the ability to dismiss the opportunity and then tell Google why you’re dismissing it.

There’s even an option for “this is not relevant.”

Be willing to interact with the Recommendations and Insights tab on a weekly or bi-weekly basis to help better train the algorithms for optimizing performance based on what you signal as important.

Regularly reviewing recommendations, rather than ignoring them completely, creates another layer of automation feedback inside the account.

3. Automate Competitor Analysis With Tools

It’s one thing to ensure your ads and campaigns are running smoothly at all times.

Next-level strategy is using automation to keep track of your competitors and what they’re doing.

Multiple third-party tools have competitor analysis features to alert you on items such as:

- Keyword coverage.

- Content marketing.

- Social media presence.

- Market share.

- And more.

Keep in mind that most of these tools require a paid subscription, but many are useful in automation areas beyond competitor analysis.

Some of these tools include Semrush, Moz, Google Trends, and Klue.

The goal is not simply to keep up with your competitors and copy what they’re doing.

Setting up automated competitor analysis helps you stay informed, so you can reinforce your market positioning or respond in a way that sets you apart from competitor content.

4. Using LLM Platforms To Accelerate PPC Analysis

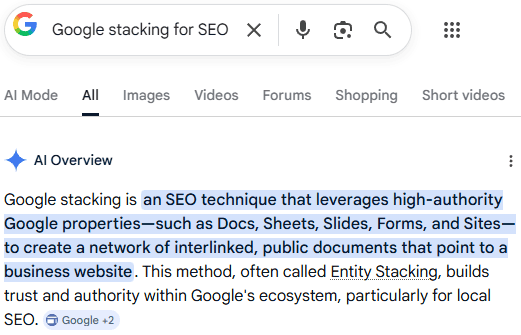

A newer layer of automation is emerging through large language model platforms such as ChatGPT, Claude, Gemini, and Copilot.

It’s important to note that these platforms do not control campaign delivery. Instead, they help marketers process and interpret information faster.

LLM platforms can assist with tasks such as reviewing exported performance data, identifying patterns across campaigns, or summarizing performance changes between reporting periods.

For example, marketers can upload campaign reports and ask targeted questions about cost trends, conversion performance, or impression share shifts. The model can quickly highlight patterns that might otherwise require significant manual analysis.
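Pre-computing the comparison before handing a report to an LLM often makes the question sharper. The Python sketch below summarizes period-over-period CPA changes from an exported report and assembles them into a prompt. The column names (`campaign`, `period`, `cost`, `conversions`) and the sample figures are hypothetical, and no particular LLM API is assumed; the resulting text is simply pasted or uploaded into whichever tool you use.

```python
import csv
import io

# Hypothetical export: one row per campaign per reporting period.
EXPORT = """campaign,period,cost,conversions
Brand,previous,1200.00,80
Brand,current,1500.00,75
Generic,previous,3000.00,60
Generic,current,2800.00,70
"""


def period_summary(csv_text: str) -> list[str]:
    """Return one line per campaign describing how CPA changed.

    Assumes every campaign has a 'previous' and a 'current' row
    with at least one conversion in each.
    """
    by_campaign: dict[str, dict[str, dict]] = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        by_campaign.setdefault(row["campaign"], {})[row["period"]] = row

    lines = []
    for campaign, periods in by_campaign.items():
        prev, curr = periods["previous"], periods["current"]
        prev_cpa = float(prev["cost"]) / float(prev["conversions"])
        curr_cpa = float(curr["cost"]) / float(curr["conversions"])
        change = (curr_cpa - prev_cpa) / prev_cpa
        lines.append(
            f"{campaign}: CPA {prev_cpa:.2f} -> {curr_cpa:.2f} ({change:+.1%})"
        )
    return lines


prompt = (
    "Explain the likely drivers of these CPA changes and what to check next:\n"
    + "\n".join(period_summary(EXPORT))
)
print(prompt)
```

For the sample data, the summary shows Brand CPA rising from 15.00 to 20.00 and Generic CPA falling from 50.00 to 40.00, which gives the model a focused starting point instead of a raw spreadsheet.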

LLMs can also support areas like keyword expansion, creative brainstorming, and reporting summaries. When paired with platform automation features such as Smart Bidding or responsive ad formats, this approach helps advertisers produce stronger inputs for the algorithm to evaluate.

These tools should not replace human analysis, but they can accelerate many of the workflows surrounding campaign management.

In Summary

Automation now shapes nearly every part of paid media management.

Because of this, the role of the PPC practitioner continues to evolve.

Instead of managing every setting manually, marketers increasingly guide how automation systems operate. That guidance comes through better signals, stronger inputs, and thoughtful campaign structures.

Automation layering helps bring those elements together.

By combining platform automation, scripts, rules, external tools, and AI-driven analysis, advertisers can create a system where automation improves efficiency without losing control over their accounts.

The platforms may be running the mechanics of campaign delivery, but the direction still comes from the marketer.

Featured Image: Anton Vierietin/Shutterstock

FAQ

What are some key benefits of PPC automation layering?

PPC automation layering enhances the efficiency and effectiveness of PPC campaign management. It combines multiple automation tools and strategies like Smart Bidding, automated PPC rules, PPC scripts, and third-party platforms. By leveraging these technologies, marketers can focus on higher-level strategic tasks while the system manages routine tasks, such as keyword bidding, campaign bid management, and data analysis.

Will automation replace the need for PPC experts?

Automation will not replace PPC experts, but it will shift their role over time. While automation can handle many day-to-day management tasks like bid adjustments and ad scheduling, PPC experts should shift their focus to strategic decision-making, data analysis, and optimizing the outputs from automation tools. Human oversight remains crucial for effective campaign management.

What are some practical use cases for PPC automation layering?

Practical use cases for PPC automation layering include:

- Smart Bidding strategies: Choosing the best bidding strategy (e.g., Target CPA, Target ROAS) and setting up rules to monitor performance volatility.

- Recommendations & Insights: Regularly interacting with the Google Ads Recommendations and Insights tab to refine automated outputs.

- Competitor Analysis: Using third-party tools like Semrush, Moz, or Google Trends to automate competitor analysis, staying informed on market positioning without manually tracking competitors.

These strategies help optimize campaign results while allowing more time for strategic analysis and decision-making.