Why Google Ads Fails B2B (And How to Fix It)

This post was sponsored by Vehnta. The opinions expressed in this article are the sponsor’s own.

Why isn’t Google Ads working for my B2B marketing campaigns?

How do I improve lead quality in B2B Google Ads campaigns?

What’s the best way to scale Account-Based Marketing (ABM) using Google Ads?

The good news: Google Ads isn’t broken in B2B; it’s just being used wrong.

The platform works brilliantly for consumer brands because their strategies align with consumer behavior, but B2B operates in an entirely different universe with complex buying journeys involving multiple stakeholders.

This guide will help you modify Google Ads to perform better for B2B paid marketing campaigns.

Issue 1: AI Automation Optimizes For The Wrong B2B Objectives

Google’s AI-powered automation is currently the biggest challenge for B2B advertisers.

Why? The actions that Google Ads treats as engagement signals do not match how B2B buyers behave, so the AI misjudges which ads are succeeding and optimizes in the wrong direction.

For example:

- Performance Max campaigns optimize for conversion volume rather than opportunity quality, which can double lead volume while halving lead quality.

- Google Smart Bidding tends to attract users who are likely to take lightweight actions, such as downloads or sign-ups; these actions are unlikely to result in qualified B2B buyers, leading to low-value conversions and wasted spend.

How To Fix Google Ads AI Misalignment For B2B PPC

Phase 1: Implement Strategic AI Controls

- Disable automatic audience expansion in Search campaigns to maintain targeting precision.

- Use Target ROAS instead of Target CPA, setting values based on actual customer lifetime value.

- Create separate campaigns for different buying stages with stage-appropriate conversion goals.

- Start Performance Max with limited budgets (20-30% of total spend) until optimization stabilizes.
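To make the Target ROAS advice concrete, here is a minimal sketch of how conversion values could be derived from customer lifetime value. The close rates, the lifetime value, and the acceptable cost per conversion are illustrative assumptions, not benchmarks; substitute figures from your own CRM.

```python
from dataclasses import dataclass


@dataclass
class ConversionAction:
    name: str
    close_rate: float  # assumed share of these conversions that become customers


def conversion_value(action: ConversionAction, lifetime_value: float) -> float:
    """Expected revenue of one conversion: close rate times customer lifetime value."""
    return action.close_rate * lifetime_value


def target_roas(value_per_conversion: float, max_cost_per_conversion: float) -> float:
    """Target ROAS as a ratio: conversion value divided by the cost you accept for it."""
    if max_cost_per_conversion <= 0:
        raise ValueError("max_cost_per_conversion must be positive")
    return value_per_conversion / max_cost_per_conversion


# Illustrative numbers only (hypothetical); replace with your own data.
LIFETIME_VALUE = 60_000.0
actions = [
    ConversionAction("whitepaper_download", close_rate=0.005),
    ConversionAction("demo_request", close_rate=0.10),
]

# One value per conversion action, so bidding can tell a download from a demo.
values = {a.name: conversion_value(a, LIFETIME_VALUE) for a in actions}
demo_roas = target_roas(values["demo_request"], max_cost_per_conversion=1_500.0)

for name, value in values.items():
    print(f"{name}: {value:,.0f}")
print(f"demo_request target ROAS: {demo_roas:.0%}")
```

With these assumed inputs, a demo request is worth roughly twenty times a whitepaper download, which is the gap value-based bidding needs to see in order to stop chasing lightweight actions.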

Phase 2: Configure B2B-Specific Signals

- Upload customer lists with consistent firmographic data as audience signals.

- Set up similar audiences based on highest-value customers, not highest-converting leads.

- Monitor search terms weekly and add negatives aggressively.

- Use custom conversion goals weighted toward pipeline contribution, not form submissions.
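The weekly search-term review can be partly scripted. The sketch below assumes you have exported a search terms report and joined it with a count of sales-qualified leads from your CRM; the field names, the spend threshold, and the blocklist words are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class SearchTermRow:
    term: str
    cost: float
    clicks: int
    qualified_leads: int  # leads accepted by sales, joined in from the CRM (assumed)


def negative_candidates(rows, min_cost=50.0,
                        blocklist=("free", "jobs", "salary", "course")):
    """Return terms that spent at least min_cost without a qualified lead,
    or that contain a word from the blocklist."""
    flagged = []
    for row in rows:
        words = row.term.lower().split()
        wasted = row.cost >= min_cost and row.qualified_leads == 0
        off_topic = any(word in blocklist for word in words)
        if wasted or off_topic:
            flagged.append(row.term)
    return flagged


# Hypothetical sample rows for illustration.
rows = [
    SearchTermRow("erp software for manufacturers", cost=320.0, clicks=40, qualified_leads=3),
    SearchTermRow("free erp template", cost=12.0, clicks=9, qualified_leads=0),
    SearchTermRow("what is erp", cost=85.0, clicks=30, qualified_leads=0),
    SearchTermRow("erp consultant salary", cost=8.0, clicks=4, qualified_leads=0),
]

flagged = negative_candidates(rows)
print(flagged)
```

Treat the output as a review list rather than an automatic exclusion list; a term with no qualified leads this week may still belong to a long buying cycle.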

The Easy Way

Vehnta accelerates campaign optimization, enabling precise targeting and performance tracking across your entire B2B account list.

With its Similarity feature and AI-powered Keyword & Ad Generator, you can create high-performing, B2B-optimized campaigns in minutes, avoiding wasted spend on low-value conversions.

Insights are available from day one, and campaigns can be optimized manually or with AI. Plus, with seamless Google Ads integration and automated multilingual message diversification at scale, Vehnta lets you go to market faster and more effectively.

What You Get

- Faster launch cycles.

- More qualified leads.

- Better performance.

- Scalable impact without the usual manual overhead.

Campaigns are built on intelligent targeting and high-quality inputs, so optimization starts smart and improves from there.

The Result

- Reduced wasted budget on low-value conversions like downloads or sign-ups.

- Focused paid ad spend on high-intent, high-fit prospects.

Issue 2: Generic Targeting Wastes Budget On Wrong Audiences

Most B2B campaigns target broad demographics rather than specific firmographics, which wastes spend on poor-fit prospects.

Traditional metrics create a “metrics mirage” where campaigns focused on clicks draw unqualified leads instead of high-intent decision-makers.

Additionally, broad messaging often fails to resonate across diverse markets, whereas precise targeting is effective at scale.

One multinational retailer with 500+ locations across four countries cut costs by 60% and tripled engagement by implementing hyper-local, multilingual campaigns tailored to specific regions.

How To Fix PPC Ad Targeting Waste

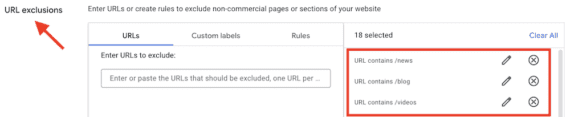

Phase 1: Implement Firmographic Precision

Phase 2: Configure Account-Level Monitoring

- Set up cross-domain tracking to monitor multiple touchpoints from the same organization.

- Use UTM parameters with company identifiers to track organizational buying patterns.

- Create audiences based on account-level engagement patterns.
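As one way to carry a company identifier in UTM parameters, the sketch below appends tags to a landing page URL using Python's standard library. Putting the account identifier in `utm_content` is an assumption (any UTM field your analytics setup reserves for it would work), and the campaign name and `acct_0042` ID are made up for illustration. An internal account ID is generally a safer choice than a readable company name.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_url(base_url: str, campaign: str, account_id: str) -> str:
    """Append UTM parameters, including an account identifier, to a landing page URL.

    Existing query parameters are preserved; UTM keys are overwritten if present.
    """
    parts = urlsplit(base_url)
    query = dict(parse_qsl(parts.query))
    query.update({
        "utm_source": "google",
        "utm_medium": "cpc",
        "utm_campaign": campaign,
        "utm_content": account_id,  # assumed slot for the company identifier
    })
    return urlunsplit(parts._replace(query=urlencode(query)))


tagged = tag_url("https://example.com/demo?ref=nav", "abm_manufacturing_q3", "acct_0042")
print(tagged)
```

Grouping sessions by that identifier in your analytics tool then shows how many touchpoints came from the same organization, which is the account-level view the steps above describe.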

The Easy Way

Vehnta’s Similarity engine leverages a 500M+ company database to identify prospects that match your best customers with surgical precision.

Simply:

- Insert one or more existing customers or your Ideal Customer Profile (ICP) into the Similarity Engine.

- The Similarity Engine analyzes economic data, industry sectors, and semantic relevance to find similar companies.

This approach makes targeting 10x faster than manual audience research.

Additionally, it provides precision that extends far beyond basic lookalike audiences.

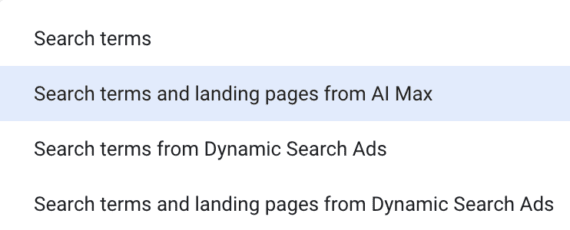

Then, the Search Terms feature provides full visibility into searches performed by your target audience, organized by company and location for actionable insights.

What You Get

- A radically faster, more precise way to build high-value target lists.

- Prospect lists that closely mirror your best customers, aligned to your ICP from day one.

- Full visibility into the actual search behavior of those companies.

The Result

- Smarter segmentation.

- Faster activation.

- Better-performing campaigns fueled by insight, not assumptions.

Issue 3: Marketing/Sales Alignment Problems

B2C metrics fail to capture the complexity of B2B interactions, resulting in a fundamental disconnect between marketing activities and sales outcomes.

Most B2B marketing teams operate under the myth that success requires high lead volumes, but this creates qualification bottlenecks since most B2B sales teams can effectively pursue only a few qualified opportunities simultaneously.

A quality-over-quantity approach delivers results: an enterprise SaaS provider targeting only $1B+ companies achieved a 70% cost reduction and 3x engagement by focusing on ultra-precise targeting aligned with sales capacity.

Steps To Fix Marketing/Sales Misalignment

Align Campaigns with Sales Capacity

- Calculate your sales team’s true capacity for working on qualified opportunities.

- Set monthly lead generation goals that align with sales capacity, rather than arbitrary growth targets.

- Develop lead scoring systems that qualify prospects before they reach the sales team.

- Implement progressive profiling to gather firmographic information during conversion.
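The capacity and scoring steps above can be sketched in a few lines. Everything numeric here is an assumption for illustration: the rep count, opportunities per rep, lead-to-opportunity rate, scoring weights, target industries, and the qualification threshold should all come from your own sales data.

```python
import math

TARGET_INDUSTRIES = {"manufacturing", "logistics"}  # hypothetical ICP industries
QUALIFY_THRESHOLD = 60  # hypothetical cut-off for passing a lead to sales


def monthly_lead_target(reps: int, opportunities_per_rep: int,
                        lead_to_opportunity_rate: float) -> int:
    """Leads needed per month to fill, but not exceed, sales capacity."""
    if not 0 < lead_to_opportunity_rate <= 1:
        raise ValueError("lead_to_opportunity_rate must be in (0, 1]")
    capacity = reps * opportunities_per_rep
    return math.ceil(capacity / lead_to_opportunity_rate)


def score_lead(lead: dict) -> int:
    """Add up points for firmographic fit and intent; weights are illustrative."""
    score = 0
    if lead.get("employees", 0) >= 500:
        score += 40
    if lead.get("industry") in TARGET_INDUSTRIES:
        score += 30
    if lead.get("job_level") in {"director", "vp", "c_level"}:
        score += 20
    if lead.get("requested_demo"):
        score += 10
    return score


target = monthly_lead_target(reps=6, opportunities_per_rep=5, lead_to_opportunity_rate=0.25)

lead = {"employees": 1200, "industry": "manufacturing",
        "job_level": "manager", "requested_demo": True}
qualified = score_lead(lead) >= QUALIFY_THRESHOLD

print(target, score_lead(lead), qualified)
```

The fields the scorer reads (company size, industry, job level) are the kind of firmographic data progressive profiling would collect over successive conversions.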

Optimize for Opportunity Quality

The Easy Way

Vehnta’s Insight Collection provides real-time business intelligence that automatically qualifies prospects, focusing on high-quality opportunities from pre-qualified target companies instead of generating hundreds of unqualified leads monthly.

The VisionSphere function provides a ranked list of companies most interested in your business, calculated by proprietary algorithms reflecting genuine buying interest.

What You Get

- Consistently higher-quality pipeline, driven by real-time insight into which companies actually show buying intent.

- Focused efforts on prospects that are already aligned with your offering.

- A ranked view of interested accounts.

- Clarity on where to prioritize and when to engage.

- More efficient sales motions.

- Stronger conversion rates.

- Faster deal velocity.

All the intelligence you need, without the noise.

Issue 4: Scalability Of ABM Approaches

The challenge of scaling Account-Based Marketing through Google Ads lies in managing hundreds of target accounts while maintaining surgical precision.

Traditional ABM approaches require significant manual effort and dedicated specialists, making it difficult to achieve scale without compromising quality.

However, this complexity can be overcome: a global manufacturer targeting 4,000+ plant locations reduced spend from $160K to $40K while generating 2.5x more qualified leads through automated ABM systems.

How To Fix Account-Based Marketing (ABM) Scalability

Phase 1: Implement Automated Account Intelligence

- Use advanced similarity algorithms to identify high-value prospects matching your best customers.

- Automate audience research and list-building processes that typically consume weeks of specialist time.

- Deploy AI-powered campaign creation that generates optimized targeting in minutes.

- Set up automated monitoring across hundreds of target accounts without additional team members.

Phase 2: Create Scalable Precision Systems

- Build campaigns that automatically diversify messaging across multiple languages.

- Implement systems that provide full visibility into search behavior across target companies.

- Use proprietary algorithms to rank companies by genuine buying interest.

- Deploy real-time optimization that eliminates manual analysis while maintaining quality.

The Easy Way

Vehnta accelerates campaign execution through a truly scalable ABM approach, enabling accurate targeting and real-time performance tracking across your entire B2B account list.

Integrated AI Campaign Generation allows marketers to generate highly relevant, B2B-tailored campaigns in minutes, not days, while minimizing budget waste on low-intent traffic. From day one, teams gain access to actionable insights and can fine-tune performance manually or through automated optimization.

Thanks to seamless Google Ads integration and automated multilingual message diversification at scale, Vehnta eliminates the operational friction that often stalls ABM at the execution phase.

What you get: ABM that finally matches the speed and scale of your growth ambitions, without the typical overhead. Campaigns go live faster, reach the right accounts with precision, and continuously improve through data-driven optimization. Marketing teams save time, reduce costs, and drive more qualified pipeline, while maintaining control and strategic clarity. The complexity is gone; the impact remains.

The Strategic Transformation: From Volume to Value

The transformation from failing to succeeding with B2B Google Ads requires fundamentally rethinking how paid search fits into complex, multi-stakeholder B2B sales processes. Companies achieving breakthrough results abandon volume-based B2C tactics for precision-focused, account-based strategies that create budget efficiency and market dominance within targeted segments.

The competitive opportunity is significant: while competitors chase high-volume keywords and vanity metrics, strategic B2B marketers focus on qualified accounts and pipeline impact using advanced targeting intelligence and automated optimization systems.

Ready to transform your B2B Google Ads approach?

Discover how Vehnta works and achieve precision at scale: cut costs, improve targeting, and align every campaign with how your customers actually buy.

Book a demo: boost leads, cut costs.

Image Credits

Featured Image: Image by Vehnta. Used with permission.