Ask An SEO: How Can I Turn Low-Converting Traffic Into High-Value Sessions? via @sejournal, @kevgibbo

This week’s Ask an SEO question comes from an ecommerce site owner who’s experiencing a common frustration:

“Our ecommerce site has decent traffic but poor conversion rates. What data points should we be analyzing first, and what are two to three quick conversion rate optimization (CRO) wins that most companies overlook?”

This is a great question. Having good traffic but poor conversion rates is really frustrating for ecommerce site managers.

You’ve successfully managed to get hundreds or even thousands of people onto your landing pages, but only a tiny proportion of them turn into paying customers.

What’s going wrong, and what can you do about it?

I’ve broken down my tips as follows:

- Start with your bigger picture goals.

- Double-check your targeting.

- Data points to analyze.

- Simulate the user journey.

- Quick CRO wins.

Thinking About The Bigger Picture First

Before answering your question, I think it’s valuable to take a step back and think about your approach to running your site – and what your goals are.

People often get lots of low-quality traffic for the following kinds of reasons:

- They’re attracting the wrong kinds of people.

- They’re using paid ads ineffectively.

- The content on the site gets clicks, but doesn’t solve visitors’ needs.

- The site is confusing, unclear, or even annoying to use.

For me, conversion is always built on the same key fundamentals:

- Quality over quantity: There’s no value in having millions of visitors if none of them convert. I’ve worked on ecommerce sites where we implemented changes that made traffic drop dramatically. However, the quality of the remaining traffic was much higher, meaning conversion rates – and revenue – soared.

- Focus on user experience (UX): It’s really important to understand the user journey from inception to conversion. What’s helping people navigate your site, and what’s hindering them? Often, this is simply about returning to the basics of UX. High-value sessions come from relevance, ease, and trust – all of which are fully within your control.

So, before making changes, I’d encourage you to step back and think about your goals and objectives for the site. Everything else will feed into that.

What’s Realistic?

It’s helpful to have a benchmark for what your conversion rate should be.

According to Shopify data, the average ecommerce site conversion rate is 1.4%. A very good rate is 3.2% or above, while very few sites hit more than 5%.

Double-Check Your Targeting

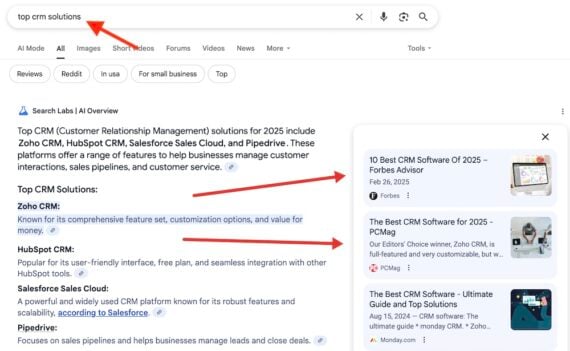

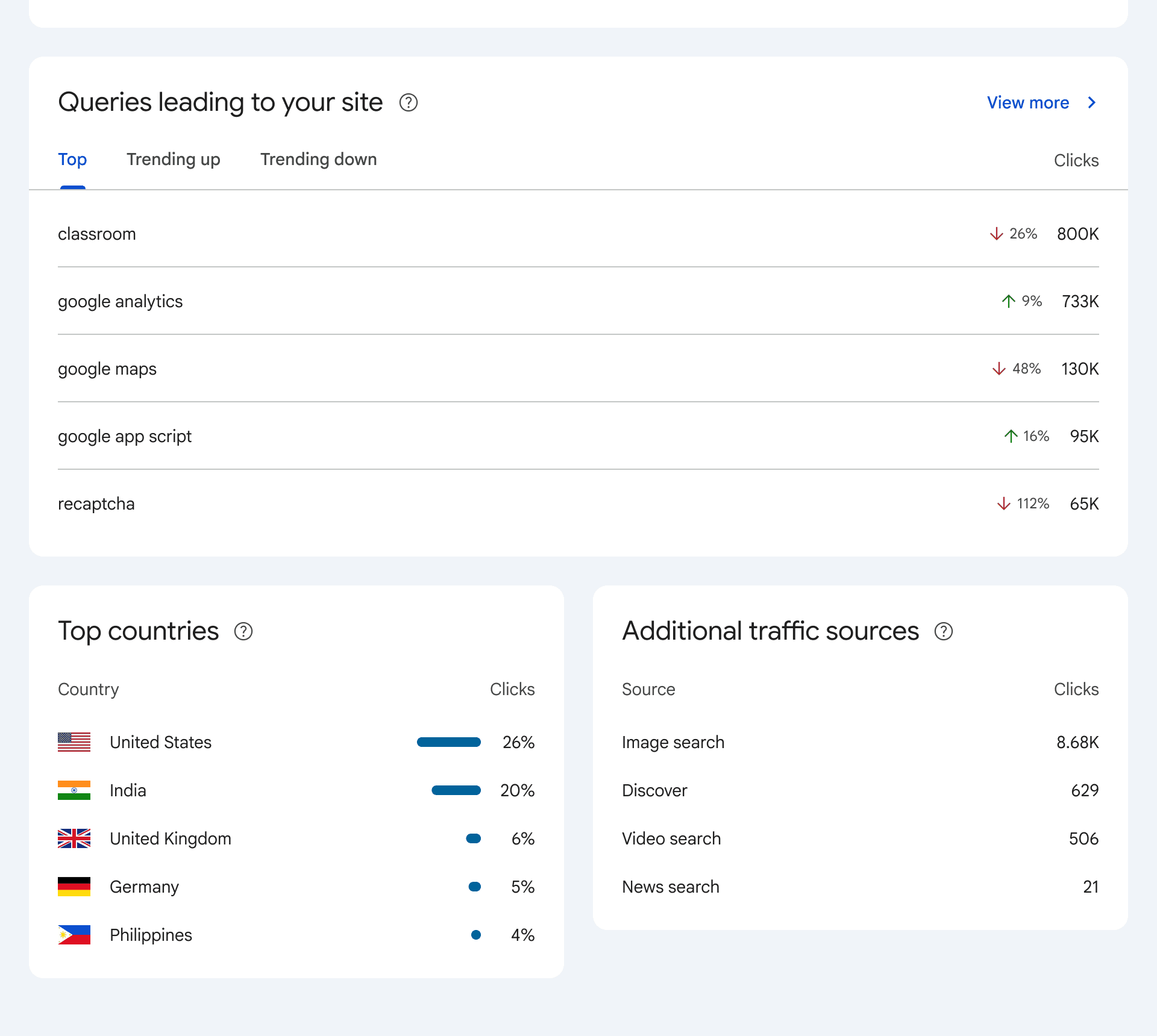

A common reason people get high traffic but low conversions is due to problems with their targeting. Essentially, they’re attracting the wrong kinds of site visitors.

For example, you might run a site selling tennis memorabilia. But most of the traffic you get is from people searching for tickets to tennis tournaments. As a consequence, most visitors bounce.

If this is the case, it’s time to rethink your SEO. Are you ranking for the right keywords? Are your landing pages aligned with the top queries for those search terms? Making changes here can make a big difference.

However, if your targeting is correct but conversion is still off, it’s time to look into CRO.

5 Kinds Of Conversion Rate Data To Analyze

By analyzing how people navigate your site, you can start to build a picture of how they’re using it – and which features of your site or the user journey are turning visitors off.

If you’re using a store builder like Shopify, Wix, or Squarespace, you should have access to quite a lot of CRO data within the dashboard. On older sites, it can be a bit trickier to figure these things out.

There are lots of metrics that can give you insights into conversion rates. But the following information is often most telling:

1. User Behavior Metrics

- Bounce rate and exit rate: This is especially important for key pages (such as product and checkout).

- Scroll depth: Are users seeing your calls to action and product info?

- Heatmaps: Are users interacting with intended elements?

- Entry points: Are there commonalities between entrances for users who aren’t converting versus those who are converting? If so, this may indicate a specific issue with certain user journeys.

2. Conversion Funnel Drop-Off

- Abandonment: Where are users abandoning the funnel (e.g., product page → add to cart → checkout)?

- Granularity: I’d also recommend looking at abandonment rates for each step.

3. Device & Browser Performance

- Device: Conversion rate by device (mobile often underperforms).

- Operating system: Technical glitches in specific browsers/OS versions can quietly hurt conversions.

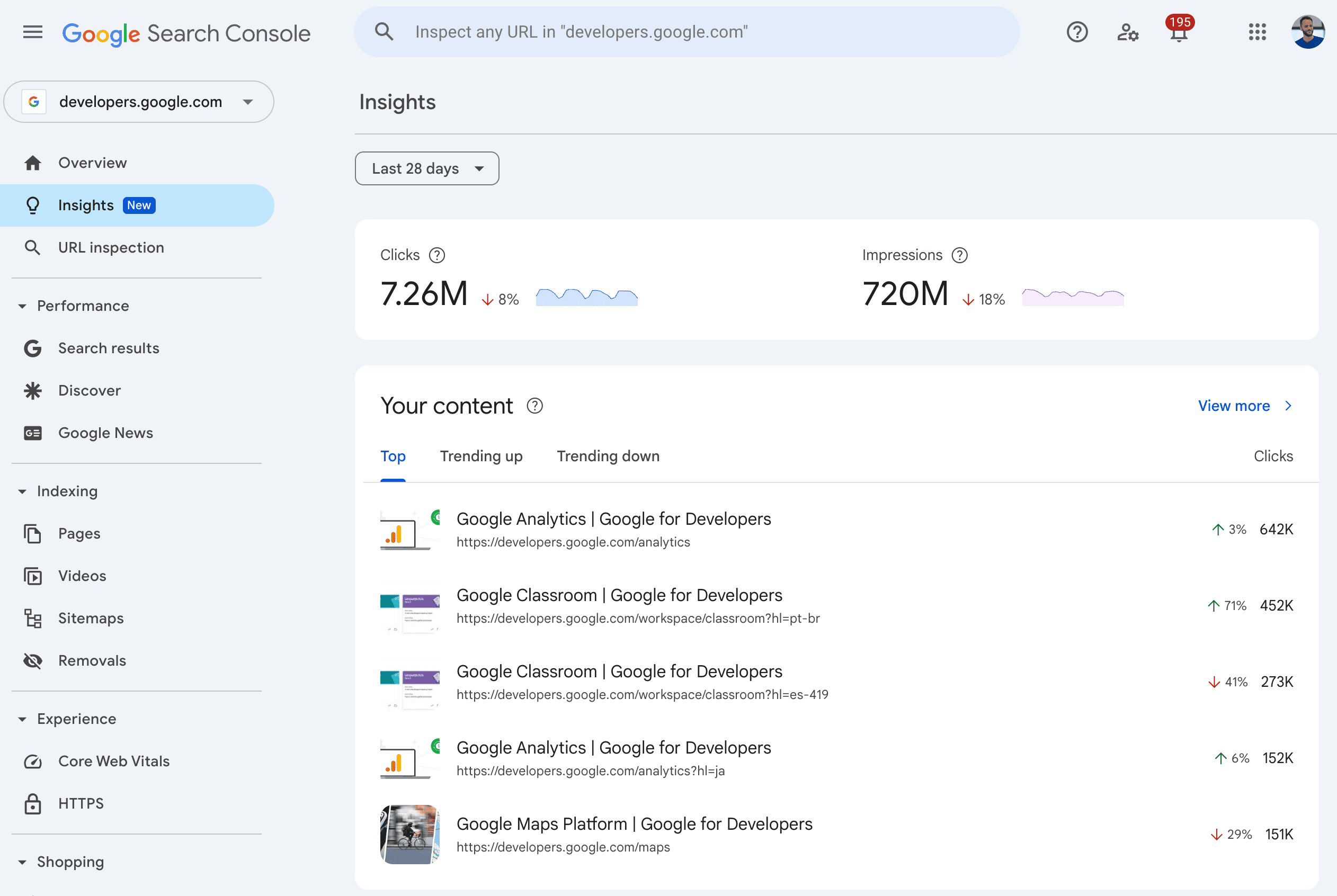

4. Site Speed & Core Web Vitals

- Page load time: This directly affects conversions, especially on mobile.

- Track it: Use tools like Google PageSpeed Insights or Lighthouse.

5. On-Site Search Behavior

- What are people searching for?

- Are searches returning relevant results?

- High search exit rate often signals poor relevance or UX.

This can seem like a lot of work! However, what you’re really looking for is a basic benchmark for each of the above points that you can plug into a spreadsheet.

You only need to gather this data once. Then, it’s just a case of seeing how changes you make affect those scores.

For example, say you have a high cart abandonment rate of 90%. You might decide to make some simple changes to the process (e.g., letting users check out as a guest). You’ll then be able to see what effect your change has had.

Simulate The User’s Journey

This is all about putting yourself in your users’ shoes. I’m often surprised by how few ecommerce site owners do this, yet you can’t understand what’s going wrong if you don’t use the site like a user would.

Simulating user journeys often exposes glaring usability issues.

For example, it’s quite common to land on a category page for, say, sports T-shirts, and find it’s full of broken links. You click on a T-shirt that looks good, but it leads to a 404. That’s such a turn-off to potential customers.

There are, of course, endless possible ways that people can navigate your site. I’d prioritize a handful of your most popular products and try to imagine how people would go through the process of buying them.

Here are some of the things to look out for:

Landing Page (First Impression)

- Is the value proposition clear within five seconds?

- Are headlines concise and benefit-driven?

- Is there a clear CTA above the fold?

- Are distractions minimized (pop-ups, autoplay, clutter)?

Navigation And Search

- Is site navigation intuitive and consistent?

- Can users find products in three clicks or fewer?

- Are filters/sorting options clear and responsive?

Category Pages

- Is key info shown (price, reviews, quick add)?

- Is the layout clean (think about devices here, mobile responsiveness, font size, etc.)?

- Are products visible above the fold?

Product Detail Pages

- Are product titles, descriptions, and photos compelling and complete?

- Is the price, shipping, and returns information visible without scrolling?

- Are reviews and ratings visible and credible?

- Is the “Add to Cart” button obvious and persistent?

Cart And Checkout

- Is the cart editable (quantity, remove item)?

- Are total costs (including shipping/tax) shown upfront?

- Can users check out as a guest?

- Are there too many form fields? (Trim non-essentials.)

- Are payment options clearly presented and working?

Speed

Quick CRO Wins That Are Often Overlooked

Conversion rate optimization doesn’t always require a root-and-branch site upgrade.

Here are some simple tweaks you can make that can be surprisingly impactful.

Improve Product Page Microcopy And Visual Hierarchy

If a user lands on a product page, it’s crucial to communicate key information to them. Yet, for many products, people have to scroll below the fold to find the information they need.

- Show total price, shipping, and returns at the top of the page.

- Have a clear image of the product (you’d be amazed, but this doesn’t always happen).

- Spell out the product name, color, type, and other information.

- Add urgency (“Only 3 left!”), real-time interest (“27 people viewed this today”), or social proof (UGC, ratings) near the CTA.

Make It Easy To Buy

It can sometimes be surprisingly difficult for people to know how to actually buy things on ecommerce sites, particularly when using mobile. I’d recommend:

- Making the “Add to Cart” button sticky on mobile. Make sure it’s in a clear, bold, contrasting color.

- Add subtle animations or color shifts to draw attention.

- Show trust badges (e.g., secure checkout, money-back guarantee).

Make It Easier To Find Items

Any ecommerce site today should have a search bar where people can look for products. Help people find products by offering auto-suggestions with images and categories.

I’d also recommend tracking no-results queries and fixing them with redirects or better tagging. You might also want to promote high-converting products in the top results.

Simplify The Checkout Experience

A poor checkout experience can be a real killer for conversion. The priority here is almost always about making things as easy as possible for buyers.

- Remove non-critical fields (phone number, company name).

- Offer guest checkout as default.

- Add progress indicators to reduce perceived friction.

Use Exit-Intent Offers Wisely

Exit-intent technology can be very helpful, at least on some kinds of websites.

However, it’s important to use it thoughtfully and appropriately (what makes sense on a fast-fashion website won’t look as good on a luxury goods store).

Instead of broad discounts, use behavioral targeting. Here are some options:

- Offer a free shipping incentive only to high-cart-value exits.

- Show email capture pop-ups only after a period of inactivity or product page scrolling.

- Use exit-intent popups with tailored offers (e.g., “Complete your order now and get 10% off”).

- Send a three-part abandoned cart email flow (reminder, offer, scarcity, i.e., “Items going fast!”)

A Final Note: Test It First

Last but not least, I’d always recommend A/B testing before rolling out whole site changes.

If you’ve tweaked a certain part of the user journey or the layout of a landing page, trial it for a week or so and see what results you get.

This avoids making damaging changes that harm conversion rates (and take a long time to rectify).

Preaching To The Converters

I hope these ideas for converting more of your ecommerce site’s visitors have helped.

As I’ve shown, there are tons of potential CRO techniques you can use, and it can get a bit overwhelming.

However, it’s often more straightforward than it seems, and you can often start with small steps that make a difference.

One of the reasons ecommerce site management can be so rewarding is the ability to experiment and see how small changes can make a big difference. Good luck!

More Resources:

Featured Image: Paulo Bobita/Search Engine Journal