Why Chinese manufacturers are going viral on TikTok

Since the video was posted earlier this month, millions of TikTok users have watched as a young Chinese man in a blue T-shirt sits beside a traditional tea set and speaks directly to the camera in accented English: “Let’s expose luxury’s biggest secret.”

He stands and lifts what looks like an Hermès Birkin bag, one of the world’s most exclusive and expensive handbags, before gesturing toward the shelves filled with more bags behind him. “You recognize them: Hermès, Louis Vuitton, Prada, Gucci—all crafted in our workshops.”

“But brands erase ‘Made in China’ from the tags,” he continues. “Same leather from their tanneries, same hardware from their suppliers, same threads they call luxury. Master artisans they never credit. We earn pennies; they make millions. That is unfair—to us, to you, to anyone who values honesty.”

He ends by urging viewers to buy directly from his factory.

Video “exposés” like this—where a sales agent breaks down the material cost of luxury goods, from handbags to perfumes to appliances—are everywhere on TikTok right now.

Some videos claim, for example, that a pair of Lululemon leggings costs just $4 to make. Others show the scale and precision of Chinese manufacturing: Creators walk through spotless factory floors, passing automated assembly lines and teams of workers at clean, orderly stations. Some factories identify themselves as suppliers—or former suppliers—for brands like Dyson, Under Armour, and Victoria’s Secret.

Whether or not their claims are true, these videos and their virality speak to a new, serious push by Chinese manufacturers to connect directly with American consumers. Even with tariffs, many of the products pitched in the videos would still be significantly cheaper than buying from the name brands. (MIT Technology Review did not verify the claims made in the videos about where products are produced and how much the manufacturing costs; Lululemon, Hermès, Kering (the owner of Gucci), and LVMH (the owner of Louis Vuitton) did not reply to requests for comment.)

Fueled by fears of losing international business and frustration over Trump-era tariffs, factories are turning their production lines into content studios to market themselves—filming leather workshops and sewing lines, offering warehouse tours. What began as the work of a few frustrated sourcing agents has morphed into a full-blown genre that’s part protest, part marketing plan, part survival strategy.

It’s “a collective search for a workaround” to the tariffs, says Ivy Yang, an e-commerce expert and founder of the New York–based consulting firm Wavelet Strategy. “Smaller platforms and sourcing agents are jumping in, offering ‘direct from factory’ content on social media as an alternative supply route.”

Cutting out the middleman

The Chinese creators sharing insights into sourcing materials and manufacturing techniques often offer direct purchasing options that effectively bypass traditional retail channels.

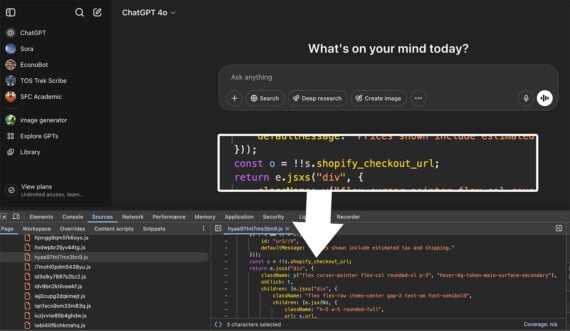

The companies that sell directly to consumers include DHgate, a Chinese B2B e-commerce platform, which users commonly refer to as “the gate” or “the yellow app.” In Apple’s US App Store, the app jumped from #302 on April 8 to #2 overall in mid-April, just behind ChatGPT. On April 15, it was the most downloaded app in the country. As of April 18, DHgate sat at the top of Apple’s shopping charts in 98 countries.

After buying on DHgate, users enthusiastically return to TikTok to share their new purchases; one user jokingly bragged, “Ordered my bag from my Chinese plug.”

DHgate told MIT Technology Review that the social media attention has resulted in a surge in transactions on the platform, with categories like home goods, electronics, outdoor gear, and pet supplies seeing the most popularity. During the week of April 12 to 19, home appliances saw a 962% increase in sales, while security tech jumped 601%.

For these manufacturers, TikTok is not a vanity project but a survival strategy in an increasingly competitive environment.

Chinese factories have long sold to overseas markets, but when domestic economic growth started to slow in the past decade, manufacturers increasingly turned to major B2B platforms like Alibaba to connect with buyers abroad without relying on middlemen. In the past few years, however, the cost of gaining visibility to foreign buyers on major platforms like Amazon and Alibaba has skyrocketed.

“It has become a crowded, saturated space, and it could cost 30,000 to 40,000 RMB [$4,100 to $5,500] a year just to get your factory to show up on the first page in search results,” says Logan Wang, an e-commerce manager at Shendeng Consulting, who advises Chinese manufacturers on overseas operations.

The landscape only got more fraught as traditional manufacturing sectors struggled with oversupply and post-covid stagnation. In 2024, China’s apparel exports to the US grew by less than 1%, while the average unit price of those goods dropped by 7.6%—a sign that competition is fiercer and profit margins are shrinking.

Add the new tariffs to this mix and Chinese manufacturers are increasingly motivated to find creative ways to reach buyers.

Linda Luo, a manager at a Guangzhou-based apparel factory, says that in the wake of the latest round of tariffs, her factory has paused US shipments, which previously accounted for around 30% of its sales. Now, storage rooms are filling up with products that have no clear destination.

“Many nearby factories are like us,” Luo says, “holding out to see how these tariffs develop, hoping the situation will resolve itself.” Motivated by the success of peers who’ve gone viral, Luo says, her team is now actively reaching out to TikTok-famous sourcing agents, hoping to forge direct connections with new buyers.

But it’s not just economic conditions pushing the viral videos; there’s also a feeling that Chinese work and craftsmanship are being disrespected. In a Fox News interview on April 3, for instance, Vice President JD Vance made a comment denigrating the “Chinese peasants” who make products for Americans. The remark drew sharp criticism from Chinese officials and from Chinese people across the internet, who viewed it as insulting.

“Chinese manufacturers have done the dirtiest, most arduous work for Western brands since the 1980s—often with razor-thin margins,” says Wang. “And yet they’re constantly stigmatized, pushed around, and caught in the crossfire of geopolitics. Hearing President Trump frame the past few decades as China taking advantage of the US—that’s a narrative that doesn’t sit right with anyone working in this industry.”

Factory as spectacle

Beyond rage and anxiety, Chinese factories have been inspired by the past viral success of manufacturing content on TikTok, according to Tianyu Fang, a technology and democracy fellow at the think tank New America who studies Chinese technology and globalization. Since 2020, factory videos showing assembly lines producing everyday items like wigs, dolls, and gloves have amassed millions of views. In comments, viewers describe these looping production videos as “soothing” and “mesmerizing.”

By 2022, factories themselves recognized their work floors as content gold mines. Alice Gu, who works at a Shenzhen-based digital marketing company and helps factories build their TikTok presence, has seen client inquiries triple over the past year, with many clients now featuring English-speaking staff as on-camera personalities.

As Fang explains, “These videos resonate with young people in the West on TikTok because manufacturing is so removed from their daily experience. They offer rare glimpses into advanced manufacturing while satisfying genuine curiosity.”

He adds: “Seeing Chinese factory workers address Western audiences directly feels almost subversive.”

The cultural gap between creators and audiences has become an asset rather than a liability, generating authentic moments that resonate with users who are hyper-online.

One creator, Tony, toggles between American accents while promoting light boxes; he has gained over 1.2 million Instagram followers as the face of LC Sign, a Guangzhou electrical signage company. The “alumununu lady,” a saleswoman with a distinctive accent promoting capsule homes by Etong, turned “Hello, boss” into a catchphrase adopted by countless factory videos. In 2024, Dong Hua Jin Long, an industrial glycine manufacturer, went viral for machine-translated promotional videos boasting unmatched production quality. TikTok users found humor in the niche company’s efforts to connect with potential customers, making it a widely circulated meme.

“These videos appeal largely because they’re so wonderfully out of context,” Fang says. “The popularity of these sourcing videos reflects a desire to understand previously hidden parts of the global economy and find alternatives to mainstream political narratives.”

Despite the trend, experts including Yang and Fang don’t believe large numbers of average American consumers will shift to buying directly from factories, as the process involves too many logistical hurdles. There has also been plenty of news coverage warning that buyers may not end up with a bag that is all but equal to a Hermès, just without the brand label.

Yaling Jiang, writer of the newsletter Following the Yuan, explains that buying through factory back channels is a common practice in China: “It’s an open secret that many local factories produce for prestigious brands, and people often buy through side channels to get similar-quality products at a fraction of the price.” However, Jiang suggests that these arrangements rely on a complex supply and distribution system—and warns that some TikTok sourcing agents may be falsely claiming connections to well-known companies.

On top of all this, these direct-to-consumer videos may not even be available much longer. Yang warns that a lot of the content treads dangerously close to copyright infringement. “This will quickly become an IP minefield for platforms like TikTok and Instagram,” she says. “If the trend continues to grow, rights holders will push back—and platform governance will need to catch up fast.”

MIT Technology Review found that many of the original viral videos promoting knockoff products have already been removed from TikTok. DHgate did not respond to a request for comment regarding whether it facilitates the sale of counterfeit products.

Nevertheless, many Chinese factories will almost certainly continue to build out their own R&D teams—and not just to weather the current moment. “Every factory owner’s dream is to have their own brand,” Wang says. “After decades of making products designed elsewhere, Chinese manufacturers are ready to create, not just produce.”